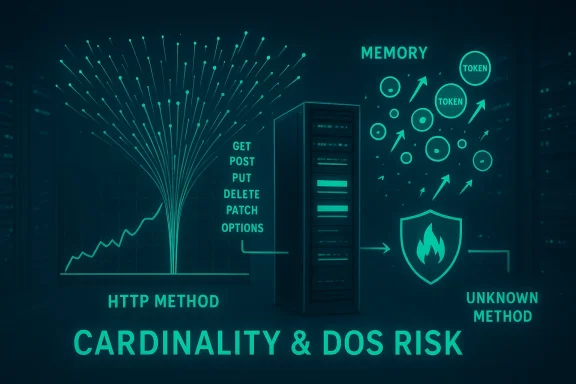

The promhttp vulnerability tracked as CVE-2022-21698 exposed a surprising — yet instructive — weakness at the intersection of observability and availability: by allowing unbounded metric label values to be created from unvalidated HTTP methods, the Prometheus Go client library (client_golang) could be forced into uncontrolled resource consumption and an availability-impacting denial-of-service (DoS). This is a classic cardinality‑explosion problem made practical because instrumentation middleware trusted an unbounded input (the HTTP method) and turned it into a label value without sufficient validation or limits, producing many thousands of time series and ultimately exhausting memory. (github.com)

Source: MSRC Security Update Guide - Microsoft Security Response Center

Background / Overview

Prometheus client libraries expose metrics to be scraped by Prometheus servers; those metrics are identified by a metric name plus a set of labels. Each distinct combination of label values becomes a separate time series, and time‑series cardinality is the single most important resource constraint in Prometheus: as the number of series grows, memory, CPU, disk, and query complexity grow too. The official Prometheus instrumentation guidance warns explicitly not to overuse labels and to keep cardinality bounded; a general rule‑of‑thumb is to target small, finite sets of label values and avoid labels that vary per‑request or per‑user.

CVE-2022-21698 is rooted in that principle. The vulnerable code path lived in the promhttp package of the Prometheus Go client library (client_golang). Several convenience middleware functions (the promhttp.InstrumentHandler* family) were designed to automatically instrument HTTP handlers by incrementing counters (requests total), recording latencies, sizes, and other metrics, using labels such as the HTTP method. When those middleware functions accepted the HTTP method verb directly as a label value, an attacker able to send requests with many different method tokens (for example FOO1, FOO2, BAZ-XYZ, etc.) could create arbitrarily many label values. Each unique token produced a new time series; with enough distinct tokens the target process could run out of memory or become unresponsive. The security advisory and NVD entry make the vulnerability and its preconditions explicit: affected versions are client_golang before v1.11.1, and the exploit only applies when the application uses the InstrumentHandler middleware (except RequestsInFlight) and does not sanitize or filter incoming methods before instrumentation. (github.com)
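To make the "one series per distinct label combination" rule concrete, here is a minimal sketch using only the Go standard library. The counterVec type is a hypothetical stand‑in for a labelled counter, not the client_golang API; it only models how the number of stored series tracks the number of distinct label values.

```go
package main

import (
	"fmt"
	"strings"
)

// counterVec is a hypothetical, simplified stand-in for a labelled counter.
// It is NOT the client_golang API; it only models "one series per distinct
// combination of label values".
type counterVec struct {
	series map[string]float64
}

func newCounterVec() *counterVec {
	return &counterVec{series: make(map[string]float64)}
}

// inc increments the series identified by the given label values,
// creating that series on first use.
func (c *counterVec) inc(labelValues ...string) {
	key := strings.Join(labelValues, "\x00") // NUL keeps distinct combinations distinct
	c.series[key]++
}

// cardinality returns the number of distinct series held in memory.
func (c *counterVec) cardinality() int { return len(c.series) }

func main() {
	bounded := newCounterVec()
	for _, m := range []string{"GET", "POST", "GET", "PUT", "GET"} {
		bounded.inc(m, "200")
	}
	fmt.Println("bounded methods:", bounded.cardinality()) // prints 3

	unbounded := newCounterVec()
	for i := 0; i < 10000; i++ {
		unbounded.inc(fmt.Sprintf("FOO%d", i), "200")
	}
	fmt.Println("attacker-chosen methods:", unbounded.cardinality()) // prints 10000
}
```

The bounded case stays at three series no matter how many requests arrive, while the second loop adds one series per made‑up token.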

What exactly went wrong: technical anatomy

How promhttp instrumentation uses labels

The purpose of promhttp middleware is to instrument HTTP handlers in a zero‑touch way: register a counter (e.g., requests_total) with label names such as method, status, and handler, then call WithLabelValues(method, status, handler). That code pattern is convenient and safe when label values are bounded and predictable (for example method ∈ {GET, POST, PUT, DELETE}). But when label values are derived from untrusted sources with unbounded domain, every new value expands the set of series stored in memory. Prometheus stores series descriptors and recent sample data in memory; creating thousands of series quickly raises resident memory usage and CPU pressure. The official Prometheus instrumentation guidance highlights this cost and recommends keeping label cardinality low.

The exploitation vector

An attacker only needs network access to the instrumented endpoint and the ability to send HTTP requests with arbitrary method names. Unlike HTTP headers or bodies, method tokens are part of the request line and many web frameworks will accept non‑standard tokens unless upstream proxies or firewalls block them. If the server associates the method token with a metric label (for example method in requests_total) and the middleware increments a counter for every unique method seen, an attacker can create thousands or millions of unique token values in short order. This is a low-complexity, remotely exploitable vector with no privileges required. The GitHub security advisory and related vulnerability databases lay out the same chain of conditions required for exploitation. (github.com)

Why memory and availability suffer

Each new time series consumes memory for label storage and other Prometheus internal structures. In addition:

- Scraping and writing newly created series increases CPU and GC pressure in the instrumented process.

- Queries that touch high‑cardinality metrics slow down and can time out.

- If series creation continues unchecked, processes can reach out‑of‑memory limits, crash, or become so slow that they effectively deny service.

Scope: who and what was affected

- Affected component: github.com/prometheus/client_golang (promhttp package) prior to v1.11.1. (github.com)

- Affected configurations: any Go HTTP server using promhttp.InstrumentHandler* middleware (except RequestsInFlight), and which exposes the metrics endpoint or an instrumented handler without filtering/sanitizing unknown HTTP methods. Upstream load balancers or WAFs that block unknown methods reduce exposure. (github.com)

- Real‑world impact: services that embed the vulnerable client library and expose an instrumented HTTP handler (including internal services) could be targeted. The advisory explicitly enumerates the middleware symbols that were implicated. (github.com)

Patches, fixes, and timelines

The Prometheus client_golang maintainers released fixes and a security advisory in February 2022; the vulnerability was assigned CVE‑2022‑21698 and patched in client_golang v1.11.1. The GitHub advisory references two pull requests that implement the mitigations and harden instrumentation behaviour. Operators were advised to update to v1.11.1 or later. (github.com)

If you cannot upgrade immediately, the advisory lists several practical workarounds: remove the method label from metrics used by the InstrumentHandler; disable the affected promhttp handlers; add custom middleware that sanitizes or canonicalizes the request method before the promhttp middleware runs; or place a reverse proxy/WAF that restricts allowed HTTP methods. Each workaround has tradeoffs that I examine below. (github.com)

Detection and hunting: how to know if you're being targeted

Detecting an ongoing or prior exploitation attempt requires looking for two signals: abnormal metric cardinality and unusual HTTP traffic patterns.

PromQL queries to highlight cardinality spikes

Use Prometheus queries to find metrics whose label cardinality is growing rapidly. Replace http_requests_total with the real metric your service exposes.

- Count the distinct values of the method label for a metric: count(count by (method) (http_requests_total))

- Look for sudden jumps by comparing that count with its value five minutes earlier: count(count by (method) (http_requests_total)) - count(count by (method) (http_requests_total offset 5m))

- A heuristic for rapid creation of new label values, counting the distinct method labels that have samples in the last minute: count(count by (method) (increase(http_requests_total[1m])))

Network and application log indicators

- A flood of requests where the HTTP method token does not match standard verbs.

- Requests containing method tokens with GUIDs, timestamps, or otherwise procedurally generated portions.

- Memory or GC pressure alarms on the instrumented process coinciding with unusual method diversity.
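As a rough illustration of the first two log indicators, the following sketch scans access‑log text and tallies method tokens that are not standard verbs. The log layout (method as the first whitespace‑separated field), the allow list, and the function name unusualMethods are all assumptions made for the example; adapt them to your real log format.

```go
package main

import (
	"bufio"
	"fmt"
	"strings"
)

// standardMethods is an assumed allow list; extend it if your services
// legitimately use other verbs (for example WebDAV methods).
var standardMethods = map[string]bool{
	"GET": true, "HEAD": true, "POST": true, "PUT": true, "PATCH": true,
	"DELETE": true, "CONNECT": true, "OPTIONS": true, "TRACE": true,
}

// unusualMethods scans access-log lines and returns how often each
// non-standard method token appears. It assumes the method is the first
// whitespace-separated field on each line (a hypothetical log format).
func unusualMethods(log string) map[string]int {
	found := make(map[string]int)
	sc := bufio.NewScanner(strings.NewReader(log))
	for sc.Scan() {
		fields := strings.Fields(sc.Text())
		if len(fields) == 0 {
			continue // skip blank lines
		}
		if method := fields[0]; !standardMethods[method] {
			found[method]++
		}
	}
	return found
}

func main() {
	sample := "GET /metrics 200\nFOO1 / 200\nPOST /api 201\nFOO2 / 200\nFOO1 / 200\n"
	hits := unusualMethods(sample)
	fmt.Println("distinct non-standard methods:", len(hits)) // prints 2
}
```

A large and growing number of distinct keys in the result, rather than a high count for any single key, is the pattern that matches this attack.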

Mitigation and remediation: immediate steps and durable fixes

1) Immediate remediation (fastest, recommended)

- Upgrade the client_golang library to v1.11.1 or later. This is the definitive fix from the maintainers and is the highest‑priority action. (github.com)

2) If you cannot upgrade right away — short‑term mitigations

- Remove the method label from the counter/gauge passed to InstrumentHandler so the middleware does not create a series per method. This changes metrics dimensionality but immediately removes the vector. (github.com)

- Insert a small custom middleware before promhttp that validates and canonicalizes http.Request.Method to a small approved set (e.g., map any unknown tokens to "UNKNOWN" or reject the request outright). This keeps a bounded set of label values while preserving method visibility. (github.com)

- Put a reverse proxy, ingress controller or web application firewall (WAF) in front of your service and restrict allowed HTTP methods to the standard set (GET, POST, PUT, DELETE, HEAD, OPTIONS, PATCH as needed). Blocking unknown methods at the edge prevents the labels from being created in the first place. (github.com)

3) Long‑term controls and best practices

- Avoid exposing raw instrumentation endpoints or instrumented handlers directly to the internet. Keep metrics endpoints internal or behind authenticated tunnels.

- Apply a bounded label design: prefer labels whose value sets are small, finite, and predictable. The Prometheus instrumentation docs provide explicit recommendations on limiting cardinality.

- Add cardinality monitoring and alerting into your telemetry pipeline: alert on sudden growth in series count per metric or in the whole registry.

- Where appropriate, use relabeling rules during scrape time to drop or rewrite overly variable labels and prevent unbounded series from entering your TSDB.
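One way to picture a bounded label design is a guard that caps how many distinct values a label may take and folds everything beyond the cap into a single bucket. The sketch below uses only the standard library; boundedLabel and the "other" bucket name are hypothetical, and this is one possible guardrail rather than anything the Prometheus libraries provide.

```go
package main

import (
	"fmt"
	"sync"
)

// boundedLabel caps the number of distinct values a label may take.
// Values first seen after the cap is reached are folded into "other".
// The type and the bucket name are hypothetical.
type boundedLabel struct {
	mu    sync.Mutex
	limit int
	seen  map[string]struct{}
}

func newBoundedLabel(limit int) *boundedLabel {
	return &boundedLabel{limit: limit, seen: make(map[string]struct{})}
}

// value returns v if it is already known or there is still room for it,
// and "other" once the cap has been reached.
func (b *boundedLabel) value(v string) string {
	b.mu.Lock()
	defer b.mu.Unlock()
	if _, ok := b.seen[v]; ok {
		return v
	}
	if len(b.seen) >= b.limit {
		return "other"
	}
	b.seen[v] = struct{}{}
	return v
}

func main() {
	methods := newBoundedLabel(3)
	series := make(map[string]int)
	for _, m := range []string{"GET", "POST", "FOO1", "FOO2", "FOO3", "GET"} {
		series[methods.value(m)]++
	}
	fmt.Println(len(series), series["other"]) // prints "4 2"
}
```

A first‑come cap like this bounds memory but lets early junk values occupy slots, so for a label such as the HTTP method a fixed allow list is the stronger choice; the cap is better suited as a last‑resort safety net.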

Example mitigation snippets (patterns, not vendor‑specific commands)

Note: these are example patterns that convey intent rather than full copy/paste production configs.

- Simple method‑sanitizing middleware (Go pseudo‑code). Before promhttp instrumentation, run:

- allowed := map[string]bool{"GET":true,"POST":true,"PUT":true,"DELETE":true,"HEAD":true,"OPTIONS":true,"PATCH":true}

- if !allowed[r.Method] { r.Method = "UNKNOWN" } // or return 405

- Nginx as an edge filter (conceptual): deny requests whose method is not in a configured allow list, so that novel method tokens never reach upstream services.

Risk analysis: strengths, weaknesses, and operational implications

Strengths of the Prometheus approach

- Prometheus' label model is powerful and enables detailed, flexible querying without changing metric names.

- Client libraries provide convenient instrumentation middleware to lower the cost of adding metrics.

- The Prometheus community and maintainers responded by documenting the advisory and releasing a patch, showing responsible disclosure and remediation cadence. (github.com)

Weaknesses and systemic risks highlighted by CVE‑2022‑21698

- Convenience can be dangerous: automation that implicitly casts untrusted inputs into label values creates attack surfaces if inputs are unbounded.

- Libraries that make decisions about label names/values must treat external inputs (including HTTP method tokens) as untrusted and either bound, canonicalize, or reject them.

- Observability tooling is not neutral with respect to availability: instrumentation can harm the system it monitors if cardinality is uncontrolled.

Operational risk tradeoffs

- Removing the method label reduces the dimensionality of your metrics (and potentially the effectiveness of some alerts and dashboards), but it may be necessary for safety until a sanitized approach is in place.

- Blocking unknown HTTP methods at the edge is excellent for public endpoints, but for internal services where non‑standard methods are used for legitimate reasons (rare), you must adopt a more nuanced, canonicalizing middleware.

Practical checklist for operators (actionable)

- Inventory: find all services that vendor or custom‑build with github.com/prometheus/client_golang and check versions; any below v1.11.1 is a candidate for remediation. (github.com)

- Patch: schedule an upgrade to client_golang v1.11.1+ as soon as feasible. (github.com)

- If immediate patching is impossible:

- Remove method labeling from InstrumentHandler metrics, or

- Add pre‑instrumentation middleware to canonicalize/rewrite method tokens, or

- Place an ingress filter to allow only known methods.

- Monitor: add PromQL alerts to detect cardinality anomalies and memory pressure spikes; investigate any sudden growth in distinct label counts.

- Harden: keep metrics endpoints internal when possible and secure scraping endpoints with network rules, mTLS, or authentication.
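For the inventory step, a small helper can flag go.mod files that pin client_golang below the fixed release. The sketch below is a simplification under stated assumptions: it reads go.mod text directly, ignores pre‑release and build suffixes, and does not follow replace directives or vendored copies, so treat its answer as a first pass. All function names are hypothetical.

```go
package main

import (
	"bufio"
	"fmt"
	"strconv"
	"strings"
)

const module = "github.com/prometheus/client_golang"

// parseVersion turns "v1.11.0" into [1 11 0]. Pre-release and build
// suffixes (such as "-rc.1" or "+incompatible") are ignored, which is a
// deliberate simplification for this sketch.
func parseVersion(v string) ([3]int, bool) {
	var out [3]int
	v = strings.TrimPrefix(v, "v")
	if i := strings.IndexAny(v, "-+"); i >= 0 {
		v = v[:i]
	}
	parts := strings.Split(v, ".")
	if len(parts) != 3 {
		return out, false
	}
	for i, p := range parts {
		n, err := strconv.Atoi(p)
		if err != nil {
			return out, false
		}
		out[i] = n
	}
	return out, true
}

// isVulnerable reports whether v is older than the fixed release v1.11.1.
// Unparseable versions return false and need manual review.
func isVulnerable(v string) bool {
	got, ok := parseVersion(v)
	if !ok {
		return false
	}
	fixed := [3]int{1, 11, 1}
	for i := range got {
		if got[i] != fixed[i] {
			return got[i] < fixed[i]
		}
	}
	return false // equal to the fixed version
}

// findClientGolang returns the version a go.mod file requires for
// client_golang, or "" if the module is not listed.
func findClientGolang(goMod string) string {
	sc := bufio.NewScanner(strings.NewReader(goMod))
	for sc.Scan() {
		line := strings.TrimPrefix(strings.TrimSpace(sc.Text()), "require ")
		fields := strings.Fields(line)
		if len(fields) >= 2 && fields[0] == module {
			return fields[1]
		}
	}
	return ""
}

func main() {
	goMod := "module example.com/svc\n\nrequire (\n\tgithub.com/prometheus/client_golang v1.11.0\n)\n"
	v := findClientGolang(goMod)
	fmt.Println(v, isVulnerable(v)) // prints "v1.11.0 true"
}
```

A flagged version only means the library is old; whether the service is actually exposed still depends on whether it uses the InstrumentHandler* middleware with a method label, as described in the scope section.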

Lessons learned and larger implications

CVE‑2022‑21698 is a sober reminder that observability can be an attack surface. Instrumentation libraries are not just passive telemetry collectors; they materially affect runtime state (memory, CPU, GC) because they allocate and maintain time series. Libraries must be defensive by default: validate inputs, bound label value domains, and provide opt‑out or safer defaults for middleware that auto‑labels from request attributes.

For operators, the vulnerability underscores the importance of two disciplines that are often treated separately:

- Secure‑by‑design instrumentation: treat metrics labels like any other externally influenced configuration parameter and document their cardinality implications.

- Runtime telemetry and guardrails: proactively monitor cardinality and resource usage as part of SRE practice and incident runbooks.

Final verdict and risk posture

CVE‑2022‑21698 is a high‑severity availability issue (CVSS 7.5) because it is remotely exploitable, has low complexity, and directly impacts availability via memory exhaustion and DoS. The remediation (upgrade to v1.11.1) is straightforward for most codebases and should be treated as high priority wherever the vulnerable middleware is in use. Workarounds are available for environments where an immediate upgrade is not possible, but they carry tradeoffs and must be implemented carefully. (github.com)

Operators should assume that any public or poorly filtered metrics/HTTP endpoint instrumented with older versions of client_golang and using InstrumentHandler* is potentially vulnerable until proven otherwise. Conduct a prioritized inventory, patch, and apply network method filtering as a defense‑in‑depth measure. Where vendor artifacts are used, consult vendor advisories and inventory attestations to confirm whether a particular product build includes the vulnerable library.

Conclusion

CVE‑2022‑21698 shows how the convenience of automatic instrumentation can interact badly with core operational constraints. The Prometheus client_golang maintainers fixed the bug and published an advisory; operators who exposed instrumented handlers without method validation should upgrade and harden immediately. Beyond the immediate patching imperative, this episode is a clear operational lesson: control metric cardinality, treat observability code as part of your attack surface, and bake cardinality monitoring and label‑validation into your deployment standards so your monitoring system aids reliability rather than becoming the cause of outages. (github.com)