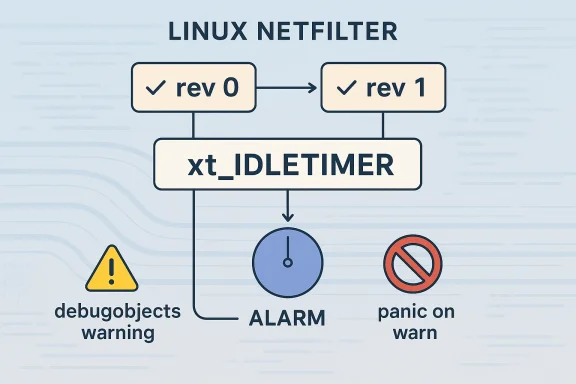

Linux kernel CVE-2026-23274 is a small-looking bug with a very specific failure mode, but it sits in exactly the kind of kernel plumbing that can turn a bookkeeping mistake into a crash. The issue is in the netfilter xt_IDLETIMER path: revision 0 rules can reuse an existing timer object by label, and if that object was first created by revision 1 with XT_IDLETIMER_ALARM, the timer internals are never initialized for the older rule’s expectations. Reusing that object and calling mod_timer() on an uninitialized timer_list can trigger debugobjects warnings and, on systems with panic_on_warn=1, can escalate from a warning into a kernel panic. The published fix is straightforward: reject revision 0 rule insertion when the existing label already belongs to an ALARM-type timer object, as reflected in the kernel.org-derived description mirrored in Microsoft’s vulnerability entry and the associated stable commits.

The Linux kernel’s netfilter subsystem is one of those foundational pieces of infrastructure that rarely gets public attention until something goes wrong. It handles packet filtering, NAT, and other rule-driven behaviors that sit deep in the networking stack, which means its modules often blend performance, lifecycle management, and correctness in very tight code paths. The xt_IDLETIMER extension belongs to that world: it is designed to track inactivity and expose timer-based state to userspace and rule logic, so it has to coordinate labels, timers, and object reuse carefully. In practice, that makes it less about “packets in, packets out” and more about maintaining a precise internal contract across multiple rule revisions.

This CVE is notable because the bug is not a classic memory corruption issue in the ordinary sense. Instead, it is a lifecycle mismatch: revision 0 expects a reusable timer with a normal timer_list, while revision 1 can create an ALARM timer object that follows different semantics. If the same label is shared, revision 0 may land on an object that looks reusable by name but is not valid for the old code path. That distinction matters because Linux kernel bugs often emerge not from raw pointer misuse, but from treating two objects as interchangeable when their initialization histories differ.

The kernel.org description makes the operational consequence clear: the old code can end up calling mod_timer() on a timer structure that was never initialized in the way revision 0 expects, which produces debugobjects warnings and may panic a system configured to treat warnings as fatal. That puts the bug in the category of reliability-threatening kernel correctness defects rather than exploit primitives aimed at direct code execution. Even so, kernel teams treat these bugs seriously because a reproducible panic in a networking subsystem can be enough to take down a server, a container host, or a hardened appliance.

The CVE’s publication is itself part of the story. Microsoft’s Security Update Guide now routinely tracks Linux kernel CVEs alongside Windows issues, which reflects how vulnerability management has become platform-agnostic in enterprise environments. This matters because many organizations no longer care where a fix originates; they care whether the advisory is trustworthy, whether the issue has a stable backport, and whether the affected build is in their fleet. Microsoft’s publication of a Linux kernel CVE is therefore less surprising than it once was, and more a sign that patch coordination is now a cross-vendor discipline.

The NVD record is still marked for enrichment, and as of publication it does not yet provide a finalized CVSS assessment. That is common for freshly received kernel CVEs, especially when the upstream description is precise but the downstream severity calculus remains under review. In other words, the technical flaw is already understood well enough to fix, but the final severity label may lag behind the upstream resolution.

What the Bug Actually Is


At its core, the bug is about incompatible reuse. Revision 0 rules for xt_IDLETIMER reuse timers by label and always call mod_timer() on timer->timer. Revision 1, however, can create an object using ALARM semantics, which means the underlying timer->timer field is not initialized in the same way. If the same label is later referenced by a revision 0 rule, the code assumes it is dealing with a normal timer object and tries to restart it. That assumption is wrong, and kernel debug tooling notices.

The important nuance is that the vulnerable behavior is not merely “wrong timer type,” but wrong object contract under label reuse. In kernel code, labels often act like soft identifiers for state sharing, and that is convenient until a subsystem starts supporting multiple object flavors under the same label namespace. Once that happens, the label no longer tells the whole truth. The actual type and initialization path matter, and if those are not checked before reuse, the bug becomes a logic trap.
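The reuse hazard described above can be sketched as a toy userspace model. This is not kernel code: the `IdleTimer` class, the `registry` dictionary, the `timer_initialized` flag, and the exception that stands in for a debugobjects warning are all hypothetical names chosen for illustration, and the numeric value of `ALARM` is an assumption.

```python
from dataclasses import dataclass

ALARM = 1  # hypothetical stand-in for the XT_IDLETIMER_ALARM timer type


class UninitializedTimerWarning(RuntimeError):
    """Stands in for the debugobjects warning in this toy model."""


@dataclass
class IdleTimer:
    label: str
    timer_type: int = 0
    timer_initialized: bool = False  # models whether timer->timer was set up
    refcnt: int = 1


registry = {}  # label -> IdleTimer, a stand-in for the shared label namespace


def mod_timer(obj):
    """Model of mod_timer(): only valid on an initialized timer."""
    if not obj.timer_initialized:
        raise UninitializedTimerWarning(obj.label)


def create_rev1(label, timer_type):
    """Revision 1 creation: an ALARM object never sets up the plain timer."""
    obj = IdleTimer(label, timer_type, timer_initialized=(timer_type != ALARM))
    registry[label] = obj
    return obj


def insert_rev0_unpatched(label):
    """Revision 0 insertion: reuse by label with no check on the object type."""
    obj = registry.get(label)
    if obj is None:
        obj = IdleTimer(label, timer_initialized=True)
        registry[label] = obj
        return obj
    obj.refcnt += 1
    mod_timer(obj)  # unconditional restart, as in the pre-fix behavior
    return obj
```

In this model, calling `create_rev1("wifi", ALARM)` and then `insert_rev0_unpatched("wifi")` raises `UninitializedTimerWarning`, while two revision 0 insertions under a fresh label simply bump the reference count.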

Why label reuse is hazardous

Label reuse seems simple in userspace terms: if two rules share the same name, they should point at the same object. But the kernel is not merely storing a string-to-object map; it is maintaining a live runtime object with lifecycle rules, callbacks, and state transitions. A label that is safe for one timer flavor may be unsafe for another. That is why this fix rejects revision 0 insertion when the pre-existing labeled object is an ALARM timer.

The broader lesson is that identity and compatibility are not the same thing. A shared label does not guarantee that the object behind it is safe for every caller, especially when the caller is an older revision with assumptions baked into its implementation. In kernel maintenance, this is exactly the sort of trap that appears small in a patch but large in operational terms.

- Labels can hide incompatible internal state.

- Revisioned interfaces amplify reuse hazards.

- Old code paths often assume a more limited object model.

- Safety checks are cheaper than debugging a live kernel panic.

- A single reused object can affect many downstream rule insertions.

Why mod_timer() is the tipping point

mod_timer() is not a neutral call. It assumes that the timer structure it is manipulating has been initialized and is safe to reschedule. If the structure is uninitialized, then the kernel is no longer working with a benign state bug; it is touching an object whose internal bookkeeping may be undefined from the timer subsystem’s point of view. That is what makes the debugobjects warning meaningful.

In a development kernel, a warning might be an inconvenience. On a production system with panic-on-warn enabled, it becomes a potential outage trigger. This is why apparently modest correctness defects are tracked as CVEs: their impact is often not determined by the size of the code change, but by where the bug sits in the kernel’s fail-fast policy chain.

Why This Became a CVE

One of the recurring misunderstandings about kernel CVEs is that they must imply a clean remote exploit or a dramatic memory-safety primitive. That is not true. The Linux kernel team and downstream CNAs often assign CVEs to bugs that can produce undefined behavior, architecture-sensitive failures, or security-relevant correctness violations even when the immediate exploitability is uncertain. Microsoft’s entry for CVE-2026-23274 fits that model: the issue is precise, reproducible, and serious enough to merit tracking, even though it is not being described as a high-end attack chain.

The reason is simple: kernel correctness and kernel security are increasingly intertwined. A broken state machine in a privileged subsystem can be enough for denial of service, especially in environments where kernel warnings are configured to halt the machine. It can also obscure more serious issues, complicate diagnostics, and break service-level guarantees. In enterprise terms, that is not “just a bug”; it is an availability and reliability event.

The significance of panic_on_warn=1

The mention of panic_on_warn=1 is not decorative. It marks the line between a developer-visible fault and an operationally critical fault. Systems built with this setting are intentionally configured to treat warnings as serious integrity signals, often because administrators would rather fail fast than continue operating after a kernel anomaly.

That design choice is sensible, but it means bugs like this one can have outsized practical impact. A debugobjects warning in a test lab is one thing; the same warning on a hardened server can become a reboot loop or a service outage. So even though the underlying flaw is a timer-label reuse problem, its real-world effect can be disproportionate.

- Debug warnings are early indicators of broken assumptions.

- Panic-on-warn turns a warning into an availability issue.

- Rule insertion paths can be triggered by administrative actions.

- Kernel hardening policies can magnify “small” bugs.

- Correctness bugs often matter most where uptime matters most.
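To judge whether a given host sits on the fail-fast side of that line, the setting can be read from procfs. The following is a minimal sketch that assumes the sysctl is exposed at /proc/sys/kernel/panic_on_warn, which is where Linux normally publishes it; the function names are illustrative and not part of any existing tool.

```python
from pathlib import Path


def parse_panic_on_warn(text):
    """Interpret the sysctl file contents: True if nonzero, None if unparsable."""
    try:
        return int(text.strip()) != 0
    except ValueError:
        return None


def panic_on_warn_enabled(path="/proc/sys/kernel/panic_on_warn"):
    """Read the sysctl from procfs; None means the value could not be read."""
    try:
        return parse_panic_on_warn(Path(path).read_text())
    except OSError:
        return None


if __name__ == "__main__":
    messages = {
        True: "panic_on_warn is enabled: kernel warnings will panic this host",
        False: "panic_on_warn is disabled: kernel warnings are logged only",
        None: "panic_on_warn could not be determined",
    }
    print(messages[panic_on_warn_enabled()])
```

A result of `True` suggests the host belongs in the higher-urgency group for this CVE; `None` only means the check was inconclusive, for example on a non-Linux system.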

How the fix reduces risk

The fix is elegant because it changes policy at the boundary rather than trying to handle the mismatch later. Instead of allowing revision 0 to proceed and hoping the object behaves correctly, the kernel now rejects insertion when the existing label is already owned by an ALARM-type object. That preserves the integrity of the type contract.

This is a good example of a narrow defensive fix: it does not redesign timers, it does not add broad compatibility layers, and it does not try to guess the caller’s intent. It simply refuses an unsafe combination. In kernel engineering, that kind of restraint is often the most reliable path to stable backporting.
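The shape of that boundary check can be illustrated with a small userspace model. This is a sketch under stated assumptions rather than the actual patch: the `existing` mapping, the `check_rev0_insert` name, the numeric value of `ALARM`, and the choice of `-EINVAL` as the rejection code are all hypothetical.

```python
import errno

ALARM = 1  # hypothetical stand-in for the XT_IDLETIMER_ALARM timer type

# label -> timer type of the object that already owns the label (toy data)
existing = {"wifi": ALARM, "eth": 0}


def check_rev0_insert(label, registry):
    """Return 0 if a revision 0 rule may use the label, else a negative errno."""
    timer_type = registry.get(label)
    if timer_type is not None and timer_type & ALARM:
        # Fail closed: refuse the rule before any timer field is touched.
        return -errno.EINVAL  # assumed error code, for illustration only
    return 0


for name in ("wifi", "eth", "new-label"):
    verdict = "rejected" if check_rev0_insert(name, existing) else "accepted"
    print(f"{name}: {verdict}")
```

The point of the design is that the decision happens at insertion time, on the object's declared type, so the old code path never reaches the restart call with an incompatible object.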

Revisioned Interfaces and Hidden Complexity

Revisioned kernel interfaces are useful because they let developers evolve behavior without breaking older rules or userspace tools. But they also create a subtle compatibility matrix. Revision 0 and revision 1 of xt_IDLETIMER are not just different names; they encode different assumptions about how labels map to timer objects and how those objects are supposed to be initialized. When those assumptions overlap, a shared label can become a trapdoor.

This is the kind of bug that only shows up when code tries to preserve compatibility while extending semantics. The kernel has to support old rule definitions, newer timer behavior, and a shared label namespace all at once. That combination is exactly where “works in isolation” becomes “fails in combination.”

Compatibility is not the same as interchangeability

A legacy rule path may still be valid, but only within its own assumptions. If the newer revision introduces an ALARM timer flavor, the old path cannot simply inherit the object unless it knows how to deal with that type. The CVE shows what happens when the code treats the existence of an object as proof that the object is safe to reuse. It is not.

That lesson extends beyond netfilter. In the kernel, many subsystems use versioned or layered APIs to preserve old behavior while introducing new semantics. Each of those layers needs an explicit compatibility gate. When that gate is missing, the failure may remain invisible until a particular ordering of rule creation and reuse happens.

- Versioned interfaces need explicit boundary checks.

- Shared labels can hide object-type differences.

- Old callers rarely know about new invariants.

- Compatibility paths should fail closed, not open.

- Reuse logic must verify the object’s semantic class.

Why this matters for administrators

Administrators often think in terms of “does the feature work?” rather than “what revisions does this feature expose?” But CVEs like this one are a reminder that compatibility layers have policy embedded inside them. If a system is using nftables/iptables rules that rely on idletimer behavior, then the shape of those rules matters, not just the presence of the module.

That is especially true in mixed environments where configuration management, legacy firewall scripts, and newer rule generators may all touch the same subsystem. A label that is harmless in a pristine test environment can become risky when a new object type is introduced in production and older automation later reuses the same identifier.

Kernel Warnings, Panic Policies, and Availability

The phrase “debugobjects warnings” may sound esoteric, but it points to an important operational reality: kernel warnings are often not cosmetic. Debugobjects exists to catch lifecycle mistakes, especially around timers, work items, and other objects whose initialization state matters. When it reports a problem, the kernel is telling you that a contract has been violated, even if the violation has not yet caused a crash.

That matters because many production systems are configured to escalate aggressively on warnings. The reason is clear enough: once the kernel starts warning about structural misuse of internal objects, continuing to run can be riskier than stopping. CVE-2026-23274 demonstrates the downside of that safety philosophy: a bug that might have remained a noisy warning in a default environment can become a hard outage in a hardened one.

Why hardened systems feel this first

Systems with panic_on_warn=1 are not exotic edge cases anymore. They are common in test clusters, infrastructure environments, and organizations that prefer fail-fast behavior. In those settings, a warning is treated as evidence of corruption or deep inconsistency. The system may choose immediate reboot or halt rather than attempt recovery.

That makes the bug relevant well beyond the narrow packet-filtering code path where it originated. If firewall rules or timer labels are managed dynamically, a single unsafe insertion can knock over the whole host. The lesson is not that hardened systems are wrong, but that hardening increases the importance of upstream correctness.

- Debugobjects helps catch broken lifecycle state.

- Panic-on-warn can transform warnings into outages.

- Timer misuse is especially dangerous in core kernel code.

- Fail-fast policies depend on bug-free contracts.

- Runtime safety checks are only as good as the code they monitor.

The broader reliability angle

Even when a bug does not lead to a direct exploit, it can still be security-relevant because availability is part of security. A panic in the networking stack can disconnect services, disrupt remote management, or force failover in a way that breaks consistency. For container hosts and virtualization nodes, a panic may mean not just one service down, but many.

That is why the distinction between “exploitable” and “important” is too narrow for kernel vulnerability management. Some bugs are important because they destabilize the platform on which everything else depends. CVE-2026-23274 falls squarely in that category.

Stable Backports and Real-World Exposure

The references attached to the CVE point to upstream kernel.org stable commits, which usually indicates the issue is already moving through the Linux fix pipeline. That is a good sign for operators because it means the remediation is concrete, not speculative. It also means downstream vendors can backport the exact change rather than infer behavior from an abstract advisory.

Real-world exposure, however, will not be uniform. Systems that do not use xt_IDLETIMER are unlikely to hit this path, and systems that never mix revision 0 rules and ALARM-created timers under the same label may never see the bug in practice. But security teams cannot rely on that kind of informal reassurance. They need to know whether the code exists in the kernel build, whether the module is loadable, and whether their firewall automation could create the problematic label reuse pattern.

Enterprise versus consumer impact

For consumer desktops, the risk is probably low unless a distro ships a configuration or service stack that actively uses xt_IDLETIMER. For enterprise servers, the calculus is different. Kernel modules that are rarely touched can still matter when they sit inside automation frameworks, appliance images, or compliance-hardened hosts that ingest dynamic rule updates.

This is why vulnerability management tools should treat the CVE as a kernel update item even if it appears low severity at first glance. A rule insertion bug in a production fleet can be enough to create a messy incident if the affected subsystem is part of baseline hardening or orchestration logic.

What makes backports important here

Backports are not just a convenience; they are how most production systems actually receive kernel security fixes. The Linux stable process exists to bring important corrections into maintained kernels without dragging in unrelated features. When a fix is accepted there, it signals that the bug has crossed the threshold from “upstream curiosity” to “something distributions should ship.”

That is the practical path for CVE-2026-23274 as well. If your environment depends on vendor kernels, the question is not whether mainline has the fix, but whether your shipped build has absorbed the backport. That distinction is often where security programs succeed or fail.

- Check vendor advisories, not just upstream commit history.

- Verify whether the fix is backported into your supported kernel line.

- Confirm whether the xt_IDLETIMER module is enabled or loadable.

- Review firewall automation that reuses labels.

- Treat crash-on-warn environments as higher urgency.
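The module check in the list above can be approximated with a short script. This is a rough sketch, assuming the module appears as xt_IDLETIMER in /proc/modules and that the build option is CONFIG_NETFILTER_XT_TARGET_IDLETIMER; the /boot/config-<release> location is a distribution convention that may not exist on every system, and the helper names are illustrative.

```python
import platform
from pathlib import Path

MODULE = "xt_IDLETIMER"
SYMBOL = "CONFIG_NETFILTER_XT_TARGET_IDLETIMER"


def module_loaded(proc_modules_text, name=MODULE):
    """True if the module name is the first field of any /proc/modules line."""
    return any(line.split()[:1] == [name] for line in proc_modules_text.splitlines())


def config_state(config_text, symbol=SYMBOL):
    """Return 'y' (built in), 'm' (module), or None if the symbol is not set."""
    prefix = symbol + "="
    for line in config_text.splitlines():
        if line.startswith(prefix):
            return line[len(prefix):].strip()
    return None


def read_or_empty(path):
    """Read a text file, returning an empty string if it is unavailable."""
    try:
        return Path(path).read_text()
    except OSError:
        return ""


if __name__ == "__main__":
    modules = read_or_empty("/proc/modules")
    # Assumed location of the kernel config; absent on some distributions.
    config = read_or_empty(f"/boot/config-{platform.release()}")
    print("module currently loaded:", module_loaded(modules))
    print("build configuration:", config_state(config))
```

A value of `m` with the module not currently loaded still means the code can be pulled in on demand when a matching rule is inserted, so it should not be read as "not exposed".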

Strengths and Opportunities

The good news is that this CVE has a cleanly scoped fix and a well-understood failure path. That usually makes backporting easier and reduces the chance of collateral regressions. More importantly, the bug exposes a specific class of lifecycle mismatch that can be audited in nearby code, which gives maintainers an opportunity to harden similar rule-reuse paths before they become separate incidents.

- Narrow fix surface: the patch rejects an unsafe combination instead of refactoring the subsystem.

- Clear semantics: revision 0 and ALARM-type objects are no longer mixed implicitly.

- Low ambiguity: the crash path is tied to a concrete mod_timer() misuse.

- Good backport candidate: conservative policy changes are usually stable-friendly.

- Operationally actionable: administrators can identify exposure by checking module usage and rule behavior.

- Audit opportunity: adjacent timer-label reuse logic can be reviewed for similar assumptions.

- Hardening benefit: systems configured with panic_on_warn=1 gain a real stability improvement.

Risks and Concerns

The main concern is that the vulnerability can convert a logic mistake into a platform-level outage if the system is configured to panic on warnings. That does not make the issue more exploitable in the classic sense, but it does make it more disruptive in hardened environments. The other concern is that label-based reuse, once combined with multiple rule revisions, can be hard to reason about in larger automation stacks.

- Availability impact: a warning can become a panic on hardened systems.

- Hidden exposure: shared labels may appear safe until revision mixing occurs.

- Automation risk: configuration tools may reuse labels unknowingly.

- Incomplete severity guidance: NVD had not yet finalized a CVSS score at publication time.

- Downstream variability: vendor backports may arrive at different times.

- Operational surprise: teams may underestimate the bug because the description is brief.

- Regression risk: any timer-policy change must remain compatible with existing firewall behavior.

Looking Ahead

The immediate thing to watch is how quickly major distributions and vendor kernels absorb the backport. Because the upstream fix is already identified in kernel.org stable references, there is a good chance downstream patching will follow the usual Linux security cadence. The more interesting question is whether maintainers use this as a prompt to review other timer reuse or label-sharing logic in netfilter.

Another thing to watch is how severity classification evolves. A warning-to-panic path can look modest in a lab and serious in production, so some organizations may rate it more as a hardening and availability issue than as a traditional vulnerability with clear exploitability. That tension is common in kernel advisories, where the same bug can be low-drama technically and high-impact operationally.

Practical items to monitor

- Vendor advisories for backported fixes.

- Whether your deployed kernel includes the relevant stable commit.

- Firewall or orchestration tooling that reuses xt_IDLETIMER labels.

- Any follow-on audit of related timer or label reuse paths.

- Final NVD enrichment, including a formal CVSS entry.
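For the tooling item in the list above, one rough way to spot risky label reuse is to scan a saved ruleset for IDLETIMER rules that share a label but disagree on the alarm flag. The sketch below assumes rules are dumped in iptables-save style with `--label` and `--alarm` options on the IDLETIMER target; the function name and sample rules are illustrative. The dump does not say which revision the kernel actually used for each rule, so this is a conservative heuristic rather than proof of exposure.

```python
import shlex
from collections import defaultdict


def mixed_labels(rules_text):
    """Return labels used both with and without --alarm in IDLETIMER rules."""
    seen = defaultdict(set)  # label -> set of booleans (alarm flag present?)
    for line in rules_text.splitlines():
        try:
            tokens = shlex.split(line)
        except ValueError:
            continue  # skip lines with unbalanced quotes
        if "IDLETIMER" not in tokens or "--label" not in tokens:
            continue
        idx = tokens.index("--label")
        if idx + 1 >= len(tokens):
            continue  # malformed rule: no value after --label
        seen[tokens[idx + 1]].add("--alarm" in tokens)
    return sorted(label for label, kinds in seen.items() if len(kinds) == 2)


if __name__ == "__main__":
    sample = "\n".join([
        "*filter",
        "-A OUTPUT -o wlan0 -j IDLETIMER --timeout 30 --label wifi --alarm",
        "-A OUTPUT -o wlan0 -j IDLETIMER --timeout 30 --label wifi",
        "-A OUTPUT -o eth0 -j IDLETIMER --timeout 60 --label eth",
        "COMMIT",
    ])
    print(mixed_labels(sample))
```

Labels reported by this scan are the ones worth reviewing first in firewall automation, since they are candidates for the ordering in which an ALARM object is created before an older-style rule reuses the same name.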

CVE-2026-23274 is not the sort of bug that dominates headlines, but it is exactly the kind of kernel flaw that reminds operators why low-level correctness matters. A single reused label should not be enough to mislead the timer subsystem into touching an object in the wrong state, and now upstream has drawn that line more clearly. The result is a small patch with a large lesson: in the kernel, object identity is never enough unless the object’s semantics are right too.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center
