On May 8, 2026, CVE-2026-43318 was published for a Linux kernel amdgpu driver bug in amdgpu_dma_buf_move_notify.

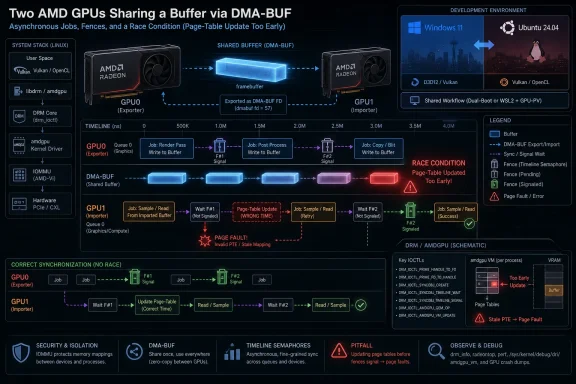

CVE-2026-43318 is a good example of why that mismatch matters. A scanner that flags every Linux AMDGPU system as equally exposed may overstate risk. A scanner that ignores the issue because there is no CVSS score yet may understate operational impact for multi-GPU desktops or render nodes. Both approaches miss the point.

The better model is contextual triage. Is the amdgpu driver in use? Is the machine running a kernel line that predates the relevant stable fix? Does the workload use DMA-BUF sharing across devices? Is the system a kiosk, workstation, lab node, gaming machine, VM host, or production GPU server? Those answers matter more than the CVE title alone.
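
The triage questions above can be written down as a small decision function. What follows is a minimal sketch in Python, not a scanner rule or an official scoring scheme: the `Host` record, all of its field names, and the priority labels are assumptions made for illustration, and the ordering of the checks is one reasonable reading of the questions rather than anything the CVE record prescribes.

```python
from dataclasses import dataclass


@dataclass
class Host:
    """Hypothetical inventory record; every field name is an assumption."""
    name: str
    runs_linux: bool
    amdgpu_in_use: bool
    kernel_has_fix: bool
    gpu_count: int
    shares_dmabuf_across_gpus: bool
    gpu_stability_critical: bool


def triage(host: Host) -> str:
    """Return a coarse, made-up priority label for one host."""
    # The bug lives in the Linux amdgpu driver; anything else is out of scope.
    if not (host.runs_linux and host.amdgpu_in_use):
        return "not-applicable"
    if host.kernel_has_fix:
        return "fixed"
    # The public scenario involves a buffer shared between two GPUs.
    multi_gpu_path = host.gpu_count > 1 and host.shares_dmabuf_across_gpus
    if multi_gpu_path and host.gpu_stability_critical:
        return "patch-first"
    if multi_gpu_path:
        return "patch-early"
    return "normal-cycle"


render_node = Host("render-01", True, True, False, 2, True, True)
windows_pc = Host("desk-17", False, False, False, 1, False, False)
print(triage(render_node))  # patch-first
print(triage(windows_pc))   # not-applicable
```

The value of a function like this is less the labels than the inputs it forces a team to collect: whether the driver is loaded, whether the fix is present, and whether the machine actually shares buffers across devices.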

This is also where Windows-centric shops can learn from Linux operations. Kernel updates are not merely security hygiene; they are reliability engineering. A driver synchronization fix may reduce outage-like behavior even when the exploit story is thin. Treating it only as a compliance checkbox leaves value on the table.

That appears to be the shape of this fix. The old behavior let a page-table update happen too early in a move-notify path. The corrected behavior ensures the imported buffer’s virtual memory handling does not race ahead of GPU work that still depends on the old mapping. There is no need for a new security architecture when the bug is that the existing synchronization contract was violated.

For users, that means remediation should arrive through normal kernel maintenance rather than application-level workarounds. You should not have to stop using

There may still be temporary mitigations for people stuck on affected kernels. Avoiding the triggering multi-GPU buffer-sharing path, disabling unusual render offload arrangements, or simplifying display topology could reduce exposure. But those are operational compromises, not proper fixes. The point of the kernel patch is to make the legitimate workload safe.

The Linux graphics stack evolved to support that world. DMA-BUF, DRM scheduling, GPU virtual memory, PRIME buffer sharing, and explicit synchronization are all pieces of a high-performance design. CVE-2026-43318 is the shadow cast by that design: when shared infrastructure gets fast, the cost of a synchronization mistake rises.

Windows has its own version of this story. WDDM, hardware scheduling, GPU paravirtualization, DirectX interop, video acceleration, and compute APIs all live in a similar universe of asynchronous work and shared resources. The code paths differ, but the engineering pressure is the same. Performance now depends on making devices cooperate without forcing every transfer through slow, obvious, serialized paths.

That is why this Linux CVE is worth reading beyond Linux circles. It is a small incident in one driver, but it reflects the broader direction of client and workstation computing. The GPU is no longer an accessory. It is a peer processor with memory-management complexity that rivals the CPU-side operating system.

Administrators should pay special attention to systems with more than one GPU or with render/display split configurations. These are the machines most aligned with the public scenario. Workstations using AMD GPUs for Linux desktops, labs using GPU passthrough, and machines that mix integrated and discrete AMD graphics deserve earlier testing than single-GPU casual desktops.

It is also worth checking whether your monitoring treats GPU resets as first-class events. A system can remain reachable over SSH while the local graphical session is ruined. If your fleet health model only watches CPU load, disk space, and network reachability, GPU driver faults will look like user complaints rather than infrastructure signals.
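
One way to make GPU faults visible as infrastructure signals is to count them in kernel log text. The Python sketch below is a rough illustration under stated assumptions: the two regular expressions are deliberately loose guesses at how amdgpu fault and reset messages tend to read, the exact wording differs between kernel versions, and the sample lines are synthetic placeholders rather than captured driver output.

```python
import re

# Assumed, deliberately loose patterns. Real amdgpu message wording varies
# by kernel version, so these should be tuned against logs from your fleet.
PATTERNS = {
    "page_fault": re.compile(r"amdgpu.*page fault", re.IGNORECASE),
    "gpu_reset": re.compile(r"amdgpu.*gpu reset", re.IGNORECASE),
}


def classify_line(line: str):
    """Return the first matching event label for a log line, or None."""
    for label, pattern in PATTERNS.items():
        if pattern.search(line):
            return label
    return None


def count_gpu_events(lines) -> dict:
    """Count matching lines per label across an iterable of log lines."""
    counts = {label: 0 for label in PATTERNS}
    for line in lines:
        label = classify_line(line)
        if label is not None:
            counts[label] += 1
    return counts


# Synthetic placeholder lines, invented for this example only.
sample = [
    "amdgpu: synthetic page fault line",
    "amdgpu: synthetic GPU reset line",
    "usb 1-1: unrelated line",
]
print(count_gpu_events(sample))  # {'page_fault': 1, 'gpu_reset': 1}
```

Feeding the counts into whatever already tracks CPU load and disk space is the point; a non-zero number on a host that still answers SSH is exactly the case the paragraph above describes.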

None of this demands panic. The absence of an NVD score at publication, the specificity of the path, and the nature of the public description all argue against breathless emergency handling. But it does deserve a normal kernel-update cycle, especially on machines where GPU stability is part of the job.

The useful reading is specific.

CVE-2026-43318 will probably not be remembered as a landmark vulnerability, and that is precisely why it is useful. It shows the modern risk surface in its everyday form: not a branded exploit with a logo, but a race in the machinery that lets applications, compositors, and GPUs share memory at speed. The right response is not alarmism; it is disciplined patching, better inventory, and a recognition that the graphics stack has become core infrastructure. As Windows, Linux, cloud desktops, AI workstations, and GPU virtualization keep converging, the bugs that matter will increasingly look like this one — small in the diff, subtle in the timing, and much larger in the systems they quietly hold together.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center

The flaw is in amdgpu_dma_buf_move_notify, where incorrect synchronization during DMA-BUF buffer movement could make an AMD GPU update page tables too early and trigger a likely GPU page fault. The vulnerability is not a Windows kernel bug, and Microsoft’s presence in the trail is best understood as vulnerability-indexing plumbing rather than evidence of a Windows exposure. But for WindowsForum readers running Linux gaming rigs, WSL-adjacent GPU workflows, dual-boot workstations, or AMD-heavy lab machines, it is a useful reminder that modern graphics stacks are now shared-memory operating systems in miniature. The bug sits at the point where performance, compositing, multi-GPU rendering, and kernel security all collide.

A Small amdgpu Race Exposes a Big Modern Graphics Problem

CVE-2026-43318 is, on its face, a narrow kernel fix. The vulnerable path lives in the Direct Rendering Manager subsystem’s AMD GPU driver, not in user-mode graphics libraries and not in AMD’s Windows display stack. The affected code handles a DMA-BUF move notification, which is the sort of phrase that sounds like internal plumbing until the plumbing fails and the screen freezes.

The kernel description is unusually helpful because it explains the race in operational terms. One process exports a buffer object from one GPU, another process imports it on another GPU, and the driver has to keep everyone’s view of memory synchronized while work is still queued or running. If the page table update happens too early, the GPU may keep executing a blit against mappings that have effectively shifted beneath it.

That makes this less like a classic “attacker sends packet, gets code execution” vulnerability and more like a driver correctness flaw with security bookkeeping attached. Linux kernel CVEs increasingly include bugs that were fixed upstream because they can produce unsafe kernel behavior, even when no polished exploit path has been publicly demonstrated. The security label matters, but the story here is mostly about reliability at the most timing-sensitive layer of the desktop.

The key phrase in the record is “shared BO,” meaning shared buffer object. Once a GPU buffer is exported through DMA-BUF, it stops being a private possession of one process or one device. It becomes a contract among drivers, schedulers, page tables, fences, display servers, compositors, and applications that all think they know when it is safe to touch memory.

DMA-BUF Made Linux Graphics Fast, and Also Made Timing Bugs Harder to See

DMA-BUF is one of the unsung reasons modern Linux graphics can feel smooth. It lets different devices and drivers share buffers without always copying data through the CPU. A compositor, a video decoder, a browser, a game, and a display stack can pass around memory-backed images with far less waste than older designs allowed.

That is the happy path. The unhappy path is that shared buffers force the kernel to answer a deceptively difficult question: who is allowed to see which version of memory, and when? A synchronization bug in this space is not just a missed lock. It is a broken promise about visibility.

The CVE’s example uses two GPUs, glxgears, Xorg, and a system without peer-to-peer PCI support. That example is almost charmingly old-school (glxgears is the canonical “is OpenGL alive?” toy), but the underlying pattern is not old at all. Multi-GPU laptops, external GPUs, heterogeneous rendering setups, virtualized desktops, containers with GPU access, and compositor-heavy Linux desktops all lean on variations of this machinery.

The bug specifically concerns invalidating a DMA-BUF and notifying another user of the shared buffer that movement occurred. In the reported scenario, process A moves the buffer object, process B needs to know, and the importing side must update its page table. The driver’s previous handling made the virtual memory update behave as if immediate page-table changes were safe, even though GPU work using the prior mapping could still be in flight.

That is why the page fault matters. The page table update is not inherently wrong; the timing is wrong. In graphics drivers, “right operation, wrong time” is often indistinguishable from corruption.

The Ticket Was the Wrong Abstraction at the Wrong Moment

The CVE description points to a synchronization bug caused by “the use of the ticket.” In ordinary language, the driver had a sequencing mechanism that made amdgpu_vm_handle_moved behave as though it could update the page table immediately. In this narrow path, that assumption broke down.

Kernel graphics scheduling depends heavily on fences, reservation objects, dependency tracking, and job queues. The CPU submits work. The GPU executes it asynchronously. The kernel must then reason about work that has been submitted, work that has started, work that has finished, and work that merely appears complete from one participant’s point of view.

In the example, frame rendering on GPU0 completes, then a blit job runs, then the buffer is moved for GPU1 access. The trap is that “the previous job finished” does not necessarily mean “all relevant work touching this buffer is safe to remap.” If a tiled-to-linear blit is still running, moving or remapping the linear buffer for another GPU’s access can pull the rug out from under the executing job.

This is the sort of bug that makes driver engineers cautious about simplifying synchronization logic. A ticket-like sequence can look elegant because it compresses state into an ordered model. But GPUs do not execute human mental models; they execute queued work, sometimes in parallel, sometimes with hidden hardware dependencies, sometimes through pathways that only become visible when another device enters the picture.

The fix, according to the kernel-side description, is not a flashy mitigation but a correction to sync handling. That is exactly what one should expect. The durable answer is to ensure page-table work waits for the correct dependency, not to bolt a policy warning onto user space.
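
The ordering problem in this section can be sketched as a toy model. The Python below is not the amdgpu code and not the upstream patch; `Fence`, `SharedBuffer`, `remap_immediately`, `remap_after_fences`, and `finish` are hypothetical names, and the mapping strings are placeholders. It only illustrates the difference between remapping a buffer while a job still depends on the old mapping and remapping after every outstanding dependency has completed.

```python
from dataclasses import dataclass, field


@dataclass
class Fence:
    """Stands in for a GPU job's completion signal."""
    name: str
    signaled: bool = False


@dataclass
class SharedBuffer:
    """Toy shared buffer: a current mapping plus the jobs attached to it."""
    mapping: str
    fences: list = field(default_factory=list)

    def busy(self) -> bool:
        # Busy means at least one attached job has not finished.
        return any(not f.signaled for f in self.fences)


def remap_immediately(buf: SharedBuffer, new_mapping: str) -> bool:
    """Buggy shape: change the mapping without checking in-flight work.

    Returns True if a job was still using the old mapping (a likely fault).
    """
    faulted = buf.busy()
    buf.mapping = new_mapping
    return faulted


def remap_after_fences(buf: SharedBuffer, new_mapping: str, wait) -> bool:
    """Corrected shape: wait on every unsignaled fence, then remap."""
    for fence in buf.fences:
        if not fence.signaled:
            wait(fence)
    faulted = buf.busy()
    buf.mapping = new_mapping
    return faulted


def finish(fence: Fence) -> None:
    """Hypothetical wait: pretend the job ran to completion."""
    fence.signaled = True


render = Fence("frame render", signaled=True)  # the job that "finished"
racy = SharedBuffer("old-mapping", [render, Fence("tiled-to-linear blit")])
print(remap_immediately(racy, "new-mapping"))          # True

safe = SharedBuffer("old-mapping", [render, Fence("tiled-to-linear blit")])
print(remap_after_fences(safe, "new-mapping", finish))  # False
```

In the first case the render job has signaled, which is the “previous job finished” trap, while the blit is still outstanding. The second case reaches the same final mapping but only after the blit’s fence has signaled, which is the shape of the correction the kernel description points to.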

This Is a Linux CVE, Not a Windows Patch Tuesday Event

The Microsoft Security Response Center page in the submitted material may confuse some readers. Microsoft tracks a broad set of CVEs in its update guide and vulnerability ecosystem, including issues that can affect Microsoft products indirectly, open-source components, cloud environments, Linux distributions in Azure, or third-party software contexts. That does not make this a Windows kernel vulnerability.

The vulnerable component is the Linux kernel’s drm/amdgpu driver. The Windows AMD graphics driver stack is different, the Windows Display Driver Model is different, and Windows does not use Linux’s DRM subsystem or DMA-BUF in the same way. A Windows 11 desktop with an AMD Radeon card is not automatically implicated by this CVE because the bug name contains “amdgpu.”

That distinction matters because CVE feeds are now consumed by automated scanners, compliance dashboards, endpoint agents, container registries, and cloud posture tools. A CVE can show up in a Microsoft context, a Linux context, and a vendor advisory context without meaning the same thing operationally in each place. Security teams that treat every CVE title as a direct asset exposure will generate noise faster than they generate remediation.

For WindowsForum’s audience, the practical Windows connection is mostly environmental. If you run Linux on bare metal with AMD GPUs, maintain dual-boot rigs, operate Linux render nodes, host Linux VMs with GPU passthrough, or support mixed Windows/Linux fleets, this is relevant. If your only AMD exposure is a normal Windows display driver from AMD’s Windows package, this specific kernel bug is not the thing to panic about.

Multi-GPU Linux Is Where the Edge Cases Go to Become Real

The reproduced scenario is not the median desktop. It involves two GPUs, an exported linear buffer, imported use on another GPU, a blit, Xorg, and lack of peer-to-peer PCI access. That sounds exotic until you look at the machines enthusiasts and administrators actually build.

Linux users routinely combine integrated and discrete GPUs. Workstations pair display adapters with compute accelerators. VFIO users pass one GPU into a guest while the host owns another. Developers test compositors across cards. AI and media systems mix devices in ways that graphics stacks were not originally optimized to make boring.

When peer-to-peer PCI access is unavailable, the system has to move data through less direct paths. That raises the importance of correct buffer movement and memory visibility. Every copy, blit, import, export, and page-table update becomes part of a choreography that must survive asynchronous execution.

The CVE’s example uses Xorg rather than Wayland, but it would be a mistake to read this as an Xorg-only morality tale. The flaw is in kernel amdgpu synchronization around DMA-BUF movement. Compositors and display servers can influence how likely a path is to be hit, but the correctness burden sits lower than the desktop session.

That is why bugs like this are often discovered by workloads that look ordinary only after you spell them out. A simple OpenGL demo and a display server copying a buffer across GPUs can expose a race that synthetic single-GPU tests miss. The desktop did not become “simple” just because the UI became polished.

Page Faults Are the Symptom, Not the Whole Risk

The kernel description says the mistimed update would likely produce a page fault. For an end user, that might show up as a GPU hang, application crash, compositor freeze, display reset, or kernel log entry that looks more dramatic than the user action that triggered it. For a sysadmin, it may look like another unhelpful amdgpu instability report in a sea of driver noise.

Security scoring was not yet provided by NVD in the submitted record, which is significant. Without CVSS enrichment, affected-version metadata, or distributor advisories, it is premature to rank this as a high-severity emergency. The responsible reading is narrower: this is a kernel memory-management correctness bug in a privileged graphics driver path, now fixed upstream and assigned a CVE.

That does not make it irrelevant. GPU page faults can be denial-of-service issues in practice, especially on desktop systems where a GPU reset may take down the session. In shared workstations or compute nodes, a reliable way to trigger device instability can become operationally serious even if it is not a confidentiality breach.

The harder question is exploitability. The public description demonstrates a race and a likely fault, not a complete path to privilege escalation or information disclosure. Kernel graphics bugs can sometimes evolve into more serious vulnerabilities, but this record does not justify claiming that here. The honest assessment is that the impact is plausibly system or session instability in affected configurations, with security significance derived from its location in kernel driver code.

The NVD Gap Is a Feature of the Modern CVE Pipeline

The submitted record says NVD had not yet provided CVSS 4.0, CVSS 3.x, or CVSS 2.0 scores at publication time. That is increasingly normal. CVE publication, kernel fix availability, NVD enrichment, distribution backports, and scanner database updates now move on different clocks.

For administrators, that lag is frustrating because dashboards often treat “no score yet” as an unresolved ambiguity. For kernel maintainers, it reflects a deeper mismatch. The Linux kernel now assigns CVEs to many fixes once they meet the project’s criteria, but the vulnerability-management industry still wants each CVE to behave like a neatly packaged product advisory.

This is why Linux kernel CVEs can look both overbroad and underexplained. They are often grounded in real fixes, but the public text may read like a commit message because, functionally, it is close to one. The necessary context — which stable trees received the patch, which distributions backported it, which hardware paths trigger it, and whether an unprivileged user can reliably reach it — tends to arrive later, if at all.

The result is an uncomfortable middle state. Security teams cannot ignore the CVE because it is real kernel code. But they also should not invent severity where the record has not established it. The sane response is to map the bug to actual fleet exposure: AMD GPUs, Linux kernels with the affected amdgpu code, multi-GPU or DMA-BUF-heavy workloads, and environments where desktop or GPU resets matter.

The Stable-Kernel Trail Matters More Than the CVE Page

The references attached to the CVE point to stable kernel commits. That is where Linux users should focus. For most administrators and enthusiasts, the question is not whether to cherry-pick a particular hash from kernel.org. It is whether their distribution kernel includes the fix.

Distribution kernels rarely map cleanly to upstream version numbers. Ubuntu, Fedora, Debian, Arch, SUSE, Red Hat-derived distributions, Proxmox hosts, and vendor appliance kernels may all carry different backport sets. A machine running an older-looking kernel may have the fix; a machine running a newer custom kernel may not.

This is particularly true for graphics drivers because downstreams sometimes carry display, DRM, and vendor-specific patch stacks. AMDGPU bugs may be fixed in an upstream stable release, then folded into a distribution update under a changelog line that does not mention the CVE prominently. Conversely, users of hand-rolled kernels or DKMS-adjacent graphics stacks may not receive the fix until they deliberately move.

The safest practical path is ordinary but unglamorous: track your distribution’s kernel security updates and changelogs, then reboot into the fixed kernel. Graphics driver fixes do not usually take effect until the new kernel is running. On systems using GPU passthrough, remote access, or production display walls, that reboot is the maintenance event that matters.
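
The two checks in that advice, “does the changelog mention the fix” and “is the fixed kernel actually running,” can be sketched in Python. This is a minimal sketch under assumptions: the changelog text is supplied by the caller from whatever tool the distribution provides, the release strings in the example are placeholders rather than real package versions, and the helper names are invented for illustration.

```python
import re

CVE_ID = "CVE-2026-43318"


def changelog_mentions_cve(changelog_text: str, cve_id: str = CVE_ID) -> bool:
    """True if the CVE identifier appears as a whole token (case-insensitive)."""
    # The lookarounds stop a longer identifier from matching by accident.
    pattern = r"(?<![\w-])" + re.escape(cve_id) + r"(?!\d)"
    return re.search(pattern, changelog_text, flags=re.IGNORECASE) is not None


def reboot_pending(running_release: str, fixed_release: str) -> bool:
    """True if the running kernel release differs from the fixed one."""
    return running_release.strip() != fixed_release.strip()


# Placeholder inputs, not real changelog entries or kernel versions.
changelog = "example entry mentioning CVE-2026-43318 (placeholder text)"
print(changelog_mentions_cve(changelog))                     # True
print(reboot_pending("example-old-release", "example-new-release"))  # True
```

A `False` from `changelog_mentions_cve` does not prove the fix is absent, since a distribution update may fold the patch in without naming the CVE. On a live system, the running release string can be read with `platform.release()` from the Python standard library.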

Why Windows Administrators Should Still Read Linux GPU CVEs

It is tempting for Windows administrators to skip Linux graphics CVEs altogether. That would be a mistake in mixed environments. The boundary between Windows and Linux has become porous in precisely the places where GPUs matter most.

Developers run Linux containers on Windows workstations. Labs run Windows desktops against Linux render backends. Azure and other clouds expose Linux GPU instances to teams whose endpoint fleet is otherwise Windows. Enterprises use Linux for AI, rendering, simulation, CI pipelines, and remote visualization while managing identities, tickets, and compliance from Microsoft-centric tooling.

Even WSL has trained Windows users to think of Linux as a local subsystem rather than a separate machine. This particular CVE should not be misrepresented as a WSL graphics vulnerability, but the broader lesson carries over: GPU plumbing is now part of the standard computing substrate, not a specialist corner for gamers and CAD operators.

For security teams, the right inventory question is therefore not “Do we run Windows?” but “Where do we run Linux kernels with AMD GPUs attached?” That includes physical workstations, lab benches, hypervisors, GPU passthrough hosts, Linux desktops, and cloud nodes. The machines most likely to hit exotic DMA-BUF paths are often the ones least likely to be represented accurately in endpoint management dashboards.
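
That inventory question can be answered per host with a few lines of Python. The sketch below is a minimal example, assuming the usual sysfs layout in which `/sys/class/drm/cardN/device/driver` is a symlink whose target ends in the name of the bound driver. The function name `amdgpu_cards` is made up for this illustration.

```python
import os
from pathlib import Path


def amdgpu_cards(sysfs_drm="/sys/class/drm"):
    """Return DRM card names (e.g. 'card0') whose device is bound to amdgpu.

    Assumes the usual sysfs layout, where cardN/device/driver is a symlink
    whose target ends in the bound driver's name.
    """
    found = []
    root = Path(sysfs_drm)
    if not root.is_dir():
        return found  # not Linux, or no DRM devices exposed
    for entry in sorted(root.iterdir()):
        name = entry.name
        # Keep only 'cardN'; skip connectors such as 'card0-DP-1' and render nodes.
        if not name.startswith("card") or not name[4:].isdigit():
            continue
        driver_link = entry / "device" / "driver"
        if driver_link.is_symlink():
            driver = os.path.basename(os.readlink(driver_link))
            if driver == "amdgpu":
                found.append(name)
    return found


if __name__ == "__main__":
    cards = amdgpu_cards()
    print(f"amdgpu-bound DRM cards: {cards or 'none'}")
    if len(cards) > 1:
        print("More than one amdgpu device: closer to the multi-GPU scenario.")
```

Run across a fleet, the output separates hosts that merely contain AMD hardware from hosts where the amdgpu driver is actually bound, which is the distinction that matters for this CVE.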

Enthusiasts Will Notice This as Stability, Not Security

For Linux desktop users with AMD hardware, the visible concern is probably not a CVE number. It is whether the system hangs less often after a kernel update. GPU faults tend to arrive as black screens, compositor resets, frozen sessions, or dmesg excerpts that send users down forum rabbit holes.

That is part of why CVE-2026-43318 is interesting for a WindowsForum audience. Windows users are accustomed to treating GPU driver updates as consumer-facing packages with release notes and rollback buttons. Linux users get much of that driver stack through the kernel itself, with Mesa, firmware, compositors, and display servers layered above it. The “driver update” may be a kernel update, a Mesa update, a firmware blob update, or all three.

AMDGPU is generally one of Linux’s great success stories. The in-kernel driver, open Mesa stack, and AMD’s substantial upstream presence have given Linux users first-class Radeon support in many scenarios. But first-class does not mean simple. The more capable the stack becomes, the more often its bugs are about synchronization among correct components rather than one obviously broken component.

This bug also shows why reproducing graphics issues can be maddening. A single-GPU desktop may never hit the path. A dual-GPU setup may hit it only under a specific compositor, buffer-sharing pattern, or PCI topology. A workload that looks harmless — render here, copy there, display elsewhere — may be exactly the workload that reaches the broken interleaving.

Security Automation Needs More Humility Around Kernel CVEs

There is a growing mismatch between vulnerability automation and kernel reality. Scanners want a package name, a version range, a score, and a remediation. Kernel CVEs often offer a commit, a subsystem, a sparse description, and a handful of stable references.

CVE-2026-43318 is a good example of why that mismatch matters. A scanner that flags every Linux AMDGPU system as equally exposed may overstate risk. A scanner that ignores the issue because there is no CVSS score yet may understate operational impact for multi-GPU desktops or render nodes. Both approaches miss the point.

The better model is contextual triage. Is the amdgpu driver in use? Is the machine running a kernel line that predates the relevant stable fix? Does the workload use DMA-BUF sharing across devices? Is the system a kiosk, workstation, lab node, gaming machine, VM host, or production GPU server? Those answers matter more than the CVE title alone.
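
Those questions translate naturally into a small decision function. What follows is a sketch rather than a scoring standard: the `Host` fields, the priority labels, and the example fleet are all hypothetical and would need to be mapped onto whatever your inventory actually records.

```python
from dataclasses import dataclass


@dataclass
class Host:
    # All fields are hypothetical inventory attributes, not a real schema.
    name: str
    uses_amdgpu: bool
    kernel_has_fix: bool
    shares_dmabuf_across_gpus: bool
    gpu_stability_critical: bool


def triage(host: Host) -> str:
    """Map the triage questions to a coarse patch priority."""
    if not host.uses_amdgpu or host.kernel_has_fix:
        return "none"
    if host.shares_dmabuf_across_gpus:
        return "high" if host.gpu_stability_critical else "medium"
    return "low"  # driver in use, but the public scenario is unlikely


fleet = [
    Host("render-node", True, False, True, True),
    Host("dual-gpu-desktop", True, False, True, False),
    Host("single-gpu-laptop", True, False, False, False),
    Host("patched-workstation", True, True, True, True),
    Host("intel-kiosk", False, False, False, False),
]
for h in fleet:
    print(f"{h.name}: {triage(h)}")
```

The ordering is the design choice worth noting: driver use and patch status are checked first, so a fixed or unaffected machine never reaches the workload questions.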

This is also where Windows-centric shops can learn from Linux operations. Kernel updates are not merely security hygiene; they are reliability engineering. A driver synchronization fix may reduce outage-like behavior even when the exploit story is thin. Treating it only as a compliance checkbox leaves value on the table.

The Patch Is Boring in the Way Good Kernel Fixes Are Boring

The best kernel fixes often look underwhelming from the outside. They adjust ordering. They wait on the right fence. They stop assuming that a notification means immediate safety. They make an asynchronous system slightly more honest about time.

That appears to be the shape of this fix. The old behavior let a page-table update happen too early in a move-notify path. The corrected behavior ensures the imported buffer’s virtual memory handling does not race ahead of GPU work that still depends on the old mapping. There is no need for a new security architecture when the bug is that the existing synchronization contract was violated.

For users, that means remediation should arrive through normal kernel maintenance rather than application-level workarounds. You should not have to stop using glxgears, Xorg, or multi-GPU setups forever. You should run a kernel that contains the fix.

There may still be temporary mitigations for people stuck on affected kernels. Avoiding the triggering multi-GPU buffer-sharing path, disabling unusual render offload arrangements, or simplifying display topology could reduce exposure. But those are operational compromises, not proper fixes. The point of the kernel patch is to make the legitimate workload safe.

The Real Lesson Is That GPUs Are Now Shared Infrastructure

Once upon a time, a graphics driver mostly had to draw pixels for one local user. That world is gone. Today’s GPU may decode video for a browser, render a game, accelerate a desktop compositor, train a model, serve a remote session, export a buffer to another process, and share memory across devices.

The Linux graphics stack evolved to support that world. DMA-BUF, DRM scheduling, GPU virtual memory, PRIME buffer sharing, and explicit synchronization are all pieces of a high-performance design. CVE-2026-43318 is the shadow cast by that design: when shared infrastructure gets fast, the cost of a synchronization mistake rises.

Windows has its own version of this story. WDDM, hardware scheduling, GPU paravirtualization, DirectX interop, video acceleration, and compute APIs all live in a similar universe of asynchronous work and shared resources. The code paths differ, but the engineering pressure is the same. Performance now depends on making devices cooperate without forcing every transfer through slow, obvious, serialized paths.

That is why this Linux CVE is worth reading beyond Linux circles. It is a small incident in one driver, but it reflects the broader direction of client and workstation computing. The GPU is no longer an accessory. It is a peer processor with memory-management complexity that rivals the CPU-side operating system.

The Practical Shape of the Fix for AMD Linux Systems

For affected users, the action plan is straightforward but not instantaneous. Watch your distribution’s kernel advisories, install the fixed kernel when it lands, and reboot. If you build kernels yourself, verify that one of the stable commits referenced by the CVE is included in your tree or that your branch contains the equivalent fix.

Administrators should pay special attention to systems with more than one GPU or with render/display split configurations. These are the machines most aligned with the public scenario. Workstations using AMD GPUs for Linux desktops, labs using GPU passthrough, and machines that mix integrated and discrete AMD graphics deserve earlier testing than single-GPU casual desktops.
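
For people who build their own kernels, the commit check can be scripted around `git merge-base --is-ancestor`, which exits with status 0 when the first commit is reachable from the second. The helper names below are invented for this sketch, and the commit hashes are deliberately left as an input: take them from the references in the CVE record.

```python
import subprocess


def ancestor_check_cmd(commit, ref="HEAD"):
    """Build the git command that tests whether `commit` is reachable from `ref`."""
    return ["git", "merge-base", "--is-ancestor", commit, ref]


def tree_contains_any(repo_dir, commits, ref="HEAD"):
    """Return the first commit in `commits` that is an ancestor of `ref`, else None."""
    for commit in commits:
        result = subprocess.run(
            ancestor_check_cmd(commit, ref),
            cwd=repo_dir,
            stdout=subprocess.DEVNULL,
            stderr=subprocess.DEVNULL,
        )
        if result.returncode == 0:  # exit status 0 means "is an ancestor"
            return commit
    return None  # none found, or the hashes are unknown to this repository
```

A `None` result is not proof of exposure. A distributor may have applied the equivalent change under a different hash, which this kind of check cannot see, so the changelog still has the final word.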

It is also worth checking whether your monitoring treats GPU resets as first-class events. A system can remain reachable over SSH while the local graphical session is ruined. If your fleet health model only watches CPU load, disk space, and network reachability, GPU driver faults will look like user complaints rather than infrastructure signals.
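
One low-cost way to turn those faults into signals is to scan kernel log text for amdgpu lines that mention a fault. The sketch below assumes a loose keyword match. The substrings in `FAULT_HINTS` are guesses at typical wording, not a catalogue of real driver messages, and the sample lines are invented for the example rather than copied from a log.

```python
import re

# Assumed substrings; real amdgpu messages vary by kernel version and hardware.
FAULT_HINTS = re.compile(r"page fault|gpu reset|timeout", re.IGNORECASE)


def gpu_fault_lines(log_lines):
    """Yield kernel log lines that mention amdgpu together with a fault hint."""
    for line in log_lines:
        if "amdgpu" in line.lower() and FAULT_HINTS.search(line):
            yield line.rstrip("\n")


# Illustrative lines only, written for this example.
sample = [
    "kernel: amdgpu 0000:03:00.0: example page fault report",
    "kernel: usb 1-2: new high-speed USB device",
    "kernel: amdgpu 0000:03:00.0: example GPU reset notice",
]
hits = list(gpu_fault_lines(sample))
print(len(hits))  # 2
```

In practice the input would be kernel messages captured from `dmesg` or `journalctl -k`, with the patterns tuned against what your own machines actually log.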

None of this demands panic. The absence of an NVD score at publication, the specificity of the path, and the nature of the public description all argue against breathless emergency handling. But it does deserve a normal kernel-update cycle, especially on machines where GPU stability is part of the job.

The Signal Hidden in CVE-2026-43318’s Narrow Patch

This is the part of the story that should survive after the CVE falls out of the news cycle. CVE-2026-43318 is not a Windows flaw, not a reason to uninstall AMD drivers on Windows, and not evidence that every Radeon-equipped Linux desktop is waiting to fall over. It is a concrete fix for a synchronization mistake in the Linux amdgpu DMA-BUF move-notification path.

The useful reading is specific.

- CVE-2026-43318 affects the Linux kernel’s amdgpu DRM driver path, not the native Windows AMD display driver stack.

- The bug involves DMA-BUF buffer movement and page-table synchronization when shared buffer objects are used across processes or GPUs.

- The public scenario points most strongly toward multi-GPU AMD Linux systems, especially where peer-to-peer PCI access is unavailable.

- The likely visible failure mode is GPU page fault or session instability, while public information does not establish a complete privilege-escalation exploit.

- The proper remediation is to install a distribution kernel or stable kernel build that includes the upstream fix and then reboot into it.

- Security teams should triage this by actual Linux AMDGPU exposure rather than by the mere presence of an AMD graphics card somewhere in inventory.

CVE-2026-43318 will probably not be remembered as a landmark vulnerability, and that is precisely why it is useful. It shows the modern risk surface in its everyday form: not a branded exploit with a logo, but a race in the machinery that lets applications, compositors, and GPUs share memory at speed. The right response is not alarmism; it is disciplined patching, better inventory, and a recognition that the graphics stack has become core infrastructure. As Windows, Linux, cloud desktops, AI workstations, and GPU virtualization keep converging, the bugs that matter will increasingly look like this one — small in the diff, subtle in the timing, and much larger in the systems they quietly hold together.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center