The Linux kernel has a new CVE tied to a subtle but important synchronization bug in the PMBus regulator path, and this one is a good example of how a seemingly narrow race condition can ripple into broader reliability concerns. CVE-2026-31486 covers the fix titled "hwmon: (pmbus/core) Protect regulator operations with mutex", which addresses voltage accessors that were touching shared PMBus state without the protection they needed. The upstream resolution is not just “add a lock”; it reworks the notification path to avoid a deadlock that would otherwise appear as soon as callbacks tried to re-enter the protected voltage routines. The result is a more disciplined separation between state mutation and event delivery, which is exactly the kind of kernel hardening that matters in production systems.

Background

PMBus support in the Linux kernel sits in an unglamorous but highly consequential part of the stack. It underpins hardware monitoring, voltage regulation, and power-management features that are especially relevant in servers, embedded systems, and other environments where stable power delivery is more than a convenience. The code path implicated in CVE-2026-31486 is not a user-facing feature in the everyday sense, but it is part of the infrastructure that helps the kernel query and control regulators safely.The vulnerability description makes the core issue clear:

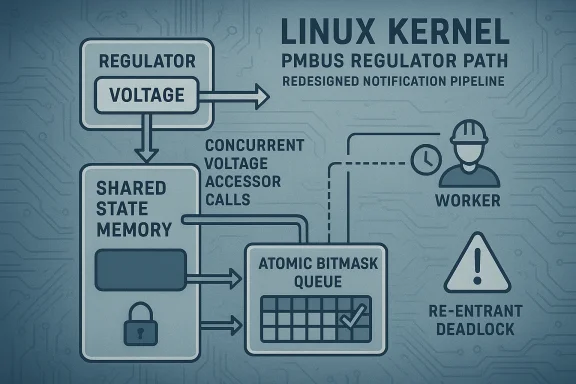

pmbus_regulator_get_voltage(), pmbus_regulator_set_voltage(), and pmbus_regulator_list_voltage() accessed PMBus registers and shared data without the update_lock mutex. That creates a classic race window, because multiple contexts can observe or modify device state at the same time. In kernel code, that kind of omission can be deceptively small in the diff and disproportionately large in the operational consequences.What makes this case interesting is that the obvious fix is not safe on its own. If the voltage accessors are simply wrapped in the mutex, a deadlock can occur because

pmbus_regulator_notify() often runs while the same mutex is already held, such as in fault-handling paths. A regulator callback that calls back into one of the protected voltage helpers would then try to take the mutex again, and the kernel would wedge itself on its own synchronization rule. That is a textbook example of why kernel security fixes often require restructuring rather than merely tightening a lock.The upstream remedy therefore moves notification delivery out of the mutex-protected region entirely. Events are stored as atomics in a per-page bitmask, then drained and processed by a worker function that runs independently of the lock. The fix also wires the worker into registration and cleanup so the lifecycle remains consistent, using

devm_add_action_or_reset() to ensure the worker is cancelled when the device goes away. That sort of lifecycle-aware patching is often what separates a correct fix from a temporary bandage.Why this matters beyond PMBus

This is not a headline-grabbing memory corruption bug, and it is not being described as remote code execution. Still, kernel races are never just academic. They can lead to inconsistent regulator state, spurious behavior in power-management code, and hard-to-reproduce failures that are expensive to diagnose in the field.

For enterprise operators, the practical concern is reliability. A lock bug in a low-level hardware path may surface as intermittent instability, fault-reporting confusion, or erratic behavior under stress rather than a clean crash. For consumer systems, the exposure is narrower, but it is still worth patching because many modern systems rely on power-management abstractions that are invisible until they fail.

- The bug is in a shared-state path.

- The fix required architectural rework, not just a new lock.

- The issue is relevant to stability and correctness, not only security.

- The patch was designed to avoid re-entrant deadlock.

- The worker-based design improves event sequencing and lifecycle management.

What the Vulnerability Changes

At a practical level, CVE-2026-31486 changes the contract around how PMBus regulator operations interact with concurrency. Before the fix, the voltage accessors could run unsafely against mutable shared data. After the fix, the kernel forces those accessors back behind the mutex while moving notification delivery to a separate execution context. That is a cleaner shape for the code because it keeps “read/modify shared state” and “tell listeners about changes” from happening in the same critical section.

That distinction matters because notifier chains are often where kernel code becomes re-entrant in surprising ways. A notification can trigger callbacks, and callbacks can call back into helper functions that were never designed to be lockless. If the lock hierarchy is not carefully managed, the kernel can deadlock itself without any obvious external trigger. The CVE description explicitly calls out that scenario, which is why the worker-based redesign is the real fix.

The other notable detail is the use of atomic bitmasks to store pending events. That suggests maintainers wanted a simple, low-contention mechanism for accumulating notifications on a per-page basis while preserving ordering and minimizing lock hold time. In other words, they are not just plugging a hole; they are rebalancing the code so the state machine makes sense under concurrency.
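The before-and-after contract for the voltage accessors can be modelled in user space. The sketch below is a Python stand-in, not kernel code: FakePagedDevice, its method names, and the millivolt values are all hypothetical, and it assumes the usual paged-device shape in which a caller first selects a page and then reads a value for whichever page is currently selected. The unlocked interleaving is replayed by hand so the wrong result is deterministic.

```python
import threading


class FakePagedDevice:
    """Hypothetical stand-in for a paged chip: one page selector, one reading per page."""

    def __init__(self, millivolts_by_page):
        self.page = 0
        self.millivolts_by_page = dict(millivolts_by_page)

    def select_page(self, page):
        self.page = page

    def read_vout(self):
        # Returns the reading for whichever page was selected last.
        return self.millivolts_by_page[self.page]


dev = FakePagedDevice({0: 1200, 1: 3300})

# Unlocked access, replayed by hand so the bad interleaving is deterministic.
dev.select_page(0)               # caller A wants rail 0
dev.select_page(1)               # caller B slips in and selects rail 1
unlocked_read = dev.read_vout()  # caller A now reads rail 1's value

update_lock = threading.Lock()   # models the driver's update_lock


def get_voltage(device, page):
    """Select and read inside one critical section so no caller can interleave."""
    with update_lock:
        device.select_page(page)
        return device.read_vout()


locked_read = get_voltage(dev, 0)
print(unlocked_read, locked_read)
```

In this model the unlocked caller asked for rail 0 and received rail 1's reading, while the locked accessor returns the value for the page it selected. The point is only that the select-then-read pair has to be one critical section.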

Locking, not just locking more

A common mistake in kernel hardening is to assume that adding a mutex always improves safety. Often it does, but not if a path can be entered recursively or if a notifier chain can call back into the same subsystem. In those cases, more locking can actually create a harder failure than the race it was supposed to fix.

Here, the patch takes the more sophisticated route:

- Keep shared PMBus state protected by update_lock.

- Move notification delivery out of the critical section.

- Use a worker to serialize notification processing.

- Track queued events atomically so no updates are lost.

- Cancel the worker during device teardown to avoid lifecycle leaks.
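To see why the first two points in the list have to go together, here is a minimal user-space sketch of the naive approach, in which the accessor takes the lock and the fault path notifies listeners while still holding it. It is a Python model under stated assumptions: the function names and the state dictionary are hypothetical, and a short acquire timeout stands in for what would be an indefinite block on a real kernel mutex.

```python
import threading

update_lock = threading.Lock()  # non-reentrant, like a kernel mutex
state = {"millivolts": 1200}


def get_voltage(timeout=0.2):
    """Locked accessor; returns None if the lock never becomes available."""
    if not update_lock.acquire(timeout=timeout):
        return None  # a real kernel mutex would block here indefinitely
    try:
        return state["millivolts"]
    finally:
        update_lock.release()


def notify_listeners():
    # Stand-in for a notifier chain with one listener that queries the voltage.
    return get_voltage()


def naive_fault_handler():
    """Notifies listeners while still holding the lock: the hazardous shape."""
    with update_lock:
        return notify_listeners()


outside_lock = notify_listeners()   # lock is free, so the accessor succeeds
inside_lock = naive_fault_handler() # lock is held by the caller, so it cannot
print(outside_lock, inside_lock)
```

The same listener succeeds when called with the lock free and fails when called from inside the critical section, which is the self-deadlock the advisory describes.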

How the Deadlock Was Avoided

The deadlock angle is the most important nuance in the advisory. The vulnerable functions can be called from paths that already hold the update_lock, so a straightforward mutex insertion would have created self-deadlock. That means the original bug was not simply “missing lock”; it was “missing lock in a system where naive locking would be wrong.”

The worker design solves this by decoupling the notification operation from the locked path. Rather than calling regulator_notifier_call_chain() while still in a critical section, the kernel records that a notification is pending and lets a worker deliver it later. That preserves the logical event flow without nesting lock acquisition in the wrong order.

This kind of design shift is easy to underestimate because it does not look dramatic. There is no new subsystem, no new API, and no user-visible feature. But this is exactly how kernel maintainers often fix bugs that are both subtle and real: they reframe the sequencing problem so the code can be correct under concurrent execution.
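The record-now, deliver-later shape can be sketched in user space as follows. This is a Python model rather than the kernel implementation: a thread stands in for the worker, a thread-safe queue stands in for the pending-event record (the actual fix is described as using atomic per-page bitmasks), and every name here is hypothetical.

```python
import queue
import threading

update_lock = threading.Lock()
state = {"millivolts": 1200}
pending = queue.Queue()  # hypothetical stand-in for the pending-event record
delivered = []


def get_voltage():
    """Locked accessor, as in the fixed design."""
    with update_lock:
        return state["millivolts"]


def listener(event):
    # Re-enters a locked accessor; safe because the worker holds no lock here.
    delivered.append((event, get_voltage()))


def fault_handler(event, new_millivolts):
    """Mutates state and records the event, but never calls listeners itself."""
    with update_lock:
        state["millivolts"] = new_millivolts
        pending.put(event)


def notify_worker():
    """Runs in its own context and delivers one event with the lock released."""
    event = pending.get(timeout=2)  # blocks until an event has been recorded
    listener(event)


worker = threading.Thread(target=notify_worker)
worker.start()
fault_handler("under_voltage", 900)
worker.join(timeout=2)
print(delivered)
```

The listener still calls back into the locked accessor, but it now does so from a context that does not hold the lock, so the call completes instead of wedging.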

Why worker-based signaling is safer

A worker gives the kernel a place to do notification work when the lock is not held. That lowers the risk of recursive entry, makes callback behavior more predictable, and usually reduces the chance that unrelated code paths will deadlock the subsystem.

- Notifications can be queued first, dispatched later.

- The lock protects state mutation, not callback execution.

- The callback chain becomes less re-entrant.

- Teardown becomes more reliable because the worker has an explicit lifecycle.

- The kernel can preserve event ordering without holding the lock the entire time.

The Role of the Worker and Atomic Bitmask

The advisory notes that events are stored as atomics in a per-page bitmask and then processed by the worker. That design is telling, because it balances two competing needs: keep synchronization overhead minimal, and make sure no notification is lost when multiple events arrive close together. In kernel code, atomic flags are often the right tool when the event itself is simple and the expensive work can be deferred.

The per-page detail suggests the PMBus regulator model is page-aware, which is common in power-management hardware where each page maps to a logical output or rail. Storing pending events per page keeps the design local to the regulator object rather than inventing a more global event queue. That is a practical solution because it reduces cross-object contention and keeps the patch surgical.
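A per-page pending-event bitmask can be illustrated with a small user-space model. The sketch assumes two operations, an OR to post event bits and a swap-to-zero to drain them. Python has no atomic integers, so a private guard lock emulates atomicity here. The class, the method names, and the event bit values are placeholders chosen for illustration, not the kernel's constants.

```python
import threading

# Placeholder event bits; the values are illustrative, not the kernel's constants.
EVENT_UNDER_VOLTAGE = 0x1
EVENT_OVER_CURRENT = 0x2
EVENT_OVER_TEMP = 0x4


class PendingEvents:
    """Per-page pending-event masks with OR-to-post and swap-to-zero draining."""

    def __init__(self, num_pages):
        self._masks = [0] * num_pages
        self._guard = threading.Lock()  # emulates atomicity; not the driver lock

    def post(self, page, event_bits):
        with self._guard:
            self._masks[page] |= event_bits

    def drain(self, page):
        """Return the pending bits for a page and clear them in one step."""
        with self._guard:
            bits, self._masks[page] = self._masks[page], 0
        return bits


events = PendingEvents(num_pages=2)
events.post(0, EVENT_UNDER_VOLTAGE)
events.post(0, EVENT_OVER_CURRENT)
events.post(0, EVENT_UNDER_VOLTAGE)  # a repeat collapses into the same bit
events.post(1, EVENT_OVER_TEMP)

page0 = events.drain(0)
page1 = events.drain(1)
print(hex(page0), hex(page1), hex(events.drain(0)))
```

In this model, events for different pages never mix, and a second drain of the same page returns zero because the first drain cleared the mask. Repeated events of the same kind occupy a single bit, so the mask records which kinds of event are pending rather than how many times each arrived.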

The cleanup side is equally important. devm_add_action_or_reset() ensures that the worker and its associated data are torn down properly during device removal. Without that, a fix for one race could introduce a new lifecycle bug, especially in hotplug, probe-failure, or module-unload scenarios. Kernel maintainers tend to be very deliberate about this because cleanup-path mistakes can be just as harmful as the original issue.

Lifecycle safety is part of security

It is tempting to think of security fixes as only about the vulnerable path itself. In reality, the teardown path is often where bugs go to hide. If a worker or deferred operation can outlive the device state it refers to, a patch can trade a race condition for a stale-pointer or use-after-free problem.

The new cleanup path reduces that risk by making the worker’s lifetime explicit. That gives maintainers a stronger guarantee that notifications won’t outlive the device context they depend on. It is a quiet fix, but in kernel land, quiet is good.

- The worker preserves notification semantics without holding the mutex.

- Atomics reduce the need for extra contention.

- Per-page state keeps the logic localized.

- Cleanup hooks prevent dangling worker state.

- The design is more robust for probe, fault, and removal paths.
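The lifecycle point can also be modelled in user space. In the sketch below, a Python contextlib.ExitStack plays the role of a device-managed cleanup scope: the cancellation routine is registered when the worker starts and runs automatically when the scope closes. This is only an analogy for the devm-style registration described above; NotifyWorker, probe, and device_scope are hypothetical names.

```python
import contextlib
import threading


class NotifyWorker:
    """Hypothetical deferred worker that must not outlive its device."""

    def __init__(self):
        self._stop = threading.Event()
        self._thread = threading.Thread(target=self._run)

    def _run(self):
        # Idle until asked to stop; a real worker would deliver events here.
        self._stop.wait()

    def start(self):
        self._thread.start()

    def cancel_and_wait(self):
        """Stop the worker and block until it has fully finished."""
        self._stop.set()
        self._thread.join()

    def is_running(self):
        return self._thread.is_alive()


def probe(device_scope):
    """Start the worker and register its cancellation with the device scope."""
    worker = NotifyWorker()
    worker.start()
    device_scope.callback(worker.cancel_and_wait)  # runs when the scope closes
    return worker


with contextlib.ExitStack() as device_scope:
    worker = probe(device_scope)
    running_while_bound = worker.is_running()

running_after_removal = worker.is_running()
print(running_while_bound, running_after_removal)
```

Because cancellation is tied to the scope rather than to a hand-written teardown call, the worker is stopped and joined on every exit path from the block, including an exception raised inside it.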

Security and Stability Impact

This CVE sits in a category that often gets underestimated because it does not look like a classic exploit primitive. But concurrency bugs in kernel driver code are significant because they can undermine the predictability of the entire subsystem. If shared state is accessed inconsistently, one caller may see a value that another caller is in the middle of changing, and that can produce faults that are hard to reproduce and harder to diagnose.

For enterprise environments, the implications are mostly about uptime and correctness. Power-management code is deeply embedded in platform behavior, even if it is not front and center in daily operations. A flaw in this area can affect fault handling, regulator updates, or monitoring responsiveness, especially under stress or unusual hardware conditions.

For consumers, the direct exposure is usually lower, because most users never interact with PMBus or regulator internals consciously. But “low exposure” is not the same as “no exposure.” Systems with specialized hardware, embedded Linux deployments, or boards that depend heavily on PMBus-managed rails can still be affected in real-world use.

Consumer versus enterprise exposure

The consumer story is mostly about rarity of trigger conditions. The enterprise story is about breadth of deployment and the cost of unexpected failures in systems that are supposed to be boring. That difference matters when prioritizing patch cycles.

- Consumer systems are less likely to touch the affected path routinely.

- Enterprise and embedded systems may depend on the code more directly.

- Managed fleets can patch faster, but only after validation.

- Stability regressions can be costly in production environments.

- Power-management bugs are often invisible until hardware stress makes them obvious.

Why Microsoft Is Publishing a Linux Kernel CVE

It may seem odd to see a Linux kernel vulnerability in Microsoft’s update guide, but that is now a normal part of the vulnerability ecosystem. Microsoft’s security platform tracks open-source issues that matter to mixed fleets, cloud environments, and enterprise infrastructures that are not neatly separated into “Windows-only” and “Linux-only” worlds.

That matters because many organizations now manage Linux and Windows risks through the same operational lens. A single security team may be responsible for host hardening, container images, VM templates, appliance firmware, and cloud-based workloads. In that environment, the presence of a Linux CVE in Microsoft’s portal is not an anomaly; it is a signal that the issue belongs in a broader patch-management workflow.

This also reinforces a market reality: vulnerability publication is increasingly platform-agnostic, but remediation still depends on the underlying vendor tree. Microsoft can surface the issue, NVD can index it, and kernel.org can host the fix, but the actual patch path depends on downstream distributors and device vendors. That is a good thing for visibility, but it can also create confusion when teams assume there is a single “owner” for the whole lifecycle.

Ecosystem coordination is the real story

The modern security process is distributed. Upstream kernel maintainers land the fix, stable trees carry it, vendors backport it, and security portals publish it. That pipeline improves response speed when it works well.

- Microsoft’s portal improves cross-platform visibility.

- NVD helps normalize tracking and categorization.

- kernel.org provides the authoritative upstream fix.

- Downstream distributors handle packaging and rollout.

- Enterprises need to map all of that back to their own kernel builds.

What Administrators Should Do

Administrators should treat CVE-2026-31486 as a normal kernel maintenance item with a concurrency-sensitive fix, not as a mass-exploitation emergency. That does not make it trivial; it means the right response is structured validation, especially in environments where kernel updates are staged and regression-tested before broad deployment.

The first step is to identify whether your Linux builds include the upstream or backported PMBus fix. Because vendor kernels often backport changes without obviously changing the version string, version numbers alone are not enough. Confirm package-level inclusion, distribution advisories, or vendor changelog references rather than assuming a kernel version tells the whole story.

The second step is to assess exposure by hardware profile. If your fleet includes systems with PMBus-managed regulators, hardware monitoring subsystems, or specialized server boards, prioritize validation there first. If your environment does not use PMBus at all, the patch is still worth taking through normal maintenance windows, but the operational urgency is lower.

A practical patching checklist

- Confirm whether the kernel build includes the PMBus mutex/worker fix.

- Identify hosts with PMBus or regulator-heavy hardware.

- Review downstream vendor advisories for backport status.

- Stage the update in a test environment first.

- Watch for regressions in fault-handling or power-management behavior.

- Roll the fix into routine kernel maintenance if no issues appear.
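The first checklist item can be partly automated by scanning a kernel package changelog for references to the fix. The helper below is a minimal sketch: it takes changelog text as input (for example, the output of rpm -q --changelog for the installed kernel package on an RPM-based system) and looks for the CVE identifier or the upstream commit subject quoted in the advisory. The function name and the sample changelog entry are hypothetical.

```python
FIX_MARKERS = (
    "CVE-2026-31486",
    "hwmon: (pmbus/core) Protect regulator operations with mutex",
)


def changelog_mentions_fix(changelog_text):
    """Return the markers found in a kernel package changelog, ignoring case."""
    lowered = changelog_text.lower()
    return [marker for marker in FIX_MARKERS if marker.lower() in lowered]


# Hypothetical changelog excerpt, for illustration only.
sample = """\
* kernel 9.9.9-1 (hypothetical entry for illustration)
- hwmon: (pmbus/core) Protect regulator operations with mutex
- unrelated networking fix
"""

print(changelog_mentions_fix(sample))
print(changelog_mentions_fix("- unrelated networking fix\n"))
```

A match is a useful positive signal. An empty result is not proof that the fix is absent, since a vendor may reword the subject or omit the CVE identifier, so it should prompt a check of the vendor advisory rather than a conclusion.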

Strengths and Opportunities

The good news here is that the upstream community appears to have addressed the bug with a thoughtful fix rather than a brittle workaround. The patch removes the race, avoids the deadlock, and improves lifecycle handling all at once, which is a strong sign of kernel engineering done well. It also gives downstream vendors a clear target for backporting, which should help reduce ambiguity in enterprise patch workflows.

There is also a broader opportunity in how this CVE is handled. Cases like this reinforce the value of treating low-level reliability bugs as first-class security events, because the operational impact of a race condition can be just as serious as a more obvious vulnerability. That mindset helps teams build better triage habits and less brittle infrastructure.

- The fix is surgical rather than invasive.

- The deadlock avoidance is architecturally sound.

- The worker model improves clarity of execution.

- The patch should be backport-friendly for maintainers.

- Enterprises can integrate it into existing kernel maintenance cycles.

- The CVE improves visibility into niche but important hardware paths.

- The change strengthens confidence in concurrency-sensitive kernel code.

Risks and Concerns

The main concern is that the affected code sits in a privileged hardware-control path, which makes even a “mere” race condition operationally meaningful. If the bug is triggered in a specialized environment, the consequences may be subtle rather than catastrophic, but subtle bugs in power-management code are exactly the sort that can turn into long troubleshooting sessions. The fact that the fix required a worker redesign also tells you the interaction surface was more complex than a simple mutex omission.

Another concern is that patch visibility may lag in downstream kernels, especially where vendors heavily customize hardware support. Backports are not always one-to-one, and administrators may think they are safe because they have a vaguely current kernel version when in fact the relevant change has not landed. That is why build-level validation matters more than headline-level awareness.

- Kernel races can create hard-to-debug instability.

- Naive locking could have introduced deadlock instead of safety.

- Downstream backport timing may vary widely.

- Specialized hardware fleets may be more exposed than general-purpose desktops.

- Cleanup mistakes can create new lifecycle bugs.

- Vendor version strings may not reveal whether the fix is present.

- Operators may underestimate low-profile CVEs because they look minor.

Looking Ahead

The next thing to watch is how quickly downstream vendors absorb the PMBus fix into maintained kernel streams. Because the upstream description already explains both the race and the deadlock hazard, the patch should be relatively easy for maintainers to classify and backport. The real uncertainty is not technical comprehension; it is rollout speed.

A second thing to watch is whether the fix influences nearby driver patterns. Once maintainers solve a lock/notification interaction cleanly in one subsystem, it can become a reference point for adjacent code that has similar notifier-chain behavior. That kind of cross-pollination is one of the quiet advantages of upstream kernel development.

Finally, it will be worth watching whether this CVE nudges enterprise teams to pay closer attention to low-level power-management code during routine patch cycles. Many organizations already track network and filesystem CVEs closely, but hardware monitoring and regulator paths often receive less attention until something misbehaves. That is a habit worth changing.

- Track downstream backport status in your distribution or OEM kernel.

- Verify whether PMBus hardware exists in your fleet before prioritizing.

- Watch for similar notifier/lock patterns in related drivers.

- Review fault-handling and cleanup paths during kernel validation.

- Treat “low-severity-looking” kernel races as operationally relevant.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center