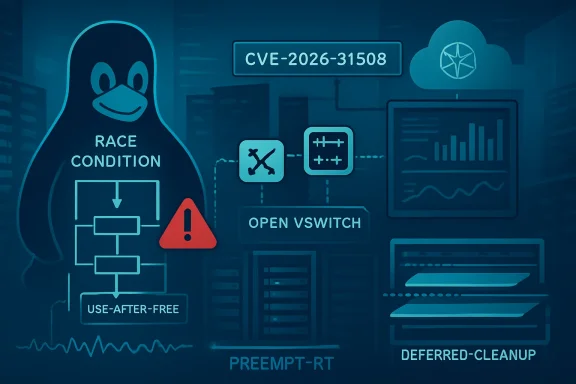

CVE-2026-31508 is a high-severity Linux kernel vulnerability, published April 22, 2026 and modified April 28, affecting Open vSwitch teardown paths where a network device can be freed before unregistration completes, particularly under PREEMPT_RT timing on kernels carrying the vulnerable change. The bug is not a Windows flaw, but it matters to WindowsForum readers because modern Microsoft estates routinely run Linux in Azure, Kubernetes, Hyper-V labs, WSL-adjacent tooling, and network virtualization stacks. This is the kind of vulnerability that looks small in a changelog and large in production, because the failure mode sits at the boundary between kernel lifetime rules and software-defined networking. The practical lesson is blunt: Linux kernel CVEs in the virtualization substrate are now Windows infrastructure stories, too.

Even where exploitation is not plausible, denial of service may be enough. A kernel crash on a networking host can bring down multiple workloads. In edge and telecom environments, a crash may not just interrupt an application; it may interrupt connectivity for downstream systems. Availability impact is not theoretical when the vulnerable code lives in the packet path’s supporting machinery.

The crash trace in the disclosure is a reminder that the first symptom may look like a spontaneous kernel oops during routine network administration. If a team recently saw unexplained crashes on PREEMPT_RT systems using OVS, CVE-2026-31508 should be part of the retrospective.

That is also why observability matters. Kernel logs, crash dumps, module lists, and command histories can turn a vague “the node rebooted” incident into a recognizable pattern. Without that evidence, teams may patch blindly or, worse, misattribute the outage to hardware.
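One way to make that retrospective concrete is to search saved kernel logs for the pattern the disclosure describes: a general protection fault with `dev_set_promiscuity` and Open vSwitch symbols nearby. The following is a minimal sketch, not a detection rule. The function name, the 60-line window, and the assumption that the log has already been saved as text (for example from `journalctl -k` or `dmesg`) are all illustrative, and a match is only a lead that still needs a human to read the trace.

```python
import re

# Matches the fault line regardless of capitalisation or log prefix.
GPF = re.compile(r"general protection fault", re.IGNORECASE)


def find_candidate_oopses(log_text: str, window: int = 60) -> list:
    """Return 1-based line numbers of general-protection-fault lines whose
    following `window` lines mention both dev_set_promiscuity and openvswitch.

    `window` is an arbitrary illustrative value, not a kernel constant.
    """
    lines = log_text.splitlines()
    hits = []
    for idx, line in enumerate(lines):
        if not GPF.search(line):
            continue
        # Look at the fault line plus the lines that follow it.
        context = "\n".join(lines[idx: idx + window])
        if "dev_set_promiscuity" in context and "openvswitch" in context:
            hits.append(idx + 1)
    return hits
```

A team could run this over archived logs from hosts that rebooted unexpectedly and then review each reported line number by hand. An empty result does not prove the host was unaffected, since logs may have been lost in the crash.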

The most concrete action is inventory. If the organization cannot quickly answer which systems run Open vSwitch, which kernels they run, and whether they are PREEMPT_RT or cloud/vendor variants, the vulnerability has already revealed a management gap. That gap will matter again.

For teams maintaining Microsoft-heavy environments, the presence of this CVE in MSRC should be treated as a routing signal. It says the issue may matter somewhere in the Microsoft ecosystem, not that every Microsoft customer is directly exposed. The job is to connect that signal to actual systems.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center

A Race Condition in the Plumbing Becomes a Platform Risk

Open vSwitch is one of those technologies that many admins touch indirectly. It is the virtual switch behind a great deal of Linux-based networking, cloud virtualization, container networking, and network function plumbing. When it works, it disappears; when it fails, packets stop being an abstraction and become an outage.

CVE-2026-31508 lives in the teardown path for OVS ports. The vulnerable sequence involves `ovs_netdev_detach_dev()`, `netdev_destroy()`, the `IFF_OVS_DATAPATH` flag, and the kernel’s RCU cleanup machinery. In plain English, one part of the kernel marks a network device as no longer belonging to an OVS datapath before the broader unregistration process is truly finished. Under the wrong timing, another path sees that flag cleared and proceeds to free the object.

That is the essence of a use-after-free-style kernel lifetime bug. The object is not conceptually finished being used, but memory management is already treating it as disposable. The crash trace included in the disclosure shows a general protection fault around `dev_set_promiscuity()`, with Open vSwitch functions in the call stack and a PREEMPT_RT kernel in play.

The PREEMPT_RT detail matters. Real-time kernels deliberately increase preemption opportunities to reduce scheduling latency. That can expose races that ordinary kernels may rarely hit, not because the race is fictional elsewhere, but because the timing window is narrower under a more conventional scheduler.

This is why the bug deserves more attention than a quick “local kernel crash” shrug. Timing bugs in kernel networking code often begin life as corner cases, then become operationally interesting when workloads, automation, and orchestration systems repeatedly exercise the same path at scale.

The CVSS Score Says High, but the Environment Says “It Depends”

The kernel.org CNA assigned CVE-2026-31508 a CVSS 3.1 base score of 7.8, with a local attack vector, low attack complexity, low privileges required, no user interaction, unchanged scope, and high impact across confidentiality, integrity, and availability. That combination is familiar to anyone who tracks kernel bugs: the attacker needs a foothold, but once they are local, the kernel boundary is the prize.

Still, CVSS is a starting point, not an incident plan. This bug is not remotely exploitable over the network in the usual sense. It is not a wormable SMB moment, and it is not a browser drive-by. An attacker would need local capability sufficient to exercise the affected networking paths, and many systems will not expose OVS teardown operations to ordinary users.
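For readers who want to see where the 7.8 comes from, the figure can be reproduced from the CVSS 3.1 base-score equations. The sketch below hard-codes the metric weights from the CVSS 3.1 specification for the values the CNA assigned (local vector, low complexity, low privileges, no user interaction, unchanged scope, high C/I/A). The function and constant names are illustrative, and only the scope-unchanged branch of the formula is implemented.

```python
import math

# Metric weights from the CVSS 3.1 specification for this CVE's vector:
# AV:L / AC:L / PR:L (scope unchanged) / UI:N / C:H / I:H / A:H
AV_LOCAL = 0.55
AC_LOW = 0.77
PR_LOW_UNCHANGED = 0.62
UI_NONE = 0.85
CIA_HIGH = 0.56


def roundup(value: float) -> float:
    """CVSS 3.1 Roundup: smallest number with one decimal that is >= value."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def base_score_scope_unchanged(av, ac, pr, ui, c, i, a) -> float:
    """Base score for the scope-unchanged case only."""
    iss = 1 - (1 - c) * (1 - i) * (1 - a)       # impact sub-score
    impact = 6.42 * iss
    exploitability = 8.22 * av * ac * pr * ui
    if impact <= 0:
        return 0.0
    return roundup(min(impact + exploitability, 10))


score = base_score_scope_unchanged(
    AV_LOCAL, AC_LOW, PR_LOW_UNCHANGED, UI_NONE, CIA_HIGH, CIA_HIGH, CIA_HIGH
)
print(score)  # 7.8
```

The impact term (about 5.87) dominates the exploitability term (about 1.83), which is the arithmetic behind the observation that a local foothold is the hard part and the kernel boundary is the prize.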

But “local” means something different in 2026 than it did in 2006. A local attacker may be a compromised container workload, a tenant with limited rights on a shared Linux host, a malicious CI job, or a foothold on a network appliance VM. In environments where Open vSwitch is part of the tenant or orchestration boundary, the difference between “local” and “operationally reachable” can get blurry.

The more conservative reading is that CVE-2026-31508 is primarily an availability and stability concern unless someone demonstrates a reliable privilege-escalation exploit. The more paranoid reading is that use-after-free patterns in kernel code deserve prompt patching because memory corruption bugs have a long history of evolving from crash bugs into exploit primitives.

Both readings can be true. For a lab machine, this may be routine kernel hygiene. For a virtualization host, Kubernetes node, NFV appliance, or real-time Linux system carrying Open vSwitch, it belongs higher in the queue.

Microsoft’s Name on the Page Is the New Normal

The MSRC entry is the part that will catch Windows admins’ eyes. Seeing a Linux kernel CVE in Microsoft’s Security Update Guide is no longer strange, but it still marks a shift in what “Microsoft security” means. Microsoft ships, hosts, supports, or integrates with enough Linux that the old mental boundary between Windows patching and Linux patching is obsolete.

Azure Linux, Microsoft Defender for Endpoint on Linux, Azure Kubernetes Service node images, Linux VMs, network appliances in Azure Marketplace, and cross-platform management tooling all pull Microsoft customers into the Linux vulnerability stream. Even if Microsoft is not the upstream maintainer of a kernel subsystem, the company has to tell customers when a third-party component may affect Microsoft products or services.

That does not mean every MSRC-listed Linux CVE applies to every Azure or Windows-adjacent environment. It means asset owners must stop treating the vendor name as the product boundary. The relevant question is not “Is this a Microsoft bug?” The better question is, “Do any of our Microsoft-managed, Microsoft-hosted, or Microsoft-integrated systems include an affected Linux kernel with Open vSwitch enabled or available?”

That distinction matters during Patch Tuesday triage. Windows teams are used to mapping CVEs to Windows Server, Exchange, SQL Server, Office, Edge, and Azure services. Linux kernel CVEs require a different map: kernel branch, distribution backport, cloud image lineage, module availability, workload role, and whether the vulnerable code is reachable.

The uncomfortable truth is that many Windows-first organizations do not have that map. They have VM inventories and EDR dashboards, but not always kernel branch visibility. CVE-2026-31508 is a reminder that hybrid infrastructure is only as manageable as its least-inventoried layer.

Open vSwitch Is Boring Until It Is the Control Plane

Open vSwitch is easy to underestimate because it is plumbing. It creates bridges, ports, tunnels, flows, and datapaths that make virtualized networking behave like a programmable fabric. In cloud and container environments, that fabric is not decorative; it is how segmentation, overlay routing, service exposure, and tenant isolation become real packets.

A teardown bug is especially interesting because teardown paths often receive less glamour than fast paths. Engineers optimize packet forwarding, flow lookup, and tunnel encapsulation because those paths dominate performance. But lifecycle code (create, attach, detach, unregister, destroy) carries the hard correctness problems of distributed systems in miniature.

The object lifetime here turns on a flag. `IFF_OVS_DATAPATH` tells the kernel whether the netdev is associated with an OVS datapath. Clearing that flag too early changes what another callback believes about the object’s state. Once `netdev_destroy()` sees the object as no longer belonging to the datapath, it can move toward RCU-based freeing before the rest of the unregistration logic is done.

This is a classic kernel design tension. Locks serialize state but can hurt performance, latency, or layering. RCU allows readers to proceed efficiently while reclamation is deferred. Real-time kernels push hard against long-held locks. The bug emerges in the gap between the theoretical lifecycle and the scheduler’s right to interrupt reality.

The fix, judging from the description, is not conceptually exotic: avoid releasing the network device before teardown completes. The significance lies in the fact that the previous cleanup change relaxed unconditional RTNL locking, and that made a latent ordering assumption unsafe. In kernel code, removing a lock is not merely a performance improvement; it is a renegotiation of time.
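The ordering problem is easier to see in a toy model. The sketch below is plain Python, not kernel code, and it does not reproduce the actual patch. Every name in it (`ToyNetdev`, `detach_clear_flag`, `destroy_buggy`, `destroy_fixed`, `finish_unregister`) is hypothetical. It plays out one fixed interleaving: the flag is cleared, a second path runs in the gap, and teardown then resumes. The "fixed" variant simply assumes the release is held back until teardown has completed, which is how the disclosure describes the remedy.

```python
from dataclasses import dataclass


class UseAfterFree(Exception):
    """Raised when the toy model touches a device that was already freed."""


@dataclass
class ToyNetdev:
    in_ovs_datapath: bool = True   # stands in for the IFF_OVS_DATAPATH flag
    unregistered: bool = False
    freed: bool = False

    def touch(self) -> None:
        if self.freed:
            raise UseAfterFree("device accessed after free")


def detach_clear_flag(dev: ToyNetdev) -> None:
    dev.in_ovs_datapath = False


def finish_unregister(dev: ToyNetdev) -> None:
    dev.touch()                    # teardown still dereferences the device
    dev.unregistered = True


def destroy_buggy(dev: ToyNetdev) -> None:
    # Frees as soon as the flag is clear, even if teardown is unfinished.
    if not dev.in_ovs_datapath:
        dev.freed = True


def destroy_fixed(dev: ToyNetdev) -> None:
    # Assumed remedy: only release once teardown has completed.
    if not dev.in_ovs_datapath and dev.unregistered:
        dev.freed = True


def run(destroy) -> str:
    dev = ToyNetdev()
    detach_clear_flag(dev)         # step 1: flag cleared early
    destroy(dev)                   # step 2: another path runs in the gap
    try:
        finish_unregister(dev)     # step 3: teardown resumes
    except UseAfterFree:
        return "use-after-free"
    destroy(dev)                   # release after teardown has finished
    return "clean teardown" if dev.freed else "leak"


buggy_result = run(destroy_buggy)
fixed_result = run(destroy_fixed)
print(buggy_result, "|", fixed_result)  # use-after-free | clean teardown
```

In the real kernel the interleaving is not scripted. It depends on where the scheduler preempts the teardown path, which is why a lock that used to cover both steps mattered.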

PREEMPT_RT Turns Rare Timing Into Repeatable Pain

The disclosure explicitly calls out `-rt` kernels, and admins should not skim past that. PREEMPT_RT exists to make Linux suitable for workloads that care about deterministic latency: industrial systems, telecom, audio, robotics, edge devices, and specialized financial or networking workloads. These are not always the biggest fleets, but they are often the least tolerant of kernel instability.

A race that “can happen” on PREEMPT_RT may have a very different risk profile from one that requires cosmic-ray timing on a stock distro kernel. More preemption points mean more opportunities for one teardown path to pause after clearing a flag while another callback advances. The failure is still timing-dependent, but the timing model has changed in favor of the bug.

That is why the crash trace matters. The reported system was a Dell PowerEdge R740 running a 6.12-based Enterprise Linux real-time kernel, with the `ip` command involved in the call path. This was not a hypothetical proof scribbled in a lab notebook. It looked like a production-shaped failure on recognizable server hardware.

For WindowsForum readers, the real-time angle may sound niche. But many Microsoft-heavy shops also run telecom gateways, storage appliances, industrial edge nodes, observability collectors, or Kubernetes workers that were not built by the Windows team but are still part of the service. If those systems use OVS and real-time kernels, they may be exactly where this bug moves from theoretical to noisy.

The larger pattern is familiar: kernel hardening and scheduler specialization expose assumptions in old code. That is not an indictment of PREEMPT_RT. It is proof that different timing models test different truths.

Affected Version Lists Are Useful but Not Sufficient

NVD’s change history lists affected Linux kernel CPE ranges across multiple stable lines, including branches such as 5.10, 5.15, 6.1, 6.6, 6.12, 6.18, 6.19, and 7.0 release candidates. The fixed thresholds vary by branch, with stable updates identified across the kernel.org patch references. That breadth reflects the way Linux kernel fixes are backported across maintained series.

For admins, the trap is assuming that upstream version numbers directly answer the enterprise question. Distributions routinely backport security fixes without changing the headline kernel version in the way upstream users expect. A system reporting a vulnerable-looking base version may be patched by vendor errata. A system reporting a newer custom kernel may still carry the bad change if it was built outside normal distro channels.

This is where Windows-style patch thinking can mislead. On Windows, monthly cumulative updates provide a relatively unified servicing story. On Linux, kernel security state depends on the distribution vendor’s packaging, errata, live patching, cloud image refreshes, and whether the node has actually rebooted into the fixed kernel. Installing the package is not the same as running it.

That last point is especially important for infrastructure nodes. Linux servers often accumulate updated kernels in `/boot` while continuing to run the old kernel for weeks because nobody wants to reboot the host. Live patching can help for some classes of flaws, but not every fix is live-patchable, and networking subsystem changes may still require conventional maintenance.

The correct operational test is therefore layered: identify systems with OVS present or loaded, determine the running kernel release, check the distribution’s advisory or package changelog for the CVE fix, and verify that the system has booted into the corrected kernel. Anything less is asset theater.
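The runtime half of that layered test can be sketched in a few lines of Python. The helper names below are hypothetical, and the sketch rests on stated assumptions: that the module appears as `openvswitch` in `/proc/modules`, that real-time builds include `PREEMPT_RT` in the kernel version string, and that kernel images in `/boot` are named `vmlinuz-<release>`, which is common but not universal. It gathers facts only. Whether a given release actually contains the fix still has to come from the vendor advisory or package changelog.

```python
import os
import platform
from pathlib import Path


def ovs_module_loaded(proc_modules_text: str) -> bool:
    """True if any /proc/modules line names the openvswitch module itself."""
    for line in proc_modules_text.splitlines():
        fields = line.split()
        if fields and fields[0] == "openvswitch":
            return True
    return False


def looks_like_preempt_rt(kernel_version_string: str) -> bool:
    """Heuristic: RT builds usually advertise PREEMPT_RT in `uname -v`."""
    return "PREEMPT_RT" in kernel_version_string


def other_installed_kernels(boot_entries, running_release: str) -> list:
    """Releases with an image in /boot that are not the running kernel.

    A non-empty result is only a hint that a reboot may be pending.
    """
    prefix = "vmlinuz-"
    installed = {e[len(prefix):] for e in boot_entries if e.startswith(prefix)}
    return sorted(installed - {running_release})


def collect_local_facts() -> dict:
    """Gather the facts from the local host, tolerating missing paths."""
    modules = Path("/proc/modules")
    modules_text = modules.read_text() if modules.exists() else ""
    try:
        boot_entries = os.listdir("/boot")
    except OSError:
        boot_entries = []
    running = platform.release()          # same value as `uname -r`
    return {
        "running_release": running,
        "ovs_loaded": ovs_module_loaded(modules_text),
        "preempt_rt": looks_like_preempt_rt(platform.version()),
        "other_installed": other_installed_kernels(boot_entries, running),
    }


if __name__ == "__main__":
    print(collect_local_facts())
```

Keeping the parsing functions separate from the file reads makes them easy to run against data collected remotely, which is how most fleets would use them.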

The Exploit Story Is Still Incomplete

At the time of the disclosed details, the public record describes the bug and the crash pattern but does not establish a widely available exploit. That matters. A kernel general protection fault is not automatically a privilege escalation exploit, even when CVSS models confidentiality, integrity, and availability as high. Exploitability depends on allocator behavior, object reuse, control over timing, and whether the freed structure can be shaped in a useful way.

Security vendors often describe kernel use-after-free issues as potential privilege escalation, and that is a reasonable class-level concern. But operational response should distinguish between “memory safety bug in privileged code” and “reliable local root exploit in the wild.” The former requires patching; the latter requires emergency containment.

The absence of a public exploit should not become an excuse for inaction. Kernel bugs have a habit of aging poorly. Researchers learn the object layout, attackers test distribution kernels, and proof-of-concept code appears after defenders have already decided the issue was boring. By the time the exploit narrative is obvious, the maintenance window has often passed.

The best stance is boring and disciplined. Treat CVE-2026-31508 as a high-priority kernel update for OVS-capable hosts, with extra urgency for PREEMPT_RT, virtualization, cloud networking, and multi-tenant environments. Do not treat it as an internet-facing mass exploitation event unless new evidence appears.

That is not hedging; it is adult vulnerability management. Panic and complacency are both bad patch strategies.

Windows Admins Inherited the Linux Kernel Whether They Wanted It or Not

The modern Windows estate is not Windows-only. Hyper-V hosts may run Linux guests. Azure tenants may rely on Linux appliances. Developers may build containers on Linux nodes while authenticating through Microsoft Entra ID. Security teams may manage Linux endpoints through Microsoft tooling. The procurement system may still call the environment “Microsoft,” while the outage root cause says `openvswitch`.

This is where CVE-2026-31508 becomes culturally interesting. It is a Linux kernel race in an OVS teardown path, yet it arrives in the inboxes of administrators who may spend most of their day thinking about Windows Server, Intune, Defender, and Azure policy. Hybrid infrastructure has collapsed the old specialization boundary faster than many organizations have updated their patch process.

The right response is not to turn every Windows admin into a kernel networking developer. It is to make sure the organization knows who owns Linux kernel patching when the Linux system is part of a Microsoft-delivered service chain. If an AKS node image, Azure-hosted appliance, or Linux-based network component is vulnerable, the accountable team cannot be discovered during the incident call.

This is especially true for Open vSwitch because it often sits below the application team’s awareness. Developers know their container failed. Windows admins know the Azure service is degraded. Network engineers know flows are missing. The actual bug may be in a host kernel module that none of those teams explicitly claimed.

CVE-2026-31508 is a small case study in why asset management has to include function, not just operating system. “Linux VM” is not enough. “Linux VM running OVS as part of tenant networking on a real-time kernel” is the level of detail that changes priority.

The Patch Is Simple; the Rollout Is Not

The fix itself is upstream and has been backported across stable kernel lines. That is the easy part. The hard part is getting the fix into the exact running environments that matter without breaking the very networking layer you are trying to protect.

Kernel updates on OVS hosts deserve a little more choreography than ordinary package churn. These systems may be carrying overlay networks, bridges, tunnels, and datapath state that other workloads assume will persist. A reboot can be routine, but a badly sequenced reboot of network nodes can become a self-inflicted outage.

For enterprise teams, the best patch plan starts with classification. Internet exposure is not the deciding factor here; local reachability and role are. A single-user workstation with an unused OVS module is different from a Kubernetes worker in a multi-tenant cluster. A PREEMPT_RT edge node with OVS-backed networking deserves more urgency than a lab VM where OVS is installed but inactive.
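That classification can be written down as a small rule so it is applied the same way across an inventory. The sketch below is illustrative only. The `HostRole` fields and the three priority labels are hypothetical and simply encode the comparisons in the paragraph above. A real policy would need more inputs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HostRole:
    name: str
    ovs_in_use: bool               # OVS actually carries traffic on this host
    preempt_rt: bool               # host runs a real-time kernel
    shared_or_multi_tenant: bool   # other tenants or workloads share the host


def patch_priority(host: HostRole) -> str:
    """Map a host's role to an illustrative patch priority label."""
    if not host.ovs_in_use:
        return "routine"           # installed-but-inactive OVS: normal hygiene
    if host.preempt_rt or host.shared_or_multi_tenant:
        return "urgent"            # timing-sensitive or shared infrastructure
    return "high"                  # OVS in use, single tenant, stock kernel


fleet = [
    HostRole("lab-vm", ovs_in_use=False, preempt_rt=False, shared_or_multi_tenant=False),
    HostRole("k8s-worker", ovs_in_use=True, preempt_rt=False, shared_or_multi_tenant=True),
    HostRole("rt-edge", ovs_in_use=True, preempt_rt=True, shared_or_multi_tenant=False),
]
priorities = {h.name: patch_priority(h) for h in fleet}
print(priorities)
```

Internet exposure is deliberately absent from the inputs, matching the point that role and local reachability drive priority for this bug.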

Next comes vendor mapping. Red Hat, Ubuntu, SUSE, Debian, Azure Linux, appliance vendors, and cloud image providers may ship the same upstream fix under different package names and kernel release strings. The only reliable answer is the vendor advisory or package changelog for the deployed distribution, followed by a check of the running kernel after reboot.

Finally, teams should inspect automation. If infrastructure-as-code templates or golden images pin old kernels, patching current nodes is only half the job. New vulnerable nodes can be created tomorrow by yesterday’s image. Kernel CVEs are notorious for returning through autoscaling groups, stale VM images, and disaster recovery templates.

The CPE Question Shows the Limits of Machine-Readable Security

The NVD record includes CPE configurations and even invites feedback if a CPE is missing. That is not a trivial footnote. CPEs are the glue for scanners, dashboards, compliance exports, and executive risk charts. When they are incomplete, too broad, or mismatched to distribution backports, the machinery produces either false comfort or false alarms.

Linux kernel CPEs are particularly awkward. The upstream kernel is one thing; a vendor-maintained enterprise kernel is another; a cloud-tuned kernel is another still. A CPE range can say “affected,” but a distribution may have backported the fix. A scanner may flag the base version while the vendor security state is clean. Or a scanner may miss a custom kernel that does not identify cleanly.

This is why the phrase “Are we missing a CPE here?” is more than bureaucracy. It reflects a structural problem in vulnerability management: machine-readable identifiers are necessary, but they are not the same as ground truth. They have to be reconciled with vendor advisories, package metadata, and runtime facts.

Security teams should resist the temptation to resolve CVE-2026-31508 purely inside a scanner console. Scanner output can identify candidates. It cannot always answer whether a given host is actually running the vulnerable code path, whether OVS is loaded, whether the vendor has backported the fix, or whether a reboot is pending.
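The runtime facts a scanner cannot see can often be gathered on the host itself. The Python sketch below makes several assumptions: that OVS is built as a loadable module named `openvswitch` (a built-in datapath would not appear in `/proc/modules`), that a real-time kernel advertises `PREEMPT_RT` in its `uname -v` version string, and that `fixed_release` is a release string taken from the vendor advisory. The helper names are illustrative, not part of any tool.

```python
import platform
from pathlib import Path


def ovs_module_loaded(proc_modules_text: str) -> bool:
    """True if a module named 'openvswitch' is listed in /proc/modules text."""
    # The first whitespace-separated field on each line is the module name.
    return any(
        line.split()[0] == "openvswitch"
        for line in proc_modules_text.splitlines()
        if line.strip()
    )


def is_preempt_rt(kernel_version_text: str) -> bool:
    """True if the kernel version string (as in `uname -v`) mentions PREEMPT_RT."""
    return "PREEMPT_RT" in kernel_version_text


def running_matches_fixed(running_release: str, fixed_release: str) -> bool:
    """Exact comparison of the running release against the advisory's fixed release."""
    return running_release == fixed_release


def collect_runtime_facts(fixed_release: str) -> dict:
    """Gather the facts above for the local host (Linux only)."""
    modules = Path("/proc/modules")
    modules_text = modules.read_text() if modules.exists() else ""
    return {
        "running_release": platform.release(),
        "ovs_module_loaded": ovs_module_loaded(modules_text),
        "preempt_rt": is_preempt_rt(platform.version()),
        "running_fixed_kernel": running_matches_fixed(platform.release(), fixed_release),
    }


if __name__ == "__main__":
    # "release-fixed" is a placeholder; use the string from the vendor advisory.
    print(collect_runtime_facts("release-fixed"))
```

A host that has the fixed package installed but still reports `running_fixed_kernel` as false is the reboot-pending case: patched on disk, vulnerable in memory.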

The more mature workflow treats CPE data as a lead, not a verdict. That is slower than clicking “export CSV,” but it is how kernel CVEs get handled without wasting cycles or missing the systems that matter.

The Real Blast Radius Is in Shared Infrastructure

For individual Linux desktops, this bug is probably not the crisis of the week. For shared infrastructure, it deserves respect. The kernel networking layer is a privilege boundary, a reliability boundary, and often a tenancy boundary. Open vSwitch may be carrying traffic for workloads whose owners cannot see or control the host kernel.

The most concerning environments are those where untrusted or semi-trusted users can influence network device lifecycle events. That might include container platforms with delegated network operations, CI systems with elevated network namespace capabilities, lab environments where users can create and destroy OVS ports, or appliances that expose network configuration to operators with limited shell access.

Even where exploitation is not plausible, denial of service may be enough. A kernel crash on a networking host can bring down multiple workloads. In edge and telecom environments, a crash may not just interrupt an application; it may interrupt connectivity for downstream systems. Availability impact is not theoretical when the vulnerable code lives in the packet path’s supporting machinery.

The crash trace in the disclosure is a reminder that the first symptom may look like a spontaneous kernel oops during routine network administration. If a team recently saw unexplained crashes on PREEMPT_RT systems using OVS, CVE-2026-31508 should be part of the retrospective.

That is also why observability matters. Kernel logs, crash dumps, module lists, and command histories can turn a vague “the node rebooted” incident into a recognizable pattern. Without that evidence, teams may patch blindly or, worse, misattribute the outage to hardware.
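As a starting point for that kind of retrospective, the Python sketch below scans saved kernel log lines for a general protection fault whose nearby trace contains a frame from the `openvswitch` module. The two markers are assumptions: the fault text comes from the disclosure's description, and `[openvswitch]` relies on the usual kernel convention of tagging module symbols in stack traces. The window size is arbitrary. A hit marks a candidate for review, not a confirmed instance of this CVE.

```python
def find_suspect_faults(log_lines: list[str], window: int = 40) -> list[int]:
    """Return indexes of fault lines followed closely by an openvswitch frame.

    log_lines: kernel log lines, e.g. from a saved dmesg or journal export.
    window:    how many lines after the fault to search for a module frame.
    """
    hits = []
    for index, line in enumerate(log_lines):
        if "general protection fault" not in line.lower():
            continue
        # Look only at the lines that plausibly belong to this trace.
        context = log_lines[index:index + window]
        if any("[openvswitch]" in entry for entry in context):
            hits.append(index)
    return hits


if __name__ == "__main__":
    import sys

    # Usage: python find_faults.py saved-kernel.log
    with open(sys.argv[1], encoding="utf-8", errors="replace") as handle:
        lines = handle.read().splitlines()
    for hit in find_suspect_faults(lines):
        print(f"line {hit + 1}: {lines[hit]}")
```

Matching on the bracketed module tag rather than the bare word avoids flagging every fault on a host that merely has the module loaded, since the "Modules linked in" line lists module names without brackets.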

The Practical Reading for Patch Queues This Week

CVE-2026-31508 should land in the patch queue as a high-severity Linux kernel issue with a narrow but important exposure profile. It is not a Windows desktop emergency, and it is not a reason to panic-reboot every Linux VM in the estate. It is a reason to find OVS-capable hosts, especially real-time and shared networking systems, and move them toward fixed kernels with deliberate speed.

The most concrete action is inventory. If the organization cannot quickly answer which systems run Open vSwitch, which kernels they run, and whether they are PREEMPT_RT or cloud/vendor variants, the vulnerability has already revealed a management gap. That gap will matter again.

For teams maintaining Microsoft-heavy environments, the presence of this CVE in MSRC should be treated as a routing signal. It says the issue may matter somewhere in the Microsoft ecosystem, not that every Microsoft customer is directly exposed. The job is to connect that signal to actual systems.

- Systems running Open vSwitch on affected Linux kernel branches should be prioritized for vendor kernel updates, especially if they are shared, multi-tenant, or network-critical.

- PREEMPT_RT kernels deserve extra attention because the disclosed race is specifically described as reachable when preemption occurs after the OVS datapath flag is cleared.

- Scanner findings based on upstream kernel CPEs should be validated against distribution advisories and running kernel versions before teams declare victory or panic.

- Installing a fixed kernel package is not enough if the host has not rebooted or otherwise activated the corrected kernel.

- Golden images, autoscaling templates, and appliance baselines should be refreshed so newly created systems do not reintroduce the vulnerable kernel later.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center