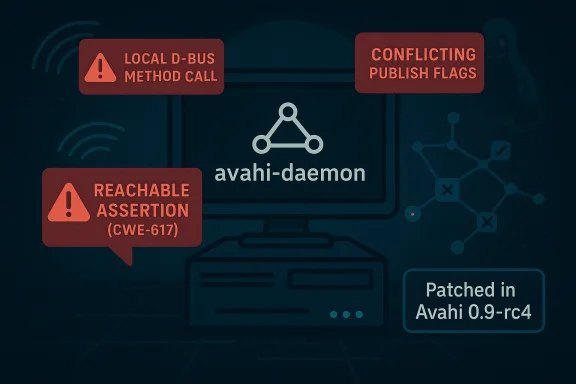

CVE-2026-34933 is another reminder that availability bugs in mDNS/DNS-SD infrastructure can be just as operationally painful as memory corruption in more glamorous code paths. In Avahi, a single local D-Bus method call with conflicting publish flags can trip a reachable assertion in transport_flags_from_domain(), crash avahi-daemon, and knock out service discovery for the affected machine. The flaw is tracked as a denial-of-service issue with a CWE-617 reachable assertion classification, and it is patched upstream in Avahi 0.9-rc4. (security.snyk.io)

Background

Avahi sits in a deceptively ordinary corner of the Linux stack. It implements multicast DNS and DNS-SD service discovery, the plumbing that lets devices find printers, speakers, file shares, and other local-network services without a central directory. On desktops it often feels invisible; on servers, containers, and mixed-platform networks, it can be the difference between seamless discovery and a baffling “it worked yesterday” outage. (security.snyk.io)

What makes Avahi security-sensitive is not that it is a target for glamorous remote exploits, but that it is part of the always-on service coordination layer. If avahi-daemon goes down, clients may not be able to browse services, advertise printers, or resolve local names in the way users expect. In an enterprise, that can translate into support calls, failed onboarding flows, broken AirPrint printing, and confusing partial outages that look like “network weirdness” rather than a cleanly labeled security incident. (sentinelone.com)

The immediate pattern here is familiar to anyone who has tracked Avahi bugs over the last few years: a small logic flaw in a service-discovery code path becomes a service-wide crash condition. Snyk’s listing shows CVE-2026-34933 as a reachable assertion affecting Avahi packages, and its NVD summary says that prior to 0.9-rc4, an unprivileged local user can crash avahi-daemon with one D-Bus call that uses conflicting publish flags. (security.snyk.io)

That is important because the attack does not require clever packet crafting or sustained pressure. It is a single-shot availability kill switch once an attacker has local access and the ability to talk to the system D-Bus. That makes the vulnerability less about brute force and more about trust boundaries: code that assumed well-formed publish parameters is forced to confront an adversarial caller. (security.snyk.io)

The issue also fits a broader pattern in open-source infrastructure software: defensive assertions are invaluable during development, but dangerous when they remain reachable in production if user-controlled input can still drive execution into them. In other words, the bug is not just that validation was missing. It is that the program’s internal “this should never happen” logic was still exposed to attacker-controlled state. (sentinelone.com)

What CVE-2026-34933 Actually Does

At a high level, this CVE is a local denial-of-service flaw. An unprivileged local user can issue a crafted D-Bus request to Avahi that contains incompatible publish flags, and the daemon falls into an assertion failure rather than rejecting the request cleanly. SentinelOne summarizes the result plainly: the attacker can crash the service and interrupt local discovery operations. (sentinelone.com)

The specific failure point, as described in the CVE title and summaries, is transport_flags_from_domain(). That function appears to be part of the logic that maps a requested service-publishing domain to transport behavior. The critical mistake is not merely that the function exists; it is that conflicting publish flags are allowed to reach it in a state that violates internal assumptions. When the assumptions fail, the daemon aborts. (sentinelone.com)

Why the assertion is “reachable”

A reachable assertion is more than a crash label. It means code that was probably intended as a sanity check in a happy-path routine can be triggered from outside the trusted core. That is why CVE-2026-34933 is mapped to CWE-617, the standard bucket for reachable assertions. The vulnerability is exploitable not because the assertion itself is exotic, but because the input validation upstream of it is insufficient. (security.snyk.io)

The interesting part is the shape of the exploit. The attacker does not need to chain together multiple calls, exhaust memory, or race the scheduler. Snyk’s advisory states that a single D-Bus method call is enough. That simplicity matters: one malformed request is often easier to automate, more reliable across environments, and harder to distinguish from benign local traffic than a long, noisy attack sequence. (security.snyk.io)

Key consequences:

- One local call can crash the daemon.

- The failure is tied to conflicting publish flags.

- The result is a service outage, not code execution.

- The weakness sits in a production-reachable assertion path. (security.snyk.io)
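
The reachable-assertion pattern can be sketched in a few lines. The Python below is an illustration only, not Avahi’s code: the flag names TRANSPORT_A and TRANSPORT_B, the helper pick_transport(), and the request handler are hypothetical stand-ins, since the advisories do not spell out the exact flags involved. It shows how an internal sanity check turns into a crash vector once caller-supplied bits reach it unchecked.

```python
from enum import IntFlag


class PublishFlags(IntFlag):
    """Hypothetical publish flags; these are NOT Avahi's real constants."""
    NONE = 0
    TRANSPORT_A = 1
    TRANSPORT_B = 2


def pick_transport(flags: PublishFlags) -> str:
    # Internal invariant: the two transport flags are mutually exclusive.
    # The explicit raise stands in for a C assert(), which would abort()
    # the whole daemon process instead of raising an exception.
    if PublishFlags.TRANSPORT_A in flags and PublishFlags.TRANSPORT_B in flags:
        raise AssertionError("conflicting transport flags")
    return "b" if PublishFlags.TRANSPORT_B in flags else "a"


def handle_publish_request(raw_flags: int) -> str:
    # Vulnerable shape: caller-supplied bits go straight to the helper
    # with no validation in between.
    return pick_transport(PublishFlags(raw_flags))


def crashes(raw_flags: int) -> bool:
    """Report whether one request with these flags trips the invariant."""
    try:
        handle_publish_request(raw_flags)
    except AssertionError:
        return True
    return False
```

Calling crashes(3) sets both hypothetical flags at once and trips the check on the first request. In Python that surfaces as an exception; in a C daemon a failed assert() ends the process, which is why a single call is enough.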

Why local access still matters

Some readers will glance at “local” and discount the issue. That would be a mistake. Modern desktops, shared lab systems, VDI hosts, jump boxes, thin clients, kiosk environments, and multi-user Linux systems still have meaningful local trust boundaries. If an unprivileged user can crash avahi-daemon, they can degrade name resolution or service advertising for everyone on that machine. (security.snyk.io)

In practical terms, that can become a persistence-of-pain problem. Even if the daemon restarts automatically, the attacker can repeat the call and keep the service unstable. On a box that depends on service discovery for printers, media devices, or local coordination, the user impact can look like network unreliability while the root cause is actually a security flaw. That distinction matters for triage. (sentinelone.com)

How the Bug Fits Avahi’s Security Pattern

Avahi has a history of availability bugs that are easier to trigger than they are to explain. The repository ecosystem around the daemon has already seen other reachable assertion advisories, which suggests a recurring risk category: stateful service-discovery logic that assumes inputs will be well-formed. The companion advisories also point to earlier Avahi CVEs with a similar denial-of-service character, reinforcing that this is not a one-off coding slip. (sentinelone.com)

The pattern is especially visible in software that bridges userland applications with system-level discovery features. A D-Bus interface may be local, but it is still an API surface. Once an API is public within the machine, the application has to behave as if its caller is hostile. If any code path still uses assertions as a substitute for input validation, the daemon can become fragile under pressure. (security.snyk.io)

The difference between a bug and a crash vector

Many software bugs degrade quality. This one degrades trust. A crash in a discovery daemon is not just a process exit; it is the loss of a coordination service that other software quietly depends on. When the daemon disappears, the blast radius can spread beyond the immediate user session and into print services, network browsing, and any application that expects Avahi to be present. (sentinelone.com)

That is why the CVE’s availability wording is stronger than it might first appear. The issue can produce complete denial of service for the affected component, and the affected component is often a singleton service on the host. In an environment with repeated exploitation, the impact becomes persistent and operationally noisy rather than merely intermittent. (security.snyk.io)

Why this keeps happening

There is a structural reason bugs like this keep emerging in mature infrastructure projects. The code tends to evolve around protocol details, compatibility constraints, and multiple front-ends. Over time, a small sanity check that was once effectively unreachable can become user-triggerable as new features, flags, or integration paths are added. That is the quiet danger of living codebases. (sentinelone.com)

A second reason is that service discovery daemons often operate in a mixed privilege environment. They must accept inputs from privileged components, semi-trusted local applications, and automation tools. The more flexible the interface, the more careful the validation needs to be. CVE-2026-34933 is what happens when the validation trail is shorter than the trust boundary. (security.snyk.io)

- Service-discovery code often sits in a high-trust local API role.

- Assertions are not a substitute for rejecting bad input.

- Compatibility pressure can create fragile parameter handling.

- Repeated local crashes can create a persistent outage pattern. (sentinelone.com)

A Closer Look at the Attack Surface

The attack surface here is narrower than a network worm, but it is still meaningful. The exploit path is through D-Bus, which means the attacker needs local execution and access to the system message bus. That is an important constraint, but not a comforting one in shared systems where unprivileged accounts are common. (security.snyk.io)

The practical problem is that the malicious request does not have to be sophisticated. The NVD summary in Snyk’s page says a single D-Bus method call with conflicting publish flags is sufficient. That is the sort of failure mode that can be scripted quickly, tested repeatedly, and adapted easily across distributions with slightly different packaging. (security.snyk.io)

D-Bus as a trust boundary

D-Bus is one of those Unix technologies that feels local and therefore safe, but safety is not the same as trustworthiness. System buses often carry privileged requests, policy decisions, and service activation logic. If a daemon treats a D-Bus caller as “friendly enough,” an unprivileged user can become the trigger for a system-level availability event. (sentinelone.com)

That is why policy hardening still matters even when a fix exists. Administrators can reduce risk by limiting which accounts can reach Avahi interfaces, applying MAC policies such as SELinux or AppArmor where appropriate, and keeping the attack surface as small as possible. SentinelOne’s mitigation section specifically recommends restricting D-Bus access, applying sandboxing policies, and disabling avahi-daemon where service discovery is unnecessary. (sentinelone.com)

Local user impact versus multi-user systems

On a personal laptop, the impact may look like a transient annoyance. On a multi-user machine or workstation-class system, the same crash can affect everyone sharing the host. That is a very different risk profile, especially in classrooms, labs, remote desktop farms, and engineering environments where Avahi may be running even if users are unaware of it. Local is not the same as harmless. (security.snyk.io)

A few operational points stand out:

- The exploit path is local, not remote.

- The attacker needs low privileges, not root.

- The damage is to availability, not secrecy or integrity.

- The failure can be repeated to keep the daemon down. (security.snyk.io)

What the Patch Changes

The fix lands in Avahi 0.9-rc4, which both Snyk and the SentinelOne writeup identify as the patched release. The core remedial action is straightforward: validate the publish flags properly before processing the D-Bus method call, so the daemon does not advance into the assertion failure state. (security.snyk.io)

That matters because the best security fixes often look boring. There is no new crypto, no elaborate sandbox, no dramatic refactor. Instead, the code now handles malformed or conflicting input as input validation failure, which is exactly where it should have been in the first place. A good patch often removes the possibility of reaching a dangerous internal invariant at all. (sentinelone.com)

Why the fix is preferable to papering over the crash

There are two basic ways to deal with a reachable assertion: remove the assertion or stop untrusted input from reaching it. The latter is usually the right answer when the assertion enforces a meaningful invariant. Here, the invariant is about publish flag compatibility, so proper validation upstream is cleaner than weakening the check downstream. (sentinelone.com)

This approach also improves maintainability. If the code later evolves to support more combinations or new transport semantics, the validation layer can be updated without relying on crash-prone assumptions. In other words, the fix makes the API behavior explicit rather than implicit. That is a better long-term posture for a daemon that sits at the center of discovery logic. (sentinelone.com)
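
The “validate upstream, keep the invariant” approach can be sketched as follows. This is a minimal Python illustration under stated assumptions, not the actual Avahi patch: the flag names, InvalidFlagsError, validate_flags(), and the handler are all hypothetical. The point is only that conflicting or unknown bits are turned into an ordinary request error at the API boundary, so the internal check is never reached with bad state.

```python
from enum import IntFlag


class PublishFlags(IntFlag):
    """Hypothetical publish flags; these are NOT Avahi's real constants."""
    TRANSPORT_A = 1
    TRANSPORT_B = 2


class InvalidFlagsError(ValueError):
    """Reported back to the caller as a normal request failure."""


KNOWN_BITS = PublishFlags.TRANSPORT_A | PublishFlags.TRANSPORT_B


def validate_flags(raw_flags: int) -> PublishFlags:
    # Reject negative values and bits outside the known set.
    if raw_flags < 0 or raw_flags & ~int(KNOWN_BITS):
        raise InvalidFlagsError(f"unknown flag bits: {raw_flags:#x}")
    flags = PublishFlags(raw_flags)
    # Reject the conflicting combination before any internal code sees it.
    if flags == KNOWN_BITS:
        raise InvalidFlagsError("transport flags are mutually exclusive")
    return flags


def pick_transport(flags: PublishFlags) -> str:
    # The invariant stays in place; validated callers can no longer trip it.
    if flags == KNOWN_BITS:
        raise AssertionError("conflicting transport flags")
    return "b" if PublishFlags.TRANSPORT_B in flags else "a"


def handle_publish_request(raw_flags: int) -> str:
    try:
        flags = validate_flags(raw_flags)
    except InvalidFlagsError as exc:
        return f"error: {exc}"
    return "ok: transport " + pick_transport(flags)
```

With this shape, handle_publish_request(3) returns an error string instead of ending the process, and adding a new flag later means updating KNOWN_BITS and validate_flags() rather than hoping the invariant holds.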

Upstream and downstream timing

The upstream fix is important, but distro timing matters just as much. Snyk’s Debian 12 page notes that there is no fixed version for that distribution in the package record it tracks, which is a reminder that downstream users often have to wait for vendor packaging even after upstream has shipped the patch. That lag can be the difference between a short advisory window and a long one. (security.snyk.io)

Administrators should therefore look beyond the source project version number and verify their distribution’s security tracker, package changelog, and update cadence. When dealing with Avahi, the package name may stay constant while the effective fix date shifts depending on the vendor. That is a classic Linux security reality, not a special exception. (security.snyk.io)

- Patched upstream in 0.9-rc4.

- Validation now happens before the dangerous code path.

- Downstream package availability may still vary by distribution.

- Administrators should verify vendor-specific fixed builds. (security.snyk.io)
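
For systems running an unmodified upstream build, a rough version check against 0.9-rc4 can be sketched as below. The parsing rules are an assumption (dotted numbers with an optional -rcN suffix, release candidates sorting before the final release), and the function names are hypothetical. The sketch deliberately refuses distribution package strings, because a vendor may backport the fix into an older upstream version, and that can only be confirmed from the vendor’s own tracker.

```python
import re

FIXED_UPSTREAM = "0.9-rc4"  # patched upstream release named in the advisories


def parse_upstream(version: str) -> tuple:
    """Turn '0.9-rc4' into ((0, 9), 0, 4) and '0.9' into ((0, 9), 1, 0)."""
    match = re.fullmatch(r"(\d+(?:\.\d+)*)(?:-rc(\d+))?", version.strip())
    if match is None:
        # Distro-style strings land here on purpose: backports make a plain
        # upstream comparison unreliable for vendor packages.
        raise ValueError(f"unrecognised upstream version: {version!r}")
    numbers = tuple(int(part) for part in match.group(1).split("."))
    if match.group(2) is None:
        return (numbers, 1, 0)  # a final release sorts after its candidates
    return (numbers, 0, int(match.group(2)))


def upstream_is_patched(version: str) -> bool:
    """True if an upstream version string is at or beyond the fixed release."""
    return parse_upstream(version) >= parse_upstream(FIXED_UPSTREAM)
```

A call such as upstream_is_patched("0.8") answers the upstream question only; for packaged builds the vendor advisory remains the source of truth.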

Operational Impact for Enterprises

For enterprises, the question is not whether Avahi is fashionable. The question is whether it is present anywhere in the fleet where discovery, printer visibility, or local device discovery matters. On desktops and shared workstations, Avahi often exists because users expect local devices to “just work.” When it crashes, help desks may see printer failures, service browsing failures, or confusing intermittent discovery problems. (sentinelone.com)

The business impact is amplified by the fact that this is an availability problem, not an intrusion problem. Security teams often prioritize data theft and privilege escalation, but persistent service outages can be equally disruptive when they block productivity tools, on-prem services, or essential peripherals. A denial-of-service bug in a discovery daemon can become an SLA issue very quickly. (security.snyk.io)

Where the blast radius is largest

The biggest concern is not a single laptop. It is a fleet with consistent software images where Avahi is deployed broadly and local users are not fully trusted. Think engineering labs, VDI environments, shared kiosks, thin-client deployments, and classroom workstations. In those settings, one user’s ability to crash a system service can become everyone else’s problem. (security.snyk.io)

There is also a monitoring issue. A daemon that crashes and restarts can blend into routine noise unless the logs are reviewed carefully. SentinelOne recommends looking for unexpected avahi-daemon crashes, assertion failures, D-Bus audit anomalies, and repeated service-discovery failures. Those are exactly the kinds of weak signals that can otherwise get lost in a normal operations stream. (sentinelone.com)

Consumer impact is real too

Consumers should not assume this is an enterprise-only issue. Desktop Linux users rely on Avahi for zero-configuration discovery all the time, often without realizing it. If an application or untrusted local process can crash the daemon, a home user may see printers vanish, sharing sessions break, or devices stop appearing on the network until the service is restarted. (security.snyk.io)

What makes that especially frustrating is that the underlying machine may otherwise look healthy. CPU and memory metrics may be normal. The only clue may be a service restart loop or a log entry about an assertion failure. That is exactly why availability bugs are so expensive to diagnose. (sentinelone.com)
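
Spotting that clue can be automated. The sketch below is a hedged example, not a vetted detection rule: it assumes each log line starts with an ISO 8601 timestamp followed by a space, and the two match patterns (the word “assertion” and a systemd-style “Main process exited, code=dumped” notice) are illustrative guesses at what a crash looks like in a given log stream. The threshold and window values are arbitrary defaults.

```python
import re
from datetime import datetime, timedelta

# Illustrative patterns only; adjust them to the messages actually observed.
CRASH_PATTERNS = [
    re.compile(r"assertion", re.IGNORECASE),
    re.compile(r"Main process exited, code=dumped"),
]


def crash_times(lines):
    """Yield timestamps of lines that look like avahi-daemon crashes.

    Assumes each line is '<ISO 8601 timestamp> <message>'.
    """
    for line in lines:
        stamp, _, message = line.partition(" ")
        if "avahi-daemon" in message and any(
            pattern.search(message) for pattern in CRASH_PATTERNS
        ):
            yield datetime.fromisoformat(stamp)


def is_restart_loop(lines, threshold=3, window=timedelta(minutes=10)):
    """True if at least `threshold` crash lines fall inside one `window`."""
    times = sorted(crash_times(lines))
    for i in range(len(times) - threshold + 1):
        if times[i + threshold - 1] - times[i] <= window:
            return True
    return False
```

One caveat on the design: a single crash may emit both an assertion message and a service-manager notice, so this counts crash-related lines rather than distinct crashes. A production rule would want to deduplicate by process ID or timestamp before alerting.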

Relationship to Earlier Avahi Vulnerabilities

Avahi has repeatedly surfaced in the security ecosystem for denial-of-service style bugs. Advisory listings show both recent and older Avahi CVEs around reachable assertions and crash conditions, which suggests the project’s threat model is heavily influenced by input-validation edge cases. That does not mean the code is uniquely poor; it means the feature set is inherently sensitive to malformed service data and unexpected state transitions. (sentinelone.com)

This matters because the lesson is bigger than any one CVE. When a project repeatedly hits the same weakness family, the right response is not just patching individual crashes. It is reviewing how invariants are enforced, how local APIs are exposed, and how much trust is implicitly granted to userland callers. If the validation posture is too thin, the next crash is often already waiting in another code path. (sentinelone.com)

What repeating patterns tell defenders

Recurring reachable assertions point to a codebase where defensive checks and feature evolution are not always moving in lockstep. As new publish options, transports, or integration features are added, assumptions accumulate. Eventually, one of them becomes externally reachable. That is the lifecycle risk that security teams should keep in mind when reviewing service discovery software. (sentinelone.com)

A mature response includes more than patching. It includes:

- Reviewing D-Bus policy around Avahi.

- Limiting unnecessary multicast discovery exposure.

- Watching for service restart loops.

- Treating repeated daemon crashes as a security signal, not just an operations issue. (sentinelone.com)

Why reachable assertions are especially annoying

Reachable assertions are annoying because they sit at the boundary between correctness bugs and security bugs. They are often dismissed as “just a crash,” but a crash in the right daemon is a security event by another name. If the daemon is foundational to connectivity, printing, or device discovery, the user experiences a loss of service that is indistinguishable from a targeted attack. (security.snyk.io)

That is why the wording in the CVE description is so important. The flaw is not speculative, and the impact is not theoretical. It is a concrete path from malformed local input to a dead service process. In security terms, that is enough to matter. (security.snyk.io)

Strengths and Opportunities

The upside of this disclosure is that the fix is relatively clear and the exposure model is understandable. Organizations can act quickly, and distributions can backport the patch without changing the architecture of their deployments. More broadly, the bug gives defenders a clean checklist for hardening local service discovery stacks.

- Simple remediation path: upgrade to Avahi 0.9-rc4 or the vendor-fixed package. (security.snyk.io)

- Clear attack surface: the issue is local and tied to D-Bus, which makes policy hardening practical. (sentinelone.com)

- Low complexity: the exploit is reportedly a single method call, which makes detection and regression testing easier. (security.snyk.io)

- Good mitigation options: restrict D-Bus access, apply MAC policies, or disable Avahi where unnecessary. (sentinelone.com)

- Operational visibility: daemon crashes, restart loops, and assertion messages are observable indicators. (sentinelone.com)

- Limited blast radius: the issue affects availability rather than integrity or confidentiality. (security.snyk.io)

Risks and Concerns

The downside is that “local only” can lull teams into underestimating the problem. In any multi-user environment, an unprivileged crash vector can still become a meaningful outage generator, and repeated exploitation can keep the daemon effectively unavailable. That is especially problematic when Avahi supports user-visible workflows like printer discovery or local service browsing.

- Persistent disruption if the attacker repeats the call after restarts. (security.snyk.io)

- Under-prioritization because the flaw is not remote code execution. (security.snyk.io)

- Hidden dependency risk on systems where Avahi is running but not obviously tracked. (sentinelone.com)

- Vendor lag that can delay real-world remediation even after upstream patching. (security.snyk.io)

- Log noise that can obscure the causal link between crashes and malicious local activity. (sentinelone.com)

- Shared-host exposure in labs, VDI, kiosks, and workstation pools. (security.snyk.io)

What to Watch Next

The immediate question is how quickly distributions backport the fix and how many environments still ship vulnerable Avahi builds. The upstream version number is only part of the answer; what matters operationally is when each vendor delivers a corrected package. Snyk’s note about Debian 12 underscores how uneven that landscape can be. (security.snyk.io)

The second question is whether more details emerge about the exact flags and D-Bus interface behavior. The public advisory and downstream summaries already establish the crash condition, but deeper technical disclosures often help defenders write better detection logic and test cases. For now, the safest assumption is that any untrusted local caller who can reach the relevant D-Bus path should be treated as potentially disruptive. (security.snyk.io)

The third question is whether this disclosure prompts a broader hardening review for Avahi deployments. Repeated reachable-assertion issues are a cue to audit access policy, service necessity, and crash-recovery behavior. In practice, the safest deployment is often the one that never needed the service in the first place. (sentinelone.com)

- Track vendor package updates rather than upstream release notes alone. (security.snyk.io)

- Watch for new advisory details from the Avahi project and downstream maintainers. (security.snyk.io)

- Audit D-Bus policy rules for local access to Avahi interfaces. (sentinelone.com)

- Review whether avahi-daemon is needed on each class of system. (sentinelone.com)

- Add alerts for repeat daemon crashes and assertion failures. (sentinelone.com)
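
The D-Bus policy audit in that list can start from something as small as the sketch below, which reads a bus policy document and reports which policy blocks allow sending to Avahi’s bus name, org.freedesktop.Avahi. It is a simplified aid, not a policy evaluator: it assumes the standard busconfig layout of policy blocks containing allow rules, and it ignores deny rules and rule precedence. The function name is hypothetical.

```python
import xml.etree.ElementTree as ET

AVAHI_BUS_NAME = "org.freedesktop.Avahi"


def senders_allowed(policy_xml: str, destination: str = AVAHI_BUS_NAME):
    """List the <policy> selectors that allow sending to `destination`.

    Simplification: only <allow send_destination="..."> rules are checked;
    <deny> rules and evaluation order are not modelled.
    """
    root = ET.fromstring(policy_xml)
    allowed = []
    for policy in root.iter("policy"):
        # A <policy> block is selected by an attribute such as context,
        # user, or group; render it as e.g. 'context=default'.
        selector = ", ".join(
            f"{key}={value}" for key, value in sorted(policy.attrib.items())
        )
        for rule in policy.findall("allow"):
            if rule.get("send_destination") == destination:
                allowed.append(selector)
                break
    return allowed
```

If the result includes a selector such as context=default, every local user can reach the daemon’s interfaces, which is the exposure the hardening advice aims to narrow on shared hosts.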

Patching, tightening D-Bus policy, and treating avahi-daemon crashes as security-relevant are the right responses now, before a one-line D-Bus abuse becomes someone’s all-day outage. (security.snyk.io)

Source: MSRC Security Update Guide - Microsoft Security Response Center