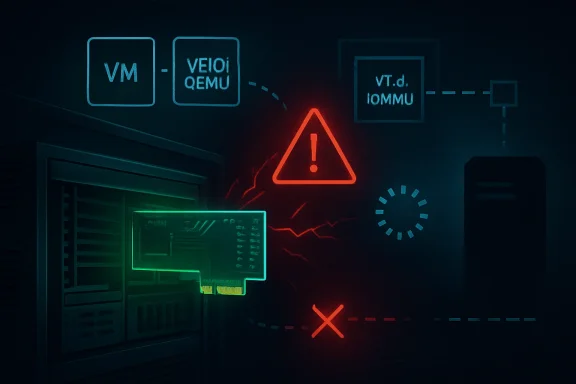

CVE-2026-43161, published by NVD on May 6, 2026, describes a Linux kernel Intel VT-d flaw where PCIe devices using ATS and passthrough can hard-lock a host when the device becomes inaccessible during removal, link failure, or userspace teardown. That sounds like a narrow kernel corner case, and in one sense it is. But for anyone running KVM, VFIO, DPDK, GPU or NIC passthrough, or dense virtualization hosts, it is a reminder that the most dangerous failure mode in infrastructure is not always remote code execution. Sometimes it is a machine that simply stops obeying.

Admins should resist the temptation to wait for a CVSS score before starting inventory. NVD enrichment can lag publication, and a missing score does not tell you whether your fleet has QEMU hosts with passed-through NICs. The useful triage starts with architecture, not a number.

The first question is whether you operate Linux hosts using Intel VT-d with PCIe passthrough to guests or userspace frameworks. The second is whether those devices use ATS. The third is whether your kernel line has received one of the stable fixes referenced by the CVE or an equivalent vendor backport. If the answer to the first two is yes and the third is no, the risk is not theoretical enough to ignore.
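The three questions above reduce to a small decision rule. The sketch below is a minimal Python illustration. It assumes you already know the three answers for a host. The HostAnswers type, the triage() function and the bucket labels are hypothetical names for this example, not part of any tool or advisory.

```python
from dataclasses import dataclass


@dataclass
class HostAnswers:
    """Answers to the three triage questions for one host, gathered by hand or by your own tooling."""
    vtd_passthrough: bool  # Intel VT-d host passing PCIe devices to guests or userspace (QEMU, DPDK, ...)
    ats_endpoints: bool    # at least one passed-through endpoint uses ATS
    fix_installed: bool    # upstream stable fix or an equivalent vendor backport is installed


def triage(host: HostAnswers) -> str:
    """Map the three answers to a coarse action bucket."""
    # Without both trigger conditions the host is not the primary concern.
    if not (host.vtd_passthrough and host.ats_endpoints):
        return "outside primary scope"
    if host.fix_installed:
        return "covered"
    return "patch soon; plan for host-level lockup"
```

For example, triage(HostAnswers(True, True, False)) returns "patch soon; plan for host-level lockup". The rule deliberately has no CVSS input.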

That does not mean every homelab is exposed. But it does mean the trigger conditions are plausible in exactly the environments where people are most likely to yank cables, move cards, test kernels, and destroy VMs from the command line when something looks wrong. A link fault that becomes a host hard-lock is not an abstract risk when your storage VM and router VM live on the same box.

The more subtle point is that enthusiasts often run newer kernels but older hardware than enterprises do. That combination can be excellent for features and terrible for edge cases. A kernel may contain complex modern IOMMU behavior while the platform’s firmware and PCIe topology remain eccentric.

For Windows enthusiasts using Linux hosts to run Windows guests, this CVE is another reason to treat passthrough as infrastructure, not a hobby toggle. Keep kernel packages current. Know which devices are assigned to which guests. Avoid assuming that a VM teardown is always safer than waiting. And when a host freezes during VFIO cleanup, do not immediately blame Windows, QEMU, or the guest driver; the host IOMMU path may be the crime scene.
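On a Linux host, checking which devices are handed to guests is easy to automate. The minimal sketch below lists the devices currently bound to vfio-pci. It assumes the standard sysfs layout, in which each PCI device directory has a driver symlink when a driver is bound. The function name is hypothetical. The sketch does not tell you whether ATS is enabled. You still have to check that per device, for example by looking for the Address Translation Service (ATS) capability in lspci -vvv output run as root.

```python
import os
from typing import List


def vfio_bound_devices(pci_devices_dir: str = "/sys/bus/pci/devices") -> List[str]:
    """Return PCI addresses (for example '0000:01:00.0') whose bound driver is vfio-pci."""
    bound = []
    for addr in sorted(os.listdir(pci_devices_dir)):
        driver_link = os.path.join(pci_devices_dir, addr, "driver")
        # A device with no driver bound has no 'driver' symlink at all.
        if not os.path.islink(driver_link):
            continue
        # The symlink points into .../drivers/<name>; the last path component is the driver name.
        if os.path.basename(os.readlink(driver_link)) == "vfio-pci":
            bound.append(addr)
    return bound
```

Called with no argument, vfio_bound_devices() reads /sys/bus/pci/devices on the live host. The parameter exists so the function can be pointed at a copy of that tree for testing.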

The second move, after inventory, is vendor mapping. If you run distribution kernels, watch your distribution’s advisories rather than assuming the upstream commit hash is directly visible in your installed kernel. Enterprise distributions frequently backport fixes without changing the headline kernel version in a way that satisfies casual checks. Appliance vendors may do the same, but on their own schedule.
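A backported fix may not change the version string, so searching the package changelog for the CVE identifier is often more informative than comparing version numbers. The minimal sketch below assumes you have already captured the changelog text, for example from rpm -q --changelog kernel on RHEL-family systems. The function name is hypothetical. Vendors do not always list every CVE, so a missing mention is not proof that the fix is absent.

```python
import re


def changelog_mentions(changelog_text: str, cve_id: str) -> bool:
    """True if the changelog text names cve_id as a whole token (case-insensitive).

    The lookarounds stop the identifier from matching inside a longer one,
    such as the same prefix followed by extra digits.
    """
    pattern = re.compile(r"(?<![\w-])" + re.escape(cve_id) + r"(?![\w-])", re.IGNORECASE)
    return pattern.search(changelog_text) is not None
```
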

The third move is recovery planning. Until patched, affected systems should be treated as having a possible host-level lockup condition during device removal, link instability, or userspace teardown. That does not mean disabling all passthrough overnight. It does mean avoiding unnecessary hot-plug experiments on production boxes and making sure out-of-band management, fencing, and cluster failover behave as expected.

There is also a monitoring angle, though it is imperfect. PCIe AER errors, link flaps, VFIO detach events, and IOMMU warnings may provide hints before a lockup, but the whole problem with a hard-lock is that it may leave the logs incomplete. Operators should not count on perfect observability after the fact.
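One crude way to act on those hints is to count suspicious kernel log lines per host. The sketch below takes plain-text log lines as input, for example from journalctl -k, or from journalctl -k -b -1 for the previous boot if the journal is persistent. The signal substrings are illustrative guesses at common prefixes, not an authoritative list. The function name is hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable

# Illustrative substrings, not an exhaustive or authoritative list: tune them to your kernels' logs.
DEFAULT_SIGNALS: Dict[str, str] = {
    "aer": "AER",         # PCIe Advanced Error Reporting
    "hotplug": "pciehp",  # PCIe native hot-plug driver (slot and link events)
    "iommu": "DMAR",      # Intel VT-d remapping hardware messages
    "vfio": "vfio",       # VFIO driver messages
}


def count_signals(lines: Iterable[str], signals: Dict[str, str] = DEFAULT_SIGNALS) -> Counter:
    """Count how many log lines contain each signal substring (case-sensitive)."""
    counts: Counter = Counter()
    for line in lines:
        for name, needle in signals.items():
            if needle in line:
                counts[name] += 1
    return counts
```

A rising AER or pciehp count on a host with vfio-pci devices is a reason to look closer, not a prediction of a lockup. After a hard-lock, the most interesting lines may never have reached disk.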

For organizations with formal change windows, this is a case for pairing kernel updates with hardware-aware testing. Reproduce basic attach, detach, VM shutdown, and device reset flows in a lab that resembles production. The fix should reduce the risk of indefinite waits on inaccessible devices, but every passthrough environment has enough local variation that blind confidence is unearned.

An MSRC listing for a Linux kernel flaw can be confusing for administrators who still think in vendor boxes. A CVE on an MSRC page may not mean “patch Windows Update now.” It may mean “this vulnerability affects something in the broader software and service supply chain Microsoft tracks.” In the case of CVE-2026-43161, the underlying source is kernel.org, and the practical remediation will come through Linux kernel updates or vendor-provided downstream patches.

That distinction matters because otherwise teams either overreact or underreact. Overreaction treats every MSRC-listed CVE as an immediate Windows emergency. Underreaction treats every non-Windows CVE as someone else’s problem. Mixed infrastructure punishes both instincts.

The better reading is contextual. If your Windows estate depends on Linux hosts, appliances, or cloud infrastructure that use PCIe passthrough, this CVE belongs on your radar. If your environment is pure Windows endpoints and ordinary servers with no Linux virtualization substrate in scope, it may be background noise. Most organizations are somewhere in between.

This is where modern vulnerability management becomes less about feeds and more about architecture. The feed can tell you that a flaw exists. Only your asset model can tell you whether it matters.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center

The vulnerability sits in the uneasy space between performance hardware and safety engineering. Intel’s IOMMU stack exists to make direct device access safer and faster, but CVE-2026-43161 shows how a single assumption — that a device receiving an invalidation request is still reachable — can turn a routine cleanup path into a host-wide freeze. This is not a mass-market Windows desktop emergency, nor is it a speculative cloud apocalypse. It is the sort of bug that matters precisely because the systems exposed to it are the ones administrators expect to be boring.

The Bug Is Not Exotic; the Deployment Model Is

The short version is deceptively simple: a PCIe endpoint with Address Translation Services enabled is passed through to userspace, the endpoint becomes inaccessible, and the Intel IOMMU code tries to flush that device’s IOTLB anyway. If the device cannot respond, the kernel can wait indefinitely. The result is not a clean driver failure or a recoverable VFIO error, but a hard-lock of the host.

That matters because passthrough is no longer a laboratory trick. It is how many shops run fast networking, storage controllers, accelerators, and GPUs inside virtual machines or userspace networking stacks. QEMU and DPDK are explicitly named in the CVE description because they are exactly the sort of environments where the host intentionally hands a device closer to the guest or application than a traditional driver stack would.

The vulnerability is also not about a maliciously crafted packet or a malformed file. It is about a device disappearing at the wrong moment. That disappearance can be a surprise removal, a hot-plug event, or a link fault that leaves the kernel with neither a cooperative endpoint nor the right evidence that it should stop talking to one.

This is why the issue feels different from the usual CVE treadmill. It is less “attacker sends input, code mishandles input” and more “the hardware topology changed, the kernel took the optimistic path, and the machine paid the price.” In enterprise operations, that distinction is not comforting. Hardware events are not rare enough to ignore, especially in racks full of risers, NICs, GPUs, cables, and firmware combinations that have all aged at different speeds.

VT-d’s Safety Promise Meets PCIe’s Messy Reality

Intel VT-d is supposed to give the operating system a way to constrain direct memory access from devices. Instead of letting a PCIe device freely read and write physical memory, the IOMMU translates and restricts device DMA. That is the foundation that makes modern device passthrough plausible rather than reckless.

ATS complicates the picture. Address Translation Services allow a PCIe device to cache translations so that it can avoid repeatedly consulting the IOMMU for the same mappings. The performance case is clear: devices that do high-rate DMA benefit from fewer translation round trips. The operational bargain is equally clear: once devices cache translations, the platform must reliably invalidate those cached translations when mappings change.
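The bargain can be shown with a toy model. In this Python sketch, an IOMMU table refuses DMA to unmapped pages, and an ATS-style device caches translations on first use. The class and method names are hypothetical. The model ignores page sizes, access permissions and the actual PCIe message formats.

```python
class ToyIommu:
    """Hypothetical IOMMU model: a table from I/O virtual pages to host physical pages."""

    def __init__(self) -> None:
        self.table = {}

    def map(self, iova_page: int, host_page: int) -> None:
        self.table[iova_page] = host_page

    def unmap(self, iova_page: int) -> None:
        self.table.pop(iova_page, None)

    def translate(self, iova_page: int) -> int:
        # Containment: DMA to a page the host never mapped is refused.
        if iova_page not in self.table:
            raise PermissionError(f"DMA to unmapped page {iova_page:#x} blocked")
        return self.table[iova_page]


class ToyAtsDevice:
    """Hypothetical ATS endpoint that keeps its own cache (device IOTLB) of translations."""

    def __init__(self, iommu: ToyIommu) -> None:
        self.iommu = iommu
        self.iotlb = {}

    def dma_target(self, iova_page: int) -> int:
        if iova_page not in self.iotlb:
            # Miss: ask the IOMMU once and remember the answer.
            self.iotlb[iova_page] = self.iommu.translate(iova_page)
        # Hit: no IOMMU round trip, even if the IOMMU has since unmapped the page.
        return self.iotlb[iova_page]

    def invalidate(self, iova_page: int) -> None:
        # What a device-IOTLB flush asks the endpoint to do.
        self.iotlb.pop(iova_page, None)
```

After map(0x10, 0x9000), a first dma_target(0x10) caches 0x9000 on the device. If the IOMMU then unmaps the page, dma_target(0x10) still returns 0x9000 until invalidate(0x10) runs. Only after that does the access raise PermissionError. That stale window is exactly what the invalidation has to close.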

CVE-2026-43161 lives in that bargain. When the kernel tears down or changes mappings for a passed-through device, it may need to flush the device-side IOTLB. That flush is only useful if the endpoint can receive it and respond. If the link has dropped or the device is otherwise inaccessible, the request becomes a message to nowhere.

The kernel had already grown logic to avoid issuing ATS invalidation requests when a device was known to be disconnected, but the CVE description says that earlier protection applied only when Intel IOMMU scalable mode was enabled. Systems without scalable mode, or with it disabled, could still walk into the trap. In other words, the safety check existed, but it lived on the wrong side of a configuration boundary.
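The boundary can be sketched as control flow. This is a hypothetical Python model built only from the CVE description, not the kernel code. A flag set by clean removal paths stands in for the earlier check, and the flush returns a label instead of actually blocking.

```python
from dataclasses import dataclass


@dataclass
class ToyDevice:
    marked_disconnected: bool  # set only by clean safe-removal / hot-plug handling
    link_up: bool              # whether the endpoint can actually answer


def model_flush_dev_iotlb(dev: ToyDevice) -> str:
    # The invalidation needs an acknowledgement from the endpoint; no link, no acknowledgement.
    return "completed" if dev.link_up else "waits forever"


def teardown_before_fix(dev: ToyDevice, scalable_mode: bool) -> str:
    """Pre-fix shape as described in the CVE: the disconnect guard exists only in the scalable-mode path."""
    if scalable_mode:
        if dev.marked_disconnected:
            return "skipped"
        return model_flush_dev_iotlb(dev)
    # Non-scalable path: no guard, so the flush is attempted unconditionally.
    return model_flush_dev_iotlb(dev)
```

Take a device that clean removal has marked disconnected. teardown_before_fix(..., scalable_mode=True) returns "skipped", while scalable_mode=False returns "waits forever". A device whose link dropped silently is never marked, so in this model even the guarded branch would wait.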

That is the sort of boundary that ages badly. Kernel code grows around hardware generations, feature flags, and increasingly elaborate fast paths. A guard added for one mode may be assumed, informally, to represent the whole class of behavior. Then a real deployment finds the missing half.

The Failure Path Runs Through Cleanup, Not the Hot Path

One of the more telling details in the CVE text is where the fix lands. The proposed change adds a presence check in __context_flush_dev_iotlb(), a path described as being used for device attach and release rather than a hot dataplane path. That distinction is important because it explains both why the bug survived and why the fix is relatively pragmatic.

Hot paths get measured, optimized, and stared at because every nanosecond is suspect. Cleanup paths are different. They are supposed to be safe, rare, and boring. They run when a device is being detached, a group is being released, a VM is being destroyed, or a PCIe hot-plug event is being handled. Those are exactly the moments when system state is already unstable.

The CVE description includes call traces from multiple scenarios: device removal through PCIe hot-plug, device release through Intel IOMMU cleanup, and a case where the endpoint loses connection without a clean link-down event and the host locks when the userspace process is killed. The names in those traces (vfio_group_fops_release, pci_stop_and_remove_bus_device, pciehp_disable_slot, qi_flush_dev_iotlb) tell a story administrators will recognize. Something is being unplugged, destroyed, detached, or recovered, and the host is trying to put the world back in order.

The bug is that “putting the world back in order” includes sending a flush to a device that may no longer be part of the world. If the IOMMU waits forever for that operation to complete, the cleanup path becomes the failure amplifier.

That is why this issue is especially irritating for virtualization hosts. Killing a VM, detaching a VFIO group, or reacting to a slot event should be how an administrator contains damage. In the affected scenario, that containment action can become the event that freezes the entire host.

Scalable Mode Was a Boundary Too Many

The CVE’s title calls out “without scalable mode,” and that phrase is easy to glide past. It should not be. Scalable mode is part of the newer Intel VT-d architecture intended to handle more complex translation and isolation models, including PASID-related machinery. But real fleets do not run as clean diagrams.

Some systems do not support scalable mode. Some firmware leaves features inconsistent. Some distributions or kernel configurations may avoid enabling a mode for compatibility reasons. Some deployments run hardware old enough to matter but new enough to still be in production. The result is that “fixed when scalable mode is enabled” is not the same as “fixed.”

The earlier kernel change referenced in the CVE avoided issuing ATS invalidation when a device was disconnected, but did so in a path tied to scalable mode behavior. CVE-2026-43161 is the companion lesson: non-scalable mode is not a legacy footnote if production systems still depend on it.

The vulnerability also references another commit, “Fix NULL domain on device release,” which added a teardown path that could call qi_flush_dev_iotlb(). That commit was itself a fix, but it introduced or exposed another place where an inaccessible endpoint could cause the same indefinite wait. This is how kernel reliability work often proceeds: one correctness fix expands the set of cases the code handles, and another edge case emerges behind it.

There is no scandal in that. Kernel IOMMU code is necessarily entangled with device lifecycles, firmware tables, PCIe semantics, and vendor-specific hardware behavior. But it does underline a reality administrators sometimes forget: enabling advanced platform features is not merely a BIOS checkbox. It enrolls the machine in a set of assumptions stretching from silicon to kernel release cadence.

Presence Is More Useful Than Politeness

The interesting technical pivot in the CVE description is the move from pci_dev_is_disconnected() to pci_device_is_present(). The former covers devices marked as disconnected through safe-removal paths. The latter tests whether the device is actually accessible by reading vendor and device IDs, and internally also accounts for disconnected state.

That difference is the heart of the bug. Safe-removal state is administrative knowledge. Device presence is empirical knowledge. When a link fault happens without a clean hot-plug notification, the kernel may not have the administrative signal it wants, but it can still test whether the endpoint answers.
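The difference between the two checks can be modeled in a few lines. This is a hypothetical Python sketch, not the kernel's pci_device_is_present(). Here read_config_dword stands in for a configuration-space read. Treating an all-ones or all-zeros vendor ID as "no device" is a simplification of what the kernel actually checks.

```python
from typing import Callable

VENDOR_ID_MASK = 0xFFFF  # vendor ID is the low 16 bits of the dword at config offset 0x00


def device_is_present(read_config_dword: Callable[[int], int], marked_disconnected: bool) -> bool:
    """Model of an empirical presence check.

    A device already flagged as disconnected fails fast; otherwise read the
    vendor/device ID dword and treat an all-ones or all-zeros vendor ID as
    'nothing answered' (a simplification of the kernel's checks).
    """
    if marked_disconnected:
        return False
    vendor_id = read_config_dword(0x00) & VENDOR_ID_MASK
    return vendor_id not in (0xFFFF, 0x0000)
```

A link that failed without a hot-plug notification leaves marked_disconnected false. Even so, a config read that comes back as 0xFFFFFFFF, the value typically seen when nothing answers, still reports the device as absent. The flag records what the kernel was told. The probe records what the hardware answers.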

The reported cost of this check on a ConnectX-5 at 8 GT/s over two lanes is around 70 microseconds. In a packet-forwarding hot path, that would be a non-starter. In an attach or release path, it is a rounding error compared with the alternative: a host that never returns.
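For scale, assume an illustrative 1,000 attach or release events on one host. That number is chosen only for the arithmetic and is not taken from the CVE. At the reported cost per check:

```latex
1000 \times 70\,\mu\text{s} = 70{,}000\,\mu\text{s} = 70\,\text{ms}
```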

This is a classic systems tradeoff. The fastest code assumes the world is still as it was. The safest code asks the world whether that is still true. CVE-2026-43161 is a case where the question is cheap enough, the path is cold enough, and the failure severe enough that asking becomes the only defensible option.

It also reflects a broader shift in kernel hardening. The old model trusted state transitions to happen cleanly. Modern infrastructure has learned to distrust clean transitions. Devices vanish mid-operation, firmware lies, slots flap, virtual functions wedge, cables fail, and management controllers report events late or not at all. Robust code increasingly has to treat hardware presence as a fact to be revalidated, not a state to be inferred.

This Is a Denial-of-Service Bug With Administrator Consequences

NVD had not yet assigned CVSS scores at the time the record appeared, which means there is no official severity number to anchor procurement dashboards or vulnerability management reports. That absence should not be mistaken for low impact. A host hard-lock is a denial-of-service condition in its purest form.

The scope of exploitation is narrower than a network-reachable kernel flaw. The vulnerable configuration requires PCIe endpoints with ATS enabled and passed through to userspace. An attacker would likely need some influence over the guest, the userspace workload, the device state, or the physical/link environment. That makes it less broadly exploitable than the headline CVEs that dominate monthly patch coverage.

But in the environments where it applies, the blast radius is ugly. A single endpoint going inaccessible can freeze the host, not merely crash the guest or terminate the userspace process. If that host runs multiple VMs, storage services, network functions, or clustered workloads, the operational impact can exceed what the narrow technical trigger suggests.

The phrase “hard-lock” matters. A kernel oops, service crash, or driver reset leaves breadcrumbs. A hard-locked server may require power cycling, out-of-band management, fencing by a cluster manager, and postmortem reconstruction from whatever logs made it out before the system froze. For a colocated box or remote edge system, the difference between a clean panic and a wedged host is the difference between software recovery and a ticket to remote hands.

This is why vulnerability triage should not reduce everything to exploitability from the public Internet. Infrastructure risk often comes from combinations of privilege, hardware adjacency, and failure mode. A bug that is hard to trigger deliberately but easy to encounter during a hardware fault can still deserve priority in the right fleet.

The Windows Angle Is the Mixed-Stack Data Center

WindowsForum readers may reasonably ask why a Linux kernel CVE belongs in a Windows-centric publication. The answer is that modern Windows estates rarely run on Windows alone. Hyper-V, Azure Stack HCI, VMware, Proxmox, KVM, WSL-adjacent development infrastructure, Linux storage appliances, Kubernetes workers, and DPDK-based network functions all coexist in the same operational universe.

The MSRC listing is a clue to that universe. Microsoft tracks many CVEs that do not look, at first glance, like traditional Windows bugs because customers consume open-source components, Linux kernels, container hosts, appliances, and cloud images through Microsoft products and services. The practical question is not whether the vulnerable code is in ntoskrnl.exe. It is whether your business depends on systems that run it.

For Windows admins, the most relevant exposure may be indirect. A Linux virtualization host may run Windows guests with passed-through GPUs or NICs. A lab may use VFIO to test Windows drivers. A storage or backup appliance may be Linux-based even though every protected workload is Windows Server. A network acceleration box may sit in front of Active Directory, SQL Server, or Remote Desktop infrastructure without being managed by the same team that owns those services.

This is where siloed patching breaks down. The Windows team may know the business impact of a host outage, while the Linux team owns the kernel update, and the network team owns the ConnectX card. CVE-2026-43161 is not likely to be exploited by someone emailing a malicious attachment to an accounting department. It is more likely to bite when ownership boundaries make hardware health, kernel versions, and passthrough settings nobody’s complete picture.

It is also a useful reminder that Windows workloads inherit the reliability of their substrate. A Windows VM with a passed-through accelerator is only as stable as the host’s ability to recover when that accelerator misbehaves. Guest operating system maturity does not save a VM from a frozen host.

Passthrough Has Always Been a Bargain

PCIe passthrough is attractive because it gives guests and userspace processes near-native access to hardware. That is why homelab users love it for GPUs, why telcos and cloud providers use it for packet processing, and why high-performance storage and compute stacks keep returning to it despite the management pain. The alternative (full emulation or paravirtualization) is often safer but slower or less capable.

The bargain is that the host keeps enough control to isolate and recover. IOMMUs provide translation and containment. VFIO provides a framework for safely exposing devices to userspace. PCIe hot-plug handling provides a way to deal with topology changes. Each layer narrows the danger, but none abolishes it.

CVE-2026-43161 shows how thin the recovery margin can become. Once a device caches translations and the platform must invalidate them, the device is no longer just a passive endpoint. It is a participant in the correctness protocol. If it disappears while the protocol expects a response, the host needs a way to stop waiting.

That principle generalizes beyond this CVE. Hardware acceleration is increasingly built on shared responsibility between device, firmware, kernel, and userspace runtime. The more capability we push toward devices, the more recovery paths must assume that those devices may fail at exactly the wrong time.

The fix does not say passthrough is unsafe. It says passthrough needs defensive pessimism. Before asking a device to do something required for teardown, check whether the device can still be reached. That may sound obvious, but obviousness is often discovered only after the first hard-lock.

The Patch Is Small Because the Lesson Is Large

The core mitigation described in the CVE is conceptually modest: before flushing the device IOTLB in the relevant attach and release paths, check whether the PCIe device is present. If it is inaccessible, skip the flush rather than waiting indefinitely. The stable kernel references indicate the fix has been backported across maintained branches.

That is good news for administrators because it means the likely remediation path is ordinary kernel maintenance, not a hardware redesign or a risky configuration workaround. It also means distributions and appliance vendors should be able to absorb the change into their update channels. The challenge, as usual, is less about writing the patch than getting it onto the systems that actually carry the risk.
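To make the "check before you wait" pattern concrete, here is a toy Python model of the guarded teardown path. It is not kernel code: `FakeAtsDevice`, `flush_dev_iotlb`, and `release_device` are hypothetical names, and the polling loop only stands in for the hardware invalidation wait that, in the scenario the CVE describes, never ends when the device cannot answer. The `max_polls` limit exists so the demo raises instead of hanging.

```python
from dataclasses import dataclass


@dataclass
class FakeAtsDevice:
    """Hypothetical stand-in for an ATS-capable PCIe endpoint."""
    name: str
    present: bool = True     # False models surprise removal or a dead link
    cached_entries: int = 0  # translations held in the device's IOTLB


def flush_dev_iotlb(dev: FakeAtsDevice, max_polls: int = 1_000_000) -> None:
    """Unguarded path: poll until the device acknowledges the invalidation.

    In the scenario the CVE describes, the real wait has no such limit;
    max_polls exists only so this toy raises instead of hanging.
    """
    for _ in range(max_polls):
        if dev.present:
            dev.cached_entries = 0  # device answered and dropped its entries
            return
    raise TimeoutError(f"{dev.name}: no invalidation completion; a real host would hang")


def release_device(dev: FakeAtsDevice) -> str:
    """Guarded path: confirm the device is reachable before involving it."""
    if not dev.present:
        return "skipped flush: device not present"
    flush_dev_iotlb(dev)
    return "flushed"


if __name__ == "__main__":
    nic = FakeAtsDevice("nic0", cached_entries=8)
    print(release_device(nic))  # flushed
    nic.present = False         # link fails during teardown
    print(release_device(nic))  # skipped flush: device not present
```

A check-then-flush sequence like this narrows the window rather than closing it: a device can still vanish between the presence check and the flush, which this toy does not attempt to model.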

The affected population is narrower than “all Linux servers.” Systems without PCIe passthrough, without ATS-enabled endpoints, or without the Intel VT-d paths in question are not the primary concern. But the systems that do match are often the ones where downtime is most expensive: virtualization hosts, high-throughput networking boxes, GPU compute nodes, and specialized appliances.

Admins should resist the temptation to wait for a CVSS score before starting inventory. NVD enrichment can lag publication, and a missing score does not tell you whether your fleet has QEMU hosts with passed-through NICs. The useful triage starts with architecture, not a number.

The first question is whether you operate Linux hosts using Intel VT-d with PCIe passthrough to guests or userspace frameworks. The second is whether those devices use ATS. The third is whether your kernel line has received one of the stable fixes referenced by the CVE or an equivalent vendor backport. If the answer to the first two is yes and the third is no, the risk is too concrete to ignore.
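The first two questions can usually be answered from sysfs. The sketch below assumes a standard Linux sysfs layout: VT-d remapping units appear under `/sys/class/iommu/` with names starting `dmar`, passthrough devices are bound to a driver whose name contains `vfio`, and each device's `config` file exposes its PCIe extended capabilities. It walks that capability list looking for ATS (capability ID `0x000F`) and reports whether the ATS Enable bit is currently set. Reading config space past the first 64 bytes typically requires root; without it, the script simply finds nothing. It cannot answer the third question, which depends on your vendor's advisory. As a cross-check, `lspci -vvv` run as root lists the same capability as "Address Translation Service (ATS)".

```python
import struct
from pathlib import Path
from typing import List, Optional, Tuple

PCI_EXT_CAP_START = 0x100     # extended capabilities follow the 256-byte legacy space
PCI_EXT_CAP_ID_ATS = 0x000F   # Address Translation Services
PCI_ATS_CTRL = 0x06           # ATS Control register offset within the capability
PCI_ATS_CTRL_ENABLE = 0x8000  # bit 15: ATS Enable


def ats_state(config: bytes) -> Optional[bool]:
    """None if there is no ATS capability, else whether ATS is currently enabled."""
    offset = PCI_EXT_CAP_START
    seen = set()
    while offset >= PCI_EXT_CAP_START and offset + 4 <= len(config) and offset not in seen:
        seen.add(offset)  # guard against a malformed, looping list
        (header,) = struct.unpack_from("<I", config, offset)
        if header in (0, 0xFFFFFFFF):  # no extended caps, or device not readable
            return None
        if header & 0xFFFF == PCI_EXT_CAP_ID_ATS:
            if offset + PCI_ATS_CTRL + 2 > len(config):
                return False  # capability present, control register not in this dump
            (ctrl,) = struct.unpack_from("<H", config, offset + PCI_ATS_CTRL)
            return bool(ctrl & PCI_ATS_CTRL_ENABLE)
        offset = (header >> 20) & 0xFFC  # bits 31:20 point to the next capability
    return None


def intel_vtd_active(sys_root: Path) -> bool:
    """VT-d remapping units usually appear as /sys/class/iommu/dmar0, dmar1, ..."""
    iommu_dir = sys_root / "class" / "iommu"
    return iommu_dir.is_dir() and any(p.name.startswith("dmar") for p in iommu_dir.iterdir())


def triage(sys_root: Path = Path("/sys")) -> List[Tuple[str, bool]]:
    """Return (BDF, ATS enabled?) for VFIO-bound, ATS-capable devices on a VT-d host."""
    devices_dir = sys_root / "bus" / "pci" / "devices"
    if not intel_vtd_active(sys_root) or not devices_dir.is_dir():
        return []
    hits = []
    for dev in sorted(devices_dir.iterdir()):
        driver = dev / "driver"
        if not driver.exists() or "vfio" not in driver.resolve().name:
            continue  # question 1: only devices handed to VFIO
        try:
            config = (dev / "config").read_bytes()
        except OSError:
            continue
        state = ats_state(config)
        if state is not None:  # question 2: the device has ATS
            hits.append((dev.name, state))
    return hits


if __name__ == "__main__":
    for bdf, enabled in triage():
        print(f"{bdf}: VFIO-bound, ATS capability present, ATS enabled={enabled}")
```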

The Homelab Crowd Should Not Dismiss This Either

Enterprise operators are the obvious audience, but homelab users may recognize this bug even faster. GPU passthrough, NIC passthrough, HBA passthrough, and Proxmox-style experimentation are common in enthusiast setups. Those systems are often built from consumer boards, secondhand enterprise cards, risers, split lanes, and firmware combinations that would make a server vendor's validation matrix wince.

That does not mean every homelab is exposed. But it does mean the trigger conditions are plausible in exactly the environments where people are most likely to yank cables, move cards, test kernels, and destroy VMs from the command line when something looks wrong. A link fault that becomes a host hard-lock is not an abstract risk when your storage VM and router VM live on the same box.

The more subtle point is that enthusiasts often run newer kernels than enterprises do, but on older hardware. That combination can be excellent for features and terrible for edge cases. A kernel may contain complex modern IOMMU behavior while the platform's firmware and PCIe topology remain eccentric.

For Windows enthusiasts using Linux hosts to run Windows guests, this CVE is another reason to treat passthrough as infrastructure, not a hobby toggle. Keep kernel packages current. Know which devices are assigned to which guests. Avoid assuming that a VM teardown is always safer than waiting. And when a host freezes during VFIO cleanup, do not immediately blame Windows, QEMU, or the guest driver; the host IOMMU path may be the crime scene.

The Risk Is in the Rack, Not the Press Release

The most important operational move now is inventory. Security teams often track operating systems and packages, but passthrough exposure lives in configuration. A generic Linux asset list will not tell you whether a host has an ATS-enabled ConnectX adapter assigned to a userspace packet processor or a GPU bound to VFIO for a Windows VM.

The second move is vendor mapping. If you run distribution kernels, watch your distribution's advisories rather than assuming the upstream commit hash is directly visible in your installed kernel. Enterprise distributions frequently backport fixes without changing the headline kernel version in a way that satisfies casual checks. Appliance vendors may do the same, but on their own schedule.
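One way to give an asset list the configuration detail it lacks is a per-host pass that records which devices are handed to VFIO and which IOMMU group each sits in. This sketch emits one JSON record per VFIO-bound device from standard sysfs attributes (`vendor`, `device`, and the `driver` and `iommu_group` links), assuming a driver name containing `vfio` marks passthrough. It also records the running kernel release so the output can be joined against vendor advisories, but, as noted above, that string alone does not prove a backport is present. Mapping devices to specific guests has to come from the hypervisor's own configuration, which this does not read.

```python
import json
import platform
import socket
from pathlib import Path
from typing import Dict, List, Optional


def read_attr(dev: Path, name: str) -> Optional[str]:
    """Read a one-line sysfs attribute such as 'vendor' (e.g. '0x8086')."""
    try:
        return (dev / name).read_text().strip()
    except OSError:
        return None


def link_name(path: Path) -> Optional[str]:
    """Final component of a sysfs symlink target, or None if the link is absent."""
    return path.resolve().name if path.exists() else None


def passthrough_inventory(sys_root: Path = Path("/sys")) -> List[Dict[str, Optional[str]]]:
    """One record per PCI device bound to a driver whose name contains 'vfio'."""
    devices_dir = sys_root / "bus" / "pci" / "devices"
    if not devices_dir.is_dir():
        return []
    host, kernel = socket.gethostname(), platform.release()
    rows = []
    for dev in sorted(devices_dir.iterdir()):
        driver = link_name(dev / "driver")
        if driver is None or "vfio" not in driver:
            continue
        rows.append({
            "host": host,
            "kernel_release": kernel,  # a hint only; backports hide behind this string
            "bdf": dev.name,
            "vendor": read_attr(dev, "vendor"),
            "device": read_attr(dev, "device"),
            "driver": driver,
            "iommu_group": link_name(dev / "iommu_group"),  # target is .../iommu_groups/N
        })
    return rows


if __name__ == "__main__":
    print(json.dumps(passthrough_inventory(), indent=2))
```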

The third move is recovery planning. Until patched, affected systems should be treated as having a possible host-level lockup condition during device removal, link instability, or userspace teardown. That does not mean disabling all passthrough overnight. It does mean avoiding unnecessary hot-plug experiments on production boxes and making sure out-of-band management, fencing, and cluster failover behave as expected.

There is also a monitoring angle, though it is imperfect. PCIe AER errors, link flaps, VFIO detach events, and IOMMU warnings may provide hints before a lockup, but the whole problem with a hard-lock is that it may leave the logs incomplete. Operators should not count on perfect observability after the fact.
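Where logs do survive, a quick pass over the previous boot's kernel messages can surface those hints. This sketch assumes a persistent systemd journal, so that `journalctl -k -b -1` can still show the boot that locked up. The patterns are assumptions: substrings that commonly appear in messages from the PCIe AER driver, the Intel IOMMU (DMAR) driver, vfio-pci, and PCIe hot-plug, not a catalogue of exact kernel wording, which varies by version.

```python
import re
import sys
from collections import Counter

# Assumed watch-list; tune against what your own kernels actually log.
PATTERNS = {
    "aer": re.compile(r"\bAER\b"),
    "iommu": re.compile(r"\bDMAR\b|\bDRHD\b"),
    "vfio": re.compile(r"\bvfio", re.IGNORECASE),
    "link": re.compile(r"link down|card not present", re.IGNORECASE),
}


def scan(lines):
    """Return per-category counts and the matching lines, in input order."""
    counts = Counter()
    matches = []
    for line in lines:
        tags = [name for name, rx in PATTERNS.items() if rx.search(line)]
        if tags:
            counts.update(tags)
            matches.append((tags, line.rstrip("\n")))
    return counts, matches


if __name__ == "__main__":
    # Usage: journalctl -k -b -1 --no-pager > prev-boot.txt
    #        python3 pcie_log_hints.py prev-boot.txt
    for path in sys.argv[1:]:
        with open(path, encoding="utf-8", errors="replace") as fh:
            counts, matches = scan(fh)
        print(path, dict(counts))
        for tags, line in matches:
            print(f"  [{','.join(tags)}] {line}")
```

An empty result proves little here: a hard-lock can stop logging before the interesting messages reach disk.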

For organizations with formal change windows, this is a case for pairing kernel updates with hardware-aware testing. Reproduce basic attach, detach, VM shutdown, and device reset flows in a lab that resembles production. The fix should reduce the risk of indefinite waits on inaccessible devices, but every passthrough environment has enough local variation that blind confidence is unearned.

Microsoft’s CVE Feed Is a Map of Modern Dependency Sprawl

The appearance of a Linux kernel CVE in Microsoft's security ecosystem is not a novelty anymore; it is the new normal. Microsoft ships Linux in cloud images, supports Linux workloads in Azure, participates heavily in open source, and serves customers whose "Microsoft environment" is built on a web of non-Microsoft components. The Security Update Guide reflects that sprawl.

This can be confusing for administrators who still think in vendor boxes. A CVE on an MSRC page may not mean "patch Windows Update now." It may mean "this vulnerability affects something in the broader software and service supply chain Microsoft tracks." In the case of CVE-2026-43161, the underlying source is kernel.org, and the practical remediation will come through Linux kernel updates or vendor-provided downstream patches.

That distinction matters because otherwise teams either overreact or underreact. Overreaction treats every MSRC-listed CVE as an immediate Windows emergency. Underreaction treats every non-Windows CVE as someone else’s problem. Mixed infrastructure punishes both instincts.

The better reading is contextual. If your Windows estate depends on Linux hosts, appliances, or cloud infrastructure that use PCIe passthrough, this CVE belongs on your radar. If your environment is pure Windows endpoints and ordinary servers with no Linux virtualization substrate in scope, it may be background noise. Most organizations are somewhere in between.

This is where modern vulnerability management becomes less about feeds and more about architecture. The feed can tell you that a flaw exists. Only your asset model can tell you whether it matters.

A Small Kernel Fix Carries a Big Operational Message

CVE-2026-43161 should push teams to make several concrete checks before the next maintenance cycle. The vulnerability is not broad enough to panic over, but it is severe enough in the wrong configuration that waiting for a dashboard to turn red is poor practice.

- Systems running Linux with Intel VT-d and PCIe passthrough deserve priority review, especially hosts using QEMU, VFIO, or DPDK with high-performance NICs, GPUs, or accelerators.

- The risk centers on ATS-enabled PCIe endpoints that become inaccessible during removal, link failure, or userspace teardown, where an IOMMU device-IOTLB flush can wait indefinitely.

- The issue is especially relevant to hosts without Intel IOMMU scalable mode enabled or supported, because an earlier disconnected-device guard did not fully cover that path.

- NVD had not assigned CVSS metrics at publication, so teams should triage by exposure and business impact rather than waiting for a severity score.

- Kernel updates or vendor backports containing the stable fixes are the clean remediation path, while interim risk reduction depends on avoiding unnecessary passthrough churn and ensuring out-of-band recovery works.

- Windows workloads are still exposed when they run atop affected Linux passthrough hosts, so ownership boundaries between Windows, Linux, virtualization, and network teams should not become patching boundaries.
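The scalable-mode point above can be checked per host. The Intel IOMMU driver exposes each remapping unit's registers in sysfs; this sketch assumes `/sys/class/iommu/dmar*/intel-iommu/ecap` holds the Extended Capability register in hex, and decodes bit 2 (Device-TLB support) and bit 43 (Scalable Mode Translation Support) following the VT-d specification's bit layout, which is worth confirming against the revision for your platform. Hardware support is not the same as scalable mode being in use: that also depends on the kernel's build-time default and on `intel_iommu=sm_on` or `sm_off` at boot, so the script reports only an explicit command-line override and leaves the default unresolved.

```python
from pathlib import Path
from typing import Dict, Optional, Tuple

ECAP_DT = 1 << 2     # Device-TLB support (assumed bit position, per VT-d spec)
ECAP_SMTS = 1 << 43  # Scalable Mode Translation Support (assumed bit position)


def decode_ecap(text: str) -> Dict[str, bool]:
    """Decode the hex ECAP value a dmar unit exposes in sysfs."""
    value = int(text.strip(), 16)
    return {
        "device_tlb": bool(value & ECAP_DT),
        "scalable_mode_supported": bool(value & ECAP_SMTS),
    }


def cmdline_scalable_override(cmdline: str) -> Optional[bool]:
    """True for sm_on, False for sm_off, None if the boot line sets neither."""
    result = None
    for token in cmdline.split():
        if token.startswith("intel_iommu="):
            for opt in token.split("=", 1)[1].split(","):
                if opt == "sm_on":
                    result = True
                elif opt == "sm_off":
                    result = False
    return result


def report(sys_root: Path = Path("/sys"),
           proc_root: Path = Path("/proc")) -> Tuple[Dict[str, Dict[str, bool]], Optional[bool]]:
    units = {}
    iommu_dir = sys_root / "class" / "iommu"
    if iommu_dir.is_dir():
        for unit in sorted(iommu_dir.glob("dmar*")):
            ecap = unit / "intel-iommu" / "ecap"
            if ecap.is_file():
                units[unit.name] = decode_ecap(ecap.read_text())
    try:
        cmdline = (proc_root / "cmdline").read_text()
    except OSError:
        cmdline = ""
    return units, cmdline_scalable_override(cmdline)


if __name__ == "__main__":
    units, override = report()
    for name, bits in units.items():
        print(name, bits)
    labels = {True: "sm_on", False: "sm_off", None: "none (build default applies)"}
    print("scalable mode override on kernel command line:", labels[override])
```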

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center