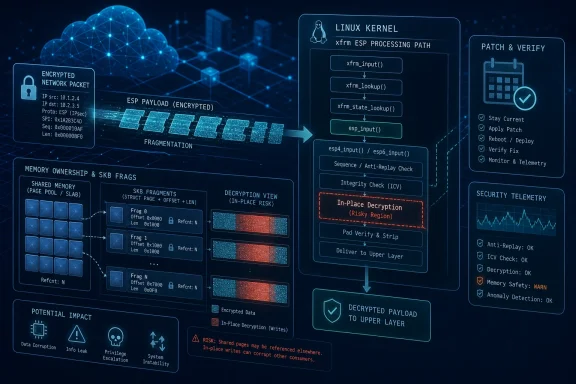

Microsoft published CVE-2026-43284 in its Security Update Guide on May 8, 2026, tracking a Linux kernel flaw in the xfrm ESP path where encrypted network packets can be decrypted in place over shared socket-buffer fragments. The bug is not a Windows kernel vulnerability, but it matters deeply to WindowsForum readers because Microsoft now ships, secures, and operates large amounts of Linux through Azure, Azure Linux, WSL-adjacent developer workflows, containers, and hybrid infrastructure. The immediate lesson is not that IPsec suddenly became unsafe; it is that modern operating systems increasingly fail at the seams between performance optimization and memory ownership. For administrators, the practical story is brutally simple: if Linux workloads live anywhere in your Microsoft estate, this is a patch-and-verify event, not a curiosity.

Microsoft’s Linux Problem Is Now Everyone’s Windows Problem

There was a time when a Linux kernel CVE appearing in a Microsoft security channel would have felt like an oddity. That era is over. Microsoft’s update guide is no longer just a map of Windows, Office, Exchange, and Edge risk; it is a front door into the mixed operating-system reality most enterprises already run.

CVE-2026-43284 sits in that uncomfortable middle ground. The vulnerable code is Linux networking code, specifically the xfrm framework used for IPsec transformations and the ESP receive path. Yet the people who need to care include Azure administrators, Kubernetes operators, security engineers using Defender telemetry, and Windows-first shops that quietly became Linux shops the moment they adopted containers.

That is the real significance of the disclosure. Microsoft did not suddenly make Linux vulnerable, and Windows desktops are not being rooted by this bug. But Microsoft’s ecosystem is now broad enough that a flaw in a Linux packet-decryption fast path can become a Microsoft operational incident.

For WindowsForum’s audience, this is the kind of vulnerability that rewards architectural literacy. It is not glamorous in the way a browser zero-day is glamorous. It does not begin with a phishing email or a malicious document. It begins with an assumption inside the kernel about whether a piece of memory is privately owned — and ends, in the worst case, with a local attacker moving from ordinary user privileges to root.

The Bug Lives Where Speed Meets Ownership

The phrase “avoid in-place decrypt on shared skb frags” sounds like a commit message written for six people in the world. Unfortunately, those six people are standing between the rest of us and a root shell.

In Linux networking, an skb (short for socket buffer) is the core structure used to carry packet data through the network stack. For performance reasons, that packet data does not always live in one neat, private, linear buffer. It may be spread across fragments, mapped pages, or memory borrowed from elsewhere. That is efficient, but it means the kernel must be very clear about who owns the bytes before it modifies them.

CVE-2026-43284 is about what happens when that clarity is missing. The vulnerable ESP path can decrypt data in place under conditions where the packet’s fragments are backed by shared pages. If those pages are not truly private to the packet, modifying them is no longer just packet processing. It becomes an unintended write into memory that may be visible or controllable through another path.

The fix described by the vulnerability title is conceptually straightforward: do not perform in-place decryption when the socket-buffer fragments are shared. Force a copy-on-write style path instead, so the kernel decrypts into memory it actually owns. In operating-system security, however, “conceptually straightforward” often arrives only after someone demonstrates that the fast path was wrong.

That is why this class of bug keeps returning. Kernel developers are constantly trading copies for speed, especially in networking, storage, and crypto paths. Every avoided copy saves CPU cycles and memory bandwidth. Every avoided copy also depends on the invariant that the data being modified cannot be observed or reused in a way the developer did not intend.
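The ownership rule can be illustrated with a toy model. The sketch below is plain Python, not kernel code: `Fragment`, `xor_decrypt`, and both decrypt functions are hypothetical names invented for illustration, and a one-byte XOR stands in for a real cipher. It only shows the shape of the mistake (writing through a reference to memory someone else can see) and the shape of the fix (copy first when the fragment is marked shared).

```python
def xor_decrypt(data, key):
    """Toy stand-in for a cipher: XOR every byte with a one-byte key, in place."""
    for i in range(len(data)):
        data[i] ^= key


class Fragment:
    """Hypothetical packet fragment that may reference a page it does not own."""

    def __init__(self, page, shared):
        self.page = page      # bytearray standing in for a memory page
        self.shared = shared  # True if another holder also references the page


def unsafe_decrypt_fragment(frag, key):
    """The buggy shape: write in place without asking who owns the page."""
    xor_decrypt(frag.page, key)
    return frag.page


def decrypt_fragment(frag, key):
    """The safer shape: copy a shared page before writing to it."""
    if frag.shared:
        frag.page = bytearray(frag.page)  # private copy owned by this packet
        frag.shared = False
    xor_decrypt(frag.page, key)
    return frag.page


if __name__ == "__main__":
    page = bytearray(b"ABCD")  # a page some other holder can also see
    unsafe_decrypt_fragment(Fragment(page, shared=True), key=0x20)
    print(page)  # bytearray(b'abcd'): the other holder's bytes changed
```

In the safe variant the caller's `page` is left untouched and the decrypted bytes land in the copy. The extra copy is exactly the cost the fast path was trying to avoid.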

Dirty Frag Is a Familiar Song in a New Register

Security researchers and vendors have been referring to this issue as part of “Dirty Frag,” a name that deliberately echoes Dirty Pipe. The comparison is not perfect, but it is useful. Both stories revolve around the kernel writing to page-backed memory in ways that break the mental model users and administrators rely on.

Dirty Pipe was memorable because it was both technically elegant and operationally alarming: an unprivileged local user could abuse kernel pipe-buffer behavior to overwrite data in the page cache. Dirty Frag appears in the same family of failure. It is not the same bug, but it rhymes with the same mistake: the kernel takes a shortcut involving shared memory and accidentally hands an attacker a write primitive.

The “Frag” part matters. This is not simply about pipes, nor simply about IPsec. It is about fragmented packet data, shared page references, and the subtle handling of nonlinear socket buffers. Modern kernels are full of these structures because high-throughput systems cannot afford to copy every byte at every boundary.

That does not make the flaw excusable. It makes it predictable. When optimization pushes more subsystems toward zero-copy or low-copy designs, memory ownership becomes a security boundary. If the boundary is implicit, stale, or inconsistently marked across code paths, attackers eventually find the mismatch.

The uncomfortable implication is that Dirty Frag is not an isolated engineering embarrassment. It is a warning about a design pressure that is only getting stronger. The cloud wants more throughput per watt. Containers want denser scheduling. VPNs, overlays, service meshes, and encrypted east-west traffic all push packets through elaborate kernel machinery. The attack surface is not shrinking; it is being optimized.

The Microsoft Angle Is Azure, Containers, and the Hybrid Estate

If your only computer is a Windows 11 laptop with no WSL, no Hyper-V Linux guests, no containers, and no remote Linux servers, CVE-2026-43284 is probably background noise. That is not the world most IT pros inhabit.

A Windows-first organization may still run Linux in Azure virtual machines, Azure Kubernetes Service node pools, appliance images, developer workstations, CI runners, NAS devices, security tools, and edge boxes. The Windows admin who once patched only domain controllers and file servers is now expected to understand container base images, cloud node images, and kernel live-patching status. Microsoft’s own security coverage reflects that reality.

The bug is especially relevant to systems where untrusted or semi-trusted local code can execute. That includes shared hosting, multi-tenant development servers, container platforms with weak isolation, build infrastructure, and compromised web workloads that begin with low privileges. Local privilege escalation bugs are often dismissed as “post-compromise,” but that phrase can be dangerously soothing.

Post-compromise is where real intrusions become durable. A web shell running as a service account is bad; a root shell on the host is worse. A malicious dependency in a CI job is bad; a kernel-assisted breakout path is worse. A low-privileged foothold on a Linux VM is bad; control of the underlying node can turn one incident into a cluster problem.

This is why Microsoft’s handling matters. The company’s customer base is full of enterprises that do not think of themselves as Linux-first, even when their production estate says otherwise. Publishing and tracking this kind of CVE through Microsoft channels helps close that perception gap.

IPsec Is Not the Villain, but It Is the Doorway

Because the flaw touches ESP, it is tempting to frame this as an IPsec vulnerability. That is only partly right. ESP is the path through which the vulnerable behavior can be reached, but the deeper issue is memory mutation in the presence of shared fragments.

IPsec remains a foundational technology for VPNs, host-to-host encryption, and secure tunnels. The problem is not that encryption failed cryptographically. The problem is that decryption is a write operation, and the kernel must not write decrypted bytes into storage it does not own. The bug sits below the level where administrators think in terms of ciphers and policies.

That distinction matters for risk communication. Telling a network team “there is an IPsec bug” may lead them to ask whether they use a particular VPN configuration. Telling a platform team “there is a local privilege escalation path in the kernel involving ESP packet handling and shared fragments” leads to a different conversation. The first sounds like a perimeter issue. The second sounds like a host-hardening and patch-management issue.

Some mitigations discussed in the Linux community focus on disabling or blocking affected modules where they are not needed. That can be reasonable in tightly controlled environments, but it is not a substitute for patching. Kernel module blacklisting is easy to get wrong, easy to drift from, and risky when production networking assumptions are poorly documented.

The safer administrative posture is to treat mitigations as temporary pressure relief. If the workload can tolerate disabling unused ESP-related paths, do it carefully and test it. But the end state should be a vendor kernel containing the proper fix.
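Before deciding whether a module-level mitigation even applies, it helps to know what is loaded. The following is a minimal sketch, assuming the ESP implementations appear as the loadable modules `esp4` and `esp6`. That watchlist is an assumption for illustration, not a list taken from any vendor advisory, and the function names are hypothetical. It parses text in the format of `/proc/modules`, where the module name is the first whitespace-separated field on each line.

```python
WATCHLIST = ("esp4", "esp6")  # assumption: adjust to your vendor's advisory


def loaded_modules(proc_modules_text):
    """Return the set of module names listed in /proc/modules-style text."""
    return {
        line.split()[0]
        for line in proc_modules_text.splitlines()
        if line.strip()
    }


def watched_modules_loaded(proc_modules_text, watchlist=WATCHLIST):
    """Return the watched module names that are currently loaded, sorted."""
    loaded = loaded_modules(proc_modules_text)
    return sorted(name for name in watchlist if name in loaded)
```

On a Linux host the input would typically be the contents of `/proc/modules`. An empty result is not proof of safety: code compiled directly into the kernel does not appear in that file, and a module that is absent now may still be loaded on demand later.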

Local Privilege Escalation Is Still Enterprise-Critical

Security scoring systems have trained too many organizations to prioritize remote code execution above all else. That instinct is understandable; worms and unauthenticated network bugs are terrifying. But local privilege escalation is how attackers finish the job.

A local kernel bug changes the economics of compromise. Without it, an attacker who lands in a restricted account may need credentials, misconfigurations, writable scripts, cron abuse, exposed sockets, or application secrets to climb higher. With it, the path may collapse into running a proof-of-concept binary and waiting for root.

That is especially dangerous in Linux server environments where local execution is not rare. Web applications run code. Build systems run code. Data-science notebooks run code. Container hosts run code submitted by developers who are not kernel security experts. SSH access for contractors, automation users, and support staff is common enough that “local” is not the same as “unlikely.”

The container angle deserves particular caution. Containers are not magic security bubbles; they are processes sharing a host kernel with namespaces, cgroups, seccomp profiles, and other restrictions layered on top. A kernel local privilege escalation may be blocked by a good runtime profile, or it may not. The answer depends on the exact exploit path, the syscalls allowed, the capabilities granted, and the host kernel version.

That makes blanket reassurance irresponsible. Administrators should not assume that “it is inside a container” means “it cannot reach the vulnerable path.” They should also not assume every container platform is equally exposed. The right answer is inventory, patch, verify, and review confinement settings.
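Reviewing confinement can start with what the kernel already reports about a process. The sketch below reads the `Seccomp` and `CapEff` fields that Linux exposes in `/proc/<pid>/status` and summarizes them. The function names are hypothetical, and reporting `CAP_NET_ADMIN` and `CAP_SYS_ADMIN` is an illustrative choice: the source does not say which capabilities, if any, a given exploit path requires.

```python
CAP_NET_ADMIN = 12  # bit positions as defined by Linux capabilities
CAP_SYS_ADMIN = 21
SECCOMP_MODES = {0: "disabled", 1: "strict", 2: "filter"}


def parse_status(status_text):
    """Turn 'Key:\\tvalue' lines from /proc/<pid>/status into a dict."""
    fields = {}
    for line in status_text.splitlines():
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip()
    return fields


def confinement_summary(status_text):
    """Summarize the seccomp mode and two effective capabilities."""
    fields = parse_status(status_text)
    cap_eff = int(fields.get("CapEff", "0"), 16)  # hex bitmask
    seccomp = int(fields.get("Seccomp", "0"))
    return {
        "seccomp": SECCOMP_MODES.get(seccomp, "unknown"),
        "cap_net_admin": bool((cap_eff >> CAP_NET_ADMIN) & 1),
        "cap_sys_admin": bool((cap_eff >> CAP_SYS_ADMIN) & 1),
    }
```

Run against the status text of a containerized process, a summary like this shows at a glance whether a seccomp filter is attached and whether broad capabilities were granted. It does not say whether a specific exploit is blocked; that still depends on the exploit path and the host kernel.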

The Patch Is Simple; The Fleet Is Not

The upstream fix direction is clear: mark the relevant datagram-splice fragments as shared and make ESP input take the safer copy path when shared fragments are present. In a single source tree, that is the kind of fix a kernel engineer can reason about. Across enterprise fleets, it becomes a logistics problem.

Linux kernel security updates do not arrive as one universal artifact. They are backported by distribution vendors, cloud image maintainers, appliance builders, live-patching providers, and platform teams. The running kernel version string may not tell the whole story because enterprise distributions routinely backport fixes without rebasing to the newest upstream kernel. Conversely, a new-looking kernel in a custom image may still lack a specific patch if the image pipeline is stale.

That is where many organizations stumble. They check a CVE scanner, see ambiguous results, and move on. For a bug like CVE-2026-43284, the better approach is to confirm the vendor advisory for the exact OS and kernel package, then verify that the running systems have actually booted into the fixed kernel or received a live patch.

Reboot discipline matters here. Linux administrators know the old trap: the package manager says the kernel update is installed, but `uname -r` says the host is still running yesterday’s vulnerable kernel. In cloud and container environments, that trap expands to node pools, golden images, autoscaling groups, and ephemeral runners.

The Microsoft estate adds another layer. Azure Marketplace images, Azure Linux images, third-party appliances, AKS node images, and self-managed VMs may all have different update paths. A Windows admin checking Microsoft Update will not see the full picture. The question is not “did Patch Tuesday run?” It is “which Linux kernels are actually executing in my environment today?”
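The installed-versus-running gap is easy to check mechanically. Here is a minimal sketch, assuming you already have the list of installed kernel release strings from your package manager. The helper names are hypothetical, and the ordering is a simple natural-sort heuristic rather than any distribution's real version-comparison rules. The release strings in the example are made up and say nothing about which kernels contain the fix.

```python
import re


def release_key(release):
    """Split a release like '5.15.0-113-generic' into comparable chunks.

    Numeric chunks sort numerically and always before text chunks.
    This is a heuristic, not a distribution's version-ordering algorithm.
    """
    return [
        (0, int(tok)) if tok.isdigit() else (1, tok)
        for tok in re.findall(r"\d+|[A-Za-z]+", release)
    ]


def newest_installed(installed):
    """Return the highest release string from a non-empty list."""
    return max(installed, key=release_key)


def running_is_newest(running, installed):
    """True if the running kernel is the newest one installed on disk."""
    return running == newest_installed(installed)


if __name__ == "__main__":
    installed = ["5.15.0-99-generic", "5.15.0-113-generic"]  # made-up values
    print(running_is_newest("5.15.0-99-generic", installed))  # False
```

On a live host, `platform.release()` from the Python standard library returns the same string as `uname -r` and can supply the `running` argument. A `True` result only means no newer kernel is waiting for a reboot; whether that kernel contains the fix is still a question for the vendor advisory.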

The Disclosure Shows How Fast Kernel Bugs Become Operational

The timeline around Dirty Frag has moved quickly. Public records and vendor advisories appeared in early May 2026, with CVE-2026-43284 tied to the ESP side of the issue and CVE-2026-43500 discussed in connection with RxRPC. Reports also indicate that proof-of-concept material has circulated publicly, which compresses the time defenders have to treat the issue as theoretical.

That does not mean every system is being exploited at scale. It does mean defenders should not wait for perfect telemetry before acting. Local privilege escalation bugs often become embedded in attacker toolkits precisely because they are useful after some other access path succeeds.

The mention of active attack in Microsoft security commentary raises the stakes further. Even when details are incomplete, that kind of language should shift an organization from watchful waiting to operational response. The burden moves from “prove we are vulnerable” to “prove our exposed Linux estate is fixed or accept the risk explicitly.”

There is also a broader disclosure-policy lesson. Kernel fixes can be hard to classify, and security significance is not always obvious at first glance. A commit that looks like defensive hygiene in a memory-handling path may become a roadmap for exploit developers once correlated with adjacent behavior. The distance between patch and weaponization has narrowed.

That is not an argument against transparency. It is an argument for faster consumption of upstream security signals. Enterprises cannot afford to treat kernel CVEs as slow-moving infrastructure trivia when public exploit development now follows them in days.

Windows Administrators Need a Linux Kernel Inventory

The most useful response to CVE-2026-43284 may have nothing to do with ESP specifically. It should force Windows-heavy organizations to answer a basic question: where are our Linux kernels?

Not Linux servers in the CMDB. Not “known Unix systems.” Actual kernels. The ones under Kubernetes nodes, virtual appliances, developer VMs, security sensors, storage controllers, lab boxes, embedded management tools, and temporary cloud workloads. If that inventory does not exist, every Linux kernel CVE becomes a scavenger hunt.

WSL deserves a careful mention here. The vulnerability at issue is in Linux kernel code, but exploitability depends on kernel configuration, reachable code paths, and environment. Administrators should avoid both panic and dismissal. The right posture is to keep WSL kernels and Windows hosts updated, understand whether Linux networking features are exposed in the environment, and not treat developer machines as exempt from kernel hygiene.

For enterprise teams, the key operational split is between systems you patch directly and systems patched through a platform owner. Self-managed Linux VMs are your problem. Managed Kubernetes nodes may be your cloud provider’s image problem, but you still own upgrade cadence. Appliances may require vendor firmware. Containers inherit the host kernel, so rebuilding an application image alone does not fix the kernel beneath it.
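One way to make that split visible is to group hosts by the kernel they are running and by who is responsible for patching it. The sketch below assumes inventory records shaped as dictionaries with `name`, `kernel`, and `owner` keys. That schema and the function name are hypothetical, chosen for illustration rather than taken from any specific CMDB or cloud API.

```python
from collections import defaultdict


def group_by_kernel_and_owner(hosts):
    """Group host names by (running kernel, patch owner).

    `hosts` is an iterable of dicts with 'name', 'kernel', and 'owner'
    keys (a hypothetical schema).
    """
    groups = defaultdict(list)
    for host in hosts:
        groups[(host["kernel"], host["owner"])].append(host["name"])
    return {key: sorted(names) for key, names in groups.items()}


if __name__ == "__main__":
    inventory = [  # made-up example records
        {"name": "vm-build-01", "kernel": "5.15.0-99-generic", "owner": "self"},
        {"name": "aks-node-a", "kernel": "5.15.0-113-generic", "owner": "platform"},
        {"name": "vm-build-02", "kernel": "5.15.0-99-generic", "owner": "self"},
    ]
    for (kernel, owner), names in group_by_kernel_and_owner(inventory).items():
        print(kernel, owner, names)
```

Each resulting group maps to one remediation route: a package update and reboot for self-managed hosts, a node image upgrade for platform-managed pools, or a firmware request to an appliance vendor.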

This is where Windows and Linux administration cultures collide. Windows patching tends to be centralized, scheduled, and visible through familiar management planes. Linux patching in cloud-native environments is often distributed across infrastructure-as-code, image pipelines, package repositories, and orchestration systems. CVE-2026-43284 punishes the assumption that those worlds are separate.

The Real Risk Is the Copy Nobody Wanted to Pay For

At the engineering level, the fix for this bug reasserts an old truth: if you are going to modify data, you need to own it. The kernel tried to avoid unnecessary copying for performance. Under the wrong conditions, that optimization crossed a security boundary.

This is not unique to Linux. Windows, BSD, hypervisors, browsers, databases, and storage engines all use reference counting, shared buffers, page caches, and copy-on-write tricks to go faster. The difference is that the Linux kernel’s visibility makes these failures unusually legible once disclosed. We can see the machinery, the assumptions, and the patch.

The industry should resist the lazy conclusion that performance optimization is the enemy. Without zero-copy and low-copy techniques, modern networking would be vastly more expensive. The better conclusion is that ownership metadata must be treated as security-critical state, not housekeeping.

That point matters for developers far outside kernel networking. The same pattern appears anywhere a system borrows, maps, shares, or defers copying of mutable data. If one subsystem thinks a buffer is private and another knows it is shared, the attacker only needs to find the gap between those beliefs.

CVE-2026-43284 is therefore more than a patch note. It is a case study in how modern systems fail when performance shortcuts become invisible contracts.

The Practical Lesson Hidden in the Fragment

For all the kernel nuance, the operational response should be concrete. Organizations do not need every administrator to understand skb fragment ownership to handle the incident well. They need disciplined update management and a refusal to wave away Linux risk because the corporate desktop standard is Windows.

The most important actions are also the least exotic:

- Confirm which Linux distributions, kernels, cloud images, Kubernetes node pools, and appliances in your environment are covered by vendor fixes for CVE-2026-43284.

- Verify the running kernel, not just the installed package, because a host that has not rebooted may still be vulnerable.

- Treat local privilege escalation as a serious post-compromise accelerator on shared servers, developer systems, CI infrastructure, and container hosts.

- Use temporary mitigations such as disabling unused affected modules only where they are supported, tested, and documented.

- Review container runtime confinement, including seccomp and capability settings, but do not treat container isolation as a replacement for patching.

- Make Linux kernel inventory part of normal Microsoft-estate security operations rather than an emergency exercise during each new CVE.

CVE-2026-43284 will eventually disappear into updated kernels, closed tickets, and compliance reports, but the pattern behind it will not. Microsoft’s publication of a Linux kernel vulnerability is a reminder that the modern Windows estate extends into Linux, cloud images, containers, and memory-management assumptions most users never see. The next serious flaw may not mention ESP, xfrm, or skb fragments at all, but it will likely ask the same question in a different subsystem: when the operating system writes to memory for speed, is it absolutely sure that memory is its own?

Source: MSRC Security Update Guide - Microsoft Security Response Center