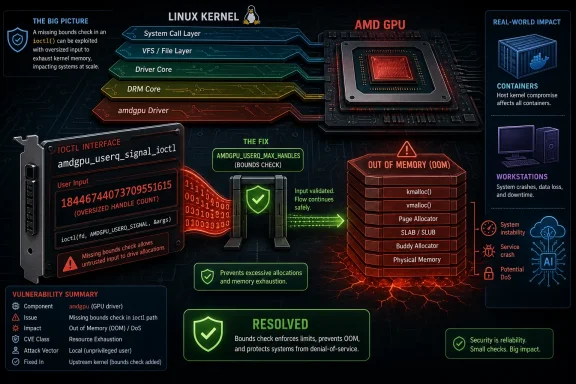

CVE-2026-43400 is a newly published Linux kernel vulnerability, disclosed on May 8, 2026, in AMD’s open-source amdgpu driver, where oversized user input to the amdgpu_userq_signal_ioctl path can trigger out-of-memory conditions and potentially be abused for denial-of-service attacks. The fix is almost comically small: reject values above AMDGPU_USERQ_MAX_HANDLES. The lesson is larger than the patch. Modern GPU drivers have become kernel-scale attack surfaces, and this CVE is a reminder that “just graphics” code now sits in the blast radius of desktops, workstations, cloud hosts, AI boxes, and Windows-adjacent Linux deployments.

The more interesting story is how ordinary this has become. Microsoft, once the company most associated with closed desktop operating systems, now publishes, consumes, and operationalizes open-source security data at enterprise scale. The Security Update Guide has become not only a Patch Tuesday dashboard but also a place where Microsoft customers encounter vulnerabilities in components that sit adjacent to, underneath, or alongside Microsoft products.

That is good for transparency but challenging for triage. A CVE appearing in an MSRC context can trigger Windows-shaped assumptions: Which KB fixes it? Which Windows versions are affected? Is there an out-of-band update? For CVE-2026-43400, those are probably the wrong first questions. The better question is: which Linux kernels in my estate include the vulnerable amdgpu code, and which vendor update contains the backport?

This is where security tooling should earn its keep. Asset inventory needs to distinguish Windows GPU drivers from Linux kernel GPU drivers, physical desktops from cloud images, and loaded kernel modules from merely installed packages. Without that precision, organizations either overreact to irrelevant CVEs or miss the ones hiding in plain sight.

But overlooked does not mean unimportant. The machines most likely to expose this code are often high-value workstations and specialized Linux systems. They may belong to developers, researchers, designers, engineers, or students. They may sit outside the most disciplined server patch rings. They may have privileged users, SSH access, container workloads, or direct paths into build systems.

A local denial-of-service bug also changes character depending on the local user model. On a single-user desktop, the attacker and victim may be the same person, which lowers practical risk. On a shared workstation, a lab machine, a remote development host, or a GPU-enabled jump box, the same flaw can become a way to disrupt others. Security severity is not only about exploit mechanics; it is about where the vulnerable mechanism lives.

There is another subtle point: GPU device access is often granted for performance reasons before security teams fully model the implications. Users who would never receive broad administrative rights may still receive access to

The patch’s existence is therefore a good moment to revisit local GPU access. Not to lock everything down reflexively, but to ensure that access maps to need. If a server has an AMD GPU but no workload should be issuing graphics or compute commands, device permissions deserve scrutiny. If containers receive GPU devices, the host kernel update cadence matters. If a workstation is shared, kernel CVEs are not merely the owner’s problem.

The more strategic action is to classify where Linux GPU drivers exist in your environment. That includes obvious Linux desktops, but also dual-boot systems, developer machines, hypervisors, lab boxes, build hosts, and GPU-enabled cloud instances. If your Windows management stack has no view into those machines, this CVE is another argument for closing that visibility gap.

The lack of an NVD score should not block triage. Treat the vulnerability as a local denial-of-service issue affecting Linux systems using AMDGPU, then adjust priority based on exposure. A shared GPU host deserves faster attention than a personal test laptop. A production compute node deserves more urgency than an offline hobby machine.

Most importantly, do not confuse absence of drama with absence of risk. Kernel memory-exhaustion bugs are often mundane in description and ugly in practice. If a user-controlled number can push privileged code into excessive allocation, the patch is worth taking.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center

A Tiny Bounds Check Exposes a Big Kernel Assumption

The vulnerability description is terse, even by kernel CVE standards: huge input values in amdgpu_userq_signal_ioctl can lead to an out-of-memory condition, so the driver now checks those values against AMDGPU_USERQ_MAX_HANDLES. That is not a cinematic bug. There is no dramatic remote exploit chain in the public record, no browser sandbox escape, no wormable network daemon.

But kernel bugs rarely need to be theatrical to matter. An ioctl is a userspace-to-kernel command channel, and GPU drivers expose many of them because applications, compositors, compute runtimes, and graphics stacks need to submit work, synchronize fences, manage buffers, and coordinate queues. When one of those paths trusts a user-controlled count too much, the kernel may allocate memory based on an attacker-supplied value. If that value is enormous, the bug stops being a programming oversight and becomes a resource-exhaustion primitive.

That is the core of CVE-2026-43400. The vulnerable path lives in the AMDGPU user queue signaling logic, where userspace can pass handle-related counts into a kernel routine. The fix adds an upper bound that the patch authors describe as large enough for legitimate use while preventing pathological allocations. It is security engineering at its most unglamorous: decide what “reasonable” means, encode it, and stop pretending the caller will behave.
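The shape of that fix can be modeled in a few lines. The sketch below is a userspace Python model, not the kernel's C code: the function names, the choice of error code, and the limit value are all hypothetical, chosen only to show a count being rejected before anything is allocated. The real constant is AMDGPU_USERQ_MAX_HANDLES, whose value is not given in the CVE description.

```python
import errno

# Hypothetical limit for illustration only; the real
# AMDGPU_USERQ_MAX_HANDLES value lives in the kernel source.
EXAMPLE_MAX_HANDLES = 1 << 16


def validate_handle_counts(*counts, limit=EXAMPLE_MAX_HANDLES):
    """Return 0 if every user-supplied count is within the limit,
    or a negative errno (kernel style) if any count is out of range."""
    for count in counts:
        if count < 0 or count > limit:
            return -errno.EINVAL
    return 0


def handle_signal_request(num_handles, limit=EXAMPLE_MAX_HANDLES):
    """Model of an ioctl handler: validate first, allocate second."""
    status = validate_handle_counts(num_handles, limit=limit)
    if status != 0:
        return status, None        # rejected before any allocation
    return 0, [0] * num_handles    # stand-in for the kernel-side array
```

The ordering is the point of the model: the check runs before the allocation, so an absurd count costs the caller an error code rather than costing the system memory.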

For WindowsForum readers, the AMDGPU part may sound like Linux-only plumbing. In strict product terms, this is not a Windows display-driver CVE. But the MSRC listing matters because Microsoft increasingly has to account for open-source components across its estate: cloud hosts, Linux distributions, developer platforms, container environments, and security feeds consumed by enterprise patch teams. Windows shops no longer get to ignore Linux kernel CVEs simply because the affected file path starts with drivers/gpu/drm.

The GPU Driver Is No Longer a Peripheral Detail

For years, GPU drivers were treated as performance-sensitive but conceptually separate from the operating system’s security story. That distinction has collapsed. The GPU driver is now one of the busiest and most privileged brokers between untrusted user workloads and expensive shared hardware.

On a modern Linux desktop, the amdgpu kernel driver is not merely painting pixels. It is coordinating command submission, synchronization objects, buffer objects, virtual memory mappings, display state, power management, video acceleration, and compute-oriented workloads. On developer workstations and AI systems, that same driver may be exercised by browser tabs, games, Wayland compositors, containers, machine-learning frameworks, ROCm components, and virtualization stacks.

This is why a bounds check in a signal ioctl deserves attention. The flaw is not that AMDGPU has a queue mechanism; it needs one. The flaw is that any kernel interface that accepts counts, sizes, handles, or array lengths has to assume malice, not cooperation. A single missing maximum can turn a normal synchronization operation into a memory-pressure attack.

The affected description points to out-of-memory behavior rather than privilege escalation. That distinction is important, but it should not make administrators shrug. Denial of service in kernel space can mean a local user taking down a shared workstation, a compute node, a thin-client host, or a desktop session that is otherwise carefully locked down. On machines where the GPU is part of a production workflow, availability is a security property.

There is also a broader trend here. GPU drivers keep absorbing complexity that once belonged higher in the stack. The closer that complexity gets to the kernel, the more every validation mistake resembles the classic file-system and network-driver bugs of earlier eras. The vocabulary has changed from packets and blocks to fences and queues, but the failure mode is familiar.

CVE Scoring Has Not Caught Up With Operational Reality

At publication, NVD had received the CVE record but had not yet provided CVSS scoring for version 4.0, 3.x, or 2.0. That is common for fresh kernel CVEs, and it leaves defenders in an awkward middle state. The record is public. The fix exists. The severity number that many dashboards use to sort urgency is absent.

That gap is not just bureaucratic trivia. Many organizations still route patch prioritization through CVSS thresholds, even though kernel vulnerabilities often need local context to evaluate properly. A local denial-of-service bug on a single-user laptop may be annoying but not urgent. The same bug on a multi-user GPU workstation, a university compute cluster, a VDI-like Linux host, or a cloud image with GPU passthrough can be materially more important.

The CVE text says “could be exploited,” but it does not provide a public proof of concept in the record supplied here. That should keep the tone measured. There is no basis for claiming active exploitation from the provided disclosure. There is, however, enough to say that the vulnerable code path accepted huge user-controlled inputs and could exhaust memory, which is precisely the sort of condition local attackers and mischievous users have historically found easy to weaponize.

The absence of a score also illustrates why fresh kernel CVEs can feel noisy. The Linux kernel project now assigns CVEs to a large number of fixes, including narrow correctness fixes that may or may not be reachable in typical deployments. That is better than burying security-relevant changes in a commit log, but it pushes more interpretation onto IT teams. Not every kernel CVE is a five-alarm incident. Not every unscored CVE is harmless.

CVE-2026-43400 sits in the middle. It is not a remote-code-execution panic. It is not a theoretical documentation cleanup either. It is a kernel input-validation flaw in a privileged GPU driver, with a clear denial-of-service failure mode and a straightforward fix.

The Patch Says More Than the CVE Record

The essential fix is to compare user-provided values against AMDGPU_USERQ_MAX_HANDLES. That constant is the policy line: legitimate workloads should not need more than this, and malicious or nonsensical workloads should not be allowed to force the kernel into oversized allocation paths. In security terms, the patch converts an implicit trust boundary into an explicit one.

There are three stable kernel references associated with the CVE record, indicating that the correction was propagated into maintained kernel trees rather than left only in a development branch. The CVE description also notes that the change was cherry-picked from an upstream commit. That is the normal Linux kernel security conveyor belt: fix the code, backport to stable branches, then let distributions and vendors absorb the patch into their kernels.

The timing matters. The CVE was published on May 8, 2026, but the underlying patch discussion appears to have happened earlier in the AMD graphics development lists. This is often how kernel security becomes visible: the technical fix lands first as an engineering change, and the CVE label arrives after the fact. Administrators who rely only on CVE feeds may see the issue later than developers tracking upstream stable commits.

That sequence is not necessarily a failure. It is a consequence of how open-source kernel maintenance works. The public commit trail is the operating system’s bloodstream; CVE databases are the medical chart. Both matter, but they do not move at the same speed.

For defenders, the practical question is not whether the commit is elegant. It is whether your running kernel contains the bound check. Distribution advisories, vendor kernels, and cloud images may package the fix under their own update names. The CVE identifier helps track the issue, but the real control is still the kernel package deployed on the host.
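A first triage step is checking whether a host even has the driver loaded. The sketch below parses /proc/modules-style text for an amdgpu entry and prints the running kernel release. It cannot tell whether the fix is present; that still has to come from the distribution's advisory for the installed kernel package. The function name is illustrative.

```python
import platform
from pathlib import Path


def module_loaded(modules_text, name="amdgpu"):
    """Return True if `name` is listed in /proc/modules-style text.

    Each line of /proc/modules begins with the module name
    followed by a space.
    """
    return any(
        line.split(" ", 1)[0] == name
        for line in modules_text.splitlines()
        if line
    )


if __name__ == "__main__":
    proc_modules = Path("/proc/modules")
    text = proc_modules.read_text() if proc_modules.exists() else ""
    print("kernel release:", platform.release())
    print("amdgpu loaded:", module_loaded(text))
```

A driver compiled into the kernel rather than loaded as a module does not appear in /proc/modules, so a negative result here is not proof of absence.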

AMDGPU Is a Linux Problem With Windows-Era Consequences

The obvious affected constituency is Linux users with AMD GPUs. That includes desktop Linux gamers, workstation users, developers running Wayland or Xorg, and compute users relying on AMD graphics or accelerators. If the amdgpu driver is loaded and the vulnerable code is present, the machine is in scope.

The less obvious constituency is Windows-centered IT. Many Windows environments now include Linux in ways that do not look like a traditional Linux server fleet. Developers run WSL. Security teams run Linux analysis boxes. Data scientists run GPU-enabled Linux workstations. Enterprises deploy Linux containers on Windows-managed networks. Azure and other cloud estates mix Windows and Linux routinely, often under the same vulnerability-management program.

This specific CVE should not be misread as a Windows kernel vulnerability. It is not evidence that the AMD Radeon driver on Windows is affected. The vulnerable code path is in the Linux kernel’s DRM AMDGPU driver. But the fact that Microsoft’s Security Update Guide carries the CVE is a sign of where enterprise security has gone: dependency boundaries are now organizationally blurrier than product boundaries.

That blurring creates a familiar operational trap. A Windows-heavy shop may have mature Patch Tuesday routines but weaker muscle memory for kernel updates on developer Linux boxes. Those machines are often more permissive, more experimental, and more likely to have direct access to source code, credentials, build systems, or internal networks. A local denial-of-service vulnerability on such a host may not steal data, but it can disrupt work and create footholds for more creative abuse.

The right response is not panic. It is inventory. Know which systems run AMD GPUs under Linux. Know which ones are multi-user. Know which ones expose GPU devices into containers. Know which ones are running vendor kernels that lag upstream stable. The organizations that can answer those questions quickly will treat this CVE as a routine patch. The ones that cannot will treat every GPU CVE as a scavenger hunt.
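The first of those inventory questions can be answered from sysfs. The sketch below walks the PCI device list and reports entries that carry the PCI vendor ID used by AMD graphics hardware (0x1002) together with a display-controller class code (base class 0x03). The function name and the sysfs_root parameter are illustrative; the parameter exists only so the logic can be exercised against a fake directory tree.

```python
from pathlib import Path

AMD_VENDOR_ID = "0x1002"       # PCI vendor ID for AMD/ATI graphics devices
DISPLAY_CLASS_PREFIX = "0x03"  # PCI base class 0x03 = display controller


def find_amd_gpus(sysfs_root="/sys"):
    """Return PCI addresses of AMD display controllers found under sysfs."""
    found = []
    devices = Path(sysfs_root) / "bus" / "pci" / "devices"
    if not devices.is_dir():
        return found
    for dev in sorted(devices.iterdir()):
        try:
            vendor = (dev / "vendor").read_text().strip().lower()
            pci_class = (dev / "class").read_text().strip().lower()
        except OSError:
            continue  # attributes missing or unreadable; skip this device
        if vendor == AMD_VENDOR_ID and pci_class.startswith(DISPLAY_CLASS_PREFIX):
            found.append(dev.name)
    return found


if __name__ == "__main__":
    print(find_amd_gpus())
```

A hit only shows that AMD graphics hardware is present. Whether the amdgpu driver is bound to it, and whether the kernel carries the fix, are separate checks.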

Denial of Service Is Still a Security Bug

The phrase “out of memory” can make a vulnerability sound like a nuisance. Every sysadmin has seen a bad process eat RAM. Every developer has written a test that accidentally consumed too much memory. But kernel-mediated memory exhaustion is different because it can bypass the usual expectations about process containment and fault isolation.

If a normal userspace program allocates too much memory, the kernel has tools: limits, cgroups, the OOM killer, accounting, and policy. If a kernel ioctl path performs allocations on behalf of userspace without sane bounds, the line between “the process asked for too much” and “the kernel trapped itself into doing too much” becomes much thinner. The attacker does not need to corrupt memory to cause damage. They only need to persuade privileged code to spend resources irresponsibly.

That is especially relevant for graphics. GPU stacks already occupy a fragile part of the user experience. A hung GPU can freeze a compositor, kill a session, wedge a display server, or force a reboot even when the rest of the operating system is theoretically intact. Linux has improved dramatically here, but anyone who has debugged amdgpu resets, fence timeouts, or compositor lockups knows that graphics failure often feels like full-system failure to the person at the keyboard.

In shared environments, the stakes rise. A local user who can reliably exhaust memory through a GPU ioctl may be able to disrupt other users on the same host. On lab machines, render nodes, CI systems, and GPU workstations, that is enough to matter. Availability attacks are not second-class risks when the affected machine is expensive, scarce, or central to a workflow.

This is also why input validation remains the least glamorous and most durable security discipline in kernel development. The vulnerable pattern is simple: userspace supplies a number, kernel code uses it to size work, and the number is not capped tightly enough. The fix is equally simple. The cost of missing it is borne by everyone who assumes a local interface cannot become a local weapon.
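A rough calculation shows why the cap matters. Assume, purely for illustration, that the count is an unsigned 32-bit field and that each entry occupies 4 bytes; neither detail is stated in the CVE record, and the cap below is a hypothetical value rather than the kernel's. The worst-case request with and without a limit then looks like this:

```python
HANDLE_SIZE_BYTES = 4          # assumption: one 32-bit handle per entry
U32_MAX = 0xFFFFFFFF           # assumption: count is an unsigned 32-bit field
EXAMPLE_MAX_HANDLES = 1 << 16  # hypothetical cap, not the kernel's value


def worst_case_bytes(max_count, element_size=HANDLE_SIZE_BYTES):
    """Largest array a single request could ask the kernel to size."""
    return max_count * element_size


uncapped = worst_case_bytes(U32_MAX)
capped = worst_case_bytes(EXAMPLE_MAX_HANDLES)

print(f"uncapped: {uncapped / 2**30:.1f} GiB")
print(f"capped:   {capped / 2**10:.0f} KiB")
```

Under these assumptions the uncapped worst case is just under 16 GiB for a single array, against 256 KiB with the cap in place. The exact figures depend on the real field widths, but the gap of several orders of magnitude is what turns a missing comparison into a denial-of-service primitive.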

The User Queue Era Raises the Validation Bar

The mention of userq is a clue to the direction of modern GPU drivers. User queues are part of a broader effort to make command submission more efficient and flexible by allowing userspace and the kernel to coordinate GPU work with less overhead. That is good for performance, latency, and modern graphics and compute workloads. It also means the kernel must police increasingly rich user-provided structures.

Every time the graphics stack exposes a faster path, it has to decide how much trust moves into userspace. The answer should be “as little as possible,” but performance engineering is often a negotiation with reality. Drivers need to avoid excessive copying, redundant validation, and heavyweight locks. Attackers, meanwhile, are perfectly happy to explore every fast path for missing bounds checks.

CVE-2026-43400 does not prove that AMDGPU user queues are unsafe as a design. It illustrates a more ordinary point: this is exactly the kind of bug that appears when new mechanisms are pushed into production and then hardened through review, fuzzing, and real-world use. Mature kernel subsystems are not bug-free; they are bug-resistant because they accumulate guardrails.

The fix also suggests the expected legitimate range was already understood. AMDGPU_USERQ_MAX_HANDLES is described as large enough for genuine use cases. That detail matters because good security limits are not arbitrary. A bound that breaks real workloads will be reverted, worked around, or ignored. A bound that reflects actual engineering constraints becomes part of the contract.

For developers building against GPU APIs, the lesson is to stay within documented expectations rather than relying on permissive kernel behavior. For administrators, the lesson is to avoid treating GPU driver updates as optional unless a display is visibly broken. Security fixes in this layer may not announce themselves with a blue screen or a failed boot. They may be one-line validations that prevent the next local crash.

Patch Management Has to Include the Machines Under the Desks

The highest-risk systems for this CVE are not necessarily the most obvious servers in the rack. They may be developer desktops, Linux gaming rigs repurposed for work, lab workstations, university machines, media production boxes, or AI experimentation hosts. These are the systems with real GPUs, real users, and sometimes looser change control.

That creates an uncomfortable patch-management asymmetry. Servers are inventoried because they host services. Laptops are managed because they are endpoints. GPU workstations often fall between those categories. They are powerful enough to be infrastructure, personal enough to be treated as snowflakes, and specialized enough that teams hesitate to update kernels casually.

Kernel updates on GPU machines also carry a perceived regression risk. AMDGPU has a long and public history of users reporting hangs, resets, firmware mismatches, and compositor oddities across different kernel and Mesa combinations. Many of those reports are not security issues, and many are configuration-specific. But the operational memory is real: people who depend on a stable graphics stack are reluctant to touch it.

That reluctance is understandable but dangerous when it becomes indefinite deferral. CVE-2026-43400 is exactly the sort of fix that may be buried inside a routine stable kernel update alongside dozens of unrelated changes. Waiting for a perfect, isolated, single-CVE patch may not be realistic for mainstream distributions. Testing and staged rollout are the better compromise.

Organizations should also pay attention to containers and device passthrough. If GPU device nodes are exposed into containers, the host kernel driver remains the enforcement point. Container boundaries do not magically make a vulnerable ioctl safe. The process may be namespaced, but the driver is still in the host kernel, and the memory pressure is still host memory pressure.

Microsoft’s Presence Is a Signal, Not a Windows Panic Button

The source link provided points to Microsoft’s Security Update Guide, which can be jarring for a Linux kernel AMDGPU issue. The correct interpretation is not that Windows suddenly inherited the Linux DRM driver. It is that Microsoft’s security surface includes open-source software and Linux-based components in enough places that MSRC tracks relevant CVEs for customer visibility.

That distinction matters for Windows administrators. If your environment is purely Windows clients running AMD’s Windows display driver, this CVE is not the basis for emergency Radeon driver action. If your environment includes Linux hosts, Azure workloads, WSL-based development workflows, custom images, or GPU-enabled Linux systems, the CVE belongs in your normal Linux vulnerability-management process.

The more interesting story is how ordinary this has become. Microsoft, once the company most associated with closed desktop operating systems, now publishes, consumes, and operationalizes open-source security data at enterprise scale. The Security Update Guide has become not only a Patch Tuesday dashboard but also a place where Microsoft customers encounter vulnerabilities in components that sit adjacent to, underneath, or alongside Microsoft products.

That is good for transparency but challenging for triage. A CVE appearing in an MSRC context can trigger Windows-shaped assumptions: Which KB fixes it? Which Windows versions are affected? Is there an out-of-band update? For CVE-2026-43400, those are probably the wrong first questions. The better question is: which Linux kernels in my estate include the vulnerable amdgpu code, and which vendor update contains the backport?

This is where security tooling should earn its keep. Asset inventory needs to distinguish Windows GPU drivers from Linux kernel GPU drivers, physical desktops from cloud images, and loaded kernel modules from merely installed packages. Without that precision, organizations either overreact to irrelevant CVEs or miss the ones hiding in plain sight.
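As one way to make the "loaded module versus installed package" distinction concrete, the sketch below checks whether amdgpu appears in the list of loaded modules on a Linux host. It assumes the standard /proc/modules layout, in which each line begins with the module name; the helper name `module_is_loaded` is hypothetical.

```python
from pathlib import Path


def module_is_loaded(proc_modules_text: str, name: str = "amdgpu") -> bool:
    """Return True if `name` appears as a loaded module.

    Each line of /proc/modules starts with the module name followed by
    whitespace-separated fields, so only the first field is compared.
    """
    for line in proc_modules_text.splitlines():
        fields = line.split()
        if fields and fields[0] == name:
            return True
    return False


if __name__ == "__main__":
    proc_modules = Path("/proc/modules")
    if proc_modules.exists():
        print("amdgpu loaded:", module_is_loaded(proc_modules.read_text()))
    else:
        print("/proc/modules not found; this does not look like a Linux host")
```

A negative result is not proof of absence: a driver compiled into the kernel rather than built as a module will not be listed there, and an installed but unloaded module can still be loaded later.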

The Real Risk Is the Systems Nobody Classifies

CVE-2026-43400 is unlikely to become famous. It has no catchy name, no logo, and no public scoring at the time of publication. Its exploit story is local and resource-oriented. Its patch is a bounds check. In the vulnerability attention economy, that makes it easy to overlook.

But overlooked does not mean unimportant. The machines most likely to expose this code are often high-value workstations and specialized Linux systems. They may belong to developers, researchers, designers, engineers, or students. They may sit outside the most disciplined server patch rings. They may have privileged users, SSH access, container workloads, or direct paths into build systems.

A local denial-of-service bug also changes character depending on the local user model. On a single-user desktop, the attacker and victim may be the same person, which lowers practical risk. On a shared workstation, a lab machine, a remote development host, or a GPU-enabled jump box, the same flaw can become a way to disrupt others. Security severity is not only about exploit mechanics; it is about where the vulnerable mechanism lives.

There is another subtle point: GPU device access is often granted for performance reasons before security teams fully model the implications. Users who would never receive broad administrative rights may still receive access to /dev/dri devices because their workloads require acceleration. That access is normal, but it is also a route into a complex kernel driver. The policy decision should be conscious, not accidental.

The patch’s existence is therefore a good moment to revisit local GPU access. Not to lock everything down reflexively, but to ensure that access maps to need. If a server has an AMD GPU but no workload should be issuing graphics or compute commands, device permissions deserve scrutiny. If containers receive GPU devices, the host kernel update cadence matters. If a workstation is shared, kernel CVEs are not merely the owner’s problem.
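A review of local GPU access can start with something as simple as listing the device nodes under /dev/dri and summarizing their permission bits. The following is a minimal sketch under that assumption; the function names `describe_access` and `audit_dri` are hypothetical, and the three-way classification is a simplification for illustration.

```python
import os
import stat


def describe_access(mode: int) -> str:
    """Summarize who may open a device node, based on its permission bits."""
    if mode & (stat.S_IROTH | stat.S_IWOTH):
        return "world-accessible"
    if mode & (stat.S_IRGRP | stat.S_IWGRP):
        return "group-restricted"
    return "owner-only"


def audit_dri(directory: str = "/dev/dri") -> list[tuple[str, int, str]]:
    """Return (node name, group id, access summary) for each character device."""
    results = []
    if not os.path.isdir(directory):
        return results
    for entry in sorted(os.listdir(directory)):
        info = os.stat(os.path.join(directory, entry))
        if stat.S_ISCHR(info.st_mode):  # skip subdirectories and other non-devices
            results.append((entry, info.st_gid, describe_access(info.st_mode)))
    return results


if __name__ == "__main__":
    for name, gid, access in audit_dri():
        print(f"{name}: gid={gid}, {access}")
```

Permission bits are only part of the picture. Access control lists applied by a login manager and device nodes passed into containers can both grant access that this listing will not show, so the output is a starting point for the policy conversation rather than a verdict.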

The Practical Read for WindowsForum Readers

The concrete action is refreshingly simple: patch the kernel through your distribution or vendor channel once the fix is available for your branch. Do not try to hand-apply upstream commits to production machines unless that is already how you manage kernels. For most users, the safe path is a tested distribution update, followed by a reboot into the corrected kernel.

The more strategic action is to classify where Linux GPU drivers exist in your environment. That includes obvious Linux desktops, but also dual-boot systems, developer machines, hypervisors, lab boxes, build hosts, and GPU-enabled cloud instances. If your Windows management stack has no view into those machines, this CVE is another argument for closing that visibility gap.

The lack of an NVD score should not block triage. Treat the vulnerability as a local denial-of-service issue affecting Linux systems using AMDGPU, then adjust priority based on exposure. A shared GPU host deserves faster attention than a personal test laptop. A production compute node deserves more urgency than an offline hobby machine.

Most importantly, do not confuse absence of drama with absence of risk. Kernel memory-exhaustion bugs are often mundane in description and ugly in practice. If a user-controlled number can push privileged code into excessive allocation, the patch is worth taking.

The Bounds Check Is the Message

CVE-2026-43400 reduces to a few operational truths that are easy to state and easy to miss. The patch is small, but the systems around it are not.

- CVE-2026-43400 affects the Linux kernel’s AMDGPU driver, not the Windows Radeon display driver.

- The bug allows oversized user input to the amdgpu_userq_signal_ioctl path to create an out-of-memory condition.

- The fix adds an upper bound using AMDGPU_USERQ_MAX_HANDLES, which is intended to preserve legitimate use while rejecting extreme values.

- NVD had not assigned a CVSS score at publication, so administrators should prioritize based on local exposure rather than waiting for a number.

- Linux systems with AMD GPUs, shared GPU workstations, and hosts exposing GPU devices to containers deserve the closest attention.

- Windows-centric IT teams should treat the MSRC listing as a reminder that Linux components are now part of many Microsoft-adjacent environments.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center