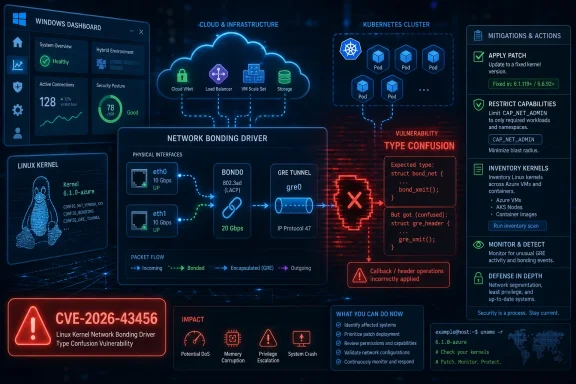

CVE-2026-43456 is a Linux kernel bonding-driver vulnerability, published by NVD on May 8, 2026, and modified on May 11, in which a local privileged user can trigger type confusion when a non-Ethernet device, such as a GRE tunnel, is enslaved to a bond. The bug is not a Windows vulnerability in the ordinary Patch Tuesday sense, despite being listed through Microsoft’s security update machinery. It is a reminder that Microsoft’s security perimeter now includes Linux kernels running in clouds, containers, appliances, WSL-adjacent workflows, and hybrid estates. The practical story is less “panic now” than “know where Linux networking is hiding in your Windows shop.”

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center

A Linux Kernel Bug Lands in a Microsoft-Shaped Inbox

The oddity of CVE-2026-43456 is not the flaw itself. Linux kernel networking bugs are a steady drumbeat, and bonding has long been one of those unglamorous subsystems whose job is to make multiple interfaces behave like one. The oddity is the route by which many Windows administrators will first encounter it: a Microsoft Security Response Center entry pointing to a kernel.org-assigned CVE.

That is not a clerical error so much as a reflection of the modern Microsoft estate. Azure runs Linux. Microsoft ships Linux-based components. Customers run Linux workloads on Hyper-V, in Azure, on Kubernetes, and inside increasingly mixed management stacks. A vulnerability can be operationally relevant to WindowsForum readers even when the vulnerable code lives nowhere near ntoskrnl.exe.

The result is a small but important mental shift. Security teams cannot sort their intake by brand anymore and assume “Microsoft” equals Windows, or “Linux” equals someone else’s problem. The CVE pipeline now mirrors the infrastructure reality: Windows administrators are often responsible for Linux exposure, whether or not their job title says so.

CVE-2026-43456 therefore deserves attention not because every desktop is at risk, but because it sits at the intersection of kernel networking, virtualization, cloud tenancy, and the increasingly blurry boundary between Microsoft and Linux operations.

The Failure Was a Shortcut That Worked Until It Didn’t

At the center of the bug is the Linux bonding driver’s treatment of header_ops, the structure of callbacks a network device uses to build and interpret link-layer headers. In the vulnerable path, bond_setup_by_slave() copied the slave device’s header operations directly into the bond device. In plain English, the bond borrowed another device’s methods without also becoming that device.

That shortcut is fragile because some of those callbacks assume that the net_device passed to them owns a particular kind of private data. A GRE tunnel’s header function, for example, expects netdev_priv() to yield tunnel-specific state. When the same function is invoked on the bond device, netdev_priv() instead returns the bond’s private struct bonding.

That is classic type confusion: the code reads one kind of object as if it were another. The crash trace published with the CVE shows the failure surfacing in pskb_expand_head() after a call path through ipgre_header(), dev_hard_header(), and packet socket send logic. The immediate symptom is a kernel BUG and an invalid opcode oops; the deeper issue is that the network stack has been tricked into using the wrong object layout.

The fix changes the direction of delegation. Rather than letting the bond pretend to own the slave’s callback table, the bonding driver introduces wrapper functions that call the active slave’s header_ops with the slave’s own net_device. That distinction matters. The callback still does the device-specific work, but it receives the object it was written to understand.

This is the kind of kernel bug that looks painfully obvious in hindsight. But many durable bugs live in exactly this zone: an old convenience, a special-case subsystem, and an assumption that remains true until someone composes the pieces in an unusual order.
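The shortcut and the fix described above can be modeled in a few lines of Python using ctypes, which lets one memory buffer be reinterpreted as a different structure, much as netdev_priv() hands back a raw private area. This is a toy sketch, not kernel code: TunnelPriv, BondPriv, NetDevice, gre_header_create(), and bond_header_create() are hypothetical stand-ins, and their field layouts are invented purely for illustration.

```python
import ctypes

# Hypothetical private-data layouts. They are NOT the kernel's struct ip_tunnel
# or struct bonding; they only need to differ for the confusion to show.
class TunnelPriv(ctypes.Structure):
    _fields_ = [("hlen", ctypes.c_uint32),        # header length the tunnel code relies on
                ("o_flags", ctypes.c_uint32)]

class BondPriv(ctypes.Structure):
    _fields_ = [("mode", ctypes.c_uint32),        # toy value: 1 for active-backup
                ("slave_count", ctypes.c_uint32)]

class NetDevice:
    """Toy net_device: a name, a header-callback table, and an opaque private area."""
    def __init__(self, name, priv, header_ops=None):
        self.name = name
        self.priv = priv
        self.header_ops = header_ops
        self.active_slave = None  # only used by the toy bond

def netdev_priv(dev, layout):
    # Like the kernel helper, this trusts the caller: it reinterprets the private
    # bytes as `layout` without checking which driver actually owns them.
    return ctypes.cast(ctypes.pointer(dev.priv), ctypes.POINTER(layout)).contents

def gre_header_create(dev):
    # Written for tunnel devices only: assumes dev's private area is a TunnelPriv.
    return netdev_priv(dev, TunnelPriv).hlen

GRE_HEADER_OPS = {"create": gre_header_create}

def bond_header_create(bond):
    # Fixed-style wrapper: the bond keeps its own callback and calls the active
    # slave's callback with the slave's own device.
    slave = bond.active_slave
    return slave.header_ops["create"](slave)

BOND_HEADER_OPS = {"create": bond_header_create}

gre = NetDevice("gre1", TunnelPriv(hlen=24, o_flags=0), GRE_HEADER_OPS)
bond = NetDevice("bond0", BondPriv(mode=1, slave_count=1))
bond.active_slave = gre

# Vulnerable pattern: copy the slave's callback table onto the bond.
bond.header_ops = gre.header_ops
confused = bond.header_ops["create"](bond)   # reads BondPriv.mode as TunnelPriv.hlen

# Fixed pattern: the bond owns its callbacks and delegates with the right device.
bond.header_ops = BOND_HEADER_OPS
delegated = bond.header_ops["create"](bond)

print(confused, delegated)  # -> 1 24
```

In Python the wrong read merely returns a nonsense header length. In the kernel, the same kind of misread hands bond state to code that manipulates packet buffers, which is where the published trace shows the crash.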

The Attack Surface Is Narrow, but the Blast Radius Is Kernel-Sized

The published CVSS 3.1 score from the CNA is 7.8, high severity, with a local attack vector, low attack complexity, low privileges required, no user interaction, and high impact across confidentiality, integrity, and availability. That combination can sound contradictory at first blush. If it is local and requires privileges, why is it high?

The answer is that kernel context changes the arithmetic. Local bugs that cross from a constrained user or namespace into kernel memory corruption are not mere nuisance crashes. They can become privilege-escalation building blocks, denial-of-service triggers, or exploit primitives when combined with other weaknesses.
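The arithmetic behind the 7.8 is easy to check. The sketch below implements the CVSS v3.1 base-score formula for the Scope: Unchanged case only, using the metric weights from the FIRST specification. The article does not state the scope, so S:U is an assumption here, chosen because it is the scope that reproduces the published score. Most of the result comes from the impact term (about 5.87) rather than from exploitability (about 1.83), which is why a local bug with full C/I/A impact still lands in the high band.

```python
import math

# CVSS v3.1 metric weights for the Scope: Unchanged case.
AV = {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2}
AC = {"L": 0.77, "H": 0.44}
PR_UNCHANGED = {"N": 0.85, "L": 0.62, "H": 0.27}
UI = {"N": 0.85, "R": 0.62}
CIA = {"H": 0.56, "L": 0.22, "N": 0.0}

def roundup(x):
    # CVSS v3.1 Roundup: smallest one-decimal value >= x, computed on integers
    # to avoid floating-point artifacts (spec Appendix A).
    i = round(x * 100000)
    if i % 10000 == 0:
        return i / 100000.0
    return (math.floor(i / 10000) + 1) / 10.0

def base_score_scope_unchanged(av, ac, pr, ui, c, i, a):
    iss = 1 - (1 - CIA[c]) * (1 - CIA[i]) * (1 - CIA[a])    # impact sub-score
    impact = 6.42 * iss
    exploitability = 8.22 * AV[av] * AC[ac] * PR_UNCHANGED[pr] * UI[ui]
    if impact <= 0:
        return 0.0
    return roundup(min(impact + exploitability, 10))

# AV:L/AC:L/PR:L/UI:N/C:H/I:H/A:H, with S:U assumed
score = base_score_scope_unchanged("L", "L", "L", "N", "H", "H", "H")
print(score)  # -> 7.8
```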

The CVE text describes a reproducible crash involving GRE and bonding. It does not, by itself, prove a polished public exploit that hands out root shells on commodity distributions. But it also does not allow administrators to shrug off the issue as a lab curiosity. The kernel.org CNA rating explicitly models high confidentiality, integrity, and availability impact, and the patch discussion refers to memory corruption, not just an orderly refusal.

The important practical qualifier is access. The scenario requires the ability to create and manipulate network devices — adding a dummy interface, creating a GRE tunnel, creating a bond, enslaving the tunnel, bringing devices up, and sending traffic through the resulting path. On a normal locked-down Linux host, that is not available to an unprivileged shell user.

But “normal locked-down Linux host” is doing a lot of work. Containers, network namespaces, lab appliances, CI systems, developer VMs, and cloud images frequently grant broader networking capability than administrators remember. If a service account or container workload has CAP_NET_ADMIN, the line between “local privileged” and “practically reachable” becomes much thinner.

Bonding Is Boring Infrastructure, Which Is Why It Matters

Linux bonding is not glamorous. It is the machinery behind link aggregation, failover, and active-backup network designs. It lets operators treat multiple physical or virtual links as one logical interface. That makes it attractive in servers, virtualization hosts, storage networks, lab routers, and appliances that are expected to keep talking even when a NIC or path fails.

Most administrators encounter bonding with Ethernet devices. CVE-2026-43456 becomes interesting when the slave is not an ordinary Ethernet NIC, but a device such as a GRE tunnel. GRE is a tunneling mechanism, and tunnel devices often carry private state that ordinary Ethernet code does not. That is the mismatch the vulnerable bonding path mishandled.
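Finding that combination, a bond whose slave is not an Ethernet device, can be done by reading sysfs. This is a minimal sketch assuming the usual layout (/sys/class/net/bonding_masters, /sys/class/net/&lt;bond&gt;/bonding/slaves, and a numeric ARPHRD_* link type in /sys/class/net/&lt;dev&gt;/type); the function names are hypothetical. A hit is a configuration to review, not proof of exposure, and legitimate non-Ethernet bonds such as InfiniBand will also show up.

```python
import os

ARPHRD_ETHER = 1  # link-layer type numbers from include/uapi/linux/if_arp.h
ARPHRD_NAMES = {1: "ether", 32: "infiniband", 768: "ipip", 769: "ip6tnl",
                776: "sit", 778: "ipgre", 823: "ip6gre", 65534: "none"}

def read_sysfs(path):
    """Return a sysfs attribute's contents, or "" if it is missing or unreadable."""
    try:
        with open(path) as f:
            return f.read().strip()
    except OSError:
        return ""

def bonds_with_non_ethernet_slaves(sysfs="/sys/class/net"):
    """Map each bond to [(slave, arphrd_type)] for slaves whose link type is not Ethernet."""
    findings = {}
    for bond in read_sysfs(os.path.join(sysfs, "bonding_masters")).split():
        for slave in read_sysfs(os.path.join(sysfs, bond, "bonding", "slaves")).split():
            link_type = read_sysfs(os.path.join(sysfs, slave, "type"))
            if link_type.isdigit() and int(link_type) != ARPHRD_ETHER:
                findings.setdefault(bond, []).append((slave, int(link_type)))
    return findings

if __name__ == "__main__":
    # On hosts without the bonding driver loaded this prints nothing.
    for bond, slaves in bonds_with_non_ethernet_slaves().items():
        for slave, link_type in slaves:
            print(f"{bond}: slave {slave} has link type {ARPHRD_NAMES.get(link_type, link_type)}")
```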

The reproduction sequence in the CVE is revealing. It creates a dummy interface, assigns it an address, creates a GRE device, creates a bond in active-backup mode, enslaves the GRE interface, and then assigns an IPv6 link-local address to the bond. This is not a typical office laptop configuration. It is, however, entirely plausible in advanced networking labs, overlay designs, test automation, and custom appliances.

That is why the bug should not be dismissed as exotic. Enterprise infrastructure is full of “not typical” combinations that become normal inside one team’s environment. The Linux network stack is prized precisely because it allows those combinations. Every extra composable layer also gives old assumptions more room to fail.

Microsoft’s Presence Changes the Audience, Not the Code

The user-facing source here is MSRC, but the vulnerable component is the Linux kernel. That distinction matters because it determines who patches what. Windows Update will not magically update every Linux kernel in a fleet just because the CVE appears in Microsoft’s ecosystem. Administrators need to trace the affected kernel through the distribution, appliance vendor, cloud image, container host, or managed service that actually ships it.

For Windows-heavy organizations, that is where confusion often creeps in. A Microsoft security listing may trigger ticket creation in a Windows patching queue. The ticket may then bounce between endpoint, server, cloud, and Linux teams because nobody owns the exact component. Meanwhile, the vulnerable systems are not the Windows clients but the Linux hosts, guests, or network appliances that quietly support the environment.

That does not make MSRC’s inclusion unhelpful. Quite the opposite: it is a useful flare for hybrid shops. If your Microsoft estate includes Azure Linux workloads, Kubernetes nodes, Linux VMs on Hyper-V, WSL images used for development, or third-party network appliances integrated into Microsoft-managed infrastructure, this is the sort of CVE that can otherwise fall between organizational cracks.

The correct response is not to ask whether this is a “Microsoft bug” or a “Linux bug.” It is to ask whether any system you are responsible for runs an affected Linux kernel with bonding and non-Ethernet network devices available to local users or workloads.

The Patch Is Conservative in the Way Kernel Fixes Need to Be

The upstream repair is conceptually simple: keep bonding in charge of bonding state, but delegate header creation to the real slave device. The patch adds bond-specific header operations and stores the relevant slave device so callbacks can be invoked with the proper net_device. It also handles release paths to avoid leaving dangling pointers when the slave that supplied header behavior is removed.

That is a good fix because it addresses the design mistake at the bonding layer. Patching GRE, IP6GRE, or every individual non-Ethernet driver would treat the symptoms as separate bugs. The root problem was that bonding blindly inherited callback pointers from devices whose callbacks were not written to be called against a bond’s private data.

The patch history also matters. The fix appeared in the Linux networking development flow before the CVE record was published, and stable review carried it into maintained kernel lines. That is normal for Linux: fixes often land as ordinary commits, then later acquire CVE identifiers as downstream security programs map patches to advisories.

Administrators should therefore resist the temptation to search only for the CVE number in package changelogs. Distribution backports may mention the upstream commit title rather than the CVE, especially when the patch was queued before the identifier became visible. The strings that matter are “bonding,” “type confusion,” bond_setup_by_slave(), and header_ops.

This is one reason kernel patch management remains more subtle than application patching. The version number alone may not tell the whole story, because enterprise distributions routinely backport security fixes without rebasing to the newest upstream kernel. A nominally older kernel may be fixed; a seemingly newer custom kernel may not be.
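A changelog search for those strings can be scripted. The sketch below assumes you have already obtained the kernel package changelog as a file, for example by saving the output of rpm -q --changelog on RPM-based systems, or from the compressed changelog under /usr/share/doc on Debian-family systems (exact paths vary by distribution). read_changelog() and matching_lines() are hypothetical helpers, and a match is only a lead to confirm against the vendor advisory; a bare header_ops hit in particular can be unrelated.

```python
import gzip

# Strings suggested by the article; a hit is a lead to confirm, not proof of a fix.
MARKERS = ("cve-2026-43456", "bond_setup_by_slave", "header_ops")

def read_changelog(path):
    """Read a plain or gzip-compressed changelog as text."""
    opener = gzip.open if path.endswith(".gz") else open
    with opener(path, "rt", errors="replace") as f:
        return f.read()

def matching_lines(changelog_text):
    """Return lines naming a marker, or mentioning both bonding and type confusion."""
    hits = []
    for line in changelog_text.splitlines():
        lower = line.lower()
        if any(m in lower for m in MARKERS) or ("bonding" in lower and "type confusion" in lower):
            hits.append(line.strip())
    return hits
```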

The Container Angle Is Where Administrators Should Slow Down

The official vector says local. In 2026, local does not always mean a person with SSH on a bare-metal server. It may mean a pod with elevated capabilities, a CI runner allowed to create network namespaces, a developer container launched with broad privileges, or a network function running in a container because that was easier than packaging it as a VM.

CAP_NET_ADMIN is the capability to watch. It is widely understood as dangerous, but it is still granted more often than security teams would like because so many legitimate tools want to configure interfaces, routes, tunnels, iptables, nftables, or traffic control. Once a workload can create GRE devices and manipulate bonding, a bug like this becomes reachable from places that are not supposed to be equivalent to host root.

That does not mean every Kubernetes cluster is exposed. Most ordinary pods cannot create host-level network devices, and many managed clusters enforce tighter defaults. But clusters that host network plugins, service meshes, packet-processing tools, VPN agents, or homegrown network automation deserve a closer look.
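For clusters, a first pass can work from the JSON that kubectl get pods -A -o json already produces. This sketch flags containers that run privileged or add NET_ADMIN through securityContext.capabilities.add, and records whether the pod uses hostNetwork. The helper names are hypothetical, and the scan cannot see capabilities granted by other routes, such as runtime defaults or admission-time mutation.

```python
import json

def risky_containers(pod_list):
    """Return (namespace, pod, container, reason, host_network) tuples for containers
    that run privileged or add NET_ADMIN, given parsed `kubectl get pods -A -o json`."""
    findings = []
    for pod in pod_list.get("items", []):
        meta = pod.get("metadata", {})
        spec = pod.get("spec", {})
        host_network = bool(spec.get("hostNetwork", False))
        for c in spec.get("initContainers", []) + spec.get("containers", []):
            sc = c.get("securityContext") or {}
            added = (sc.get("capabilities") or {}).get("add") or []
            # Accept both "NET_ADMIN" and "CAP_NET_ADMIN" spellings.
            adds_net_admin = any(cap.upper().removeprefix("CAP_") == "NET_ADMIN" for cap in added)
            if sc.get("privileged"):
                reason = "privileged"
            elif adds_net_admin:
                reason = "adds NET_ADMIN"
            else:
                continue
            findings.append((meta.get("namespace"), meta.get("name"), c.get("name"),
                             reason, host_network))
    return findings

def risky_containers_from_file(path):
    """Load a saved `kubectl get pods -A -o json` document and scan it."""
    with open(path) as f:
        return risky_containers(json.load(f))
```

Save the cluster state with kubectl get pods -A -o json to a file and pass its path to risky_containers_from_file(). Pods that combine hostNetwork with NET_ADMIN deserve the closest look, because their interface changes apply to the node’s own network namespace.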

This is also a good moment to revisit privileged build and test infrastructure. Security conversations often focus on production clusters, while CI workers sit in a softer zone with more permissions, more experimental kernels, and less monitoring. A reproducible kernel crash may be enough to disrupt a build farm; a memory-corruption primitive may be more serious if chained with other flaws.

For Windows administrators, the analogous lesson is familiar from years of service hardening: do not grant broad system privileges just because it makes deployment easier. On Linux, network administration capabilities are powerful enough to turn a “local” kernel bug into a realistic operational risk.

The Exploit Story Is Still Mostly About Conditions

A sober reading of CVE-2026-43456 separates what is known from what is implied. Known: the bonding driver could call non-Ethernet slave header callbacks with the wrong device private data. Known: the published trace shows a kernel BUG in a path involving GRE and packet sending. Known: the CNA scored the vulnerability as high severity with low attack complexity and high impact.

Less certain is the availability of a reliable, weaponized exploit across major distributions. Kernel memory corruption bugs often vary by configuration, allocator behavior, hardening options, architecture, and timing. KASAN traces prove a bug; they do not automatically prove a turnkey exploit. Conversely, dismissing memory corruption because the first visible symptom is a crash is a mistake seasoned defenders should have outgrown.

This is why the right operational posture is boring: patch, reduce privileges, and inventory the reachable configurations. If a system does not use bonding or GRE-like devices, the practical exposure is lower. If it runs multi-tenant workloads with broad network capabilities, the urgency rises.

There is also a denial-of-service dimension that does not require cinematic exploitation. A kernel BUG can take down a host, depending on panic settings and workload impact. On virtualization hosts, routers, storage gateways, or cluster nodes, that is enough to matter. Availability is not a consolation prize when the affected machine is part of the fabric other systems depend on.
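Whether an oops like the one in the published trace takes the whole host down depends on two sysctls. The sketch below reads kernel.panic_on_oops and kernel.panic from /proc/sys/kernel and describes the outcome using their documented semantics: panic_on_oops=1 turns an oops into a panic, while a positive kernel.panic reboots after that many seconds, a negative value reboots immediately, and 0 leaves the panicked machine hanging. The function names are hypothetical.

```python
def read_int(path):
    """Read an integer sysctl from /proc/sys, or None if unavailable."""
    try:
        with open(path) as f:
            return int(f.read().strip())
    except (OSError, ValueError):
        return None

def describe_oops_policy(panic_on_oops, panic_timeout):
    """Summarize what an oops (such as one raised by BUG()) does to this host."""
    if panic_on_oops is None:
        return "unknown: kernel.panic_on_oops could not be read"
    if panic_on_oops == 0:
        return "oops is logged and the kernel tries to keep running, possibly degraded"
    if panic_timeout is None:
        return "oops panics the host; reboot behavior unknown"
    if panic_timeout > 0:
        return f"oops panics the host, which reboots after {panic_timeout}s"
    if panic_timeout < 0:
        return "oops panics the host, which reboots immediately"
    return "oops panics the host, which hangs until it is reset"

if __name__ == "__main__":
    print(describe_oops_policy(read_int("/proc/sys/kernel/panic_on_oops"),
                               read_int("/proc/sys/kernel/panic")))
```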

The CVE’s high score should therefore be read as a prompt for prioritization, not an invitation to panic. It is a kernel networking flaw with plausible privilege and availability consequences under specific local conditions. That is exactly the category where mature patch programs should move quickly without pretending every endpoint is on fire.

The WindowsForum Angle Is Hybrid Risk Management

For this audience, the most important question is not whether Windows 11 is vulnerable. It is whether your Microsoft-centered environment has Linux kernels in places your Windows patch process does not see. That includes Azure VMs, Linux containers on Windows-hosted developer machines, Hyper-V guests, WSL distributions used for tooling, security appliances, network virtual appliances, and Kubernetes nodes.

WSL deserves a careful mention. The published trigger involves creating and enslaving network devices in a way that is not representative of ordinary WSL use. WSL users should still keep their platform current, but the more realistic exposure is on full Linux systems or containers with meaningful network administration rights. Treat WSL as part of hygiene, not as the main battlefield.

Azure and hybrid operations are a different story. If your estate includes Linux images maintained by distribution vendors, managed Kubernetes node pools, or marketplace appliances, the patch path may run through several parties. Microsoft may surface the advisory, but Ubuntu, Debian, Red Hat, SUSE, Oracle, appliance vendors, or custom image owners may supply the actual kernel update.

This is where asset classification beats CVE theater. A ticket that says “CVE-2026-43456: patch all systems” is less useful than one that asks for Linux hosts with bonding enabled, GRE or other tunnel devices available, and local workloads that can manipulate networking. The former creates noise. The latter finds risk.
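The narrower ticket can be expressed as a small triage rule. This is a hypothetical sketch: the HostFacts fields mirror the conditions named above, but how each fact is gathered (vendor advisories, sysfs checks, capability audits), the host names, and the priority labels are all illustrative assumptions to adapt to your own process.

```python
from dataclasses import dataclass

@dataclass
class HostFacts:
    name: str
    fix_confirmed: bool        # vendor advisory or changelog confirms the bonding fix
    bonding_available: bool    # bonding driver built in or loadable
    tunnels_available: bool    # GRE, IP6GRE, or similar tunnel drivers available
    net_admin_workloads: bool  # workloads other than host root hold CAP_NET_ADMIN
    multi_tenant: bool         # untrusted or shared workloads run on the host

RANK = {"urgent": 0, "high": 1, "routine": 2, "patched": 3}

def triage(h: HostFacts) -> str:
    """Hypothetical priority rule for CVE-2026-43456 tickets."""
    if h.fix_confirmed:
        return "patched"
    # The published trigger needs bonding, a tunnel device, and the ability
    # to create and configure network devices.
    reachable = h.bonding_available and h.tunnels_available and h.net_admin_workloads
    if reachable and h.multi_tenant:
        return "urgent"
    if reachable:
        return "high"
    return "routine"  # still patch, on the normal kernel cadence

hosts = [  # illustrative inventory
    HostFacts("file-server-02", False, True, False, False, False),
    HostFacts("ci-runner-07", False, True, True, True, True),
    HostFacts("k8s-node-3", True, True, True, True, True),
]
queue = sorted(hosts, key=lambda h: RANK[triage(h)])
for h in queue:
    print(f"{triage(h):8} {h.name}")
```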

The lesson is not new, but the setting is. Windows administrators have spent years learning that firmware, drivers, browsers, runtimes, and cloud control planes all matter. Linux kernel components are now part of that same operational field, even in organizations that still think of themselves as primarily Windows shops.

Kernel Composability Keeps Producing Security Debt

CVE-2026-43456 is a small case study in a larger kernel design tension. Linux networking is powerful because devices, tunnels, bonds, teams, namespaces, packet sockets, and virtual interfaces can be composed in flexible ways. That flexibility is essential for modern infrastructure. It is also a way to discover assumptions that were never designed for every possible composition.

The vulnerable behavior came from treating header_ops as portable when some callbacks were implicitly tied to a specific private data layout. That is not just a coding error; it is an interface contract problem. If an API accepts a net_device pointer but the function behind it assumes a more specific object, the type system cannot protect you.

C is unforgiving here. The kernel’s private data pattern is fast and idiomatic, but it relies on developers preserving relationships that the compiler cannot fully enforce. When a subsystem hands a callback the wrong object, the resulting bug can sit dormant until a fuzzer, researcher, or unusual deployment pattern puts the wrong pieces together.

The stable-kernel process is the counterweight. Bugs are found, patches are reviewed, fixes are backported, and CVEs are assigned. That machinery works, but it does not erase the administrative burden. Operators still need to know which kernels they run, which fixes were backported, and which capabilities they expose to workloads.

This is why kernel security should not be reduced to version worship. The important question is not simply “Are we on the newest kernel?” It is “Do our maintained kernels include the relevant fix, and have we limited the local conditions needed to abuse the bug?”

The Useful Response Is Inventory, Not Alarm

A practical response to CVE-2026-43456 starts with narrowing the affected population. Linux hosts that cannot create bonds or tunnel devices are not equivalent to network-heavy systems that can. Servers that grant no untrusted local access are not equivalent to shared compute nodes or privileged container hosts. Kernel patching still matters, but prioritization should follow exposure.

Administrators should also examine capability boundaries. If containers or services have CAP_NET_ADMIN, ask why. If CI runners can create arbitrary network devices, ask whether that is required for every job. If developers use privileged containers by default, this CVE is another data point in a long argument for tighter defaults.

For distribution-managed systems, watch vendor advisories and changelogs rather than relying only on upstream version numbers. Backports can carry the fix into older enterprise kernels, and custom kernels can miss it even if they appear modern. Appliance vendors should be pressed for explicit confirmation if the device uses Linux bonding or tunnel interfaces internally.
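On an individual host, one way to answer “which processes hold CAP_NET_ADMIN?” is to read the hexadecimal CapEff mask from /proc/&lt;pid&gt;/status and test bit 12, the CAP_NET_ADMIN bit. This is a minimal sketch; has_cap() and processes_with_cap_net_admin() are hypothetical helpers, and they report effective capabilities only.

```python
import os

CAP_NET_ADMIN = 12  # capability bit number from include/uapi/linux/capability.h

def has_cap(status_text, bit=CAP_NET_ADMIN, field="CapEff"):
    """Test one capability bit in a /proc/<pid>/status document (CapEff by default)."""
    for line in status_text.splitlines():
        if line.startswith(field + ":"):
            mask = int(line.split(":", 1)[1].strip(), 16)
            return bool((mask >> bit) & 1)
    return False

def processes_with_cap_net_admin(proc="/proc"):
    """List (pid, name) for processes whose effective set includes CAP_NET_ADMIN."""
    try:
        pids = [p for p in os.listdir(proc) if p.isdigit()]
    except OSError:
        return []  # no procfs here (not Linux, or access restricted)
    found = []
    for pid in pids:
        try:
            with open(os.path.join(proc, pid, "status")) as f:
                text = f.read()
        except OSError:
            continue  # process exited or its status is unreadable
        if has_cap(text):
            name = next((l.split(":", 1)[1].strip() for l in text.splitlines()
                         if l.startswith("Name:")), "?")
            found.append((int(pid), name))
    return sorted(found)
```

Root-owned daemons will appear, because root normally holds every capability. The entries worth questioning are service accounts and container processes that are not supposed to be host administrators.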

Finally, test updates with the seriousness kernel patches deserve. Networking fixes can affect failover, tunnels, and virtual interface behavior. That is not a reason to delay indefinitely; it is a reason to validate on representative systems before rolling into environments where bonded links are part of the availability story.
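One way to make that validation repeatable is to capture /proc/net/bonding/&lt;bond&gt; before and after the update and compare. The parser below is a sketch that assumes the file’s usual “Key: value” layout and an active-backup bond; failover_ready() is a hypothetical check, not a bonding-driver API, and a real test plan should still pull a link and watch traffic move.

```python
def parse_bonding_status(text):
    """Split /proc/net/bonding/<bond> into bond-level fields and per-slave fields."""
    bond, slaves, current = {}, {}, None
    for line in text.splitlines():
        if ":" not in line:
            continue
        key, value = (part.strip() for part in line.split(":", 1))
        if key == "Slave Interface":
            current = slaves.setdefault(value, {})
        elif current is None:
            bond.setdefault(key, value)     # fields before the first slave block
        else:
            current.setdefault(key, value)  # fields inside the current slave block
    return bond, slaves

def failover_ready(text):
    """Active-backup sanity check: the active slave is up and a second slave is up too."""
    bond, slaves = parse_bonding_status(text)
    up = [name for name, info in slaves.items() if info.get("MII Status") == "up"]
    return bond.get("Currently Active Slave") in up and len(up) >= 2

def read_bond(name):
    """Read the bonding driver's status file for one bond, e.g. read_bond("bond0")."""
    with open(f"/proc/net/bonding/{name}") as f:
        return f.read()
```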

The Bonding Bug’s Real Checklist for Mixed Windows and Linux Shops

The operational value of this CVE is that it points to a specific set of conditions rather than a vague sense of danger. Treat it as a targeted hunt across hybrid infrastructure, not as a generic Patch Tuesday chore.

- Systems running Linux kernels with bonding enabled should be checked for vendor fixes that mention the bonding header_ops type-confusion issue or bond_setup_by_slave().
- Hosts or containers that can create GRE, IP6GRE, or similar non-Ethernet devices and attach them to bonds deserve higher priority than ordinary single-interface servers.
- Workloads granted CAP_NET_ADMIN should be reviewed, especially in CI, Kubernetes, network-function, VPN, and packet-processing environments.
- Windows-centric teams should verify whether Azure, Hyper-V, WSL, appliance, and Kubernetes responsibilities include Linux kernels outside the normal Windows Update workflow.
- Kernel update validation should include networking failover and tunnel behavior, because the fixed code sits directly in the path that makes bonded interfaces behave like their slaves.
