NVIDIA’s DLSS 5 reveal landed like a meteor: audacious, technically ambitious, and—judging by the reaction online—deeply, visibly divisive. The company’s cinematic trailer and GDC/GTC presentations framed DLSS 5 as a generational leap: neural rendering that fuses traditional 3D scene data with generative AI to produce real‑time, photoreal lighting and surface detail. But within hours the conversation turned toxic on social platforms; YouTube comment sections and community threads erupted with words like “uncanny,” “hallucination,” and “ruined the art direction.” Even respected technical outlets that were given hands‑on access raised serious questions about what DLSS 5 actually changes and whether the trade-offs are acceptable.

This feature unpacks the announcement, the technical claims, the early hands‑on reports, and the fallout. It weighs the genuine engineering advances against the practical and cultural risks, and it offers concrete guidance for developers, artists, and players who will be living with DLSS 5 as it matures.

Background / Overview

NVIDIA pitched DLSS 5 as more than another upscaling or frame‑generation iteration. According to company messaging and the keynote excerpts shown in the reveal, DLSS 5 is a form of neural rendering: an AI model that ingests structured 3D data (color buffers, motion vectors, depth, material/semantic masks) and generates enhanced lighting, surface response, and apparent material properties in real time. Jensen Huang framed it as a “GPT moment for graphics,” arguing the technique blends handcrafted rendering with generative AI while preserving artist control.

Two elements matter immediately for context:

- DLSS historically began as an image upscaler that used neural networks to recreate higher‑resolution frames from lower‑resolution renders. DLSS 2 and later versions improved quality and broadened adoption. DLSS 5 represents a conceptual shift from pure upscaling toward AI‑generated lighting and material inference.

- Early hands‑on coverage from technical outlets and extended demos indicates the technology can produce dramatically different visual outcomes for the same underlying scene: changes that many users interpret as improvements to environment lighting but that sometimes alter the look of characters and mood in ways the original art team did not intend.

What NVIDIA Claims DLSS 5 Does

The pitch (in plain terms)

NVIDIA’s messaging around DLSS 5 boils down to three core claims:

- Neural rendering that augments lighting and materials — DLSS 5 uses an AI model trained to understand scene semantics (characters, hair, cloth, skin subsurface scattering) and infuse frames with photoreal lighting consistent across motion and camera angles.

- Anchored to source 3D content — The company emphasizes that the outputs are anchored to existing game data (geometry, motion vectors, color, depth), not arbitrary image generation that replaces game assets.

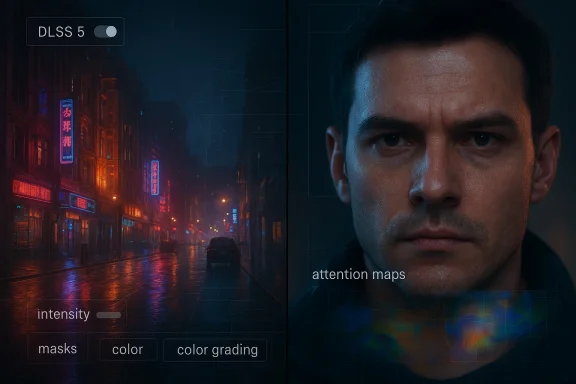

- Developer control — Artists and studios are given controls (intensity, masks, color grading) so the feature can be tuned to preserve a game’s aesthetic.

How it’s described to work technically

According to NVIDIA’s public statements and the hands‑on descriptions reported by specialist outlets:

- DLSS 5 consumes per‑frame structured outputs from the engine (color buffers, motion vectors, depth, and masks), then executes a neural model that predicts photoreal lighting and material response.

- The system is designed to be consistent frame‑to‑frame (temporal coherency is a core requirement for interactive use).

- Integration is intended through existing NVIDIA developer frameworks (Streamline / DLSS SDK paths), and the studio controls are exposed so developers can decide where and how the AI‑driven enhancements are applied.
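One way to picture the inputs and studio controls described above is a mask‑weighted blend between the engine's base color and the model's output. The sketch below is purely illustrative: `FrameInputs`, `apply_enhancement`, and the `intensity` parameter are hypothetical names, not part of the DLSS SDK or Streamline, and the `enhanced` image is a stand‑in for whatever the neural model would actually produce.

```python
from dataclasses import dataclass
from typing import List, Tuple

Pixel = Tuple[float, float, float]  # linear RGB in [0, 1]


@dataclass
class FrameInputs:
    """Hypothetical per-frame bundle an engine might hand to a neural renderer."""
    color: List[Pixel]                         # base render, one entry per pixel
    depth: List[float]                         # per-pixel depth
    motion_vectors: List[Tuple[float, float]]  # per-pixel screen-space motion
    mask: List[float]                          # artist mask: 0 = keep base, 1 = allow full effect


def apply_enhancement(frame: FrameInputs, enhanced: List[Pixel], intensity: float) -> List[Pixel]:
    """Blend the (assumed) model output over the base color, limited by mask and intensity."""
    if not 0.0 <= intensity <= 1.0:
        raise ValueError("intensity must be in [0, 1]")
    if not (len(enhanced) == len(frame.color) == len(frame.mask)):
        raise ValueError("color, mask, and enhanced output must have the same length")
    out = []
    for base, new, m in zip(frame.color, enhanced, frame.mask):
        w = intensity * m  # effective blend weight for this pixel
        out.append(tuple(b + w * (n - b) for b, n in zip(base, new)))
    return out


# Two-pixel example: the first pixel is masked out (say, a face), the second is not.
frame = FrameInputs(
    color=[(0.25, 0.25, 0.25), (0.25, 0.25, 0.25)],
    depth=[1.0, 5.0],
    motion_vectors=[(0.0, 0.0), (0.0, 0.0)],
    mask=[0.0, 1.0],
)
result = apply_enhancement(
    frame,
    enhanced=[(0.75, 0.75, 0.75), (0.75, 0.75, 0.75)],
    intensity=0.5,
)
```

In this toy case the masked pixel keeps its base color and the unmasked pixel moves halfway toward the enhanced value, which is the kind of per‑region control the studio tooling is said to expose.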

The Hands‑On Reality: What Reviewers and Technical Analysts Saw

Early hands‑on coverage from deep‑tech outlets and extended demo sessions highlighted two things in particular that became focal points for reaction.

1) Dramatic environmental improvements — and strange character results

In many environment shots (especially nighttime cityscapes and reflective surfaces), DLSS 5 produced noticeably richer lighting: more convincing diffuse and indirect light, added sheen on wet surfaces, and more convincing specular highlights. Those changes read as genuine quality wins in a number of examples shown in the trailer and demos.

But on faces and character models, the effect was uneven. In several high‑profile comparisons, characters that looked familiar in the base render appeared different when DLSS 5 was engaged: details in pore structure, wrinkle depth, lip shape, and facial shading changed in ways that made the characters read as different people — sometimes more photoreal, sometimes simply off. For many viewers that change crossed the uncanny valley and felt like an Instagram filter applied to a 3D model.

2) The engine assets are reportedly unchanged — it’s about lighting and shading

Technical analysis reported by hands‑on outlets made a crucial clarification: the underlying geometry and textures in the source game were not being rewritten or replaced by DLSS 5. Instead, the neural rendering layer changes the presentation — how the game’s surfaces interact with light. Because the model emphasizes new lighting and material responses, shortcomings in low‑fidelity character models can become more obvious, resulting in visual outcomes that some deem worse than the original.

That observation is important: DLSS 5 is not a geometry or content‑generation system that inserts new meshes. It manipulates shading and compositing to produce richer-looking output, but the fidelity of the underlying models remains a central limiting factor.

Community Reaction: Why YouTube and Social Platforms Roasted the Reveal

Within hours of the trailer and the technical walkthroughs being published, YouTube comments and social threads turned hostile. The criticisms have several recurring themes:

- Uncanny valley and “AI‑beautified” faces — Many viewers felt characters looked overly smoothed, homogenized, or “feminized” in a stylized way that breaks immersion.

- Art direction erosion — Players and creators argued the filter‑like changes overwrite developers’ artistic intent, making games look like a different product than the one designed by the studio.

- Hallucination fears — Because generative models can invent plausible detail, audiences worry DLSS 5 might fabricate features inconsistent with the game world.

- Trust and transparency — Gamers want clarity on how the system was trained and what datasets influenced aesthetics, and many are skeptical of a proprietary black box that changes visuals at runtime.

- Hardware and accessibility concerns — Early demos ran on top‑end GPUs, in some cases on multiple cards, which fuels the sense that this will remain an exclusive feature for a limited audience.

Strengths: What DLSS 5 Can Deliver — When It Works

DLSS 5 is not a trivial or purely cosmetic step. When applied thoughtfully and in appropriate contexts, it offers meaningful benefits:

- Real‑time photoreal lighting at interactive framerates — If the model performs as promised, it can dramatically enhance environment lighting without the GPU cost of full global illumination and path tracing.

- Tooling for legacy remasters — DLSS 5 could be transformative for remasters: older games with low‑quality lighting and textures could gain a more modern visual treatment without reauthoring every asset.

- Artist controls and masks — The inclusion of per‑region masks, intensity sliders, and color grading controls means developers are not forced to accept a one‑size‑fits‑all output; studios can fine‑tune the model’s output to fit aesthetic goals.

- Potential performance advantages vs. brute‑force rendering — Compared to real‑time path tracing, a trained neural renderer could deliver similar perceptual gains at lower computational cost — which is economically attractive for triple‑A pipelines.

- A new avenue for stylistic enhancement — Beyond photorealism, the same paradigm could be adapted for stylistic enhancements (filmic tones, painterly filters) that are artist‑driven and reversible.

Risks and Failure Modes: Why This Is Not a Drop‑in Replacement

The concerns voiced by players and analysts are not mere knee‑jerk resistance to novelty. They point to practical, technical, and cultural risks that developers and NVIDIA need to manage:

- Visual hallucinations and identity drift — Neural models can invent plausible details that are not present in the input. Even if geometry remains unchanged, inferred surface detail can change perceived identity or mood.

- Aesthetic homogenization — If multiple games rely on similarly trained models, the result can be a flattening of visual styles where different titles start to “look the same.”

- Studio control failure modes — Artist tools need to be granular and reliable. If control parameters are insufficient or unintuitive, studios may struggle to preserve intended looks and may unintentionally introduce regressions across scenes.

- Temporal instability — Real‑time neural rendering must be temporally coherent. Any flicker, frame‑to‑frame variance, or inconsistent detail will be immediately visible and jarring.

- Performance and hardware gatekeeping — Early demos appear demanding; requiring top‑tier GPUs (or multiple cards) for real‑time use will restrict this capability to a smaller segment of players, fueling fairness and access arguments.

- Data and provenance concerns — The models are trained on data. If training datasets include third‑party imagery, fan mod content, or copyrighted material, it raises legal and ethical questions about what aesthetic “knowledge” the model embeds.

- QA and regression testing overhead — Games integrating a generative output layer will need expanded QA to catch cases where the model changes narrative visuals (facial expressions, recognizability, emotional beats).

- Community and modding friction — Mod communities may react strongly if neural rendering alters character looks in ways mods previously attempted to address via manual replacements. The dynamic may be combative if players perceive the tech as erasing modder work or art direction.
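The temporal instability risk in the list above can be made measurable. The sketch below is a deliberately naive metric that assumes a static camera and scene, so no motion compensation is needed: it compares the average frame‑to‑frame luminance change of the enhanced output with that of the base render. The function names and the choice of Rec. 709 luminance weights are assumptions made for this illustration, not anything NVIDIA has described.

```python
from typing import List, Sequence, Tuple

Pixel = Tuple[float, float, float]  # linear RGB in [0, 1]
Frame = Sequence[Pixel]


def luminance(p: Pixel) -> float:
    """Relative luminance using Rec. 709 weights on linear RGB."""
    r, g, b = p
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def mean_frame_delta(frames: List[Frame]) -> float:
    """Average absolute per-pixel luminance change between consecutive frames."""
    if len(frames) < 2:
        raise ValueError("need at least two frames")
    total, count = 0.0, 0
    for prev, cur in zip(frames, frames[1:]):
        if len(prev) != len(cur):
            raise ValueError("all frames must have the same number of pixels")
        for a, b in zip(prev, cur):
            total += abs(luminance(b) - luminance(a))
            count += 1
    if count == 0:
        raise ValueError("frames contain no pixels")
    return total / count


def excess_flicker(base: List[Frame], enhanced: List[Frame]) -> float:
    """Positive values mean the enhanced sequence changes more between frames than the base."""
    return mean_frame_delta(enhanced) - mean_frame_delta(base)


# One-pixel, three-frame example: the base is perfectly stable, the enhanced output pulses.
base_frames = [[(0.5, 0.5, 0.5)], [(0.5, 0.5, 0.5)], [(0.5, 0.5, 0.5)]]
enhanced_frames = [[(0.5, 0.5, 0.5)], [(0.75, 0.75, 0.75)], [(0.5, 0.5, 0.5)]]
score = excess_flicker(base_frames, enhanced_frames)
```

A real evaluation would need to reproject frames using motion vectors before differencing. The point here is only that "flicker" can be expressed as a number and tracked across builds.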

Who Benefits — and Who Should Be Wary

Beneficiaries

- Studios with dated assets — Remasters and older titles that primarily need modern lighting stand to gain most.

- Technical art teams — With strong artist controls and expertise, studios can leverage DLSS 5 to reach higher fidelity without reauthoring entire content pipelines.

- Players who want photoreal options — For those who prioritize photorealism over original stylization, DLSS 5 provides a one‑click upgrade path.

Those who should be cautious

- Narrative‑driven titles dependent on precise facial performances — Any unintended change to facial shading or micro‑expression could damage story beats.

- Indie studios with limited art resources — The administrative and QA overhead of integrating and tuning a generative system may outweigh the benefits.

- Preservationists and archivists — If DLSS 5 becomes widely applied in a non‑optional way, it could complicate efforts to preserve original artistic intent and historical authenticity.

Practical Recommendations

For game developers and technical artists

- Treat DLSS 5 as an optional enhancement layer. Ship it as a toggleable option and provide several presets that preserve artistic intent by default.

- Expose fine‑grained artistic controls to QA and cinematics teams. Masks, per‑material intensity sliders, and scene annotations should be first‑class features.

- Run DLSS 5 through narrative regression testing. Validate key cutscenes and character beats with the feature on and off.

- Document training provenance and model assumptions for internal use and for any public trust efforts. Transparency reduces community backlash.

- Design fallback art pipelines: if DLSS 5 introduces unacceptable variance, have a plan to back out changes without heavy rework.
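The regression‑testing recommendation above could be automated along these lines. This sketch assumes a studio can capture the same frame with the feature off and on, plus a mask marking the region that must stay recognizable (for instance a character's face). `SceneCapture`, `region_drift`, `failing_scenes`, and the tolerance value are all hypothetical, and a mean absolute color difference is only a crude proxy for perceived identity drift.

```python
from dataclasses import dataclass
from typing import List, Tuple

Pixel = Tuple[float, float, float]  # linear RGB in [0, 1]


@dataclass
class SceneCapture:
    """Hypothetical paired capture of one key frame, feature off vs. on."""
    name: str
    off: List[Pixel]        # frame with the feature disabled
    on: List[Pixel]         # same frame with the feature enabled
    protected: List[bool]   # True where the original look must be preserved


def region_drift(scene: SceneCapture) -> float:
    """Mean absolute per-channel difference inside the protected region."""
    diffs = [
        abs(a - b)
        for p_off, p_on, keep in zip(scene.off, scene.on, scene.protected)
        if keep
        for a, b in zip(p_off, p_on)
    ]
    return sum(diffs) / len(diffs) if diffs else 0.0


def failing_scenes(scenes: List[SceneCapture], tolerance: float) -> List[str]:
    """Names of scenes whose protected region drifts beyond the tolerance."""
    return [s.name for s in scenes if region_drift(s) > tolerance]


scenes = [
    SceneCapture(
        name="intro_closeup",
        off=[(0.5, 0.5, 0.5), (0.25, 0.25, 0.25)],
        on=[(0.75, 0.75, 0.75), (1.0, 1.0, 1.0)],
        protected=[True, False],  # only the first pixel is part of the face
    ),
    SceneCapture(
        name="city_night",
        off=[(0.25, 0.25, 0.25)],
        on=[(0.75, 0.75, 0.75)],
        protected=[False],        # environment only, free to change
    ),
]
flagged = failing_scenes(scenes, tolerance=0.1)
```

Here only the close‑up is flagged, because the environment shot has no protected pixels. In practice a studio would likely pair a check like this with human review, since a numeric threshold cannot judge whether a character still reads as the same person.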

For players and community members

- Don’t judge the tech solely by trailers. Demo footage is curated; real gameplay toggles and customizable presets will matter far more.

- Try it with the toggle off first. If you prefer original art direction, insist on default‑off implementations.

- Report regressions responsibly. When you find unacceptable artifacts or identity drift, give studios actionable feedback tied to specific scenes.

For NVIDIA and platform stakeholders

- Make artist controls obvious and powerful. If studios feel they can keep signature looks, adoption will rise.

- Publish training provenance and model constraints to address ethical and legal questions.

- Invest in performance optimization and broader hardware support to avoid overly gating the feature behind only the most expensive setups.

- Fund third‑party evaluations (independent labs) that can objectively measure temporal stability, identity consistency, and artifact rates.

The Bigger Picture: What DLSS 5 Signals About the Future of Real‑Time Graphics

DLSS 5 is a cultural and technical milestone. Whether it becomes an industry standard, a niche option, or a cautionary tale depends on how the ecosystem responds over the next 12–24 months.

- Technically, neural rendering is a natural next step for real‑time graphics. Hardware is rapidly evolving to support ML inference; game engines are increasingly data‑driven; and studios are seeking cost‑effective ways to approximate global illumination and complex material response.

- Creatively, the industry faces a question of who controls aesthetics when generative systems are in the loop. Will studios retain full authorship? Will players demand the right to opt out? Will models be audited to avoid stylistic homogenization?

- Economically and legally, the training data behind these models will become a flashpoint. Companies and studios must preemptively clarify how models were trained and guard against unintentional appropriation claims.

Final Assessment: Bold Leap — But Still a Work in Progress

There is no question DLSS 5 represents substantial engineering ambition. Real‑time neural rendering that is consistent frame‑to‑frame and controllable by artists would reshape what studios can achieve without full path tracing budgets.

But the public reveal exposed real, human problems that cannot be solved with raw engineering alone. Visual trust — the audience’s implicit confidence that what they see is what the creators intended — matters as much as polygon budgets and frame rates. The early outputs shown in trailers and hands‑on clips illuminated both the upside and the danger: environments that look dramatically better, and character renders that can feel subtly wrong or actively wrong.

This technology will improve. Models will be retrained, the tooling will become richer, and early artifacts will be smoothed out. What remains open — and what NVIDIA, developers, and the community must address — is how to ensure neural rendering augments creative expression rather than overwrites it.

If studios ship DLSS 5 thoughtfully, with clear toggles, robust artist controls, and honest, public communication about what the system does and how it was trained, DLSS 5 could be a valuable new tool in the real‑time graphics toolbox. If it is pushed to market primarily as a marquee feature without the necessary safeguards and transparency, the backlash we've seen so far is only a preview of deeper community fractures.

For players: stay skeptical but curious. For developers: adopt with instrumented caution. For NVIDIA: earn the trust that new, generative graphics demand.

The conversation sparked by DLSS 5 will not end with the next driver update. It’s the start of a broader debate about the role of AI in creative media — and whether the future of gaming will be defined by human artistry augmented by machines, or by machines remaking human art in their image.

Source: Windows Central, “NVIDIA’s DLSS 5 reveal is getting roasted on YouTube”