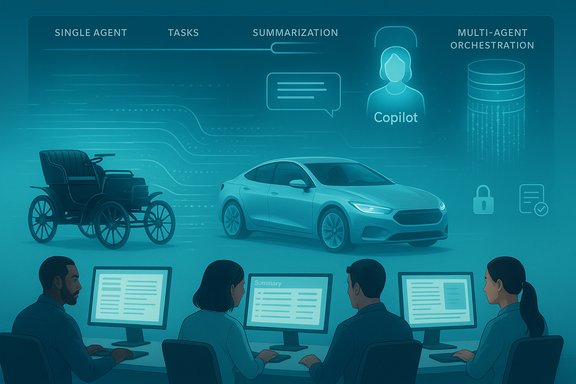

Enterprise AI is on the move, but for most organizations the current reality looks a lot more like a "horseless carriage" than an autonomous, AI-native fleet — promising, uneven, and still bound to the constraints of legacy processes and governance.

Source: Computerworld AI fluency in the enterprise: Still a ‘horseless carriage’

Background

At Microsoft Ignite this month, a panel of IT leaders from EY, Pfizer and Lumen framed their current AI deployments as pragmatic, incremental, and heavily tethered to existing processes rather than a wholesale reinvention of how work gets done. The session, and the reporting that followed, paints a picture of agentic AI being applied where it yields fast, low-risk wins: knowledge management, content creation and specialist research assistance. That characterization, grounded in remarks from EY’s John Whittaker and others, captures a broader industry pattern: organizations are rapidly deploying AI tools, but largely as overlays on legacy systems rather than as the basis of redesigned business operations. At the same time, independent industry research and CIO community reporting suggest the same constraint: successful AI at scale is less about model architecture and more about change management, data readiness, governance, and operational integration. The message from multiple directions is consistent: pilots are plentiful; durable, measurable transformation remains rare.

Overview: What the Microsoft Ignite panel actually said

The "horseless carriage" metaphor

John Whittaker, director of AI platform and products at EY, used a vivid metaphor: enterprises are "probably living in some version of the horseless carriage — we haven’t got to the car yet." That line neatly summarizes the central theme from the panel: AI is being grafted onto existing workflows, producing incremental productivity and risk reduction, but not yet running organizations end-to-end as a trusted decision-maker.

Who’s doing what: concrete examples from the panel

- EY described a large-scale rollout of agentic assistance across the firm, citing tens of thousands of agents and millions of documented processes inside the organization as the substrate for incremental automation. The firm pointed to a tax-assistant agent that leverages a fine-tuned model trained on millions of specialist documents to keep advisers and clients up to date on frequent tax changes.

- Pfizer outlined a phased, conservative approach: small pilots to measure confidence and accuracy, followed by iterative scaling. The company emphasized starting with well-understood contact-center and research tasks rather than wholesale process redesign.

- Lumen described a strategic, 36-month roadmap to an “AI-native” operating model, with Copilot licenses issued to new team members and a staged plan for multi-agent orchestration. The company framed adoption as a multi-level journey from single agents to multi-agent orchestration to full agent-to-agent transactions.

Why the "horseless carriage" phase matters — and why it’s sensible

Legacy systems are both the problem and the opportunity

Most enterprises run on decades of ERP, CRM and bespoke middleware. Those systems hold the canonical data, transaction histories and authorizations that agents need to act. Layering AI on top of that fabric is the pragmatic route: lower risk, faster time-to-value, and less disruption to regulated operations.

- Short-term wins are high-return: enabling Copilot-style assistants to summarize long documents, find precedent, or produce first drafts of responses reduces human effort and increases throughput without changing approvals or audit trails.

- Risk containment is easier: when AI augments human decisions rather than replaces them, governance and human-in-the-loop controls can be retained while teams gain experience and trust.
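Keeping humans in the loop while trust builds implies that an agent earns autonomy gradually rather than receiving it at launch. As a minimal sketch, assuming three hypothetical privilege tiers and a stream of reviewer verdicts on agent outputs, the promotion and demotion logic might look like this. The tier names, window size and accuracy threshold are illustrative assumptions, not details from any panelist's deployment.

```python
from enum import IntEnum


class Privilege(IntEnum):
    """Hypothetical autonomy tiers, lowest to highest."""
    SUGGEST = 0            # agent drafts; a human performs the action
    ACT_WITH_APPROVAL = 1  # agent acts only after a human signs off
    ACT_AND_REPORT = 2     # agent acts; humans review after the fact


class TrustLedger:
    """Promotes or demotes an agent by one tier per fixed review window."""

    def __init__(self, min_reviews: int = 50, min_accuracy: float = 0.95) -> None:
        self.level = Privilege.SUGGEST
        self.min_reviews = min_reviews
        self.min_accuracy = min_accuracy
        self.reviews = 0
        self.correct = 0

    def record_review(self, correct: bool) -> Privilege:
        """Log one human verification of an agent output; return the current tier."""
        self.reviews += 1
        self.correct += int(correct)
        if self.reviews < self.min_reviews:
            return self.level  # window not yet full: no change
        accuracy = self.correct / self.reviews
        if accuracy >= self.min_accuracy and self.level < Privilege.ACT_AND_REPORT:
            self.level = Privilege(self.level + 1)
        elif accuracy < self.min_accuracy and self.level > Privilege.SUGGEST:
            self.level = Privilege(self.level - 1)
        self.reviews = self.correct = 0  # start a fresh window at the new tier
        return self.level


ledger = TrustLedger(min_reviews=4, min_accuracy=0.75)
for verdict in (True, True, True, True):
    tier = ledger.record_review(verdict)
print(tier.name)  # ACT_WITH_APPROVAL
```

Evaluating over a fixed window, rather than after every review, keeps a single lucky or unlucky output from flipping the agent's privileges, and demotion uses the same rule as promotion so the behavior stays predictable.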

Fine-tuning beats generic models for domain tasks

Enterprise use cases frequently require specialist knowledge. The panel underlined that fine-tuned models, trained on firm-specific documents, research libraries and policy sources, outperform generic LLM deployments for mission-critical tasks. EY’s tax assistant, for example, combines a broad LLM base with millions of domain-specific documents to deliver higher-quality answers for tax professionals. That strategy (base model, targeted fine-tuning and retrieval-augmented context) is a predictable and practical architecture for the near term.

The gains: measurable benefits companies are reporting

Enterprises that have moved beyond experimental pilots are reporting incremental but tangible returns:

- Faster onboarding and knowledge transfer: some teams report new hires reaching full productivity in half the previous time by using Copilot-like tools for instant context and historical search.

- Productivity uplift in knowledge work: firms cite measurable time savings in information synthesis, report drafting and research tasks. Industry analyses estimate large potential value from generative AI across marketing, customer service and software engineering.

- Cost avoidance and process acceleration: automating routine steps, routing, and first-line triage in contact centers cuts time-to-resolution and allows human agents to focus on higher-value interactions.

The friction points: what's stopping AI from being "the car"

Despite clear initial wins, multiple constraints slow the transition from overlay to AI-native operations.

1) Data and integration debt

AI agents need clean, discoverable, and well-structured access to the right data. Many enterprises still struggle with fragmented data, inconsistent metadata, and brittle integration layers. Collating canonical sources and establishing data lineage remain costly, bespoke work. Research across CIO networks repeatedly identifies data quality and integration as the single largest technical blocker to scaling AI.

2) Governance, compliance and auditability

Regulated industries (finance, healthcare, tax) require auditable decision trails, provenance and deterministic failure modes. Agents that craft advice or change account states must surface why a recommendation was made, which inputs influenced the outcome, and what the fallback is when uncertainty is high. Current LLM-centric stacks are often insufficiently transparent without additional engineering, which is driving heavy investment in explainability, logging and guardrails.

3) Trust and human-in-the-loop design

Enterprises are conservative for good reason: the cost of erroneous automation can be material. Confidence-building patterns dominate: small pilots, human verification, and incremental escalation of agent privileges. Reports show that organizations prefer to keep humans in control while agents prove reliability and consistency of behavior. That preference shapes both architecture (agents as copilots, not controllers) and timelines.

4) Skills, organizational change and ownership

Successful scaling is a people problem as much as a technology problem. Enterprises require new roles (AgentOps, model SREs, data curators and governance architects) plus training programs to embed new behaviors. Many organizations underestimate the non-technical work needed to rewire incentives, KPIs and operating rhythms. The CIO community flags change management as the most common cause of stalled pilots.

Strategic pathways to move from "horseless carriage" to "car"

For organizations aiming to accelerate from assistant-first to agent-native operations, the practical path is structured and staged.

Stage 1 — Pilot and stabilize

- Identify high-payoff, low-risk tasks (knowledge search, summaries, scripted workflows).

- Require clear KPIs and short-cycle telemetry.

- Keep humans in the loop and instrument outputs for quality and audit.
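Stage 1's call for clear KPIs and short-cycle telemetry can be made concrete. The following Python sketch assumes a hypothetical per-task telemetry record (baseline time, actual time, reviewer acceptance, escalation) and hypothetical exit thresholds; a real pilot would define its own schema and targets before it starts.

```python
from dataclasses import dataclass


@dataclass
class PilotRecord:
    """One agent-assisted task from a pilot (hypothetical telemetry schema)."""
    baseline_minutes: float  # typical handling time before the pilot
    actual_minutes: float    # time with the agent, including human review
    accepted: bool           # reviewer accepted the output without rework
    escalated: bool          # task was handed to a human specialist


def summarize_pilot(records: list[PilotRecord]) -> dict[str, float]:
    """Aggregate pilot telemetry into the KPIs a go/no-go review would check."""
    if not records:
        raise ValueError("no pilot records to summarize")
    n = len(records)
    saved = sum(r.baseline_minutes - r.actual_minutes for r in records)
    return {
        "tasks": n,
        "avg_minutes_saved": saved / n,
        "acceptance_rate": sum(r.accepted for r in records) / n,
        "escalation_rate": sum(r.escalated for r in records) / n,
    }


def meets_exit_criteria(kpis: dict[str, float], min_saved: float = 5.0,
                        min_acceptance: float = 0.9, max_escalation: float = 0.1) -> bool:
    """Hypothetical thresholds; each pilot should agree on its own in advance."""
    return (kpis["avg_minutes_saved"] >= min_saved
            and kpis["acceptance_rate"] >= min_acceptance
            and kpis["escalation_rate"] <= max_escalation)


pilot = [
    PilotRecord(30, 20, accepted=True, escalated=False),
    PilotRecord(40, 25, accepted=True, escalated=False),
    PilotRecord(20, 22, accepted=False, escalated=True),
    PilotRecord(35, 20, accepted=True, escalated=False),
]
kpis = summarize_pilot(pilot)
print(kpis)
print(meets_exit_criteria(kpis))  # False: acceptance 0.75 is below 0.9
```

The third record costs time (22 minutes against a 20-minute baseline). Averaging it in rather than discarding it is what keeps the time-saved figure honest, and pairing that figure with acceptance and escalation rates stops a fast but unreliable agent from passing review.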

Stage 2 — Operationalize and govern

- Build canonical data stores and retrieval layers.

- Implement strict access, redaction and logging policies.

- Create an internal AgentOps function to manage life-cycle, rollouts and incident response.
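A retrieval layer that enforces access control and redaction before any text reaches the agent is the core of Stage 2. The sketch below assumes an in-memory document store with per-document role lists. It uses naive keyword overlap in place of the vector search a production system would more likely use, and applies two illustrative regex patterns for redaction. Every name, role and document here is hypothetical.

```python
import logging
import re
from dataclasses import dataclass

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("retrieval")

# Illustrative patterns only; production redaction needs a vetted PII detection service.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_roles: frozenset  # roles permitted to read this document


def tokens(text: str) -> set[str]:
    return set(re.findall(r"\w+", text.lower()))


def redact(text: str) -> str:
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def retrieve(query: str, role: str, store: list[Document], k: int = 2) -> list[Document]:
    """Return up to k documents the role may read, ranked by keyword overlap."""
    terms = tokens(query)
    permitted = [d for d in store if role in d.allowed_roles]  # access check first
    scored = [(len(terms & tokens(d.text)), d) for d in permitted]
    ranked = [d for score, d in sorted(scored, key=lambda p: p[0], reverse=True) if score > 0]
    hits = ranked[:k]
    log.info("role=%s query=%r sources=%s", role, query, [d.doc_id for d in hits])
    return hits


def build_context(query: str, role: str, store: list[Document]) -> str:
    """Assemble redacted, source-tagged context for the agent prompt."""
    return "\n".join(f"[{d.doc_id}] {redact(d.text)}" for d in retrieve(query, role, store))


store = [
    Document("tax-001", "Updated VAT threshold guidance for filings", frozenset({"tax_adviser"})),
    Document("hr-007", "Salary review notes, contact jane.doe@example.com", frozenset({"hr"})),
    Document("tax-002", "VAT guidance memo: client SSN 123-45-6789 on file", frozenset({"tax_adviser"})),
]
print(build_context("VAT guidance", "tax_adviser", store))
```

Filtering by role before ranking, rather than after, means a document the caller may not read never influences the result. The log line records which sources fed each answer, a simple form of the provenance the governance section calls for.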

Stage 3 — Reimagine and redesign

- Where agents prove reliable, conduct process redesign sprints that remove redundant steps and rebalance human/agent work.

- Introduce multi-agent orchestration patterns with clearly defined escalation paths.

- Update roles and KPIs: measure outcomes, not clicks or seats.
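Multi-agent orchestration with clearly defined escalation paths reduces to a routing rule: every task either completes under a specialist agent or lands with a human for a stated reason. A minimal sketch, assuming each hypothetical agent returns an answer with a self-reported confidence score. Many models do not expose a calibrated score, so treat that field as an assumption.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class AgentResult:
    answer: str
    confidence: float  # assumed self-reported score in [0, 1]


@dataclass
class Outcome:
    handled_by: str        # agent name, or "human" when escalated
    answer: Optional[str]  # draft passed along to the human, if any
    reason: str


class Orchestrator:
    """Routes each task to a registered agent; anything unsafe escalates to a human."""

    def __init__(self, agents: dict[str, Callable[[str], AgentResult]],
                 min_confidence: float = 0.8) -> None:
        self.agents = agents
        self.min_confidence = min_confidence

    def route(self, task_type: str, payload: str) -> Outcome:
        agent = self.agents.get(task_type)
        if agent is None:  # escalation path 1: no agent owns this task type
            return Outcome("human", None, f"no agent registered for {task_type!r}")
        try:
            result = agent(payload)
        except Exception as exc:  # escalation path 2: the agent failed outright
            return Outcome("human", None, f"agent error: {exc}")
        if result.confidence < self.min_confidence:  # escalation path 3: low confidence
            return Outcome("human", result.answer, f"low confidence {result.confidence:.2f}")
        return Outcome(task_type, result.answer, "auto-completed")


# Two stand-in agents for illustration; real ones would call models or services.
def faq_agent(question: str) -> AgentResult:
    return AgentResult("Reset it through the self-service portal.", 0.95)


def contract_agent(question: str) -> AgentResult:
    return AgentResult("Clause 7 may permit early termination.", 0.55)


orchestrator = Orchestrator({"faq": faq_agent, "contract": contract_agent})
for task in [("faq", "password reset"), ("contract", "termination rights"), ("billing", "refund")]:
    print(orchestrator.route(*task))
```

Passing the low-confidence draft along with the escalation lets the human start from the agent's work rather than from scratch, and the explicit `reason` string gives AgentOps a countable record of why work left the automated path.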

Critical analysis: strengths, weaknesses and enterprise risk profile

Strengths — why boards are greenlighting agentic AI now

- Rapid value capture in information-heavy tasks reduces friction to adoption.

- Vendor platforms (the Microsoft Copilot ecosystem, cloud AI services) lower operational cost and accelerate deployment.

- Improved developer productivity and automation in IT functions translate into operational cost savings and faster release cycles.

Weaknesses — where enterprise expectations commonly outpace reality

- Over-reliance on vendor demos: field experience shows that curated demos rarely reflect messy production inputs. That gap produces disappointment and cautious procurement decisions.

- Measurement inconsistency: many pilots report time-saved metrics without rigorous ROI frameworks or long-term business metrics, making comparability hard.

- Vendor lock-in and platform risk: choosing a closed agent framework without clear data portability may create future migration costs and regulatory exposure.

Risks — regulatory, technical and sociotechnical

- Compliance and legal risk: agents that surface PII, misinterpret contracts or draft regulatory filings expose firms to legal liability unless tightly governed.

- Operational risk: agents that act autonomously on transactional systems require deterministic fail-safes; otherwise, erroneous automation can cascade.

- Workforce impacts: while many organizations report productivity gains, displacement remains plausible, especially for routine, entry-level roles; workforce transition and upskilling strategies are essential.

- Trust erosion: overpromising on agent capability can erode internal trust, causing broad resistance and rollback of deployments. Realistic communications from leadership mitigate this risk.
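The operational-risk point about deterministic fail-safes can be illustrated with a circuit breaker, a standard reliability pattern swapped in here for illustration. After a fixed number of consecutive failed actions, the agent's autonomy is suspended and all further work goes to a manual queue until a human resets it. The class name, action IDs and threshold are hypothetical.

```python
from typing import Callable


class AgentCircuitBreaker:
    """Suspends autonomous actions after repeated failures (hypothetical fail-safe)."""

    def __init__(self, max_consecutive_failures: int = 3) -> None:
        self.max_failures = max_consecutive_failures
        self.consecutive_failures = 0
        self.tripped = False
        self.manual_queue: list[str] = []  # actions awaiting human handling

    def execute(self, action_id: str, action: Callable[[], bool]) -> str:
        """Run action unless tripped; action returns True only if its post-check passes."""
        if self.tripped:
            self.manual_queue.append(action_id)
            return "queued"
        try:
            ok = action()
        except Exception:
            ok = False  # an exception counts as a failure, never as a silent success
        if ok:
            self.consecutive_failures = 0
            return "done"
        self.consecutive_failures += 1
        self.manual_queue.append(action_id)  # failed work goes to a human
        if self.consecutive_failures >= self.max_failures:
            self.tripped = True
        return "failed"

    def reset(self) -> None:
        """Restore autonomy; intended to be called only after human review."""
        self.consecutive_failures = 0
        self.tripped = False


breaker = AgentCircuitBreaker(max_consecutive_failures=2)
print(breaker.execute("post-invoice-1", lambda: True))   # done
print(breaker.execute("post-invoice-2", lambda: False))  # failed
print(breaker.execute("post-invoice-3", lambda: False))  # failed, and the breaker trips
print(breaker.execute("post-invoice-4", lambda: True))   # queued
print(breaker.manual_queue)
```

Because the trip condition is a plain counter, the breaker's behavior is fully predictable, which is the property that stops one bad action from cascading into many.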

Verification and cross-referencing of key claims

- The notion that enterprises are primarily applying agents to knowledge management, content creation and research matches multiple industry studies: McKinsey’s generative-AI analyses expect major impact in customer service, marketing, sales and corporate IT, and independent industry reporting highlights similar early-adoption domains. That convergence lends credibility to the claim that early agent wins cluster in document- and knowledge-heavy functions.

- CIO community reporting repeatedly emphasizes change management, data readiness and governance as the top barriers — a consistent corroboration of the panel’s tone that organizational issues, not model capability, are the dominant constraints.

- Independent analyst surveys (Capgemini, Deloitte and Cisco reports) echo a cautious optimism: agentic AI promises significant value, but large-scale autonomous deployments remain rare, and trust issues temper adoption timelines. These independent confirmations show the panel’s anecdotal evidence fits broader market sentiment.

Practical recommendations for IT leaders and Windows-oriented buyers

- Prioritize pilot clarity: define exact KPIs (time saved, error reduction, escalation reduction) and a short evaluation window. Avoid vague productivity proxies.

- Invest early in data plumbing: canonical retrieval layers, document indexing and metadata hygiene accelerate agent utility and reduce hallucination risk.

- Build governance first: logging, explainability wrappers, and rollback mechanisms should be in place before agents touch transactional systems.

- Create an AgentOps team: blend SRE, data engineering and policy expertise to oversee model lifecycle, permissions, and incident processes.

- Start with augmentation: position agents as copilots, not commanders. That builds user trust and provides observable safety boundaries.

- Plan workforce transitions: align upskilling and role redesign programs to ensure human talent shifts from routine tasks to higher-value judgment work.
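Governance-first tooling can start small. This sketch assumes a hypothetical action journal that records each agent action with its inputs and stated rationale, pairs it with an undo function, and supports newest-first rollback. Entries are flagged rather than deleted, so the audit trail survives the rollback. The account, amounts and agent name are illustrative.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass
class AuditEntry:
    agent: str
    action: str
    inputs: dict
    rationale: str  # the agent's stated reason, kept for reviewers
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())
    rolled_back: bool = False


class ActionJournal:
    """Hypothetical audit log that pairs each agent action with an undo step."""

    def __init__(self) -> None:
        self.entries: list[AuditEntry] = []
        self._undo_stack: list[tuple[int, Callable[[], None]]] = []

    def record(self, entry: AuditEntry, do: Callable[[], None], undo: Callable[[], None]) -> None:
        do()  # if the change raises, nothing is journaled
        self.entries.append(entry)
        self._undo_stack.append((len(self.entries) - 1, undo))

    def rollback(self, n: int) -> int:
        """Undo the last n actions, newest first; entries are flagged, never deleted."""
        undone = 0
        while self._undo_stack and undone < n:
            index, undo = self._undo_stack.pop()
            undo()
            self.entries[index].rolled_back = True
            undone += 1
        return undone

    def export(self) -> str:
        return json.dumps([asdict(e) for e in self.entries], indent=2)


balances = {"acct-1": 100}


def credit(amount: int):
    def do() -> None:
        balances["acct-1"] += amount

    def undo() -> None:
        balances["acct-1"] -= amount

    return do, undo


journal = ActionJournal()
for amount in (50, 25):
    do, undo = credit(amount)
    entry = AuditEntry("billing-agent", "credit", {"account": "acct-1", "amount": amount},
                       "goodwill credit under policy")
    journal.record(entry, do, undo)
journal.rollback(1)
print(balances["acct-1"])  # 150
```

Keeping rolled-back entries in the log preserves a record of what the agent attempted as well as what survived, which is the kind of evidence an auditable decision trail is meant to provide.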

The outlook: incremental transformation, not an overnight replacement

The current trajectory is clear: enterprise AI will continue to spread rapidly across knowledge-work domains, driven by vendor platforms, internal pilots and competitive pressure. Over time, as data and governance practices mature and AgentOps becomes standardized, organizations will incrementally redesign processes rather than simply overlay AI on brittle workflows.

However, the time horizon for truly agent-native enterprises, where AI orchestrates distributed services and manages multi-step business processes without heavy human supervision, remains measured in years rather than months. Expect continued incrementalism: pilots, stabilizations, regulated expansions, and selective redesigns that reflect a prudent balancing of potential and peril.

Conclusion

The Microsoft Ignite panel, and subsequent reporting, captured the central paradox of enterprise AI adoption today. Organizations are excited, investing, and getting tangible wins from agentic tools, but most of that value is being harvested by bolting AI onto existing processes rather than reimagining those processes for an AI-first future. That “horseless carriage” phase is rational: it reduces risk, accelerates early wins and buys time to build the data, governance and organizational capabilities needed for deeper transformation.

For IT leaders and Windows-focused buyers, the task is clear: treat initial AI deployments as deliberate organizational programs, not feature launches. Invest in data plumbing, governance and change management now so that, when the agents are ready to steer, the enterprise is safely in the driver’s seat.

This analysis synthesizes the Microsoft Ignite panel reporting and CIO community research, and it cross-checks those claims against independent industry studies that document both the promise and practical limits of agentic AI in enterprises. Where vendor-provided numbers appear in public comments, they are noted as corporate claims and should be independently validated during procurement or case-study review.
