AWS’s re:Invent keynote delivered a clear, ambitious thesis: agentic AI is no longer a research curiosity but a commercial imperative, and AWS will bet its infrastructure, silicon, and services on turning agents into operational tools that migrate, modernize, secure, and operate enterprise systems at scale.


Source: The Tech Buzz https://www.techbuzz.ai/articles/aws-unveils-ai-agents-at-re-invent-but-faces-uphill-battle/

Background


AWS used its flagship re:Invent stage to expand several interlocking plays: agentic AI as a product category, new foundation-model tooling and training paths, and deeper integration between custom silicon and cloud services to lower the cost of AI at scale. The announcements centered on three commercial threads: (1) agent platforms and “frontier” agents that can run long-lived tasks, (2) developer- and enterprise-facing model tooling (Nova Forge and Bedrock integrations), and (3) infrastructure advances (Trainium3/UltraServer and roadmap signals toward NVLink compatibility). Each is pitched not as a single feature but as a systems-level attempt to convert AWS’s scale into a usable AI platform for enterprises. Across the messaging, AWS targeted two audiences simultaneously: engineering teams who build and operate complex systems, and procurement/business leaders who currently pay for Windows licenses, legacy databases, and on-prem infrastructure. That dual focus drives both the product design and the marketing narrative: AWS’s pitch is to make migration and modernization low-friction and AI-enabled, while also offering the foundational plumbing and hardware economics to host AI workloads at scale.

What AWS Announced at re:Invent — The Product Rollup

Agent portfolio: from AgentCore to “Frontier” agents

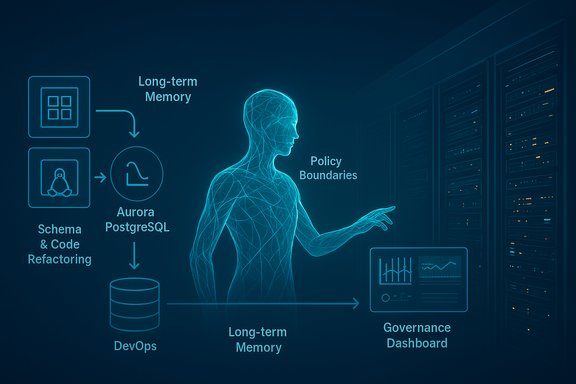

AWS expanded AgentCore and introduced what it calls frontier agents: agents designed to operate autonomously for long-running, multi-step tasks such as large-scale code refactoring, security triage, and continuous DevOps operations. These agents include new capabilities around long-term memory, policy boundaries, built-in evaluator tooling, and audit logging intended for enterprise governance. Early examples unveiled include Kiro (an autonomous developer agent), a Security Agent, and a DevOps Agent. AWS claims these agents can operate for hours or days, coordinating across services and toolchains under human supervision.

Key vendor claims:

- Agents will log actions, maintain state across sessions, and surface detailed transformation summaries.

- Policy controls let organizations set hard boundaries and guardrails for autonomous behavior.
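AWS’s announcement materials, as summarized here, do not specify a log format, so the following is only a minimal Python sketch of what “log actions and maintain state across sessions” can look like in practice: an append-only action log in which every entry embeds the hash of the previous one, so any later edit to an entry breaks the chain and becomes detectable. The class and field names (`ActionLog`, `agent_id`, `action`, `detail`) are hypothetical and are not AgentCore APIs.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same entry always hashes identically.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class ActionLog:
    """Hypothetical append-only agent action log with a SHA-256 hash chain."""
    entries: list = field(default_factory=list)

    def append(self, agent_id: str, action: str, detail: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"agent_id": agent_id, "action": action, "detail": detail, "prev_hash": prev_hash}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; False if any entry was altered, removed, or reordered."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True

    def save(self, path: str) -> None:
        # JSON Lines on disk lets a later session reload the log and keep appending.
        with open(path, "w", encoding="utf-8") as fh:
            for entry in self.entries:
                fh.write(json.dumps(entry, sort_keys=True) + "\n")

    @classmethod
    def load(cls, path: str) -> "ActionLog":
        with open(path, encoding="utf-8") as fh:
            return cls(entries=[json.loads(line) for line in fh if line.strip()])


if __name__ == "__main__":
    log = ActionLog()
    log.append("devops-agent", "open_ticket", {"ticket": "INC-1"})
    log.append("devops-agent", "restart_service", {"service": "billing"})
    print(log.verify())  # True
    log.entries[0]["detail"]["ticket"] = "INC-2"  # simulate tampering
    print(log.verify())  # False
```

Persisting entries as JSON Lines via `save`/`load` is one simple way to carry state into a later session; a production setup would more likely ship these records to write-once storage or an existing SIEM.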

AWS Transform: full‑stack Windows modernization agents

AWS expanded AWS Transform into a full-stack Windows modernization offering that automates coordinated conversion of .NET applications and Microsoft SQL Server databases to Linux-based stacks and Amazon Aurora PostgreSQL. The service promises:

- Automated discovery, dependency mapping, schema conversion (SQL Server → Aurora PostgreSQL), stored-procedure transformation, and coordinated refactoring of .NET code (Entity Framework/ADO.NET).

- End-to-end pipelines that commit transformed artifacts to new repository branches and produce deployable IaC for ECS or EC2 Linux targets.

- Claims of accelerating modernization by “up to 5x” and reducing operating costs “by up to 70%” in promotional material.
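Schema conversion is the most mechanical part of this pipeline, and a toy version shows both what automates well and where humans stay in the loop. The Python sketch below maps a handful of common SQL Server column types to PostgreSQL equivalents and flags constructs (CLR assemblies, cursors, dynamic SQL) that it refuses to translate. It is an illustrative assumption, not AWS Transform’s actual logic, and the mapping table is deliberately incomplete.

```python
from __future__ import annotations

import re

# Illustrative subset of SQL Server -> PostgreSQL type mappings (deliberately incomplete).
TYPE_MAP = {
    "BIT": "BOOLEAN",
    "TINYINT": "SMALLINT",  # PostgreSQL has no 1-byte integer type
    "INT": "INTEGER",
    "BIGINT": "BIGINT",
    "DATETIME": "TIMESTAMP",
    "DATETIME2": "TIMESTAMP",
    "UNIQUEIDENTIFIER": "UUID",
    "MONEY": "NUMERIC(19,4)",
}

# Matches VARCHAR(n), NVARCHAR(n), and the (N)VARCHAR(MAX) forms.
VARCHAR_RE = re.compile(r"^N?VARCHAR\((\d+|MAX)\)$", re.IGNORECASE)

# Constructs this sketch will not translate; they are routed to a human reviewer.
REVIEW_PATTERNS = {
    "CLR assembly": re.compile(r"\bCREATE\s+ASSEMBLY\b|\bEXTERNAL\s+NAME\b", re.IGNORECASE),
    "cursor": re.compile(r"\bDECLARE\s+@?\w+\s+CURSOR\b", re.IGNORECASE),
    "dynamic SQL": re.compile(r"\bsp_executesql\b|\bEXEC\s*\(", re.IGNORECASE),
}


def convert_type(tsql_type: str) -> str | None:
    """Return a PostgreSQL type for a T-SQL column type, or None if it is not mapped."""
    t = tsql_type.strip()
    m = VARCHAR_RE.match(t)
    if m:
        size = m.group(1).upper()
        return "TEXT" if size == "MAX" else f"VARCHAR({size})"
    return TYPE_MAP.get(t.upper())


def convert_columns(columns: dict[str, str]) -> tuple[dict[str, str], list[str]]:
    """Map each column's type; collect the columns that could not be mapped."""
    converted: dict[str, str] = {}
    unresolved: list[str] = []
    for name, tsql_type in columns.items():
        pg_type = convert_type(tsql_type)
        if pg_type is None:
            unresolved.append(f"{name}: {tsql_type}")
        else:
            converted[name] = pg_type
    return converted, unresolved


def flag_for_review(tsql_source: str) -> list[str]:
    """List the construct categories found in T-SQL source that need human review."""
    return [label for label, pattern in REVIEW_PATTERNS.items() if pattern.search(tsql_source)]
```

For example, `convert_columns({"id": "UNIQUEIDENTIFIER", "notes": "NVARCHAR(MAX)", "geo": "GEOGRAPHY"})` maps `id` to `UUID` and `notes` to `TEXT`, and reports `geo: GEOGRAPHY` as unresolved. Returning unresolved items instead of guessing is the design choice that matters: it keeps edge cases visible for the kind of stored-procedure and nonstandard-SQL testing recommended later in this piece.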

Nova Forge, model tooling, and developer controls

AWS introduced Nova Forge, an “open training” approach for customers who want to bake proprietary data into the training process of Nova models, or to build custom frontier models entirely. Nova Forge supports checkpoints at multiple training stages and aims to reduce the cost and time to obtain useful, specialized models for enterprise tasks. The strategy is pragmatic: provide customers both the managed Bedrock hosting plane and gated pathways to more bespoke model development. This fits AWS’s broader approach: sell the full stack (compute + model + tooling) while preserving options for customers to host models privately or to choose from a marketplace of model variants.

Trainium3, UltraServer, and NVLink roadmap

On the infrastructure side, AWS announced Trainium3 and UltraServer configurations, promising significant performance-per-dollar improvements (press coverage relayed marketing claims of up to 4x performance gains and roughly 40% lower power usage), and signaled a roadmap toward compatibility with NVLink, NVIDIA’s interconnect, in future Trainium4 designs. Reuters and other third-party coverage corroborated these infrastructure claims and highlighted partnerships and specifications aimed at improving the economics of large-model training.

Why AWS’s Agent Push Matters — Strategic Context

Turning primitives into outcomes

AWS has historically been strongest at offering primitives: compute instances, storage, databases, and ML building blocks. The contest for enterprise AI now rewards companies that turn primitives into productized outcomes: packaged, guarded workflows that non‑ML teams can use. AWS’s agent strategy is an explicit attempt to productize automation across discovery, transformation, and operations, so that the buyer is purchasing outcomes (migrated apps, secure environments, continuous DevOps) rather than raw model endpoints.

Competing narratives with Microsoft and Google

Microsoft and Google have chosen the opposite play in places: tightly integrate AI into productivity suites and developer tools so that end users adopt incremental AI features inside Microsoft 365 or Google Workspace. AWS is attempting to win at the architectural and infrastructure level while also packaging higher-level experiences that speak to CIOs and platform teams. The consequence is a differentiated sales motion: Microsoft sells seat-based productivity value, AWS sells modernization and infrastructure economics. Both are valid, but the buyer personas and procurement paths are different.

Why Windows modernization is a political play

AWS’s heavy focus on migrating Windows workloads, with product messaging about an “easy button” to get off Windows, is more than product positioning; it is a strategic effort to shift long-term revenue pools. Enterprises with large Windows estates (SQL Server, .NET) represent multi-year licensing and cloud-hosting revenue for Microsoft. AWS seeks to capture that migration spend by offering automated tooling that plugs into its managed database and compute services. The economics are attractive if the automation works; the enterprise challenge is both political and technical, because many shops are wary of re-platforming business-critical systems.

Technical Deep Dive — What Makes These Agents Different

Long-lived memory and evaluator tooling

Vendor demos emphasized three engineering attributes that distinguish “frontier” agents from simple RAG-based assistants:

- Persistent memory: agents retain a structured, queryable memory across sessions to support multi-step tasks and contextual continuity.

- Built-in evaluators: prebuilt evaluation suites that test for correctness, compliance, and policy violations before an agent’s action is committed.

- Policy enforcement: declarative policy modules that define acceptable tool usage, data access, and external actions.
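The three attributes above describe a pattern rather than a published interface, so here is a hedged Python sketch of how policy enforcement and evaluators can combine into a single pre-execution gate. Everything in it (`Policy`, `ProposedAction`, `gate`, the `no_destructive_sql` evaluator, and the tool names) is hypothetical; it illustrates checking a proposed action against declarative rules before it is committed, not AWS’s implementation.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable, List


@dataclass(frozen=True)
class Policy:
    """Hypothetical declarative policy for a single agent."""
    allowed_tools: frozenset
    allowed_data_scopes: frozenset
    requires_approval: frozenset = frozenset()  # tools that need a human sign-off


@dataclass
class ProposedAction:
    """An action the agent wants to take; nothing runs until gate() allows it."""
    tool: str
    data_scope: str
    payload: dict = field(default_factory=dict)


# An evaluator inspects a proposed action and returns the problems it found (empty = pass).
Evaluator = Callable[[ProposedAction], List[str]]


def no_destructive_sql(action: ProposedAction) -> list[str]:
    """Example evaluator: reject DROP/TRUNCATE statements sent to a SQL tool."""
    sql = str(action.payload.get("sql", "")).upper()
    if action.tool == "sql.execute" and ("DROP " in sql or "TRUNCATE " in sql):
        return ["destructive SQL statement"]
    return []


def gate(action: ProposedAction, policy: Policy, evaluators: list[Evaluator],
         approved_by: str | None = None) -> tuple[str, list[str]]:
    """Return ('allow' | 'deny' | 'needs_approval', reasons) before anything executes."""
    reasons: list[str] = []
    if action.tool not in policy.allowed_tools:
        reasons.append(f"tool {action.tool!r} not permitted")
    if action.data_scope not in policy.allowed_data_scopes:
        reasons.append(f"data scope {action.data_scope!r} not permitted")
    for evaluate in evaluators:
        reasons.extend(evaluate(action))
    if reasons:
        return "deny", reasons
    # Policy and evaluators passed; high-impact tools still wait for a named human.
    if action.tool in policy.requires_approval and approved_by is None:
        return "needs_approval", [f"{action.tool!r} requires human approval"]
    return "allow", []
```

For instance, with a policy that allows `sql.execute` and `git.commit` only in a `staging` scope and marks `git.commit` as approval-required, a proposed `DROP TABLE` is denied by the evaluator, while a commit returns `needs_approval` until someone is passed as `approved_by`. Keeping the decision a pure function of the action and the policy makes every verdict easy to log and replay.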

Model and compute co-design

AWS’s announcements tie Nova Forge and Bedrock to Trainium silicon, an explicit co-design bet where model architectures and training pipelines are optimized for the host hardware. In theory, that lowers costs for customers who commit to the stack and creates a performance moat if adoption scales. But this co‑design also creates a portability trade-off for customers who want to avoid lock-in; enterprises must weigh the cost benefits against the risk of higher friction when moving workloads away from the AWS hardware/model stack. Reuters and TechCrunch coverage underlined this co-design emphasis.

Business and Operational Risks — The Uphill Battle

AWS’s product set is ambitious, but several headwinds complicate adoption.

1) Vendor claims vs. real-world variability

AWS’s promotional numbers (“5x faster,” “up to 70% cost reduction”) are credible as vendor benchmarks but depend heavily on workload complexity, legacy coupling, and test assumptions. Enterprises should treat these figures as directional rather than guaranteed, and validate them with proof-of-concept (PoC) projects that mirror production scale. AWS documentation also spells out which Regions each service is available in, a reminder that enterprise pilots must confirm regional availability, compliance, and latency constraints.

2) Lock-in and commercial trade-offs

Automated modernization that rewrites business logic and migrates databases is highly valuable, but it also produces derivative code and IaC patterns optimized for AWS (Aurora, ECS, CloudFormation/CDK). That increases the cost of reversing the migration if a company later wants to switch clouds. Buyers must build portability into contracts and require exportable artifacts and clear handover processes. Industry analysts repeatedly warn that short-term migration ease can create medium-term lock-in.

3) Safety, compliance, and regulatory exposure

Agentic systems that access emails, calendars, or PII increase the attack surface for data leakage and regulatory exposure. Regulations such as the EU AI Act, along with industry-specific rules, can require conformity assessments for systems used in high-risk or regulated workflows. AWS provides governance controls, but integrating those controls with corporate compliance programs and legal contracts will be nontrivial. Enterprises in finance, healthcare, and government will face a higher bar before allowing agents to act autonomously.

4) Operational complexity and agent sprawl

Gartner and other analysts warn of “agent sprawl”: the proliferation of small, poorly governed agents across departments that cumulatively create security, cost, and maintainability headaches. Organizations need guardrails, observability, and centralized policy orchestration to avoid runaway costs and inconsistent behavior across teams. AWS offers policy modules and evaluation tooling, but the customer must operationalize these capabilities.

5) Capital and capacity constraints

Scaling AI requires GPUs, power, and datacenter capacity, constraints that influence time-to-production and cost. AWS’s investment in Trainium and new UltraServer racks is meant to blunt those constraints, but the industry-wide demand for accelerators means supply and pricing dynamics will remain volatile. Investors and IT leaders should monitor capex guidance, utilization rates, and the pace at which new capacity is monetized.

Practical Guidance — How IT Leaders Should Evaluate AWS’s Agent Push

- Start with measurable pilots:
  - Define 2–3 KPIs (time to migrate a module, regression rate, cost delta).
  - Pilot a non-critical application and a small database conversion to validate transform fidelity.

- Invest in governance up front:
  - Require RBAC, immutable audit logs, human approval gates, and tamper-evident artifacts.
  - Integrate agent logs with existing SIEM and compliance tooling.

- Architect for portability:
  - Extract business logic into well-documented modules.
  - Maintain a canonical build and CI pipeline that can be re-targeted with minimal changes.

- Validate vendor claims independently:
  - Use third-party performance testing and code audits to confirm functional equivalence after transformation.
  - Test for edge cases in stored procedures, custom CLR assemblies, or nonstandard SQL constructs.

- Negotiate commercial protections:
  - Ask for exit clauses, credits for failed PoCs, and contractual guarantees around data export and artifact handover.
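The pilot KPIs and claim checks above reduce to simple arithmetic once a pilot produces measurements. The Python sketch below computes the suggested KPIs and expresses the results as a fraction of the promotional “up to 5x” and “up to 70%” figures. The field names and example inputs are hypothetical. Note the arithmetic behind the claims: a 5x speedup means a task takes one fifth of its original effort, and a 70% cost reduction means the new run rate is 30% of the old one.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class PilotRun:
    """Measurements from one modernization pilot (illustrative field names)."""
    modules_migrated: int
    engineer_days: float           # effort actually spent with agent assistance
    baseline_engineer_days: float  # estimate for migrating the same modules manually
    tests_run: int
    tests_regressed: int           # tests that passed before migration and fail after
    monthly_cost_before: float
    monthly_cost_after: float


def kpis(run: PilotRun) -> dict[str, float]:
    """Compute time-per-module, speedup, regression rate, and cost delta."""
    return {
        "days_per_module": run.engineer_days / run.modules_migrated,
        "speedup": run.baseline_engineer_days / run.engineer_days,
        "regression_rate": run.tests_regressed / run.tests_run,
        "cost_reduction": 1 - run.monthly_cost_after / run.monthly_cost_before,
    }


def compare_to_claims(measured: dict[str, float], claimed_speedup: float = 5.0,
                      claimed_cost_reduction: float = 0.70) -> dict[str, float]:
    """Fraction of each 'up to' vendor claim that the pilot actually achieved."""
    return {
        "speedup_vs_claim": measured["speedup"] / claimed_speedup,
        "cost_reduction_vs_claim": measured["cost_reduction"] / claimed_cost_reduction,
    }


if __name__ == "__main__":
    # Made-up pilot numbers for illustration only.
    run = PilotRun(modules_migrated=4, engineer_days=20, baseline_engineer_days=50,
                   tests_run=400, tests_regressed=6,
                   monthly_cost_before=10_000, monthly_cost_after=6_500)
    measured = kpis(run)
    print(measured)
    print(compare_to_claims(measured))
```

With these made-up inputs (4 modules, 20 engineer-days against a 50-day manual estimate, 6 regressions in 400 tests, and a monthly cost falling from 10,000 to 6,500), the sketch reports a 2.5x speedup, a 1.5% regression rate, and a 35% cost reduction, which is half of each headline claim. Reporting that ratio explicitly keeps the vendor figure framed as an upper bound to be tested rather than a forecast.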

Critical Analysis — Strengths, Weaknesses, and the Middle Ground

Strengths

- Scale and engineering depth: AWS’s global footprint, custom silicon, and machine resources are unmatched in reach, enabling customers to experiment with production-scale workloads.

- Integrated migration story: Bundling discovery, conversion, testing, and deployment into a single agentic workflow is a concrete, valuable step toward reducing modernization friction.

- Productized governance features: Built-in evaluators and policy modules show AWS understands enterprise blockers; these are practical levers for cautious IT organizations.

Weaknesses and risks

- Narrative vs. execution gap: AWS must translate engineering prowess into repeatable, broadly applicable transformation outcomes. Marketing numbers are persuasive, but real-world heterogeneity in legacy codebases exposes the limits of automation.

- Vendor lock-in trade-offs: Ease of migration to AWS-managed services may increase long-term switching costs; organizations must explicitly plan for portability.

- Regulatory and safety unknowns: Agentic automation introduces legal and compliance questions that vary by jurisdiction—especially in regulated industries. Enterprise adoption will be incremental and governance-driven.

The middle ground

AWS’s push is neither a silver bullet nor empty theater. For many organizations, agentic automation will speed low-risk modernization tasks (dependency mapping, routine schema conversion, CI/CD generation). For highly business-critical systems or complex, bespoke codebases, the right model is still human+agent: automation that accelerates engineers but retains human verification and staged rollout.

Conclusion

AWS’s re:Invent announcements represent an aggressive, coherent strategy to turn the promise of agentic AI into enterprise-grade outcomes: migrate Windows estates, modernize databases, and run long-lived agents that can shoulder routine, error-prone tasks. The company is pairing software (AgentCore, Nova Forge, Bedrock) with hardware (Trainium3/UltraServer and NVLink roadmap) to make a vertically integrated value proposition that is both powerful and commercially attractive. Yet the uphill battle is real. Vendor performance claims require validation; governance, portability, and regulatory compliance are mission-critical; and the human and political dimensions of moving off entrenched Windows and SQL Server estates cannot be automated away. The sensible path for CIOs is pragmatic: pilot with clear KPIs, insist on governance and portability, and treat vendor claims as hypotheses to be verified in realistic environments. If AWS’s agents deliver even a portion of their promises, they will meaningfully accelerate enterprise modernization—but success will depend as much on operational discipline and governance as on the underlying technology.