Glean’s move from “Google for enterprise” to the invisible intelligence layer under every workplace AI is not a product tweak — it’s a strategic bet that could rewrite how companies adopt, govern, and scale generative AI across the business.

Background: how we got here and why the layer matters

Enterprise AI adoption today looks like a fight for the user interface. Microsoft has folded Copilot into Microsoft 365 and Windows; Google is pushing Gemini into Workspace; OpenAI, Anthropic, and other model vendors are courting enterprises directly; and almost every SaaS vendor has a built-in assistant. Those moves create a crowded surface of AI: chat windows, sidebars, embedded assistants. But winning the surface does not automatically solve the deeper problem enterprises face: how to make models work safely and usefully with live, permissioned company data and business processes.

Glean started in 2019 as an enterprise search product with a simple promise: help people find the right information across Slack, Google Drive, Confluence, Salesforce, and dozens of other tools. That foundational work (indexing, cross‑tool mapping of people and documents, mirroring permissions) has quietly become a competitive asset. Because Glean already understands where information lives, who can see it, and how pieces of work connect, the company argues it is uniquely placed to be the intelligence layer that sits between generic LLMs and the company’s governed knowledge graph. Glean’s June 10, 2025 Series F and subsequent product announcements accelerated that thesis into a commercial strategy.

Why does that matter? Because LLMs are powerful pattern‑matching engines but are not natively tied to the constantly changing, access‑restricted realities inside an enterprise. The difference between a model that can write an answer and a model that can act correctly within corporate boundaries is the intelligence plumbing: connectors, permissioned retrieval, reliable grounding, action orchestration, audit trails. That’s the layer Glean is building.

The three pillars of an enterprise intelligence layer

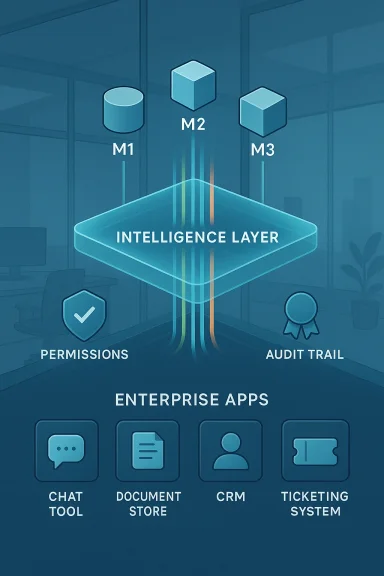

Glean frames its platform around three structural pillars. Each addresses a recognized failure mode when enterprises try to bolt LLMs onto their systems.

1) Model access and abstraction: avoid lock‑in, pick the right model for the job

- What it is: a model switchboard and orchestration fabric that lets enterprises route queries and agent steps to different LLMs (proprietary or open‑source) based on task, cost, latency, or compliance needs.

- Why it matters: firms do not want to be trapped in one lab’s ecosystem; they want to choose the best model for a writing task, a code task, a summarization task, or an action that must be auditable.

- How Glean describes it: a platform that supports “LLM options” and open agent interoperability so customers can combine or swap models without rebuilding their data connectors.
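The routing idea in this first pillar can be illustrated with a small sketch. It is a minimal Python example under stated assumptions, not a description of Glean’s implementation: the model names, prices, latency figures, and the `route` function are all hypothetical, and the selection rule (cheapest model that satisfies the task, latency, and audit constraints) is just one plausible policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str                  # hypothetical model identifier
    cost_per_1k_tokens: float  # illustrative price, not a real quote
    p95_latency_ms: int        # illustrative latency figure
    tasks: frozenset           # task types this model is approved for
    auditable: bool            # whether calls are logged for audit


# Hypothetical catalog; a real deployment would load this from configuration.
CATALOG = [
    ModelOption("vendor-a-large", 0.030, 2500,
                frozenset({"writing", "code", "summarize"}), True),
    ModelOption("vendor-b-small", 0.002, 400,
                frozenset({"summarize", "writing"}), False),
    ModelOption("open-model-onprem", 0.005, 900,
                frozenset({"summarize", "code"}), True),
]


def route(task: str, max_latency_ms: int, require_audit: bool = False) -> ModelOption:
    """Return the cheapest catalog entry that satisfies the task and constraints."""
    candidates = [
        m for m in CATALOG
        if task in m.tasks
        and m.p95_latency_ms <= max_latency_ms
        and (m.auditable or not require_audit)
    ]
    if not candidates:
        raise LookupError(f"no model satisfies task={task!r} under the given constraints")
    return min(candidates, key=lambda m: m.cost_per_1k_tokens)


if __name__ == "__main__":
    print(route("summarize", max_latency_ms=1000).name)
    print(route("summarize", max_latency_ms=1000, require_audit=True).name)
```

Because callers ask for a task and constraints rather than a specific vendor, swapping or adding a model only changes the catalog, which is the property the "without rebuilding their data connectors" claim depends on.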

2) Deep system connectors — intelligence must be able to act

- What it is: first‑class integrations into systems like Slack, Jira, Confluence, Google Drive, Salesforce and many others, coupled with the ability for agents to perform actions where appropriate (e.g., open tickets, suggest sales updates, embed answers in CRM).

- Why it matters: generative AI stops being a novelty when it can automate multi‑step workflows — triage a customer case, fetch historical cases, propose an update, and (with the right approvals) apply it. That requires both read access and controlled write actions.

3) Governance and permissions‑aware retrieval — security, trust, and auditability

- What it is: a retrieval and governance layer that understands who is asking, what they are allowed to see, and which sources should be used to ground any generated answer. It also provides citations and built‑in verification against source documents.

- Why it matters: the two biggest enterprise risks with generative AI are (a) hallucination — confidently wrong answers; and (b) data leakage — exposing information to people or models without appropriate rights. Permissions‑aware retrieval and audit trails are the difference between a pilot and an org‑wide deployment.
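To make permissions‑aware retrieval concrete, here is a minimal Python sketch. It assumes a toy in‑memory index whose documents carry group ACLs mirrored from their source systems; the `Document` fields, the sample data, and the keyword matching are all hypothetical stand‑ins for a real index and ranking system. The point it shows is ordering: filter by entitlement first, then search, and return a citation with every snippet.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    source: str
    text: str
    allowed_groups: frozenset  # assumed to be mirrored from the source system's ACL


# Hypothetical sample index.
INDEX = [
    Document("conf-12", "confluence", "Q3 roadmap: ship offline mode",
             frozenset({"product", "eng"})),
    Document("slack-88", "slack", "roadmap risk: offline mode slipping",
             frozenset({"eng"})),
    Document("wiki-3", "confluence", "holiday schedule for all staff",
             frozenset({"everyone"})),
]


def retrieve(query: str, user_groups: set, index=INDEX):
    """Filter by permission first, then match; return (text, citation) pairs."""
    # Documents the user cannot see never reach matching or the model prompt.
    visible = [d for d in index if d.allowed_groups & user_groups]
    terms = query.lower().split()
    hits = [d for d in visible if any(t in d.text.lower() for t in terms)]
    return [(d.text, f"{d.source}:{d.doc_id}") for d in hits]


if __name__ == "__main__":
    for text, citation in retrieve("roadmap", {"product"}):
        print(f"{text}  [{citation}]")
```

Filtering before matching means restricted content never enters the prompt, so the model cannot leak what it was never shown, and the citation string gives reviewers a pointer back to the source document.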

Market validation: funding, ARR signals, and investor thesis

Glean’s June 10, 2025 Series F ($150 million at a $7.2 billion valuation) is the clearest external signal investors believe the middleware/intelligence layer thesis has commercial legs. The round was led by Wellington Management and included a long list of enterprise‑software investors, reinforcing that the market sees durable enterprise demand for trusted AI infrastructure. Glean told the market it had crossed $100 million ARR before the Series F, and later public reporting from the company suggests continued ARR growth.

Why is this important? Two reasons:

- A high valuation backed by real ARR indicates the market is rewarding a product that converts beyond experimental pilots into repeatable revenue.

- The investor mix — growth and enterprise funds — suggests buyers and those who advise them see strong demand from large customers who prioritize security, governance, and cross‑tool interoperability.

How the intelligence layer affects real‑world deployments

For IT leaders, the promise of generative AI is clear (faster research, automated routine tasks, more leveraged human effort), but the adoptions that matter require operational safety.

- Fine‑grained answers, not blasted leaks: when an employee queries “What is the product roadmap?” the system must assemble a synthesized answer from Confluence, Slack threads, and Jira tickets only if the employee has rights to all those sources. A single misstep can leak confidential roadmaps or customer details. Glean’s permission mirroring and live fetch behaviors address that exact use case.

- Auditability and citations: enterprise adoption demands that generated outputs be traceable to source documents. If a model recommends a price change or a code‑release plan, auditors will want to see the source facts. Glean’s platform emphasizes referenceable answers and citations as part of its grounding strategy.

- Agents that act, with human‑in‑the‑loop controls: automating actions (e.g., closing stale tickets) requires workflows that propose actions, get approvals, and then execute. Glean’s agent framework includes action‑level RBAC and configurable human review gates so organizations can scale agent actions without scaling risk.
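The propose, approve, execute pattern described above can be sketched in a few lines of Python. This is an illustrative example only: the `POLICY` table, role names, and `execute` function are hypothetical and do not reflect Glean’s agent framework. It shows action‑level role checks, a human review gate for sensitive actions, and an audit log entry for every decision.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical policy: which roles may trigger each action, and whether
# a human must approve before the action runs.
POLICY = {
    "close_ticket": {"roles": {"support_lead", "support_agent"}, "needs_review": True},
    "add_comment": {"roles": {"support_lead", "support_agent"}, "needs_review": False},
}


@dataclass
class ProposedAction:
    action: str
    target: str
    requested_by_role: str
    approved_by: Optional[str] = None  # set once a human reviewer signs off


def execute(proposal: ProposedAction, audit_log: list) -> str:
    """Check role and review requirements, record the decision, return the status."""
    rule = POLICY.get(proposal.action)
    if rule is None or proposal.requested_by_role not in rule["roles"]:
        status = "denied"
    elif rule["needs_review"] and proposal.approved_by is None:
        status = "pending_review"
    else:
        status = "executed"  # a real system would call the connector here
    audit_log.append((proposal.action, proposal.target, status, proposal.approved_by))
    return status


if __name__ == "__main__":
    log = []
    print(execute(ProposedAction("close_ticket", "T-1", "support_agent"), log))
    print(execute(ProposedAction("close_ticket", "T-1", "support_agent",
                                 approved_by="lead@example.com"), log))
```

Every call is logged regardless of outcome, so denied and pending requests are as traceable as executed ones.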

Strengths: what Glean brings to the enterprise table

- Contextual depth born from search: Glean’s historical focus on enterprise search forced the company to solve mapping problems early (identity resolution, cross‑tool indexing, permission modeling), and those capabilities are now core to delivering safe generative experiences. That accumulated engineering investment is a durable advantage.

- Model neutrality as a commercial hedge: supporting multiple LLMs lets customers pick and evolve models over time. This makes Glean attractive to enterprises with multi‑vendor risk profiles or specific compliance constraints.

- Actionable connectors, not just read‑only integrations: the ability to surface cross‑tool context in‑place (embedded panels in Service Cloud, for example) and to run controlled write actions raises the platform’s practical value. Enterprises pay for automation that reduces manual toil, not just for prettier results in search.

- Capital efficiency vs. compute‑heavy labs: unlike frontier model providers that burn capital on training and massive compute, Glean sells enterprise software and services built on top of models. That business model can scale more profitably if product‑market fit persists. The June 2025 raise provided a war chest to expand product and go‑to‑market.

Risks and open questions that enterprise buyers and execs should weigh

No strategy is risk‑free. Here are the central uncertainties that will determine whether Glean’s layer is a long‑term winner or a well‑funded detour.

1) Platform encroachment from the hyperscalers

Microsoft and Google control massive endpoints in enterprise workflows. If they successfully couple strong governance with compelling cross‑tool connectors (including third‑party embedding), the need for a neutral layer could be reduced for customers already committed to a single vendor ecosystem. The question for Glean: can neutrality and heterogeneous integrations remain more valuable than the convenience of a single‑vendor stack? Many customers will decide based on procurement complexity, existing cloud contracts, and how well each vendor proves governance in practice.

2) The operational cost and complexity of being the “router”

Glean’s model‑agnostic approach requires ongoing engineering work: integrating new models, maintaining compatibility, benchmarking performance/cost tradeoffs, and ensuring consistent hallucination controls across vendors. The company must also manage emerging licensing terms and data privacy constraints tied to each model provider. That operational overhead scales with platform adoption and could erode margins if not automated thoughtfully.

3) The tension between openness and control

Enterprises want choice; compliance teams want control. Supporting both simultaneously means building fine‑grained policy and telemetry systems that are easy to understand and operate. If policy complexity becomes the hidden cost of flexibility, CIOs may prefer fewer moving parts in exchange for strong, opinionated governance. Glean’s challenge is to make orchestration and policy simple enough for security teams to adopt.

4) Measuring business outcomes beyond demos

Early AI pilots often produce impressive demos but weak ROI when scaled. Glean’s messaging anchors on agent actions and ARR growth, and the company reports strong traction in agent usage metrics, but large customers will still ask for hard KPIs: time saved, error rate reduction, compliance incident reduction, and cost offsets versus professional services. The company will need to keep building prescriptive playbooks and measurable outcomes to convert pilots into rollouts.

Competitive landscape and where Glean fits

- Hyperscalers (Microsoft, Google, AWS): They own platforms and endpoints that are deeply embedded in enterprise workflows. Their advantage is tight integration and a single commercial stack, often bundled with existing licensing. Their weakness in many scenarios is vendor lock‑in and limited cross‑tool interoperability when customers use mixed stacks.

- Model labs (OpenAI, Anthropic, Cohere): They supply the core models enterprises use. Their strategic interest is selling models and APIs; they are less focused on deep, enterprise permission modeling across hundreds of SaaS tools. Firms like Glean can sit between models and customers, delivering the permissions and connectors model vendors typically do not provide.

- Point solutions and vertical specialists: Many vendors focus on a single application (e.g., sales‑specific copilots, HR assistants). They can win by being domain‑specialized, but they cannot replace a horizontal intelligence layer that spans the whole company.

Practical guidance for IT leaders evaluating an intelligence layer

If your org is wrestling with how to operationalize generative AI safely, consider this pragmatic checklist:

- Define the trust boundary. Map which data must never leave systems, what can be exposed to models under strict controls, and what can be used for test pilots.

- Inventory connectors and entitlements. Understand which systems the intelligence layer must integrate with and whether it can mirror source‑level permissions (e.g., record‑level Salesforce access).

- Decide your model policy. Choose whether you will standardize on one model vendor for simplicity or require flexibility for best‑of‑breed functionality and cost control.

- Insist on citations and audit trails. Any candidate platform should produce answers that can be traced back to source documents and include human review options for write actions.

- Pilot with measurable outcomes. Start with one function (support triage, sales pipeline hygiene, compliance summarization) with clear KPIs for speed, error reductions, and dollars saved.
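For the last checklist item, the KPI arithmetic is simple enough to write down. The sketch below is a hypothetical Python example for a support‑triage pilot: the `PilotPeriod` fields, the sample numbers, and the loaded hourly cost are all invented for illustration, and a real evaluation would also need to control for ticket mix and seasonality.

```python
from dataclasses import dataclass


@dataclass
class PilotPeriod:
    tickets: int
    total_handle_minutes: float
    errors: int


def pilot_kpis(baseline: PilotPeriod, pilot: PilotPeriod, loaded_cost_per_hour: float) -> dict:
    """Compare a pilot period against a baseline on speed, errors, and dollars."""
    base_avg = baseline.total_handle_minutes / baseline.tickets
    pilot_avg = pilot.total_handle_minutes / pilot.tickets
    minutes_saved_per_ticket = base_avg - pilot_avg
    error_rate_reduction = baseline.errors / baseline.tickets - pilot.errors / pilot.tickets
    # Dollars saved = time saved across the pilot's ticket volume, priced at labor cost.
    dollars_saved = minutes_saved_per_ticket * pilot.tickets / 60 * loaded_cost_per_hour
    return {
        "minutes_saved_per_ticket": minutes_saved_per_ticket,
        "error_rate_reduction": error_rate_reduction,
        "dollars_saved": dollars_saved,
    }


if __name__ == "__main__":
    # Invented figures: 12 min/ticket before, 9 min/ticket during the pilot.
    before = PilotPeriod(tickets=1000, total_handle_minutes=12000, errors=50)
    during = PilotPeriod(tickets=1000, total_handle_minutes=9000, errors=30)
    print(pilot_kpis(before, during, loaded_cost_per_hour=60.0))
```

Agreeing on these formulas and the baseline period before the pilot starts keeps the later rollout decision from turning into an argument about definitions.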

Why neutrality is a defensible strategic bet — and where it can fail

Neutrality buys customers flexibility. Corporate software estates are heterogeneous: HR systems from one vendor, CRM from another, bespoke code in Git repositories, contracts in a document system. A neutral intelligence layer that understands all of those contexts has a network effect: knowledge about people, documents, and workflows compounds as more sources are connected.

But neutrality is costly. It requires relentless engineering across connectors, robust policy engines, orchestration systems, and a commercial model that aligns with customers who might otherwise accept a bundled solution for convenience. The vendor that solves the “last 10%” of governance and makes the layer operationally frictionless will win enterprise budgets. Whether Glean can maintain velocity against tech giants that can subsidize integration work through other product lines is the industry’s open question.

Verdict: an essential piece of infrastructure if they can operationalize it

Glean’s strategy reads like a classic enterprise software playbook: identify a foundational operational problem (finding and understanding enterprise context), build a durable engineering solution (connectors, permissions, indexing), and then layer new functionality (agents, model abstraction, governance) on top as the market evolves. The company’s funding, ARR trajectory, and product rollouts provide credible evidence that the market values this approach.

But the road ahead is conditional. Winning the intelligence‑layer category requires more than good engineering; it demands frictionless onboarding, enterprise‑grade security, easily demonstrable ROI, and a political narrative that convinces procurement and platform teams a neutral layer is worth the added complexity. If Glean, or any layer vendor, can make governance, model routing, and connector maintenance nearly invisible to customers while delivering measurable outcomes, they will be an indispensable piece of enterprise AI infrastructure. If not, hyperscalers’ convenience and single‑stack economics will be a formidable force.

What to watch next

- Product adoption metrics: look for published customer case studies with hard ROI figures (time saved, cost avoided, compliance incidents prevented). Those will be the clearest signals of enterprise impact.

- Hyperscaler responses: watch how Microsoft, Google, and others extend their governance and cross‑tool connectors — specifically whether they open enough interoperability to keep neutral players relevant.

- Model licensing and data‑use terms: changes in model vendors’ enterprise licensing (e.g., stricter data‑retention or usage clauses) will affect how third‑party intelligence layers operate and sell.

- Standards and regulatory pressure: as governments and regulators focus on generative AI, expect compliance requirements that favor platforms offering strong auditability and permissioning controls. Vendors that bake those into the platform will have a commercial edge.

Conclusion

The race for the chatbot has created visible winners and lots of brand noise, but the harder battle is underneath: reliably connecting powerful, generic LLMs to the messy, permissioned, and changeable world of corporate knowledge and workflows. Glean’s engineering history (enterprise search, permission mirroring, and cross‑tool indexing) gives it a plausible path to build that intelligence layer. Its product bets (model abstraction, deep connectors, governance) and its June 10, 2025 funding round validate the thesis in the market.

That said, neutrality is only a winning strategy if it simplifies operations for customers and demonstrably reduces risk while delivering measurable outcomes. The contest with hyperscalers and the practical challenges of operating as a multi‑model, multi‑connector platform mean the outcomes are still undecided. For IT leaders, the right posture is pragmatic: pilot with clear KPIs, test governance controls rigorously, and treat the intelligence layer as infrastructure that must prove its value in dollars saved, incidents avoided, and processes automated, not merely as another shiny assistant on the desktop.

Source: Bitcoin world Enterprise AI’s Critical Layer: How Glean’s Ingenious Strategy Builds the Intelligence Beneath the Interface