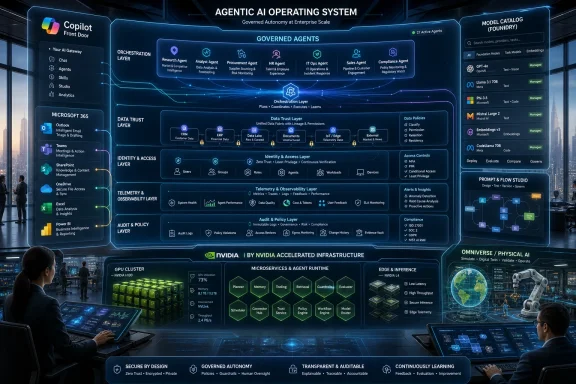

EY has built the EY.ai Agentic Platform as a global enterprise-scale agentic AI operating system. It combines Microsoft Foundry, Microsoft 365 Copilot, Fabric and Copilot Studio with NVIDIA GPUs, NIM microservices, NeMo Guardrails, NVIDIA AI Foundry and Omniverse to run governed AI agents across its business and client work. What matters is not that another large consultancy has launched another AI platform. It is that EY is treating agents less like clever chatbots and more like a new enterprise control layer. If this model works, the next fight in corporate AI will be over who owns the operating system for work itself.

Source: EY Case study: Building an enterprise-scale agentic AI OS

EY Is Trying to Turn AI From a Tool Into the Firm’s Workload Scheduler

The phrase “agentic AI operating system” sounds like marketing until you strip it down to its practical claim. EY is saying that an enterprise does not scale AI by giving every team a model, every department a bot, and every employee a prompt library. It scales AI by building a governed substrate where models, data, permissions, tools, workflows, audit trails and user experiences are managed as one system.

That is a very different argument from the first wave of generative AI adoption. The early enterprise story was mostly about productivity: summarize this meeting, draft that email, search this document, generate that slide. EY’s case study describes a firm that has already moved past that phase, with EY.ai EYQ deployed to more than 300,000 professionals and Microsoft 365 Copilot rolled into real workflows across Tax, Assurance, Consulting and internal functions.

The next bottleneck is not whether people can access generative AI. It is whether thousands of agents can act inside a global professional-services firm without creating chaos. EY’s answer is to put intelligence, orchestration, and data trust into a common architecture rather than letting agents multiply as disconnected departmental experiments.

For WindowsForum readers, that distinction matters. Microsoft has spent the past two years pushing Copilot from a sidebar into the center of Microsoft 365, Windows, security tooling, developer workflows and business applications. EY’s design shows what that pitch looks like when a global enterprise tries to turn it into an operating model rather than a licensing line item.

The First GenAI Win Created the Next Enterprise Problem

EY’s starting point is familiar to anyone who has watched large organizations adopt cloud, collaboration platforms or automation suites. A successful pilot becomes a successful rollout, and then the rollout creates a governance problem bigger than the original technical one. Once EY had secure chat, domain assistants, prompt tooling and AI-enabled business workflows running at scale, the firm faced the problem of fragmentation.

Fragmentation is the hidden tax of enterprise AI. One team tunes a model for tax research. Another builds an assistant for audit evidence. Another connects a bot to internal knowledge stores. Another experiments with client-facing agents. Each individual effort can be rational, but the aggregate result is duplicated spend, uneven controls, inconsistent telemetry and a brittle mess of bespoke integrations.

EY’s case study frames this as a strategic risk, not an IT hygiene issue. The firm has tied AI to growth ambitions, client transformation work and its own internal operating model. In that context, the danger is not simply that teams build too many bots. It is that the firm’s AI estate becomes unmanageable just as clients are asking EY to help them manage theirs.

That is why the operating-system metaphor is useful, even if it is not literal. EY is not replacing Windows, Azure, Microsoft 365, NVIDIA’s stack or its own EY Fabric. It is trying to impose a higher-level order across them: a layer where models can be selected, agents can be composed, data can be governed, and autonomous workflows can be run without every project becoming its own mini-platform.

Microsoft Gets the Front Door, NVIDIA Gets the Engine Room

The architecture EY describes is bluntly pragmatic. Microsoft provides much of the enterprise-facing orchestration layer: Foundry and its model catalog, Copilot as the user-facing entry point, Microsoft Fabric for data unification, and Copilot Studio for building enterprise agents. NVIDIA provides the acceleration and AI infrastructure side: GPUs, NIM microservices, NeMo Guardrails, training and distillation tooling, and Omniverse for simulation and physical AI scenarios.

That split maps neatly onto where each vendor wants to sit in the enterprise stack. Microsoft wants Copilot to be the place where work happens, especially inside email, documents, meetings, Teams, SharePoint and business applications. NVIDIA wants to be the accelerated computing and AI factory layer underneath the modern enterprise, not merely the company that sells the GPUs everyone needs.

EY’s role is to stitch those worlds into something clients can actually buy, govern and operate. That is the consultancy value proposition in miniature: the hyperscalers and silicon vendors provide the platforms; EY turns them into industry workflows, controls, templates, training, and change management. The case study is as much about ecosystem orchestration as it is about AI architecture.

The most interesting move is that EY is not presenting this as a Microsoft-only play. The platform is described as extensible, portable and multi-technology, operating across Microsoft and NVIDIA environments for internal and client applications. That language is doing a lot of work. It reassures clients that EY is not merely reselling one vendor’s roadmap, while also acknowledging that the enterprise AI market is too unsettled for any serious integrator to bet everything on a single model provider or cloud abstraction.

Foundry Turns the Model Catalog Into Enterprise Plumbing

Microsoft Foundry sits at the heart of EY’s orchestration story because the model layer has become too complicated for casual selection. Enterprises now face a sprawling menu of frontier models, small language models, open-weight models, multimodal models, domain models, safety components and inference options. Choosing a model is no longer a developer preference; it is a governance, cost, latency, data-residency and risk decision.

EY’s intelligence layer is designed around a centralized way to access, tune, govern and deploy foundation models and agentic reasoning capabilities. That includes model catalogs, tuning and distillation pipelines, guardrails, multimodal intelligence, planning frameworks and tool-use frameworks. In plainer English: EY wants one place to decide what brains its agents are allowed to use and under what conditions.

This is where the operating-system analogy becomes more concrete. Traditional operating systems abstract hardware resources and expose controlled APIs to applications. EY’s platform is trying to abstract AI resources and expose governed capabilities to agents and workflows. Instead of every team picking its own model and improvising its own controls, the platform makes model choice part of the enterprise architecture.
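To make “governed capabilities” concrete, here is a minimal Python sketch of a central model catalog that agents must go through to obtain a model. It assumes, purely for illustration, that each catalog entry carries an approval flag, the data classifications it is cleared for and the regions it may run in. `CatalogEntry`, `ModelCatalog` and the model names are hypothetical; this is not the Microsoft Foundry API and not a description of EY’s platform.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class CatalogEntry:
    # Hypothetical catalog record; field names are illustrative, not Foundry's schema.
    name: str
    approved: bool
    cleared_data: frozenset  # data classifications this model may process
    regions: frozenset       # regions where this model may run


@dataclass
class ModelCatalog:
    entries: dict = field(default_factory=dict)

    def register(self, entry: CatalogEntry) -> None:
        self.entries[entry.name] = entry

    def resolve(self, requested: str, data_class: str, region: str) -> CatalogEntry:
        """Return the requested model only if policy allows it for this task."""
        entry = self.entries.get(requested)
        if entry is None:
            raise LookupError(f"{requested} is not in the catalog")
        if not entry.approved:
            raise PermissionError(f"{requested} is not approved")
        if data_class not in entry.cleared_data:
            raise PermissionError(f"{requested} is not cleared for {data_class} data")
        if region not in entry.regions:
            raise PermissionError(f"{requested} may not run in {region}")
        return entry


catalog = ModelCatalog()
catalog.register(CatalogEntry("general-llm", True,
                              frozenset({"public", "internal"}),
                              frozenset({"eu", "us"})))
catalog.register(CatalogEntry("tax-slm", True,
                              frozenset({"public", "internal", "client-confidential"}),
                              frozenset({"eu"})))

print(catalog.resolve("tax-slm", "client-confidential", "eu").name)  # tax-slm
```

The check happens at resolution time, so an agent cannot reach a model the catalog has not cleared for that data class and region. A real platform would also need evaluation results, cost data and ownership behind the simple `approved` flag.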

That does not eliminate risk. A centralized catalog can become a bottleneck, an exercise in compliance theater, or a source of false confidence if model evaluation is shallow. But the alternative is worse: thousands of autonomous or semi-autonomous agents making opaque calls to inconsistent models against inconsistently governed data. For a firm in assurance, tax and consulting, that would be asking for reputational pain.

Copilot Is the User Interface, Not the Whole System

Microsoft would like many customers to think of Copilot as the face of AI at work, and EY’s architecture broadly accepts that premise. Agents need to appear inside the tools employees already use: email, documents, meetings and collaboration spaces. If agentic workflows live in a separate portal, they become one more destination employees learn to avoid.

But EY’s design also makes clear that Copilot alone is not the architecture. Copilot is the front door, not the building. Behind it sit identity, lifecycle management, agent creation tools, multi-agent coordination, data governance, telemetry, model selection, and domain-specific intelligence. That distinction is crucial for CIOs evaluating Microsoft’s AI stack.

A user can experience AI as a button in Outlook or Teams. An enterprise has to experience it as a managed runtime with security boundaries, approvals, logs, escalation paths, cost controls and failure modes. EY is effectively saying that the difference between a Copilot deployment and an agentic operating system is everything behind the pleasant chat interface.
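One way to picture the “managed runtime” behind the chat interface is a gate that every agent action must pass through: it logs the action, charges it against a cost budget and escalates high-consequence actions to a human instead of executing them. The Python sketch below is hypothetical. `ActionGate`, the `high_consequence` flag and the budget units are assumptions for illustration, not features of Copilot or of EY’s platform.

```python
from dataclasses import dataclass, field


@dataclass
class Action:
    # Hypothetical action descriptor.
    name: str
    cost: float             # estimated cost in arbitrary budget units
    high_consequence: bool  # e.g. sends advice to a client or changes a record


@dataclass
class ActionGate:
    # Hypothetical runtime gate: budget check, human escalation, audit log.
    budget: float
    spent: float = 0.0
    log: list = field(default_factory=list)

    def submit(self, action: Action) -> str:
        """Decide what happens to an action and record the decision."""
        if self.spent + action.cost > self.budget:
            outcome = "rejected: budget exceeded"
        elif action.high_consequence:
            # Not executed and not charged; queued for a human reviewer instead.
            outcome = "escalated: human approval required"
        else:
            self.spent += action.cost
            outcome = "executed"
        self.log.append((action.name, outcome))
        return outcome


gate = ActionGate(budget=10.0)
print(gate.submit(Action("summarize_meeting", 2.0, False)))      # executed
print(gate.submit(Action("send_client_memo", 1.0, True)))        # escalated: human approval required
print(gate.submit(Action("bulk_reconcile_ledger", 9.0, False)))  # rejected: budget exceeded
```

Every outcome, including rejections and escalations, lands in the log, which is the point: the administrative substrate records what the agent tried to do, not only what it did.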

This should sound familiar to Windows administrators. The desktop has always hidden enormous complexity behind icons and menus. Identity, group policy, endpoint management, app deployment, logging, patching and data protection determine whether a user-friendly environment can survive at scale. Agentic AI is developing the same split between the visible interface and the administrative substrate beneath it.

The Real Product Is Governed Autonomy

The word autonomous appears often in agentic AI discussions, but most enterprises do not actually want unconstrained autonomy. They want delegated action within bounded environments. An agent may be allowed to summarize records, draft a response, prepare evidence, recommend a workflow, or trigger a process, but each of those actions has to respect permissions, data lineage, policy and business context.

That is why EY’s third layer — data, trust and governance — is not a supporting character. It is the system’s license to operate. The EY.ai Data Marketplace is described as the trusted foundation connecting AI-ready data to model performance, agent effectiveness and business value. The phrase may be polished, but the underlying point is sharp: agents are only useful if they can see the right data, and only safe if they cannot see the wrong data.
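A common way to implement delegated action within bounded environments is to let an agent acting on a user’s behalf do only what both the agent and the user are allowed to do. The minimal Python sketch below assumes permissions can be modeled as (action, data scope) pairs; the function names, agents and scopes are hypothetical, and the case study does not say EY uses this exact scheme.

```python
# Permissions modeled as (action, data_scope) pairs: an illustrative assumption.
Permission = tuple[str, str]


def effective_permissions(agent_grant: set[Permission],
                          user_grant: set[Permission]) -> set[Permission]:
    """An agent acting on behalf of a user gets the intersection of both grants."""
    return agent_grant & user_grant


def is_allowed(action: str, scope: str,
               agent_grant: set[Permission], user_grant: set[Permission]) -> bool:
    return (action, scope) in effective_permissions(agent_grant, user_grant)


# Hypothetical grants for an audit agent and the person delegating to it.
audit_agent = {("read", "audit-evidence"), ("draft", "audit-memo"), ("read", "tax-returns")}
senior_auditor = {("read", "audit-evidence"), ("draft", "audit-memo"), ("approve", "audit-memo")}

print(is_allowed("read", "audit-evidence", audit_agent, senior_auditor))  # True
print(is_allowed("read", "tax-returns", audit_agent, senior_auditor))     # False: user lacks it
print(is_allowed("approve", "audit-memo", audit_agent, senior_auditor))   # False: agent lacks it
```

The intersection rule means an agent can never widen a user’s access, and a user can never push an agent beyond what it was certified to do.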

This is where many enterprise AI pilots stall. A demo against sanitized data can look magical. A production workflow that has to respect client confidentiality, regional regulation, role-based permissions, data lineage, retention rules and audit requirements is far less magical. It is infrastructure work, and infrastructure work is slow.

EY’s professional-services context raises the stakes. A bad retail chatbot might annoy customers. A bad audit, tax or compliance agent could contaminate advice, expose sensitive data or undermine client trust. In that setting, Responsible AI is not a slide at the end of the presentation; it has to be embedded into how agents are built, discovered, deployed and monitored.

Agent Marketplaces Are Coming for Internal Software Portfolios

One of the quieter but more consequential claims in the case study is that EY.ai EYQ evolves into an enterprise marketplace where agents are created, discovered and consumed. That is a familiar platform move. Once an organization has enough reusable components, it needs a place to find them, rate them, govern them and prevent everyone from rebuilding the same thing.

Internal agent marketplaces could become the next version of corporate app stores. Instead of installing a mobile app or requesting access to a SaaS tool, employees may subscribe to an approved agent that knows how to perform a specific business process. Some agents will be general assistants. Others will be narrow, domain-specific workers that handle particular tasks inside a regulated workflow.

That model has obvious appeal. It supports reuse, standardization and faster diffusion of successful automation. It also creates a new administrative burden. Someone has to certify which agents are safe, which models they use, which data sources they touch, how they are versioned, what telemetry they emit, and when they should be retired.
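The certification questions raised here map naturally onto a manifest that each marketplace agent must carry before it is listed. The Python sketch below is hypothetical: the `AgentManifest` fields and the rules in `certification_gaps` are illustrative assumptions, not a schema from EY, Copilot Studio or Foundry.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class AgentManifest:
    # Hypothetical manifest fields mirroring the questions a marketplace must answer.
    name: str
    version: str
    owner: str
    models: list[str] = field(default_factory=list)
    data_sources: list[str] = field(default_factory=list)
    telemetry_endpoint: Optional[str] = None
    retire_after: Optional[date] = None
    safety_review_passed: bool = False


def certification_gaps(m: AgentManifest, approved_models: set[str],
                       today: date) -> list[str]:
    """Return the reasons an agent cannot be listed; an empty list means certifiable."""
    gaps = []
    if not m.safety_review_passed:
        gaps.append("no safety review")
    unapproved = [model for model in m.models if model not in approved_models]
    if not m.models:
        gaps.append("no models declared")
    elif unapproved:
        gaps.append("unapproved models: " + ", ".join(unapproved))
    if not m.data_sources:
        gaps.append("data sources not declared")
    if m.telemetry_endpoint is None:
        gaps.append("no telemetry")
    if m.retire_after is None or m.retire_after <= today:
        gaps.append("missing or past retirement date")
    return gaps


draft = AgentManifest("evidence-summarizer", "0.3.1", "assurance-team",
                      models=["general-llm", "experimental-llm"],
                      data_sources=["audit-evidence"])
print(certification_gaps(draft, {"general-llm"}, date(2025, 1, 1)))
# ['no safety review', 'unapproved models: experimental-llm', 'no telemetry', 'missing or past retirement date']
```

Returning a list of gaps rather than a single pass/fail flag gives agent owners an actionable to-do list, and a mandatory retirement date forces the “when should it be retired” question to be answered up front.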

The marketplace idea also changes how employees experience software. In the traditional enterprise, an application is a destination. In an agentic enterprise, a capability may be invoked through Copilot, embedded in a meeting, triggered by a document, or coordinated by another agent. The user may not care which application did the work. IT absolutely will.

The 50,000-Agent Claim Is Impressive — and a Warning

EY says its platform accelerated experimentation and development of more than 50,000 agents in nine months. That number is impressive, but it should also make every enterprise architect sit up straight. If one professional-services firm can generate agents at that pace, the question is not whether agent sprawl will happen. It is whether organizations will notice before it becomes their next shadow IT crisis.

The history of enterprise technology repeats itself here. Macros, Access databases, SharePoint workflows, low-code apps, SaaS subscriptions and automation scripts all began as ways for business users to solve real problems faster than central IT could respond. They also created governance debt. Agents are potentially more powerful than all of those because they can reason, call tools, handle unstructured inputs and coordinate with other agents.

EY’s answer is to industrialize the development pattern before the sprawl becomes unmanageable. Consistent multi-agent patterns, telemetry-driven automation and reusable intelligence across domains are attempts to make agents look less like one-off experiments and more like governed software assets. That is the right instinct.

Still, the scale raises hard questions that the case study understandably does not linger on. How many of those 50,000 agents are production-grade? How many are prototypes? How are they evaluated? How often are they used? How many duplicate one another? The agent count is a signal of momentum, but the governance model will determine whether it becomes a durable advantage or a maintenance backlog.
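Questions like these are what an inventory report can begin to answer. The Python sketch below is a hypothetical triage over an agent registry: it counts agents by lifecycle stage, flags those unused for more than a set number of days, and groups candidate duplicates that share the same model, data sources and tools. The record format, the 90-day threshold and the fingerprint rule are illustrative assumptions; the case study does not describe how EY measures any of this.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class AgentRecord:
    # Hypothetical registry row.
    agent_id: str
    stage: str  # e.g. "prototype" or "production"
    last_used: date
    model: str
    data_sources: frozenset
    tools: frozenset


def triage(agents: list[AgentRecord], today: date, stale_days: int = 90):
    """Summarize stage counts, stale agents and candidate duplicate groups."""
    by_stage = Counter(a.stage for a in agents)
    stale = sorted(a.agent_id for a in agents
                   if (today - a.last_used).days > stale_days)
    groups = defaultdict(list)
    for a in agents:
        # Identical model + data + tools is treated as a duplicate candidate.
        groups[(a.model, a.data_sources, a.tools)].append(a.agent_id)
    duplicates = [sorted(ids) for ids in groups.values() if len(ids) > 1]
    return by_stage, stale, duplicates


fs = frozenset
agents = [
    AgentRecord("a1", "production", date(2025, 6, 29), "general-llm", fs({"kb"}), fs({"search"})),
    AgentRecord("a2", "prototype", date(2025, 1, 15), "general-llm", fs({"kb"}), fs({"search"})),
    AgentRecord("a3", "prototype", date(2025, 6, 1), "tax-slm", fs({"tax-db"}), fs({"calc"})),
]
by_stage, stale, duplicates = triage(agents, today=date(2025, 6, 30))
print(by_stage["prototype"], stale, duplicates)  # 2 ['a2'] [['a1', 'a2']]
```

Exact fingerprints only catch exact overlaps; near-duplicates would need a fuzzier comparison, which is why the output is framed as candidates for human review rather than automatic retirement.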

AI Training Is the Other Half of the Platform

EY reports that more than 80 percent of its professionals are using EY.ai EYQ and more than 80 percent have completed foundational AI training. It also points to 2 million learning hours consumed and hundreds of credentials awarded through a partnership with Hult International Business School. Those figures matter because agentic AI cannot be deployed purely as an infrastructure project.

The workforce has to learn when to trust an agent, when to challenge it, when to escalate, and when not to use it at all. That is especially true in a professional-services firm where the output is often judgment. AI can increase capacity, but it can also create a false fluency that makes weak analysis look finished.

Training also changes the politics of adoption. If workers experience agents as imposed automation, resistance is rational. If they experience agents as governed assistants that remove drudgery while preserving human accountability, adoption is easier. EY is clearly trying to frame the platform as augmentation rather than replacement.

That framing should not be accepted uncritically. Agentic systems will change staffing models, leverage ratios and career paths in consulting, audit and tax. Junior work that once trained people may be automated or compressed. The firms that adopt agents most aggressively will need to think just as hard about apprenticeship and expertise formation as they do about productivity.

Physical AI Pulls the Story Beyond Office Work

The NVIDIA side of EY’s architecture points beyond knowledge work into simulation, robotics and physical AI. Omniverse and simulation environments appear in the intelligence layer for scenarios involving physical systems. Microsoft and NVIDIA have also been tightening their integration around Foundry, Fabric, Omniverse libraries and industrial AI workloads.

That matters because the agent story is often told as if it lives only inside documents and chat windows. In reality, the same architectural pattern can extend into factories, supply chains, energy systems, logistics and industrial design. Agents reason over operational data, simulations test possible actions, and human operators remain in the loop for high-consequence decisions.

EY’s case study gestures at that broader horizon. A global consultancy does not want an AI platform only for writing emails faster. It wants a repeatable blueprint for clients in manufacturing, finance, healthcare, government and infrastructure. The inclusion of physical AI is a sign that the platform is meant to travel from the back office into the operational core of businesses.

For Microsoft, this is strategically valuable. If Fabric becomes the data unification layer, Foundry becomes the model and agent layer, and Copilot becomes the user interface, then Azure becomes a natural home for both knowledge-work agents and industrial AI systems. For NVIDIA, the same story keeps accelerated computing central as enterprise AI moves from pilots into production workloads.

Windows Shops Should Read This as a Preview of Their Own Roadmap

Most organizations will not build something as elaborate as EY’s Agentic Platform. They will, however, face the same architectural questions in miniature. Which models are approved? Where do agents run? How are they identified? Which data can they access? How are prompts, tools, connectors and actions governed? What happens when agents collaborate or conflict?

Microsoft is already building answers into the ecosystem Windows-centric organizations use every day. Copilot Studio, Microsoft 365 Copilot, Microsoft Foundry, Fabric, Entra, Purview and Defender are converging around the idea that AI agents are manageable enterprise identities and workloads. That is a profound shift from treating AI as a feature sprinkled onto existing software.

The practical consequence for sysadmins is that agent governance will become part of running the Microsoft estate. It will touch identity, conditional access, data loss prevention, endpoint security, compliance, logging and lifecycle management. The people who once had to explain why unmanaged macros were dangerous may soon be explaining why unmanaged agents are worse.

The practical consequence for developers is just as large. Building an enterprise agent will not simply mean wiring a model to a tool. It will mean operating inside approved frameworks, using sanctioned connectors, respecting data boundaries, emitting telemetry and passing evaluation gates. The agent that works in a hackathon will not automatically be the agent allowed near production data.
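To make the idea of evaluation gates concrete, here is a minimal Python sketch of a pre-production check. Everything in it is hypothetical: the framework and connector names, the `AgentManifest` fields, the `production_gate` function and the 0.85 score threshold are illustrative assumptions, not part of any EY, Microsoft or NVIDIA product. It only shows how a platform team might turn the requirements above into a repeatable check that lists every reason an agent is not yet allowed near production data.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical policy values for illustration only; these are not
# EY, Microsoft or NVIDIA settings or product identifiers.
APPROVED_FRAMEWORKS = {"copilot-studio", "foundry-agent"}
SANCTIONED_CONNECTORS = {"sharepoint", "fabric-lakehouse"}
MIN_EVAL_SCORE = 0.85


@dataclass
class AgentManifest:
    """Hypothetical description an agent team submits before promotion."""
    name: str
    framework: str
    connectors: set[str]
    data_scopes: set[str]   # data boundaries the agent asks to cross
    emits_telemetry: bool
    eval_score: float       # score from an offline evaluation suite, 0.0 to 1.0


def production_gate(agent: AgentManifest, allowed_scopes: set[str]) -> list[str]:
    """Return every reason the agent is blocked; an empty list means it may ship."""
    failures = []
    if agent.framework not in APPROVED_FRAMEWORKS:
        failures.append(f"unapproved framework: {agent.framework}")
    unsanctioned = agent.connectors - SANCTIONED_CONNECTORS
    if unsanctioned:
        failures.append(f"unsanctioned connectors: {sorted(unsanctioned)}")
    out_of_bounds = agent.data_scopes - allowed_scopes
    if out_of_bounds:
        failures.append(f"data outside boundary: {sorted(out_of_bounds)}")
    if not agent.emits_telemetry:
        failures.append("no telemetry")
    if agent.eval_score < MIN_EVAL_SCORE:
        failures.append(f"eval score {agent.eval_score:.2f} below {MIN_EVAL_SCORE}")
    return failures


if __name__ == "__main__":
    # A typical hackathon agent: works in a demo, fails every production rule.
    hackathon_bot = AgentManifest(
        name="expense-helper",
        framework="custom-script",
        connectors={"sharepoint", "personal-dropbox"},
        data_scopes={"finance:read"},
        emits_telemetry=False,
        eval_score=0.72,
    )
    for reason in production_gate(hackathon_bot, allowed_scopes={"finance:read"}):
        print("blocked:", reason)
```

Returning every failure rather than stopping at the first keeps the feedback useful for the team that built the agent. In practice, checks like these would more likely live inside a platform’s own identity, policy and evaluation tooling than in a standalone script.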

The Platform Bet Still Depends on Trust the Market Has Not Fully Earned

EY’s case study is persuasive because it understands that governance is the product. But the broader agentic AI market is still young, unstable and overpromised. Models hallucinate. Tool calls fail. Permissions are misconfigured. Evaluations remain uneven. Costs can be unpredictable. Vendor roadmaps change faster than enterprise procurement cycles.

There is also a deeper accountability problem. When a human uses software, the chain of responsibility is usually legible. When a human delegates to an agent that invokes another agent, calls tools, retrieves data, uses a tuned model and produces a recommendation, accountability becomes harder to explain. That is why auditability and lineage are not bureaucratic extras. They are the only way to make agentic work defensible.
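Lineage is easier to argue for once you see how little it takes to record. The following minimal Python sketch assumes a hypothetical `AuditLog` class and `LineageEvent` schema (not an EY, Microsoft or NVIDIA API). It logs each step of a delegation chain with a pointer to the step that caused it, so any recommendation can be walked back to the human request that started it. The actor names and task details in the example are invented for illustration.

```python
from __future__ import annotations

import uuid
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class LineageEvent:
    """One step in a delegation chain (hypothetical schema)."""
    event_id: str
    parent_id: Optional[str]   # the step that caused this one; None for the human request
    actor: str                 # e.g. "human:analyst" or "agent:research"
    action: str                # e.g. "delegate", "call_tool", "retrieve", "infer"
    detail: str


class AuditLog:
    """Append-only store of lineage events keyed by event_id."""

    def __init__(self) -> None:
        self._events: dict[str, LineageEvent] = {}

    def record(self, actor: str, action: str, detail: str,
               parent_id: Optional[str] = None) -> str:
        # Refuse orphaned steps so every event can be traced back to a root.
        if parent_id is not None and parent_id not in self._events:
            raise ValueError(f"unknown parent event: {parent_id}")
        event_id = str(uuid.uuid4())
        self._events[event_id] = LineageEvent(event_id, parent_id, actor, action, detail)
        return event_id

    def trace(self, event_id: str) -> list[LineageEvent]:
        """Follow parent links back to the originating request, root first."""
        chain = []
        current = self._events.get(event_id)
        while current is not None:
            chain.append(current)
            current = self._events.get(current.parent_id) if current.parent_id else None
        return list(reversed(chain))


if __name__ == "__main__":
    log = AuditLog()
    root = log.record("human:analyst", "delegate", "assess lease-accounting impact")
    step1 = log.record("agent:orchestrator", "delegate", "hand off to research agent", root)
    step2 = log.record("agent:research", "retrieve", "dataset lease-contracts v3", step1)
    result = log.record("agent:research", "infer", "tuned model drafts recommendation", step2)
    for event in log.trace(result):
        print(f"{event.actor:<20} {event.action:<9} {event.detail}")
```

Rejecting events whose parent is unknown is the key design choice here: it prevents agent actions that nobody can account for. A production system would also need timestamps, verified identities and tamper-evident storage, which this sketch deliberately omits.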

EY’s assurance heritage gives it a credible reason to emphasize trust. It also gives the firm a commercial incentive to define the problem in a way that requires consulting, governance frameworks and transformation services. That does not make the argument wrong. It means customers should separate the architectural lesson from the sales motion.

The lesson is that agentic AI cannot be responsibly scaled as a collection of clever demos. The sales motion is that EY, Microsoft and NVIDIA can provide the blueprint. Enterprises should study the former carefully and evaluate the latter with the same skepticism they would bring to any strategic platform commitment.

The EY Blueprint Makes Agent Sprawl the New Shadow IT

The clearest takeaway from EY’s case study is that agentic AI is moving from experimentation into systems design. The organizations that win will not necessarily be the ones with the most agents, the biggest model catalog or the flashiest Copilot demo. They will be the ones that can make autonomy governed, observable and useful.

- EY’s platform treats agents as enterprise software assets that need lifecycle management, identity, telemetry, security and governance rather than as isolated productivity bots.

- Microsoft’s role is strongest at the orchestration and user-experience layer, where Copilot, Foundry, Fabric and Copilot Studio can bring agents into everyday work.

- NVIDIA’s role is strongest in the accelerated computing, inference, guardrails, simulation and physical AI layers that make large-scale and domain-specific workloads feasible.

- The EY.ai Data Marketplace is central to the strategy because agentic systems become dangerous or useless when they lack permissioned, lineage-rich and compliant data.

- The reported development of more than 50,000 agents shows both the speed of enterprise experimentation and the scale of governance debt that can accumulate without a common platform.

- For Windows and Microsoft 365 environments, agent administration is likely to become a normal part of identity, compliance, security and endpoint operations.

Source: EY Case study: Building an enterprise-scale agentic AI OS