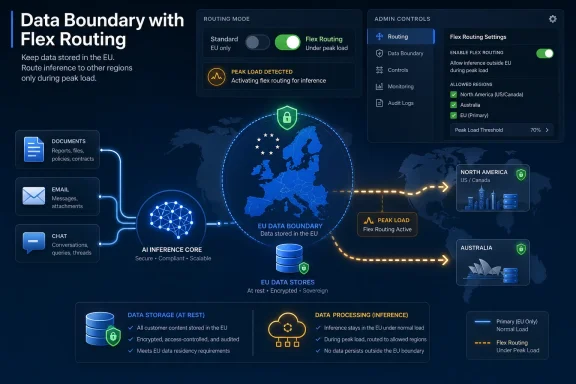

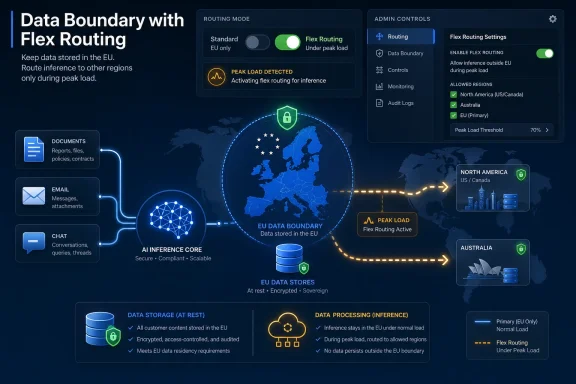

Microsoft introduced Flex Routing for Microsoft 365 Copilot in April 2026 for eligible EU and EFTA tenants, allowing large language model inferencing to occur in the United States, Canada, or Australia during peak demand while data at rest remains inside the EU Data Boundary. The feature is presented as a resiliency valve for an AI service under load. But for administrators, privacy officers, and regulated organizations, it is something more consequential: a default that can turn data residency from a policy into a runtime condition.

That is the uncomfortable lesson in the latest Copilot controversy. Microsoft is not saying it will store European customer data abroad as a matter of course, nor is it claiming that encryption vanishes at the border. The harder problem is that modern AI processing is not just storage, and the distinction between where data sleeps and where data is used has become the new compliance fault line.

The broader lesson for administrators is that AI features should be treated as data-processing changes, not UI enhancements. A new button in Outlook may be a new workflow. A new inference setting may be a new transfer path. A new Copilot connector may expose a new class of documents to prompt-time retrieval.

Microsoft’s cloud cadence was built for continuous improvement. Compliance programs were built for controlled change. AI is forcing those worlds to negotiate in public.

Organizations can make defensible choices. They can decide that existing contractual safeguards and transfer mechanisms are sufficient. They can accept the performance benefits. They can restrict the feature for sensitive users or disable it tenant-wide. They can run a phased review and document residual risk.

What they cannot credibly do is treat the matter as irrelevant. Flex Routing affects where a central processing step may occur. That makes it part of the accountability story.

The paper trail should be boring but real. Who reviewed the setting? What was the tenant configuration on the review date? Which Copilot workloads were in scope? Which categories of personal data may be processed through Copilot? What transfer mechanism was relied on for each destination? Was the data protection impact assessment updated? Were security and supplier-risk teams notified?
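One way to keep that paper trail boring but real is to record each review as structured data and let it list its own gaps. The sketch below is only an illustration: the `FlexRoutingReview` class, its field names, and the team labels are hypothetical, not part of any Microsoft tooling, and the three destinations are simply the ones named in this article.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Dict, List

# Destinations named in the article; adjust if Microsoft's list changes.
DESTINATIONS = ("United States", "Canada", "Australia")


@dataclass
class FlexRoutingReview:
    """Hypothetical record answering the paper-trail questions for one review."""
    reviewer: str
    review_date: date
    setting_allowed: bool                # tenant configuration on the review date
    workloads_in_scope: List[str]
    personal_data_categories: List[str]
    transfer_mechanisms: Dict[str, str]  # destination -> mechanism relied on
    dpia_updated: bool
    teams_notified: List[str] = field(default_factory=list)

    def open_items(self) -> List[str]:
        """List what is still missing before the review can be called complete."""
        items: List[str] = []
        if self.setting_allowed:
            # A mechanism only matters for destinations the setting makes reachable.
            for destination in DESTINATIONS:
                if not self.transfer_mechanisms.get(destination):
                    items.append(f"No transfer mechanism recorded for {destination}")
        if not self.dpia_updated:
            items.append("DPIA not updated")
        for team in ("security", "supplier-risk"):
            if team not in self.teams_notified:
                items.append(f"{team} team not notified")
        return items


review = FlexRoutingReview(
    reviewer="privacy-office",
    review_date=date(2026, 5, 4),
    setting_allowed=True,
    workloads_in_scope=["Microsoft 365 Copilot"],
    personal_data_categories=["employee correspondence"],
    transfer_mechanisms={"United States": "EU-U.S. Data Privacy Framework"},
    dpia_updated=False,
    teams_notified=["security"],
)
for item in review.open_items():
    print(item)
```

The value is less in the code than in the habit: an empty `open_items()` list on a dated record is something an auditor can read without interviewing the administrator.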

This is not bureaucratic theater. It is how organizations prove that cloud defaults did not silently become business policy. It is also how they protect administrators who are otherwise left holding the bag for decisions that belong above their pay grade.

For WindowsForum readers who live in admin centers, this is the part to underline. The toggle is easy. The decision is not.

Microsoft’s shipping velocity creates a communication problem. Customers are not just buying features; they are inheriting a moving architecture. Every new capability arrives with a configuration surface, a data-flow implication, and a trust claim. The faster Microsoft ships, the more those claims must be legible.

Flex Routing is an example of a feature that may be technically reasonable and politically clumsy at the same time. The cloud engineer sees capacity optimization. The product manager sees a smoother Copilot experience. The privacy officer sees a possible third-country transfer. The CISO sees supplier-risk drift. The administrator sees another Message Center item that might become tomorrow’s audit finding.

Microsoft cannot solve that by adding more prose to a documentation page. It needs a clearer taxonomy for AI data movement: what leaves, when it leaves, why it leaves, whether the customer opted in, and what breaks if the customer says no. The distinction between storage and processing should be front and center, not buried in the conceptual machinery of inferencing.

The company also needs to recognize that defaults are governance statements. When Microsoft sets a privacy-sensitive AI behavior to on for new tenants, it is not simply reducing friction. It is choosing the baseline risk posture for customers who may not yet have formed their own.

That may be acceptable in consumer software. In enterprise compliance, it is asking for backlash.

Copilot governance needs to become continuous. Message Center monitoring should feed compliance review. Tenant configuration should be periodically exported or screenshotted. High-impact changes should trigger documented decisions. Admin roles should be mapped to policy ownership. Procurement, legal, and security should know which Microsoft 365 changes require escalation.
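As a rough illustration of Message Center monitoring feeding compliance review, the sketch below flags posts whose wording suggests a change in where data is processed. Everything here is an assumption for the sake of the example: the message dictionaries, their `id`, `title`, and `body` keys, the sample entries, and the keyword list are hypothetical, and a real pipeline would need patterns tuned by the privacy team.

```python
import re
from typing import Dict, Iterable, List

# Hypothetical patterns that hint at a data-location change; tune to taste.
ESCALATION_PATTERNS = [
    r"\bdata boundary\b",
    r"\bdata residency\b",
    r"\binferenc",          # matches "inference" and "inferencing"
    r"\brouting\b",
    r"\boutside the (EU|EFTA)\b",
]


def needs_compliance_review(message: Dict[str, str]) -> bool:
    """Return True when the title or body matches any escalation pattern."""
    text = f"{message.get('title', '')} {message.get('body', '')}"
    return any(re.search(p, text, flags=re.IGNORECASE) for p in ESCALATION_PATTERNS)


def triage(messages: Iterable[Dict[str, str]]) -> List[str]:
    """Return the ids of messages that should be escalated, in input order."""
    return [m["id"] for m in messages if needs_compliance_review(m)]


# Made-up sample posts, not real Message Center entries.
sample_messages = [
    {
        "id": "MC-EXAMPLE-1",
        "title": "Flex Routing for Copilot during peak demand",
        "body": "Inferencing may occur in additional regions when capacity is constrained.",
    },
    {
        "id": "MC-EXAMPLE-2",
        "title": "New meeting reactions in Teams",
        "body": "Users can now react with additional emoji.",
    },
]
print(triage(sample_messages))
```

Keyword matching will produce false positives and miss cleverly named features, so it works best as a first filter in front of a human reviewer rather than as the review itself.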

This is not only a Microsoft issue. Google, Amazon, Salesforce, ServiceNow, Atlassian, and every major SaaS provider are racing to embed generative AI into existing platforms. The same tension will appear wherever AI features process customer data across regional boundaries or rely on shared model infrastructure.

Microsoft is simply the most consequential test case because Microsoft 365 is already the nervous system of many organizations. If Copilot changes a data flow, it may affect mail, documents, meetings, chats, CRM records, workflows, and custom apps. Few vendors have a broader blast radius.

That breadth cuts both ways. Microsoft has the resources to build strong controls and publish detailed commitments. It also has the market power to normalize defaults that smaller vendors would be punished for attempting. Customers should welcome the controls while resisting the normalization of surprise.

The mature stance is neither panic nor complacency. Flex Routing is not proof that Copilot is unusable in Europe. It is proof that Copilot must be governed like infrastructure, not installed like an add-in.

Source: datenschutz notizen, “Microsoft Copilot: Flex Routing – Your Data Is Leaving the EU. By Default.”

Microsoft Turns Capacity Planning Into a Compliance Event

Flex Routing sounds like the sort of infrastructure feature that would normally live deep in a cloud architecture diagram. When demand spikes in one region, traffic can be served by capacity elsewhere. Cloud platforms have spent two decades teaching customers that this is one of the reasons the cloud works at all.

Copilot changes the stakes because the request being routed is not a static web transaction or a cacheable asset. It is an inference call assembled from user prompts, Microsoft Graph context, email, documents, chats, meetings, and organizational metadata. In other words, the useful magic of Microsoft 365 Copilot is precisely what makes the routing decision sensitive.

Microsoft’s own description is careful. Flex Routing applies to large language model inferencing during periods of peak demand. Data remains encrypted in transit and at rest. Data at rest continues to be stored inside the EU Data Boundary, with limited exceptions for pseudonymized operational and security data.

That framing is not meaningless. Encryption matters. Storage commitments matter. The EU Data Boundary is a real architectural and contractual program, not a marketing sticker. But the question raised by privacy lawyers is not whether Microsoft is abandoning European data centers. It is whether a Copilot prompt and its assembled context may be processed outside the EU or EFTA when the service decides capacity requires it.

On that point, the answer appears to be yes, if Flex Routing is enabled.

The Inference Step Is Where Copilot Becomes Sensitive

To understand why this matters, it helps to stop thinking about Copilot as a chatbot window. In Microsoft 365, Copilot is an interface over the tenant’s working memory. It can summarize a thread, draft from a document, reason over meeting notes, and retrieve information the user is already permitted to access.

That means a Copilot request is not merely “write me a polite email.” It may become a structured package of prompt text, grounding data, permissions-aware search results, retrieved documents, labels, and other context needed to produce the answer. The service has to collect enough relevant material to make the model useful.

Inference is the point at which that package is handed to the model for processing. For ordinary users, this is invisible. For compliance teams, it is the event that matters.
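To make the "structured package" idea concrete, here is a minimal sketch of what a compliance reviewer might model when asking which parts of a request carry personal data. It is not Microsoft's wire format: `GroundingItem`, `InferenceRequestSketch`, and every field name are hypothetical, and the personal-data flag is something a reviewer would set by hand.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class GroundingItem:
    """One piece of tenant content pulled in to ground the answer (hypothetical shape)."""
    source: str                   # e.g. "email", "document", "chat", "meeting"
    excerpt: str                  # the text the model would actually see
    contains_personal_data: bool  # set by the reviewer, not detected automatically


@dataclass
class InferenceRequestSketch:
    """Illustrative stand-in for the package handed to the model at inference time."""
    prompt: str
    grounding: List[GroundingItem] = field(default_factory=list)

    def personal_data_sources(self) -> List[str]:
        """Return the distinct source types that carry personal data, sorted."""
        return sorted({g.source for g in self.grounding if g.contains_personal_data})


request = InferenceRequestSketch(
    prompt="Write me a polite email declining the meeting.",
    grounding=[
        GroundingItem("email", "Thread with the customer about the renewal", True),
        GroundingItem("document", "Draft contract naming the account owner", True),
        GroundingItem("meeting", "Agenda template with no attendee names", False),
    ],
)
print(request.personal_data_sources())  # ['document', 'email']
```

Even in this toy form, the point stands: the prompt is the smallest part of what travels, and the grounding is where the personal data tends to sit.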

A company may be comfortable with Microsoft storing Exchange mailboxes, SharePoint files, and Teams messages in European data centers. It may have told customers, regulators, works councils, or auditors that its Microsoft 365 processing is bounded by a particular region. Flex Routing complicates that statement because the data may still be stored in Europe while being processed elsewhere for the purpose of producing an AI response.

This is not a pedantic distinction. GDPR and similar regimes are concerned with processing, not just storage. Access, transmission, retrieval, consultation, and use all matter. If personal data is sent to a model endpoint outside the EU Data Boundary for inferencing, privacy officers will ask whether that is a third-country transfer and what legal mechanism supports it.

Microsoft’s position is likely to lean on its existing data protection terms, transfer mechanisms, security controls, and regional commitments. Customers still need to decide whether that is enough for their own risk posture. The point is not that Flex Routing is automatically unlawful. The point is that it is automatically relevant.

The Default Is the Message

The most provocative detail is not the existence of Flex Routing. Large cloud providers have always sought ways to smooth demand across regions. The provocative detail is the default.

Microsoft says Flex Routing is on by default for eligible tenants created after March 25, 2026. For eligible tenants that existed before that date, administrators are told to check Message Center details for their tenant’s default setting. That caveat matters because rollout behavior appears to have been communicated through Microsoft’s operational messaging rather than through a broad, unmistakable compliance event.
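The default rule described above is simple enough to write down, which is a useful exercise for anyone maintaining a tenant inventory. The sketch below assumes the tenant is already eligible and encodes only what this article reports; the function name is hypothetical, and how a tenant created exactly on the cutoff date is treated is an assumption (here it falls into the "check Message Center" group).

```python
from datetime import date
from typing import Optional

# Cutoff as reported in the article for eligible EU and EFTA tenants.
DEFAULT_ON_CUTOFF = date(2026, 3, 25)


def expected_flex_routing_default(tenant_created: date) -> Optional[bool]:
    """Return True if default-on is expected, or None if the default must be
    looked up in Message Center. Assumes the tenant is eligible."""
    if tenant_created > DEFAULT_ON_CUTOFF:
        return True
    # Older tenants: the article says only to check Message Center details.
    return None


for created in (date(2026, 4, 10), date(2024, 11, 2)):
    state = expected_flex_routing_default(created)
    label = "on by default" if state else "check Message Center"
    print(created.isoformat(), "->", label)
```

Whatever this returns, it is only an expectation. The authoritative answer is the value shown in the admin center on the day someone looks.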

For IT departments, Message Center is familiar territory. It is where Microsoft announces deprecations, feature rollouts, admin center changes, Teams tweaks, Exchange behavior shifts, and the never-ending background weather of Microsoft 365. Some messages are urgent. Many are not. The channel is noisy enough that even disciplined administrators triage it rather than treating every entry as a legal fire alarm.

Flex Routing exposes the weakness of that model. A tenant-level setting that can alter where AI processing occurs is not just an admin convenience. It changes the factual basis on which an organization describes its data flows.

The default also reveals Microsoft’s product priority. Copilot must feel fast, available, and enterprise-ready. The nightmare scenario for Microsoft is a premium AI service that slows down or fails under peak European demand. Flex Routing reduces that risk by giving Microsoft more capacity to draw on.

That may be sensible engineering. It is also a governance choice. Microsoft has effectively made service continuity the default and left data-location strictness as something administrators must assert.

The EU Data Boundary Was Never a Magic Wall

Microsoft has invested heavily in the EU Data Boundary as a trust story. The premise is simple enough for procurement decks: European customer data for major cloud services can be stored and processed within the EU and EFTA, subject to defined exceptions. In a market shaped by Schrems II, public-sector procurement concerns, and long-running anxiety about US cloud providers, that story matters.

Flex Routing does not destroy the EU Data Boundary. But it makes the boundary look more conditional than many customers may have assumed.

The problem is not that Microsoft hid the concept of exceptions. Cloud residency programs always have scope notes, service exclusions, telemetry carve-outs, support access rules, and operational necessities. The problem is that generative AI turns an exception into part of the interactive user experience.

A support engineer accessing logs is one kind of risk. An AI service dynamically sending inference workloads abroad during load peaks is another. The first is episodic and operational. The second may be woven into daily work by design.

That is why “data remains encrypted” does not end the debate. Encryption in transit protects against interception. Encryption at rest protects stored content. Neither changes the fact that a service must process usable data to generate a useful answer. At some layer, the model-serving system must receive input it can act upon.

The compliance argument therefore shifts from “is my data stored in Europe?” to “what processing happens outside Europe, when, under what controls, and under whose decision?” Flex Routing makes that question impossible to ignore.

GDPR Does Not Care What the Feature Is Called

The name Flex Routing is almost comically understated. It sounds like a load-balancing preference, not a privacy-impacting control. That is common in cloud services, where product language tends to domesticate risk through soft verbs: enhance, optimize, streamline, improve.

GDPR analysis is less interested in the label. If personal data is transferred to or processed in a third country, organizations need to understand the legal basis and safeguards. Depending on the destination and the nature of the data, that may involve an adequacy decision, standard contractual clauses, transfer impact assessments, supplementary measures, or additional contractual review.

The United States sits in a different posture from Canada and Australia. The EU-U.S. Data Privacy Framework provides an adequacy mechanism for certified organizations and covered transfers, but it is not a universal solvent. Canada has partial adequacy in specific commercial contexts, and Australia does not enjoy a general EU adequacy decision. The details matter, and they will not be identical across all tenants, sectors, or data categories.
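Because the three destinations sit in different postures, a review checklist cannot be one-size-fits-all. The sketch below turns the summary above into per-destination questions. It encodes only this article's high-level description, the status labels and question wording are hypothetical, and the output is a prompt for a conversation with counsel rather than a legal conclusion.

```python
from typing import Dict, List

# Coarse status labels summarizing the article's description of each destination.
ADEQUACY_STATUS: Dict[str, str] = {
    "United States": "framework",  # EU-U.S. Data Privacy Framework, certified orgs only
    "Canada": "partial",           # adequacy in specific commercial contexts
    "Australia": "none",           # no general EU adequacy decision
}


def questions_for(destination: str) -> List[str]:
    """Return the review questions that the destination's posture suggests."""
    status = ADEQUACY_STATUS[destination]  # KeyError for unlisted destinations
    if status == "framework":
        return [
            "Is the recipient certified under the EU-U.S. Data Privacy Framework?",
            "Does this transfer fall within the framework's covered scope?",
        ]
    if status == "partial":
        return [
            "Does the transfer fall inside the commercial contexts covered by adequacy?",
            "If not, which fallback mechanism applies?",
        ]
    return [
        "Which mechanism, such as standard contractual clauses, supports the transfer?",
        "Has a transfer impact assessment been completed?",
        "Are supplementary measures needed?",
    ]


for destination in ADEQUACY_STATUS:
    print(destination)
    for question in questions_for(destination):
        print("  -", question)
```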

The practical implication is that administrators cannot treat Flex Routing as a purely technical switch. If enabled, it belongs in the organization’s data map. It may belong in records of processing activities. It may need to be assessed in a data protection impact assessment for Microsoft 365 Copilot. It certainly belongs in the conversation between IT, legal, procurement, security, and the data protection officer.

That is exactly the sort of cross-functional work many organizations hoped Microsoft 365 Copilot would not require. Copilot was sold as a native productivity layer, not a new cloud vendor to evaluate from scratch. But the AI layer changes the risk surface even when the contract and admin portal look familiar.

NIS2 Raises the Temperature for Supplier Risk

For organizations in scope of NIS2, the Flex Routing story has another edge. NIS2 is not only about firewalls and incident response. It pushes covered entities to manage cybersecurity risk across supply chains, including how critical ICT services are acquired, developed, maintained, and governed.

A cloud provider silently or semi-silently changing where a sensitive processing step may occur is exactly the kind of supplier-risk event that mature governance programs are supposed to catch. It does not mean the change is forbidden. It means it must be known, assessed, documented, and either accepted or constrained.

This is where the Microsoft 365 operating model strains under its own success. Microsoft can ship new capabilities to enormous numbers of tenants quickly. That same delivery machine can also outrun the slower machinery of compliance review. The Message Center post lands, the feature rolls out, the default takes effect, and only later does a privacy team discover that a processing location assumption has changed.

For a small business, the answer may be as simple as toggling Flex Routing off and moving on. For a hospital, bank, public authority, defense supplier, university, or critical infrastructure operator, the answer is rarely that simple. There may be internal policies, outsourcing registers, regulator expectations, contractual promises, data classification rules, works council obligations, and sector-specific restrictions layered on top.

The unsettling part is not that Microsoft added a setting. The unsettling part is that the setting sits at the intersection of all those obligations while being framed as a performance feature.

Admins Now Own a Switch They May Not Have Asked For

The immediate operational instruction is straightforward. Eligible EU and EFTA tenant administrators should open the Microsoft 365 admin center, go to Copilot settings, find the option for flexible inferencing during peak load periods, and verify whether Flex Routing is allowed. If the organization does not want Copilot inferencing to occur outside the EU Data Boundary, the setting should be changed to disallow it.

That sounds easy, but it hides several enterprise realities. The person who can see or change the setting may not be the person accountable for the data protection decision. The tenant may have multiple admin teams. Copilot may be piloted in one business unit but available across another. Documentation may lag behind configuration.

There is also the Power Platform dimension. Microsoft’s documentation indicates that the Microsoft 365 admin center setting applies to Microsoft 365 Copilot and Copilot Chat, while the Power Platform admin center setting applies to Copilot experiences in Dynamics 365, Power Platform, and Copilot Studio. In practice, that means organizations need to think beyond the Word-and-Outlook version of Copilot.
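The split between the two admin centers is easy to lose track of, so it can help to write the scope down as a lookup. The sketch below mirrors the mapping described in the paragraph above and nothing more. The function name and the returned tuple are hypothetical, and any experience not listed here is reported as unmapped rather than guessed at.

```python
from typing import Dict, Iterable, List, Set, Tuple

# Scope of each setting as described in the article's reading of Microsoft's documentation.
SETTING_SCOPE: Dict[str, Set[str]] = {
    "Microsoft 365 admin center": {"Microsoft 365 Copilot", "Copilot Chat"},
    "Power Platform admin center": {"Dynamics 365", "Power Platform", "Copilot Studio"},
}


def admin_centers_to_review(experiences_in_use: Iterable[str]) -> Tuple[List[str], List[str]]:
    """Return (admin centers whose setting needs checking, experiences with no known mapping)."""
    in_use = set(experiences_in_use)
    centers = sorted(
        center for center, scope in SETTING_SCOPE.items() if scope & in_use
    )
    known = set().union(*SETTING_SCOPE.values())
    unmapped = sorted(in_use - known)
    return centers, unmapped


centers, unmapped = admin_centers_to_review(["Copilot Chat", "Copilot Studio"])
print("Check:", centers)
print("Unmapped:", unmapped)
```

A tenant that only pilots Copilot Chat gets one admin center back; add Copilot Studio and the second one appears, which is exactly the case a Word-and-Outlook mindset tends to miss.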

The administrative burden is part of a larger Copilot pattern. Microsoft is turning Microsoft 365 into an AI platform through a stream of controls, defaults, integrations, and licensing incentives. Each individual switch may be defensible. Together, they create a governance treadmill.

The danger is not that administrators cannot find the toggle. The danger is that the organization’s policy function does not know the toggle exists.

The Performance Argument Is Real, and Still Not Enough

It is worth taking Microsoft’s likely motivation seriously. AI inference capacity is expensive, bursty, and difficult to forecast. Users expect Copilot to respond quickly inside tools where they are already trying to get work done. A slow assistant embedded in Outlook or Teams is worse than a slow website because it interrupts a live workflow.

If European capacity is constrained during peak periods, routing inference to the United States, Canada, or Australia may improve reliability. For some customers, that trade-off will be acceptable. For others, the business value of responsive Copilot may outweigh the marginal compliance overhead, especially if existing Microsoft transfer mechanisms are already approved internally.

There is no virtue in pretending that strict regional confinement is free. It may increase latency, reduce resiliency, or force providers to overbuild capacity. European digital sovereignty has technical and economic costs, and customers ultimately pay them in price, performance, or feature availability.

But Microsoft’s problem is not that it wants flexibility. It is that flexibility and sovereignty are opposing promises when both are left vague. Customers cannot simultaneously be told that their data boundary is a trust anchor and that key processing may leave it by default when the service is busy.

The honest version is more complicated: Copilot can be more resilient if customers permit cross-boundary inference during peak demand; customers that require strict regional processing can disable that behavior; and the trade-off should be an explicit governance decision. That is a reasonable product posture. It is less clear that a default-on rollout is the right way to implement it.

Copilot Makes Shadow Data Flows Official

Enterprises have always had shadow AI risks. Employees paste confidential text into consumer chatbots. Teams experiment with SaaS tools outside procurement. Developers wire up APIs with unclear retention terms. Against that backdrop, Microsoft 365 Copilot is supposed to be the safe path: same tenant, same identity, same permissions, same compliance stack.

Flex Routing complicates that sales pitch, but it does not invalidate it. A governed Microsoft 365 Copilot deployment is still more controllable than hundreds of unmanaged AI accounts. The problem is that “governed” now requires a more precise understanding of where inference happens and how defaults change over time.

This is the new enterprise AI bargain. The assistant is not an app bolted onto the side of the company. It is a processing fabric stretched across the company’s communications and documents. That makes location, logging, retention, access control, and model interaction first-order governance concerns.

Copilot’s sensitivity comes from its usefulness. A version of Copilot that never reads anything important would be easier to approve and mostly pointless. The enterprise wants the model close to the data, but compliance wants the processing tightly bounded. Flex Routing is the moment those two desires visibly collide.

The broader lesson for administrators is that AI features should be treated as data-processing changes, not UI enhancements. A new button in Outlook may be a new workflow. A new inference setting may be a new transfer path. A new Copilot connector may expose a new class of documents to prompt-time retrieval.

Microsoft’s cloud cadence was built for continuous improvement. Compliance programs were built for controlled change. AI is forcing those worlds to negotiate in public.

Regulators Will Care Less About the Toggle Than the Paper Trail

If a regulator or auditor asks about Flex Routing six months from now, the worst answer will not be “we enabled it.” The worst answer will be “we did not know.”

Organizations can make defensible choices. They can decide that existing contractual safeguards and transfer mechanisms are sufficient. They can accept the performance benefits. They can restrict the feature for sensitive users or disable it tenant-wide. They can run a phased review and document residual risk.

What they cannot credibly do is treat the matter as irrelevant. Flex Routing affects where a central processing step may occur. That makes it part of the accountability story.

The paper trail should be boring but real. Who reviewed the setting? What was the tenant configuration on the review date? Which Copilot workloads were in scope? Which categories of personal data may be processed through Copilot? What transfer mechanism was relied on for each destination? Was the data protection impact assessment updated? Were security and supplier-risk teams notified?
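One way to keep that paper trail boring but real is to capture the answers in a structured record rather than scattered emails. The following is a minimal sketch, not a Microsoft schema: the class name `FlexRoutingReview`, its field names, and the rule that a transfer mechanism is only required while Flex Routing is allowed are all assumptions made for illustration. The three destinations are the ones named in this article.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Dict, List

# Destinations named in the article. Everything else in this sketch is a
# hypothetical schema, not anything defined by Microsoft.
DESTINATIONS = ("United States", "Canada", "Australia")


@dataclass
class FlexRoutingReview:
    reviewer: str                        # who reviewed the setting
    review_date: date                    # when the configuration was observed
    flex_routing_allowed: bool           # tenant configuration on review_date
    workloads_in_scope: List[str]        # which Copilot workloads were covered
    personal_data_categories: List[str]  # categories that may pass through Copilot
    transfer_mechanisms: Dict[str, str] = field(default_factory=dict)  # destination -> mechanism
    dpia_updated: bool = False
    teams_notified: List[str] = field(default_factory=list)

    def open_items(self) -> List[str]:
        """Return the paper-trail questions that are still unanswered."""
        gaps = []
        if not self.workloads_in_scope:
            gaps.append("workloads in scope not listed")
        if not self.personal_data_categories:
            gaps.append("personal data categories not listed")
        # Assumption: a per-destination mechanism only needs recording
        # while cross-boundary inference is actually permitted.
        if self.flex_routing_allowed:
            for dest in DESTINATIONS:
                if not self.transfer_mechanisms.get(dest):
                    gaps.append(f"no transfer mechanism recorded for {dest}")
        if not self.dpia_updated:
            gaps.append("DPIA not updated")
        for team in ("security", "supplier-risk"):
            if team not in self.teams_notified:
                gaps.append(f"{team} team not notified")
        return gaps
```

A review with an empty `open_items()` list does not prove the decision was right. It only shows that each question was answered by someone on a known date, which is the point of the exercise.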

This is not bureaucratic theater. It is how organizations prove that cloud defaults did not silently become business policy. It is also how they protect administrators who are otherwise left holding the bag for decisions that belong above their pay grade.

For WindowsForum readers who live in admin centers, this is the part to underline. The toggle is easy. The decision is not.

Redmond’s AI Velocity Is Outrunning Its Trust Vocabulary

Microsoft has spent the last two years pushing Copilot into nearly every corner of its product stack. Windows, Microsoft 365, Edge, Security, GitHub, Dynamics, Power Platform, Azure: the brand is everywhere. The strategy is coherent: make Microsoft the default enterprise AI layer by embedding assistance where work already happens.

That velocity creates a communication problem. Customers are not just buying features; they are inheriting a moving architecture. Every new capability arrives with a configuration surface, a data-flow implication, and a trust claim. The faster Microsoft ships, the more those claims must be legible.

Flex Routing is an example of a feature that may be technically reasonable and politically clumsy at the same time. The cloud engineer sees capacity optimization. The product manager sees a smoother Copilot experience. The privacy officer sees a possible third-country transfer. The CISO sees supplier-risk drift. The administrator sees another Message Center item that might become tomorrow’s audit finding.

Microsoft cannot solve that by adding more prose to a documentation page. It needs a clearer taxonomy for AI data movement: what leaves, when it leaves, why it leaves, whether the customer opted in, and what breaks if the customer says no. The distinction between storage and processing should be front and center, not buried in the conceptual machinery of inferencing.

The company also needs to recognize that defaults are governance statements. When Microsoft sets a privacy-sensitive AI behavior to on for new tenants, it is not simply reducing friction. It is choosing the baseline risk posture for customers who may not yet have formed their own.

That may be acceptable in consumer software. In enterprise compliance, it is asking for backlash.

The Copilot Contract Is Becoming a Living Document

The Flex Routing dispute should push organizations to rethink how they manage Microsoft 365 change. Traditional contract review is too slow for AI-era cloud services. Annual vendor assessments are too infrequent. Static DPIAs age out as soon as a major processing behavior changes.

Instead, Copilot governance needs to become continuous. Message Center monitoring should feed compliance review. Tenant configuration should be periodically exported or screenshotted. High-impact changes should trigger documented decisions. Admin roles should be mapped to policy ownership. Procurement, legal, and security should know which Microsoft 365 changes require escalation.
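The snapshot-and-escalate habit can be illustrated with a small comparison routine. This is a sketch under stated assumptions: the snapshots are plain dictionaries that an organization has exported or transcribed itself, and the key `copilot.flex_routing_allowed` is a made-up label, not a real Microsoft setting name or API field.

```python
# Hypothetical setting labels: an organization would choose its own keys
# when exporting or transcribing tenant configuration.
HIGH_IMPACT_KEYS = {"copilot.flex_routing_allowed"}


def diff_snapshots(previous: dict, current: dict) -> dict:
    """Return {key: (old, new)} for every setting whose value differs.

    A key missing from one snapshot is treated as None on that side.
    """
    changes = {}
    for key in sorted(previous.keys() | current.keys()):
        old, new = previous.get(key), current.get(key)
        if old != new:
            changes[key] = (old, new)
    return changes


def needs_escalation(changes: dict) -> list:
    """Return the changed keys that should trigger a documented decision."""
    return [key for key in changes if key in HIGH_IMPACT_KEYS]
```

Comparing last quarter's snapshot with today's in this way turns a silent default change into a visible item for the policy owner, which is the gap between finding the toggle and knowing it exists.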

This is not only a Microsoft issue. Google, Amazon, Salesforce, ServiceNow, Atlassian, and every major SaaS provider are racing to embed generative AI into existing platforms. The same tension will appear wherever AI features process customer data across regional boundaries or rely on shared model infrastructure.

Microsoft is simply the most consequential test case because Microsoft 365 is already the nervous system of many organizations. If Copilot changes a data flow, it may affect mail, documents, meetings, chats, CRM records, workflows, and custom apps. Few vendors have a broader blast radius.

That breadth cuts both ways. Microsoft has the resources to build strong controls and publish detailed commitments. It also has the market power to normalize defaults that smaller vendors would be punished for attempting. Customers should welcome the controls while resisting the normalization of surprise.

The mature stance is neither panic nor complacency. Flex Routing is not proof that Copilot is unusable in Europe. It is proof that Copilot must be governed like infrastructure, not installed like an add-in.

The Toggle That Belongs in the Board Packet

The practical response to Flex Routing should be immediate, but not hysterical. Administrators should verify the setting, privacy teams should assess the transfer implications, and leadership should decide whether performance flexibility is worth the data-location trade-off. The essential point is that the choice should be made by the organization, not inherited from a default.

- Eligible EU and EFTA tenants created after March 25, 2026 should assume Flex Routing may be enabled by default unless they have verified otherwise.

- Existing eligible tenants should review their Microsoft 365 Message Center history and current admin center configuration rather than relying on assumptions about rollout behavior.

- Disabling Flex Routing keeps Microsoft 365 Copilot inferencing inside the EU Data Boundary even during peak demand, according to Microsoft’s documented setting behavior.

- Keeping Flex Routing enabled requires a documented view of transfer mechanisms for the United States, Canada, and Australia, not just a general belief that Microsoft has compliance covered.

- Organizations subject to NIS2, DORA, sector rules, public procurement obligations, or strict internal data residency policies should treat the setting as a supplier-risk and governance event.

- Copilot change management should be folded into ongoing privacy, security, and compliance operations because AI defaults are now part of the Microsoft 365 control plane.

Source: datenschutz notizen Microsoft Copilot: Flex Routing – Your Data Is Leaving the EU. By Default.