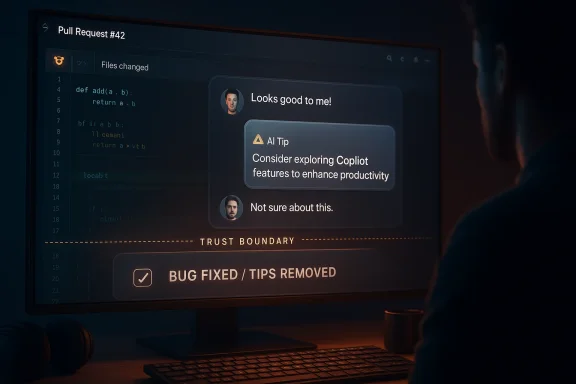

Microsoft’s denial that GitHub is testing ads in pull requests is more than a narrow clarification; it is a reminder of how quickly trust can become the real product in AI-era developer tools. A Copilot-generated product tip that surfaced in the wrong place looked like an ad, sounded like an ad, and understandably triggered an immediate backlash from developers who already feel surrounded by feature creep and platform monetization. Microsoft says the behavior was a bug, not a deliberate advertising experiment, and GitHub says it has removed agent tips from pull request comments moving forward. That matters because in a developer workflow, even the appearance of promotional content can feel like a breach of the social contract.

This is a classic product-messaging opportunity. A competitor can say, implicitly or explicitly, that its assistant is useful without being noisy. That may sound small, but in developer tooling, small differences matter.

For ecosystem vendors, the lesson is to be unmistakably transparent about the origin of their content. If a tool references another product in a workflow surface, users should know why. Anything else invites the appearance of stealth marketing, even when none was intended.

Infrastructure must be boring in the best possible sense. It must be predictable, quiet, and hard to misuse. This bug shows how quickly the market notices when a platform drifts away from that standard.

There is also a credibility upside. Companies that acknowledge and correct a visible mistake quickly can sometimes strengthen trust more than if the mistake had never occurred. The key is whether the fix is truly structural rather than cosmetic.

Perception problems are notoriously sticky because they are reinforced by memory, not logs. Developers will remember the discomfort of seeing what looked like an ad inside a pull request, and that memory will influence how they interpret future changes. That is the real cost of the incident.

The broader market will probably treat this as a cautionary tale rather than a turning point. Still, cautionary tales matter in platform software because they influence procurement, policy, and user expectations. If GitHub responds with clear boundaries, cleaner messaging, and fewer surprises, it can preserve momentum. If not, every future AI prompt in a pull request will arrive under a cloud of suspicion.

Source: Windows Latest, “Microsoft says Copilot ad in GitHub pull request was a bug, not an advertisement”

Overview

The controversy began with a simple but jarring observation: a pull request comment that looked less like technical guidance and more like a promotional nudge for Copilot’s agentic features and Raycast. According to the reporting that sparked the debate, a developer noticed the message after asking Copilot to help correct an error in a pull request, which made the placement feel especially out of bounds. The result was not just annoyance; it was suspicion, amplified by the fact that it appeared in a workspace where developers expect precision, not marketing.

Microsoft’s explanation is straightforward. GitHub says the message came from a programming logic issue that caused a Copilot product tip to show up in the wrong context, and that it was originally intended only for pull requests created by Copilot itself. In other words, the company is drawing a line between an internal product hint and an intentional advertisement. That distinction may be technically important, but to users who saw the message, the practical effect was still the same: something that looked like an ad appeared in a place where an ad should never be.

The timing is especially notable because GitHub has spent the last year accelerating Copilot’s role in the developer workflow. A recent GitHub changelog entry says Copilot coding agent now starts work 50% faster, and GitHub Docs now describe a much broader set of entry points for asking Copilot to create or edit pull requests. That expansion makes the pull-request surface area more sensitive than ever. When AI is embedded into the core review loop, every misplaced prompt, label, or suggestion carries extra weight. (github.blog)

Why this landed so badly

Developers are not just reacting to one bad message. They are reacting to a broader pattern in which AI features increasingly blur the line between assistance and influence. A pull request is a working document, a review artifact, and often a compliance record all at once. If a platform injects product suggestions there, even accidentally, it can feel like the beginning of a much larger shift.

The emotional reaction was predictably strong because the optics were so poor. The message reportedly referenced a third-party tool, Raycast, and social media discussion quickly framed it as an ad. Raycast publicly denied any ad arrangement, which only deepened the sense that nobody wanted to own the optics of the incident. That kind of ambiguity is exactly what makes platform trust fragile.

- Context matters more than the wording of the message itself.

- Placement inside a pull request made the note feel intrusive.

- Timing during active code review amplified the backlash.

- Perception of ads in developer tooling can outlive the bug that caused them.

Background

GitHub Copilot has evolved from a code completion assistant into a much broader coding agent. The company’s own documentation now says users can ask Copilot to create a pull request from multiple entry points, including GitHub Issues, the agents panel, Copilot Chat, the GitHub CLI, and MCP-enabled tools. GitHub also says users can mention @copilot in a pull request comment to request changes, which means the agent is now woven into the review-and-iterate loop rather than sitting outside it as a suggestion layer. (docs.github.com)

That evolution has real product logic behind it. GitHub has been racing to make Copilot more useful, faster, and more autonomous. On March 19, 2026, the company said Copilot coding agent starts work 50% faster, while a March 24 update described new behavior around asking Copilot to make changes to an existing pull request. These are not isolated feature drops; they are signs of a platform push toward persistent, agentic participation in software development. (github.blog)

The problem is that every new integration creates new opportunities for context errors. If a system can generate a pull request, comment on a pull request, or edit a pull request, then the UI rules around which prompts appear where must be exact. GitHub’s own docs note that Copilot can respond to comments from people with write access, that it leaves a “Copilot has started work” event in the timeline, and that it may push commits directly to the pull request branch. That makes the pull request timeline an especially sensitive environment for any stray product text. (docs.github.com)

The shift from assistant to agent

The deeper story here is not about one misplaced message. It is about the rapid migration from autocomplete to automation. Assistive tools are expected to make suggestions, but agents are expected to act, and that changes user expectations around messaging, consent, and traceability.

In a mature development workflow, the user should always know whether they are talking to a helper, a reviewer, or a sales surface. When those roles blur, developers understandably become defensive. The fact that GitHub now documents Raycast as one of the environments where developers can track Copilot sessions also shows how many external surfaces Copilot is touching. That breadth increases convenience, but it also raises the stakes for mistakes. (docs.github.com)

What GitHub says happened

GitHub says the message came from a coding agent tip that surfaced in the wrong context. The company says the tip was meant for pull requests created by Copilot, not for human-authored pull requests where Copilot was invoked to edit code. GitHub says it removed agent tips from pull request comments after identifying the issue. (docs.github.com)

That explanation is plausible because it aligns with the broader architecture GitHub has described. Copilot can initiate work, create pull requests, and then continue iterating through comments. A logic error in that pipeline could easily place a message in the wrong stage of the workflow. Still, plausible is not the same as reassuring. Developers judge tools by their behavior, not by their internal taxonomy.

Why Pull Requests Are a High-Sensitivity Surface

Pull requests are one of the most visible trust boundaries in modern software teams. They are where code review, collaboration, and release readiness intersect, which means any unexpected content in that surface feels immediately suspicious. When the unexpected content is AI-generated, the instinctive reaction is often worse, because people worry the tool is shaping the conversation rather than supporting it.

GitHub has explicitly designed Copilot to participate in that space. Docs say users can ask Copilot to make changes to an existing pull request by mentioning @copilot, and that Copilot will respond only to users with write access. That makes sense for productivity, but it also means the boundaries between comments, tasks, and system messages are now thinner than they used to be. (docs.github.com)

The difference between help and promotion

A helpful hint in a developer tool is not the same thing as a product push. The former is contextual, expected, and task-oriented. The latter is self-interested, even if the company insists it was merely an internal tip exposed by accident.

This distinction matters because developers are unusually sensitive to workflow contamination. They accept warnings about CI, tests, and branch protections because those warnings serve the codebase. They are much less tolerant of anything that looks like a product pitch. Once that line is crossed, users begin to wonder what else might be nudging them under the hood.

- Workflow integrity is part of developer trust.

- Review artifacts should stay free of marketing flavor.

- Unexpected messages invite scrutiny even when accidental.

- AI output is judged by placement as much as by content.

The Raycast detail made it worse

The inclusion of Raycast was a particularly unfortunate wrinkle. Because Raycast is a well-known third-party productivity tool, the appearance of its name in a Copilot tip made the message feel externally sourced and oddly commercial, even if that was not the intent. Raycast’s denial of any ad deal with Microsoft further reinforced the sense that this was a systems problem, not a partnership story.

That kind of detail can transform a minor bug into a reputational incident. Without the Raycast reference, the story might have been framed as an awkward Copilot UI glitch. With it, the message looked more like a sponsored recommendation embedded in a collaboration space. In this category, “looks like” matters almost as much as “is.”

Why this hit a nerve with enterprise teams

Enterprise teams care about more than convenience. They care about auditability, predictability, and whether a tool can be safely deployed at scale. A misrouted product tip does not automatically imply a security issue, but it does imply process fragility, which is enough to make procurement and governance teams nervous.

GitHub docs already caution that Copilot-created pull requests should be reviewed carefully, and that workflows may remain disabled until a human approves them. That language reflects the broader reality that agentic tools require guardrails. If the UI itself becomes unreliable about where it speaks, those guardrails start to feel more necessary, not less. (docs.github.com)

GitHub Copilot’s Expanding Role

The story lands in the middle of a major transition for GitHub Copilot. The product is no longer just a coding assistant embedded in editors; it is becoming an orchestrator that can create branches, open pull requests, and respond to review comments. GitHub’s own docs make clear that Copilot is now meant to work across GitHub Issues, the agents panel, Copilot Chat, the GitHub CLI, and supporting tools with MCP integration. (docs.github.com)

That expansion helps explain why the bug surfaced at all. As Copilot touches more of the software lifecycle, its messages need to be carefully scoped. The more places an agent can appear, the greater the risk that a system-generated tip will arrive in the wrong pane, thread, or comment box. In effect, GitHub is building a more powerful but also more delicate workflow surface.

From coding assistant to workflow participant

The old mental model for Copilot was simple: type code, get suggestions. The new model is much broader: delegate work, review the result, iterate with comments, and let the agent continue in context. That makes Copilot feel less like a plugin and more like a teammate.

But teammates have social rules. They do not interrupt meetings with marketing copy. They do not drift into unrelated product mentions during a code review. And they certainly do not create ambiguity about whether a message is system guidance or a commercial nudge. That is why the company’s reassurance has to be more than “it was a bug.”

The new feature set is powerful, but brittle

GitHub recently said Copilot coding agent now starts work 50% faster, and the docs describe capabilities that include pull request creation, code iteration, and custom agent extension. The platform is clearly moving toward a future where agents are not peripheral tools but active participants in shipping software. (github.blog)

That power comes with brittleness. Each new capability multiplies the number of contexts in which the agent may speak, react, or infer intent. If the product can handle more use cases, it also has more opportunities to fail visibly. In a developer tool, visible failure is costly because it directly affects confidence.

What the docs tell us about the intended design

GitHub’s docs are useful because they show the intended workflow in plain language. Users can ask Copilot to create a pull request, ask it to make changes to an existing pull request, and review its work before merging. The docs also note that Copilot can respond to pull request comments and that it tracks sessions through several interfaces, including Raycast. (docs.github.com)

That breadth makes the software more useful, but it also suggests GitHub needs stricter message separation. If a product tip is intended for agent-created pull requests, then the UI must guarantee it only appears there. Anything less and users will continue to conflate operational guidance with promotion.

Microsoft’s Denial and Why It Matters

Microsoft’s statement is legally and strategically significant because it draws a hard boundary: GitHub does not and does not plan to include ads in GitHub. That is the exact sentence developers needed to hear, because once the word “ads” enters the conversation, it is difficult to remove. The company’s follow-up that the incident was a logic error rather than an intentional test tries to preserve the idea that GitHub remains a tooling platform, not an attention marketplace.

But denial alone cannot reset perception. Trust is cumulative, and the AI industry has already trained users to be skeptical of product explanations that arrive after a public backlash. That skepticism is reasonable, not cynical. Developers have seen enough software “experiments” to know that some bugs are really soft launches in disguise.

The difference between a bug and a test

In product communications, the line between a bug and an experiment can be hard to prove from the outside. Companies frequently use cautious language while they measure user response, and users have learned to read that as strategic ambiguity. Microsoft’s categorical denial is therefore important, but it will be judged by future behavior.

If GitHub continues to keep promotional language out of pull requests, the company can credibly say this was a one-off context bug. If similar messages reappear elsewhere, that story collapses. For now, the burden is on GitHub to prove the fix is durable.

- A firm denial helps, but it does not erase the optics.

- Public trust depends on repeatable behavior, not one statement.

- AI products are increasingly judged through a suspicion lens.

- Small UI mistakes can trigger outsized reputational damage.

Why the statement from GitHub was carefully phrased

GitHub’s wording is notable because it emphasizes a programming logic issue and the wrong context of a tip. That language tries to frame the problem as a scope bug, not a monetization strategy. It also suggests the company is aware of how sensitive the incident is among its developer audience. (docs.github.com)

The phrasing is also smart from a product perspective. By saying the tips have been removed from pull request comments, GitHub signals action, not just explanation. That distinction helps because developers care less about rhetorical reassurance than about whether the weird thing is gone.

Why this is bigger than one company

The whole episode reflects a broader industry problem: AI assistants increasingly live inside the tools people use to create revenue-producing work. That creates an understandable temptation to cross-promote adjacent features, subscriptions, or partner products. But in high-trust environments, the cost of that temptation is enormous.

GitHub is not the only platform walking this line. Many software vendors now bundle AI capabilities into their most important collaboration surfaces. The more those products become central to work, the less tolerant users will be of anything that even resembles a pitch. Contextual relevance is becoming a product requirement, not a nice-to-have.

Developer Reaction and Community Backlash

The developer reaction was intense because the message hit at a particularly sensitive intersection of AI anxiety and platform fatigue. People who already suspect that large vendors will eventually monetize every surface in their workflow saw this as proof of the slope they fear. Even if Microsoft is correct that this was a bug, the backlash was still predictable, because the user community has been conditioned to look for hidden incentives.

That social context matters as much as the code path. A developer platform lives or dies on the sense that it exists to serve the work, not to extract attention. When a message appears to do the latter, the community responds quickly and loudly.

Why social media amplified the story

The story spread fast because it was easy to understand and easy to emotionally translate. “Ad inside pull request” is a headline that almost writes itself. It compresses a complex bug into a simple allegation of monetization, which is exactly the kind of story that spreads in developer circles.

Once that happens, technical nuance struggles to keep up. A logic error in a context engine is not as memorable as a story about ads sneaking into code review. That asymmetry is why companies need to move quickly with clarifications and fixes.

Why the reaction was not just anti-AI sentiment

It would be too easy to blame the backlash on general anti-AI frustration. In reality, developers are not objecting to Copilot’s existence; they are objecting to compromised boundaries. Copilot can be extremely useful when it behaves as expected. The issue is not automation itself, but uncertainty about what the automation is doing and why.

That is an important distinction because it shows the market is not rejecting agentic development tools. It is demanding better discipline from them. The more capable the tools become, the more rigor users expect in interface design, permissions, and disclosure.

The reputational lesson for platform owners

Platform owners should treat this incident as a warning about invisible UX assumptions. If a message is supposed to appear only in a narrow workflow, then it needs tests, telemetry, and clear fallback behavior. The cost of one message escaping its intended boundary can dwarf the value of whatever engagement metric it was designed to improve.

The broader lesson is simple: never let convenience outrun clarity. In a developer environment, clarity is a feature, not an aesthetic preference. When platforms forget that, their users will remind them fast.
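To make the scoping point concrete, here is a minimal Python sketch of a message that is allowed only in one narrow workflow and falls back to plain output everywhere else. Every name in it (`Author`, `PullRequestContext`, `TIP_ALLOWED`, `render_reply`, the surface strings) is hypothetical and chosen for illustration; it says nothing about how GitHub or Copilot is actually implemented.

```python
from dataclasses import dataclass
from enum import Enum


class Author(Enum):
    HUMAN = "human"
    AGENT = "agent"


@dataclass(frozen=True)
class PullRequestContext:
    # Hypothetical fields for illustration; not GitHub's real data model.
    author: Author   # who opened the pull request
    surface: str     # where the text will be rendered, e.g. "pr_comment"


# Explicit allowlist of (author, surface) pairs where a tip may appear.
# Anything not listed here never gets the tip.
TIP_ALLOWED = {(Author.AGENT, "pr_description")}


def render_reply(body: str, tip: str, ctx: PullRequestContext) -> str:
    """Append the tip only in an explicitly allowed context.

    Contexts that are unknown or not on the allowlist fall back to the
    plain body, so a newly added surface fails closed rather than open.
    """
    if (ctx.author, ctx.surface) in TIP_ALLOWED:
        return f"{body}\n\n{tip}"
    return body
```

The design choice worth noting is the allowlist: a denylist would need to anticipate every new surface an agent gains, while an allowlist keeps the tip out of any surface nobody has deliberately approved. A handful of assertions over the (author, surface) combinations is then enough to pin the boundary in a test suite.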

Enterprise vs. Consumer Impact

For individual developers, the issue is mostly about annoyance and trust. For enterprises, it cuts deeper because the concern extends to governance, compliance, and the operational reliability of AI-assisted workflows. A misplaced product tip does not automatically create a security incident, but it does create a policy question: can this system be trusted to separate system guidance from commercial noise?

The answer matters because Copilot is increasingly used in managed environments where IT teams need to know exactly how the assistant behaves. GitHub’s own docs state that Copilot coding agent is available across multiple plans and can be configured with settings that affect workflow behavior. That means the product is already operating inside enterprise controls, not just individual user accounts. (docs.github.com)

Consumer developers: annoyance and skepticism

Independent developers and open-source maintainers are likely to respond with skepticism rather than formal escalation. They may not care whether the behavior was a bug or a test; they care that it happened at all. For them, the most immediate consequence is a renewed willingness to scrutinize every Copilot-generated message.

That skepticism may reduce adoption at the margins. Even if the core assistant remains useful, developers may hesitate to delegate certain tasks if they think the UI could introduce distracting or misleading content. Trust, once dented, tends to be repaired one boring release note at a time.

Enterprise teams: governance and approval risk

Enterprises have a different problem. They need assurance that Copilot’s behavior is deterministic enough to fit within policy frameworks. GitHub Docs already emphasize that Copilot-generated pull requests should be reviewed carefully and that workflow runs may require explicit approval. Those safeguards are appropriate, but they also underscore why any surprise in the UI is a concern. (docs.github.com)

If an agent can surface the wrong text in a review context, then organizations will ask what else it might surface incorrectly. That may not halt adoption, but it will increase the appetite for pilot programs, usage restrictions, and stricter audits. Security teams hate ambiguity for a reason.

The likely product response

The most likely response is not a retreat from Copilot, but more defensive UI separation. GitHub will probably keep expanding agentic capabilities while tightening where tips, prompts, and metadata appear. That is the practical fix because the underlying direction of travel is clear: Copilot is becoming more central, not less.

The challenge is to make that expansion feel reliable. Developers will tolerate aggressive feature growth if the product remains predictable. What they will not tolerate is a system that confuses operational guidance with promotion.

Competitive Implications

This incident also has competitive consequences, because GitHub’s Copilot is now part of a broader race to define how software development will work in the AI era. Rivals like Microsoft’s own ecosystem partners, cloud vendors, and specialist coding-assistant companies all benefit when GitHub looks ahead of the curve. But they also benefit when GitHub stumbles in a way that makes its product feel less trustworthy.

If Copilot is supposed to be the default AI layer for developers, then trust incidents are strategic risks. Competitors can use moments like this to emphasize restraint, transparency, or cleaner UX, especially in enterprise sales conversations. Even if the functionality is similar, the perception of discipline can become a differentiator.

Why rivals will pay attention

Competing AI coding tools will watch how GitHub handles this because it reveals whether the market is hypersensitive to workflow contamination. If the backlash is severe, rivals may avoid any UI elements that could be mistaken for promotion. They may also place more emphasis on clear provenance labels for agent output.

This is a classic product-messaging opportunity. A competitor can say, implicitly or explicitly, that its assistant is useful without being noisy. That may sound small, but in developer tooling, small differences matter.

The partner ecosystem angle

Raycast’s name appearing in the incident also matters from an ecosystem perspective. Third-party integrations can be valuable, but they can also create misunderstanding when users cannot tell whether a suggestion is native, partner-driven, or contextually generated. That uncertainty makes ecosystem messaging harder, not easier.

For ecosystem vendors, the lesson is to be unmistakably transparent about the origin of their content. If a tool references another product in a workflow surface, users should know why. Anything else invites the appearance of stealth marketing, even when none was intended.

The strategic takeaway for GitHub

GitHub’s long-term advantage is distribution. Its long-term risk is overloading that distribution with too many AI interactions at once. The more Copilot becomes a central development platform, the more it will be judged as infrastructure rather than software.

Infrastructure must be boring in the best possible sense. It must be predictable, quiet, and hard to misuse. This bug shows how quickly the market notices when a platform drifts away from that standard.

Strengths and Opportunities

The incident is embarrassing, but it also highlights areas where GitHub can sharpen Copilot’s value proposition. If the company responds well, it can turn a reputational stumble into a demonstration of discipline, especially by tightening context handling and reaffirming that its AI products are there to assist development, not intrude on it.

- Clearer context isolation between Copilot-generated content and human pull requests.

- Stronger labeling for agent tips, prompts, and system messages.

- Better user trust if GitHub keeps the fix narrow and transparent.

- More enterprise confidence if workflow surfaces remain clean.

- Improved UX testing for AI-generated interactions in review flows.

- Opportunity to refine docs so users know exactly where Copilot can speak.

- Chance to reinforce that Copilot is a developer aid, not a marketing channel.

Why the fix could improve the product

A good bug fix is sometimes a design upgrade in disguise. If GitHub uses this moment to simplify how Copilot comments appear, users may end up with a cleaner workflow than before. That could make the product feel more professional and less chatty.

There is also a credibility upside. Companies that acknowledge and correct a visible mistake quickly can sometimes strengthen trust more than if the mistake had never occurred. The key is whether the fix is truly structural rather than cosmetic.

Risks and Concerns

Even if the message was accidental, the bigger risk is what it signals about the fragility of AI-generated workflow surfaces. Users may start assuming that other contextual messages, hints, or labels are similarly vulnerable to leakage. That suspicion could slow adoption or encourage teams to limit where Copilot is allowed to operate.

- Trust erosion if similar messages appear again in another surface.

- Misinterpretation risk whenever AI output resembles promotion.

- Enterprise hesitation around compliance and workflow reliability.

- User fatigue from too many AI prompts in collaboration tools.

- Reputational spillover that affects broader Copilot messaging.

- Blurred boundaries between assistant behavior and product marketing.

- Higher scrutiny of every future Copilot UI change.

The hardest problem is perception

The most difficult part of this incident is not technical remediation; it is perception management. Once users believe a product might be steering them toward promotions, even accidentally, they stop giving it the benefit of the doubt. That effect can linger long after the bug is fixed.

Perception problems are notoriously sticky because they are reinforced by memory, not logs. Developers will remember the discomfort of seeing what looked like an ad inside a pull request, and that memory will influence how they interpret future changes. That is the real cost of the incident.

Looking Ahead

The immediate question is whether GitHub can keep Copilot’s rapid expansion from overwhelming the boundaries that make developer workflows feel safe. The company is clearly committed to making Copilot more agentic, more responsive, and more embedded in the pull request lifecycle. The challenge now is to ensure that the product’s growing autonomy does not create new ambiguity at the interface level. (github.blog)

The broader market will probably treat this as a cautionary tale rather than a turning point. Still, cautionary tales matter in platform software because they influence procurement, policy, and user expectations. If GitHub responds with clear boundaries, cleaner messaging, and fewer surprises, it can preserve momentum. If not, every future AI prompt in a pull request will arrive under a cloud of suspicion.

- Watch for follow-up changelogs that mention comment handling or tip placement.

- Watch for documentation updates clarifying where Copilot can surface suggestions.

- Watch for enterprise guidance on how to control Copilot’s workflow behavior.

- Watch for community response if similar messages appear again.

- Watch for competitor positioning around safer or quieter AI developer tools.

Source: Windows Latest, "Microsoft says Copilot ad in GitHub pull request was a bug, not an advertisement"