A controlled classroom experiment from Cambridge University Press & Assessment and Microsoft Research delivers a clear and reassuring message for teachers and technologists alike: handwritten note‑taking produces stronger three‑day retention and comprehension than relying on a large language model (LLM) alone, while combining LLM use with active note‑taking preserves those benefits and adds engagement and exploration value. The trial, reported as a peer‑reviewed article in Computers & Education and available as a preprint, tested 405 students aged 14–15 across seven English schools and measured delayed recall and conceptual understanding using curriculum‑relevant history passages.

Source: varsity.co.uk Cambridge study finds students learn better with notes than AI

Background

Generative AI, particularly chat‑style LLMs, is now ubiquitous among students, and education systems are scrambling to translate that ubiquity into useful practice rather than panic or prohibition. National surveys from the United Kingdom show very high rates of student AI use for homework and study, with roughly six in ten young people reporting that they used generative AI to help with homework in 2025; teachers and institutions are simultaneously calling for more guidance and training. The Cambridge–Microsoft experiment was designed to move beyond self‑report and lab tasks: it is a randomized classroom trial that measures actual learning outcomes (not just enjoyment or task completion) under real school conditions. That experimental design gives the findings practical relevance for lesson planning, procurement, and classroom policy.

What the study did: methods and materials

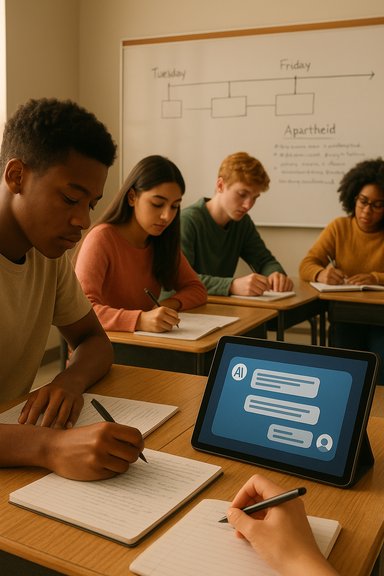

The experiment used a mixed within‑ and between‑participant randomised design with clear, curriculum‑aligned materials.

- Participants: 405 students aged 14–15 recruited from seven schools in England.

- Materials: two history passages aligned to the UK national curriculum — one on apartheid in South Africa and one on the Cuban Missile Crisis — chosen to be substantive but unlikely to be already well known to all students.

- Conditions: students studied the passages under three conditions:

  - Note‑taking only: students read the passage and made handwritten notes.

  - LLM only: students used an LLM as an interactive aid.

  - LLM + note‑taking: students used the LLM for clarification and exploration and also took notes separately.

- Procedure: students received a short tutorial on LLM use where applicable, used the tool freely for the task, and then returned three days later for an unannounced comprehension and retention test probing factual recall and explanatory understanding.

The trial’s delayed testing window is a critical design choice: measuring performance after three days prioritizes retention and durable learning rather than immediate, short‑lived recall. This is the outcome that matters for real educational progress and assessment design.

Core findings — clear signal, practical nuance

The headline quantitative result is unambiguous: students who took notes, whether on their own or after consulting an LLM, scored significantly higher on the three‑day delayed tests than students who relied on the LLM alone. The hybrid condition (LLM + notes) produced outcomes comparable to note‑taking alone, suggesting that LLMs do not undermine the benefit of active encoding as long as students still do the generative work themselves. At the same time, subjective measures and qualitative analyses tell a more textured story:

- Students liked the LLM. Many found the chat interface more engaging, accessible, and enjoyable. Several used the LLM to explore context, ask follow‑up questions, and deepen initial comprehension. Those affective benefits matter because motivation often drives study behaviour.

- Prompt archetypes emerged. Qualitative coding of student queries revealed consistent patterns — clarification, contextualisation, elaboration — that provide educators with actionable insight into how learners naturally deploy LLMs.

- Risk of copying. The authors warn that students might be tempted to copy machine outputs into notes verbatim, which erodes the generative processing that produces durable memory. The recommendation is simple and evidence‑based: take notes separately from LLM interactions, and paraphrase rather than copy.

These converging quantitative and qualitative signals support a balanced conclusion: LLMs can scaffold understanding and engagement, but they are not a substitute for the cognitive work of note‑taking.

What was confirmed (and cross‑checked)

To ensure accuracy, the principal claims were cross‑checked across multiple sources: the Microsoft Research project page summarising the published article, the SSRN preprint that includes the experimental protocol and sample details, and news releases and summaries distributed by Cambridge University Press & Assessment and science news outlets. All three confirm the core elements: sample size (405), age range (14–15), seven schools, use of curriculum‑aligned history passages, and the three‑day delayed test design.

A note on model identity: some secondary coverage named a specific LLM (for example, ChatGPT‑3.5‑turbo). The published materials and preprint describe the intervention as using “an LLM”, so readers who need the precise model configuration should consult the methods section directly. Because model version, system prompts, and safety filters materially shape outputs, and therefore the learning interaction, any mention of a specific model in secondary reporting should be treated as provisional unless the methods document it explicitly. The research team’s public materials emphasise student interactions and learning mechanisms rather than vendor branding.

Why note‑taking still matters: a quick cognitive lens

The finding aligns with longstanding cognitive science: generative processing matters. When learners select, summarise, paraphrase, and record information in their own words, they engage the retrieval and encoding processes that strengthen memory traces. Handing those operations to a model and reading its summary instead reduces immediate effort and can make material more accessible, but it short‑circuits the generative step that produces long‑term retention. The Cambridge–Microsoft experiment offers experimental evidence that this mechanism matters in classroom practice and for curriculum‑aligned content.

Strengths of the study: why practitioners should pay attention

- Real‑classroom randomized design. Unlike many lab studies, this trial ran in situ with normal classroom constraints, making the findings operationally relevant.

- Delayed testing (three days). This moves the outcome from immediate recall — which many prior studies focus on — toward retention, the metric that matters for cumulative learning.

- Mixed methods. Combining quantitative measures with qualitative prompt analysis gives a fuller picture that can inform both pedagogy and product design.

- Curriculum alignment. Using materials drawn from the national history curriculum increases ecological validity for K‑12 stakeholders and helps teachers see direct classroom implications.

Limitations and risks — what the study does not prove

A rigorous read of the findings requires attention to scope and generalisability:

- Domain specificity. The trial used history passages for 14–15‑year‑olds. STEM problem solving, mathematics, programming, languages, or creative writing may show very different interactions between LLMs and student generative work. Extrapolating beyond history requires further trials.

- Age range. Results apply to mid‑teen secondary students; younger learners or university students could respond differently.

- Model/configuration sensitivity. The exact LLM, system prompt, and safety filters matter. Press pieces that name a specific consumer model should be treated as provisional unless the methods specify otherwise. Different LLMs (or versions) could yield different levels of factuality, verbosity, or scaffold quality.

- Short‑term snapshot. The three‑day window is stronger than immediate recall measures, but it does not reveal cumulative, longitudinal effects of habitual LLM use over months or years — especially the risk of skill erosion if students consistently outsource generative synthesis. Longitudinal follow‑ups are necessary.

- Copying behaviour. The hybrid condition’s benefit depends on students actually doing generative work (paraphrasing notes). Without classroom rules or in‑tool nudges to prevent verbatim copying, the hybrid workflow could become a façade for outsourcing. The study flags this as a realistic risk.

Where claims about model identity, long‑term effects, or cross‑domain generalisability appear in secondary reporting, those should be flagged as provisional and subject to replication and specification checks.

Practical implications — for teachers, IT leaders, and product teams

The study offers immediate, actionable guidance for classrooms and ed‑tech development. Key items below summarise recommended practice and product design aligned with the evidence.

For teachers and schools

- Keep note‑taking central. Make explicit note‑taking instruction (Cornell method, concept maps, self‑explanation prompts) part of lessons before allowing LLM use. The evidence shows note‑taking produces better retention.

- Adopt a hybrid workflow policy. If students use LLMs, require that they:

  - Read the passage and take handwritten or typed notes without an LLM.

  - Use the LLM to clarify or deepen understanding.

  - Paraphrase and annotate any LLM insights in a separate notes section rather than copying them verbatim.

- Design assessments to value process. Require drafts, annotated revision logs, and short oral summaries or in‑class synthesis checks to make generative work visible in grading rubrics. This reduces incentives to outsource the cognitive work to an AI.

- Teach prompt literacy and verification. Show students how to ask LLMs for evidence, ask for sources, and cross‑check facts. Emphasise that model outputs can be helpful scaffolds but are not authoritative.

For IT leaders and procurement

- Prefer managed education editions. Enterprise or education SKUs (with explicit contractual protections around training data and retention) reduce privacy and training‑use risks for minors. Audit retention policies and non‑training clauses in vendor contracts.

- Surface auditability. Ensure conversation logs, prompt histories, and export controls are available to teachers and admins to support assessment of student process and to investigate potential misuse.

- Pilot with PD. Pair tool rollouts with teacher professional development on prompt craft, verification checks, and assessment redesign. Pilots that include PD consistently report better outcomes than ad hoc deployments.
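To make the auditability point concrete, the sketch below shows one possible shape for a per-session log that records the model identity alongside each prompt and reply, so a teacher can review a student's process and an administrator can see which configuration produced which output. It is a minimal illustration under stated assumptions: the `SessionLog` and `ChatTurn` classes and every field name are hypothetical, not any vendor's schema, and real education products expose logs through their own admin tools and retention settings.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class ChatTurn:
    """One student prompt and the model's reply (hypothetical schema)."""
    prompt: str
    response: str
    timestamp: str  # ISO 8601, UTC


@dataclass
class SessionLog:
    """Per-student session record that a teacher or admin could review."""
    student_id: str        # pseudonymous ID rather than a real name
    model_name: str        # exact model/version string, for provenance
    system_prompt_id: str  # which system prompt was active in this session
    turns: list[ChatTurn] = field(default_factory=list)

    def record(self, prompt: str, response: str) -> None:
        """Append one exchange, stamped with the current UTC time."""
        stamp = datetime.now(timezone.utc).isoformat()
        self.turns.append(ChatTurn(prompt, response, stamp))

    def export_json(self) -> str:
        """Serialise the whole session; asdict() also converts nested turns."""
        return json.dumps(asdict(self), indent=2)


if __name__ == "__main__":
    log = SessionLog("s-017", "example-model-v1", "history-tutor-prompt-v1")
    log.record("Why did apartheid end?", "(model reply would appear here)")
    print(log.export_json())
```

Because such logs hold minors' data, the retention and non-training terms above apply to them as well; storing a pseudonymous `student_id` instead of a name is one simple mitigation.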

For product teams and Windows‑centric features

- Build hybrid workflows into note apps. Features that integrate an LLM pane with a forced paraphrase or reflection step before export would align tools with the cognitive mechanism the study highlights. For Windows users, integrations that pair OneNote with Copilot are a logical place for such flows.

- Nudge generative engagement. Implement UI nudges such as “summarise this in your own words before saving” or mandatory student reflections tied to model responses to prevent mindless copying.

- Expose provenance and confidence. Make it clear which model generated a response and whether the output cites verifiable sources or is conjectural. This helps teachers and students judge when to verify.
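A forced-paraphrase nudge needs some way to notice verbatim copying. One simple approach, assumed here rather than taken from any product, is word n-gram (shingle) overlap: measure what share of the three-word sequences in a student's typed note also appear in the LLM's response, and prompt for a rewrite above a threshold. The function names, the trigram size, and the 0.5 threshold are illustrative assumptions, not features of OneNote, Copilot, or any shipping tool, and the check applies only to typed notes (the study's note-taking condition was handwritten).

```python
import re
from typing import Optional


def word_ngrams(text: str, n: int = 3) -> set[tuple[str, ...]]:
    """Lower-cased word n-grams ("shingles") from a piece of text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def copied_fraction(note: str, llm_response: str, n: int = 3) -> float:
    """Share of the note's n-grams that also occur verbatim in the LLM response."""
    note_grams = word_ngrams(note, n)
    if not note_grams:  # too short to judge, so never flag it
        return 0.0
    shared = note_grams & word_ngrams(llm_response, n)
    return len(shared) / len(note_grams)


def paraphrase_nudge(note: str, llm_response: str,
                     threshold: float = 0.5) -> Optional[str]:
    """Return a nudge message if the note mostly repeats the LLM's wording."""
    if copied_fraction(note, llm_response) >= threshold:
        return ("This is very close to the AI's wording. "
                "Try summarising it in your own words before saving.")
    return None


if __name__ == "__main__":
    reply = ("The Cuban Missile Crisis was a confrontation between the "
             "United States and the Soviet Union in October 1962.")
    print(paraphrase_nudge("The Cuban Missile Crisis was a confrontation "
                           "between the United States", reply))
    print(paraphrase_nudge("In 1962 the USA and USSR came close to war "
                           "over missiles placed in Cuba.", reply))
```

Run as a script, the copied note triggers the nudge and the paraphrased one returns `None`. The design nudges rather than blocks: overlap catches copy-and-paste but not light rewording, so it suits a reflection prompt, not an integrity detector.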

Broader policy considerations and risks

The study’s results support a middle path between blanket bans and unregulated adoption. However, scaling hybrid use without governance risks three major harms:

- Skill erosion. Habitual outsourcing of synthesis reduces practice opportunities in paraphrase, explanation, and argumentation, skills essential for higher‑order work. The remedy is curriculum and assessment redesign that requires process evidence.

- Misinformation and hallucinations. LLMs can produce fluent but incorrect claims. Student reliance on unverified model output can propagate errors; verification training is essential.

- Equity gaps. Unequal device access or enterprise licensing can create a two‑tier system where some students gain scaffolded help and others do not. Districts must consider device parity, managed accounts, and inclusive policy to avoid widening disparities.

Where research needs to go next

The Cambridge–Microsoft experiment is an important early randomized classroom trial, but it is not the last word. The authors and independent reviewers identify clear priorities for follow‑up:

- Replication across domains and ages. Run similar randomized trials in STEM, languages, and across younger and older age cohorts to map domain‑specific interactions between LLMs and generative learning strategies.

- Longitudinal studies. Track cohorts across terms and years to detect cumulative skill effects — particularly whether hybrid workflows preserve or erode generative skills with sustained use.

- Interface experiments. Randomized UI tests of product‑level nudges (forced paraphrase, provenance displays, confidence indicators) to quantify whether these design choices preserve retention while keeping LLMs useful.

- Specification transparency. Future trials should clearly specify model version, system prompts, and safety filters so practitioners can replicate and understand how model configuration affects learning outcomes. The current literature sometimes conflates “an LLM” with a consumer branded model; precision here matters.
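Interface experiments of this kind need a way to assign each student to one UI variant and keep that assignment stable across sessions. A common product-testing technique is hash-based bucketing, sketched below; the variant names and the `assign_variant` helper are hypothetical. A formal classroom trial would more likely use a pre-registered random allocation, often at class or school level to limit contamination between variants, so treat this as a product-side sketch rather than a trial protocol.

```python
import hashlib

# Hypothetical UI variants a product-level nudge experiment might compare.
VARIANTS: tuple[str, ...] = ("control", "forced_paraphrase", "provenance_display")


def assign_variant(student_id: str, experiment: str,
                   variants: tuple[str, ...] = VARIANTS) -> str:
    """Map a student to one variant, deterministically, for a named experiment."""
    # Hash the (experiment, student) pair so the same student always gets the
    # same variant within an experiment, but is reshuffled across experiments.
    key = f"{experiment}:{student_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    # Use the first 8 bytes as an unsigned integer; modulo picks the bucket.
    bucket = int.from_bytes(digest[:8], "big") % len(variants)
    return variants[bucket]


if __name__ == "__main__":
    counts = {v: 0 for v in VARIANTS}
    for i in range(900):
        counts[assign_variant(f"s-{i:03d}", "nudge-pilot")] += 1
    print(counts)  # roughly balanced across the three variants
```

Because the experiment name is part of the hashed key, students are reshuffled independently for each new experiment, while repeat visits within one experiment always land in the same bucket.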

Conclusion

The Cambridge University Press & Assessment and Microsoft Research randomized classroom experiment offers a timely and practical verdict: note‑taking remains central to durable learning, and LLMs are best framed as complementary tutors rather than substitutes for the cognitive work that produces memory and understanding. Schools and product teams should take that evidence seriously: teach note‑taking and prompt literacy, design assessments to reward process, deploy managed LLM access with privacy protections, and build UI nudges into study tools so that students paraphrase and reflect rather than copy.

The policy choice is not binary. The data support a hybrid path that preserves the cognitive benefits of human generative work while harnessing LLMs’ power to lower initial access barriers, increase engagement, and stimulate curiosity, provided deployments are accompanied by teacher training, governance, and thoughtful product design. The Cambridge–Microsoft trial gives educators a robust experimental basis for that balanced strategy; the next step is careful implementation, replication across domains, and product innovations that prioritise learning science as much as convenience.
