HM Revenue and Customs is moving Microsoft Copilot from experiment to infrastructure, handing roughly 28,000 staff access to the AI assistant and preparing for more advanced, agentic-style features across the department. The rollout follows a large Whitehall trial in which civil servants reported saving an average of 26 minutes per day, but the same evidence base also warned that generative AI is weaker on complex, nuanced, data-heavy work. For a tax authority that handles some of the UK’s most sensitive citizen and business records, the central question is not whether Copilot can draft a cleaner email, but whether HMRC can govern AI fast enough to match its own ambition.

Background

HMRC has spent years trying to modernize one of the most complex administrative machines in the British state. The department collects tax, administers customs, supports benefits-related functions, runs compliance investigations, and answers millions of taxpayer interactions across channels that still depend on a mixture of modern cloud services and legacy back-end systems. That makes it a natural candidate for AI-assisted productivity, but also one of the least forgiving places to get automation wrong.

The new Copilot deployment sits inside a broader government push to make AI a normal part of public-sector work. Since 2024, Whitehall has moved from speculative AI pilots to a more explicit “scan, pilot, scale” model, with departments encouraged to test commercial tools, gather evidence, and then expand what works. Microsoft 365 Copilot has become one of the most visible examples because it plugs directly into Outlook, Teams, Word, Excel, PowerPoint, SharePoint, and the Microsoft Graph.

The timing matters. HMRC is under pressure to reduce service backlogs, improve digital channels, close the tax gap, and absorb fiscal constraints while maintaining public confidence. Ministers and senior officials see AI as a way to reduce repetitive work without simply cutting service quality, but the department’s history with large technology programs gives skeptics plenty of reason to ask hard questions.

HMRC’s chief AI officer James Mitton has framed the goal in unusually ambitious terms, pitching a future in which the department becomes one of the world’s most AI-enabled tax authorities. That is not merely a software procurement statement. It signals a shift in how the tax authority wants its staff to search, summarize, draft, triage, and potentially coordinate work across a sprawling administrative estate.

From Whitehall Trial to Tax-Office Deployment

The immediate justification for HMRC’s rollout comes from the cross-government Microsoft 365 Copilot experiment run across 12 departments and involving about 20,000 civil servants. Participants reported an average time saving of 26 minutes per day, with strong satisfaction scores and widespread interest in using Copilot for routine administrative work. Those numbers gave ministers and digital leaders a politically useful proof point: AI was no longer just a laboratory curiosity.

What the pilot actually proved

The pilot showed that Copilot can help with tasks that knowledge workers already recognize as time sinks. Summarizing long email chains, drafting meeting notes, turning rough notes into more polished prose, and finding information buried inside Microsoft 365 are exactly the kind of friction points that make civil service work slower than it needs to be. In that sense, the trial validated the idea that ambient AI assistance can reduce small daily burdens.

But the findings were more mixed once the work became more complex. Users and evaluators noted limits around nuanced judgment, data-heavy analysis, and scenarios where accuracy mattered more than speed. That distinction is crucial for HMRC, because the department’s highest-value work often sits precisely in the space where ambiguity, evidence, law, and citizen impact intersect.

The trial’s reported benefits can be summarized in three broad categories:

- Time savings on drafting, summarization, and information retrieval.

- User satisfaction, with many participants saying they would not want to return to pre-Copilot workflows.

- Accessibility gains, especially for staff who benefit from help structuring text or processing large volumes of written material.

The reported limitations fell into three categories of their own:

- Uneven performance in complex or data-intensive work.

- Security concerns around sensitive information and permissions.

- Training dependency, because useful output depends heavily on user skill and organizational guidance.

Why HMRC Is Different From a Normal Office Rollout

A 28,000-license deployment inside a tax authority is not equivalent to putting Copilot into a marketing department, university, or ordinary corporate headquarters. HMRC staff handle taxpayer identities, business filings, payment histories, case notes, investigations, policy documents, legal interpretations, and internal risk analysis. Much of that information may be classified, sensitive, personal, commercially confidential, or operationally delicate.

The tax authority data problem

Microsoft’s enterprise pitch for Copilot emphasizes that it respects existing Microsoft 365 permissions. That is important, but it is not the same as saying that every permission in a large organization is correct. If a SharePoint library is too broadly accessible, Copilot can make that overexposure more visible by retrieving and summarizing information that a user could technically access but might never otherwise have found.

This is the central governance challenge for HMRC. Permission-aware AI can still become an oversharing accelerator if the underlying permission model is untidy. In large departments, legacy collaboration spaces, old project folders, dormant Teams channels, duplicated documents, and broad “everyone” access groups can create risk long before an AI tool enters the picture.

HMRC’s risk is therefore not only hallucination. It is also discovery at scale, where the tool reduces the practical obscurity that once protected badly governed information. Data that was theoretically available but practically buried may become operationally available in seconds.

Key differences from a conventional enterprise rollout include:

- Higher sensitivity because taxpayer data carries legal, financial, and personal consequences.

- More complex accountability because public bodies must justify decisions, not just optimize workflows.

- Legacy dependency because older systems and processes still shape how information is created and stored.

- Regulatory scrutiny because data protection, public law, and parliamentary oversight all apply.

- Uneven data quality because policy, guidance, case material, and operational records may conflict or age at different rates.

Copilot Inside the Microsoft 365 Stack

Microsoft 365 Copilot’s appeal is that it works where civil servants already spend much of their day. Rather than requiring staff to switch to a standalone chatbot, it appears inside familiar apps and uses organizational content through Microsoft Graph. This makes adoption easier, but it also means Copilot inherits the strengths and weaknesses of the department’s existing Microsoft 365 environment.

Permission-aware does not mean risk-free

In theory, Copilot does not grant users access to files, emails, or chats they could not already reach. In practice, many organizations discover during AI readiness reviews that their permissions were broader than leaders assumed. The AI assistant becomes a mirror held up to the tenant.

For HMRC, that mirror may reveal uncomfortable truths about document lifecycle management. Drafts, outdated guidance, duplicated policy notes, partially superseded procedures, and old collaboration folders may all be searchable depending on how they are stored and permissioned. If Copilot is asked to summarize policy or procedural content, it may surface the most available material rather than the most authoritative one.

This is why Microsoft’s ecosystem around Purview, sensitivity labels, retention policies, audit logs, and data loss prevention becomes part of the story. Copilot deployment without data classification is like giving a fast search tool to an archive with missing labels. It may still be useful, but the user must understand that retrieval is not the same as truth.

For Windows and Microsoft 365 administrators watching from the enterprise side, HMRC’s deployment offers several lessons:

- Copilot readiness begins with SharePoint hygiene, not prompt-writing workshops.

- Sensitivity labels matter only when they are consistently applied and enforced.

- Audit trails must be reviewed, not merely collected.

- Users need policy guidance on what should and should not be processed through AI.

- Data owners must be accountable for stale, duplicated, or overexposed content.

- AI governance cannot sit only with IT, because legal, operational, HR, and policy teams all carry risk.
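The oversharing concern behind these lessons can be illustrated with a small sketch. It is not a Microsoft 365 or Purview API call: the `Item` record, the inventory paths, and the contents of `BROAD_GROUPS` are all hypothetical stand-ins for whatever export a real permission review would produce. The idea is only to show the check itself: flag content that carries a restricted label yet is readable by a very broad group.

```python
from dataclasses import dataclass, field

# Hypothetical list of "broad" groups; a real tenant would define its own.
BROAD_GROUPS = {"Everyone", "Everyone except external users", "All Staff"}


@dataclass
class Item:
    """Illustrative inventory record, not a real Microsoft 365 object."""
    path: str
    sensitivity: str                      # label name, e.g. "Official Sensitive"
    groups: set = field(default_factory=set)  # groups with read access


def overshared(items, restricted_labels=frozenset({"Official Sensitive"})):
    """Return items with a restricted label that a broad group can read."""
    return [
        item for item in items
        if item.sensitivity in restricted_labels and item.groups & BROAD_GROUPS
    ]


# Made-up inventory for demonstration only.
inventory = [
    Item("/sites/policy/current-guidance.docx", "General", {"All Staff"}),
    Item("/sites/old-project/case-notes.xlsx", "Official Sensitive", {"Everyone"}),
    Item("/sites/compliance/review.docx", "Official Sensitive", {"Compliance Team"}),
]

for item in overshared(inventory):
    print(item.path)
```

In this made-up inventory only the old project folder is flagged, which mirrors the article's point: the risky content is usually the forgotten material, not the well-managed library.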

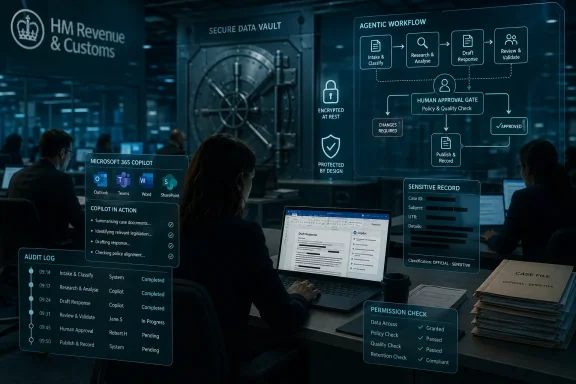

The Agentic Turn

HMRC is not only giving staff a drafting and summarization assistant. The department is preparing to switch on more agentic AI capabilities, which could allow systems to coordinate steps, call tools, query internal information, and support more structured workflows. This is where the story becomes more consequential.

From assistant to semi-autonomous workflow

Agentic features change the user relationship with AI. A chatbot answers a question or drafts a response; an agent may monitor a process, assemble materials, create follow-up actions, or invoke other services under defined constraints. That can be powerful in a department full of repetitive processes, but it also raises the cost of errors.

In tax administration, even small mistakes can cascade. A wrong summary can mislead a caseworker, a misread policy note can shape correspondence, and an incorrect assumption about a taxpayer’s status can create distress or delay. If AI begins nudging workflows rather than merely drafting text, HMRC must define where human judgment is mandatory.

A responsible agentic rollout should move through clear stages:

- Low-risk personal productivity, such as meeting summaries and private drafting.

- Team-level knowledge support, such as finding approved guidance and summarizing internal procedures.

- Workflow assistance, such as preparing case packs or drafting standard responses for review.

- Controlled system actions, such as creating tasks, updating records, or routing work.

- High-assurance decision support, only where testing, auditability, and human oversight are mature.
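The staged approach listed above can be expressed as a simple gate. The sketch below is illustrative only: the stage names paraphrase the list, and the rule that human approval is required from workflow assistance upward is an assumption made for the example, not a stated HMRC policy.

```python
from enum import IntEnum


class Stage(IntEnum):
    """Rollout stages, ordered from lowest to highest risk."""
    PERSONAL_PRODUCTIVITY = 1
    TEAM_KNOWLEDGE = 2
    WORKFLOW_ASSISTANCE = 3
    SYSTEM_ACTIONS = 4
    DECISION_SUPPORT = 5


# Assumption for illustration: sign-off is needed from this stage upward.
APPROVAL_REQUIRED_FROM = Stage.WORKFLOW_ASSISTANCE


def may_proceed(action_stage, enabled_stage, human_approved=False):
    """Allow an action only if its stage is enabled and, where required, approved."""
    if action_stage > enabled_stage:
        return False  # this stage has not been switched on yet
    if action_stage >= APPROVAL_REQUIRED_FROM and not human_approved:
        return False  # stage is enabled but a person must still sign off
    return True


enabled = Stage.WORKFLOW_ASSISTANCE
print(may_proceed(Stage.PERSONAL_PRODUCTIVITY, enabled))
print(may_proceed(Stage.WORKFLOW_ASSISTANCE, enabled))
print(may_proceed(Stage.WORKFLOW_ASSISTANCE, enabled, human_approved=True))
print(may_proceed(Stage.SYSTEM_ACTIONS, enabled, human_approved=True))
```

The design choice worth noting is that approval never unlocks a stage that has not been enabled: human sign-off is a second check, not a way around the staging.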

Productivity Promise and the Measurement Trap

The headline metric from the Whitehall trial, 26 minutes saved per user per day, sounds concrete. Across 28,000 staff, it suggests a striking amount of reclaimed time. But productivity in government is harder to measure than time saved on a calendar.

26 minutes can mean many things

A user may feel faster because Copilot drafts a first version of an email. That does not prove the final output is better, that the saved time is redeployed productively, or that downstream reviewers do not spend more time correcting subtle mistakes. In knowledge work, the difference between faster activity and better outcomes is often hidden.

There is also a risk of self-reported optimism. Staff who enjoy a new tool may perceive savings before the organization can measure whether backlogs, call handling, case throughput, compliance yield, or citizen satisfaction have improved. Perceived productivity is useful evidence, but it is not the same as operational transformation.

HMRC should therefore be judged against harder measures over time:

- Reduced turnaround times for internal drafting and case preparation.

- Improved response quality in taxpayer correspondence.

- Lower rework rates caused by incomplete or inaccurate staff outputs.

- Better knowledge retrieval from approved and current guidance.

- Higher staff satisfaction without hidden overtime or review burdens.

- No increase in data incidents, misdirected information, or inappropriate AI use.

- Clear evidence of redeployed capacity, rather than vague claims of efficiency.
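For scale, the headline figure can be multiplied out. The calculation below assumes, purely for illustration, that every one of the 28,000 licensed users achieves the trial's self-reported average of 26 minutes a day. The `realized_fraction` parameter is a hypothetical knob, not a measured value, for discounting savings that are perceived but never redeployed.

```python
STAFF = 28_000         # licences reported for HMRC
MINUTES_PER_DAY = 26   # self-reported average from the cross-government trial


def reclaimed_hours(staff=STAFF, minutes=MINUTES_PER_DAY, realized_fraction=1.0):
    """Hours per working day, scaled by the share of savings actually realized."""
    return staff * minutes * realized_fraction / 60


total_minutes = STAFF * MINUTES_PER_DAY
print(f"{total_minutes:,} minutes per working day")               # 728,000
print(f"{reclaimed_hours():,.0f} hours per working day")          # 12,133
print(f"{reclaimed_hours(realized_fraction=0.5):,.0f} hours if only half is realized")
```

The upper bound is roughly 12,000 hours a day, but the result moves in direct proportion to the realization assumption, which is exactly the quantity the self-reported trial data cannot establish.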

Enterprise Impact: Compliance, Audit, and Governance

For enterprise IT leaders, HMRC’s move is a case study in what happens when AI becomes a platform-level capability rather than a departmental experiment. A large Copilot deployment forces decisions about identity, access, records management, device security, retention, compliance, and monitoring. It also forces non-technical leaders to confront the state of their information estate.

Governance becomes the product

The value of Copilot depends on whether users can trust the content it retrieves. That trust requires authoritative sources, clear data ownership, and documented controls. Without those foundations, AI can confidently summarize obsolete or conflicting information.

HMRC will need a governance model that distinguishes between personal productivity and operational use. Drafting a meeting agenda carries different risk from summarizing a compliance case. Asking Copilot to rewrite an internal briefing is different from using it to interpret evidence about a taxpayer.

A credible enterprise governance plan should include:

- Role-based AI policies aligned to job function and data sensitivity.

- Mandatory training that covers hallucinations, prompt hygiene, and data handling.

- Approved-source controls for guidance, policy, and procedural information.

- Usage monitoring that detects risky patterns without creating a surveillance culture.

- Incident response procedures for AI-generated errors or data exposure.

- Regular permission reviews across SharePoint, Teams, OneDrive, and mailboxes.

- Independent assurance for high-risk workflows before agentic features expand.

Consumer and Taxpayer Impact

The immediate rollout is internal, but taxpayers will eventually feel the consequences. If HMRC staff can find current guidance faster, summarize complex histories more accurately, and reduce repetitive administrative work, citizens and businesses could benefit from quicker, clearer service. That is the optimistic version.

Better service, if accountability survives

The less optimistic version is that AI-generated text makes official communication more polished without making the underlying decision better. A taxpayer does not need a beautifully drafted wrong answer. They need a correct answer, a transparent explanation, and a way to challenge mistakes.

HMRC must therefore keep a bright line between AI-assisted administration and automated decision-making. If staff use Copilot to draft correspondence, the human reviewer remains accountable. If agents begin preparing case material, the department must be able to show what sources were used, what was excluded, and where human judgment entered the process.

Taxpayer-facing benefits could include:

- Faster replies to routine correspondence.

- Clearer explanations of complex tax rules.

- More consistent internal guidance when staff use approved sources.

- Better accessibility for staff who support citizens with varied communication needs.

- Reduced administrative drag in call handling and follow-up work.

- Improved case preparation when AI summarizes long histories for human review.

Competitive and Market Implications

HMRC’s Copilot rollout is also a signal to the wider software market. Microsoft has spent the past several years positioning Copilot as the default AI layer for organizations already standardized on Microsoft 365. A 28,000-seat deployment inside a major government department strengthens that narrative.

Microsoft’s public-sector flywheel

The public sector is especially valuable to Microsoft because adoption in one large department can shape expectations elsewhere. Once staff learn Copilot inside Outlook, Teams, Word, and SharePoint, rival tools must either integrate with that environment or offer a dramatically better alternative. The more deeply Copilot becomes embedded in workflows, the harder it becomes to displace.

That creates pressure on competitors. Google, OpenAI, Anthropic, AWS, Salesforce, ServiceNow, Oracle, and specialist public-sector AI suppliers all want a role in government transformation, but Microsoft’s advantage is distribution. It already owns the productivity surface where many civil servants work.

The competitive implications include:

- Higher barriers for standalone AI tools that do not integrate with Microsoft 365.

- Greater demand for governance overlays that manage Copilot risk and permissions.

- More public-sector procurement scrutiny around lock-in and resilience.

- Increased pressure on rivals to prove security, sovereignty, and auditability.

- A larger market for AI readiness services, especially SharePoint cleanup and Purview configuration.

- Potential consolidation as departments prefer integrated suites over fragmented AI pilots.

Legacy Systems Still Set the Ceiling

HMRC’s enthusiasm for AI does not erase the department’s legacy technology problem. Tax administration depends on long-lived systems built around rules, records, and processes that predate modern cloud-native design. AI can wrap those systems with a friendlier interface, but it cannot automatically fix fragmented data models or brittle integrations.

AI cannot summarize its way out of bad architecture

There is a temptation in large organizations to use generative AI as a cosmetic layer over unresolved technical debt. If staff struggle to find information, Copilot may help. If the information itself is inconsistent, outdated, or duplicated across systems, Copilot may simply make the inconsistency faster to consume.

This matters because tax work is procedural. Staff need to know not only what a rule says, but whether it is current, how it applies to a taxpayer’s facts, and which internal guidance takes precedence. If AI draws from stale content, the error may look authoritative because the output is fluent.

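HMRC’s modernization challenge therefore has two tracks, staff productivity and core-system modernization, along with the supporting work that both depend on: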

- Improve staff productivity through AI tools embedded in daily work.

- Modernize core systems so that AI can rely on clean, authoritative data.

- Retire obsolete content rather than hoping users can identify it manually.

- Map data lineage so outputs can be traced back to approved sources.

- Invest in APIs and integration rather than relying only on document search.

- Maintain human expertise because tax law cannot be reduced to pattern matching.
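The point about stale content and approved sources can be made concrete with a short sketch. The `GuidanceDoc` fields and the sample titles are hypothetical, chosen only to show the filtering logic; the article does not describe how HMRC stores or versions its guidance. The function keeps only documents that are approved and in force on a given date, so a retrieval layer would have nothing superseded or unapproved to summarize.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class GuidanceDoc:
    """Illustrative metadata record for a piece of internal guidance."""
    title: str
    approved: bool
    effective_from: date
    superseded_on: Optional[date] = None  # None means still in force


def current_authoritative(docs, today):
    """Return approved documents in force on `today`, newest first."""
    live = [
        d for d in docs
        if d.approved
        and d.effective_from <= today
        and (d.superseded_on is None or d.superseded_on > today)
    ]
    return sorted(live, key=lambda d: d.effective_from, reverse=True)


# Made-up documents for demonstration only.
docs = [
    GuidanceDoc("Penalty guidance v1", True, date(2021, 4, 6), date(2024, 4, 6)),
    GuidanceDoc("Penalty guidance v2", True, date(2024, 4, 6)),
    GuidanceDoc("Penalty guidance v3 draft", False, date(2025, 1, 1)),
]

for doc in current_authoritative(docs, today=date(2025, 6, 1)):
    print(doc.title)
```

A filter like this only works if the metadata exists and is maintained, which is why retiring obsolete content and mapping lineage sit alongside the AI tooling rather than behind it.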

The Staff Dimension

AI adoption in government is never just a technology story. It changes how staff write, search, decide, learn, and demonstrate competence. For HMRC’s 28,000 licensed users, Copilot may become a daily colleague, but also a new source of ambiguity about what good work looks like.

Augmentation, not headcount magic

Senior leaders often say AI will augment staff rather than replace them, and that is probably the right framing for HMRC in the near term. The department’s workload is too varied, legally constrained, and citizen-facing to be solved by simply removing people from the loop. However, augmentation still changes job design.

If Copilot handles first drafts and summaries, staff may spend less time producing raw text and more time reviewing, verifying, and applying judgment. That sounds positive, but it requires training. Reviewing AI output is a different skill from writing from scratch, and subtle errors can be harder to catch when text is fluent and plausible.

Staff impact will vary by role:

- Caseworkers may benefit from faster summaries but need strong verification habits.

- Policy teams may draft and compare documents more quickly but must guard against invented nuance.

- Customer-service staff may use AI to prepare responses but remain accountable for tone and accuracy.

- Managers may gain meeting summaries and planning support but risk over-relying on compressed context.

- Technical teams may face heavier governance, monitoring, and support demands.

- Data protection officers may see AI become a permanent part of compliance workload.

Strengths and Opportunities

HMRC’s Copilot deployment has genuine upside if the department keeps the technology focused on appropriate work and pairs it with serious governance. The opportunity is not that AI will magically understand the tax system, but that it can reduce friction around writing, summarizing, searching, and preparing material for human judgment.

- Administrative relief could free staff from repetitive drafting, meeting notes, and inbox triage.

- Faster knowledge retrieval could help employees find relevant internal material more quickly.

- Improved accessibility could support staff who benefit from assistance with structure, language, or summarization.

- Better consistency could emerge if Copilot is grounded in approved, current guidance.

- Scalable training could help new staff understand processes through guided explanations and summaries.

- Operational intelligence could improve if AI highlights patterns in workload, correspondence, and process bottlenecks.

- Modernization momentum could force long-delayed cleanup of permissions, documents, and legacy collaboration spaces.

Risks and Concerns

The risks are equally real, and they are amplified by HMRC’s role as a tax authority. Copilot does not need to leak information publicly to cause harm; it only needs to surface the wrong information to the wrong authorized user, produce a misleading summary, or normalize reliance on outputs that are not fully checked.

- Oversharing risk remains high if Microsoft 365 permissions are broader than intended.

- Hallucinated or distorted summaries could influence staff judgment in subtle ways.

- Stale guidance may be retrieved and repackaged as if it were current.

- Automation bias could lead users to trust fluent output more than evidence warrants.

- Audit complexity may increase as AI-assisted drafting becomes part of official workflows.

- Vendor lock-in could deepen as Copilot becomes embedded in daily operations.

- Public trust could suffer if taxpayers believe decisions are being shaped by opaque AI systems.

What to Watch Next

The next phase will reveal whether HMRC is treating Copilot as a controlled productivity tool or as the first layer of a deeper operational AI platform. The distinction matters because drafting support can be governed with training and policy, while agentic workflow requires stronger assurance, testing, logging, and escalation paths. HMRC’s public messaging should become more specific as the deployment matures.

Watch especially for whether the department publishes clearer boundaries for use with Official Sensitive material. If Copilot is allowed into sensitive workflows, HMRC will need to explain the safeguards around permissions, labels, retention, audit, and human review. The public will also need reassurance that AI is not quietly changing how tax decisions are made.

Several indicators will show whether the rollout is succeeding:

- Measured operational outcomes, not just self-reported time savings.

- Clear AI usage policies for sensitive, taxpayer-related, and casework material.

- Evidence of permission cleanup across SharePoint, Teams, and OneDrive.

- Transparent incident reporting if AI errors or data issues occur.

- Careful staging of agentic features with human approval gates.

- Independent scrutiny from auditors, Parliament, or data protection specialists.

HMRC’s move is best understood as a high-stakes test of whether public-sector AI can graduate from pilot enthusiasm to operational discipline. The potential benefits are obvious: less administrative drag, faster access to knowledge, and better use of skilled staff time. But the department’s real achievement will not be handing out 28,000 licenses; it will be proving that AI can support tax administration without weakening accuracy, accountability, or public trust.

Source: theregister.com UK tax authority hands 28,000 staff an AI copilot