Microsoft’s recent public foray into high‑temperature superconductors (HTS) for datacenter power delivery represents more than a laboratory novelty — it is a deliberate engineering bet that the next generation of cloud-scale compute will require fundamentally different approaches to electricity delivery, and that superconducting cable systems can unlock capacity, density, and community‑friendly siting that copper and aluminum simply cannot sustain at hyperscale.

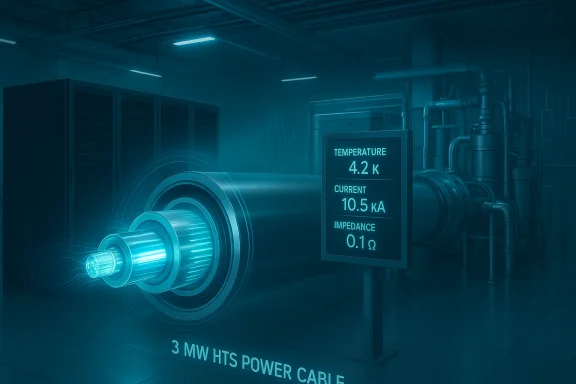

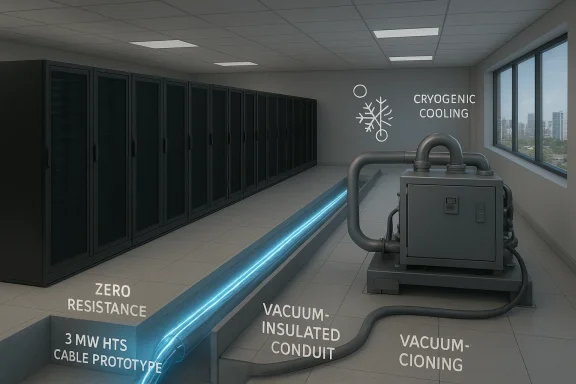

Background

High‑temperature superconductors are materials that, when cooled below a characteristic critical temperature, conduct electricity with essentially zero resistance. That physical property eliminates resistive losses and heat generation in the conductor itself, which in practical deployments translates into markedly higher current densities in much smaller cross‑sections compared with conventional copper or aluminum conductors. Recent reviews of HTS technology also emphasize that while HTS still requires cryogenic refrigeration, the operating temperature range is far more accessible (liquid nitrogen temperatures around 65–77 K) than legacy low‑temperature superconductors that need liquid helium.

Microsoft’s Azure engineering teams have publicly outlined how HTS cables could be used inside and around datacenters to ease capacity constraints, shrink the physical footprint of power corridors, and reduce the local impacts of heavy electrical infrastructure, while supporting the rising energy demands of AI workloads. Industry deployments over the last two decades, from Long Island’s transmission‑level HTS demonstration to the Chicago REG urban resilience project, show that the technology works at scale in grid applications, and have informed modern expectations for capacity and compactness.

Overview: Why superconductors now matter for datacenters

Datacenters are power‑dense facilities. The last five years of architectural changes (denser racks, liquid cooling, and accelerator‑heavy designs) have pushed per‑rack and per‑campus power requirements into regimes where sourcing additional feeders, expanding substations, or widening rights‑of‑way becomes the limiting factor for growth. Superconducting cables directly address three linked problems:

- Capacity limits at existing voltage levels. HTS lines can carry an order of magnitude more current than conventional conductors at the same voltage, enabling far more power through the same conduit footprint.

- Physical footprint and community impact. Because HTS cables are compact and can be buried with smaller trenches or routed in constrained ductwork, they reduce the visual, construction, and land‑use friction that typically accompanies new overhead lines or big substations, a major benefit in urban or suburban siting disputes.

- Thermal and electrical losses. Zero‑resistance conductors eliminate Joule heating in the cable itself. The result is lower local heat rejection needs and reduced voltage drop across feeders, improving power quality and reducing the need for oversized conductors.

What Microsoft announced — the engineering highlights

Microsoft’s Azure operations group published a technical overview describing prototype work with HTS power delivery into datacenter racks and campuses, including factory tests of a 3 MW HTS cable and a rack‑level HTS power prototype. The post frames HTS as part of a broader power‑network‑thermal triad of innovations (alongside hollow‑core fiber and microfluidic cooling) intended to support AI workloads at scale.

Independently reported coverage of the commercial startups involved, notably VEIR, a Microsoft Climate Innovation Fund portfolio company, confirms a roughly 3 MW low‑voltage HTS cable system prototype targeted at datacenter use, with pilots planned and an expectation of broader commercialization in the mid‑to‑late 2020s. Those vendor roadmaps are explicit: pilot in datacenter environments first (where deployment cycles and customer demand move quickly), with transmission‑line and utility‑side rollouts following more cautiously.

Why a 3 MW cable matters in practical terms: a single, compact HTS feeder carrying multiple megawatts reduces the need for parallel copper feeders, large high‑voltage switchgear, or multi‑transformer substations inside the datacenter perimeter. That can accelerate commissioning times, shrink seismic and thermal footprints, and reduce civil works costs when compared with replicating conventional feeder networks.
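To make the feeder-consolidation point concrete, the following is a rough arithmetic sketch rather than a design calculation. The 3 MW figure comes from the text; the 800 V delivery voltage and the 400 A per-feeder copper ampacity are hypothetical placeholders chosen only for illustration, and the functions are illustrative helpers, not part of any vendor tool.

```python
import math


def feeder_current_a(power_w: float, voltage_v: float) -> float:
    """Current needed to deliver power_w at voltage_v (DC, or AC at unity power factor)."""
    return power_w / voltage_v


def parallel_feeders_needed(current_a: float, ampacity_a: float) -> int:
    """Number of parallel conventional feeders needed to carry current_a."""
    return math.ceil(current_a / ampacity_a)


POWER_W = 3_000_000                # 3 MW, the prototype rating cited in the text
ASSUMED_VOLTAGE_V = 800.0          # hypothetical low-voltage level (assumption)
ASSUMED_COPPER_AMPACITY_A = 400.0  # hypothetical rating of one copper feeder (assumption)

current = feeder_current_a(POWER_W, ASSUMED_VOLTAGE_V)
feeders = parallel_feeders_needed(current, ASSUMED_COPPER_AMPACITY_A)
print(f"{current:.0f} A total, about {feeders} parallel copper feeders under these assumptions")
```

Under these placeholder values, the low-voltage run has to carry several thousand amperes, which is the regime where a single compact conductor is most attractive. Real ampacity depends on conductor size, installation method, and local electrical code.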

Technical reality check: physics, materials, and cooling

HTS does not mean “no engineering tradeoffs.” The key technical realities:

- Materials and operating temperature. Modern HTS cables use rare‑earth barium copper oxide (REBCO, of which YBCO is the best‑known member) or bismuth‑based compounds (BSCCO); both are superconducting at liquid‑nitrogen temperatures, which simplifies cryogenics relative to liquid‑helium systems but still demands robust refrigeration and thermal shielding.

- AC losses and magnetic effects. Alternating current introduces hysteresis and coupling losses in superconducting tapes and cable architectures. Recent engineering work (patterned tapes, multi‑filament designs, CORC and Roebel configurations) reduces AC losses significantly, but these factors must be accounted for in an operational efficiency model.

- Cryogenics consumes energy. Cooling the cable to cryogenic temperatures requires continuous refrigeration. Modern cryocoolers for HTS at ~77 K have improved efficiency, and larger pulse‑tube or Stirling‑type systems can reach kilowatt‑class cooling capacity, but the refrigeration load is non‑trivial and must be compared against resistive loss savings to compute net energy benefits. Published engineering studies estimate that cryocooler systems can be sized to yield favorable paybacks at megawatt throughput and when cable lengths and duty factors are optimized.
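The comparison between refrigeration load and avoided resistive loss can be sketched as a simple power balance. The Carnot limit used here is standard thermodynamics; everything else (cable length, copper resistance, cryostat heat leak, termination load, and the cooler reaching 20% of Carnot efficiency) is a hypothetical placeholder, not measured or vendor data. AC losses in the superconductor are left as a parameter set to zero.

```python
def carnot_specific_power(t_cold_k: float, t_warm_k: float = 300.0) -> float:
    """Ideal electrical watts needed per watt of heat lifted from t_cold_k to t_warm_k."""
    return (t_warm_k - t_cold_k) / t_cold_k


def refrigeration_input_w(heat_load_w: float, t_cold_k: float,
                          carnot_fraction: float, t_warm_k: float = 300.0) -> float:
    """Electrical input for a real cooler that achieves carnot_fraction of the ideal."""
    return heat_load_w * carnot_specific_power(t_cold_k, t_warm_k) / carnot_fraction


def copper_loss_w(current_a: float, resistance_ohm_per_m: float,
                  length_m: float, conductors: int = 2) -> float:
    """I^2 R loss summed over the go and return conductors of a copper feeder."""
    return conductors * current_a ** 2 * resistance_ohm_per_m * length_m


# All inputs below are hypothetical placeholders (assumptions for illustration).
LENGTH_M = 100.0
CURRENT_A = 3750.0
COPPER_OHM_PER_M = 5e-6       # assumed effective resistance of the copper alternative
CRYOSTAT_LEAK_W_PER_M = 2.0   # assumed heat leak into the cryostat per metre
TERMINATION_LOAD_W = 200.0    # assumed heat load from both terminations
HTS_AC_LOSS_W = 0.0           # set to a modelled value for AC operation
CARNOT_FRACTION = 0.20        # assumed cooler efficiency relative to Carnot

heat_load = CRYOSTAT_LEAK_W_PER_M * LENGTH_M + TERMINATION_LOAD_W + HTS_AC_LOSS_W
cooling_power = refrigeration_input_w(heat_load, t_cold_k=77.0,
                                      carnot_fraction=CARNOT_FRACTION)
avoided_loss = copper_loss_w(CURRENT_A, COPPER_OHM_PER_M, LENGTH_M)
net_saving_w = avoided_loss - cooling_power
print(f"avoided copper loss {avoided_loss:.0f} W, cooling input {cooling_power:.0f} W, "
      f"net {net_saving_w:.0f} W")
```

The sign of the result depends heavily on current, run length, and utilization: at low current or short duty cycles the fixed cryogenic load can exceed the resistive loss it replaces, which is why the comparison has to be made per installation.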

Field precedent: what utilities and cities have learned

Superconducting cable projects are not untested concepts; they have been demonstrated successfully in several grid and urban projects:

- The Long Island Power Authority (LIPA) project in 2008 was the world’s first transmission‑voltage HTS cable in a production grid, rated at hundreds of megawatts and intended to relieve a congested corridor without new overhead lines. That deployment proved the technical concept for transmission‑level HTS.

- The Chicago REG (Resilient Electric Grid) project used an HTS cable to interconnect substations in dense urban environments, delivering ~62 MVA at 12 kV while minimizing disturbance in a crowded right‑of‑way — an instructive demonstration for the community‑impact argument.
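As a quick check on what the Chicago figures imply, the line current can be estimated from the standard three-phase relation I = S / (√3 · V). This sketch assumes the quoted 12 kV is a line-to-line voltage on a balanced three-phase circuit, which the text does not state explicitly.

```python
import math


def three_phase_line_current_a(apparent_power_va: float, line_voltage_v: float) -> float:
    """Line current of a balanced three-phase circuit: I = S / (sqrt(3) * V_line)."""
    return apparent_power_va / (math.sqrt(3) * line_voltage_v)


REG_POWER_VA = 62e6    # ~62 MVA, as cited in the text
REG_VOLTAGE_V = 12e3   # 12 kV, assumed to be line-to-line (assumption)

current = three_phase_line_current_a(REG_POWER_VA, REG_VOLTAGE_V)
print(f"approximate line current: {current:.0f} A per phase")
```

A result on the order of three thousand amperes per phase at distribution voltage illustrates why such a link is hard to build with a single conventional cable in a crowded right-of-way.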

What HTS changes in datacenter architecture

If HTS becomes commercially viable at datacenter scale, expect to see at least three architecture shifts:

- Feeder consolidation and simplified substations. A small set of HTS feeders can replace large numbers of parallel copper feeders, reducing substation real estate and enabling compact power houses inside campus boundaries. This reduces civil and permitting timelines in many jurisdictions.

- Higher rack‑level power delivery with smaller cables. HTS allows more power to be delivered into the rack or pod with much smaller physical conductors, enabling denser compute packing and potentially simpler busbar designs within the building. Microsoft’s rack prototype tests illustrate these internal power distribution opportunities.

- Distributed resilience and fault‑current behavior. HTS systems can be designed with integrated fault‑current limiting behavior, and their compactness makes it easier to create redundant, physically separated feedpaths — a resilience benefit both for datacenters and the local grid. Utilities and vendors have explored superconducting fault‑current limiters (SFCLs) as complementary devices, and these can be co‑packaged with cable systems.

Economics and deployment timeline: realistic expectations

The cost model for HTS is multi‑dimensional:

- Upfront capital costs. HTS wire is still more expensive per meter than copper at commodity prices, although mass‑production improvements and new tape geometries have reduced unit costs appreciably in recent years. The Azure post and market analyses both note that manufacturing and economies of scale have reached an inflection point where HTS becomes commercially justifiable for targeted, high‑value applications.

- Operational costs. The cryogenic refrigeration load is continuous, and while modern cryocoolers have improved efficiency, the energy consumed to maintain 65–77 K offsets some of the transmission losses saved by moving away from copper. Precise TCO depends on local electricity prices, load profiles, and the length and utilization of the cable runs.

- Time to market. VEIR and similar companies are positioning datacenter pilots in the near term (pilots over the next few years, commercialization toward the late 2020s), while grid‑level, long‑distance deployments, which require longer stakeholder timelines and utility approvals, are expected to follow. Microsoft’s public testing and VEIR’s prototype plans align with a staged adoption model: datacenter pilots first, then utility adoption at scale.

Safety, reliability, and community considerations

Superconducting systems pose unique reliability considerations that datacenter operators must manage:

- Cryogenic system reliability. Cryocoolers and liquid‑nitrogen systems have matured, but they introduce new single points of failure such as refrigeration skids and vacuum jacket integrity. Redundancy in cryogenics becomes critical for any feed that, if lost, could force load shedding or trigger protective devices.

- Protection and fault behavior. Utilities and integrators have converged on SFCL designs and termination techniques that mitigate fault energy and enable predictable protection coordination. Integration with datacenter protection schemes (e.g., PV/UPS, generator paralleling) requires careful design and testing.

- Community impact and permitting. HTS can reduce visible infrastructure, trench widths, and construction timelines, which is a tangible benefit for community acceptance. Projects like Chicago’s REG and earlier LIPA and Manhattan tests demonstrate that HTS lines can be installed with minimal disruption compared to major overhead projects. But every community and permitting authority is different; HTS still requires safety documentation and handling protocols that local regulators must accept.
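The case for cryogenic redundancy can be illustrated with a standard k‑of‑n availability calculation. This is a minimal sketch that assumes refrigeration units fail independently and share no common-cause failure modes (an optimistic simplification); the 99% per‑unit availability is a hypothetical placeholder, not a vendor figure.

```python
from math import comb


def k_of_n_availability(k: int, n: int, unit_availability: float) -> float:
    """Probability that at least k of n independent units are available."""
    a = unit_availability
    return sum(comb(n, i) * a ** i * (1 - a) ** (n - i) for i in range(k, n + 1))


UNIT_AVAILABILITY = 0.99  # hypothetical availability of one refrigeration skid (assumption)

# Compare a cable that needs two running coolers with and without one spare.
no_spare = k_of_n_availability(2, 2, UNIT_AVAILABILITY)
one_spare = k_of_n_availability(2, 3, UNIT_AVAILABILITY)
print(f"2-of-2: {no_spare:.6f}, 2-of-3 (N+1): {one_spare:.6f}")
```

Shared dependencies such as a common vacuum jacket, chilled-water supply, or control system would lower the real figure, so a calculation like this is better read as an upper bound when negotiating refrigeration uptime in service agreements.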

Operational playbook: how datacenter operators should approach HTS pilots

For CTOs and infrastructure owners considering HTS, a pragmatic pilot roadmap reduces risk and produces measurable decision points:

- Pilot selection. Choose constrained, high‑value runs where civil works costs or permitting timelines are the true gating factor for capacity expansion.

- System partnership. Work with an HTS integrator (cable manufacturer + cryogenics supplier + systems integrator) and insist on full‑life‑cycle SLAs that include refrigeration uptime, leak detection, and spare module provisioning.

- Energy audit. Model the full energy picture: cable loss avoidance, refrigeration load, PUE changes due to reduced local heat rejection, and maintenance overheads.

- Protection and commissioning. Conduct staged commissioning tests integrating SFCL, switchgear, UPS, and generator failover scenarios to validate operational assumptions under fault and maintenance conditions.

- Community and regulatory plan. Build public communications and permitting packages that highlight the smaller trench footprints, reduced visual impact, and faster construction timelines as community benefits.

Strengths, risks, and who wins

Strengths:

- High effective capacity density. HTS allows megawatts through dramatically smaller conductors and conduit, easing siting and expansion constraints.

- Lower local environmental and visual impact. Smaller trenches and reduced need for overhead lines can make datacenter expansions more community friendly.

- Technical maturity for targeted applications. Decades of R&D plus operational grid projects demonstrate that HTS systems can function reliably when engineered and maintained correctly.

Risks:

- Cryogenic operational dependence. Refrigeration is a continuous load and an operational dependency; its energy cost and reliability profile are central risk items.

- Capital and supply chain. While manufacturing costs are improving, HTS wire and specialized terminations are still higher cost items, and ramping supply to hyperscaler volumes will require industrial scale‑up.

- Integration complexity. Protection schemes, fault coordination, and physical maintenance practices differ from conventional copper systems; organizations must accept a new operational learning curve.

Who wins:

- Hyperscalers and large campus operators who face dense urban siting constraints or near‑term capacity pinch points are the most likely early winners, since they can justify the premium through faster deployment and avoided civil works.

- Vendors that can offer integrated, SLA‑backed cryogenics plus cable systems, with proven AC‑loss mitigation and service networks, will capture first market share.

- Utilities will benefit when HTS is applied to constrained urban circuits or resilience projects where rights‑of‑way and siting block conventional upgrades.

What to watch next

- Pilot results and metrics. Look for published pilot energy comparisons (net kWh saved vs. copper alternatives), refrigeration reliability statistics, and lifecycle O&M costs from VEIR, Microsoft, and utility pilots in 2026–2028.

- Manufacturing scale announcements. Wire cost reductions and longer continuous‑length tape manufacturing are the linchpin that will shift HTS from niche to mainstream. Watch for supplier capacity increases and new REBCO tape fabs.

- Standards and protection practices. As SFCLs and HTS terminations mature, standards bodies and utilities will publish more prescriptive protection and commissioning procedures — a key enabler for broader utility adoption.

Conclusion

Microsoft’s public engagement with high‑temperature superconductors reframes an enduring infrastructure problem: as AI and data‑intensive workloads continue to consume rising amounts of power, the constraints are becoming less about compute and more about how we get reliable, high‑quality electricity to dense compute clusters quickly and with minimal community impact. HTS is not a silver bullet, but it is a high‑value tool in the infrastructure toolbox, particularly where right‑of‑way, civil works, and rapid capacity are the dominant constraints.

The technical foundation is sound: superconductors carry far more current per cross‑section and have been proven in grid pilots that tackled the most difficult siting and capacity problems. The practical barriers (refrigeration energy, AC losses, manufacturing scale, and integration complexity) are real but addressable with careful design, vendor partnerships, and staged pilots. For datacenter operators, the smartest path is an engineering‑led pilot program that quantifies the full lifecycle tradeoffs and locks down service agreements for the new cryogenic dependency. Early adopters will gain not only immediate capacity relief, but also an operational playbook that could define how power gets to the racks in the AI era.

Microsoft and its partners are now testing those operational assumptions in the field. The next two years of pilot data will show whether HTS becomes a specialized solution for constrained, high‑value corridors or the basis of a broader re‑thinking of datacenter power distribution at hyperscale.

Source: azure.microsoft.com Superconductors in datacenters: A breakthrough for power infrastructure