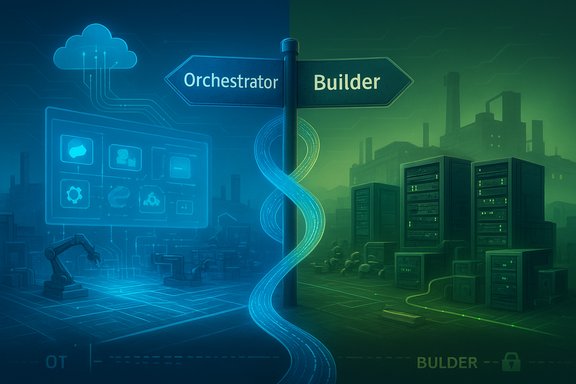

The industrial cloud has split into two distinct strategic highways: one headed toward centralized orchestration and workforce augmentation, and the other toward customizable infrastructure, model independence, and on‑prem control. This “Great Bifurcation” — an Orchestrator path led by Microsoft and a Builder path driven by AWS — matters because it changes not just procurement decisions, but how industrial organizations structure data fabrics, secure OT/IT boundaries, and design agentic workflows that will actually run factories, fleets, and supply chains at scale.

Source: ARC Advisory The Industrial AI Evolution: The Great Bifurcation—Orchestrator vs Builder

Background / Overview

The last two enterprise AI waves were defined by model supply and cloud scale; the current shift is about architectural philosophy. Microsoft’s product moves at Ignite and the broader Copilot/Foundry narrative emphasize an integrated control plane: identity-bound Copilots and agent registries, governance primitives, and tight coupling with Microsoft 365 and Dynamics workflows. AWS, by contrast, is building a modern “Builder’s OS” for industrial organizations: an ecosystem that prizes model and infrastructure choice, edge-built compute appliances, and purpose-built racks that bring cloud-grade training and inference into customer data centers. These two philosophies are no longer abstract differences; they produce materially different outcomes for sovereignty, vendor lock-in, operational risk, and long-run differentiation.

Industrial Foundation Models: Integration vs. Independence

The divergence in model strategy

At the heart of AI-enabled industrial transformation is the question: which models, and how many? Microsoft’s Azure AI Foundry and Copilot ecosystem have expanded to host multiple vendor models (Anthropic, Cohere, OpenAI and others), but the operational experience, the “golden path,” and many of the prebuilt Copilot experiences still favor deep integration with Azure OpenAI and Microsoft’s orchestration primitives. That integration reduces time-to-value for knowledge-worker and cross-team scenarios, making it attractive when tight governance and fast enterprise rollout are the priority.

AWS’s posture is intentionally agnostic. Amazon Bedrock exposes a broad portfolio of models, from Anthropic Claude to Meta’s Llama and Mistral, alongside AWS’s Nova family, and builds tooling (distillation, customization) so teams can choose or compose the right model for each task. For industrial workloads that span high-volume telemetry, low-latency edge inference, and occasional heavy reasoning, the “right model for the right task” approach can produce meaningful cost and performance advantages. Amazon Bedrock’s model lifecycle and distillation features make it straightforward to deploy smaller, cheaper distilled “student” models for massive inference workloads, while retaining larger “teacher” models for complex reasoning.

What industrial teams must weigh

- Speed vs. freedom: pre-integrated Copilot stacks shorten implementation time. Model-agnostic stacks preserve competitive differentiation and permit testing/benchmarking across models.

- Cost profile: distilled or server‑local models can reduce inference spend for telemetry-heavy production use cases.

- Governance and reproducibility: enterprise auditors and regulators care about model lineage, access controls, and reproducible evaluation. The orchestration-first approach bundles those primitives; builder-first stacks require engineering discipline to match them.

Practical guidance

- Map workloads to model characteristics: use small, optimized models for sensor‑stream summarization and larger reasoning models for cross‑plant diagnostics.

- Pilot multi-model routing: run the same task against two or three candidate models and record cost, latency, and accuracy.

- Require model portability contracts: insist on exportable artifacts, model weights where possible, and documented retry/rollback plans for model upgrades.
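The multi-model pilot described above can be sketched as a small comparison harness. This is a minimal illustration, not a vendor API: `Candidate`, `benchmark`, the stub model functions, the per-call prices, and the two test cases are all hypothetical placeholders. In a real pilot, `predict` would wrap whichever SDK call your platform provides, and accuracy would be scored with a task-appropriate metric rather than exact string match.

```python
import time
from dataclasses import dataclass
from statistics import mean
from typing import Callable, Sequence


@dataclass
class Candidate:
    name: str
    predict: Callable[[str], str]  # hypothetical wrapper around a model call
    cost_per_call_usd: float       # assumed flat per-call price, for illustration


@dataclass
class Result:
    name: str
    accuracy: float
    mean_latency_s: float
    total_cost_usd: float


def benchmark(candidates: Sequence[Candidate],
              cases: Sequence[tuple[str, str]]) -> list[Result]:
    """Run every (prompt, expected) case against every candidate model."""
    results = []
    for cand in candidates:
        latencies, correct = [], 0
        for prompt, expected in cases:
            start = time.perf_counter()
            answer = cand.predict(prompt)
            latencies.append(time.perf_counter() - start)
            # Exact-match scoring is a simplification for this sketch.
            correct += int(answer.strip().lower() == expected.strip().lower())
        results.append(Result(
            name=cand.name,
            accuracy=correct / len(cases),
            mean_latency_s=mean(latencies),
            total_cost_usd=cand.cost_per_call_usd * len(cases),
        ))
    return results


# Stub models standing in for real endpoints (assumptions, not real services).
def small_model(prompt: str) -> str:
    return "normal"


def large_model(prompt: str) -> str:
    return "fault" if "vibration high" in prompt else "normal"


CASES = [
    ("pump 7: vibration high, temp rising", "fault"),
    ("pump 3: all readings nominal", "normal"),
]

RESULTS = benchmark(
    [Candidate("small-stub", small_model, 0.0002),
     Candidate("large-stub", large_model, 0.004)],
    CASES,
)

for r in RESULTS:
    print(r)
```

Recording all three numbers per candidate in one structure makes the trade-off explicit: a cheaper model that misses faults may still be the wrong choice for a diagnostics workload.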

The Business Apps Wedge: Partner or Competitor?

Microsoft’s advantage in the enterprise fabric

Microsoft’s strength is its entrenched role as the digital nervous system of the white-collar enterprise: Teams, Outlook, SharePoint, and Dynamics are where workflows, approvals, and engineering communications live. Embedding Copilots and agents into that fabric gives Microsoft a natural orchestration layer across business processes that matter to industrial leaders: procurement, EHS, field service, and engineering change control. That makes Microsoft both an attractive partner and a direct competitor to vendors that sell vertically oriented ERP, FSM, and industry suites. The practical consequence is complex vendor dynamics: industrial software vendors may gain deep operational integration with Microsoft while simultaneously competing against it in boardroom selection processes.

AWS’s neutrality and the “Switzerland” stance

AWS has chosen a different lane: provide robust infrastructure and open, neutral services that let ISVs and systems integrators build and own the application layer. It does not sell ERP or CRM as its primary offering, which reduces direct application-layer competition with customers and independent software vendors. That neutrality makes AWS an attractive host for vendor-built industrial apps, but it shifts responsibility to customers and partners for application-level governance and orchestration. In short: Microsoft offers an integrated business apps wedge; AWS offers a flexible foundation for builders.

Business implications for CIOs/CDOs

- If your transformation depends on rapid rollout across knowledge workers and you already run large Microsoft estates, the orchestration route shortens internal adoption friction.

- If your competitive edge is in product‑ or process‑level differentiation — custom ML models, proprietary digital twins, or novel control logic — the builder path preserves engineering flexibility.

- Hybrid choices exist: run business‑facing copilots in Microsoft 365 while keeping heavy industrial model training and edge inference on AWS or on-prem systems.

Compute and Sovereignty: Software Control vs. Integrated Hybrid Infrastructure

Microsoft’s software‑defined hybrid approach

Microsoft’s hybrid story centers on a software control plane: Azure Arc, Azure Local (previously Azure Stack HCI), and a fabric that extends policy, identity, and telemetry to on-prem infrastructure. This approach treats local hardware as managed cloud resources and makes hybrid operational management consistent with Azure tooling. It is attractive where customers want a single control plane and a predictable update path across distributed sites.

AWS’s physical approach: AI Factories and Trainium

AWS has moved into a physically integrated hybrid play with AWS AI Factories: pre-integrated racks that combine AWS Trainium accelerators and NVIDIA GPUs, specialized networking, and storage, designed to be deployed in customer data centers. This “box-in-the-rack” model prioritizes time-to-deploy, performance, and physical sovereignty where legal, security, or latency constraints require compute to remain strictly local. The difference matters: some industrial and defense workloads cannot tolerate remote control planes or ambiguous data residency; they require hardware that is physically present and optimized for large-scale training and secure inference.

How to choose: a practical rubric

- Regulatory/residency constraints: choose physical local compute if law or defense classification forbids data export.

- Latency and determinism: sub‑10ms inference guarantees favor purpose‑built on‑prem appliances.

- Operational model maturity: if your IT/OT teams lack cloud‑native tooling, a software control plane that unifies policies may reduce operational risk.
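The rubric above can be written down as an explicit decision function so that site assessments are applied consistently. The sketch below is illustrative only: `SiteProfile`, its field names, and the order in which the three checks are evaluated (residency first, then latency, then team maturity) are assumptions, not something the rubric itself prescribes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SiteProfile:
    data_export_forbidden: bool      # law or classification forbids data export
    required_latency_ms: float       # tightest inference latency the site needs
    cloud_native_ops_maturity: bool  # IT/OT teams already run cloud-native tooling


def recommend_compute(site: SiteProfile) -> str:
    """Apply the three rubric checks in an assumed order of precedence."""
    if site.data_export_forbidden:
        return "on-prem physical compute"
    if site.required_latency_ms < 10:
        return "on-prem inference appliance"
    if not site.cloud_native_ops_maturity:
        return "software control plane"
    return "either; decide on cost and pilot results"


print(recommend_compute(SiteProfile(True, 50.0, True)))
print(recommend_compute(SiteProfile(False, 5.0, True)))
print(recommend_compute(SiteProfile(False, 50.0, False)))
```

Encoding the precedence in code forces the team to agree on which constraint wins when a site hits more than one of them.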

Checklist before procurement

- Validate SLAs for on‑prem racks (support, spare parts, remote diagnostics).

- Demand clear data flow diagrams and attestations about telemetry and control-plane connectivity.

- Require independent benchmarks and pilot runs using your telemetry and models.

Robotics and IoT: The Legacy Incumbent vs. the Modern Innovator

The industry moment: RoboMaker’s exit and what it signals

AWS’s decision to discontinue RoboMaker effective September 10, 2025, with migration guidance toward containerized workflows on AWS Batch, is a clear signal that robotics innovation is shifting from monolithic vendor-managed services to containerized, pipeline-driven developer ecosystems. AWS’s own documentation and public notices confirm the platform’s end of support and migration path recommendations. That transition lets AWS focus on infrastructure primitives (compute, simulation orchestration, data pipelines) rather than a single robotics runtime.

Microsoft’s legacy on the factory floor, deriving from Windows Embedded and Windows IoT lineage, gives it enormous reach into devices and operators’ user interfaces. That footprint buys Microsoft scale in data and control points but also creates a maintenance burden: older devices, drivers, and update processes make rapid innovation at the edge harder. Meanwhile, AWS sidesteps legacy OS constraints and invests where scale matters (telemetry ingestion, fleet orchestration, and simulation-as-container workflows), letting partners and ISVs own device-level innovation.

The pragmatic industrial robotics stack in 2026

- Edge compute: purpose‑built inference appliances or integrated AI Factory racks for heavy on‑site training.

- Fleet orchestration: containerization, orchestration pipelines, and observability to run simulation, training, and validation workloads.

- Device runtime: either a vendor-managed OS (legacy Windows IoT) or modern containerized runtimes (ROS 2, containerized microservices).

Risks and operational realities

- Legacy device sprawl: Microsoft‑centric estates still require expensive modernization projects or careful segmentation to run newer agentic capabilities safely.

- Simulation and validation: reproducible, hardware‑in‑the‑loop testing is still a bottleneck for scaled robotics deployment; containerized simulation pipelines ease reproducibility but shift engineering burdens to ops teams.

- Attack surface: introducing agents and telemetry into OT increases exploitable vectors; segmentation, identity, and hardened gateways are mandatory.

ARC Perspective: Architectural Philosophy and the CIO Decision Matrix

Two end states, two core philosophies

- The Orchestrator (Microsoft): prioritize governance, identity‑bound agents, rapid workforce augmentation, and a single operational fabric that spans office and factory. This reduces integration friction for knowledge-worker workflows and simplifies the governance story for heavily regulated industries.

- The Builder (AWS): prioritize model independence, physical and compute sovereignty, and the ability to create bespoke model stacks and on‑prem infrastructure. This preserves engineering differentiation and gives full control to in‑house teams or specialized vendors.

Strengths and trade-offs

- Orchestrator strengths:

  - Rapid adoption inside Microsoft-centric enterprises.

  - Built-in governance constructs: agent registries, RBAC, Purview controls.

  - Seamless embedding into knowledge-worker flows (Teams, Office, Dynamics).

- Orchestrator trade-offs: potential vendor tie-in and reduced model control for edge-oriented, sovereign workloads.

- Builder strengths:

  - Maximum model and infrastructure choice; enables hybrid and sovereign deployments.

  - Purpose-built hardware for heavy on-prem training and inference.

- Builder trade-offs: more operational work to implement governance primitives that orchestration-first stacks provide out of the box.

A recommended decision framework for industrial CIOs/CDOs

- Classify workloads by risk and sovereignty:

  - Category A: Safety-critical control loops, defense/manufacturing secrets.

  - Category B: High-volume telemetry and inference (predictive maintenance).

  - Category C: Knowledge work, process automation, and white-collar augmentation.

- Choose a blended architecture:

  - Category A → builder / on-prem appliance (AWS AI Factories or validated partner stacks).

  - Category B → builder or hybrid, with distilled models deployed locally for cost efficiency.

  - Category C → orchestrator for rapid deployment and user adoption, especially where Microsoft 365 is the workspace fabric.

- Negotiate vendor contracts for portability:

  - Data export/cloning clauses, model weight transferability, and interoperability with open protocols (MCP, A2A).

- Pilot with measurable KPIs and independent validation:

  - Insist on CFO-grade metrics (MTTR, production uptime, energy savings) and third-party verification where possible.
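The first two steps of the framework, classification and the category-to-architecture mapping, can be sketched as a lookup so that a workload inventory produces a repeatable plan. The category labels and target paths come from the framework above; the `Workload` fields, the classification order, and the sample workloads are hypothetical and would need to be replaced with your own risk criteria.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    A = "safety-critical control or secrets"
    B = "high-volume telemetry and inference"
    C = "knowledge work and process automation"


# Category-to-architecture mapping taken from the framework text.
PATH = {
    Category.A: "builder / on-prem appliance",
    Category.B: "builder or hybrid, distilled models deployed locally",
    Category.C: "orchestrator",
}


@dataclass(frozen=True)
class Workload:
    name: str
    safety_critical: bool = False        # hypothetical flag
    holds_secrets: bool = False          # hypothetical flag
    high_volume_telemetry: bool = False  # hypothetical flag


def classify(w: Workload) -> Category:
    """Highest-risk attribute wins (an assumed tie-break rule)."""
    if w.safety_critical or w.holds_secrets:
        return Category.A
    if w.high_volume_telemetry:
        return Category.B
    return Category.C


def plan(workloads: list[Workload]) -> dict[str, str]:
    return {w.name: PATH[classify(w)] for w in workloads}


PLAN = plan([
    Workload("press-line interlock", safety_critical=True),
    Workload("pump predictive maintenance", high_volume_telemetry=True),
    Workload("engineering change summaries"),
])

for name, path in PLAN.items():
    print(f"{name}: {path}")
```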

Governance, Safety, and the Hidden Costs of Lock‑In

Operational governance remains the decisive factor

Announcements and product roadmaps matter, but operationalization is the long game. Every new agent multiplies governance complexity: identity management, telemetry ingestion, audit trails, drift detection, and human-in-the-loop mechanisms. Enterprises that skip those investments will amplify risk, not reduce it. Practical governance must include role-based agent provisioning, tamper-resistant logs, and automated rollback mechanisms as first-class capabilities.

The cost of “convenience” and economic realism

The economic calculus is often understated in vendor narratives. Training and retraining foundation models consume significant accelerator capacity; inference at scale costs money even with distilled models; and on-prem racks require capital expense and ongoing lifecycle support. Procurement teams should model total cost of ownership across three axes: compute, integration (engineering hours), and governance, rather than headline feature availability alone. Independent benchmarks and pilot-based costing are essential to avoid surprises.

Flagging unverifiable or vendor-reported claims

Vendor ROI figures and multi-fold improvement claims often originate from commissioned studies or vendor case studies. These are directional and useful, but they should be validated with independent pilots and audited metrics. Where claims cannot be independently reproduced, treat them as vendor-reported and require contractual KPIs.

Practical Roadmap: How to Pilot the Right Strategy in 90–180 Days

- Inventory and classify data and systems (0–30 days)

  - Map PLM, MES, ERP, historian, and field telemetry. Tag sensitivity and residency requirements.

- Define pilot KPIs and rollback rules (0–30 days)

  - Select one high-value use case (e.g., line-level predictive maintenance with measurable MTTR improvements).

- Run model comparison pilots (30–90 days)

  - Benchmark the same task on two models (one orchestration-first model and one builder-preferred model) to compare cost, latency, and reliability.

- Harden governance and human-in-the-loop controls (60–120 days)

  - Implement RBAC, agent registries, and audit logging. Define escalation paths and trigger thresholds for manual intervention.

- Evaluate compute sovereignty needs and pilot on-prem if required (90–180 days)

  - If latency or regulation demands, run a short on-prem pilot using dedicated racks or validated HCI solutions.
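The "pilot KPIs and rollback rules" step can be made concrete with a small MTTR calculation. The sketch below assumes incidents are recorded as (failed, restored) timestamp pairs and that the pilot continues only if MTTR improves by at least 20% over baseline; the threshold, the function names, and the sample incidents are illustrative assumptions, not figures from the source.

```python
from datetime import datetime

Incident = tuple[datetime, datetime]  # (failed_at, restored_at)


def mttr_hours(incidents: list[Incident]) -> float:
    """Mean time to repair: average of (restored - failed), in hours."""
    if not incidents:
        raise ValueError("need at least one incident")
    total_s = sum((restored - failed).total_seconds()
                  for failed, restored in incidents)
    return total_s / len(incidents) / 3600


def pilot_verdict(baseline: list[Incident], pilot: list[Incident],
                  min_improvement: float = 0.20) -> tuple[str, float]:
    """Return ('continue' | 'roll back', fractional MTTR improvement)."""
    base, new = mttr_hours(baseline), mttr_hours(pilot)
    improvement = (base - new) / base
    verdict = "continue" if improvement >= min_improvement else "roll back"
    return verdict, improvement


# Made-up sample data: two incidents before the pilot, two during it.
BASELINE = [
    (datetime(2026, 1, 5, 8, 0), datetime(2026, 1, 5, 12, 0)),   # 4 h
    (datetime(2026, 1, 12, 9, 0), datetime(2026, 1, 12, 15, 0)), # 6 h
]
PILOT = [
    (datetime(2026, 2, 3, 8, 0), datetime(2026, 2, 3, 11, 0)),    # 3 h
    (datetime(2026, 2, 10, 10, 0), datetime(2026, 2, 10, 14, 0)), # 4 h
]

VERDICT, IMPROVEMENT = pilot_verdict(BASELINE, PILOT)
print(f"baseline MTTR: {mttr_hours(BASELINE):.1f} h")
print(f"pilot MTTR:    {mttr_hours(PILOT):.1f} h")
print(f"verdict:       {VERDICT} ({IMPROVEMENT:.0%} improvement)")
```

Fixing the threshold before the pilot starts is the point of a rollback rule: the decision is made against a number agreed in advance rather than argued after the fact.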

Conclusion

The industrial AI decision in 2026 is less a binary cloud choice and more an architectural posture: will your organization prioritize a unified, identity-bound orchestration fabric that accelerates workforce adoption, or will it prioritize engineering autonomy, model independence, and physical compute sovereignty to protect and differentiate core operations? The correct answer for many industrial leaders will be hybrid: use orchestration for enterprise productivity and governance; use builder primitives for sovereign, mission-critical differentiation. That requires disciplined procurement, model portability contracts, measurable pilots, and an AgentOps mindset that treats agents as auditable, governed services rather than marketing buzzwords. The next 18 months will separate vendors who deliver operational reliability from those who deliver promising demos; the winners for industrial enterprises will be those who match platform capability with operational rigor.

Key takeaways for industrial leaders

- Prioritize measurable pilots with CFO‑grade KPIs and independent validation.

- Map workloads by sovereignty and risk, then apply the Orchestrator vs Builder rubric.

- Insist on portability, auditability, and transparent model lifecycle contracts.

- Build AgentOps capabilities: registries, identity, human‑in‑the‑loop gates, and rollback playbooks.
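One way to approach the auditability takeaway is a hash-chained log of agent actions, where each entry commits to the one before it. The sketch below is a simplified illustration using only the Python standard library; the entry fields and function names are hypothetical. It makes edits detectable (tamper-evident) but is not tamper-resistant on its own: a production design would also need to anchor the latest hash somewhere the agent platform cannot rewrite.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry's predecessor


def _digest(body: dict) -> str:
    # Canonical JSON so the same body always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log: list[dict], agent_id: str, action: str) -> None:
    """Append an entry whose hash covers its content and the previous hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"agent_id": agent_id, "action": action, "prev_hash": prev_hash}
    log.append({**body, "hash": _digest(body)})


def verify(log: list[dict]) -> bool:
    """Recompute the chain; any edited or reordered entry breaks it."""
    prev = GENESIS
    for entry in log:
        body = {k: entry[k] for k in ("agent_id", "action", "prev_hash")}
        if entry["prev_hash"] != prev or _digest(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


audit_log: list[dict] = []
append_entry(audit_log, "agent-1", "provisioned with role: read-only")
append_entry(audit_log, "agent-1", "proposed setpoint change, awaiting approval")
print(verify(audit_log))   # chain intact

audit_log[0]["action"] = "provisioned with role: admin"
print(verify(audit_log))   # edit detected
```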
