Intel’s recruiting ad for a “Unified Core” CPU team has reignited a debate that’s been simmering inside chip-hungry communities for years: is the hybrid P‑core/E‑core era coming to an end, and is Intel quietly preparing a return to a single, unified core fabric? The short answer is: possibly — but the evidence is circumstantial, the timeline long, and the implications for software, performance, and platform design are more complex than simple headlines suggest.

Background / Overview

Intel’s hybrid approach, which pairs high‑performance P‑cores with high‑efficiency E‑cores, arrived with 12th‑Gen Alder Lake and was designed to balance single‑thread speed and multi‑threaded throughput while improving power efficiency. That model works because the CPU and operating system cooperate: Intel’s Thread Director provides real‑time scheduling hints so the OS can place the right thread on the right core type. The hybrid model enabled higher core counts without exploding power budgets, but it also introduced scheduling complexity that some workloads (notably certain games and latency‑sensitive tasks) have found awkward to manage.

What changed this week is not a product launch but a personnel posting: multiple copies of a LinkedIn job listing and third‑party reposts show Intel recruiting a Senior CPU Verification Engineer for a team explicitly named Unified Core in Austin, Texas. The role centers on pre‑silicon verification of CPU logic designs, a stage of development that typically occurs very early in a CPU program’s life cycle. Journalists who spotted and reported on the listing, and the job aggregators that mirrored it, suggest the posting was live only briefly, which further fueled speculation.

What the job listing actually tells us

The immediate facts

- The job title and posting tie a team named Unified Core to Intel’s Silicon and Platform Engineering (SPE) organization, and the role’s responsibilities are classic CPU verification work: building UVM testbenches, running system‑level simulations, debugging pre‑silicon issues, and working with RTL designers and architects. These are real and well‑defined engineering activities — not marketing fluff.

- Multiple reputable outlets and job mirrors captured the listing while it was visible and republished its highlights; coverage appeared on mainstream hardware sites and regional tech outlets within 24–48 hours of the posting. The speed of pickup reflects both the listing’s specificity and the ongoing interest in Intel’s roadmap.

What the listing does not prove

- A team name is not a finished product. A hiring ad confirms that Intel is exploring or building something under the Unified Core name, but it does not prove that Intel will ship a desktop or server CPU with that branding, architecture, or timeline.

- Job postings can be exploratory, duplicative, or part of internal reorganization. Companies sometimes create roles to investigate options or to consolidate prior initiatives. Hiring for verification engineers is also recurring for multiple Intel projects worldwide; the presence of one listing does not automatically mean the entire company is abandoning hybrid designs.

- There is no public Intel technical brief or press release announcing Unified Core as a finished architecture. Until Intel publishes an official roadmap or tape‑out announcement, the market must treat the hiring posting as a credible signal but not a confirmation.

Why a hiring post matters: the cadence of silicon development

Designing, verifying, and taping out a modern x86 CPU is a multi‑year endeavor. Verification engineers join long before customers see silicon; their work is foundational and precedes tape‑out, bring‑up, and system validation. Because of that lead time, verification‑level hiring for a new core design implies the project is at least in active R&D. If Intel’s objective were to bring a new microarchitecture to market within a three‑to‑five‑year window, hiring verification engineers now would align with that schedule. A consumer product could easily land at the far end of that range, four to five years out, depending on design scope, foundry or internal process choices, and platform integration work. Tom’s Hardware and other outlets that analyzed the posting estimate that productization, if pursued, would likely target the 2029–2030 timeframe rather than 2026–2027. That estimate is consistent with the multi‑year lead times typical of large‑scale microarchitectural shifts.

Unified Core vs Hybrid: what would change?

The current model — hybrid P/E cores

Intel’s hybrid approach gives architects two levers: raw single‑thread performance from P‑cores, and scaled multi‑threaded or background efficiency from E‑cores. The advantages are clear:

- Higher overall thread counts without linear power scaling.

- Better idle and background efficiency, improving battery life for mobile devices.

- Architectural freedom to tune one core for peak single‑thread IPC and another for area‑/power‑efficiency.

The costs are just as concrete:

- Scheduling complexity that moved some intelligence from hardware into the OS.

- Edge‑case behavior where certain applications mis‑schedule or suffer latency spikes.

- Additional platform complexity (e.g., cache hierarchies and coherency, feature parity between core types).
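To make the P/E split concrete, here is a minimal Python sketch of how software on Linux might discover which logical CPUs belong to which core class. It assumes a hybrid‑aware kernel that exposes one PMU directory per core type at `/sys/devices/cpu_core/cpus` and `/sys/devices/cpu_atom/cpus`; those paths are an assumption about the host and are simply absent on symmetric CPUs or other operating systems. The helper names `parse_cpu_list` and `read_core_classes` are illustrative, not part of any Intel or kernel API.

```python
from pathlib import Path


def parse_cpu_list(text: str) -> list[int]:
    """Expand a Linux cpulist string such as "0-3,8,10-11" into CPU ids."""
    cpus: list[int] = []
    for part in text.strip().split(","):
        if not part:
            continue  # tolerate empty input or stray commas
        if "-" in part:
            lo, hi = part.split("-")
            cpus.extend(range(int(lo), int(hi) + 1))
        else:
            cpus.append(int(part))
    return cpus


def read_core_classes(sysfs_root: str = "/sys/devices") -> dict[str, list[int]]:
    """Return logical CPU ids per core class, or {} if the files are absent."""
    classes: dict[str, list[int]] = {}
    # Assumed layout: one PMU directory per core type on hybrid-aware kernels.
    for label, pmu in (("P", "cpu_core"), ("E", "cpu_atom")):
        path = Path(sysfs_root) / pmu / "cpus"
        if path.is_file():
            classes[label] = parse_cpu_list(path.read_text())
    return classes


if __name__ == "__main__":
    found = read_core_classes()
    if found:
        for label, cpus in found.items():
            print(f"{label}-core logical CPUs: {cpus}")
    else:
        print("No hybrid core classes reported (symmetric CPU or non-Linux host).")
```

On a unified design this kind of discovery step would have nothing to report, which is part of the appeal: software would no longer need to ask which kind of core it is running on.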

The unified alternative

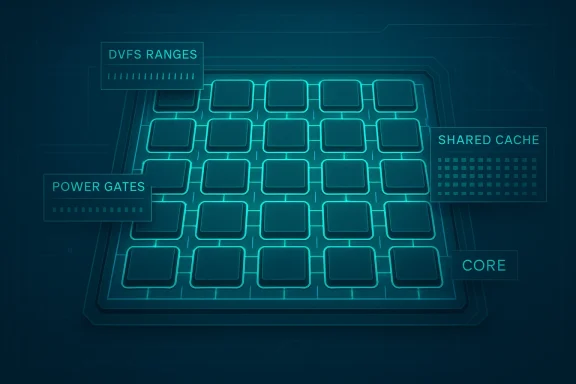

A Unified Core architecture, broadly defined, would use one core microarchitecture that is scaled or configured up or down across product segments. That model resembles AMD’s strategy with Zen 5 and Zen 5c: the same microarchitectural foundation, tuned to different power/performance points. Advantages of unification include:

- Simplified scheduling: the OS scheduler faces a symmetric set of cores, eliminating the need for a Thread Director‑style hardware‑assisted scheduling layer.

- Easier software expectations: developers and OS vendors can reason about a single core type’s performance characteristics.

- Potential for larger caches, bigger integrated accelerators (NPUs), or bigger iGPUs per die area freed from hybrid control logic.

The trade‑offs:

- Loss of a dedicated, extremely low‑power core class for background tasks could hurt ultra‑low power scenarios unless the unified core is highly scalable.

- To maintain energy efficiency, the unified core must implement aggressive power gating, wide DVFS ranges, and microarchitectural modes, all of which add design complexity in different places.
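The scheduling difference between the two models can be sketched as a toy placement routine. This is purely illustrative: real OS schedulers and Thread Director use far richer signals, and the `Core` type, the `latency_sensitive` flag, and both functions are hypothetical names invented for this example.

```python
from dataclasses import dataclass


@dataclass
class Core:
    core_id: int
    kind: str          # "P", "E", or "U" (unified); labels are illustrative
    load: float = 0.0  # fraction of the core already busy, 0.0 to 1.0


def place_hybrid(cores: list[Core], latency_sensitive: bool) -> Core:
    """Hybrid: choose a core class first, then the least-loaded core in it."""
    preferred = "P" if latency_sensitive else "E"
    candidates = [c for c in cores if c.kind == preferred and c.load < 1.0]
    if not candidates:
        candidates = cores  # preferred class missing or saturated: use any core
    return min(candidates, key=lambda c: c.load)


def place_symmetric(cores: list[Core]) -> Core:
    """Symmetric: every core is equivalent, so only the load matters."""
    return min(cores, key=lambda c: c.load)


if __name__ == "__main__":
    hybrid = [Core(0, "P", 0.9), Core(1, "P", 0.5), Core(2, "E", 0.1), Core(3, "E", 0.0)]
    unified = [Core(0, "U", 0.4), Core(1, "U", 0.2), Core(2, "U", 0.7)]
    print("hybrid, latency-sensitive ->", place_hybrid(hybrid, True).core_id)
    print("hybrid, background        ->", place_hybrid(hybrid, False).core_id)
    print("symmetric                 ->", place_symmetric(unified).core_id)
```

The hybrid path has an extra decision (which class?) and an extra failure mode (a wrong or stale hint), while the symmetric path reduces to load balancing. The sketch says nothing about power, which is where the unified design has to make up the difference.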

How Intel might implement a Unified Core — plausible architectures

There are several credible technical approaches Intel could take if it truly wants to move away from a strict P/E split:

- Scale‑down unified cores: design a single core microarchitecture that can operate across a wide power/performance envelope, much like ARM’s scalable cores or AMD’s approach with Zen/Zen‑C. That requires excellent low‑voltage behavior and a wide DVFS window.

- Performance bins inside the same core: build cores that can be configured at a silicon or firmware level to enable/disable certain front‑end or back‑end features (e.g., fewer execution units or tighter power limits), producing high‑speed and low‑power variants from the same RTL base.

- Hierarchical “software‑defined supercore”: some public speculation suggests Intel could create a unified building block that supports software‑defined composition — essentially tiling many identical cores and exposing flexible cluster behavior to the OS or firmware. That’s speculative but intellectually consistent with modern chiplet/logical tile thinking.
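Why low‑voltage behavior and a wide DVFS window matter can be seen from the textbook first‑order model of dynamic power, P ≈ C·V²·f. The sketch below applies that model to two operating points of one hypothetical core. Every number (effective capacitance, voltages, frequencies) is an illustrative assumption, not Intel data, and the model deliberately ignores leakage power.

```python
def dynamic_power_watts(c_eff_farads: float, volts: float, freq_hz: float) -> float:
    """First-order dynamic power: P = C_eff * V^2 * f (leakage ignored)."""
    return c_eff_farads * volts ** 2 * freq_hz


def energy_per_cycle_joules(c_eff_farads: float, volts: float) -> float:
    """Dynamic energy per clock cycle: E = P / f = C_eff * V^2."""
    return c_eff_farads * volts ** 2


if __name__ == "__main__":
    C_EFF = 1e-9  # assumed effective switched capacitance, in farads

    # Two assumed operating points for the same hypothetical unified core.
    points = {
        "performance mode": (1.2, 5.0e9),  # volts, hertz
        "efficiency mode": (0.7, 1.5e9),
    }

    for name, (volts, freq) in points.items():
        power = dynamic_power_watts(C_EFF, volts, freq)
        energy = energy_per_cycle_joules(C_EFF, volts)
        print(f"{name}: {power:.3f} W, {energy * 1e9:.3f} nJ per cycle")
```

Under these assumptions, dropping from 1.2 V to 0.7 V cuts dynamic energy per cycle to roughly a third, because energy scales with the square of voltage. That is the lever a scaled‑down unified core would have to pull to approach E‑core efficiency, and it only works if the core stays stable and reasonably fast at the low‑voltage end.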

Software and ecosystem impacts: Thread Director and beyond

Intel’s Thread Director was created to solve a real problem: coordinating heterogeneous core types without breaking legacy OS and application assumptions. If Intel adopts a truly symmetric unified core, the immediate benefits are less scheduling complexity and fewer corner cases for older software. However:

- A unified approach shifts the burden onto microarchitectural scaling to deliver the same energy envelope that E‑cores offered. Achieving that without efficiency‑optimized small cores is nontrivial.

- The OS and firmware will still need to handle power domains, core parking, and frequency management; a unified fabric changes the knobs but doesn’t remove them. Thread Director solved an acute scheduling gap; removing that gap is beneficial, but it does not magically make power and thermal problems vanish.

- For datacenter and server markets, a unified core could simplify VM scheduling and per‑core feature parity, improving software portability across SKUs. For laptop and mobile designs, however, achieving battery life parity with hybrid designs will be the engineering challenge to watch.

What the market and competitors are doing (context)

AMD’s strategy with modern Zen cores has offered a template: similar microarchitecture across core classes and different tuning to hit power/performance targets. This symmetry makes scheduler behavior predictable and reduces platform idiosyncrasies that can bite real‑world workloads. Notebookcheck and others have reported that future Intel roadmaps (Razer Lake → Hammer Lake timelines and beyond) have discussed an evolution toward greater focus on E‑core design and the possibility of a unified core later in the decade. Those leaks and analyses are consistent with the idea that Intel is exploring multiple directions, not committing to a single future yet.

Meanwhile, Intel’s own public materials continue to explain and defend the hybrid approach as of their latest developer documentation, meaning Intel hasn’t publicly declared hybrid obsolete. The company’s public narrative still treats hybrid as a mainstream strategy while the Unified Core hiring signals parallel experimentation.

Strengths and potential benefits of Unified Core for Intel

- Simplified product stack: One core type scales more easily across client and server SKUs, which could streamline validation, firmware, and customer education.

- Reduced scheduling edge cases: Gaming and latency‑sensitive workloads that sometimes show unpredictable behavior under mixed P/E systems could benefit from a symmetric core landscape.

- More die area for accelerators: Eliminating the need for two distinct core layouts might free up die real estate for larger NPUs, integrated GPUs, or bigger caches — useful as AI on the client accelerates in importance.

- Better time to market for core innovations: A unified microarchitecture enables a single RTL baseline to be optimized incrementally, potentially shortening microarchitecture refresh cycles once a robust base is established.

Risks, downsides, and unanswered technical questions

- Power efficiency tradeoffs: The main risk is losing the efficiency advantage E‑cores provide. Unless the unified core matches or beats E‑core energy efficiency at low loads, real battery life or thermals could regress in thin and light laptops.

- Engineering complexity moves elsewhere: Simplifying scheduler complexity shifts complexity into the core’s microarchitecture, DVFS control, and power domains — these are solved problems but expensive and time‑consuming to perfect.

- Timeline and execution risk: A move of this scale requires years of validation and silicon iteration. The hiring ad and ancillary leaks point to an R&D commitment, not a guaranteed, on‑time product. Market windows that Intel must hit (e.g., to compete with AMD’s multicore performance or Apple/ARM’s efficiency gains) are unforgiving.

- Perception risk: Customers and OEM partners buy predictability. Frequent, ambiguous roadmap chatter can create hesitancy among OEMs making platform‑level decisions two years ahead of product launch. Intel has to manage expectations carefully to avoid an “uncertainty tax” on PC builders and enterprise buyers.

Practical scenarios and what consumers should expect

If Intel ships Unified Core for desktops and laptops:

- Expect simpler core counts (e.g., “24 identical cores” rather than an 8P+16E split).

- Scheduling should be easier for game developers and background service designers.

- OEM thermal profiles and laptop battery targets will be the early barometer: if battery life remains competitive, the unified core will have earned its place; if not, Intel will face criticism.

If Intel uses unified core selectively (e.g., client only, or mobile first):

- The company could keep hybrid designs for server and HPC segments where differential core types still make sense, while streamlining client SKUs.

- That would be the lowest‑risk route: incremental migration without full platform upheaval.

If the posting is exploratory:

- Intel may be staffing options and running parallel programs; don’t expect wholesale product changes immediately. The hiring ad could simply be a sign that Intel wants to learn how far a unified design can go before committing to mass production.

How to read the timeline and what to watch next

- Company communications: an Intel architecture or press announcement mentioning Unified Core, or an official roadmap update, would be definitive. Until then, treat leaks as credible but not conclusive.

- Patent and paper filings: new microarchitecture patents, white papers, or talks at industry conferences can reveal the direction of research. Those are slower signals but highly informative.

- Additional hiring and team expansion: sustained hiring for Unified Core at multiple sites, or movement of high‑profile architects into a named program, suggests a committed product cycle rather than an exploratory project. Job boards and LinkedIn will remain useful trackers.

- Leaks and silicon samples: early engineering samples (ES) or performance leaks would strengthen the case for an imminent transition, but remain skeptical until multiple independent data points converge.

My analysis: pragmatic optimism with caveats

Intel’s Unified Core job posting is a meaningful data point: it shows the company is actively exploring architectures that would reduce the heterogeneity induced by the P/E split. The possible advantages (simpler scheduling, potential for larger integrated accelerators, and a unified validation baseline) are real and could be strategic in the era of on‑device AI and increasingly complex system‑level features.

But executing such a pivot without harming energy efficiency would be the major technical challenge. E‑cores are tiny for a reason: they deliver blanket background performance at a fraction of the power of big cores. Replacing them with scaled‑down variants of a larger core will require excellent low‑voltage microarchitecture engineering and possibly new power‑management topologies.

Finally, Intel’s public materials still champion hybrid approaches. That suggests the company is hedging: keeping hybrid in the field while letting research teams evaluate unified options. That’s a smart approach for a company operating at Intel’s scale — it reduces exposure while exploring high‑upside alternatives.

What this means for AMD, Apple, and the broader CPU landscape

- AMD benefits from the narrative that symmetric cores offer development simplicity; any Intel move toward unification narrows the architectural contrast between Intel and AMD. AMD’s gain would therefore be in competitive rhetoric more than immediate technical advantage.

- Apple and ARM‑based players continue to press the efficiency envelope. Intel’s choice will reflect whether it prioritizes performance complexity (hybrid) or symmetric scaling and integration (unified). Either path can succeed — it depends on execution.

- For OEMs and enterprise buyers, the near‑term impact is minimal. Product cycles and procurement decisions are shaped by actual parts and their characteristics, not job postings. The true test will be shipping silicon and platform benchmarks.

Takeaways and what to watch this year

- The Unified Core job posting is a credible signal that Intel is investing in alternatives to a rigid hybrid P/E model. Treat it as a research/early engineering confirmation, not a product announcement.

- Real product implications, if they exist, are years away. Timelines discussed in coverage and industry analysis point toward the late 2020s as the earliest plausible shipping window for any wholesale core redefinition.

- The most important near‑term metrics to evaluate: battery life on mobile SKUs, deterministic latency behavior for games and interactive apps, and whether Intel can preserve or improve area‑efficiency per function compared with today’s E‑core + P‑core balance.

- Watch for more hiring posts, architecture papers, patent activity, and any Intel keynote or architecture day disclosures that use the Unified Core phraseology. Those will be the clearest signs that Intel is moving from investigation to commitment.

Conclusion

Intel’s brief, visible flirtation with the Unified Core label is a meaningful, but not decisive, data point. It underlines the company’s active exploration of architectural options as it competes for performance leadership, efficiency, and integration of accelerators. The benefits of moving away from hybrid heterogeneity are attractive: simpler scheduling, unified validation, and headroom for accelerators. The risks are equally real: matching E‑core efficiency, re‑engineering power management, and executing a multi‑year transition without disrupting platform partners.

For the enthusiast and the enterprise buyer, the short‑term reality is unchanged: Intel’s hybrid architecture remains the company’s shipping foundation, and any unified successor would require several years of engineering and validation before it appears in retail or datacenter racks. In the meantime, watch the hiring signals, architecture disclosures, and early silicon leaks; they will be the clearest breadcrumbs on whether Intel is truly preparing a post‑hybrid future or simply expanding its architectural toolkit.

Source: Windows Central Is Intel ditching its hybrid architecture? A new job listing fans rumors.