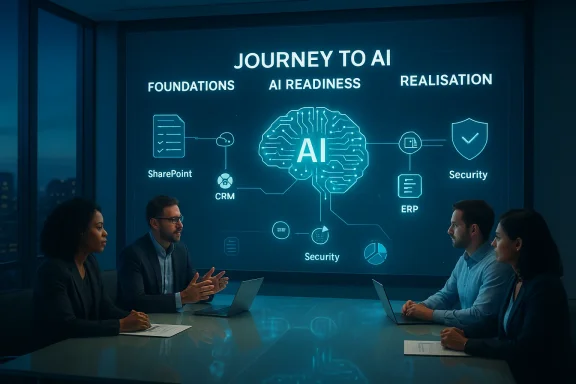

ANS’s approach reframes AI adoption as a journey rather than a one‑off project, giving partners a structured set of tools — from an initial data and security assessment to an “AI Readiness” phase and a delivery toolkit called the Journey to AI App — that helps organisations adopt Microsoft AI services such as Copilot in a staged, governed and business‑outcome driven way.

Source: Comms Business The journey to AI - Comms Business

Background: why this matters now

Microsoft has pushed AI into the center of its product strategy, embedding generative and agentic capabilities across Microsoft 365, Azure and the Power Platform. That shift turns familiar tools — Word, Excel, Teams and Azure services — into channels for productivity AI, custom agents and automated workflows. Copilot has become a platform focal point, and Copilot Studio and Azure AI Foundry are explicit examples of how Microsoft expects partners and customers to build tailored agents and enterprise AI solutions.

At the same time, the market is noisy and cautious: customers face competing claims, new security risks, unclear business cases and the operational work required to run models safely and at scale. This creates a commercial opening for partners that can combine Microsoft technical capability with disciplined change management, security and data governance. ANS positions itself — and its partner ecosystem — as the guide for that route.

Summary of ANS’s “Journey to AI” proposition

Core idea in brief

ANS argues that successful AI is not a single deployment but a structured progression that must be aligned to organisational objectives. Their model breaks adoption into stages:

- Foundations: assess and fix data quality, access, and baseline security.

- AI Readiness: define value, use cases, skills, governance and priorities.

- Realisation: move from plan to production using the Journey to AI App and secure deployment of Microsoft AI offerings such as Copilot.

What ANS is promising partners

ANS’s messaging emphasises three partner benefits:

- A repeatable, Microsoft‑aligned roadmap that can be applied to different starting points.

- Tooling and playbooks (the Journey to AI App) to help move customers from readiness into secure execution.

- Credibility and go‑to‑market strength backed by recent industry recognition: ANS was named Microsoft UK Partner of the Year 2025, a signal the firm is already embedded in Microsoft’s partner ecosystem.

Why partners should pay attention: the commercial case

AI becomes a strategic conversation, not a transactional sale

ANS’s play reframes AI from an add‑on feature to a multi‑year transformation. That reframing changes partner economics: instead of selling a single Copilot licence or an isolated integration, partners can deliver recurring advisory, implementation, security, monitoring and optimisation services that scale as customers expand AI usage.

This approach aligns with partner enablement signals from Microsoft itself, which is increasing partner benefits and Azure/Copilot credits to stimulate agent and Copilot development. Partners that offer outcomes (productivity uplift, faster innovation, improved customer experience) position themselves for earlier engagements and longer lifetime value.

Market demand and customer readiness are uneven

ANS correctly identifies heterogeneity in customer readiness: some organisations are already experimenting inside Microsoft 365; others need data cleanup and governance before exploration can start. Partners who can assess, prioritise and sequence work will be more effective at reducing risk and showing early wins — a classic route to larger programmes. The Journey to AI roadmap and readiness work sell precisely into that gap.

Evidence and validation: what independent data shows

ANS backs its security messaging with a sizeable survey and a published report, AI Readiness Secured, which canvassed more than 2,000 IT decision‑makers and shows a worrying disconnect between AI ambition and AI security maturity. The report highlights that a large majority view AI as core to strategy, yet many organisations treat security as an afterthought during implementation. ANS’s own material and third‑party summaries emphasise that spend and confidence do not equate to effective controls.

Microsoft’s public roadmap and documentation on Copilot, Copilot Studio, and agentic AI corroborate the technical context ANS works within: Microsoft has been extending Copilot into multiple products, opening agent creation and management tools, and adding governance and telemetry integrations to give IT teams oversight of AI interactions. Those platform capabilities are the foundation on which partners will build operational offerings.

Critical analysis: strengths, limitations and risks

Strengths of ANS’s approach

- Practical sequencing: The three‑stage framework (foundations → readiness → realisation) matches what experienced transformation teams actually do when implementing complex technology — especially when data, compliance and change management matter.

- Partner enablement: Offering an app and playbooks gives partners repeatable assets, which reduces delivery friction and improves margins.

- Security emphasis: Explicitly making security central to the roadmap addresses the most credible risk customers worry about and creates a space for premium services (secure design, model risk assessment, SOC integration).

- Market credibility: ANS’s recognition as Microsoft UK Partner of the Year (2025) and Inner Circle participation strengthens customers’ trust in their Microsoft alignment.

Limitations and areas of caution

- Vendor lock‑in risk: Heavy reliance on Microsoft‑specific tooling (Copilot Studio, Azure AI Foundry, Microsoft 365 integrations) accelerates time‑to‑value but can create long‑term dependency for customers who later wish to migrate models or use multi‑cloud strategies. Partners must help customers plan exit and portability strategies.

- Operational complexity: Agentic AI and RAG (retrieval‑augmented generation) patterns introduce new operational surfaces — model monitoring, data drift, prompt injection, and access entitlements — that many partners still lack deep operational experience in. Delivering on the "secure and scalable" promise requires investment in LLM‑ops, observability and model security tooling.

- Overconfidence vs reality: ANS’s own report exposes a consistent market problem: organisations believe they have invested enough, yet security is often not embedded in practice. Partners must avoid validating customer optimism and instead present concrete, measurable readiness gates.
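
One way to make "measurable readiness gates" concrete is to express each gate as a measured value against a required threshold and to block progression unless every gate passes. The sketch below is illustrative only: the gate names, measurements and thresholds are hypothetical and are not taken from ANS's material.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gate:
    """One measurable readiness criterion (names and thresholds are hypothetical)."""
    name: str
    measured: float  # observed value, as a fraction between 0 and 1
    required: float  # minimum value needed to pass


def evaluate_gates(gates: list[Gate]) -> tuple[bool, list[str]]:
    """Return (ready, names of failed gates). Ready only if every gate passes."""
    failed = [g.name for g in gates if g.measured < g.required]
    return (not failed, failed)


# Hypothetical assessment results for a single customer.
gates = [
    Gate("sensitive data classified", measured=0.62, required=0.95),
    Gate("AI workloads behind conditional access", measured=1.00, required=1.00),
    Gate("agent actions covered by audit logging", measured=0.80, required=0.90),
]

ready, failed = evaluate_gates(gates)
print("ready" if ready else f"not ready, failed gates: {failed}")
```

The point of the all-or-nothing rule is that a strong result on one gate cannot offset a weak result on another, which is how customer optimism tends to creep into averaged scores.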

Security risks that demand concrete actions

- Prompt injection and data leakage: RAG pipelines and embeddings can accidentally expose sensitive information unless retrieval layers and prompt sanitation are tightly controlled.

- Agent escalation: Agentic workflows that call APIs, move data, or take actions can escalate privileges or execute unintended operations if role separation and least‑privilege controls are not strict.

- Model poisoning and supply chain: Fine‑tuning with tainted datasets or third‑party components can introduce integrity issues.

- Operational monitoring blind spots: Traditional SIEM and SOC playbooks do not automatically capture model behaviour, hallucination rates or agent decision trails — creating gaps in forensic and compliance capabilities. Recent independent reporting also warns that poorly designed agent deployments can be abused to steal tokens or credentials, reinforcing the need for hardened controls.
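
The agent escalation risk above can be illustrated with a deny-by-default check placed in front of every action an agent tries to take. This is a minimal sketch under stated assumptions: the role names, action names and the `run_action` wrapper are hypothetical, and it does not represent any Copilot Studio or Azure API.

```python
# Hypothetical role-to-action allowlist; a real deployment would source this
# from its identity and access management system rather than a literal dict.
ALLOWED_ACTIONS: dict[str, frozenset[str]] = {
    "hr_knowledge_agent": frozenset({"search_policies", "read_faq"}),
    "sales_drafting_agent": frozenset({"read_crm_account", "draft_proposal"}),
}


class ActionDenied(Exception):
    """Raised when an agent requests an action outside its allowlist."""


def authorize(agent_role: str, action: str) -> None:
    """Deny by default: unknown roles and unlisted actions are both rejected."""
    allowed = ALLOWED_ACTIONS.get(agent_role, frozenset())
    if action not in allowed:
        raise ActionDenied(f"{agent_role!r} may not perform {action!r}")


def run_action(agent_role: str, action: str) -> str:
    """Check authorization first, then perform the (stubbed) action."""
    authorize(agent_role, action)
    # The real tool call would happen here; this sketch only reports it.
    return f"{agent_role} executed {action}"


print(run_action("hr_knowledge_agent", "read_faq"))
```

Keeping the check outside the model's control matters: a prompt-injected instruction can change what the agent asks for, but not what the wrapper permits.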

Practical guidance for partners: a checklist partners can use today

Below is a stepwise, actionable checklist for partners who want to adopt the ANS Journey to AI approach while avoiding common pitfalls.

- Foundations: Data and Identity
  - Inventory critical sources for embeddings and RAG (documents, SharePoint, CRM, ERP).
  - Apply data classification and tag PII before ingestion.
  - Enforce least privilege and conditional access for AI workflows.

- Readiness: Use cases, governance, skills
  - Run a short business‑value workshop to prioritise 2–3 measurable use cases (productivity, customer experience, cost reduction).
  - Define governance: who approves prompts, who owns outputs, audit logging requirements, retention policies.
  - Identify skill gaps and create a 6–12 week upskilling plan for IT, security and business teams.

- Pilot: Scoped, observable deployments
  - Start with a narrow, high‑value pilot (e.g., Copilot for sales proposal drafting or an HR knowledge agent).
  - Integrate telemetry from Copilot Studio / Copilot agents into SIEM/observability.
  - Test for prompt injection and perform adversarial tests on RAG endpoints.

- Secure production: controls and operations
  - Implement model monitoring: latency, hallucination rate, retrain triggers, query patterns.
  - Enforce data governance: approved connectors only, sanitized contexts, and masking of sensitive tokens.
  - Establish runbooks for incident response that include model‑specific scenarios.

- Scale: FinOps, lifecycle and continuous improvement
  - Build cost governance and tagging for LLM usage (token counts, model selection).
  - Mature model lifecycle practices: versioning, canary releases, rollback.
  - Provide a continuous training schedule for business users to refine prompts and templates.
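
As a sketch of the "tag PII before ingestion" and "masking of sensitive tokens" items in the checklist, the example below replaces matches of a few patterns with labelled placeholders before text is indexed for retrieval. The two regular expressions and the `sk-` key format are assumptions chosen for illustration; production classification would need far broader and properly tested rules.

```python
import re

# Illustrative patterns only; real classification needs broader, tested rules.
PATTERNS: dict[str, re.Pattern[str]] = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "API_KEY": re.compile(r"\bsk-[A-Za-z0-9]{16,}\b"),  # hypothetical key format
}


def mask_sensitive(text: str) -> tuple[str, dict[str, int]]:
    """Replace matches with [LABEL] placeholders and count hits per label."""
    counts: dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts


masked, counts = mask_sensitive(
    "Contact jane.doe@example.com, key sk-abcdef1234567890XYZ."
)
print(masked)  # Contact [EMAIL], key [API_KEY].
print(counts)  # {'EMAIL': 1, 'API_KEY': 1}
```

Returning the per-label counts alongside the masked text gives the ingestion pipeline something to log, so a sudden rise in masked items from one source can be investigated.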

How partners can use ANS’s assets — and what to ask before you buy in

What the Journey to AI App should deliver for a partner engagement

- A repeatable assessment workflow that generates a clear remediation plan.

- Built‑in artefacts: capability maps, governance templates, security checklists and a prioritized roadmap.

- Deployment accelerators for Microsoft Copilot and Copilot Studio agents that include security guardrails.

- Measurable KPIs: time saved, adoption metrics, incident reduction and cost per user.

Questions to ask before you buy in

- Does the app produce exportable artefacts (so customers don’t become dependent on a single vendor)?

- Which telemetry and logs can the app surface to SOC and compliance teams?

- Is the app maintained to reflect Microsoft’s frequent Copilot/Copilot Studio updates and licensing changes?

- Are documents and playbooks aligned to industry standards (NIST/ISO) for security and data governance?

The partner opportunity: commercial models and go‑to‑market

Partners who package Journey to AI offerings should consider multi‑tier commercial models:

- Entry advisory / assessment (fixed fee) — rapid discovery and readiness score.

- Pilot delivery (time & materials + fixed outcome) — limited scope, rapid ROI tracking.

- Managed platform (subscription) — hosting, security, model ops and governance.

- Outcomes/consumption (revenue share or per user value capture) — tied to productivity KPIs.

The regulatory and ethical landscape partners can’t ignore

AI governance is now top‑tier for boards and regulators. Key obligations and expectations include:

- Auditability: maintain explainable records of agent decision trails and model versions.

- Privacy: provenance and consent controls for any customer data used in model context.

- Bias and fairness: assess models for disparate impact where outcomes affect decisions (hiring, lending, care).

- Data residency and contractual controls: especially for regulated sectors using third‑party models and cloud services.
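
The auditability expectation above can be sketched as an append-only log in which each record stores the model version and a hash that also covers the previous record, so later edits are detectable. This is one possible design, not a regulatory requirement or an ANS feature; the field names are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: dict) -> str:
    """Hash a record body deterministically (keys sorted before encoding)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_record(log: list[dict], agent: str, model_version: str, decision: str) -> dict:
    """Append a record whose hash covers its content and the previous hash."""
    body = {
        "agent": agent,
        "model_version": model_version,
        "decision": decision,
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    record = {**body, "hash": _digest(body)}
    log.append(record)
    return record


def verify(log: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered record breaks the chain."""
    prev_hash = GENESIS_HASH
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True


audit_log: list[dict] = []
append_record(audit_log, "hr_knowledge_agent", "v1.2", "answered leave policy query")
append_record(audit_log, "hr_knowledge_agent", "v1.3", "escalated to human reviewer")
print(verify(audit_log))
```

A hash chain only shows that tampering occurred; it does not prevent it, so the log would still need to sit in write-restricted storage with its own access controls.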

Case study‑style examples: what success looks like

- Local government pothole classification: ANS helped a council use generative AI and image recognition to automate categorisation and prioritisation. The project cut manual review time, improved response rates and fed into better resource allocation — a classic productivity outcome enabled by tightly scoped data and governance.

- Large enterprise Copilot rollout: a staged approach starts with a productivity pilot in a single function, expands via governance templates and then integrates Copilot telemetry into security tooling, giving the organisation both business wins and assurance that the deployment is auditable and controllable. This mirrors the Journey to AI sequence ANS recommends.

Final assessment: where ANS’s model works best — and where partners must be careful

ANS’s Journey to AI model addresses the most common failure modes in AI adoption: lack of structured sequencing, weak governance, and mismatched expectations. By providing playbooks, a delivery app and Microsoft alignment, ANS creates a practical sales and delivery construct partners can use to capture the AI advisory market.

That said, partners should beware of a few persistent hazards:

- Don’t let the presence of branded Microsoft tooling lull teams into thinking governance is solved — it is not. Copilot and Copilot Studio provide building blocks; protecting them in production requires additional operational rigor.

- Avoid packaging readiness as a tick‑box. The ANS report shows many organisations think they’re ready. Rigorous gate criteria and measurable controls are essential to prevent downstream incidents.

- Plan for platform evolution. Microsoft’s Copilot ecosystem is evolving quickly; partners must invest in continuous learning, automation to adapt to platform changes, and portability strategies for customers who demand multi‑model or multi‑cloud choices.

Practical next steps for partners and customers

- Run an evidence‑based readiness assessment focused on data, identity, governance and use‑case value.

- Choose a high‑value, low‑blast‑radius pilot and instrument it for security and observability.

- Require board‑level sponsorship and measurable KPIs tied to business outcomes.

- Embed model and agent telemetry into the SOC and ensure runbooks include AI‑specific response steps.

- Negotiate contractual protections and SLAs for model availability, data handling and portability.

Conclusion

AI’s promise — higher productivity, faster innovation and better customer experiences — is real. But delivering those outcomes reliably demands more than licences or feature checklists. ANS’s Journey to AI framework gives partners a structured path: a focus on foundations, a disciplined readiness phase and execution assets designed for Microsoft’s Copilot and Azure AI stack. The firm’s market recognition and its AI Readiness Secured research underline both the opportunity and the risk: customers are eager for AI, and yet security and governance frequently lag. Partners that combine business‑first use‑case thinking with hardened security, rigorous model operations and transparent governance will be the ones who turn early engagements into enduring relationships — and those are exactly the partners ANS aims to enable.