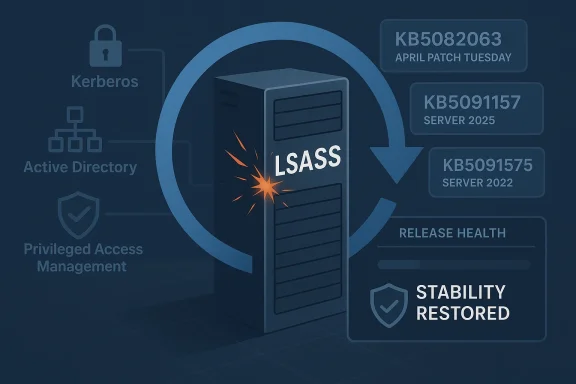

Microsoft’s emergency response to KB5082063 is a reminder that Windows Server patching in 2026 is now as much about operational risk management as it is about security hardening. What began as a routine April Patch Tuesday release quickly turned into a serious domain infrastructure issue, with reports of LSASS crashes, repeated restarts, and domain controller reboot loops in environments using Privileged Access Management. Microsoft has now moved to contain the fallout with out-of-band fixes KB5091157 for Windows Server 2025 and KB5091575 for Windows Server 2022, both aimed at restoring stability while preserving the security gains of the original update. The episode also fits an increasingly familiar pattern: Microsoft is shipping emergency corrections faster, but the complexity of modern Windows servicing is making each failure more consequential. Microsoft’s own support and release-health materials show that this was not an isolated hiccup but part of a broader cluster of post-release problems tied to the April security cycle.

Background

The core of the problem is straightforward, even if the operational consequences were not. KB5082063, released on April 14, 2026, is a cumulative security update for Windows Server 2025 that Microsoft said included the latest security fixes and improvements. That release also landed amid a broader monthly patch cycle in which Microsoft was trying to close a large number of vulnerabilities, including publicly emphasized zero-days. In other words, administrators were being asked to accept some risk in the name of staying protected, which is exactly why a server-breaking regression is so difficult for Microsoft to manage once it appears.

The new failure mode centered on Local Security Authority Subsystem Service, better known as LSASS, the Windows component that handles authentication and enforces security policy. Microsoft’s release-health documentation and community reports indicate that on affected domain controllers, LSASS could stop responding during startup, which then triggered repeated restarts. In practical terms, that means the server reboots, hits the same fault, and reboots again before it can become useful as a domain controller. That is not just a server crash; it is an identity outage.

The detail that matters most is the environment: the issue was tied to non-Global Catalog domain controllers in forests that use PAM. That makes the problem narrower than a full fleet-wide Windows bug, but narrower is not the same thing as small when the target is authentication infrastructure. If a DC cannot start cleanly, users may not log in, services may not obtain tickets, and downstream systems can quickly start failing for reasons that look unrelated at first glance. The Microsoft Q&A threads are blunt on this point: administrators were told to contact Microsoft Support for business to obtain the mitigation.

This is also not the first time Microsoft has had to publish a rapid follow-up for a server-side authentication issue. Microsoft’s own support pages for earlier Windows Server releases show a recurring pattern of LSASS instability on domain controllers, and the current episode arrives after several other recent Windows servicing incidents that required a correction outside the normal monthly rhythm. That history matters because it changes how IT teams read Patch Tuesday: no longer as a tidy monthly event, but as a potentially multi-stage deployment cycle with hidden traps.

Why domain controllers are different

A workstation that loops after an update is annoying. A domain controller that loops can become a business continuity incident. DCs sit at the center of Kerberos, directory lookups, group policy, and privileged access workflows, so any startup fault can cascade across the forest. That is why Microsoft’s own guidance and release-health materials tend to treat DC regressions with more urgency than ordinary client bugs.

The practical distinction is simple:

- A workstation issue affects one user or one device.

- A domain controller issue can affect an entire domain.

- An LSASS crash during boot can stop authentication before the server is even reachable.

- PAM-enabled environments are especially sensitive because privileged workflows depend on the same directory plumbing.

- Recovery often requires hands-on intervention, not a casual reboot cycle.

What Microsoft Says Changed

Microsoft’s emergency follow-up came in the form of out-of-band updates, a servicing path the company uses when waiting for the next Patch Tuesday would be too disruptive. For Windows Server 2025, the fix is KB5091157, and for Windows Server 2022, the corresponding hotpatch/out-of-band correction is KB5091575. Microsoft says both are available through Windows Update, the Microsoft Update Catalog, and WSUS, which is important because it gives enterprise admins multiple ways to pick up the fix without waiting for the next scheduled cumulative release.

The support note for the hotpatch release is especially revealing because it describes the exact symptom chain: after installing the April 14 security update and restarting, domain controllers with multi-domain forests using PAM might experience startup issues, LSASS may stop responding, and repeated restarts can prevent authentication and directory services from functioning. That language makes clear that Microsoft is not treating this as an ambiguous compatibility gripe; it is acknowledging a structured failure path in a supported configuration.

The OS build numbers also matter. Microsoft lists KB5091157 as build 26100.32698 for Server 2025 and KB5091575 as build 20348.5024 for Server 2022. Those build markers are the practical anchor for administrators managing mixed estates, because they determine which machines have the fix and which remain exposed. In large environments, the build number is often more useful than the marketing label.
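As a rough illustration of using those build markers in an inventory check, the sketch below compares a reported OS build string against the two fixed builds quoted above. The function name `has_oob_fix` is hypothetical, and the comparison assumes that servicing is cumulative, so that any revision at or above the out-of-band build on the same branch carries the fix. Branches not named in the article return `None` rather than a guess.

```python
from typing import Optional

# Build numbers quoted in the article for the out-of-band fixes.
FIXED_BUILDS = {
    26100: 32698,  # Windows Server 2025, KB5091157
    20348: 5024,   # Windows Server 2022, KB5091575
}


def has_oob_fix(os_build: str) -> Optional[bool]:
    """Return True/False for the two known branches, None for any other branch.

    Accepts "26100.32698" as well as the longer "10.0.26100.32698" form;
    only the last two numeric components are used.
    """
    parts = os_build.strip().split(".")
    build, revision = int(parts[-2]), int(parts[-1])
    fixed_revision = FIXED_BUILDS.get(build)
    if fixed_revision is None:
        return None  # Branch not covered by the fixes named in the article.
    # Assumption: updates are cumulative, so a revision at or above the
    # out-of-band build on the same branch includes the fix.
    return revision >= fixed_revision
```

Feeding each domain controller's reported build through a check like this gives a quick three-way split of a mixed estate: fixed, still exposed, or on a branch that needs separate guidance.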

Why an out-of-band patch instead of a rollback?

Microsoft did not simply retract KB5082063, and that decision is telling. The April update patched a large set of vulnerabilities, and Microsoft’s support page indicates it was part of a broader security-release wave rather than a narrow optional fix. Pulling it outright would have created its own security exposure, especially because Microsoft and its support ecosystem were already drawing attention to actively exploited threats in the same cycle. The company appears to have judged that a surgical correction was safer than undoing the whole security rollout.

That tradeoff is increasingly common in Windows servicing. Microsoft has to balance two risks at once: leaving a serious vulnerability unpatched, or shipping a patch that destabilizes core infrastructure. The fact that it chose an out-of-band fix rather than a full rollback suggests the underlying security posture of KB5082063 remained important enough that Microsoft wanted administrators to stay on a patched track.

The LSASS Failure Mode

LSASS is one of those Windows components that most users never think about until something goes wrong. It manages local security authority functions, credential validation, and policy enforcement, which means it is tightly bound to the boot process on servers that act as identity providers. When LSASS crashes early in startup, the machine may never progress to a stable state, which is why the symptoms here were so severe.

Microsoft’s April guidance indicates that the affected servers were non-Global Catalog DCs in multi-domain forests using PAM. That suggests the failure path is likely connected to specific directory-role interactions rather than a universal kernel bug. It also means the bug can be highly environment-dependent, which is one of the hardest classes of Windows problems to debug because the same update can appear healthy in one forest and fatal in another.

What administrators actually saw

The visible symptoms were ugly and operationally noisy. Some DCs would restart repeatedly, some would fail during new DC provisioning, and some would fall over when they started processing authentication requests early in the boot sequence. That creates a dangerous mix of predictability and randomness: the server may appear fine until a restart, then suddenly become unusable.

The real-world consequences were not subtle:

- Users could not authenticate.

- Services depending on AD could stall.

- New DC setup could fail mid-process.

- Troubleshooting time increased because the machine was trapped in a loop.

- Recovery often required manual intervention or temporary rollback.
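For teams that want to catch the repeated-restart symptom automatically, one possible approach is to look for several boots packed into a short span. The sketch below is illustrative only: the function name, the 30-minute window, and the three-boot threshold are all assumptions rather than anything Microsoft documents, and the boot timestamps are assumed to come from whatever monitoring already records them.

```python
from datetime import datetime, timedelta
from typing import Sequence


def looks_like_reboot_loop(
    boot_times: Sequence[datetime],
    window: timedelta = timedelta(minutes=30),  # Assumed window, tune per site.
    threshold: int = 3,                         # Assumed boot count, tune per site.
) -> bool:
    """Return True if at least `threshold` boots fall within any `window` span."""
    ordered = sorted(boot_times)
    start = 0
    for end in range(len(ordered)):
        # Advance the left edge until the span between the oldest and
        # newest boot under consideration fits inside the window.
        while ordered[end] - ordered[start] > window:
            start += 1
        if end - start + 1 >= threshold:
            return True
    return False
```

A detector like this has to run somewhere other than the affected server, since a domain controller stuck in a loop may never stay up long enough to report on itself.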

Scope Across Windows Server Versions

One of the more important aspects of the story is that the affected platform range was broad. The reboot-loop issue was reported across Windows Server 2025, Windows Server 2022, Windows Server 2019, Windows Server 2016, and Windows Server version 23H2. Even if the precise trigger conditions varied by edition, that broad footprint underlines how deeply this bug touched the server line.

That said, the immediate fixes Microsoft announced were version-specific. KB5091157 addresses Windows Server 2025, while KB5091575 is for Windows Server 2022. The fact that Microsoft published dedicated packages rather than a single universal remediation reflects the realities of Windows servicing: different branches, different baselines, different cumulative-package dependencies. In enterprise patch management, one size fits all is usually a fiction.

Enterprise versus consumer impact

Microsoft says the issue does not affect personal devices and unmanaged consumer PCs, which is an important distinction because it contains the public-facing blast radius. But that does not make the bug less serious. In modern Windows estates, the most fragile and most valuable systems are often the ones running the domain, not the ones sitting on a desk. A consumer bug is inconvenient; a DC bug is existential for operations.

The impact split looks like this:

- Consumers: little to no direct effect from this issue.

- Managed servers: potential startup failure after patching.

- Directory services: risk of broad authentication outages.

- IT teams: increased recovery and validation workload.

- Security teams: pressure to keep the security update in place if possible.

Why KB5082063 Was So Hard to Ignore

This story would be easier if KB5082063 were just a problematic update with modest security value. It was not. Microsoft’s April 14 release addressed a large number of vulnerabilities, including two that were publicly described as actively exploited zero-days. That is the central tension: the same update that caused the reboot-loop problem also materially improved security posture. In a world where defenders are already racing exploit timelines, a full rollback is rarely an attractive option.

This is why Microsoft did not simply tell everyone to uninstall the patch and wait. If an update blocks authentication on critical servers, there is obvious operational pain. But if that same update also closes actively exploited holes, leaving it off the fleet can expose organizations to a different, and potentially more dangerous, class of incident. Administrators are often forced into a grim choice between availability now and security now.

The patch-management tradeoff

The episode exposes the downside of modern cumulative servicing. Microsoft bundles fixes aggressively because bundling reduces fragmentation, but the cost is that one flawed change can drag a large amount of business risk into the same release. That makes testing more important, but it also makes testing harder, because real-world server topologies are messy and not easy to reproduce in a lab.

A concise way to think about it is this:

- Microsoft ships a broad security update.

- A niche but high-impact server role breaks.

- Administrators must decide whether to keep the patch, remove it, or apply the out-of-band fix.

- The resolution path depends on business tolerance for downtime and exposure.

- The original monthly cycle is no longer the whole story.

A Familiar April Problem for Microsoft

The most uncomfortable part of the story is its pattern. The April 2026 issue is not the first time a spring update has damaged a Windows Server identity workload, and Microsoft’s release history shows a series of prior DC problems that needed separate remediation. Microsoft’s own support pages and release-health material point to a broader history of LSASS-related or authentication-related regressions on server builds. That makes this look less like a one-off accident and more like a recurring pressure point in the servicing pipeline.

That does not mean Microsoft is ignoring the issue. In fact, the quick publication of an emergency fix is evidence of responsiveness. But repeated incidents do affect perception. Every time a domain-controller update needs special handling, administrators become a little less willing to trust the baseline release and a little more dependent on release-health notes, hotfix guidance, and community verification.

The March-to-April-to-April cadence

Microsoft’s recent update history shows a rough rhythm that has become easy for admins to recognize:

- A Patch Tuesday update ships.

- A niche server-side issue appears shortly afterward.

- Microsoft posts release-health guidance.

- An out-of-band package or targeted correction follows.

- The next month’s patch cycle inherits the confidence problem.

Enterprise Response and Operational Lessons

For IT teams, the immediate takeaway is not panic but sequencing. Microsoft’s support pages make clear that the affected platforms are server builds used in identity infrastructure, not consumer desktops, so the response needs to be aimed at production directory services rather than general endpoint cleanup. That means patch rings, staged rollout, recovery planning, and validation of every DC role in every forest.

The out-of-band fixes also illustrate a growing reality of Windows administration: servicing is becoming a release-engineering exercise. Administrators are no longer just applying updates; they are evaluating build branches, role sensitivity, dependency paths, and recovery options. Microsoft’s willingness to publish emergency updates is helpful, but it also assumes a level of operational maturity that smaller shops may not always have.

Practical lessons from the incident

Several lessons stand out from the April 2026 event:

- Test server updates against real identity roles, not just generic VM images.

- Track DCs separately from the rest of the server fleet.

- Monitor release-health pages as part of patch governance.

- Preserve a recovery path before deploying security cumulative updates.

- Treat PAM-enabled forests as higher-risk for regression testing.

- Keep rollback and remediation steps documented and rehearsed.
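Tracking DCs separately and treating PAM-enabled forests as higher risk can be expressed as a simple inventory filter. The sketch below is a minimal example under stated assumptions: the `DomainController` record and its field names are hypothetical, and the trigger conditions simply mirror the combination the article attributes to Microsoft's guidance (a non-Global Catalog DC in a multi-domain forest that uses PAM).

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class DomainController:
    # Hypothetical inventory record; the field names are illustrative.
    name: str
    is_global_catalog: bool
    forest_uses_pam: bool
    forest_is_multi_domain: bool


def matches_reported_trigger(dc: DomainController) -> bool:
    """True when a DC matches the configuration reported as affected."""
    return (
        not dc.is_global_catalog
        and dc.forest_uses_pam
        and dc.forest_is_multi_domain
    )


def split_by_risk(
    dcs: List[DomainController],
) -> Tuple[List[DomainController], List[DomainController]]:
    """Split an inventory into (needs dedicated validation, standard ring)."""
    flagged = [dc for dc in dcs if matches_reported_trigger(dc)]
    standard = [dc for dc in dcs if not matches_reported_trigger(dc)]
    return flagged, standard
```

Keeping the trigger test in its own function means the rule can be updated in one place if Microsoft's description of the affected configuration changes.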

Broader Implications for Microsoft Servicing

There is a bigger story behind KB5082063 than a single bad update. Microsoft is trying to run Windows more like a modern cloud service, with faster corrections, narrower emergency packages, and more explicit release-health communication. That is a rational response to a more dangerous threat environment, but it also means the platform now behaves like a live service with all the operational baggage that implies.

That shift has benefits. It lets Microsoft respond faster to severe regressions and ship targeted fixes without waiting for the next monthly cycle. But it also creates a new expectation: that every serious issue will be followed by a second release, a support note, or a mitigation thread. The cadence is more agile, but it is also more fragile because customers now have to track more moving parts.

Why this matters competitively

From a market perspective, reliability is part of Microsoft’s platform promise. Enterprise buyers do not just purchase Windows Server for features; they buy into trust, identity, and operational predictability. Every patching incident chips away at that trust, even when Microsoft ultimately fixes the problem quickly. Rivals in cloud and identity infrastructure benefit whenever Microsoft appears to be struggling with the basics of update stability.

At the same time, the scale of Microsoft’s installed base makes perfection unrealistic. The more code paths, server roles, and hybrid configurations the company supports, the more likely an obscure combination will fail in the wild. The practical question is not whether bugs occur, but how quickly Microsoft identifies them, communicates them, and ships a repair. On that score, this incident is mixed but not catastrophic.

Strengths and Opportunities

Microsoft deserves credit for moving quickly once the reboot-loop problem was confirmed. The emergency fix was published as an out-of-band response, and the company made it available through the usual enterprise channels, which gives administrators flexibility in how they deploy it. That kind of responsiveness is essential when the affected systems are authentication servers and not ordinary endpoints.

- The fix arrived as an out-of-band correction rather than waiting for the next monthly cycle.

- Microsoft provided multiple deployment paths, including Windows Update and WSUS.

- The issue was documented clearly enough for admins to identify the affected role.

- Separate builds for Server 2025 and Server 2022 reduce ambiguity.

- Microsoft preserved the security value of KB5082063 instead of simply discarding it.

- Release-health guidance helps support teams triage faster.

- The response shows Microsoft can still react quickly when infrastructure is at risk.

Risks and Concerns

The biggest concern is that this is becoming a pattern rather than an anomaly. When critical server updates repeatedly affect domain controllers, administrators begin to expect the unexpected, and that expectation has a cost. It slows deployments, complicates compliance, and increases the burden on teams that are already short on time.

- Repeated DC regressions can erode trust in Patch Tuesday.

- Narrow fixes can still be painful if they hit identity infrastructure.

- Multi-domain PAM environments are operationally fragile.

- Emergency patches increase the number of moving parts in a fleet.

- Smaller IT teams may miss mitigation guidance or deploy out of order.

- The need for targeted hotfixes can complicate version control.

- A security fix that destabilizes identity services creates a hard tradeoff.

Looking Ahead

The next few weeks will tell us whether this remains a narrowly scoped server issue or becomes another case study in fragile identity servicing. If KB5091157 and KB5091575 hold up across mixed environments, the story will settle into the familiar pattern of a bad update followed by a targeted repair. If more edge cases emerge, Microsoft may need to extend the guidance further or publish additional corrections for adjacent Server branches.

Administrators should watch for three things in particular: whether Microsoft broadens the fix into later cumulative releases, whether additional mitigation notes appear for older Server versions, and whether release-health language changes from “known issue” to “resolved issue.” Those signals will determine whether this becomes a short-lived incident or a longer servicing headache. For now, the best assumption is that Microsoft has contained the immediate reboot-loop problem, but not the broader confidence issue around April 2026 server patching.

- Confirm whether your DCs are on the affected builds.

- Prioritize PAM-enabled forests for validation.

- Deploy the out-of-band update through a controlled ring.

- Monitor directory service health after every reboot.

- Keep a rollback plan available until the fix proves stable.

- Review Microsoft release-health notes before the next monthly rollout.

- Separate workstation patch policy from DC patch policy.

Source: Notebookcheck Microsoft fixes KB5082063 Windows Server domain controller reboot loops