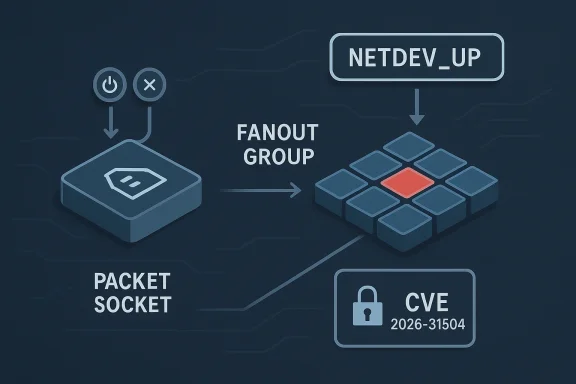

Linux has published another network-stack security fix that underscores how small lifetime bugs can become serious kernel problems. In CVE-2026-31504, the issue is a use-after-free risk in the packet socket fanout path, where a NETDEV_UP race can re-register a socket into a fanout group after packet_release() has already begun tearing it down. The danger is subtle but real: the socket can be put back into f->arr[] without a matching reference increment, leaving a dangling pointer behind in the fanout array. The upstream fix is intentionally narrow, and that is usually a good sign in kernel security work, because it aims to close the race without reshaping the surrounding network machinery.

Background

Packet sockets sit close to the metal in Linux networking. They are used for low-level capture, filtering, fanout, and other operations where raw packet handling matters more than higher-level protocol convenience. That makes their teardown paths especially sensitive, because the code must coordinate socket lifetime, device state, and notifier-driven events without leaving stale state behind. The more concurrency a subsystem has, the more dangerous it becomes when cleanup logic assumes the world has already stopped moving.

The CVE description points to a specific race window in packet_release(). The function does not zero po->num while holding bind_lock, so after the lock is dropped, the socket still looks bound to the device. If a concurrent packet_notifier(NETDEV_UP) has already found that socket in sklist, it can re-link the socket into the fanout group by calling __fanout_link(sk, po). The result is especially awkward for security analysis: f->arr[] gains a pointer to a socket that no longer has the expected ownership bookkeeping, because the re-registration path does not increment f->sk_ref.

This matters because fanout arrays are not just convenience lists. They are live ownership structures. If a socket is present in the array after release, later code may dereference a pointer that should already be dead. In practical terms, that is the sort of bug that can turn routine interface churn into a kernel crash, memory corruption, or a hard-to-reproduce reliability failure. The bug was reportedly found after an additional audit using Claude Code, based on lessons from CVE-2025-38617, which is a reminder that one lifetime bug often exposes nearby assumptions in the same subsystem.
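The race window described above can be replayed deterministically in a toy model. The sketch below is plain Python, not kernel code: the class and function names (Fanout, PacketSock, release_locked_section, notifier_netdev_up, release_tail) are illustrative stand-ins, and locking is represented only by the order of the calls. It assumes, following the CVE description, that the notifier re-links any socket whose po->num is still non-zero and that the re-link updates f->arr[] and f->num_members but not f->sk_ref.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(eq=False)
class Fanout:
    arr: List["PacketSock"] = field(default_factory=list)  # models f->arr[]
    num_members: int = 0                                   # models f->num_members
    sk_ref: int = 0                                        # models f->sk_ref


@dataclass(eq=False)
class PacketSock:
    num: int                         # models po->num (non-zero while bound)
    fanout: Optional[Fanout] = None  # models po->fanout
    running: bool = False            # is the hook currently registered?
    freed: bool = False              # set once release has finished


def fanout_link(sk: PacketSock, f: Fanout) -> None:
    """Models __fanout_link(): array membership only, no sk_ref increment."""
    f.arr.append(sk)
    f.num_members += 1
    sk.running = True


def fanout_unlink(sk: PacketSock, f: Fanout) -> None:
    f.arr.remove(sk)
    f.num_members -= 1
    sk.running = False


def fanout_add(sk: PacketSock, f: Fanout) -> None:
    """Joining a group takes one group reference, then links the socket."""
    sk.fanout = f
    f.sk_ref += 1
    fanout_link(sk, f)


def release_locked_section(sk: PacketSock) -> None:
    """Buggy release, part 1: unhook under bind_lock but leave po->num set."""
    if sk.running:
        fanout_unlink(sk, sk.fanout)


def notifier_netdev_up(sk: PacketSock) -> None:
    """NETDEV_UP handler: re-register any socket that still looks bound."""
    if sk.num and not sk.running and sk.fanout is not None:
        fanout_link(sk, sk.fanout)


def release_tail(sk: PacketSock) -> None:
    """Buggy release, part 2: drop the group reference and free the socket."""
    f, sk.fanout = sk.fanout, None
    f.sk_ref -= 1
    sk.freed = True


group = Fanout()
sock = PacketSock(num=0x0003)  # any non-zero value works for the model
fanout_add(sock, group)

release_locked_section(sock)   # release has dropped bind_lock at this point
notifier_netdev_up(sock)       # the notifier wins the race and re-links
release_tail(sock)             # release finishes; nothing unlinks again

dangling = [s for s in group.arr if s.freed]
print(len(dangling), group.num_members, group.sk_ref)  # prints: 1 1 0
```

In this model the group ends with one listed member and zero references, which is the mismatch the CVE text describes.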

The broader pattern is familiar to anyone who follows Linux networking security. Kernel CVEs often begin as race-condition fixes that look small in isolation but matter because the code sits in a hot path or a broadly shared infrastructure layer. Even when a bug is not obviously weaponized, the kernel community tends to track it once the fix exists, because visibility helps downstream maintainers and vendors backport the right change. That cautious approach is part of why the Linux kernel security process remains useful: it treats risk as an operational fact, not just a completed exploit chain.

Another important point is that the affected path is not exotic. Packet sockets and device notifiers are foundational pieces of networking, and fanout is used in environments that care about throughput and packet distribution. When a bug lands in this layer, the effect can be broader than the immediate code path suggests, because the failure may surface as an unrelated panic, a networking stall, or strange behavior during device state transitions. That kind of indirection is exactly why race bugs in the network stack get serious attention.

What the Bug Does

At the heart of CVE-2026-31504 is a mismatch between teardown and re-registration. The socket release path begins cleanup, but it leaves po->num non-zero long enough for a concurrent NETDEV_UP notifier to believe the socket is still eligible for linkage. Once that happens, the socket can be added back into the fanout group even though release logic is already proceeding. In other words, the system is trying to free the object while another path is quietly putting it back on the roster.

Why po->num matters

The CVE text makes a precise point: the fix sets po->num to zero while bind_lock is still held. That choice is not cosmetic. It removes the stale signal that tells the notifier path the socket is still associated with the bound device. By zeroing the field before the lock is released, the release path closes the window in which NETDEV_UP can make the wrong decision. That is a classic kernel hardening move: invalidate state at the earliest safe point so a concurrent observer cannot see half-true data.

The significance is not just correctness. In a concurrent subsystem, stale state is often more dangerous than obviously missing state because it can look legitimate to another thread or notifier. A zeroed field clearly says “this association is gone.” A non-zero field says “please keep considering me,” which is the wrong message once teardown has started. The bug is therefore as much about truthfulness of state as it is about locking.

How the race becomes a lifetime bug

The reported re-link path calls __fanout_link(sk, po), which adds the socket back into f->arr[] and increments f->num_members, but does not increment f->sk_ref. That detail is the crux of the vulnerability. The object is visible again, but the reference accounting is incomplete, so the lifetime model no longer matches the structure contents. That is how a race in control flow becomes a dangling pointer problem later on.

A fanout group assumes that membership and reference ownership line up. Once they diverge, cleanup code may free the socket while the array still points at it, or later consumers may walk the array and touch an object that no longer exists. This is exactly the kind of bug that can sit undetected until an unlucky timing sequence turns it into a panic. It is also why lifetime bugs in networking are treated seriously even when the immediate report is “just” a race.

- packet_release() leaves a stale bound-device signal in po->num

- NETDEV_UP can race in and re-link the socket

- __fanout_link() restores array membership without full reference bookkeeping

- fanout_release() does not clean up the reintroduced pointer

- The end state is a dangling socket pointer in the fanout array
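The end state in the list above is one that a simple consistency check would flag. The following is a hypothetical audit helper over a simplified Python model, not a kernel API: the Sock and Group classes and the audit function are invented for illustration. It assumes that every socket in the group holds exactly one group reference, so linked members should never outnumber references, and that every linked entry should still point back at its group.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(eq=False)
class Sock:
    name: str
    fanout: Optional["Group"] = None  # models po->fanout
    freed: bool = False               # True once release has completed


@dataclass(eq=False)
class Group:
    arr: List[Sock] = field(default_factory=list)  # models f->arr[]
    sk_ref: int = 0                                # models f->sk_ref


def audit(group: Group) -> List[str]:
    """Return readable violations of the membership/ownership invariant."""
    problems = []
    if len(group.arr) > group.sk_ref:
        problems.append(
            f"linked members ({len(group.arr)}) exceed "
            f"group references ({group.sk_ref})"
        )
    for sk in group.arr:
        if sk.fanout is not group:
            problems.append(f"{sk.name}: linked but holds no group reference")
        if sk.freed:
            problems.append(f"{sk.name}: dangling pointer to a freed socket")
    return problems


# Healthy group: two linked members, two references.
a, b = Sock("a"), Sock("b")
healthy = Group(arr=[a, b], sk_ref=2)
a.fanout = b.fanout = healthy

# State after the race: "d" was released, then re-linked by the notifier.
c, d = Sock("c"), Sock("d", freed=True)
broken = Group(arr=[c, d], sk_ref=1)
c.fanout = broken

print(audit(healthy))
for line in audit(broken):
    print(line)
```

The healthy group produces an empty report, while the post-race group is flagged three times: once for the count mismatch and twice for the re-linked socket.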

Why This Is a Security Issue

This CVE is best understood as a use-after-free enabler rather than a pure logic bug. The kernel’s networking stack is full of concurrent state transitions, and anything that lets stale pointers survive release logic can destabilize the system. Even if exploitability is not publicly characterized in detail, a bug like this can produce crashes, corruption, or an opening for more advanced abuse depending on surrounding conditions.

Lifetime bugs in hot paths are never trivial

Networking paths are heavily optimized and heavily exercised. That combination makes them attractive for attackers and painful for operators, because the same code that maximizes throughput also tends to have delicate ownership rules. A bug in a release path is particularly worrisome because cleanup code is supposed to be the final authority on object lifetime. If cleanup can be undermined by a notifier race, confidence in the whole object model drops.

The issue also illustrates a broader kernel-security truth: not every CVE needs to be a remote code execution primitive to matter. Some vulnerabilities are about stability, reliability, and the elimination of races that might later serve as exploit building blocks. In production, a kernel panic on a network-heavy host can be catastrophic even when no attacker can reliably weaponize the flaw. That is why administrators should not dismiss use-after-free races simply because the public disclosure sounds narrow.

Why the notifier path is dangerous

The presence of NETDEV_UP in the bug description is telling. Device-up transitions are routine, which means the vulnerable condition can arise during ordinary operational churn rather than only in contrived lab scenarios. That broadens the threat model: a host bringing interfaces up and down, automated orchestration touching NIC state, or dynamic device management can all contribute to the race. Bugs that can be reached through normal maintenance activity tend to be more operationally significant than their terse descriptions suggest.

This is also why kernel teams are careful to close race windows with state changes inside the protected section rather than after the fact. If a notifier can observe stale state, it can make a decision that no longer reflects reality. Once that happens, reference accounting and membership lists drift apart, and the system is left to discover the mismatch only when something later dereferences the wrong pointer.

- The flaw affects object lifetime, not just a harmless counter

- The race is reachable during ordinary network state transitions

- Dangling pointers in kernel arrays are serious even before exploitation is proven

- Network hot paths amplify the operational impact of small bugs

- Release-path correctness is a core security boundary in the kernel

The Fix and Why It Works

The fix is refreshingly small: set po->num to zero in packet_release() while bind_lock is still held. That change removes the stale condition that allowed NETDEV_UP to re-register the socket into the fanout group. By the time other paths can observe the object, the release state is already unambiguous, and the notifier no longer has a misleading signal to follow.

A surgical change, not a redesign

Kernel maintainers generally prefer fixes that change as little as possible while eliminating the race. That is especially true in network code, where unnecessary churn can introduce new regressions. Here, the patch does not alter the fanout architecture, the notifier framework, or the broader packet socket model. It simply makes teardown state consistent before the lock is released, which is the kind of targeted correction that tends to backport well.

The elegance of the patch is that it changes the meaning of the state before any concurrent observer can misread it. That is often the right answer in kernel concurrency work. Rather than adding heavier locking or redesigning the fanout lifecycle, the fix eliminates the race window by making the state machine honest. That is exactly the sort of intervention maintainers like to see when the underlying bug is localized.

Why zeroing is enough

The key insight is that NETDEV_UP only needs a reliable signal that the socket is still bound and eligible for linkage. Once po->num is zero, the notifier should no longer treat it as a valid re-registration target. In other words, the bug did not require a complicated protocol fix; it required a stronger invariant at the boundary between release and notifier execution. That makes the vulnerability tractable and the remediation easier to reason about.

This is also a good example of how small concurrency bugs can hide behind apparently harmless fields. A value that looks like bookkeeping may in fact be the gate that governs whether a socket can be resurrected during teardown. When that gate is left open too long, the rest of the code follows the wrong branch with perfectly reasonable confidence. That is why the safest fixes in kernel code often look deceptively simple.

- The patch closes the race by invalidating state earlier

- It preserves the existing fanout behavior for valid sockets

- It avoids larger locking or refcount redesigns

- It is likely to be stable-backport friendly

- It targets the root cause rather than the symptom
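The effect of zeroing the field inside the locked section can be illustrated with a small before-and-after comparison. This is a minimal Python sketch under stated assumptions, not the upstream patch: the Sock class, the members list, and the zero_num flag are hypothetical, a threading.Lock stands in for bind_lock, and the notifier is assumed to re-link only when po->num is non-zero.

```python
import threading
from typing import List


class Sock:
    def __init__(self, num: int):
        self.bind_lock = threading.Lock()  # models po->bind_lock
        self.num = num                     # models po->num
        self.running = True                # hook currently registered


def release_locked_section(sk: Sock, members: List[Sock], zero_num: bool) -> None:
    """Unhook the socket; with zero_num=True, also clear num before unlocking."""
    with sk.bind_lock:
        if sk.running:
            members.remove(sk)
            sk.running = False
        if zero_num:
            sk.num = 0  # the fix: invalidate state while the lock is held


def notifier_netdev_up(sk: Sock, members: List[Sock]) -> bool:
    """Re-link only if the socket still looks bound. True means it re-linked."""
    with sk.bind_lock:
        if sk.num and not sk.running:
            members.append(sk)
            sk.running = True
            return True
    return False


def replay(zero_num: bool) -> bool:
    """Run release's locked section, then the notifier; report any re-link."""
    sk = Sock(num=3)
    members = [sk]
    release_locked_section(sk, members, zero_num)
    return notifier_netdev_up(sk, members)


print("unpatched re-links:", replay(zero_num=False))  # True
print("patched re-links:", replay(zero_num=True))     # False
```

Because both paths take the same lock in this model, the notifier either runs before the locked section, in which case release still unlinks the socket, or after it, in which case it sees a zeroed field and does nothing.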

Historical Context

The kernel security community has spent years learning that lifetime bugs are often more important than their first bug reports imply. Many of the most consequential networking fixes start as race conditions, stale-pointer problems, or cleanup mistakes. The reason they keep recurring is simple: the networking stack is one of the few places where throughput, concurrency, and device churn all collide at high speed.

Lessons from nearby kernel CVEs

The reference to CVE-2025-38617 in the disclosure is important because it suggests the fanout race was found during a deeper audit, not by accident. That kind of follow-on analysis is common in the Linux ecosystem, where one vulnerability often reveals nearby assumptions or related cleanup mistakes. Security teams should read that as a warning sign: once a subsystem has one lifetime bug, adjacent paths deserve scrutiny.

That pattern has become a hallmark of modern kernel hardening. Sanitizers, code audits, and carefully scoped fixes routinely uncover bugs that are not dramatic at first glance but are still operationally important. The kernel project’s cautious CVE posture reflects that reality. It would rather track a real race early than let downstream users discover it through crashes in production.

Why the networking stack keeps producing these issues

Networking code is a dense mesh of fast paths and asynchronous events. Device registration, link state changes, packet delivery, teardown, and notifier callbacks do not happen in one clean linear sequence. They overlap, and those overlaps create the narrow windows where one thread sees stale state and another believes a resource is already gone. Race bugs are the price of speed if the invariants are not crystal clear.

That is why fixes like this matter beyond the immediate CVE. They teach the subsystem to express object lifetime more explicitly and earlier in the teardown sequence. Over time, those small corrections reduce the number of ambiguous states that can be observed by concurrent callbacks. It is boring work, but it is the kind of boring work that prevents hard-to-debug production incidents.

- The kernel has a long history of security-relevant lifetime bugs

- One audit often exposes adjacent problems

- Concurrency in networking is inherently tricky

- Early state invalidation is a recurring hardening pattern

- Small fixes often have outsized operational value

Enterprise Impact

For enterprise environments, the real question is not whether the vulnerability exists upstream. It is whether the shipped kernel in production already contains the fix. In Linux security, what matters most is the vendor backport, because many organizations run distribution kernels, appliance builds, or long-term support branches rather than the latest mainline release. That means exposure can persist even after the public fix is known.

Why operations teams should care

Packet socket fanout is used in infrastructure contexts where performance and packet distribution matter. That can include monitoring, capture, and specialized networking workloads where device churn is not rare. If an enterprise host is repeatedly bringing interfaces up or down, or if orchestration layers trigger notifier activity, the race becomes more plausible. The worst case is not theoretical memory corruption alone; it is service interruption, panics, or unstable network behavior on critical systems.

The operational burden is amplified because the symptoms may not point clearly at the fanout path. Teams may see unexplained crashes, transient link issues, or odd behavior in packet-processing components and spend time hunting in the wrong places. That is a classic sign of a kernel lifetime bug: the fault appears far away from the root cause, so diagnosis takes longer than the actual fix.

What makes the enterprise case stronger than the consumer case

On a workstation, packet fanout is usually niche. On servers, appliances, and observability infrastructure, it is much more common. Enterprise systems also tend to run longer uptimes, more automation, and more dynamic interface management, which all increase the probability of hitting a race window. That makes patch priority materially higher in the data center than on an average desktop.

The safest operational stance is to verify the exact build rather than assume a version number tells the whole story. Vendor advisories, stable kernel backports, and package changelogs matter more than the upstream release tag. If a maintenance update includes this fix, it should be treated as a worthwhile deployment even if the public CVE text feels concise.

- Vendor backports matter more than headline kernel versions

- Network-heavy hosts have the clearest exposure

- Orchestration-driven NIC churn raises the odds of hitting the race

- Symptoms may appear as random instability, not a neat security alert

- Appliance and LTS users should verify patch inclusion directly
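One way to act on the advice to check changelogs rather than version numbers is to search the package changelog text for the CVE identifier. The helper below is a hedged sketch: the function name and the sample text are invented for illustration, and it assumes the vendor mentions CVE IDs in changelog entries, which not every distribution does. A missing match is therefore not proof that the fix is absent.

```python
import re
from typing import List

CVE_ID = "CVE-2026-31504"


def changelog_entries_mentioning(changelog: str, cve_id: str = CVE_ID) -> List[str]:
    """Return changelog lines that mention the CVE ID as a whole token."""
    # The lookarounds stop a longer identifier from matching by prefix.
    pattern = re.compile(rf"(?<![\w-]){re.escape(cve_id)}(?!\d)", re.IGNORECASE)
    return [line.strip() for line in changelog.splitlines() if pattern.search(line)]


# Invented sample text for illustration only; not a real vendor changelog.
sample = """\
* example-kernel build A
- net/packet: fix fanout UAF in packet_release() (CVE-2026-31504)
- unrelated fix with a deliberately similar, made-up ID (CVE-2026-315040)
* example-kernel build B
- unrelated change
"""

hits = changelog_entries_mentioning(sample)
print(hits)
```

In practice the input would be the text produced by the distribution's own changelog tooling for the installed kernel package, with the vendor advisory remaining the authoritative reference.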

Consumer and Homelab Impact

Consumers are less likely than enterprises to run packet fanout sockets in a way that hits this bug, but that does not mean the issue is irrelevant. Homelabs, small-office servers, packet-capture setups, and enthusiast networking rigs often use specialized Linux configurations that move them closer to the exposed path. In those environments, the bug is not theoretical; it is simply less widespread.

Where the exposure is most likely

A system that uses custom capture tooling, low-level network experimentation, or repeated interface state changes could be closer to the vulnerable condition than a typical laptop. The important distinction is that the bug does not require a sophisticated attacker to exist. It is a kernel race in a common subsystem, which means ordinary administrative actions may be enough to surface it. That is one reason it should still be tracked in consumer-oriented patch cycles.

For many individual users, the practical response is straightforward: install kernel updates from the distribution that include the fix, especially if the machine doubles as a home server or test box. If the system never touches packet fanout or similar low-level network features, the risk is lower, but kernel maintenance is still the prudent path because updates usually carry more than one security correction. Low blast radius is not the same as no risk.

Why enthusiasts should still pay attention

The modern Linux ecosystem encourages experimentation. That means a “consumer” machine can suddenly become a router, sniffer, lab host, or virtualization node. Once that happens, the line between consumer and infrastructure shrinks quickly. A bug that was irrelevant on a desktop can become very relevant once the same kernel is handling more dynamic networking tasks.

The practical advice is simple: if your machine runs custom networking features, treat this as a real update item. If it does not, keep an eye on your distribution’s security errata anyway, because backported kernel fixes often arrive bundled. Either way, this is a good reminder that specialized kernel paths are still part of the security surface.

- Homelabs and small servers may be closer to the affected path than they think

- Packet capture and experimentation setups deserve attention

- Normal admin actions can be enough to trigger the race

- Kernel patch bundles often include fixes beyond one headline CVE

- A lower blast radius does not mean zero operational risk

Strengths and Opportunities

The good news is that the bug appears to have a clean fix surface. The patch does not force a redesign, and that usually makes it easier for maintainers to review and for vendors to backport. It also gives defenders a concrete condition to validate: if the kernel zeroes po->num before releasing the bind lock, the obvious race window is gone. That is the kind of crisp mitigation story operators appreciate.

- Minimal code change, low collateral risk

- Clear race closure at the right lock boundary

- Good candidate for stable and vendor backports

- Improves ownership determinism in a hot path

- Reinforces correct lifetime semantics for fanout sockets

- Gives security teams a precise audit point

- Likely to blend cleanly into routine kernel maintenance

Risks and Concerns

The main concern is that this is a race, which means it may be hard to reproduce and easy to underestimate. Systems that look stable during ordinary testing can still carry the flaw until a specific interleaving of notifier activity and release timing occurs. That makes the vulnerability especially annoying in production, where operators often have to triage symptoms without a clean reproducer.

- Intermittent failures can hide the root cause

- Vendor kernels may lag behind upstream fixes

- Network symptoms may be misattributed to other drivers or services

- The affected path may be overlooked in asset inventories

- Downtime can occur before anyone identifies the fanout race

- Stale pointers in kernel arrays can have wider consequences than expected

- Backports may vary across distribution lines and appliance images

What to Watch Next

The most important next step is backport visibility. The public fix exists, but what matters operationally is which stable, enterprise, and distribution kernels have already absorbed it. That is where exposure will either shrink quickly or linger longer than administrators expect. In practice, the kernel version on paper matters less than the exact vendor patch level.

Follow-up signals to monitor

- Vendor advisories that explicitly list the fanout UAF fix.

- Stable-tree backports for supported long-term Linux kernels.

- Any follow-up audit results touching packet socket teardown.

- Reports of crashes or instability in environments with active device churn.

- Security tooling updates that map the CVE to fixed package builds.

Another thing to watch is how quickly downstream maintainers communicate the fix in ordinary package updates rather than separate security-only channels. For many users, especially on managed Linux fleets, that is how the patch will actually arrive. A small but precise kernel change like this often moves quietly through the ecosystem, which is exactly why administrators should verify it rather than assume it will be obvious in release notes.

- Confirm whether your vendor kernel includes the fix

- Track stable-tree and LTS backports

- Watch for related audit findings in packet socket teardown

- Monitor for unexplained crashes during NIC state changes

- Validate patch inclusion through package changelogs, not just version numbers

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center