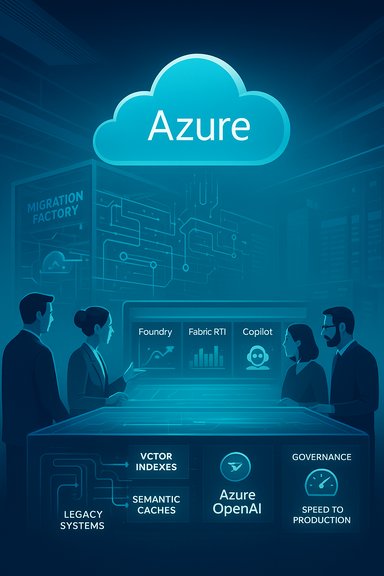

LTIMindtree has formally expanded its global collaboration with Microsoft, positioning itself as a deeper Global System Integrator (GSI) for Azure and promising to accelerate enterprise adoption of Microsoft Azure, Azure OpenAI (via Microsoft Foundry), Microsoft 365 Copilot, and Microsoft Fabric while embedding a full Microsoft security stack into customer programs to drive AI-powered business transformation.

Source: Business Wire India LTIMindtree Strengthens Relationship with Microsoft to Accelerate Microsoft Azure Adoption and Drive AI-Powered Transformation

Background / Overview

LTIMindtree, the combined entity formed from L&T Infotech (LTI) and Mindtree, has sharpened its Microsoft-focused go-to-market and delivery model over the past three years. The new announcement formalizes a 360° alignment with Microsoft, from co-sell and marketplace plays to a dedicated Microsoft Business Unit and a Microsoft Cloud Generative AI Center of Excellence, with the explicit aim of moving customers “from pilots to productivity.” This is not a narrow product tie-up. LTIMindtree’s messaging and Microsoft’s customer case materials show the strategy spans:

- Cloud migration and modernization accelerators that reduce lift-and-shift friction.

- Data modernization using Microsoft Fabric and OneLake as the unified data plane feeding AI systems.

- Production-scale AI using Azure OpenAI in Microsoft Foundry and Copilot integration into business workflows.

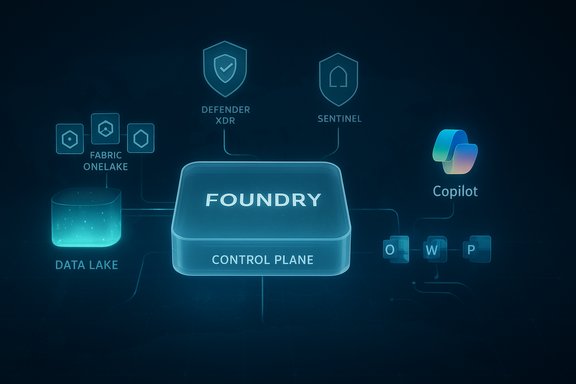

- A security-first operations model built on Defender XDR, Microsoft Sentinel, Intune, Windows Autopatch, and Entra ID.

What the announcement actually says

Core commitments summarized

- A formal Microsoft Business Unit inside LTIMindtree to coordinate joint GTM, co-sell and delivery across Azure and Microsoft 365 stacks.

- A Microsoft Cloud Generative AI Center of Excellence (GenAI CoE) to prototype, govern and scale enterprise-grade generative AI solutions.

- Adoption and integration of Azure OpenAI (via Microsoft Foundry), Microsoft 365 Copilot, and Microsoft Fabric in LTIMindtree IP and client delivery.

- A security-first posture with deployment of Defender XDR, Sentinel, Intune, Windows Autopatch and Entra ID across internal endpoints and as a customer blueprint.

- Commercial mechanisms to accelerate consumption and reduce procurement friction — notably Microsoft Azure Consumption Commitment (MACC) alignment and marketplace listings.

Executive signal

LTIMindtree’s CEO framed the collaboration as a mission to “embed AI into every business process” and accelerate time-to-value; Microsoft’s GSI leadership publicly endorsed the alignment as a move toward responsible, scaled AI adoption. These executive quotes are included in the public release and repeated in company and industry coverage.

Why this matters: market and technical context

Azure as the enterprise AI substrate

Microsoft has purposefully reoriented Azure toward AI-first workloads over the last 18–24 months, introducing Foundry as a model+governance control plane, expanding Azure OpenAI availability, and embedding Copilot into the productivity fabric of Microsoft 365. For systems integrators, a deep alignment with Microsoft now provides not only engineering resources but direct commercial levers: co-sell programs, marketplace distribution and consumption-backed financing. LTIMindtree is explicitly exploiting those levers.

Practical differentiators for customers

- Prebuilt vertical accelerators and delivery IP shorten pilot cycles and reduce integration risk.

- A unified data plane (Microsoft Fabric + OneLake) gives a single source of truth for retrieval‑augmented generation (RAG) pipelines and Copilot grounding.

- A packaged security baseline, if implemented correctly, reduces one of the largest adoption barriers — trust and governance.

Technical analysis: how LTIMindtree plans to build enterprise AI

Architecture patterns signaled in the announcement

- Data foundation: Microsoft Fabric / OneLake as the canonical data store for both analytics and model grounding.

- Retrieval and indexing: Azure AI Search (formerly Azure Cognitive Search) or Fabric-backed vector/semantic indexes to power RAG pipelines.

- Model hosting and governance: Azure OpenAI runtimes surfaced through Microsoft Foundry’s control plane to centralize model choice, routing, and observability.

- Inference and scale: Containerized microservices on AKS or managed inference for custom workloads, with GPU-backed nodes as needed.

- Productivity integration: Microsoft 365 Copilot and Copilot Studio to create business-facing copilots inside Word/Excel/Teams and declarative agents connected to organization data.
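To make the retrieval-and-grounding step in the patterns above concrete, here is a minimal sketch in plain Python. It is not LTIMindtree's or Microsoft's implementation: the bag-of-words `embed` function is a toy stand-in for a real embedding model, the in-memory list stands in for a vector index, and the function names and sample documents are hypothetical. It shows only the shape of a RAG flow: rank stored passages against a query, then assemble a grounded prompt for a model.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: bag-of-words term counts."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, passages: list[str]) -> str:
    """Assemble a grounded prompt; the model call itself is out of scope here."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


# Hypothetical sample passages standing in for governed enterprise data.
DOCS = [
    "invoice approval requires manager sign-off above 10000",
    "travel expenses are reimbursed within 30 days",
    "laptops are patched automatically every month",
]

if __name__ == "__main__":
    question = "when are travel expenses reimbursed"
    print(build_prompt(question, retrieve(question, DOCS, k=1)))
```

In a production design the ranking step would be delegated to a managed index and the prompt passed to a hosted model; the sketch is only meant to show where data quality and index freshness enter the pipeline.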

Operational controls and MLOps

The announcement emphasizes governance-first Copilot rollouts and a security-first stack. These are essential for production reliability:

- Identity and access control (Entra ID) for data and model access.

- Centralized telemetry ingestion into Sentinel and Defender for automated playbooks.

- Endpoint management (Intune and Windows Autopatch) to limit the attack surface and control data exfiltration.

Security and governance: strengths and gaps

Strengths

- LTIMindtree has publicly documented large-scale endpoint consolidation and modern workplace deployments — notably the migration of more than 85,000 endpoints across 40 countries using Intune, Autopatch and Autopilot — evidence of execution capability on identity and endpoint hardening. That operational experience provides a credible foundation for secure Copilot and LLM rollouts.

- Integration of Microsoft Copilot for Security and Sentinel in its SOC demonstrates practical benefits: faster triage and automated response playbooks that can reduce time-to-detect and respond for incidents.

Risks and caution points

- Vendor concentration: embedding Copilot, Foundry/Azure OpenAI, Fabric and the entire Microsoft security stack creates strong platform lock-in. That can simplify engineering and procurement in the short term but reduces vendor portability and negotiating leverage over time.

- Cost visibility for LLM workloads: compute, inference and storage (for vector indexes and OneLake) can balloon unexpectedly; consumption commitments (MACC) help with discounts but can expose customers to overcommitment risk if usage forecasts are optimistic. Microsoft documentation outlines MACC mechanics and eligibility, and customers must model consumption carefully.

- Governance at scale: a governance-first rollout is necessary but not sufficient. Enterprises need auditable data lineage, red-team testing of model outputs, prompt provenance, and external compliance attestations for regulated workloads.

Verification and transparency

Several claims in the press release are verifiable (endpoint migration, internal Copilot adoption and Sentinel/Defender integration) through Microsoft customer stories and independent coverage; other claims, for example being a “featured partner” for Fabric Real-Time Intelligence, appear in the company release and syndicated news articles but lack a clear, independently verifiable entry in publicly browsable Microsoft partner directories at this time. That designation should be considered a company-declared claim until confirmed by Microsoft’s official partner listing.

Commercial implications and procurement mechanics

Azure Consumption Commitment (MACC) use

LTIMindtree lists Microsoft Azure Consumption Commitment (MACC) as a lever to optimize costs and underwrite migration work. MACC lets organizations commit to a specified Azure spend over time and gain marketplace and partner benefits, but it is a contract that must be modeled precisely:

- Define baseline and peak usage and tie commitments to observable KPIs.

- Ensure marketplace purchases are routed through eligible billing flows so they count toward the commitment.

- Include termination and repricing clauses linked to realistic utilization forecasts.
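The modeling points above can be sketched as a simple comparison of a commitment against baseline and peak forecasts. This is an illustration only: the function and field names are hypothetical, the figures are made up, and it does not reflect actual MACC contract terms, which should be taken from the signed agreement and Microsoft's documentation.

```python
from dataclasses import dataclass


@dataclass
class CommitmentCheck:
    committed: float       # total spend committed over the term
    forecast_total: float  # sum of forecast monthly consumption
    shortfall: float       # committed spend not covered by the forecast
    utilization: float     # forecast_total / committed


def check_commitment(committed: float, monthly_forecast: list[float]) -> CommitmentCheck:
    """Compare a consumption commitment with a monthly spend forecast."""
    total = sum(monthly_forecast)
    shortfall = max(0.0, committed - total)
    utilization = total / committed if committed else 0.0
    return CommitmentCheck(committed, total, shortfall, utilization)


if __name__ == "__main__":
    # Hypothetical figures in arbitrary currency units.
    commitment = 1200.0
    scenarios = {
        "baseline": [80.0] * 12,   # steady usage below the commitment
        "peak": [110.0] * 12,      # optimistic usage above the commitment
    }
    for name, forecast in scenarios.items():
        result = check_commitment(commitment, forecast)
        print(f"{name}: utilization={result.utilization:.0%}, shortfall={result.shortfall:.2f}")
```

Running both scenarios side by side makes the overcommitment exposure explicit: if only the peak forecast clears the commitment, the baseline shortfall is the amount at risk.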

When the GSI model helps — and when it doesn’t

- Helps: end-to-end accountability for migrations, security hardening, and binding Copilot/LLM programs to operational SLAs.

- Doesn’t help: when customers require portability across clouds or prefer a multi-cloud model to avoid lock-in; or when customers need bespoke open-source model hosting outside Microsoft’s managed ecosystem.

- Clear cost models for inference, storage and search (vector ops).

- Outcome-based milestones with partial outcome‑linked payments.

- Auditability of data lineage and independent security attestation.

Execution challenges and what enterprise buyers should insist on

Common execution traps

- Treating PoCs as production: many pilots fail because monitoring, cost controls, and governance are only applied at production scale.

- Ignoring data transformation work: Fabric automation can help, but the hard work of aligning schema, cleaning histories, and building semantic indexes is project-intensive.

- Underestimating latency and availability SLAs for retrieval pathways feeding LLMs.
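For the latency trap in particular, a pilot can check measured retrieval times against a budget before go-live. The sketch below uses a nearest-rank percentile; the sample values, the 500 ms budget and the function names are illustrative assumptions, not figures from the announcement.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def meets_sla(samples_ms: list[float], budget_ms: float, pct: float = 95.0) -> bool:
    """True if the chosen percentile of observed latency is within the budget."""
    return percentile(samples_ms, pct) <= budget_ms


if __name__ == "__main__":
    # Hypothetical retrieval latencies in milliseconds; one slow outlier.
    observed = [120, 135, 150, 110, 900, 140, 125, 130, 145, 115]
    print("median ms:", percentile(observed, 50))
    print("p95 ms:", percentile(observed, 95))
    print("meets 500 ms p95 budget:", meets_sla(observed, 500))
```

The point of checking a high percentile rather than the average is that a single slow retrieval path can dominate user-perceived latency even when typical requests look healthy.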

Practical procurement and delivery checklist (recommended)

- Require a staged delivery roadmap: discovery → pilot (governed) → incrementally expanded production lanes with defined KPIs.

- Contractualize cost ceilings and consumption reporting cadence for MACC programs.

- Ask for independent security verification and runbook access (e.g., red-team reports, model output sampling).

- Insist on portability clauses for critical components — exportable vector indexes and data extracts to avoid entrapment.

- Demand training and transfer of runbook ownership to internal teams for long-term operability.

Competitive landscape and strategic positioning

LTIMindtree’s move is consistent with a larger industry pattern: major GSIs are aligning closely with hyperscalers to provide turnkey AI solutions. This is a defensive and offensive strategy: defense, because customers value a single accountable integrator; offense, because GSIs capture much of the professional services margin and can accelerate sales via co-sell incentives.

Key competitive dynamics:

- Other Microsoft-aligned GSIs are also promoting Fabric, Foundry and Copilot accelerators; customers will evaluate delivery credibility and vertical IP (domain expertise), not just partner badges.

- For companies that demand multi-cloud flexibility, specialist cloud‑agnostic integrators or boutique AI firms offering open‑model hosting remain relevant alternatives.

Strengths, weaknesses and the real test

Notable strengths

- Operational proofs exist: LTIMindtree’s Intune/Autopatch consolidation (85k endpoints) and Sentinel/Defender integrations are referenced in Microsoft customer stories and show real field experience.

- Broad, productized offers: the combination of migration factories, Copilot adoption packages, and Fabric-focused data modernization reduces vendor friction for enterprise buyers.

- Security-first narrative: integrating a consolidated Microsoft security stack and Security Copilot can materially improve SOC throughput if executed properly.

Potential weaknesses and open questions

- The Fabric Real‑Time Intelligence “featured partner” claim requires independent confirmation from Microsoft’s partner directories; press releases and syndicated articles alone are insufficient validation. Treat this as a vendor‑declared achievement until verified.

- Execution scale: promises of 170+ distinct services and accelerated Azure consumption are strategic targets — not automatically outcomes. Buyers should insist on measurable, phased delivery tied to KPIs and refunds/escrow for performance misses.

- Cost and governance risk remains high for LLM workloads; the commercial benefits of MACC need to be weighed against the risk of overcommitment and mobility loss.

Field guide for IT leaders evaluating LTIMindtree + Microsoft offers

- Validate references: ask for verifiable case studies (with redacted technical artifacts) that show Fabric ingestion, vector search throughput, and latency SLAs.

- Test the GenAI CoE: require a short, governed pilot with data residency, model selection and MLOps outputs as deliverables.

- Demand clear cost simulations for one, three and twelve months of production at target scale (token volumes, search QPS, storage growth).

- Ask for a security runbook: how Sentinel playbooks, Defender automation, and Entra conditional access connect to Copilot usage logs and DLP controls.

- Negotiate MACC conservatively: use phased commitments with the option to pause or reallocate consumption to avoid stranded spend.
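The cost simulation requested in the checklist above (one, three and twelve months at target scale) could start from a model as small as the following. Every price and workload figure here is a placeholder chosen for illustration; real unit prices must come from the vendor's current price list, and the class and function names are hypothetical.

```python
from dataclasses import dataclass

SECONDS_PER_MONTH = 30 * 24 * 3600  # simplifying assumption: 30-day months


@dataclass
class Workload:
    tokens_per_month: float  # total model tokens processed per month
    search_qps: float        # average retrieval queries per second
    storage_gb: float        # starting storage footprint in GB
    storage_growth: float    # fractional monthly growth, e.g. 0.05 for 5%


@dataclass
class Prices:
    """Placeholder unit prices; not real vendor pricing."""
    per_1k_tokens: float
    per_1k_queries: float
    per_gb_month: float


def cumulative_cost(w: Workload, p: Prices, months: int) -> float:
    """Sum inference, search and storage cost over the given number of months."""
    total = 0.0
    storage = w.storage_gb
    for _ in range(months):
        total += w.tokens_per_month / 1000 * p.per_1k_tokens
        total += w.search_qps * SECONDS_PER_MONTH / 1000 * p.per_1k_queries
        total += storage * p.per_gb_month
        storage *= 1 + w.storage_growth  # storage compounds month over month
    return total


if __name__ == "__main__":
    workload = Workload(tokens_per_month=50_000_000, search_qps=5.0,
                        storage_gb=500.0, storage_growth=0.05)
    prices = Prices(per_1k_tokens=0.01, per_1k_queries=0.002, per_gb_month=0.02)
    for horizon in (1, 3, 12):
        print(f"{horizon:>2} months: {cumulative_cost(workload, prices, horizon):,.2f}")
```

Keeping the three drivers separate makes it easier to see which one dominates at each horizon and to tie phased commitments to the driver that is hardest to forecast.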

Conclusion

LTIMindtree’s expanded collaboration with Microsoft is a concrete example of how systems integrators are reorganizing around hyperscaler AI platforms to help enterprise customers move from experimental pilots to operational AI. The announcement ties together Microsoft’s largest enterprise building blocks (Foundry/Azure OpenAI, Microsoft 365 Copilot and Fabric) with LTIMindtree’s delivery IP, security-first references and commercial levers such as MACC. When executed with discipline, that combination can materially speed time-to-value for enterprise AI programs and reduce integration risk.

However, the promise is not automatic. Buyers must demand independent verification of partner credentials (especially partner-directory designations), insist on auditable KPIs and flexible consumption terms, and treat governance, portability and cost control as first-class contractual requirements. Without those safeguards, the very strengths that make a hyperscaler partnership attractive (integrated tooling, co-sell economics and deep platform features) can become strategic constraints.

Enterprises that pair disciplined procurement, staged technical pilots, and rigorous governance will find the LTIMindtree–Microsoft pathway a compelling route to scale AI. Those that accept marketing claims without contractual and technical guardrails risk unexpected cost inflation, audit exposure and reduced strategic flexibility.
