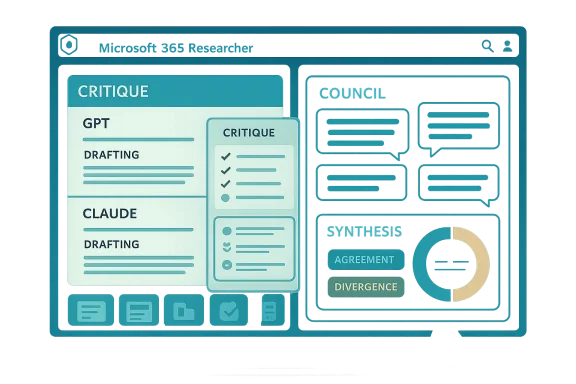

Microsoft’s latest Copilot overhaul is more than a product tweak; it is a quiet admission that one-model dominance is ending. With Critique and Council, Microsoft 365 Copilot’s Researcher agent is now designed to combine OpenAI’s GPT and Anthropic’s Claude in the same workflow, using one model to draft and another to review, compare, or reconcile the output before users see it. The move lands at a moment when enterprises are demanding better trust, better citations, and better governance from AI systems that can no longer rely on raw fluency alone.

Source: H2S Media Microsoft Copilot Now Uses Claude to Review GPT's Work in New Multi-Model Research Feature

Overview

Microsoft’s announcement fits into a broader reset of its Copilot strategy that has been visible for weeks. On March 17, 2026, Satya Nadella said Microsoft was reorganizing Copilot around four connected pillars: Copilot experience, Copilot platform, Microsoft 365 apps, and AI models. That is not just corporate housekeeping. It is a signal that Microsoft now sees model choice, orchestration, and product integration as a single stack rather than separate bets.

The Researcher agent sits near the center of that shift. Microsoft describes Researcher as a deep research assistant built to handle complex, multi-step questions inside Microsoft 365 Copilot, operating within the commercial data processing boundary and following the same privacy and security practices as Copilot itself. That matters because research tasks are exactly where hallucinations, missing citations, and shallow answers become business risks rather than mere annoyances.

The new Critique feature formalizes a familiar human workflow: draft first, audit second. In Microsoft’s framing, one model handles planning, retrieval, and drafting, while another checks source reliability, completeness, and citation accuracy before the result is returned. That is a meaningful design change because it converts trust from a hope into a process, at least in theory.

The equally interesting Council feature goes further by letting multiple models respond to the same prompt in parallel and then using a judge model to synthesize where they agree and diverge. In plain English, Microsoft is saying that disagreement is useful data. For enterprise users, that may be more valuable than a single polished answer, because it exposes uncertainty and reduces the temptation to treat model output as final truth.

Why Multi-Model Copilot Matters

The significance of this change is not just that Microsoft is using Claude alongside GPT. It is that Microsoft is productizing model redundancy as a feature. In a market where OpenAI, Anthropic, and Google keep leapfrogging each other, a single-vendor approach can quickly become a liability if the underlying model shifts, pricing changes, or quality fluctuates.

The enterprise case for redundancy

Businesses rarely want a research tool that sounds confident; they want one that is defensible. A multi-model design gives Microsoft a way to reduce dependence on any one model family while also improving output quality through critique and comparison. That is especially useful in regulated industries, where a citation error or unsupported claim can create legal, compliance, or reputational problems.

There is also a strategic cost-control angle. If Microsoft can route different tasks to different models, it can optimize for quality on high-value jobs and efficiency elsewhere. That aligns with Nadella’s broader emphasis on COGS reduction and model economics in Microsoft’s current AI strategy.

Why this is not just “more models”

Multi-model orchestration is not the same as a simple model picker. The important change is that the models are now being asked to interrogate each other’s output. That shifts Copilot from a single answer engine into a small internal review committee, which is a much more enterprise-friendly metaphor.

- It reduces reliance on a single model family.

- It increases the chance of catching unsupported claims.

- It creates more transparent disagreement between models.

- It gives Microsoft a path to better quality without claiming one model is always best.

- It makes “trust” part of the workflow, not a marketing slogan.

How Critique Works

Critique is the more conservative of the two new approaches, and that is probably why it is the default when users select Auto in the model picker. One model does the initial work, then another model reviews the result before delivery. That resembles a classic editorial process, except automated and compressed into seconds.

The review dimensions

Microsoft says Critique focuses on source reliability, report completeness, and citation accuracy. Those are exactly the pain points that make deep research agents risky in enterprise use. If the sources are weak, the report is incomplete, or the citations do not line up with the claims, the output may be unusable even if it reads well.

This also reveals something important about Microsoft’s priorities. The company is not merely trying to make Copilot more creative or more verbose; it is trying to make it more auditable. That is a very different product philosophy, and one that fits enterprise buyers more naturally than consumer AI hype.

Why critique beats pure generation

The risk with a single-pass research agent is that it can hide uncertainty inside polished prose. Critique forces a second pass, which may not eliminate all mistakes, but it can catch low-quality sources, missing context, or citations that do not support the claim being made. In research workflows, that extra pass may matter more than marginal gains in style.

- Drafting and checking are separated.

- Source quality is evaluated explicitly.

- Citations become a core output, not an afterthought.

- The user sees a more vetted report.

- The system becomes easier to defend internally.
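
The draft-then-review flow described above can be sketched in a few lines of Python. This is only an illustration of the pattern, not Microsoft's implementation: the `Report` and `Review` types, the `critique_pipeline` function, and both stub functions are hypothetical names, and the stubs stand in for real model calls. The three review dimensions are the ones the article attributes to Microsoft.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Review:
    dimension: str  # one of the three review dimensions named in the article
    passed: bool
    note: str = ""


@dataclass
class Report:
    text: str
    citations: List[str]
    reviews: List[Review] = field(default_factory=list)


def critique_pipeline(
    prompt: str,
    drafter: Callable[[str], Report],
    reviewer: Callable[[Report], List[Review]],
) -> Report:
    """Draft with one model, then attach a second model's review."""
    report = drafter(prompt)
    report.reviews = reviewer(report)
    return report


# Hypothetical stubs standing in for real model calls.
def stub_drafter(prompt: str) -> Report:
    return Report(text=f"Findings for: {prompt}", citations=[])


def stub_reviewer(report: Report) -> List[Review]:
    has_citations = len(report.citations) > 0
    return [
        Review("source_reliability", has_citations,
               "" if has_citations else "no sources to assess"),
        Review("report_completeness", bool(report.text.strip())),
        Review("citation_accuracy", has_citations,
               "" if has_citations else "no citations attached"),
    ]


result = critique_pipeline("Q3 market brief", stub_drafter, stub_reviewer)
failed = [r.dimension for r in result.reviews if not r.passed]
print(failed)
```

Keeping the drafter and reviewer as separate callables mirrors the separation of drafting and checking: either side can be swapped for a different model without touching the other.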

Council as a Research Pattern

If Critique is the reviewer, Council is the debate room. Instead of one model reviewing another, multiple models generate independent reports on the same prompt, and a judge model summarizes where they overlap and where they differ. That can be especially useful when a question has no single obvious answer or when the best answer depends on framing.

Parallel reasoning, not serial approval

The main advantage of Council is that it preserves independent reasoning paths. A reviewer can only judge what the first model produced, but parallel outputs can surface different evidence, different assumptions, and different levels of caution. In practice, that may help enterprise users see which parts of a report are stable and which parts are interpretive.

This matters because one of the biggest failures in AI research tools is premature convergence. A model can latch onto an early framing and then defend it elegantly, even when a different framing would be more accurate. Council tries to break that pattern by making divergence visible instead of smoothing it away. That is a subtle but important design choice.

The judge model problem

Of course, Council introduces a new dependency: the judge model. If the judge is weak, biased, or poorly calibrated, it may summarize disagreement in a misleading way. So the feature does not eliminate model risk; it merely relocates it one layer higher.

- Multiple models reduce single-model blind spots.

- The judge model can highlight unique insights.

- Divergence becomes an output, not a bug.

- The workflow is more expensive to run.

- The quality of the summary depends on the judge.
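
A rough sketch of the Council pattern, under loud assumptions: each "model" here is a hypothetical function that returns a set of claim strings, and the "judge" is a plain set comparison rather than a model. The names `run_council` and `judge` are illustrative only. The point is the shape of the workflow: independent parallel runs, then a separate step that reports agreement and divergence.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, Set

# Hypothetical model type: takes a prompt, returns the claims it makes.
Model = Callable[[str], Set[str]]


def run_council(prompt: str, models: Dict[str, Model]) -> Dict[str, Set[str]]:
    """Run every model on the same prompt in parallel, independently."""
    if not models:
        return {}
    with ThreadPoolExecutor(max_workers=len(models)) as pool:
        futures = {name: pool.submit(fn, prompt) for name, fn in models.items()}
        return {name: fut.result() for name, fut in futures.items()}


def judge(reports: Dict[str, Set[str]]) -> dict:
    """Summarize where the reports agree and what each one adds alone."""
    if not reports:
        return {"agreed": set(), "unique": {}}
    agreed = set.intersection(*reports.values())
    unique = {}
    for name, claims in reports.items():
        others = set().union(*(c for n, c in reports.items() if n != name))
        unique[name] = claims - others
    return {"agreed": agreed, "unique": unique}


# Stub models with partially overlapping claims.
models = {
    "model_a": lambda p: {"market grew", "margins fell"},
    "model_b": lambda p: {"market grew", "new entrant"},
}
summary = judge(run_council("Summarize the sector", models))
print(summary["agreed"])
print(summary["unique"])
```

In a real system the judge would itself be a model, which is exactly where the dependency described above enters: the summary is only as good as that final step.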

Benchmarking the New Researcher

Microsoft is backing up the new Researcher approach with benchmark claims, and that is where the story gets especially interesting. The company says it tested Critique on DRACO, a new benchmark for Deep Research Accuracy, Completeness, and Objectivity that was published in February 2026 and built from real deep research use cases.

What DRACO actually measures

DRACO is designed around complex, open-ended research tasks across multiple domains, making it a more realistic test than narrow question-answer benchmarks. It evaluates whether an agent can be accurate, complete, objective, and well-cited under conditions that resemble real-world research work. That is exactly the kind of benchmark enterprise buyers should care about, because it matches how they actually use research tools.

Microsoft says Researcher with Critique scored 57.4 overall when evaluated with GPT-5.2 under the same protocol used in the original benchmark paper. The company says that was a 13.8% improvement over Perplexity Deep Research with Claude Opus 4.6, which Microsoft describes as the previous top performer. Those are strong claims, but they are Microsoft-run tests, so they should be read carefully.

How to interpret the numbers

The key detail is that Microsoft says it used the same evaluation protocol, not the same independent leaderboard. That is fair, but it is not the same as a third-party validation run. So the result is best understood as evidence that the Critique architecture is promising, not as proof that Copilot now owns the category. Benchmark superiority is a snapshot, not a crown.

- The benchmark is relevant because it reflects real research tasks.

- The score improvement suggests the critique layer is doing useful work.

- The evaluation was Microsoft-run, so independent confirmation would still matter.

- The result supports the case for multi-model review.

- The headline is more about architecture than raw model power.
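
One way to read the headline figures carefully is to work out what baseline they imply. The article does not say whether "13.8% improvement" is a relative gain or a gap in percentage points, so the sketch below computes both readings. The function names are illustrative, and neither result is a figure reported by Microsoft; they are arithmetic consequences of each assumption.

```python
def implied_baseline_relative(score: float, improvement_pct: float) -> float:
    """Baseline if the improvement is relative: score = baseline * (1 + pct/100)."""
    return score / (1 + improvement_pct / 100)


def implied_baseline_points(score: float, improvement_points: float) -> float:
    """Baseline if the improvement is an absolute gap in score points."""
    return score - improvement_points


score = 57.4   # Researcher with Critique, as quoted in the article
gain = 13.8    # "13.8% improvement", interpretation not stated

print(round(implied_baseline_relative(score, gain), 1))  # relative reading
print(round(implied_baseline_points(score, gain), 1))    # points reading
```

The two readings imply noticeably different baselines, which is one more reason to wait for independent numbers before treating the gap as settled.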

The Economics of Trust

None of this comes free. Axios reports that Critique costs roughly 20% more than single-model usage, while Council costs about 2.5 times as much. That is a large jump, but enterprise buyers often tolerate higher spend when the output affects decisions, compliance, or customer-facing work.

Paying for verification

The economic logic is straightforward: if AI saves time but occasionally produces costly mistakes, a more expensive system that reduces those mistakes can still win. In other words, the real comparison is not model cost versus model cost; it is workflow cost versus workflow cost.

This is especially true for research tasks that would otherwise take analysts hours to do manually. If Council helps a team avoid one bad executive memo, one flawed market brief, or one mis-cited policy analysis, the extra compute may pay for itself quickly. That is the kind of return on investment enterprise software buyers understand immediately.

Consumer versus enterprise math

Consumers tend to resist higher AI costs because they can tolerate some inaccuracy and they do not operate under formal review chains. Enterprises are the opposite. They are more likely to pay for systems that provide traceability, policy compliance, and a lower chance of embarrassing errors, even if the raw output is only incrementally better.

- Higher cost is easier to justify when output is mission-critical.

- Verification has economic value in regulated workflows.

- Research quality can be worth more than raw speed.

- Enterprise budgets reward reliability over novelty.

- Consumer adoption may lag if pricing rises too quickly.
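
The "workflow cost versus workflow cost" argument can be made concrete with a small break-even calculation. The multipliers below are the rough figures the article attributes to Axios, read here as multiples of single-model cost. Everything else is a hypothetical input chosen for illustration: the per-report compute cost, the cost of one bad report, and the simple model in which expected cost is compute cost plus error rate times error cost.

```python
CRITIQUE_MULTIPLIER = 1.2  # "roughly 20% more", per the article
COUNCIL_MULTIPLIER = 2.5   # "about 2.5 times as much", per the article


def expected_cost(base_cost: float, multiplier: float,
                  error_rate: float, error_cost: float) -> float:
    """Expected cost of one report: compute spend plus expected error cost."""
    return base_cost * multiplier + error_rate * error_cost


def break_even_error_reduction(base_cost: float, multiplier: float,
                               error_cost: float) -> float:
    """Drop in error rate needed for the extra compute to pay for itself."""
    return base_cost * (multiplier - 1) / error_cost


# Hypothetical inputs: 10 units of compute per report, 1,000 units per bad report.
base, bad_report = 10.0, 1000.0
for name, mult in [("Critique", CRITIQUE_MULTIPLIER), ("Council", COUNCIL_MULTIPLIER)]:
    needed = break_even_error_reduction(base, mult, bad_report)
    print(f"{name}: error rate must fall by at least {needed:.3%}")
```

Under this simplified model, the more an error costs relative to compute, the smaller the quality gain needed to justify the premium, which is the enterprise side of the argument in numeric form.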

Microsoft’s Diversification Strategy

There is a second story here beyond product design: Microsoft is deliberately broadening its AI supply chain. The company’s long-standing partnership with OpenAI remains central, but the new Copilot features make it clear that Microsoft wants optionality. That gives it leverage, resilience, and less dependence on a single upstream lab.

Why diversification is politically useful

Microsoft also has a messaging problem to solve. For years, analysts have treated Microsoft as tightly aligned with OpenAI, which created the impression that its Copilot future rose and fell with one partner. By visibly integrating Anthropic, Microsoft can show enterprise customers that it is building a more neutral platform layer rather than a single-model wrapper.

That matters in procurement conversations. CIOs and security teams want to know that their productivity stack will not be hostage to one vendor’s roadmap, pricing, or service quality. A multi-model architecture is a practical answer to that concern, and not just a symbolic one.

The platform implication

If Microsoft can abstract model choice behind Copilot, then the model becomes less important than the system that coordinates it. That is a classic platform strategy: own the orchestration layer, and let the underlying intelligence change as the market changes. It is also a warning shot to rivals that think product differentiation can come from model scale alone.

- Microsoft gains model flexibility.

- Customers get less vendor lock-in.

- Competitive pressure shifts to orchestration quality.

- Azure and Copilot become more valuable as control points.

- The platform story becomes stronger than a single-model story.

Copilot Cowork Extends the Pattern

Microsoft did not stop at Researcher. The company also announced that Copilot Cowork is now available through its Frontier early access program, bringing a longer-horizon, task-oriented agent into the Copilot ecosystem. Cowork is designed to handle multi-step work autonomously while allowing the user to monitor progress and intervene when needed.

What Cowork adds

Cowork pushes the product from answer generation toward execution. Instead of just summarizing or researching, it can reason across tools and files, plan actions, and carry out steps with human oversight. That is much closer to agentic automation than traditional chat.

The inclusion of Claude in this ecosystem also reinforces Microsoft’s broader willingness to mix model providers depending on the task. That is important because different models can be better suited to different styles of work, and the enterprise market increasingly wants fit-for-purpose intelligence, not just the largest model on paper.

The local-versus-cloud distinction

Microsoft’s version operates in the cloud, while Anthropic’s standalone Claude Cowork product reportedly runs locally on users’ computers. That distinction matters because it affects data flow, latency, and governance. Cloud execution favors centralized control and integration; local execution can appeal to users who want more privacy or offline resilience.

- Cowork expands Copilot from research to execution.

- Human intervention remains part of the workflow.

- Claude’s presence underscores Microsoft’s multi-model approach.

- Cloud delivery favors enterprise governance.

- The local-versus-cloud split may influence buyer preferences.

Competitive Implications for OpenAI, Anthropic, and Perplexity

Microsoft’s move lands squarely in the middle of the deep research arms race. Perplexity helped define the category, and the DRACO benchmark itself emerged from that ecosystem. By integrating both OpenAI and Anthropic while publicly citing benchmark gains, Microsoft is signaling that the category is now about system design as much as raw model quality.

For OpenAI

For OpenAI, the risk is not that Microsoft is abandoning GPT. The risk is more subtle: GPT is becoming one component in a larger orchestration system rather than the unquestioned centerpiece. That can still be a major commercial win for OpenAI, but it also means the brand value of any single model may matter less inside Microsoft’s product surface.

For Anthropic

For Anthropic, the opportunity is clear. Being included in Copilot’s research and agentic workflows gives Claude a high-visibility enterprise channel and helps position it as the model of choice for verification, reasoning, and careful output. That is a powerful narrative in a market increasingly worried about AI confidence without accountability.

For Perplexity and the research niche

Perplexity helped popularize deep research and now faces a larger platform competitor willing to bundle similar capabilities into a broader productivity suite. Microsoft does not need to beat Perplexity on pure search ergonomics if it can offer acceptable research quality inside the tools customers already use every day. That is the classic distribution-beats-specialization problem.

- OpenAI remains important, but less singular.

- Anthropic gains enterprise legitimacy.

- Perplexity faces platform pressure from Microsoft bundling.

- Deep research becomes a workflow feature, not just a standalone product.

- Competitive advantage shifts to trust, governance, and integration.

Strengths and Opportunities

Microsoft’s multi-model Copilot strategy has real strengths because it aligns technical architecture with enterprise expectations. It treats AI as a managed system rather than a magic box, and that distinction may prove decisive in large organizations that care about reliability as much as innovation.

- Better trust posture through critique and verification.

- Reduced dependence on any one model provider.

- Improved research quality by checking citations and completeness.

- Stronger enterprise appeal in regulated and compliance-heavy environments.

- Platform flexibility to swap or combine models over time.

- More persuasive ROI story for high-value knowledge work.

- Clearer differentiation from consumer-first AI assistants.

Risks and Concerns

The same design choices that make Critique and Council appealing also create new risks. Multi-model systems can be more expensive, more complex, and harder to explain, and the extra layers do not automatically guarantee better judgment.

- Higher inference cost may limit usage or tighten quotas.

- Judge-model errors could misrepresent disagreement.

- Operational complexity increases as more models are orchestrated.

- Benchmark claims may not generalize to all real-world tasks.

- User confusion may rise if model roles are not clearly explained.

- Governance gaps could appear if different models behave inconsistently.

- False confidence remains a danger even with critique layers.

Looking Ahead

The next major question is whether Microsoft turns these features into a durable competitive moat or just a temporary lead. If Critique and Council consistently improve quality in real workflows, they could become the template for how enterprise AI products evolve over the next few years. If not, they may be remembered as an impressive but costly experiment.

Microsoft will also need to prove that the gains are meaningful outside benchmark settings. Real customers will care about whether the system handles messy internal documents, contradictory sources, and domain-specific nuance better than competing tools. That is where the true test begins, because enterprise trust is earned in edge cases, not demos.

- Watch for independent validation of Microsoft’s DRACO results.

- Watch for pricing and quota changes as usage scales.

- Watch for how often users choose Auto versus Council manually.

- Watch for broader rollout of Claude across Copilot features.

- Watch for whether other vendors copy the critique-and-council pattern.