Microsoft’s pivot toward “AI self-sufficiency” is no accident: it is a deliberate, well-funded strategy to rewire how the company builds, hosts and ships the generative AI capabilities that now sit at the center of Office, Windows and Azure. Mustafa Suleyman, CEO of Microsoft AI, has publicly framed that shift as a move to reduce reliance on any single external lab, even as the company preserves deep ties with OpenAI under a reworked commercial arrangement. The result is a multi‑pronged posture: continue to partner where it makes sense, buy compute and models from others when advantageous, and simultaneously build internal frontier models, custom accelerators and a new class of data centers that can run them at scale.

Background

Microsoft’s long and complex relationship with OpenAI reached a new milestone on October 28, 2025, when both companies announced a revised agreement that reshaped their commercial and strategic ties. Under the revised structure Microsoft acquired a significant ownership position in OpenAI’s reconstituted public‑benefit entity, and secured multi‑year access to OpenAI’s models and intellectual property. At the same time, the updated deal explicitly grants both parties greater freedom to pursue independent AI development outside the bilateral relationship.

That change removed a key contractual limitation that had previously restricted Microsoft’s ability to pursue very large, frontier‑scale models in some respects. Within weeks, Microsoft made two parallel moves visible: it accelerated internal model development under the MAI (Microsoft AI) brand and it began rolling out a broader multi‑model strategy across Copilot and Azure that lets customers choose where specific workloads run.

What Microsoft calls “AI self‑sufficiency” is therefore not an abrupt divorce from OpenAI; it is an engineered diversification. The company keeps OpenAI as a strategic partner while building the compute, chips, models and networking needed to be independent if the market or geopolitics require it.

Overview: What “AI self‑sufficiency” actually means

A layered definition

- Strategic independence: Microsoft aims to avoid a single‑point supplier risk by hosting and developing alternative frontier models alongside its OpenAI relationship.

- Product optimization: In‑house models can be tightly integrated and tuned for Microsoft products like Copilot, Office, Bing and Azure services.

- Operational control: Owning the stack — from silicon to data centers to models — gives Microsoft greater control over latency, privacy, compliance and cost.

- Resilience and optionality: A diversified model pool lets Microsoft route workloads to the best provider for a given task, or fall back to its own models when needed.

Why now?

The rapid expansion in enterprise dependence on generative AI, particularly for productivity, knowledge work and search, exposes Microsoft to potentially systemic supply‑chain risk if a single third‑party model provider were to face outages, policy restrictions, or regulatory limitations. The October 2025 partnership revision created the contractual room and timing for Microsoft to invest heavily in its own frontier assets without burning bridges with OpenAI.

The strategic playbook: partner, buy, build

Microsoft’s strategy unfolds across three correlated tracks.

1) Partner and orchestrate

Microsoft has made product changes that explicitly support a multi‑vendor model strategy inside flagship experiences like Copilot. Instead of hard‑wiring a single model, Copilot increasingly acts as an orchestration layer that can route requests to OpenAI models, Anthropic’s Claude family, Microsoft’s own MAI models, or other partner and open‑source models depending on policy, cost, capability and tenant admin settings.

This orchestration approach reduces vendor lock‑in for both Microsoft and enterprise customers. It also creates an explicit pathway to mix models for specific duties, for example choosing a reasoning‑optimized model for deep research tasks and a latency‑optimized model for interactive chat.
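The routing idea can be sketched in a few lines. The example below is a minimal illustration, not Microsoft’s implementation: the model names, strengths, prices and the `route` function are all hypothetical, chosen only to show how a tenant allow-list, a capability match and cost might combine into a routing decision.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str             # hypothetical identifier, not a real product SKU
    provider: str
    strengths: frozenset  # task types this model is assumed to be good at
    cost_per_1k: float    # made-up relative cost per 1,000 tokens


# Illustrative catalog; every value here is an assumption for the example.
CATALOG = [
    Model("reasoner-large", "provider-a", frozenset({"research", "coding"}), 0.060),
    Model("chat-fast", "provider-b", frozenset({"chat"}), 0.002),
    Model("inhouse-general", "in-house", frozenset({"chat", "research"}), 0.010),
]


def route(task: str, allowed_providers: set[str]) -> Model:
    """Pick the cheapest allowed model whose strengths cover the task."""
    candidates = [
        m for m in CATALOG
        if m.provider in allowed_providers and task in m.strengths
    ]
    if not candidates:
        raise LookupError(f"no allowed model can handle task {task!r}")
    return min(candidates, key=lambda m: m.cost_per_1k)
```

With this toy catalog, a tenant that allows only the `in-house` provider gets `inhouse-general` for research tasks and a `LookupError` for coding tasks, which is the kind of gap an admin policy would need to surface rather than hide.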

2) Buy compute and host partners

Microsoft is not only building; it is also buying. Large external model providers continue to need vast amounts of GPU‑based compute. Announcements this past year show Microsoft and others striking multi‑billion‑dollar compute arrangements across the industry, creating circular deals where model developers buy cloud compute while cloud providers invest in those developers.

At the same time Microsoft has broadened the set of models available through Azure’s model catalog and “Foundry” services, including Llama family weights, Mistral variants and Anthropic’s Claude options in certain Copilot surfaces. Those models may be hosted by third parties in some cases, but Microsoft’s product surfaces expose them as selectable options, a pragmatic move that preserves customer choice while the company builds out its own capacity.

3) Build in‑house frontier capability

The most visible part of the plan is Microsoft’s investment in its own frontier models under the MAI umbrella and the supporting infrastructure that will make those models practical to run at scale.

- The MAI model family is being positioned as Microsoft’s in‑house lineup for text, image and voice workloads and for deeper research scenarios. Early MAI models, including high‑quality speech and image generators and a foundation text model preview, have been integrated into Copilot and other product experiences in preview form.

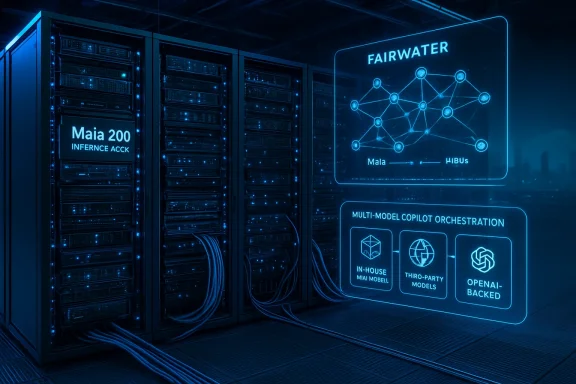

- Microsoft is building a dedicated hardware and datacenter stack, from the Maia 200 accelerator to the Fairwater network of purpose‑built AI data centers, designed to train and serve frontier‑scale models.

The infrastructure foundation: Maia 200 and Fairwater

Maia 200: Microsoft’s custom accelerator

Microsoft has publicly unveiled its own accelerator technology intended to be optimized for low‑precision tensor work common to large language model training and inference. The Maia 200 family is positioned as a dense, high‑bandwidth chip designed to reduce cross‑device communication and improve cost efficiency for both training and inference.

Key engineering goals Microsoft emphasizes include high on‑chip memory bandwidth, native support for low‑precision numeric formats used in modern models, and a memory architecture tuned to keep model weights local. Those choices target a core problem in large model workloads: the performance cost of moving weights and activations between devices.
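A rough calculation shows why low‑precision formats matter for keeping weights local. The sketch below assumes a hypothetical 70‑billion‑parameter model and counts only the weights (no activations, KV cache or optimizer state). FP16, FP8 and FP4 stand in here as examples of common bit widths; the figures are plain arithmetic, not Maia 200 specifications.

```python
def weight_footprint_gb(num_params: int, bits_per_weight: int) -> float:
    """Memory needed to hold the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


PARAMS = 70_000_000_000  # hypothetical model size, chosen for illustration

for label, bits in [("FP16", 16), ("FP8", 8), ("FP4", 4)]:
    print(f"{label}: {weight_footprint_gb(PARAMS, bits):.0f} GB")
```

Each halving of the bit width halves the weight footprint (140 GB, 70 GB and 35 GB in this example), which reduces how many devices the weights must be split across and therefore how much traffic crosses device boundaries.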

It is important to treat early chip claims with caution — vendor performance numbers are useful but must be validated under independent benchmarks and real‑world workloads. Still, Maia 200 signals that Microsoft intends to control more of the hardware stack rather than rely exclusively on third‑party accelerators.

Fairwater: the AI “superfactory” datacenter

Microsoft’s Fairwater project is a network of purpose‑built data centers optimized for large‑scale AI training and inference. The design departs from conventional hyperscale clouds by treating a Fairwater site as a single giant supercomputer, with dense GPU pods, liquid cooling and ultra‑high bandwidth networking that minimize latency within large clusters.

Fairwater sites are explicitly engineered to support training jobs that span hundreds of thousands of GPUs and to interconnect multiple sites across fiber links. Those choices make it possible to host frontier‑scale training runs without the same friction encountered on standard cloud racks.

For enterprise IT leaders, the Fairwater program changes the calculus for cloud capacity planning: Microsoft is signaling that it will host both its own model training and selected customer workloads in an architecture tailored for sustained, high‑density AI workloads.

Product implications: what this means for Copilot, Office and Azure

Copilot becomes model‑agnostic and more tightly integrated

Copilot is evolving from an experience that was, in practice, powered primarily by a small set of external models to one that can pull from a diverse model catalog. Practically, this gives administrators and developers more control over cost, data residency, compliance and performance tuning.

- Customers can opt to have certain classes of queries handled by MAI models to reduce external data flows or to achieve tighter product integration.

- For specialized tasks — coding, legal synthesis, or medical summarization — Copilot can route to the model that demonstrably performs best under controlled evaluations.

Azure AI product offering broadens

Azure’s model catalog and Foundry services now present a heterogeneous offering where customers choose among high‑performance Microsoft models, partner models and open‑weight options. Microsoft’s value proposition here is orchestration and governance: provide a single control plane for multi‑model operations, while delivering the underlying compute and networking that frontier models require.

For developers, the new reality is both liberating and more complex: you’ll be able to pick the best engine for a task, but you’ll also need to understand nuanced differences in cost, latency, behavior and compliance across model providers.

Partnerships and multi‑model sourcing: Anthropic, Meta, Mistral and open models

Microsoft’s diversification is not limited to OpenAI. The company now recognizes the practical value of hosting a variety of models and, where advantageous, forming commercial relationships with other labs.

- Anthropic’s Claude family has been made available as an option within Microsoft product surfaces for targeted use cases. In some configurations the Claude endpoints are hosted off‑cloud and routed via Anthropic’s APIs, while in other commercial constructs Anthropic has made large compute commitments tied to major cloud providers.

- Microsoft supports Meta’s Llama and community variants via Azure’s model catalogs, and has explored integrations with high‑quality open‑weight models from Mistral and others.

- The orchestration layer enables developers and admins to compose multi‑model agents that mix MAI, OpenAI, Anthropic, and open‑source engines based on task suitability.

The financial, legal and policy calculus

The investment scale

Microsoft’s investments in both compute and equity stakes are massive and long‑dated. The revised OpenAI agreement, the Fairwater buildout, and chip development reflect multi‑year capital commitments running into the tens of billions of dollars. These moves are deliberate insurance: they buy Microsoft the option value of independence and the leverage to negotiate favorable terms with partners.

IP, exclusivity and contract nuance

The October 2025 rework of the OpenAI partnership clarified Microsoft’s IP access and extended certain hosting and intellectual property rights through a defined period. But the agreement also created new independent pathways for both parties. The practical effect is bilateral: Microsoft kept long‑term access to OpenAI models while gaining the right to develop and host its own frontier models and to work with other model providers.

Legal and regulatory risk remains because governments are increasingly interested in model provenance, data flows, and concentration of AI capability. Microsoft’s multi‑model, multi‑cloud posture helps mitigate regulatory concentration risk, but it also increases contractual complexity and cross‑jurisdictional compliance workload for enterprise customers.

Risks and open questions

Microsoft’s strategy is bold and pragmatic, but it is not without risk. Here are the most important caveats for readers and IT decision makers.

- Execution risk: Building frontier models and a chip/data‑center stack is hard, time‑consuming and capital‑intensive. Early MAI models and Maia 200 will need independent validation under production conditions to demonstrate cost and performance benefits.

- Talent competition: Training, running and securing frontier models requires top AI research and engineering talent. Microsoft competes not just with OpenAI and Google but with a resurgent ecosystem of startups and research labs for that talent pool.

- Model parity and quality: Customers will judge Microsoft’s in‑house MAI models by outcomes. If those models lag in reasoning, safety, factuality or developer ergonomics, customers may prefer the incumbent third‑party models despite potential downsides.

- Operational complexity: Orchestrating multiple models across clouds, maintaining governance and ensuring consistent security and privacy controls will be a heavy operational lift for enterprise IT teams.

- Vendor relationships: Hosting partner models that run on rival clouds — for example, Anthropic workloads running on other providers — introduces “cross‑cloud” data paths. That can complicate compliance, auditing and cost allocation.

- Regulatory exposure: A company that both invests in and competes with third‑party labs faces heightened scrutiny about anti‑competitive behavior, preferential treatment, or data sharing. Microsoft will need robust firewalls, auditability and transparency to navigate this landscape.

What IT leaders and administrators should watch

- Model selection controls: Look for admin features that let you define which models are allowed for which workloads, and whether routing decisions respect data residency and compliance policies.

- Cost reporting and allocation: Multi‑model orchestration can lead to variable charges across providers; ensure your cost‑management tools reconcile cross‑cloud and in‑house usage accurately.

- Latency and SLA differences: Different models and hosting locations will have materially different latency profiles. For interactive UX, latency matters.

- Data governance and logs: Verify where prompts and outputs are logged and stored, and whether cross‑cloud model calls leave audit trails that meet your compliance needs.

- Fallback and continuity planning: Build policies and automation to fall back gracefully between models in production when availability or cost thresholds are breached.
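The model-selection, cost and fallback items in this checklist can be made concrete with a small sketch. It assumes a hypothetical per-workload allow-list (`POLICY`) and a caller-supplied `call_model` function; none of these names correspond to a real Microsoft or Azure API. The function tries allowed models in priority order, moves on when one fails or answers too slowly, and tallies spend per provider so that costs can be reconciled later.

```python
import time
from collections import defaultdict
from typing import Callable

# Hypothetical policy: workload -> ordered (provider, model) pairs allowed for it.
POLICY = {
    "interactive-chat": [("provider-a", "chat-fast"), ("in-house", "general")],
    "regulated-docs": [("in-house", "general")],  # e.g. keep data in one boundary
}

# Running total of spend per provider, for later cost allocation.
spend_by_provider: dict[str, float] = defaultdict(float)


def run_with_fallback(
    workload: str,
    prompt: str,
    call_model: Callable[[str, str, str], tuple[str, float]],
    max_latency_s: float = 2.0,
) -> tuple[str, str]:
    """Return (model, reply) from the first allowed model that succeeds in time.

    call_model(provider, model, prompt) is supplied by the caller, returns
    (reply_text, cost) and may raise on an outage.
    """
    errors = []
    for provider, model in POLICY.get(workload, []):
        start = time.monotonic()
        try:
            reply, cost = call_model(provider, model, prompt)
        except Exception as exc:  # outage, throttling, and similar failures
            errors.append(f"{model}: {exc}")
            continue
        spend_by_provider[provider] += cost  # a slow call is still billed
        # Latency is checked after the call returns; this sketch does not
        # cancel a slow request in flight.
        if time.monotonic() - start > max_latency_s:
            errors.append(f"{model}: exceeded {max_latency_s}s")
            continue
        return model, reply
    raise RuntimeError(f"all models failed for {workload!r}: {errors}")
```

A workload with no entry in `POLICY` fails closed with a `RuntimeError` rather than falling through to an unapproved model, which is usually the safer default when data residency or compliance rules apply.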

Critical analysis: strengths, limits and strategic prudence

Microsoft’s pivot to AI self‑sufficiency is strategically sensible and technically grounded. The company’s scale across cloud, productivity software and enterprise relationships gives it a rare opportunity to internalize advanced AI capabilities and deploy them broadly.

Strengths:

- Option value: Building internal models and infrastructure gives Microsoft flexibility and insurance against supplier shock or adversarial policy changes.

- Integration advantage: In‑house models can be product‑optimized for Office, Windows and enterprise services in ways that external models cannot.

- Infrastructure moat: Fairwater and custom accelerators create a technical moat that competitors without equivalent datacenter footprints will find hard to match quickly.

Limits:

- No guaranteed competency lead: Owning the stack does not automatically produce world‑leading models. Model quality depends on algorithms, training data, evaluation rigor and safety processes, all areas where OpenAI, Google and Anthropic also compete fiercely.

- Economic tradeoffs: Even with custom chips and datacenters, training and operating frontier models remains expensive. Microsoft must balance performance gains against capital and operating costs.

- Regulatory complexity: Operating simultaneously as a customer of, hosting provider for, and competitor to model vendors introduces conflicts and regulatory exposure that will need ongoing management.

The broader industry implications

Microsoft’s moves amplify several industry trends. First, hyperscale cloud providers are transitioning from general compute utility players to vertically integrated AI platform vendors. Second, multi‑model orchestration becomes a differentiator: the company that can manage governance, cost and quality across multiple engines gains a strong enterprise offering. Third, there is an acceleration of chip and datacenter competition: expectation of future model scale is forcing cloud providers to invest in both silicon and specialized facilities.

For regulators and industry observers, Microsoft’s posture also raises governance questions about concentration of compute, ownership of model IP and the transparency of safety and audit practices when a single company both powers and competes with leading AI labs.

Conclusion

Microsoft’s campaign for “AI self‑sufficiency” is a pragmatic, capital‑heavy strategy designed to preserve product continuity, reduce single‑supplier risk and enable deeper product‑level optimization. It does not sever the company’s relationship with OpenAI; instead, it rewrites the playbook into one of orchestration, optionality and vertical integration.

For enterprises and IT professionals, the takeaway is clear: prepare for a multi‑model world where governance, cost control and performance tuning move to the fore. For Microsoft, the tightrope is equally clear: deliver in‑house models and infrastructure that meet or exceed external alternatives while managing the legal, regulatory and operational complexity that comes with being both a platform provider and an active competitor.

The coming 12–24 months will determine whether Microsoft’s investments in chips, datacenters and models translate into durable product advantages or whether the company simply becomes one more capable but costly supplier in an increasingly crowded AI marketplace. Either way, Microsoft’s bet on self‑sufficiency has already reshaped how enterprises evaluate vendor risk and how AI services will be purchased, deployed and governed at scale.

Source: Neowin Microsoft aims to reduce dependency on OpenAI, as it pushes for "AI self-sufficiency"