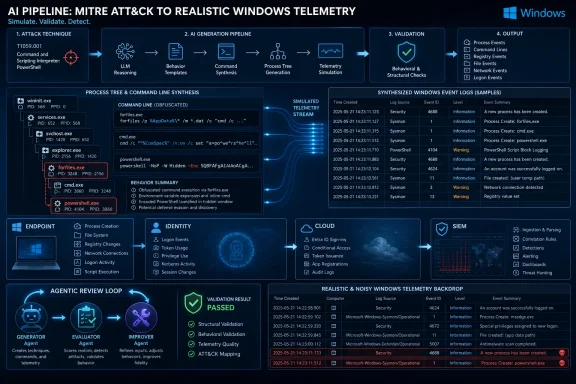

Microsoft Defender Security Research on May 12, 2026, described an AI-assisted research pipeline that turns attacker tactics, techniques, procedures, and concrete actions into realistic synthetic security logs for use in detection engineering across Defender-style endpoint, identity, cloud, and SIEM workflows. The thesis is simple but consequential: the industry’s bottleneck is no longer just finding threats, but manufacturing enough trustworthy telemetry to test whether defenses can see them. Microsoft is not claiming synthetic logs replace labs or real incidents. It is arguing that the slowest part of blue-team work can be accelerated before an adversary ever touches production.

The more specific the detection, the more environment matters. A broad analytic may only need to know that

Synthetic logs can help map that space, but they should not flatten it. The best implementations will let defenders specify environment constraints, telemetry source, logging policy, and platform assumptions. Otherwise, the generated logs will drift toward a generic Windows that exists mostly in product demos.

That loop is the heart of modern detection engineering. Threat intelligence says an actor abused a living-off-the-land binary. Detection engineering asks what that looks like in logs. Telemetry testing shows whether existing rules would catch it. Response automation determines whether the alert can be triaged, correlated, and acted upon quickly enough to matter.

Historically, those steps have been connected by human labor and institutional memory. A senior analyst reads the report, remembers a similar intrusion, searches old cases, asks a lab team for reproduction, and writes or adjusts a detection. That process works, but it does not scale gracefully.

AI-assisted synthetic logs could compress the loop. A new report could become candidate TTP mappings, candidate telemetry, candidate detections, and candidate tests faster than a traditional workflow. Human experts would still approve, tune, and deploy, but they would start from a richer set of artifacts.

The danger is that organizations mistake acceleration for assurance. Faster candidate detections are not the same as validated detections. Synthetic telemetry is a drafting tool, not a courtroom record. Its value depends on whether it drives better human and machine review, not whether it produces impressive tables.

Synthetic attack logs are the easier half of the equation. The harder half is realistic benign background. A detection that fires on a synthetic malicious command line may still be useless if legitimate administrative tools produce similar patterns every hour. In Windows environments, this is not hypothetical. Administrators, installers, management agents, backup systems, and scripts regularly do things that look strange in isolation.

Microsoft’s RLVR discussion nods at this problem when it says generated logs need to become closer to real event logs, especially in details like service names and command-line arguments. But the bigger challenge is distribution. Real telemetry has weird frequency patterns, seasonal admin behavior, failed tasks, stale software, legacy scripts, and local conventions that no generic generator will perfectly know.

For Defender customers, the practical value will come when synthetic attack traces can be tested against their own benign baselines. That is where privacy-preserving generation could shine. An organization might not want to share production logs with a vendor or community, but it may be willing to generate synthetic malicious overlays that reflect its environment’s structure.

This is also where AI can make mistakes with operational consequences. If synthetic datasets underrepresent benign lookalikes, rules will be too aggressive. If they overfit to known attack examples, rules will be too narrow. If they normalize away messy real-world variation, they will produce clean tests and dirty deployments.
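One way to make the benign-lookalike problem visible is to score a rule against both a synthetic attack set and a benign baseline. The following is a minimal sketch under stated assumptions: the event dictionaries, the `rule_rates` helper, and the `too_broad` rule are hypothetical, and the process names in the baseline are placeholders rather than data from any real environment.

```python
def rule_rates(rule, malicious, benign):
    """Return (detection rate on malicious events, false-positive rate on benign events)."""
    detected = sum(1 for event in malicious if rule(event))
    false_alarms = sum(1 for event in benign if rule(event))
    detection_rate = detected / len(malicious) if malicious else 0.0
    false_positive_rate = false_alarms / len(benign) if benign else 0.0
    return detection_rate, false_positive_rate


def too_broad(event):
    """Toy rule that fires on any command shell, whoever launched it."""
    return event["image"] == "cmd.exe"


# Placeholder events; the keys are illustrative, not a real logging schema.
synthetic_attack = [{"image": "cmd.exe", "parent_image": "forfiles.exe"}]
benign_baseline = [
    {"image": "cmd.exe", "parent_image": "backup-agent.exe"},
    {"image": "cmd.exe", "parent_image": "installer.exe"},
    {"image": "chrome.exe", "parent_image": "explorer.exe"},
    {"image": "svchost.exe", "parent_image": "services.exe"},
]

print(rule_rates(too_broad, synthetic_attack, benign_baseline))  # (1.0, 0.5)
```

A rule that looks perfect on the synthetic attack alone fires on half of this toy baseline, which is the "clean tests and dirty deployments" failure in miniature.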

They should not be the final certification that a detection is production-ready. Final validation still needs real telemetry, lab execution, adversary emulation, controlled rollout, and monitoring for false positives. Microsoft’s own post is careful to say synthetic logs complement lab simulations rather than replace them.

That nuance matters because enterprise security buyers are exhausted by automation claims. Every new AI feature promises speed. The real question is where the speed is safe. In detection engineering, early-stage ideation and coverage expansion are safe places to accelerate. Blind production deployment is not.

A mature workflow would use synthetic logs to generate candidate coverage, then test those candidates against known-good benign data, then validate high-value scenarios in a lab, then deploy with staged monitoring. That may sound less glamorous than autonomous security, but it is how real defenders avoid breaking their SOC.

The most concrete implications are already visible:

Microsoft’s synthetic log research is not the end of lab work, and it is not a substitute for the messy authority of real incidents. It is a sign that defensive engineering is moving toward a faster, more iterative model: intelligence becomes behavior, behavior becomes telemetry, telemetry becomes detection, and detection is tested before the attacker supplies the lesson in production. If Microsoft and the broader security community can keep that loop grounded in real Windows behavior rather than AI-generated neatness, synthetic logs may become one of the more practical uses of AI in the SOC — not because they replace reality, but because they let defenders rehearse for more of it.

Source: Microsoft, “Accelerating detection engineering using AI-assisted synthetic attack logs generation,” Microsoft Security Blog

Microsoft Is Trying to Industrialize the Messiest Part of Detection Engineering


Security vendors love to talk about “signals,” but detection engineering is built on something more mundane: logs that actually resemble what attackers do. A process tree, a command line, a parent-child relationship, a strange service name, a staged script interpreter, a DNS lookup at the wrong moment: these are the raw materials from which rules, hunts, alerts, and automated response flows are built.

The problem is that good malicious telemetry is scarce by design. Mature organizations spend enormous effort preventing attacks, containing intrusions, and removing adversaries before they complete their playbooks. That is good for customers and bad for training data. The more successful a security program becomes, the less clean, complete, and repeatable malicious activity it may have available for future detection development.

Microsoft’s proposal is to use AI to bridge that gap. Instead of waiting for a real intrusion or repeatedly executing attacks in a controlled lab, researchers feed a model a high-level adversary behavior — mapped to MITRE ATT&CK tactics and techniques — plus a concrete attacker action. The system then generates structured log entries that are meant to be semantically faithful to what the activity would produce.

That sounds almost obvious in the age of generative AI, but in security operations the bar is punishingly high. A synthetic phishing email can be useful if it feels plausible. A synthetic event log has to get the boring details right: executable names, parent processes, command-line arguments, timestamps, ordering, and the relationships between one step and the next.
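Some of those boring details can be checked mechanically before an analyst reads a single event. The following is a minimal sketch, not Microsoft's tooling: the `ProcessEvent` fields and the `chain_problems` helper are hypothetical names chosen for illustration, and the check covers only timestamp ordering and parent-child linkage.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class ProcessEvent:
    """One process-creation record. Field names are illustrative, not a real schema."""
    timestamp: datetime
    pid: int
    parent_pid: int
    image: str


def chain_problems(events):
    """Return a list of consistency problems found in a synthetic process chain."""
    problems = []
    seen = {}  # pid -> start time of every process observed so far
    last_time = None
    for index, event in enumerate(events):
        if last_time is not None and event.timestamp < last_time:
            problems.append(f"{event.image}: timestamp goes backwards")
        # The first event may have a parent that predates the capture window.
        if index > 0 and event.parent_pid not in seen:
            problems.append(f"{event.image}: parent pid {event.parent_pid} never appears")
        seen[event.pid] = event.timestamp
        last_time = event.timestamp
    return problems


start = datetime(2026, 5, 12, 9, 0, 0)
consistent = [
    ProcessEvent(start, 100, 4, "cmd.exe"),
    ProcessEvent(start + timedelta(seconds=1), 200, 100, "forfiles.exe"),
]
orphaned = [
    ProcessEvent(start, 100, 4, "cmd.exe"),
    ProcessEvent(start - timedelta(seconds=5), 200, 999, "forfiles.exe"),
]

print(chain_problems(consistent))  # no problems expected
print(chain_problems(orphaned))    # flags both the ordering and the missing parent
```

A check like this cannot say whether the events reflect the technique, only whether they are internally coherent, so it works as a cheap first filter rather than a verdict.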

The Scarcity of Attack Logs Has Become a Strategic Weakness

Detection engineering has always lived with an uncomfortable asymmetry. Attackers can experiment privately, iterate quickly, and discard failures. Defenders need telemetry, labels, ground truth, and confidence before they push a new rule into an environment where false positives can swamp an operations team.

Real-world telemetry is dominated by benign repetition. Workstations launch browsers, Office apps, updaters, shells, device drivers, endpoint agents, and background services all day long. Malicious behavior is rare, and when it appears, it is often partial, noisy, or wrapped inside the specific quirks of one environment.

Collecting and labeling that activity is expensive. Reconstructing an attack chain from logs requires analysts to determine which events matter, which are incidental, and which belong to surrounding noise. Even when a defender has a confirmed intrusion, legal, privacy, and customer confidentiality concerns may limit how widely the data can be shared.

Lab simulations help, but they are not free. Red teams and threat researchers can reproduce techniques in controlled environments, yet each scenario requires setup, instrumentation, execution, cleanup, validation, and maintenance. That makes labs excellent for high-confidence validation but awkward as the first stop for every new detection idea.

The result is a development cadence that looks too slow for the current threat landscape. Microsoft has spent much of 2026 warning that AI is compressing attacker workflows, from reconnaissance and lure generation to vulnerability discovery and operational planning. If attackers are gaining speed from automation, defenders cannot afford a telemetry supply chain that still depends entirely on handcrafted exercises.

Synthetic Logs Are Not Fake Security If They Preserve the Right Truths

The phrase synthetic security logs invites skepticism, and rightly so. Bad synthetic data is worse than no data because it can train teams and systems to recognize artifacts of the generator rather than artifacts of the adversary. A model that invents neat, well-formed, idealized attack traces may make dashboards look better while making defenses more brittle.

Microsoft’s framing is more careful. The goal is not to reproduce real customer logs verbatim. It is to generate logs that are realistic and semantically correct enough to exercise detections in meaningful ways. In other words, the synthetic event does not have to match a real event byte for byte, but it must preserve the operational truth of the attacker behavior.

The example Microsoft gives is revealing. For MITRE ATT&CK technique T1202, Indirect Command Execution, the attacker action involves use of forfiles, variable expansion through %PROGRAMFILES, hex characters, obfuscated commands such as echo and exec, and output passed into Python for execution. A useful synthetic log for this scenario must capture not just that forfiles.exe appeared, but why it appeared, what it launched, how the command line was shaped, and how defenders would reasonably detect the behavior.

That is the difference between generating “malware-ish” text and generating telemetry. Detection content is often sensitive to small differences. A missing parent process can break a correlation. A plausible but wrong path can make a rule appear to work in testing and fail in production. An event sequence in the wrong order can destroy the analytic value of the dataset.

Microsoft’s bet is that AI can be made useful here precisely because attacker behavior has structure. TTPs are not random prose. They map to known techniques, expected processes, common abuse patterns, and observable side effects. If a model can translate that structure into telemetry while staying within the constraints of real logging schemas, it becomes a force multiplier for detection teams.
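To see why a missing parent matters, consider a toy correlation for the forfiles scenario described above. This is a sketch under stated assumptions: the event dictionaries and their keys (`image`, `parent_image`) are hypothetical, the shape of the process tree is assumed for illustration, and the rule is deliberately simplistic. None of it comes from Microsoft's post or from a real Defender schema.

```python
import ntpath


def basename(path):
    """Lower-cased file name of a Windows path, regardless of the host OS."""
    return ntpath.basename(path).lower()


def spawned_by_forfiles(event):
    """Toy rule: fire when the recorded parent process is forfiles.exe."""
    parent = event.get("parent_image")
    return parent is not None and basename(parent) == "forfiles.exe"


# Two synthetic records for the same child process; values are placeholders.
complete = {"image": "python.exe", "parent_image": r"C:\Windows\System32\forfiles.exe"}
missing_parent = {"image": "python.exe"}

print(spawned_by_forfiles(complete))        # True
print(spawned_by_forfiles(missing_parent))  # False: the correlation silently breaks
```

The second record is not obviously wrong on its own, which is the point: a generator that drops one field can make a working rule look broken, or a broken rule look untested.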

The First Attempt Is Prompting, and Prompting Alone Is Not Enough

The most straightforward approach is prompt engineering. Give the model the scenario, tell it to think like a cybersecurity researcher, ask it to use MITRE ATT&CK knowledge, and have it produce a coherent set of log entries. Microsoft describes this as a structured, multi-stage dialogue that includes prompting, iterative generation, and evaluation by an independent LLM-as-a-judge.

That baseline matters because it reflects how many security teams are already experimenting with generative AI. Analysts paste a threat report into a model, ask for relevant telemetry, then turn the output into a Sigma rule, KQL query, or detection hypothesis. For simple cases, this can be useful. It is also dangerously easy to overestimate.

Prompt-only workflows tend to degrade when the scenario becomes multi-stage. A single command line is one thing; a chain involving staging, execution, persistence, credential access, lateral movement, and cleanup is another. The model has to maintain state across events, preserve causal relationships, and avoid quietly filling gaps with generic security-sounding activity.

That is why Microsoft’s research treats prompting as a baseline rather than the destination. It is the fast sketch, not the engineering drawing. It can produce a starting point, but complex detection work needs a mechanism for critique, correction, and refinement.

The important lesson for defenders is not that prompts are useless. It is that prompting shifts the burden from typing to verification. If a model produces ten synthetic events, the analyst still needs to know whether those events reflect the technique, whether the fields are plausible, and whether the sequence would actually trigger the intended logic.

Agentic Workflows Bring Peer Review to the Generator

Microsoft’s second approach is more interesting because it mirrors how competent detection engineering teams already work. Instead of relying on one model response, the workflow splits responsibilities across three agents: a generator, an evaluator, and an improver. The generator produces the first version of the logs, the evaluator critiques them, and the improver proposes targeted fixes.

This is not magic, and the word agentic has been abused across the AI industry. But the design is sensible. Detection engineering is an iterative discipline. Analysts write a hypothesis, test it against telemetry, discover missing context, adjust logic, and repeat. Microsoft is trying to encode that loop into the generation process itself.

The advantage is especially clear in attack chains. A generator may produce the right child process but miss the parent. An evaluator can flag that gap. An improver can repair the sequence so the process tree makes sense. Over multiple turns, the workflow can converge on logs that are more complete and more faithful than a single-shot prompt.
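The control flow of such a loop is simple even though the agents are not. The sketch below shows only that control flow, assuming rule-based stand-ins where Microsoft's workflow uses language models: `generate`, `evaluate`, `improve`, and `refine` are hypothetical names, and the improver here merely marks the gap instead of filling it with real content.

```python
EXPECTED_FIELDS = ("image", "parent_image", "command_line")


def generate():
    """Stand-in generator: returns a draft event that is missing its parent."""
    return [{"image": "forfiles.exe", "command_line": "<elided>"}]


def evaluate(logs):
    """Stand-in evaluator: list (event index, missing field) pairs."""
    return [(i, f) for i, event in enumerate(logs) for f in EXPECTED_FIELDS if f not in event]


def improve(logs, issues):
    """Stand-in improver: fill each flagged field with a marker for human review."""
    fixed = [dict(event) for event in logs]
    for index, field in issues:
        fixed[index][field] = "<needs-review>"
    return fixed


def refine(max_turns=3):
    """Run generate -> evaluate -> improve until the evaluator has no complaints."""
    logs = generate()
    for turn in range(max_turns):
        issues = evaluate(logs)
        if not issues:
            return logs, turn
        logs = improve(logs, issues)
    return logs, max_turns


final_logs, turns_used = refine()
print(turns_used, final_logs)
```

Capping the number of turns is a design choice worth keeping in any real version: a loop that never satisfies its evaluator should surface the remaining issues to a human instead of iterating indefinitely.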

Microsoft says this agentic collaboration produced dramatic recall improvements across its evaluated datasets. That is the kind of claim that deserves both attention and caution. Recall is important because it measures whether the model generated semantically relevant log instances expected for a given attack scenario. But recall alone does not tell the whole story; detection teams also care about precision, false positives, environmental drift, and whether the generated activity teaches a rule to depend on artifacts that will not exist elsewhere.
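The difference between recall and precision is easy to show with a small calculation. This is an illustrative sketch only: it assumes events have already been reduced to comparable (parent, child) pairs and uses exact set matching, whereas Microsoft describes an LLM judge doing flexible matching. The process pairs are placeholders.

```python
def recall_and_precision(generated, expected):
    """Compare two collections of normalized event keys, e.g. (parent, child) pairs."""
    generated, expected = set(generated), set(expected)
    matched = generated & expected
    recall = len(matched) / len(expected) if expected else 0.0
    precision = len(matched) / len(generated) if generated else 0.0
    return recall, precision


expected = {("cmd.exe", "forfiles.exe"), ("forfiles.exe", "cmd.exe"),
            ("cmd.exe", "python.exe"), ("services.exe", "svchost.exe")}
generated = {("cmd.exe", "forfiles.exe"), ("forfiles.exe", "cmd.exe"),
             ("cmd.exe", "python.exe"), ("explorer.exe", "notepad.exe"),
             ("cmd.exe", "whoami.exe")}

recall, precision = recall_and_precision(generated, expected)
print(f"recall={recall:.2f} precision={precision:.2f}")  # recall=0.75 precision=0.60
```

Here the generator recovers three of the four expected events, but two of its five events have no counterpart in the ground truth. A recall figure alone would hide that second number.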

Still, the direction is right. If AI is going to be useful in security engineering, it should not merely produce output. It should participate in a review loop where its work is challenged against explicit expectations.

Reinforcement Learning Is the Ambitious Part, and the Data Problem Comes Back

The third approach in Microsoft’s research is reinforcement learning with verifiable rewards, or RLVR. This is where the work becomes more ambitious and, frankly, more difficult. The agentic workflow may generate semantically correct logs, but Microsoft acknowledges that those logs can still differ noticeably from real event logs in process paths, command-line arguments, service names, and other low-level details.

That distinction matters. A log can be conceptually right and operationally insufficient. In the early stage of detection design, conceptual fidelity may be enough. For testing detection efficacy against production-like conditions, the details become the point.

RLVR tries to tighten that gap by comparing generated logs against ground-truth logs and awarding partial rewards for semantic alignment while penalizing exact mismatches. The judge also produces reasoning, which matters because security teams need to audit why a generated sample was considered good or bad. A black-box reward system would be a poor fit for a field already drowning in opaque automation.
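A reward of this kind can be pictured as a per-field score averaged over an event. The sketch below is one possible reading, not Microsoft's reward function: the numeric weights (1.0, 0.5, -0.5), the field names, and the rule-based notion of semantic equality are all assumptions made for illustration, and the real judge is described as an LLM that also emits reasoning.

```python
import ntpath

FIELDS = ("image", "parent_image", "command_line")


def semantically_equal(field, generated, truth):
    """Loose comparison: same file name for paths, same tokens for command lines."""
    if field in ("image", "parent_image"):
        return ntpath.basename(generated).lower() == ntpath.basename(truth).lower()
    return generated.lower().split() == truth.lower().split()


def field_reward(field, generated, truth):
    if generated == truth:
        return 1.0   # exact agreement with ground truth
    if semantically_equal(field, generated, truth):
        return 0.5   # partial credit: right meaning, wrong surface form
    return -0.5      # penalty: the field disagrees with ground truth


def reward(generated, truth):
    """Average per-field reward over the fields being judged."""
    scores = [field_reward(f, generated.get(f, ""), truth.get(f, "")) for f in FIELDS]
    return sum(scores) / len(scores)


# Placeholder records; the command lines are deliberately elided.
truth = {"image": r"D:\Windows\System32\forfiles.exe",
         "parent_image": r"D:\Windows\System32\cmd.exe",
         "command_line": "<ground-truth command line>"}
candidate = {"image": "forfiles.exe",
             "parent_image": r"D:\Windows\System32\cmd.exe",
             "command_line": "<different command line>"}

print(round(reward(candidate, truth), 3))  # 0.333
```

The candidate earns partial credit for the bare file name, full credit for the exact parent path, and a penalty for the command line, which is the pressure toward low-level fidelity that the paragraph above describes.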

The irony is that this advanced approach runs straight into the original constraint: labeled data. Reinforcement learning needs enough examples to teach effective policies. Microsoft says it used augmentation techniques such as paraphrasing attack narratives and perturbing parameters to scale from hundreds to thousands of training examples. That helps, but it does not eliminate the dependency.
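Parameter perturbation, the simpler of the two augmentation techniques mentioned, can be sketched in a few lines. This is a hypothetical illustration: the `perturb` helper, the example keys, and the substituted values are assumptions, and paraphrasing the narrative (which would need a language model) is not shown.

```python
from itertools import product


def perturb(example, choices):
    """Yield copies of a labeled example with every combination of parameter values."""
    keys = sorted(choices)
    for values in product(*(choices[key] for key in keys)):
        variant = dict(example)
        variant.update(zip(keys, values))
        yield variant


# The label (technique) stays fixed; only environment-style parameters vary.
seed = {"technique": "T1202", "narrative": "<attack narrative>", "drive": "C:", "user": "alice"}
variants = list(perturb(seed, {"drive": ["C:", "D:"], "user": ["alice", "bob", "svc-backup"]}))

print(len(variants))  # 6
```

Every variant inherits the seed's label, which is why augmentation stretches a labeled set without removing the need for one.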

This is the core tension in the research. AI-assisted synthetic telemetry is meant to reduce reliance on scarce real logs, but the most precise version of the technique still benefits from scarce, labeled ground truth. The practical answer may be tiered adoption. Use prompt and agentic generation for breadth, hypothesis formation, and early detection coverage. Use lab simulations and real logs for final validation, tuning, and high-risk production decisions.

The Evaluation Choices Show Microsoft Knows This Is About Generalization

Microsoft evaluated the approaches against three kinds of datasets: Goal-Driven campaigns created through repeatable attack simulations by threat researchers, the open-source Security Datasets Project, and ATLASv2, which includes Windows Security Auditing logs, Sysmon logs, Firefox logs, and DNS telemetry across two Windows VMs with multi-stage attack scenarios and realistic noise.

That mix is important. A model that performs well only on one internal dataset may simply be learning the house style of one lab. External datasets introduce different logging assumptions, noise patterns, and attack layouts. ATLASv2 is especially relevant because Windows defenders rarely get clean single-host stories; they get cross-host behavior, browser artifacts, DNS traces, and the operational clutter of real systems.

Microsoft says its LLM-as-a-judge evaluated generated logs using flexible matching around new process name, parent process name, and command-line semantics. That is a defensible choice for process-centric attack telemetry. The example that a synthetic forfiles.exe can match a ground-truth full path such as D:\Windows\System32\forfiles.exe shows the evaluation is not demanding brittle string equality where semantic equivalence is enough.

But this also highlights where the method could become controversial. An LLM judge is not the same as an objective parser, and flexible matching can hide weaknesses if the judge is too generous. In production detection engineering, a malformed path, missing switch, or wrong parent can be the difference between a useful analytic and a false sense of coverage.

The best reading of Microsoft’s results is not that AI can now replace expert validation. It is that AI can generate enough plausible, structured material to move more detection ideas into the testable stage. For teams stuck waiting on lab time or clean samples, that is a meaningful shift.
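The trade-off between flexible and strict matching discussed above can be made concrete with two small comparisons. This is a rule-based sketch, not the LLM judge Microsoft describes: `same_process` and `same_path` are hypothetical helpers, and the `D:\Temp` path is an invented counterexample.

```python
import ntpath


def same_process(generated, truth):
    """Flexible match: compare only the file name, case-insensitively."""
    return ntpath.basename(generated).lower() == ntpath.basename(truth).lower()


def same_path(generated, truth):
    """Strict match: compare the normalized full path, case-insensitively."""
    return ntpath.normpath(generated).lower() == ntpath.normpath(truth).lower()


truth = r"D:\Windows\System32\forfiles.exe"

print(same_process("forfiles.exe", truth))           # True: the case the post describes
print(same_process(r"D:\Temp\forfiles.exe", truth))  # True: a generous match hides the odd directory
print(same_path(r"D:\Temp\forfiles.exe", truth))     # False: strict comparison catches it
```

Neither function is right in general. Flexible matching suits early coverage questions, while a rule that depends on the binary's location needs the strict form.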

Defender Customers Should Read This as a Platform Signal, Not Just a Research Note

Microsoft frames the work as important for Defender customers because it can help accelerate detection rules and AI-based automation while preserving privacy and reducing operational overhead. That language is vendor positioning, but it also reflects a genuine platform problem. Defender is only as valuable as its ability to recognize attacker behavior across endpoint, identity, email, cloud apps, and workloads.

Synthetic attack logs could improve several parts of that ecosystem. Microsoft’s own researchers can use them to test coverage against rare or emerging threats. Customers could eventually use similar techniques to validate custom detections in Microsoft Sentinel or Defender XDR without staging every attack by hand. Managed detection teams could use synthetic traces to benchmark coverage across customer environments without exposing sensitive production logs.

The privacy angle is not incidental. Security telemetry often contains usernames, hostnames, file paths, internal domain names, IP addresses, application names, and operational clues. Sharing real logs outside a narrow circle can be risky. Synthetic logs, if generated well, offer a safer way to collaborate, train, and benchmark without handing over a map of the environment.

That does not make synthetic telemetry automatically safe. A model trained or prompted on sensitive logs can still leak patterns if governance is poor. Generated attack traces can also become dual-use material if they describe command lines and process chains too concretely. Microsoft’s research sits in the familiar AI security paradox: the same abstraction that helps defenders can also lower friction for adversaries if released carelessly.

Windows Telemetry Is Both the Opportunity and the Trap

For Windows defenders, the appeal is obvious. Windows has rich eventing, mature Sysmon deployments in many advanced environments, Defender telemetry, PowerShell logs, authentication events, service control manager records, scheduled task traces, and a long history of adversaries abusing native binaries. There is a huge surface area for synthetic generation to cover.

There is also a huge surface area for getting things subtly wrong. Windows environments differ by version, policy, logging configuration, EDR coverage, language, installation path, domain structure, and administrative practice. A process that looks suspicious on one server may be routine on another. A command line that appears in a lab may never appear in an enterprise with hardened PowerShell logging and application control.

This is where Microsoft’s emphasis on semantically meaningful logs is both necessary and insufficient. Semantics tell you the attacker used indirect command execution. Operational fidelity tells you whether the generated event looks like it came from Server 2019, Windows 11 24H2, a domain controller, a developer workstation, or a kiosk running a locked-down image.

The more specific the detection, the more environment matters. A broad analytic may only need to know that forfiles.exe spawned a script interpreter. A production-grade rule may need to handle path variations, renamed interpreters, localized directories, endpoint agent normalization, and parent processes that differ across management tools.

Synthetic logs can help map that space, but they should not flatten it. The best implementations will let defenders specify environment constraints, telemetry source, logging policy, and platform assumptions. Otherwise, the generated logs will drift toward a generic Windows that exists mostly in product demos.
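The gap between the broad analytic and the production-grade rule is easier to see in code. The Python sketch below implements only the broad version: forfiles.exe as the parent, a script interpreter as the child. The event keys `parent_image` and `image`, and the interpreter list, are illustrative assumptions and do not come from Microsoft's post or any specific product schema.

```python
import ntpath

# Illustrative, non-exhaustive list of interpreters; an assumption, not a vendor list.
SCRIPT_INTERPRETERS = {
    "cmd.exe", "powershell.exe", "pwsh.exe",
    "wscript.exe", "cscript.exe", "mshta.exe",
}


def image_name(path: str) -> str:
    """Lowercase file name of a Windows image path, independent of host OS."""
    return ntpath.basename(path.strip().strip('"')).lower()


def forfiles_spawned_interpreter(event: dict) -> bool:
    """Broad analytic: parent is forfiles.exe and the child is a known interpreter.

    'parent_image' and 'image' are hypothetical field names.
    """
    parent = image_name(event.get("parent_image", ""))
    child = image_name(event.get("image", ""))
    return parent == "forfiles.exe" and child in SCRIPT_INTERPRETERS
```

Matching on file name handles drive and directory variation, but a renamed interpreter passes straight through. Closing that gap needs signals such as the original file name from the binary's metadata, and whether a given telemetry source exposes that is exactly the kind of environment detail a generic generator will not know.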

This Is Really About Closing the Loop Between Threat Intelligence and Detection

Microsoft’s May 2026 post fits into a broader pattern in its security messaging this year. In January, Microsoft described AI-assisted workflows for turning threat reports into detection insights. In April, it argued that AI is accelerating both vulnerability discovery and defensive response. The synthetic log research is the next logical step: once AI can extract TTPs and suggest detections, it needs telemetry to test them.

That loop is the heart of modern detection engineering. Threat intelligence says an actor abused a living-off-the-land binary. Detection engineering asks what that looks like in logs. Telemetry testing shows whether existing rules would catch it. Response automation determines whether the alert can be triaged, correlated, and acted upon quickly enough to matter.

Historically, those steps have been connected by human labor and institutional memory. A senior analyst reads the report, remembers a similar intrusion, searches old cases, asks a lab team for reproduction, and writes or adjusts a detection. That process works, but it does not scale gracefully.

AI-assisted synthetic logs could compress the loop. A new report could become candidate TTP mappings, candidate telemetry, candidate detections, and candidate tests faster than a traditional workflow. Human experts would still approve, tune, and deploy, but they would start from a richer set of artifacts.

The danger is that organizations mistake acceleration for assurance. Faster candidate detections are not the same as validated detections. Synthetic telemetry is a drafting tool, not a courtroom record. Its value depends on whether it drives better human and machine review, not whether it produces impressive tables.

The False-Positive Problem Still Owns the Last Mile

Detection engineering is not just about catching more bad things. It is about catching bad things without flooding analysts with benign activity. That is why Microsoft’s note that effective detection engineering requires distinguishing benign from malicious behavior is so important.

Synthetic attack logs are the easier half of the equation. The harder half is realistic benign background. A detection that fires on a synthetic malicious command line may still be useless if legitimate administrative tools produce similar patterns every hour. In Windows environments, this is not hypothetical. Administrators, installers, management agents, backup systems, and scripts regularly do things that look strange in isolation.

Microsoft’s RLVR discussion nods at this problem when it says generated logs need to become closer to real event logs, especially in details like service names and command-line arguments. But the bigger challenge is distribution. Real telemetry has weird frequency patterns, seasonal admin behavior, failed tasks, stale software, legacy scripts, and local conventions that no generic generator will perfectly know.

For Defender customers, the practical value will come when synthetic attack traces can be tested against their own benign baselines. That is where privacy-preserving generation could shine. An organization might not want to share production logs with a vendor or community, but it may be willing to generate synthetic malicious overlays that reflect its environment’s structure.

This is also where AI can make mistakes with operational consequences. If synthetic datasets underrepresent benign lookalikes, rules will be too aggressive. If they overfit to known attack examples, rules will be too narrow. If they normalize away messy real-world variation, they will produce clean tests and dirty deployments.
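One way to keep both failure modes visible is to score every candidate rule against two sets at once: synthetic malicious events and a benign baseline. The Python sketch below assumes events are flat dictionaries and that a rule is any function returning True or False. Both are simplifications for illustration, not a description of Defender or Sentinel internals.

```python
from typing import Callable, Dict, Iterable

Event = Dict[str, str]  # hypothetical flat event shape


def evaluate_rule(
    rule: Callable[[Event], bool],
    malicious: Iterable[Event],
    benign: Iterable[Event],
) -> Dict[str, float]:
    """Return recall on malicious events and the false-positive rate on benign ones."""
    mal, ben = list(malicious), list(benign)
    hits = sum(1 for event in mal if rule(event))
    false_alarms = sum(1 for event in ben if rule(event))
    return {
        # Empty inputs yield 0.0 rather than raising ZeroDivisionError.
        "recall": hits / len(mal) if mal else 0.0,
        "false_positive_rate": false_alarms / len(ben) if ben else 0.0,
    }
```

High recall on synthetic attacks says little until the second number comes from a baseline that includes the benign lookalikes the environment actually produces.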

The Best Use Case Is Earlier Experimentation, Not Final Certification

The right mental model is a wind tunnel, not a crash test. Synthetic logs let defenders explore more shapes more quickly. They can reveal whether a detection idea is worth pursuing, whether a rule misses obvious variants, or whether an attack chain requires correlation across multiple data sources.

They should not be the final certification that a detection is production-ready. Final validation still needs real telemetry, lab execution, adversary emulation, controlled rollout, and monitoring for false positives. Microsoft’s own post is careful to say synthetic logs complement lab simulations rather than replace them.

That nuance matters because enterprise security buyers are exhausted by automation claims. Every new AI feature promises speed. The real question is where the speed is safe. In detection engineering, early-stage ideation and coverage expansion are safe places to accelerate. Blind production deployment is not.

A mature workflow would use synthetic logs to generate candidate coverage, then test those candidates against known-good benign data, then validate high-value scenarios in a lab, then deploy with staged monitoring. That may sound less glamorous than autonomous security, but it is how real defenders avoid breaking their SOC.
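The ordering in that workflow matters more than any single step, and it can be encoded as a simple gate. The Python sketch below is a hypothetical illustration: the stage names are invented labels for the four steps described above, and the pass/fail results are assumed to come from whatever tooling a team already uses.

```python
from typing import Dict, List

# Hypothetical labels for the four steps, in the order they must be cleared.
STAGES: List[str] = [
    "synthetic_coverage",
    "benign_baseline",
    "lab_validation",
    "staged_rollout",
]


def cleared_stages(results: Dict[str, bool]) -> List[str]:
    """Return the stages passed in order, stopping at the first failure or gap."""
    cleared: List[str] = []
    for stage in STAGES:
        if not results.get(stage, False):
            break
        cleared.append(stage)
    return cleared


def ready_for_production(results: Dict[str, bool]) -> bool:
    """A candidate is ready only if every stage was cleared in sequence."""
    return cleared_stages(results) == STAGES
```

Because the gate stops at the first failure, a strong synthetic result cannot stand in for a skipped benign-baseline or lab step.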

The Useful Future Is Synthetic, Reviewed, and Grounded in Reality

Microsoft’s research lands because it addresses a practical pain point rather than a vague AI aspiration. Security teams do not need another chatbot that summarizes alerts. They need better ways to turn adversary behavior into tested, deployable detection logic before the next campaign arrives.

The most concrete implications are already visible:

- Synthetic attack logs can help detection engineers explore coverage for rare or emerging techniques without waiting for a real intrusion or a full lab reproduction.

- Agentic generation appears more promising than single-prompt generation because it builds critique and refinement into the workflow.

- Reinforcement learning with verifiable rewards may improve low-level fidelity, but it still depends heavily on labeled ground-truth examples.

- Defender and Sentinel customers should treat synthetic telemetry as an accelerator for hypothesis testing, not as proof that a detection is production-ready.

- Windows environments will need environment-aware generation because paths, parent processes, logging policies, and administrative patterns vary widely across real fleets.

- The decisive test will be whether synthetic malicious logs can be evaluated against realistic benign baselines without creating a new false-positive factory.

Microsoft’s synthetic log research is not the end of lab work, and it is not a substitute for the messy authority of real incidents. It is a sign that defensive engineering is moving toward a faster, more iterative model: intelligence becomes behavior, behavior becomes telemetry, telemetry becomes detection, and detection is tested before the attacker supplies the lesson in production. If Microsoft and the broader security community can keep that loop grounded in real Windows behavior rather than AI-generated neatness, synthetic logs may become one of the more practical uses of AI in the SOC — not because they replace reality, but because they let defenders rehearse for more of it.

Source: Microsoft, "Accelerating detection engineering using AI-assisted synthetic attack logs generation," Microsoft Security Blog