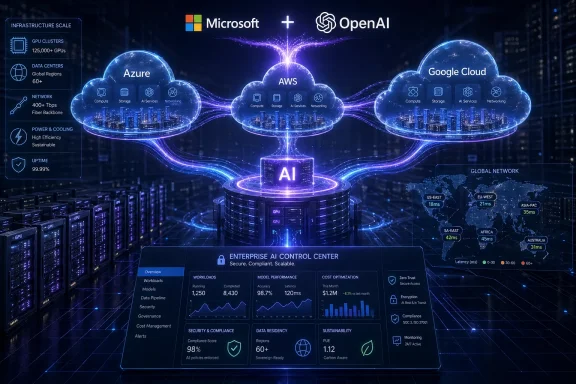

Microsoft and OpenAI have redrawn one of the most consequential alliances in modern computing, replacing a tightly controlled exclusivity model with a broader, multi-cloud AI infrastructure strategy. The revised agreement keeps Azure at the center of OpenAI’s product rollout while allowing OpenAI to serve customers through other cloud providers, a shift that could reshape enterprise AI buying, cloud competition, and the economics behind frontier model deployment. For Microsoft, the move is less a retreat than a recalibration: it preserves long-term access to OpenAI technology while reducing open-ended commercial obligations. For OpenAI, it creates room to scale globally at the pace demanded by AI workloads that now strain even the world’s largest data center networks.

Microsoft and OpenAI are not breaking apart; they are adapting to a market that has outgrown the original boundaries of their deal. The revised partnership keeps Azure at the front of the line, gives OpenAI more room to scale globally, and signals that the future of AI infrastructure will be competitive, capital-intensive, and increasingly multi-cloud. For enterprises, developers, and Windows users, the message is clear: the AI platform era is moving from exclusive access to operational execution, and the winners will be the companies that can turn model intelligence into secure, reliable, affordable services at global scale.

Source: TechAfrica News, “Microsoft and OpenAI Restructure Partnership to Accelerate Global AI Infrastructure and Multi-Cloud Expansion”

Background

Microsoft’s relationship with OpenAI began as a bold infrastructure bet before generative AI became a mainstream business category. In 2019, Microsoft invested heavily in OpenAI and made Azure the preferred engine for training and serving increasingly large AI models. At the time, the arrangement looked like a strategic moonshot: Microsoft supplied compute, OpenAI supplied frontier research, and both companies gained a head start over rivals still treating large language models as experimental technology.

The launch of ChatGPT changed the stakes dramatically. What had been an ambitious research partnership became a defining pillar of Microsoft’s modern product strategy, feeding Copilot, Azure AI services, developer tools, and enterprise productivity features across Microsoft 365 and Windows. OpenAI, meanwhile, moved from research lab to global AI platform almost overnight, facing enormous demand for compute capacity, inference speed, enterprise controls, and geographic availability.

That rapid expansion exposed friction inside the original structure. A close partnership gave Microsoft early access, distribution power, and deep product integration, but exclusivity also created constraints for OpenAI customers that had already standardized on Amazon Web Services, Google Cloud, Oracle, or regional cloud providers. As AI shifted from novelty to core infrastructure, the market began demanding deployment flexibility, data residency options, procurement choice, and redundancy across providers.

The amended agreement should be read in that context. Microsoft remains OpenAI’s primary cloud partner, and OpenAI products are still expected to ship first on Azure unless Microsoft cannot and chooses not to support the required capabilities. But OpenAI can now offer its products across any cloud provider, Microsoft’s license to OpenAI models and products runs through 2032 on a non-exclusive basis, and revenue-sharing terms have been simplified through capped obligations.

Why the Partnership Needed a Reset

The old Microsoft-OpenAI model worked brilliantly during the first phase of generative AI because it paired compute supply with model ambition. But the second phase of AI is not only about model intelligence; it is about distribution, energy, latency, regional compliance, and procurement flexibility. No single cloud provider, however powerful, can comfortably satisfy every enterprise and government requirement across the global market.

From exclusivity to scale

The revised structure acknowledges a simple operational truth: frontier AI has become too large for narrow infrastructure channels. Training runs require vast clusters, inference demand spikes unpredictably, and customers increasingly expect low-latency access near their own regions. Even Microsoft, with one of the strongest cloud footprints in the world, benefits if OpenAI can expand demand without forcing every workload through a single commercial path.

This does not mean Azure becomes less important overnight. Azure remains the first-stop platform for OpenAI products, and Microsoft’s own Copilot strategy still depends on reliable access to advanced OpenAI models. The difference is that Azure-first no longer means Azure-only.

Key changes in the agreement include:

- OpenAI products continue to ship first on Azure under normal conditions.

- OpenAI can serve customers across any cloud provider, expanding commercial flexibility.

- Microsoft retains a license to OpenAI models and products through 2032.

- Microsoft’s OpenAI license is now non-exclusive, reducing lock-in.

- Microsoft will no longer pay revenue share to OpenAI.

- OpenAI will continue paying Microsoft revenue share through 2030, subject to a cap.

- Microsoft remains a major shareholder, preserving financial upside.

Azure Still Sits at the Center

For WindowsForum readers, the most important detail is that Azure remains the anchor platform. OpenAI products will still debut on Microsoft’s cloud unless Azure cannot support the necessary capabilities and Microsoft elects not to do so. That language matters because it preserves Microsoft’s role as the first enterprise-scale proving ground for many OpenAI services.

Azure-first is not Azure-only

Azure-first gives Microsoft a continuing advantage in product integration. Features that depend on OpenAI models can flow into Microsoft 365 Copilot, GitHub Copilot, Dynamics 365, Security Copilot, and Windows-adjacent developer experiences with less commercial ambiguity. Enterprises already invested in Microsoft identity, compliance, and management tools will likely continue to see Azure as the default path for OpenAI-powered deployments.

But the end of exclusivity changes the competitive framing. Customers no longer need to interpret an OpenAI adoption decision as a de facto Azure migration. That distinction is especially important for large organizations that run multi-cloud environments by design, often splitting workloads based on regulation, geography, performance, existing contracts, and internal platform skills.

For Microsoft, the bet is that deep integration beats contractual lock-in. If Azure offers better tooling, governance, model operations, security controls, and Copilot connectivity, customers may choose it even when alternatives exist. That is a healthier competitive posture, but also a more demanding one.

Azure’s continuing advantages include:

- Tight integration with Microsoft Entra identity and access controls.

- Enterprise governance through Azure Policy and compliance tooling.

- Native alignment with Microsoft 365 and Windows management ecosystems.

- Existing Azure OpenAI Service deployment experience.

- Security operations links through Microsoft Defender and Sentinel.

- Developer familiarity through Visual Studio, GitHub, and Azure DevOps.

Multi-Cloud AI Becomes the New Default

The most visible market impact is the normalization of multi-cloud AI deployment. Enterprises have talked about multi-cloud for years, but AI makes the concept more urgent. Model serving is expensive, latency-sensitive, data-intensive, and often tied to rapidly changing regulatory demands.

Why customers demanded choice

A bank may want one cloud for regulated workloads in Europe, another for analytics in North America, and a third for specialized AI accelerators. A government agency may require sovereign controls or domestic hosting. A multinational manufacturer may already have contractual commitments with multiple providers and may not want a single AI vendor relationship to disrupt its infrastructure map.

OpenAI’s new flexibility directly addresses those realities. If a customer has standardized on Amazon Bedrock, Google Cloud Vertex AI, Oracle Cloud Infrastructure, or a regional provider, OpenAI now has a clearer path to serve that customer without making Azure the mandatory commercial gateway. That could expand OpenAI’s market while pressuring every cloud provider to compete on performance, price, governance, and developer experience.

The practical benefits for enterprises may include:

- Reduced vendor lock-in for AI workloads.

- Improved regional availability where different clouds have stronger footprints.

- More flexible procurement for organizations with existing cloud contracts.

- Better resilience planning through distributed deployment options.

- Potential price competition across providers hosting similar AI capabilities.

- Easier integration with existing data platforms outside Azure.

Revenue Sharing and the New Economics

The financial side of the revised partnership may be just as significant as the infrastructure side. Microsoft will no longer pay revenue share to OpenAI, while OpenAI will continue revenue-sharing payments to Microsoft through 2030 under capped terms. That transforms a complicated mutual-payment model into something more predictable.

Predictability over open-ended obligations

For Microsoft, ending payments to OpenAI can improve the economics of its own AI products. Copilot margins, Azure AI services, and enterprise AI bundles have all faced investor scrutiny because generative AI is costly to run. Removing one layer of revenue-sharing expense gives Microsoft more room to manage pricing, infrastructure costs, and long-term profitability.

For OpenAI, the cap on payments to Microsoft may be equally important. Frontier AI companies need staggering amounts of capital for compute, talent, energy contracts, custom silicon, and global infrastructure. Capped obligations make future financial modeling easier and may help OpenAI present a cleaner growth story to partners, investors, and potential public-market stakeholders.

The revised economics suggest three priorities:

- Reduce uncertainty around long-term AI revenue flows.

- Give OpenAI more commercial flexibility as it expands beyond Azure-only distribution.

- Let Microsoft improve AI product margins while retaining strategic exposure to OpenAI growth.

The capped structure also removes some of the ambiguity around technological milestones. Previous arrangements tied aspects of the relationship to future AI progress, including highly debated thresholds around advanced systems. The new structure favors calendar-based and commercially defined terms, which are easier for customers and investors to understand.
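To see why a cap makes obligations easier to model, consider a minimal Python sketch. It rests on assumptions that are not in the announcement: a flat revenue-share rate, a single cumulative cap, and made-up figures in arbitrary units. The function name `capped_payments` is likewise hypothetical.

```python
def capped_payments(revenues, rate, total_cap):
    """Yearly payments at `rate` of revenue until a cumulative cap is reached.

    Assumption: the cap is cumulative across years. The actual agreement
    terms are not public in this level of detail.
    """
    payments = []
    remaining = total_cap
    for revenue in revenues:
        # Pay the normal share, but never more than what is left under the cap.
        payment = min(rate * revenue, remaining)
        payments.append(payment)
        remaining -= payment
    return payments


# Hypothetical figures for illustration only.
yearly_revenue = [100, 150, 200, 250]
print(capped_payments(yearly_revenue, rate=0.2, total_cap=80))
```

With these placeholder numbers the third year’s payment is cut short by the cap and the fourth year’s payment is zero, so the worst-case total is known in advance no matter how fast revenue grows.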

Intellectual Property: Still Close, No Longer Exclusive

Microsoft’s continued license to OpenAI models and products through 2032 is one of the most important stabilizers in the revised agreement. It means Microsoft does not lose access to the technology foundation that powers many of its AI services. But the fact that the license is now non-exclusive changes the competitive dynamics.

Access without monopoly

A non-exclusive license allows Microsoft to keep building on OpenAI systems while OpenAI makes similar or related capabilities available elsewhere. That is a substantial change from a world in which Microsoft’s privileged access served as a powerful moat against other cloud providers. The moat now shifts from access itself to integration, execution, and customer trust.

This matters for Copilot. Microsoft has spent years embedding AI into productivity, security, developer, and business applications. If rivals can also offer OpenAI models, Microsoft must differentiate through product design, enterprise administration, data grounding, workflow integration, and reliability.

Microsoft’s position remains strong because it owns the operating context for many enterprise users. Windows endpoints, Microsoft 365 documents, Teams collaboration, Outlook communication, SharePoint repositories, and Entra identities create a rich environment for AI assistance. A model alone does not produce enterprise value; it needs secure access to the right data and actions.

Still, competitors will now argue that OpenAI access is no longer a Microsoft-only advantage. That could accelerate innovation across cloud AI platforms and force Microsoft to make Copilot more useful, transparent, and cost-effective. The age of easy differentiation is ending.

Competitive Impact on AWS, Google, Oracle, and Others

The revised agreement is a gift to Microsoft’s cloud competitors, but not a guaranteed victory for them. It gives them permission to pursue OpenAI workloads more aggressively, yet they still need infrastructure, enterprise controls, and commercial terms that satisfy demanding customers. AI buyers will compare not just model access, but complete platform value.

The cloud wars move up the stack

AWS has the largest cloud footprint and a mature enterprise base, making it an obvious beneficiary of OpenAI’s new flexibility. If OpenAI models become more directly available through Amazon’s AI platforms, AWS customers may gain access without re-architecting around Azure. That could blunt one of Microsoft’s strongest AI-driven cloud migration arguments.

Google Cloud brings deep AI research pedigree, custom tensor processing hardware, and its own Gemini model family. OpenAI’s availability on Google’s infrastructure would create an unusual competitive mix: Google could sell its own models while also supporting a major rival’s models. That reflects the direction of the market, where customers increasingly expect model choice rather than single-vendor AI stacks.

Oracle Cloud Infrastructure may also benefit because it has positioned itself around high-performance AI clusters and large-scale infrastructure deals. Regional and sovereign cloud providers could see opportunities too, especially where data residency and local compliance matter more than brand dominance. The more OpenAI expands across clouds, the more AI becomes a distributed infrastructure business.

Competitors will likely push several messages:

- AWS will emphasize customer choice and existing enterprise footprints.

- Google will highlight AI-native infrastructure and model optionality.

- Oracle will focus on high-performance clusters and large training capacity.

- Regional providers will stress sovereignty, residency, and local compliance.

- Specialized AI clouds will compete on accelerator availability and cost.

Enterprise Impact: Procurement, Compliance, and Architecture

Enterprise customers may be the biggest practical winners from the revised partnership. Many organizations want OpenAI’s capabilities but do not want infrastructure decisions dictated by a single vendor relationship. The new arrangement could make AI adoption easier for firms with established multi-cloud governance.

More paths to the same model family

Procurement teams can now evaluate OpenAI access through a wider set of commercial channels. That may reduce internal resistance in companies that already have long-term AWS, Google Cloud, Oracle, or regional cloud contracts. It could also help organizations negotiate better terms by comparing deployment options across providers.

Compliance teams will look closely at where workloads run, how data is stored, what logs are retained, and which provider controls the operational environment. The model provider is only one part of the compliance puzzle. Cloud location, encryption, identity, auditability, incident response, and administrative access all matter.

Architecturally, the new world will demand discipline. Companies should avoid treating multi-cloud AI as a license to scatter experiments everywhere. Without governance, the result could be duplicated costs, inconsistent security policies, and fragmented data flows.

A practical enterprise evaluation sequence should look like this:

- Identify the business process where OpenAI capabilities will create measurable value.

- Map data sensitivity and residency requirements before choosing a cloud path.

- Compare latency, availability, and regional coverage across providers.

- Review identity, logging, and security integration with existing platforms.

- Test cost under realistic inference loads, not just proof-of-concept usage.

- Define exit and portability plans before production rollout.
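One way to keep that evaluation disciplined is a simple weighted scoring matrix. The Python sketch below is illustrative only: the criteria names, the weights, the provider labels, and the scores are all hypothetical placeholders, and each organization would substitute its own measurements.

```python
from dataclasses import dataclass

# Hypothetical weights; they sum to 1.0 and reflect one possible priority order.
WEIGHTS = {
    "residency_fit": 0.30,
    "latency": 0.20,
    "security_integration": 0.25,
    "cost_at_load": 0.25,
}


@dataclass
class ProviderScore:
    name: str
    scores: dict  # criterion -> score from 0 (poor) to 5 (excellent)

    def weighted_total(self) -> float:
        # A missing criterion counts as 0 so gaps are penalized, not ignored.
        return sum(WEIGHTS[c] * self.scores.get(c, 0) for c in WEIGHTS)


def rank(providers):
    """Return providers sorted from highest to lowest weighted total."""
    return sorted(providers, key=lambda p: p.weighted_total(), reverse=True)


# Placeholder providers and scores, not real measurements.
candidates = [
    ProviderScore("provider_a", {"residency_fit": 5, "latency": 3,
                                 "security_integration": 4, "cost_at_load": 3}),
    ProviderScore("provider_b", {"residency_fit": 3, "latency": 4,
                                 "security_integration": 3, "cost_at_load": 4}),
]

for provider in rank(candidates):
    print(f"{provider.name}: {provider.weighted_total():.2f}")
```

The cost score should come from tests under realistic inference loads, as the list above recommends, rather than from proof-of-concept usage.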

Consumer and Windows Ecosystem Impact

Consumers may not see the partnership changes immediately, but the downstream effects could be significant. Microsoft’s consumer AI experiences, including Windows features and Copilot offerings, still depend on strong model access and cost-efficient infrastructure. If the new economics improve Microsoft’s ability to scale those services, Windows users may eventually benefit from faster, more capable, and more integrated AI tools.

Copilot remains the front door

For the Windows ecosystem, Copilot is the most visible expression of Microsoft’s AI strategy. It sits at the intersection of operating system assistance, web search, productivity, and device-level workflows. OpenAI’s wider cloud availability does not automatically weaken Copilot, but it raises expectations for Microsoft to deliver a polished experience that feels native rather than bolted on.

Consumers care less about cloud-provider politics than responsiveness, usefulness, privacy, and price. If multi-cloud expansion helps OpenAI scale inference capacity, users across ChatGPT and Microsoft services could see more reliable performance during periods of heavy demand. If competition lowers infrastructure costs, premium AI features may become easier to bundle into mainstream software subscriptions.

Windows device makers will also watch closely. AI PCs, neural processing units, local models, and cloud-assisted agents are converging into a new client-compute model. Microsoft’s continued OpenAI access gives it a strong cloud intelligence layer, while on-device AI could reduce latency and improve privacy for certain tasks.

Consumer-facing implications include:

- Potentially faster AI services if infrastructure capacity expands.

- More resilient availability during demand spikes.

- Broader model access across apps and platforms.

- Pressure on Microsoft to improve Copilot’s everyday usefulness.

- Greater competition among AI assistants on Windows and the web.

Data Centers, Silicon, and the Infrastructure Race

The announcement’s references to gigawatts of data center capacity and next-generation silicon are not background details; they are the heart of the story. AI is becoming a physical infrastructure race involving land, power, cooling, networking, accelerators, and supply chains. Software strategy now depends on electrical capacity.

Compute is the new strategic currency

Training frontier models requires enormous clusters of GPUs or specialized accelerators connected by high-speed networking. Serving those models to millions of users requires another layer of inference infrastructure optimized for throughput, latency, and cost. As models become multimodal, agentic, and deeply embedded in business processes, demand rises across both fronts.

Microsoft has invested heavily in Azure data centers, custom silicon efforts, and AI supercomputing capacity. OpenAI’s need for global scale gives Microsoft a major anchor customer, but also creates pressure. If OpenAI can diversify infrastructure partners, it can reduce bottlenecks while Microsoft avoids being the sole party responsible for every future capacity surge.

Custom silicon is increasingly strategic. Nvidia remains central to AI acceleration, but major cloud providers are developing or deploying their own chips to control costs and optimize specific workloads. Microsoft’s collaboration with OpenAI on next-generation silicon could improve performance for the exact model architectures that matter most to both companies.

The infrastructure race will be shaped by:

- Power availability, especially in regions with strained grids.

- Cooling technology, including liquid cooling for dense AI clusters.

- Accelerator supply, where demand still exceeds easy availability.

- Network architecture, which determines cluster efficiency.

- Inference optimization, crucial for sustainable AI margins.

- Regional data center expansion, needed for compliance and latency.

- Custom silicon roadmaps, which may reduce dependence on merchant GPUs.

Cybersecurity and Enterprise Trust

The companies also highlighted cybersecurity as a continuing collaboration area, and that deserves serious attention. AI is becoming both a defensive tool and an offensive risk multiplier. Microsoft’s security business gives it a massive telemetry base, while OpenAI’s models can help interpret, summarize, and act on complex threat signals.

AI security cuts both ways

Security teams already face alert overload, talent shortages, and increasingly automated attacks. AI can help triage incidents, explain malicious scripts, summarize logs, and assist analysts with response steps. Microsoft has a natural advantage here because Defender, Sentinel, Entra, Intune, Windows, and Microsoft 365 generate enormous security context.

But AI also creates new attack surfaces. Prompt injection, data leakage, model abuse, insecure plugin architectures, and over-permissioned agents can turn productivity tools into risk channels. If OpenAI products run across multiple clouds, consistent security controls become even more important.

Enterprises should demand clarity on several fronts:

- How customer data is isolated and protected.

- Whether prompts and outputs are logged or retained.

- How identity permissions map to AI agent actions.

- What audit trails exist for AI-assisted decisions.

- How model behavior is monitored for abuse or drift.

- Which cloud provider is responsible during incidents.
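Two of those questions, how identity permissions map to agent actions and what audit trail exists, can be made concrete with a small deny-by-default check. The Python sketch below is a generic illustration, not any vendor’s API: the role names, action names, and the `authorize` helper are hypothetical, and a real deployment would read roles from its identity provider instead of a hard-coded table.

```python
from datetime import datetime, timezone

# Hypothetical role-to-action mapping for illustration.
ROLE_ACTIONS = {
    "analyst": {"read_logs", "summarize_incident"},
    "responder": {"read_logs", "summarize_incident", "isolate_device"},
}

# In-memory audit trail; a production system would use durable storage.
audit_log = []


def authorize(user, roles, action):
    """Allow an agent action only if one of the user's roles grants it."""
    # Unknown roles map to an empty set, so anything unlisted is denied.
    allowed = any(action in ROLE_ACTIONS.get(role, set()) for role in roles)
    # Record every decision, including denials, for later review.
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "action": action,
        "allowed": allowed,
    })
    return allowed


print(authorize("alice", ["analyst"], "isolate_device"))   # analyst role lacks this action
print(authorize("bob", ["responder"], "isolate_device"))   # responder role grants it
print(len(audit_log), "decisions recorded")
```

The design choice worth noting is that the agent inherits the invoking user’s permissions rather than holding its own broad grant, which is one way to avoid the over-permissioned agents described above.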

Global Expansion and the Africa Angle

The TechAfrica framing is important because this restructuring is not just a U.S. cloud-market story. OpenAI’s ability to serve products across multiple providers could matter greatly in regions where infrastructure availability, pricing, data sovereignty, and connectivity constraints differ sharply from North American and Western European norms. Africa’s AI opportunity is real, but it depends on practical access to compute and responsible deployment.Why regional flexibility matters

African enterprises, governments, startups, universities, and developers often operate in hybrid infrastructure environments. Some rely on global hyperscalers, others use regional data centers, and many face cost pressures that make premium AI services difficult to scale. More cloud pathways could improve access if providers compete on local availability and pricing.

Data sovereignty is also becoming a larger issue. Governments and regulated sectors may prefer workloads hosted in specific jurisdictions or under defined compliance regimes. A multi-cloud OpenAI strategy could eventually support more localized deployment models, though availability will depend on commercial agreements, infrastructure buildout, and regulatory approvals.

For developers, wider cloud distribution could reduce friction. If OpenAI capabilities become available through the platforms they already use, they can build applications without reworking their entire backend. That may help local AI ecosystems move from experimentation to production.

Regional opportunities include:

- Improved access for startups using non-Azure cloud stacks.

- Potentially broader support for local data residency requirements.

- More competition among providers serving emerging markets.

- Better resilience through geographically distributed infrastructure.

- New AI-enabled services in education, healthcare, finance, and agriculture.

- Greater demand for local cloud and AI skills development.

Strengths and Opportunities

The revised Microsoft-OpenAI agreement strengthens both companies by replacing rigidity with structured flexibility. It keeps the partnership intact where it matters most while acknowledging that global AI deployment now requires broader infrastructure, clearer economics, and more customer choice.

- Microsoft preserves strategic access to OpenAI models and products through 2032.

- Azure retains first-launch status, protecting Microsoft’s enterprise AI positioning.

- OpenAI gains multi-cloud reach, making it easier to serve customers outside Azure.

- Capped revenue-sharing terms improve predictability for long-term planning.

- Enterprises gain more procurement flexibility without abandoning OpenAI capabilities.

- Cloud competition should intensify, potentially improving pricing, availability, and tooling.

- Global markets may benefit from broader deployment channels and regional cloud partnerships.

Risks and Concerns

The agreement also introduces complexity. A less exclusive relationship can unlock growth, but it can also blur accountability, complicate customer decisions, and intensify pressure on infrastructure systems already operating near the edge of capacity.

- Azure could face stronger competition for OpenAI workloads from AWS, Google, and Oracle.

- Customers may struggle with fragmented governance across multiple AI deployment channels.

- Security responsibilities could become harder to trace when model, cloud, and application vendors differ.

- Cost management may become more difficult if AI teams spread workloads across providers without central oversight.

- Microsoft’s Copilot differentiation may narrow if rivals gain comparable OpenAI access.

- Infrastructure demand could worsen energy and sustainability pressures around data center expansion.

- Regulatory scrutiny may increase as powerful AI systems become more widely distributed.

What to Watch Next

The next major signal will be how quickly OpenAI products appear through additional cloud platforms and under what commercial terms. If AWS, Google Cloud, Oracle, and others can offer meaningful OpenAI access soon, enterprises will start comparing performance, governance, and price across providers. If rollout is slow or limited, Azure’s practical advantage may remain larger than the legal shift suggests.

The enterprise buying cycle will tell the story

Microsoft’s upcoming cloud and AI financial results will also matter. Investors will look for evidence that ending Microsoft’s revenue-share payments to OpenAI improves AI margins without weakening Azure growth. OpenAI, meanwhile, will be judged on whether broader distribution accelerates adoption while maintaining reliability and safety.

Key developments to monitor include:

- Which cloud providers receive direct OpenAI product availability first.

- How Microsoft positions Azure OpenAI Service against new alternatives.

- Whether Copilot pricing or packaging changes as economics improve.

- How regulators respond to broader frontier model distribution.

- Whether multi-cloud deployment improves performance in underserved regions.

Microsoft and OpenAI are not breaking apart; they are adapting to a market that has outgrown the original boundaries of their deal. The revised partnership keeps Azure at the front of the line, gives OpenAI more room to scale globally, and signals that the future of AI infrastructure will be competitive, capital-intensive, and increasingly multi-cloud. For enterprises, developers, and Windows users, the message is clear: the AI platform era is moving from exclusive access to operational execution, and the winners will be the companies that can turn model intelligence into secure, reliable, affordable services at global scale.

Source: TechAfrica News, "Microsoft and OpenAI Restructure Partnership to Accelerate Global AI Infrastructure and Multi-Cloud Expansion"