Microsoft’s Clarity has just pulled back the curtain on a persistent blind spot for publishers and site operators: a new Bot Activity dashboard that surfaces how AI crawlers, search bots, and automated agents access web content — and crucially, it does so using server-side logs rather than client-side heuristics.

Background: why this matters now

The web’s discovery plumbing is shifting. Traditional analytics were built to measure human sessions (pageviews, clicks, and conversions), and they relied heavily on client-side instrumentation that executes only when a real browser renders a page. But modern AI systems frequently fetch content from the server edge, often bypassing client-side scripts entirely. That means a growing share of automated reads, such as retrievals used to prepare answers, generate embeddings, or index content for assistants, has been effectively invisible to publishers until now. Microsoft’s Bot Activity treats those requests as first-class telemetry to help publishers answer whether that access is productive, extractive, or simply costly.

This is not theoretical: multiple industry trackers and vendors reported dramatic increases in AI-driven bot activity through 2025. Akamai’s 2025 Digital Fraud and Abuse report documented large year-over-year growth in AI bot requests, and TollBit’s tracking of retrieval bots found significant quarter-to-quarter increases in bots that bypass robots.txt and repeatedly fetch up-to-date content. Those findings underline the operational and economic pressures publishers now face.

What Clarity’s Bot Activity actually measures

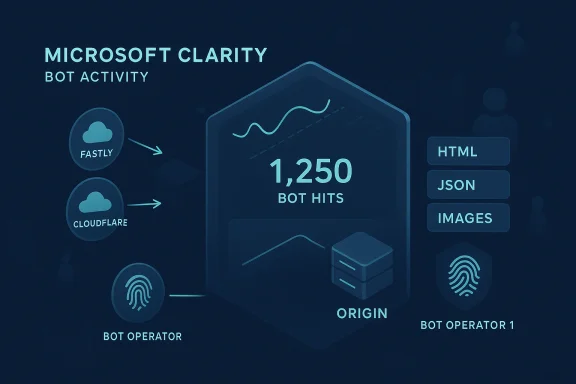

Microsoft designed Bot Activity as a server-log-driven card within the Clarity AI Visibility suite. Its architecture and core capabilities include:

- Server-side ingestion: request-level logs are forwarded from supported CDNs or the site origin (WordPress plugin, Fastly, Cloudflare LogPush, Amazon CloudFront Firehose) to Clarity for processing. This makes the dataset visible even when requests never execute client-side JavaScript.

- Operator-level classification: Clarity attempts to identify the bot operator or the AI ecosystem making requests, grouping them by known operator fingerprints (e.g., named AI platforms or verified crawlers).

- Activity purpose categories: requests are bucketed by likely intent (indexing, retrieval-for-assistants, embedding generation, developer tooling, or API-style access), helping teams infer “why” bots are reading content, not just “how much.”

- Path-level aggregation: Clarity shows which URL paths, asset types (HTML, images, JSON, XML) and resources receive the most automated attention, enabling tactical cache and edge-rule optimizations.

- AI request share / Bot operator metric: the dashboard can express the share of total requests attributable to bots, which helps quantify the scale of automated access relative to human traffic.
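To make the last two metrics concrete, here is a minimal sketch of how a request share and a path-level ranking could be computed from already-classified request records. The `Request` type, the operator names, and the sample data are all hypothetical; this is not Clarity's implementation, only an illustration of the arithmetic behind such a card.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Request:
    """One classified request (hypothetical shape, not a Clarity schema)."""
    path: str
    operator: Optional[str]  # None means the request was not attributed to a bot


def bot_request_share(requests: List[Request]) -> float:
    """Fraction of all requests attributed to any bot operator."""
    if not requests:
        return 0.0
    bot_hits = sum(1 for r in requests if r.operator is not None)
    return bot_hits / len(requests)


def top_bot_paths(requests: List[Request], n: int = 3) -> List[Tuple[str, int]]:
    """Most-requested paths, counting bot traffic only."""
    counts = Counter(r.path for r in requests if r.operator is not None)
    return counts.most_common(n)


# Made-up sample: three bot requests out of five in total.
sample = [
    Request("/news/a", "ExampleAIBot"),
    Request("/news/a", None),
    Request("/news/a", "ExampleAIBot"),
    Request("/feed.xml", "ExampleSearchBot"),
    Request("/pricing", None),
]

print(f"bot share: {bot_request_share(sample):.0%}")
print("top bot paths:", top_bot_paths(sample))
```

The same counts, grouped by `operator` instead of `path`, would give a per-operator breakdown.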

Why server-side logs matter (technical verification)

Client-side analytics will undercount or entirely miss many non-human actors because automated retrieval systems frequently request pages without executing embedded JavaScript. Server logs are the authoritative record of every HTTP transaction at the edge or origin: user agent strings, request frequency, response status, headers, IPs / ASNs and cache hit/miss signals live there. Clarity’s Bot Activity ingests these logs to perform classification and produce actionable telemetry. Microsoft documentation confirms the platform’s reliance on CDN and server integrations for accurate bot visibility.

A few operational realities follow from that architecture:

- Forwarding logs from a CDN or cloud provider typically uses provider-specific pipelines (LogPush, Firehose, S3, etc.) and can generate additional costs for log delivery, storage, or egress. Microsoft clarifies that Clarity itself does not charge for AI Visibility, but enabling it may increase bills from the CDN or cloud provider.

- Disconnecting the integration requires provider-side changes; removing the Clarity dashboard alone does not stop logs or retroactively purge already-ingested historical data.

- Classification is probabilistic and footprint-based: Clarity documents that operator detection relies on known fingerprints and metadata, which means stealthy crawlers that spoof user agents or rotate addresses can evade deterministic identification. Treat the dashboard as a high-value signal, not a perfect oracle.
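As a rough illustration of why server logs expose traffic that client-side scripts miss, and why fingerprint matching is fallible, the sketch below parses one line in the common "combined" access-log layout and matches the user agent against a small token table. The table is a short illustrative sample of publicly documented crawler tokens, the log line is made up, and nothing here reflects how Clarity actually classifies requests.

```python
import re
from typing import Dict, Optional

# Combined log format: ip, identity, user, [time], "request", status, size,
# "referer", "user agent".
LOG_PATTERN = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)

# Illustrative sample only; a production list would be far longer and
# would need regular maintenance.
KNOWN_TOKENS: Dict[str, str] = {
    "gptbot": "OpenAI",
    "claudebot": "Anthropic",
    "perplexitybot": "Perplexity",
    "bingbot": "Microsoft Bing",
    "googlebot": "Google",
}


def classify_agent(user_agent: str) -> Optional[str]:
    """Return an operator name if a known token appears in the user agent."""
    ua = user_agent.lower()
    for token, operator in KNOWN_TOKENS.items():
        if token in ua:
            return operator
    return None  # unknown, human, or a bot hiding behind a browser-like string


def parse_line(line: str) -> Optional[dict]:
    """Parse one log line and attach a best-effort operator label."""
    match = LOG_PATTERN.match(line)
    if match is None:
        return None
    record = match.groupdict()
    record["operator"] = classify_agent(record["agent"])
    return record


# Made-up line with a simplified user agent string.
line = (
    '203.0.113.7 - - [10/Oct/2025:13:55:36 +0000] '
    '"GET /news/a HTTP/1.1" 200 5123 "-" '
    '"Mozilla/5.0 (compatible; GPTBot/1.0)"'
)
print(parse_line(line))
```

Because the label comes entirely from a self-reported header, any client can claim or avoid a token, which is exactly the spoofing weakness described in the last bullet above.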

What the industry data says about scale and impact

Clarity’s own publisher sample, drawn from more than 1,200 publisher and news domains instrumented with Clarity, found AI referrals rose rapidly over a measured period, even if they still composed a small fraction of total sessions in that dataset. In that analysis, AI referrals grew roughly 155% over an eight-month window while remaining under 1% of total sessions in the published sample; importantly, the AI-origin visits that did arrive often converted at materially higher rates for sign-ups and subscriptions in Clarity’s snapshot.

Independent telemetry corroborates a large and accelerating role for automated access. Akamai’s 2025 Digital Fraud and Abuse report called out a sharp increase in AI bot requests, reporting dramatic year-on-year growth, and researchers such as TollBit quantified the rise of retrieval bots that repeatedly scrape live content for assistant responses. Those external studies highlight two complementary dynamics: the volume of automated reads is growing fast, and a subset of bots is intentionally engineered to access up-to-date content (RAG / retrieval) rather than only large-scale training scrapes.

Caveat: percentage growth off a small base can be misleading. A 155% increase from a sub‑1% base still produces modest absolute traffic. But for publishers dependent on subscription and membership funnels, even a small stream of high‑quality, pre‑qualified visitors can yield outsized commercial value. Clarity’s results and independent case studies both stress context sensitivity: verticals, funnel design, and page type matter.
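The small-base caveat is easy to check with arithmetic. The sketch below assumes a hypothetical starting share of 0.30% of sessions (the source only says the share stayed under 1%) and assumes total sessions stay flat, so that the 155% growth applies directly to the share.

```python
def share_after_growth(start_share_pct: float, growth_pct: float) -> float:
    """Share of sessions after growth, assuming total sessions stay flat."""
    return start_share_pct * (1 + growth_pct / 100)


start = 0.30  # hypothetical starting share, in percent of all sessions
end = share_after_growth(start, 155)  # 155% growth is the figure from the article

# Express the result as sessions per 100,000 to show the absolute scale.
per_100k_before = start / 100 * 100_000
per_100k_after = end / 100 * 100_000

print(f"share: {start:.2f}% -> {end:.3f}%")
print(f"per 100,000 sessions: {per_100k_before:.0f} -> {per_100k_after:.0f}")
```

Under these assumptions the share moves from 0.30% to roughly 0.765%, that is, from about 300 to about 765 of every 100,000 sessions: a large relative jump that still leaves AI referrals a small slice of traffic.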

Strengths of Microsoft’s approach

Microsoft’s Bot Activity delivers several concrete advantages for publishers and digital operators:

- Early detection of extraction: by surfacing crawl patterns, site teams can see upstream signs of how AI systems may later use content, giving them time to decide whether to lock, monetize, or optimize assets.

- Operational hygiene and cost control: the ability to quantify how much of your request volume is automated helps infrastructure teams identify unexpected load, tune caching, and design rate-limiting or CDN-based mitigations to control costs. Microsoft explicitly positions these insights for performance planning.

- Actionability across teams: marketing, editorial, and engineering can triangulate operator-level interest with path-level access to prioritize structured metadata, canonical signals, and selective paywalling where appropriate. The dashboard enables a closed-loop experiment flow from measurement to optimization.

- Server-side fidelity: leveraging edge logs provides far higher fidelity for non-human access patterns than client-side inference ever could, reducing sampling bias and blind spots.
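For teams that act on the cost-control point above with rate limiting, the usual mechanism is a token bucket per bot operator. The sketch below is a generic, in-process illustration with made-up rates; in practice this kind of limit is normally configured at the CDN edge rather than in application code, and Clarity itself does not enforce anything.

```python
from typing import Dict


class TokenBucket:
    """Allow short bursts up to `capacity`, refilled at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: float, now: float = 0.0):
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity  # start full so an initial burst is allowed
        self.updated = now

    def allow(self, now: float) -> bool:
        """Return True if a request arriving at time `now` (seconds) may proceed."""
        elapsed = max(0.0, now - self.updated)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


# One bucket per operator label; the limits are hypothetical.
buckets: Dict[str, TokenBucket] = {}


def allow_request(operator: str, now: float) -> bool:
    bucket = buckets.setdefault(operator, TokenBucket(rate=1.0, capacity=2.0, now=now))
    return bucket.allow(now)


# Three requests in the same instant: the burst of two passes, the third waits.
print([allow_request("ExampleAIBot", now=0.0) for _ in range(3)])
# One second later a single token has been refilled.
print(allow_request("ExampleAIBot", now=1.0))
```

Passing the clock in as `now` keeps the sketch deterministic; a real deployment would read a monotonic clock instead.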

Risks, limitations, and unanswered questions

No measurement system is neutral. Clarity’s Bot Activity brings new visibility, but publishers should weigh several important caveats and potential hazards before treating it as a panacea.

1) Cost and operational complexity

Forwarding high-volume logs to a third party can materially increase CDN or cloud bills. Microsoft’s docs underline that the cost is charged by the provider and depends on log volume and regional configuration. Smaller publishers need to evaluate expected log throughput and budgeting implications before enabling comprehensive forwarding.

2) Classification accuracy and adversarial behavior

Operator identification uses known fingerprints and metadata; stealthy crawlers can spoof user agents, use rotating IP pools, or fetch content via third-party proxies that complicate attribution. Microsoft acknowledges classification limitations and explicitly frames Bot Activity as an observational signal, not definitive proof of downstream usage. Publishers must assume false positives and false negatives are possible and validate suspicious findings before taking strong enforcement actions.

3) Tradeoffs between blocking and discoverability

Blocking or rate-limiting bots may reduce infrastructure costs but will also affect discoverability inside AI assistants that rely on those same fetches to surface content with attribution. There is no universal answer: blocking aggressive scrapers may be appropriate for extractive, non-attributing crawlers, but overly broad policies can prevent helpful assistants from indexing content that drives high-value referrals. Clarity intentionally does not perform automatic blocking; it leaves enforcement to operators, who must balance technical protections against long-term visibility.

4) Legal, privacy and licensing ambiguity

Automated crawling, indexing, and downstream use by AI models raise unsettled legal and licensing questions. While measurement shows access, it does not settle whether the use of content in a model constitutes fair use, requires licensing, or violates terms of service. Publishers should not equate observed bot access with a legal claim; measurement informs policy, but legal remedies require separate assessment. Independent reports and industry moves (publisher licensing pilots, paywalls that gate RAG access) indicate the debate will remain active.

5) Privacy and data governance

Forwarding request logs to a third party, even a vendor-owned analytics service, requires careful privacy analysis. Depending on the data fields included (geolocation, IPs, headers) and the site’s user base, integration may trigger data residency, consent, or compliance requirements. The Microsoft documentation lists supported CDNs and suggests project admins review configurations, but it is the operator’s responsibility to ensure compliance.

Practical playbook: how publishers and site owners should treat Bot Activity

If you run a site or manage digital operations, Bot Activity is an opportunity to move from anecdote to evidence. Here’s a practical, prioritized playbook:

- Audit and baseline

  - Enable server-side log forwarding for a limited period to establish a baseline: measure the AI request share, top operator sources, and which paths are most heavily crawled. Use the shortest window that yields stable signals.

- Cost modeling

  - Before a full rollout, estimate log volume and consult your CDN/cloud pricing to forecast potential incremental costs. Microsoft’s docs highlight that costs can be billed by the provider.

- Validate classifications

  - Cross-check operator identifications with your CDN’s own logs and other bot-detection tools to reduce false positives. If you see suspicious operator tags, confirm via IP/ASN checks and pattern analysis.

- Prioritize protection vs. exposure

  - For paths that attract heavy extraction but deliver no conversion, consider:

    - tighter cache controls,

    - API gating or token-based access,

    - selective rate limits at the CDN edge,

    - or specialized bot paywalling experiments.

- Experiment with discovery optimization

  - For pages repeatedly crawled by reputable AI ecosystems, run A/B tests: improve structured metadata, implement clear canonical signals, and test whether that upstream attention leads to increased citations or higher-quality referrals.

- Operationalize monitoring

  - Integrate Bot Activity alerts into your incident playbooks: sudden spikes in bot operator activity can indicate scraping campaigns, misconfigured APIs, or even abuse.

- Legal & commercial options

  - Track which operators frequently harvest your content. For persistent, extractive access, engage your legal or commercial teams to explore licensing or commercial agreements; measurement data is now an evidence base for negotiation.

- Privacy review

  - Conduct a data protection impact assessment for forwarding logs to Clarity and ensure compliance with any cross-border or retention requirements before enabling full forwarding.
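The cost-modeling step in the playbook above comes down to simple arithmetic: requests per day times bytes per log line, scaled to a month and multiplied by whatever your provider charges per gigabyte. The sketch below uses placeholder numbers throughout; the request volume, line size, and price are assumptions for illustration, not figures from Microsoft or any CDN.

```python
from typing import Tuple


def monthly_log_cost(
    requests_per_day: float,
    bytes_per_log_line: float,
    price_per_gb: float,
    days: int = 30,
) -> Tuple[float, float]:
    """Return (gigabytes per month, estimated cost) for forwarded request logs."""
    gigabytes = requests_per_day * bytes_per_log_line * days / 1e9
    return gigabytes, gigabytes * price_per_gb


# Placeholder inputs: replace them with your own traffic and your provider's pricing.
gb, cost = monthly_log_cost(
    requests_per_day=5_000_000,  # hypothetical traffic level
    bytes_per_log_line=800,      # hypothetical average size of one log record
    price_per_gb=0.05,           # hypothetical delivery or egress price per GB
)
print(f"about {gb:.0f} GB per month, about {cost:.2f} in provider charges")
```

With these placeholder inputs the estimate is about 120 GB per month. Real bills may also include storage or per-request delivery fees, so treat the result as a lower bound to refine against your provider's price sheet.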

Scenario analysis: three real-world outcomes

Scenario A: High-value conversion from AI referrals

A subscription-focused publisher exposes rich, structured long-form pieces; Clarity shows modest crawl volume from a named assistant but those pages later deliver high conversion lift. Strategy: prioritize those paths for canonical signals, structured metadata, and tests to maximize attribution capture. ROI: high per-visit.

Scenario B: Costly extraction with no downstream value

A resource-heavy site discovers aggressive embedding generation from an operator that never yields referrals. Strategy: implement API gating or stricter edge rate limiting and consider commercial terms for high-volume access. ROI: negative for status quo; consider license or block.

Scenario C: Ambiguous operator behavior

A crawler rotates addresses and spoofs user agents; classification is unreliable. Strategy: combine Clarity signals with third-party bot detection, IP intelligence, and manual validation before enforcement. ROI: avoid customer impact from false positives.

These scenarios show why visibility alone is insufficient; it must be paired with operational policy and commercial decision-making.
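For the manual validation called for in Scenario C, one widely used check is forward-confirmed reverse DNS: resolve the requesting IP to a hostname, confirm the hostname belongs to the operator's domain, then resolve the hostname back and confirm it maps to the same IP. The sketch below is a generic version of that check, not a Clarity feature. The resolver functions are injectable so the logic can be exercised without network access, and the domain suffix shown is hypothetical; real suffixes should come from each operator's own verification guidance.

```python
import socket
from typing import Callable, List, Tuple


def _reverse_lookup(ip: str) -> str:
    """PTR lookup: IP address to hostname."""
    return socket.gethostbyaddr(ip)[0]


def _forward_lookup(host: str) -> List[str]:
    """A-record lookup: hostname to IPv4 addresses."""
    return socket.gethostbyname_ex(host)[2]


def forward_confirmed_rdns(
    ip: str,
    allowed_suffixes: Tuple[str, ...],
    reverse: Callable[[str], str] = _reverse_lookup,
    forward: Callable[[str], List[str]] = _forward_lookup,
) -> bool:
    """True only if ip -> hostname -> ip round-trips within an allowed domain."""
    try:
        host = reverse(ip).rstrip(".").lower()
        # Suffixes carry a leading dot so "evil-example-bot.test" cannot pass.
        if not host.endswith(allowed_suffixes):
            return False
        return ip in forward(host)
    except OSError:  # covers socket.herror and socket.gaierror
        return False


# Offline demonstration with stub resolvers and documentation-range addresses.
def stub_reverse(ip: str) -> str:
    return "crawl-1.example-bot.test"


def stub_forward(host: str) -> List[str]:
    return ["192.0.2.10"]


suffixes = (".example-bot.test",)  # hypothetical operator domain
print(forward_confirmed_rdns("192.0.2.10", suffixes, stub_reverse, stub_forward))
print(forward_confirmed_rdns("192.0.2.99", suffixes, stub_reverse, stub_forward))
```

A request that claims a well-known crawler's user agent but fails this round trip is a strong candidate for the "spoofed" bucket. A pass shows only that the IP belongs to the operator, and says nothing about how the fetched content is used downstream.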

The broader implications for the web and publishers

Clarity’s Bot Activity is more than a new analytics card; it signals a broader shift in how the web will be instrumented and commercialized. If AI agents are to become a primary discovery surface, publishers will need upstream telemetry to negotiate visibility, revenue-sharing, or protective gating. Measurement enables economic conversations: you can’t bargain for access you can’t quantify.

At the same time, measurement fuels a market for middle-layer services: bot paywalls, paid retrieval agreements, and licensing brokers have emerged in the wake of rising retrieval traffic. TollBit-style monetization experiments and publisher negotiations with AI firms are a direct consequence of better visibility into who is reading what and how often.

From a security and operations standpoint, the explosion of AI-driven automated reads also heightens the need for robust bot management, DDoS protections, and sophisticated edge rules. Akamai’s and other vendors’ findings on stealthy AI bot traffic mean that the classic binary of “good” vs “bad” bots is increasingly inadequate; intent, attribution and long-term value must be evaluated in combination.

Final verdict: a powerful tool with pragmatic limits

Microsoft’s Bot Activity is a timely and pragmatic response to a measurable industry need: publishers require upstream, server-side intelligence to make informed choices in an AI-first discovery environment. The feature’s strengths are clear: server-side fidelity, operator-level classification, and path-level insights that turn formerly invisible activities into actionable telemetry.

However, site owners must approach it with eyes open: the dashboard is observational (not enforcement), classification is imperfect, enabling it may incur provider costs, and policy responses (blocking, licensing, rate-limiting) carry tradeoffs for discoverability and commercial opportunities. The right play is a measured one: instrument, validate, and then choose defensive or commercial actions based on empirical evidence and clear business priorities.

For publishers and web ops teams, the immediate next steps are straightforward: pilot Clarity’s Bot Activity on representative properties, validate the telemetry against independent CDN logs, budget for any incremental logging costs, and design short, controlled experiments that tie upstream bot signals to downstream outcomes. In a world where AI agents increasingly read before humans click, earliest-observed signals may become the most valuable KPI publishers have for capturing the long-term value of their content.

Source: MediaPost Microsoft Exposes AI Bot Site Traffic