Charles Lamanna, Microsoft’s EVP for Copilot, Agents and Platform, appeared on CNBC’s Fortt Knox on May 15, 2026, to argue that Microsoft’s AI future is not a single giant model but an orchestrated system that routes work across multiple models, agents, data sources, and human workflows. The pitch is deceptively simple: stop worshiping model size and start measuring useful work. For Windows users and enterprise IT, that is more than a product-management slogan. It is Microsoft’s attempt to make Copilot the operating layer for AI labor.

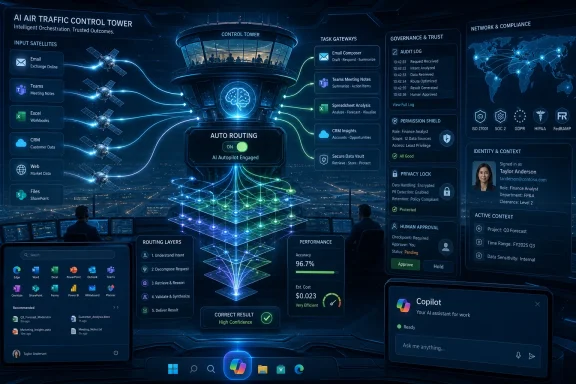

The most revealing claim in the interview was not that Microsoft can access better models. Everyone with a budget can do some version of that now. The interesting claim was that layering models can produce better research accuracy at lower cost than simply reaching for a larger model every time. That is a direct challenge to the frontier-model arms race, and it tells us where Microsoft thinks the next enterprise AI margin will be found: not in owning every brain, but in deciding which brain gets called, when, and under whose rules.

Microsoft Is Reframing Copilot as the Dispatcher, Not the Genius

For the first phase of generative AI, Copilot was marketed as an assistant. It drafted emails, summarized meetings, rewrote paragraphs, and sat politely beside the user in Word, Teams, Excel, Outlook, and Windows. The metaphor was useful because it reduced fear: the human was still flying the plane, and the AI was merely helping.

Lamanna’s CNBC appearance points to a more consequential phase. Microsoft now wants Copilot to become a dispatcher for agents, models, tools, and enterprise context. In that world, the important interface is not just a chat box but a routing system that decides whether a request needs speed, reasoning, retrieval, compliance review, workflow execution, or escalation to a person.

That is why “auto” routing matters. It sounds like a convenience feature, but in enterprise software it is a power move. If users no longer pick the model, the platform that routes the work becomes the real product.

Microsoft has been laying the groundwork for this shift across Copilot Studio, Microsoft 365 Copilot, Azure AI Foundry, and its agent governance efforts. The company’s documentation and product messaging increasingly describe Copilot as a system that can choose models, chain tools, invoke agents, and act across business applications. Lamanna’s interview simply said the quiet part in a more CNBC-friendly way: the model picker is becoming middleware.

For WindowsForum readers, this should feel familiar. Windows itself won not because it was always the most elegant component in the stack, but because it became the place where hardware, applications, drivers, user identity, management policy, and developer assumptions converged. Microsoft is trying to make Copilot play a similar role for AI.

The Bigger Model Is No Longer the Default Answer

The AI industry has spent the past few years turning model benchmarks into a proxy for destiny. More parameters, more context, more tokens, more synthetic test victories: each announcement has implied that the next model will flatten the last one and simplify the buying decision. Lamanna’s argument cuts against that mythology.

The claim that layered model orchestration can produce a 15-point research-accuracy improvement at lower cost than one larger model is exactly the kind of assertion enterprise buyers want to believe. It says they can get better results without letting inference bills run wild. It also says reliability might come from systems design rather than brute-force scale.

That matters because real work is rarely a single prompt. A research task may require retrieval, source ranking, contradiction checking, summarization, spreadsheet manipulation, and final drafting. A customer-service task may require classification, policy lookup, sentiment handling, identity validation, CRM updates, and an audit trail. A coding task may require repository context, test generation, dependency awareness, security review, and pull-request hygiene.

A single frontier model can attempt all of that, but it may not be the cheapest or most dependable way to do it. A routed system can use a fast model to classify the request, a retrieval layer to gather data, a reasoning model to evaluate competing claims, a smaller model to format output, and an approval workflow to stop risky actions. That is not glamorous. It is enterprise software.
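A minimal Python sketch of the routed system described above follows. Every stage is a hypothetical stand-in built from plain functions, not a Copilot, Copilot Studio, or Azure AI Foundry API: a keyword rule plays the fast classifier, word overlap plays the retrieval layer, a “reason” stage runs only for research-style requests, and a hypothetical `RISKY_ACTIONS` set drives the approval gate. It illustrates the shape of the idea, not how Microsoft’s router actually decides.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical set of actions that must never run without a person signing off.
RISKY_ACTIONS = {"send_external_email", "update_crm", "merge_pull_request"}


@dataclass
class Request:
    text: str
    action: Optional[str] = None  # an action the user wants executed, if any


@dataclass
class Result:
    output: str = ""
    stages: list[str] = field(default_factory=list)
    needs_approval: bool = False


def classify(req: Request) -> str:
    """Fast triage: a keyword rule standing in for a small, cheap model."""
    return "research" if "compare" in req.text.lower() else "simple"


def retrieve(req: Request, corpus: dict[str, str]) -> list[str]:
    """Retrieval layer: keep only documents sharing a word with the request."""
    words = set(req.text.lower().split())
    return [doc_id for doc_id, body in corpus.items()
            if words & set(body.lower().split())]


def route(req: Request, corpus: dict[str, str]) -> Result:
    result = Result()
    kind = classify(req)
    result.stages.append(f"classify:{kind}")

    docs = retrieve(req, corpus)
    result.stages.append(f"retrieve:{len(docs)}")

    if kind == "research":
        # Only research-style requests pay for the (hypothetical) reasoning model.
        result.stages.append("reason")

    # A smaller model would format the answer; here it is a string template.
    result.stages.append("format")
    result.output = f"{kind} answer grounded in {sorted(docs)}"

    # Approval workflow: risky actions are held for a human, never executed here.
    if req.action in RISKY_ACTIONS:
        result.needs_approval = True
        result.stages.append("hold_for_approval")
    return result
```

The design point is that the expensive stage is conditional and the risky action is never executed by the pipeline itself; `route` only returns `needs_approval=True` for a person or workflow to act on.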

This is where Microsoft has an advantage that pure model labs do not automatically have. It owns the workplace surface area: Windows, Office, Teams, Outlook, SharePoint, Entra, Intune, Defender, Purview, Power Platform, Dynamics, GitHub, and Azure. The more AI becomes orchestration over organizational systems, the more Microsoft can argue that the model is only one component in a governed workflow.

“Token Maxing” Is the New Benchmark Theater

Lamanna’s dismissal of “token maxing” as vanity gave the interview one of its more useful phrases. It captures a real pathology in AI adoption: teams bragging about massive context windows, huge prompt budgets, or exotic model access without proving that the resulting work is better, cheaper, safer, or faster.

The corporate version is easy to imagine. A team buys expensive AI capacity, feeds entire document stores into prompts, asks giant models to reason across everything, and calls the resulting bill “innovation.” The demo works because the system can produce impressive-looking output. The operating model fails because nobody has defined accuracy, latency, cost per task, data exposure, or accountability.
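What defining accuracy, latency, and cost per task can look like in practice: a small scorecard, sketched in Python under the assumption that a team records each evaluated task with a pass/fail check, its latency, and its attributed inference cost. The `TaskRun` fields are illustrative, not a Microsoft reporting format.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskRun:
    """One completed task from an evaluation set (fields are illustrative)."""
    correct: bool      # did the output pass the team's own acceptance check?
    latency_s: float   # wall-clock seconds from request to result
    cost_usd: float    # inference spend attributed to this task


def scorecard(runs: list[TaskRun]) -> dict[str, float]:
    """Summarize a configuration by outcomes, not by tokens consumed."""
    if not runs:
        raise ValueError("need at least one run to score")
    total_cost = sum(r.cost_usd for r in runs)
    correct = sum(r.correct for r in runs)  # True counts as 1
    return {
        "accuracy": correct / len(runs),
        "mean_latency_s": mean(r.latency_s for r in runs),
        "cost_per_task_usd": total_cost / len(runs),
        # Cost per *correct* answer penalizes cheap-but-wrong setups.
        "cost_per_correct_usd": total_cost / correct if correct else float("inf"),
    }
```

Running `scorecard` on the same task set for two configurations, say one large model versus a routed pipeline, turns the token-maxing debate into a question with numbers attached. Cost per correct answer is included because a configuration that is cheap but often wrong can look efficient on cost per task alone.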

Token abundance can be valuable, especially in coding, legal review, scientific research, and long-document analysis. But token abundance is not the same thing as intelligence. It can also hide weak retrieval, poor chunking, sloppy permissions, and the absence of a clear task architecture.

Microsoft’s push toward auto routing is partly a cost-control argument against this behavior. If a model does not need the whole corpus, do not send it the whole corpus. If a cheap model can classify a request, do not pay a premium model to do clerical triage. If a workflow needs a deterministic API call, do not hallucinate your way through it in natural language.
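The three rules above translate almost directly into dispatch logic. This is a sketch under stated assumptions: the intent sets, the tier labels `api_call`, `small_model`, and `large_model`, and the word-overlap scoring in `top_k_chunks` are all hypothetical placeholders for a real intent classifier, real model endpoints, and a real retriever.

```python
# Hypothetical intent sets; a real system would get the intent from a classifier.
DETERMINISTIC_INTENTS = {"reset_password", "check_order_status"}
TRIAGE_INTENTS = {"classify", "tag", "route_ticket"}


def overlap(query: str, chunk: str) -> int:
    """Naive relevance score: shared lowercase words (stand-in for a retriever)."""
    return len(set(query.lower().split()) & set(chunk.lower().split()))


def top_k_chunks(query: str, chunks: list[str], k: int = 3) -> list[str]:
    """Send the few most relevant chunks instead of the whole corpus."""
    ranked = sorted(chunks, key=lambda c: overlap(query, c), reverse=True)
    return [c for c in ranked[:k] if overlap(query, c) > 0]


def dispatch(intent: str, query: str, chunks: list[str]) -> tuple[str, list[str]]:
    """Return (handler, context) following the three cost rules in the text."""
    if intent in DETERMINISTIC_INTENTS:
        # A deterministic API call: no model, no prompt, nothing to hallucinate.
        return "api_call", []
    if intent in TRIAGE_INTENTS:
        # Clerical triage goes to a cheap tier with only the request as context.
        return "small_model", [query]
    # Everything else earns the premium tier, but with trimmed context.
    return "large_model", top_k_chunks(query, chunks)
```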

There is a cynical reading, too. Microsoft has every incentive to abstract away the model bill while keeping customers inside its platform. “Trust the router” can become “stop asking what model did the work.” That may be fine for ordinary productivity tasks, but regulated organizations will not accept model invisibility as a permanent state. They will want logs, policy controls, provenance, and the ability to reproduce decisions when something goes wrong.
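Provenance does not have to mean storing every prompt. One possible approach, sketched here in Python with the standard library only, records the routed model, the governing policy, and a SHA-256 digest of the input for each decision, then serializes the records as JSON Lines. The field names and the model and policy labels are hypothetical; this is not a Microsoft audit-log schema.

```python
import hashlib
import json
from datetime import datetime, timezone


def log_routing_decision(prompt: str, model: str, policy: str, reason: str) -> dict:
    """Build an audit record for one routing decision.

    The prompt is stored only as a SHA-256 digest, so the log can show
    which input was routed without retaining its contents.
    """
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "model": model,
        "policy": policy,
        "reason": reason,
    }


def to_jsonl(records: list[dict]) -> str:
    """Serialize records one per line, a common append-only log format."""
    return "\n".join(json.dumps(r, sort_keys=True) for r in records)
```

Hashing lets an auditor confirm that a specific input was routed a specific way without the log becoming another store of sensitive content. Reproducing an output still requires, at minimum, knowing the exact model version and settings used, which is precisely the information an opaque “auto” setting tends to hide.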

The Agent Boom Turns Governance From Paperwork Into Product

The uncomfortable part of Microsoft’s agent story is that every productivity gain creates a new management problem. A chatbot that answers questions is one thing. An agent that can act across email, calendars, CRM records, support tickets, repositories, spreadsheets, and approval systems is another.

Once agents take actions, they become identities. They need permissions, owners, scopes, logs, revocation, monitoring, and lifecycle rules. They also need boundaries around what happens when the human associated with an agent changes roles or leaves the company.
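Treating an agent as an identity means giving it the same attributes any other principal has. The sketch below is a hypothetical Python model, not the Entra or Copilot Studio object schema: an `AgentIdentity` with an owner, an explicit scope set checked on every call (least privilege), an audit trail, and operations for ownership transfer and revocation.

```python
from dataclasses import dataclass, field


@dataclass
class AgentIdentity:
    """An agent treated like any other principal: owned, scoped, revocable.
    Hypothetical model for illustration only."""
    agent_id: str
    owner: str
    scopes: set[str] = field(default_factory=set)
    revoked: bool = False
    audit: list[str] = field(default_factory=list)

    def authorize(self, scope: str) -> bool:
        """Least privilege: allow only explicitly granted scopes, and nothing once revoked."""
        allowed = not self.revoked and scope in self.scopes
        self.audit.append(f"{scope}:{'allow' if allowed else 'deny'}")
        return allowed

    def transfer(self, new_owner: str) -> None:
        """Reassign ownership when the original owner changes roles."""
        self.audit.append(f"owner:{self.owner}->{new_owner}")
        self.owner = new_owner

    def revoke(self, reason: str) -> None:
        """Cut off all access; later authorize() calls are denied and logged."""
        self.revoked = True
        self.audit.append(f"revoked:{reason}")
```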

That is why Lamanna’s discussion of “digital doppelgangers” after employees leave is more than a sci-fi aside. Enterprises have long struggled with orphaned accounts, stale SharePoint permissions, departed employees’ mailboxes, and forgotten service principals. AI agents add a more intimate version of the same problem: systems that may encode a worker’s writing style, preferences, workflows, institutional memory, and delegated authority.

The question is not merely whether a company may keep an AI representation of a former employee. In many cases, companies already retain work product, emails, tickets, commits, documents, and process history. The harder question is whether an AI proxy continues to simulate that person in ways that blur authorship, consent, responsibility, and trust.

Sysadmins will recognize the operational analogy. A service account that survives its owner is risky; an agent that behaves like its owner is riskier. It may know too much, act too broadly, and appear more legitimate than it is.
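The offboarding version of that problem is a sweep: when a person leaves, find every enabled agent they owned and switch it off. A minimal sketch follows, assuming an inventory of agents and a set of currently active users are available from somewhere (a directory export, for instance). The `Agent` fields, including the hypothetical `acts_as_owner` flag for agents that send or sign as the person, are illustrative.

```python
from dataclasses import dataclass


@dataclass
class Agent:
    agent_id: str
    owner: str
    acts_as_owner: bool  # does it send or sign as the person rather than as itself?
    enabled: bool = True


def offboarding_sweep(agents: list[Agent], active_users: set[str]) -> list[str]:
    """Disable enabled agents whose owner is no longer an active user.

    Agents that impersonate their owner are handled first because they are
    the riskier case. Returns the disabled IDs in that priority order.
    """
    orphans = [a for a in agents if a.enabled and a.owner not in active_users]
    orphans.sort(key=lambda a: not a.acts_as_owner)  # stable: impersonators first
    for agent in orphans:
        agent.enabled = False
    return [a.agent_id for a in orphans]
```

Disabling rather than deleting is deliberate: the agent’s configuration and history may still be needed for audit, or for handing the workflow to a new owner.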

This is where Microsoft’s identity and compliance stack becomes central to the pitch. The company wants to sell not just Copilot, but the control plane around Copilot: who can create agents, what they can access, what they can do, how they are audited, and how they are retired. That is less exciting than a viral demo, but it is the difference between a pilot project and a system an enterprise can actually deploy.

Human Warmth Becomes the Moat Only If AI Becomes Ubiquitous

One of the more striking ideas in the interview was the suggestion that human warmth becomes a moat when everyone has access to similar models. This sounds soft, but it has teeth. If every company can generate competent prose, automate routine support, draft proposals, summarize meetings, and analyze basic data, then tone, judgment, taste, trust, and relationship quality become more valuable.

The first wave of AI competition rewarded fluency. The next wave may punish sameness. Customers will not be impressed forever by a well-written AI-generated email. Employees will not be loyal to a workplace because its chatbot can summarize a meeting. Partners will not choose a vendor because its slide decks are grammatically pristine.

That does not mean AI becomes unimportant. It means AI fades into the substrate of work, much as spellcheck, search, and cloud sync did. The differentiator shifts from having the tool to designing the work around it.

For Microsoft, this is both opportunity and risk. If Copilot becomes the shared productivity layer for millions of workers, Microsoft benefits from standardization. But if everyone’s workflows begin to feel Copilot-shaped, companies will look for differentiation elsewhere: domain expertise, culture, speed of decision-making, user experience, and trust.

There is also a Windows angle. The PC has always been personal not because the silicon was unique, but because users organized their work, files, habits, shortcuts, applications, and preferences around it. AI threatens to flatten some of that individuality by generating the same polished corporate voice everywhere. The products that win long-term will need to preserve personal agency rather than merely automate the average.

Auto Routing Gives Microsoft Leverage Over the Model Market

Microsoft’s relationship with OpenAI has defined the commercial AI era, but its strategy is no longer reducible to one partner. The company has been adding support for multiple model providers and has emphasized model choice in Copilot Studio and Microsoft 365 Copilot. Anthropic’s presence in Microsoft’s stack is not an accident; it is leverage.

A multi-model Copilot lets Microsoft tell customers that they are not locked into one model family. It also lets Microsoft tell model providers that distribution depends on fitting into Microsoft’s orchestration layer. That changes the balance of power.

If customers choose a model directly, the model provider owns the relationship. If customers choose Copilot and Copilot chooses the model, Microsoft owns the relationship. The model provider becomes a component supplier inside a larger enterprise platform.

This is the cloud playbook all over again. Azure did not need to own every workload to become strategic; it needed to become the place enterprises governed, connected, billed, monitored, and secured those workloads. Copilot does not need to be the best model at every task if it becomes the place where AI tasks are routed, governed, and embedded into work.

There is a catch. Abstraction is useful until it becomes opacity. Developers and administrators will need to know enough about routing behavior to debug failures, manage compliance, and control cost. If “auto” becomes a black box, it will invite the same distrust that black-box endpoint security, opaque Windows telemetry, and surprise cloud billing have generated in the past.

Windows Users Will See the Platform Shift Before They Understand It

Most consumer-facing discussion of Copilot still centers on visible features: a button in Windows, a sidebar, a chat experience, a summary in Edge, a rewrite option in Word. But Microsoft’s real bet is not the visible assistant. It is the invisible decision layer behind the assistant.

That layer will decide whether a task stays local, goes to the cloud, invokes a workplace graph, calls a business connector, uses a reasoning model, or hands off to a specialized agent. Over time, the user may not know which model or agent did the work. They may only know that the task completed, or that it failed.

This is where Windows becomes a strategic surface again. Microsoft has been trying to make the PC relevant to AI not only through cloud Copilot but through local models, NPUs, Copilot+ PCs, Recall-like memory features, and system-level integration. The eventual user experience may be hybrid: local inference for privacy-sensitive or low-latency tasks, cloud models for heavy reasoning, enterprise connectors for work context, and governance policies for everything in between.
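The hybrid placement decision can be sketched as a short rule chain. Everything here is an assumption for illustration: the `Task` fields, the `CLOUD_LATENCY_MS` budget, and the target labels are hypothetical, and a real Windows or Copilot+ PC implementation would weigh far more signals. The one design choice worth noting is that a task that is both sensitive and reasoning-heavy is not silently sent anywhere; it is handed to policy.

```python
from dataclasses import dataclass


@dataclass
class Task:
    sensitive: bool             # personal or regulated data involved
    max_latency_ms: int         # how long the user is willing to wait
    needs_deep_reasoning: bool  # multi-step analysis beyond a small local model
    needs_work_context: bool    # requires enterprise documents or connectors


# Hypothetical round-trip budget for a cloud call; tune per environment.
CLOUD_LATENCY_MS = 800


def place(task: Task) -> str:
    """Choose an execution target for one task (labels are illustrative)."""
    if task.sensitive and task.needs_deep_reasoning:
        # Conflicting requirements: let governance policy decide, not the router.
        return "policy_review"
    if task.sensitive or task.max_latency_ms < CLOUD_LATENCY_MS:
        return "local"             # keep data on the device, or answer fast
    if task.needs_work_context:
        return "cloud+connectors"  # heavy reasoning plus organizational data
    return "cloud"
```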

That architecture is powerful, but it also complicates trust. Users will ask where their data went. Admins will ask which policy controlled the action. Developers will ask which API failed. Security teams will ask whether the agent respected least privilege. Privacy officers will ask whether the system retained more context than necessary.

Microsoft’s answer appears to be orchestration plus governance. The question is whether the company can make that answer legible enough for real deployment. Windows veterans know the pattern: a platform can be immensely capable and still generate backlash if controls are confusing, defaults are aggressive, or telemetry feels one-sided.

The Enterprise Buyer Wants Boring AI, and That Is Microsoft’s Opening

The consumer AI market rewards surprise. The enterprise AI market rewards repeatability. That difference explains why Lamanna’s message is less about magical intelligence than operational design.

A CIO does not want the most charming chatbot. A CIO wants to know whether the system can reduce support time without leaking customer data. A CFO wants to know whether inference costs scale predictably. A CISO wants to know whether agents can be inventoried and constrained. A general counsel wants to know whether decisions can be audited. A line-of-business leader wants to know whether a workflow actually gets faster.

Microsoft’s advantage is that it can sell into those anxieties. Copilot is not just an AI assistant; it is a SKU, a governance story, an identity story, a compliance story, and a partner ecosystem. That is why even imperfect Copilot deployments matter. They teach Microsoft where enterprise AI breaks.

The danger is that enterprises may confuse deployment with transformation. Installing Copilot everywhere does not automatically create better workflows. A bad process with an AI summary is still a bad process. A chaotic SharePoint estate with generative search is still a chaotic SharePoint estate. A poorly permissioned organization with agents is still a poorly permissioned organization, only now with a larger and faster-moving blast radius.

The successful adopters will do the unglamorous work first. They will clean up data, rationalize permissions, define approved actions, measure task outcomes, and decide where humans must remain in the loop. In that world, Microsoft’s orchestration layer becomes valuable not because it replaces management, but because it gives management something to control.

The New AI Stack Looks Less Like Chat and More Like Air Traffic Control

The mental model for Copilot is shifting. Chat is the cockpit window, but orchestration is air traffic control. The system has to know which aircraft are in the air, where they are allowed to go, what route is safest, when to reroute, and when a human controller needs to intervene.

That metaphor is useful because it clarifies the stakes. A single model can answer a question. An orchestrated agent system can perform a sequence of actions that affect real systems. The former can embarrass you. The latter can create operational, financial, legal, or security consequences.

Lamanna’s framing implicitly acknowledges this. Multi-model routing is not only about quality. It is about matching the level of autonomy to the risk of the task. Some work should be generated. Some should be checked. Some should be executed. Some should be blocked.
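Matching autonomy to risk can be expressed as a small policy function that returns one of the four outcomes named above. The allowlist, the reversibility flag, and the `AUTO_LIMIT_USD` threshold are hypothetical; in practice they would come from an organization’s own catalog of approved actions.

```python
from enum import Enum
from typing import Optional


class Autonomy(Enum):
    GENERATE = "generate"  # produce content only; nothing changes in real systems
    CHECK = "check"        # hold for human review before anything happens
    EXECUTE = "execute"    # run without a human in the loop
    BLOCK = "block"        # refuse outright


# Hypothetical allowlist and threshold; a real deployment would load these from policy.
APPROVED_ACTIONS = {"create_calendar_hold", "update_ticket_status", "issue_refund"}
AUTO_LIMIT_USD = 100.0


def autonomy_for(action: Optional[str], reversible: bool = True,
                 amount_usd: float = 0.0) -> Autonomy:
    """Map a requested action to the least autonomy its risk allows."""
    if action is None:
        return Autonomy.GENERATE      # pure drafting or summarizing
    if action not in APPROVED_ACTIONS:
        return Autonomy.BLOCK         # unknown actions are never improvised
    if reversible and amount_usd <= AUTO_LIMIT_USD:
        return Autonomy.EXECUTE       # low-stakes and undoable
    return Autonomy.CHECK             # approved, but a person signs off first
```

Defaulting unknown actions to `BLOCK` rather than `CHECK` keeps the allowlist meaningful: reviewers only see actions the organization has already decided are permissible in principle.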

This is also why “digital doppelgangers” deserve more serious treatment than the usual AI ethics segment. If AI systems inherit human work patterns and organizational permissions, they become part of the company’s control environment. HR, legal, security, and IT will all have claims on how those systems are created and retired.

The boring phrase for this is lifecycle management. The more accurate phrase is institutional memory with an API. Companies will want the productivity benefit of retaining know-how without creating synthetic ghosts that act without consent or accountability.

The Lamanna Interview Was Really About Who Owns the Workflow

The fight over AI is often described as a fight over models. That is true at the infrastructure layer, where training runs, chips, power, and data matter enormously. But inside the enterprise, the more immediate fight is over workflows.

Who sees the user’s intent? Who has access to the documents? Who understands the permissions? Who controls the connectors? Who logs the action? Who bills the inference? Who decides which model is appropriate? Who can revoke the agent?

Microsoft wants the answer to most of those questions to be Microsoft. Not necessarily because every model comes from Redmond, but because the work flows through Microsoft-controlled surfaces. That is the strategic meaning of Copilot as platform.

The company’s critics will argue that this is another bundling play. They will not be entirely wrong. Copilot becomes more compelling when it is tied to Microsoft 365, Teams, Azure, Entra, Defender, Purview, and Power Platform. The deeper the integration, the harder it becomes to separate the AI assistant from the productivity estate.

But bundling alone does not guarantee success. Enterprises will tolerate lock-in only if the integrated system reduces risk or improves outcomes. If Copilot’s auto-routing is accurate, auditable, and cost-effective, Microsoft’s platform argument strengthens. If it is opaque, inconsistent, or expensive, customers will push for more direct model control and vendor diversity.

The Copilot Bet Comes Down to Routing, Memory, and Trust

The concrete lesson from Lamanna’s CNBC appearance is that Microsoft is trying to move the AI conversation away from spectacle and toward systems. That is good news for serious buyers, but it also raises the bar for proof.

- Microsoft is positioning Copilot as an orchestration layer that can route tasks across multiple models, agents, tools, and enterprise data sources.

- The company’s claim that layered models can improve research accuracy at lower cost challenges the assumption that the biggest model should always be the default.

- “Token maxing” is becoming a warning sign for inefficient AI adoption when teams use huge context and compute budgets without measuring task outcomes.

- Agent governance is moving from an administrative concern to a core product requirement because agents can act across business systems.

- The “digital doppelganger” problem will force companies to define what happens to AI representations, delegated workflows, and institutional memory when employees leave.

- Human judgment, warmth, and trust become more valuable as model access becomes commoditized across competing organizations.

Source: CNBC