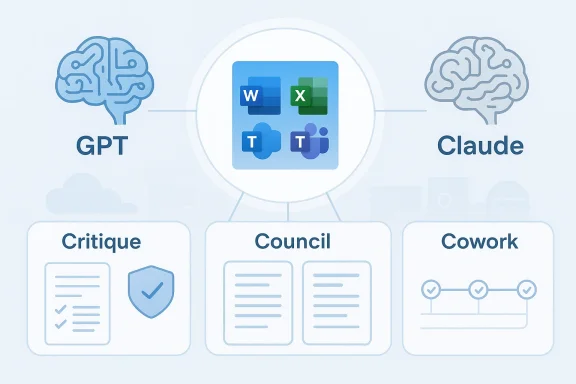

Microsoft’s latest Copilot push is not just another feature drop. It is a strategic signal that the company is moving decisively toward a multi-model AI future, with Anthropic’s Claude and OpenAI’s GPT now working alongside each other inside Microsoft 365 workflows. The practical goal is straightforward: improve reliability, reduce hallucinations, and make Copilot more useful for enterprise-grade research and task execution. But the broader message is even more important — Microsoft is no longer presenting one model as the answer to everything, and that shift could reshape the competitive dynamics of business AI.

Source: LatestLY Microsoft Unveils Multi-Model AI Upgrades as Copilot Integrates Claude and GPT for Real-Time Verification | LatestLY


Background


For much of the generative AI boom, Microsoft’s Copilot story was tightly linked to OpenAI. That relationship helped Microsoft move quickly, anchor its AI stack in Azure, and launch Copilot as the front door for AI inside Word, Excel, PowerPoint, Outlook, and Teams. The early framing was simple: one major foundation model partnership, one ecosystem, one vision for productivity.

That approach was always going to evolve. As enterprise adoption matured, customers began demanding more than clever demos and fluent prose. They wanted consistency, traceability, and control, especially when AI systems were used for research, analysis, document drafting, and agentic automation. A single model can be impressive, but it can also be brittle. If the model makes a confident mistake, the user pays the price.

Microsoft’s recent Copilot changes show that the company has internalized that lesson. The company now explicitly supports Claude in Researcher, the assistant designed for complex, source-cited work, and it has opened the door to Anthropic models across several Microsoft offerings. Microsoft says Anthropic models are being enabled by default for most commercial customers, while EU, UK, and sovereign cloud environments remain excluded or opt-in by policy. That is a significant operational expansion, not a symbolic pilot. (learn.microsoft.com)

The broader industry context matters too. AI vendors are under pressure to prove that their systems can do more than generate text. The next battleground is not only model quality, but orchestration: which model to use, when to use it, and how to verify the answer before a user sees it. Microsoft’s latest move is a direct bet that the winners in enterprise AI will be the companies that can blend multiple models, multiple reasoning styles, and multiple layers of verification into one governed workflow. (microsoft.com)

What Microsoft Actually Changed

The headline improvement is Microsoft’s shift from a single-model Copilot experience toward a multi-model workflow. In the newly emphasized Researcher experience, users can work with Claude, GPT, or an auto-selected model depending on the task. Microsoft’s support documentation now says Researcher supports Anthropic models and is being rolled out gradually across eligible organizations. (support.microsoft.com)

The most interesting part is not just access to another model. It is the design philosophy behind the integration. Microsoft’s March 9 Copilot blog described Copilot Cowork as a capability built with Anthropic technology for long-running, multi-step work, and it framed this as part of “Wave 3” of Microsoft 365 Copilot. That is a meaningful shift away from one-shot prompts and toward delegated execution that unfolds over time. (microsoft.com)

Why the integration matters

This is where the product becomes strategically different. Instead of forcing users to pick a single AI brand and hope for the best, Microsoft is building an orchestration layer that chooses or combines models based on task fit. That is much closer to how enterprises think about software procurement than the consumer AI market’s winner-take-all mentality.

It also reduces Microsoft’s dependence on any one vendor’s strengths and weaknesses. If one model is better at drafting, another at critique, and a third at structured reasoning, Microsoft can potentially route work to the best tool rather than pretending every model is equally suited to every job.

Key implications include:

- More resilience if one model underperforms on a task.

- Better enterprise trust through layered checking.

- Greater product differentiation versus single-model assistants.

- Less vendor lock-in for Microsoft itself.

- A more realistic AI workflow for knowledge workers.

Critique and Verification as the New Value Proposition

The most notable feature in this update cycle is Critique, a verification-oriented workflow that reportedly uses one model to generate an answer and another to review it for quality and accuracy before presenting the result. Microsoft’s current documentation already emphasizes that Researcher is built to deliver a structured, source-cited report and that it is meant to support deeper reasoning than standard Copilot chat. The addition of model-based critique fits that mission neatly. (support.microsoft.com)

This matters because hallucinations are not a niche annoyance; they are the core product problem that keeps AI from being trusted in serious work. A user can tolerate a typo or an awkward sentence. They cannot tolerate an AI that invents a citation, misstates a policy, or fabricates a business fact in a report that goes to leadership or a customer.

How verification changes the user experience

The practical effect of verification is less about making AI perfect and more about making it safer to rely on. If a second model can flag weak reasoning, missing evidence, or unsupported claims, then the user spends less time manually auditing every paragraph. That is the kind of small-but-real productivity gain enterprises actually value.

Still, there is a caveat. Verification is only as good as the verifier. If both models share blind spots, or if the critique layer becomes overly conservative, users may get answers that are safer but less useful. That tension is why multi-model systems are promising but not magical.

Why this is different from simple model switching

Many vendors let users choose between models. Microsoft’s move is more ambitious because it treats the models as part of a workflow rather than a menu. That is a subtle but important distinction. One approach says, “pick the model you like.” The other says, “let the platform assemble a stronger answer on your behalf.”

That shift could become a defining feature of enterprise AI over the next two years:

- Generate a draft with one model.

- Review or challenge it with another.

- Surface citations and provenance.

- Let the user intervene before final output.

- Keep the process inside a governed environment.
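The steps in the list above can be sketched as a small pipeline. This is an illustrative sketch only, not Microsoft's implementation: `generate_then_critique`, `Review`, `Outcome`, and the two toy models are hypothetical names, and the stand-in functions simply mimic a drafter and a reviewer so the flow can run without any real model API.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Review:
    """Verdict from the reviewing model (hypothetical structure)."""
    approved: bool
    issues: List[str] = field(default_factory=list)


@dataclass
class Outcome:
    draft: str
    review: Review
    needs_user_review: bool  # True when the user should step in before final output


def generate_then_critique(
    prompt: str,
    drafter: Callable[[str], str],
    critic: Callable[[str, str], Review],
) -> Outcome:
    """Draft with one model, review with another, and flag unresolved issues."""
    draft = drafter(prompt)
    review = critic(prompt, draft)
    return Outcome(draft=draft, review=review, needs_user_review=not review.approved)


# Toy stand-ins for two different models (assumptions for illustration).
def toy_drafter(prompt: str) -> str:
    return f"Summary for '{prompt}' (no sources attached)"


def toy_critic(prompt: str, draft: str) -> Review:
    issues = []
    if "no sources" in draft:
        issues.append("draft cites no sources")
    return Review(approved=not issues, issues=issues)


outcome = generate_then_critique("Q3 policy changes", toy_drafter, toy_critic)
print(outcome.needs_user_review, outcome.review.issues)
```

Keeping the drafter and critic as plain callables means either role can be swapped for a different model without touching the workflow, which is the point of treating models as parts of a workflow rather than a menu.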

Council and the Case for Transparency

Alongside Critique, Microsoft is introducing Council, a side-by-side comparison view that lets users inspect responses from different AI models. This is a smart move because it addresses one of the biggest sources of mistrust in AI: opacity. When a single answer appears in a polished interface, users may assume it is more authoritative than it really is.

A comparison interface makes the model’s variability visible. That visibility is valuable in research, compliance, legal review, strategy work, and any context where how an answer was formed matters almost as much as the answer itself.

Why side-by-side output matters

Council is not just a feature for enthusiasts. It is a practical learning tool for enterprises that are still figuring out where AI fits in their workflows. By comparing outputs, teams can identify which model is better at summarization, which one handles nuance, and which one is more cautious about uncertain claims.

That can help administrators and power users build internal playbooks. It also gives Microsoft a way to market Copilot as a platform for model governance rather than merely a text generator.

Important benefits include:

- Model transparency for users and admins.

- Better prompt tuning through comparison.

- Easier evaluation for enterprise pilots.

- More confidence in high-stakes research.

- A path toward governed model selection.
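A side-by-side comparison of this kind can be approximated in a few lines. The sketch below is an assumption-laden illustration, not a description of how Council works internally: `compare_side_by_side`, `responses_diverge`, and the two toy models are hypothetical, and the divergence check is a deliberately crude text comparison.

```python
from typing import Callable, Dict


def compare_side_by_side(
    prompt: str, models: Dict[str, Callable[[str], str]]
) -> Dict[str, str]:
    """Send the same prompt to every model and keep each answer under its name."""
    return {name: ask(prompt) for name, ask in models.items()}


def responses_diverge(responses: Dict[str, str]) -> bool:
    """Crude check: do the answers differ after normalizing case and whitespace?"""
    distinct = {" ".join(text.lower().split()) for text in responses.values()}
    return len(distinct) > 1


# Toy stand-ins for two models that disagree on a figure (illustrative only).
toy_models = {
    "model_a": lambda prompt: "Revenue rose 4% year over year.",
    "model_b": lambda prompt: "Revenue rose 6% year over year.",
}

responses = compare_side_by_side("How did revenue change?", toy_models)
for name, answer in responses.items():
    print(f"{name}: {answer}")
print("diverge:", responses_diverge(responses))
```

In an evaluation pilot, a divergence flag like this would mainly be a prompt for a human to look closer; it says nothing about which answer is correct.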

Copilot Cowork and Agentic AI

The rollout of Copilot Cowork may be the most strategically important part of the announcement. Microsoft says the tool is meant to handle long-running, multi-step tasks, allowing users to delegate meaningful work and monitor progress over time. In Microsoft’s own framing, this is where Copilot moves from assistance toward execution. (microsoft.com)

That aligns with the broader industry shift toward agentic AI. Instead of asking a system for a single answer, users ask it to complete a sequence of work steps across files, tools, and contexts. This is the promise that has fueled interest in autonomous agents, and it is why Microsoft is racing to bake those capabilities into the productivity stack people already use.

What makes Cowork enterprise-relevant

Microsoft is careful to present Cowork as an enterprise feature, not a consumer novelty. The company says work is observable, actions are transparent, and progress can be reviewed, guided, or stopped. That matters because enterprise customers will not accept black-box automation that can silently edit documents, send messages, or change data without accountability. (microsoft.com)

The product design reflects a balance between autonomy and control. That is a much more credible enterprise story than “fully autonomous AI,” which tends to collapse under real-world compliance and permission requirements.

Why this could reshape office software

If Cowork succeeds, it could change the unit of value in Microsoft 365. Today, the value is often measured in faster drafting or summary generation. Tomorrow, it could be measured in delegated workflows completed end-to-end. That is a far bigger opportunity, because it moves Copilot from a helper to a labor multiplier.

But it also raises the bar dramatically. A system that can work for minutes or hours must be reliable across interruptions, context shifts, and document state changes. That is much harder than generating a polished paragraph.

Microsoft’s Multi-Model Strategy

Microsoft’s embrace of Anthropic alongside OpenAI is not a rejection of OpenAI. It is diversification. The company is clearly signaling that it wants the best available models across scenarios, even if they come from different vendors. Microsoft’s documentation now says Anthropic models can be used in Researcher, Copilot Studio, Power Platform, Agent Mode in Excel, and Word, Excel, and PowerPoint agents. (learn.microsoft.com)

This is a pragmatic move. The AI market is moving too quickly for any one model partnership to remain sufficient across all use cases. Model quality changes, price/performance shifts, and enterprise needs become more specialized over time. Microsoft appears to be building a platform that can absorb those changes instead of being whipsawed by them.

Why diversification reduces risk

From Microsoft’s perspective, multi-model support lowers platform risk in several ways. It reduces dependence on one supplier’s roadmap. It also gives Microsoft more leverage when negotiating commercial terms, capacity, and cloud deployment choices.

For customers, the appeal is equally obvious. Different teams may prefer different model behaviors. Finance may want stricter verification, marketing may want more stylistic flexibility, and legal may want conservative reasoning. A multi-model platform can support all three without forcing everyone into the same mold.

That said, multi-model strategy creates its own complexity:

- More product surfaces to maintain.

- More policy and admin settings to manage.

- More training required for users.

- More potential confusion about which model is active.

- More scrutiny over data handling and regional availability.

Enterprise Impact Versus Consumer Impact

For enterprise buyers, the update is clearly about trust, governance, and productivity. Microsoft is making a case that Copilot can become more dependable precisely because it is not chained to one model. The fact that Researcher is designed to cite sources, that Anthropic models are exposed through admin controls, and that Microsoft is talking about enterprise-grade controls all point in the same direction. (support.microsoft.com)

For consumers, the story is more mixed. Many consumer users will simply see better answers, if they see the feature at all. But they are less likely to care about model provenance, compliance settings, or enterprise governance. Consumer adoption will depend more on whether the experience feels faster, smarter, and less error-prone than rivals.

The enterprise case

The enterprise case is strong because the pain point is obvious: workers already spend time verifying AI outputs. If Copilot can reduce that burden, even modestly, that is a compelling ROI story. It also helps Microsoft justify its premium positioning against standalone chatbot products.

The consumer case

The consumer case is less clean. Consumer AI is still driven by convenience, novelty, and price. If Microsoft’s added sophistication makes the experience feel more complicated, casual users may not care. However, if the system quietly improves quality without adding friction, the consumer upside could be substantial.

In short, the enterprise buyer wants control, while the consumer wants simplicity. Microsoft has to satisfy both without letting one undermine the other.

Competitive Pressure on Google, OpenAI, and Anthropic

This move puts pressure on several fronts at once. For Google, Microsoft is effectively saying that productivity AI should not be locked to a single in-house model family. For OpenAI, the message is subtler but real: Microsoft values the partnership, but it will not make OpenAI the sole identity of Copilot. For Anthropic, Microsoft is giving Claude a powerful distribution channel inside business software where trust matters. (microsoft.com)

That is a delicate competitive balance. Microsoft is not merely using models; it is arbitraging them. If one vendor is especially good at reasoning, another at tool use, and another at speed, Microsoft can blend those strengths into a product advantage.

Why this pressures the market

It raises the standard for everyone else. A single-model assistant must now compete not just on raw output quality, but on system design, verification, and workflow integration. That is a harder race to win, especially in enterprise environments where customers care about auditability and policy alignment.

It also suggests that model vendors may increasingly compete for roles inside platforms rather than exclusive placement. One model becomes the drafter, another the critic, another the agentic executor. That is a very different future from the early assumption that one frontier model would dominate every task.

Financial Stakes and Investor Reaction

Microsoft’s AI strategy has come under investor scrutiny because the company is spending heavily on infrastructure while markets continue asking when the returns will become obvious. Even with a modest share-price bump around the announcement, the company is still managing a difficult quarter by market standards, and that makes every AI update carry extra financial weight.

The company’s challenge is familiar across Big Tech: prove that AI spending converts into durable product value, not just impressive demos. The more Microsoft can show that Copilot reduces friction, increases subscriptions, and supports enterprise retention, the easier it becomes to justify the capital intensity of its AI push.

Why product quality matters to valuation

Investors are increasingly looking for signs that AI is becoming embedded in recurring workflows, not just bundled into headlines. A system like Researcher, with its source citations and model verification, is much closer to recurring business utility than a chat novelty. If Cowork can move from preview to reliable execution, that would be an even stronger monetization story.

At the same time, there is a risk that the market discounts AI features until adoption becomes obvious. Features alone do not equal revenue. Microsoft still has to prove that these capabilities change behavior at scale.

Governance, Data Boundaries, and Deployment Friction

The deployment details matter almost as much as the features themselves. Microsoft says Anthropic models are available by default for most commercial cloud customers, but not in the EU, UK, or certain sovereign and government cloud environments. The company also notes that Anthropic models are excluded from the EU Data Boundary and, where relevant, in-country processing commitments. (learn.microsoft.com)

That means the rollout is not uniform. Enterprises with multinational compliance obligations will need to think carefully about where Claude is allowed, what data can flow to it, and how those controls are surfaced to users. This is a classic enterprise AI problem: the best model in the world is still limited by policy, geography, and procurement rules.

What admins will need to manage

Administrators are now responsible for more than license assignment. They must understand region-specific defaults, opt-in behavior, and which agents expose Claude in different Microsoft 365 surfaces. Microsoft’s documentation makes clear that model availability and UI indicators vary by product and region. (learn.microsoft.com)

That creates friction, but it also signals maturity. Enterprise AI cannot be a free-for-all. If Microsoft can make governance straightforward, it will have a real advantage over competitors whose controls are less integrated.
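An internal playbook could record the region-specific defaults described above in a simple lookup. The sketch below is a simplification built on stated assumptions: the `Environment` labels and both helper functions are hypothetical, the article says only "most" commercial customers and "certain" sovereign and government clouds, and it does not specify which environments are opt-in versus fully excluded, so the code models only the default state and is not an actual Microsoft admin API.

```python
from enum import Enum
from typing import Iterable, List


class Environment(Enum):
    # Hypothetical labels for illustration; real tenant categories are more granular.
    COMMERCIAL = "commercial"
    EU = "eu"
    UK = "uk"
    SOVEREIGN_OR_GOVERNMENT = "sovereign_or_government"


# Environments the article describes as NOT enabled by default (simplified).
NOT_ENABLED_BY_DEFAULT = {
    Environment.EU,
    Environment.UK,
    Environment.SOVEREIGN_OR_GOVERNMENT,
}


def anthropic_enabled_by_default(env: Environment) -> bool:
    """Return the assumed default state of Anthropic models for an environment."""
    return env not in NOT_ENABLED_BY_DEFAULT


def environments_needing_review(envs: Iterable[Environment]) -> List[str]:
    """List environments where an admin must check opt-in or exclusion rules."""
    return sorted(e.value for e in envs if not anthropic_enabled_by_default(e))


tenant_footprint = [Environment.COMMERCIAL, Environment.EU, Environment.UK]
print(environments_needing_review(tenant_footprint))
```

A multinational tenant would treat the returned list as a checklist for manual review against the current Microsoft documentation, not as a source of truth.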

Strengths and Opportunities

Microsoft’s latest Copilot changes have several clear strengths. They address practical enterprise pain points, not just speculative AI enthusiasm, and they do so in a way that preserves Microsoft’s core advantage: distribution inside Microsoft 365.

The opportunity is to turn Copilot into a trusted orchestration layer for work, not merely a chatbot embedded in Office.

- Better answer quality through dual-model critique.

- More transparency via side-by-side comparison.

- Stronger enterprise trust with source-cited research workflows.

- Reduced model dependence on a single vendor.

- Clearer monetization path through premium productivity features.

- Improved workflow automation with long-running agentic tasks.

- Competitive differentiation against simpler AI assistants.

Risks and Concerns

The biggest risk is that multi-model sophistication becomes too complicated for ordinary users and too constrained for regulated ones. Microsoft is trying to satisfy both at once, and that is never easy.

There is also the danger that verification adds latency without sufficiently improving accuracy, or that agentic workflows produce new classes of failure that are harder to detect.

- Operational complexity across regions and licenses.

- Regulatory friction from data-boundary exclusions.

- User confusion about which model is active.

- Latency trade-offs from critique and comparison layers.

- Residual hallucinations if verifier models miss the same errors.

- Overpromising autonomy before agents are truly robust.

- Enterprise skepticism if ROI is not immediately visible.

Looking Ahead

The next phase will be about whether Microsoft can make these capabilities feel native, reliable, and easy to administer. If users experience Critique and Council as invisible quality improvements, the company will have solved an important part of the trust problem. If the features feel bolted on, they may be viewed as experiments rather than foundations.

The real test is not whether Microsoft can show Claude and GPT side by side. It is whether users can complete better work with less effort, fewer errors, and less supervision. That is the standard enterprise AI now has to meet.

A few milestones will tell us a lot:

- Whether Critique meaningfully reduces factual mistakes.

- Whether Council becomes a real evaluation tool for teams.

- Whether Copilot Cowork graduates from preview to dependable execution.

- Whether regional rollout and admin controls remain manageable.

- Whether enterprise customers see measurable productivity gains.

- Whether rivals respond with similar multi-model orchestration.

