Microsoft’s March 12, 2026 preview of Copilot Health turns the company’s consumer Copilot from a general productivity assistant into a dedicated health workspace that promises to read your electronic health records, ingest continuous wearable telemetry, pull in lab results, and deliver plain-language, actionable health insights. It also raises immediate, hard questions about accuracy, governance, and who ultimately owns the line between information and clinical care.

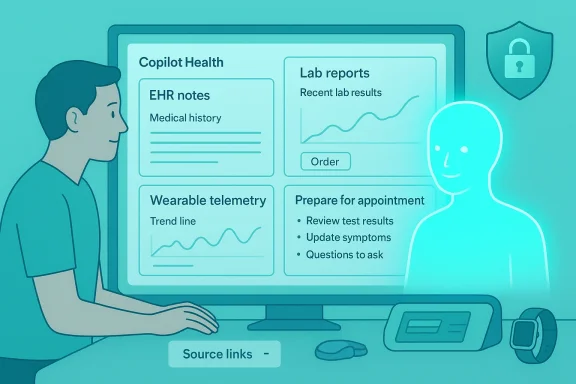

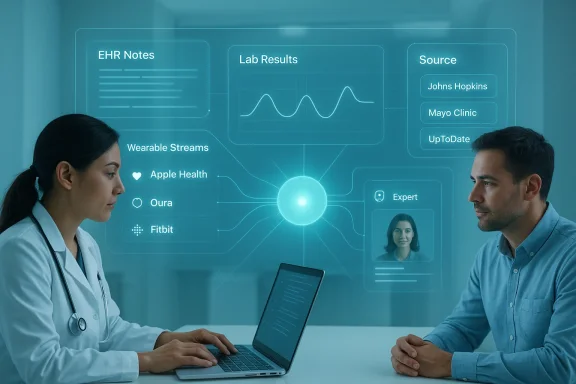



Background / Overview

Microsoft announced Copilot Health as a U.S.-only preview available by waitlist and limited to adults aged 18 and older. The product is presented as a separate, privacy-segmented “health lane” inside Copilot: a dedicated space where users can connect medical records from hospitals and provider organizations, tie in wearable sources such as Apple Health, Oura, and Fitbit, and import lab data. Microsoft positions Copilot Health for people who want help understanding test results, tracking trends over time, and preparing more informed questions for clinical visits, not as a diagnostic or treatment service.

The collection and connectivity claims are large and explicit: Microsoft says Copilot Health can connect to over 50 wearable sources, import records from more than 50,000 U.S. hospitals and provider organizations through HealthEx, and incorporate lab results via Function. The company also described integrations with live U.S. provider directories so users can search clinicians by specialty, location, language, and insurance, and said health responses will include source citations and expert-written answer cards (Microsoft cited content from Harvard Health). Microsoft further asserts that data brought into Copilot Health is isolated from general Copilot usage and not used to train its models, and that connectors can be revoked at any time.

This preview follows internal usage signals Microsoft has been sharing: the company reports its consumer products handle more than 50 million health questions per day, and that roughly 40% of those queries relate to symptoms, conditions, and treatments, with one in five conversations involving users describing symptoms, interpreting test results, or managing conditions. That usage narrative has clearly shaped Microsoft’s product strategy: make Copilot the consumer-facing hub for personal medical data, and build a tightly integrated healthcare stack on the back end.

Why this matters now

The race to own consumer health conversations is one of the most consequential technology competitions of the moment. Major cloud and AI companies (Microsoft, OpenAI, Anthropic, and Amazon among them) are pushing consumer and clinician-facing products that claim to make medical information more accessible, but they differ in approach, privacy posture, and clinical integration.

- Consumers already ask AI a massive volume of health questions; turning those ad hoc chats into a connected, data-rich experience amplifies both promise and risk.

- Integrating fragmented EHR data, wearable telemetry, and lab results into a common view addresses a real usability problem: patient records today are distributed across systems, notes are dense and jargon-laden, and wearable signals are rarely integrated into clinical workflows.

- At the same time, bundling that sensitive data under a single corporate account raises new governance, liability, and security questions that regulators, clinicians, and patients will scrutinize.

What Copilot Health claims to do — features and technical details

Microsoft’s preview positions Copilot Health as a synthesis tool, combining multiple data streams and returning contextualized, source-cited explanations. Key claimed capabilities include:

- Record aggregation: Pull clinical notes, medication lists, visit summaries, and imaging/lab results from thousands of providers via HealthEx-style connectors.

- Wearable ingestion: Import biometric streams and activity data from more than 50 wearable sources, including Apple Health, Oura, and Fitbit, to identify trends and anomalies over time.

- Lab result interpretation: Surface plain‑language summaries of lab findings, flag out-of-range results, and show historical trends.

- Provider search: Live directories to help users find clinicians by specialty, location, language, and accepted insurance networks.

- Source citations and expert cards: Answers are accompanied by citations and structured “answer cards” written or vetted by health experts.

- Privacy segmentation: Health conversations are isolated from general Copilot interactions; users can revoke connectors and Microsoft states that data in Copilot Health is not used for model training.

- Clinical evaluation pathway for advanced features: Research tools like Microsoft’s MAI‑DxO diagnostic orchestrator are part of the roadmap, but Microsoft says additional clinical capabilities will be released only after robust clinical evaluation and clear labeling.
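The lab-interpretation capability above (flag out-of-range values, show history) can be illustrated with a minimal Python sketch. Nothing here reflects Microsoft's implementation: the `LabResult` type, the `flag` and `history` helpers, and the sample values and reference ranges are all hypothetical, and the sketch assumes each result arrives with the reference range reported by its own lab.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class LabResult:
    """Hypothetical record shape; real feeds carry far more metadata."""
    test: str
    value: float
    unit: str
    ref_low: float    # lower bound of the range reported by the lab (assumed present)
    ref_high: float   # upper bound of the range reported by the lab (assumed present)
    collected: date


def flag(result: LabResult) -> str:
    """Classify a value against the reference range that came with it."""
    if result.value < result.ref_low:
        return "low"
    if result.value > result.ref_high:
        return "high"
    return "in range"


def history(results: list[LabResult], test: str) -> list[tuple[date, float, str]]:
    """Return one test's results oldest-first, each paired with its flag."""
    rows = sorted((r for r in results if r.test == test), key=lambda r: r.collected)
    return [(r.collected, r.value, flag(r)) for r in rows]


# Illustrative values only, not real patient data or clinical thresholds.
results = [
    LabResult("glucose", 104.0, "mg/dL", 70.0, 99.0, date(2025, 9, 1)),
    LabResult("glucose", 92.0, "mg/dL", 70.0, 99.0, date(2025, 3, 1)),
    LabResult("potassium", 3.2, "mmol/L", 3.5, 5.1, date(2025, 9, 1)),
]

for collected, value, status in history(results, "glucose"):
    print(collected.isoformat(), value, status)
```

A flag like this is only a comparison against a range. It says nothing about why a test was ordered or what the result means for a particular patient, which is the gap the article's accuracy concerns point at.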

Cross-checking the claims: what’s verifiable and what remains a company assertion

Microsoft’s headline claims (more than 50 wearable sources, 50,000+ provider organizations, lab integrations with Function, and a separate health lane that won’t be used for model training) have been repeated across multiple industry reports and the launch materials. Independent reporting corroborates the broad outlines: the preview is U.S.-only, uses waitlist access, is limited to adults 18 and older, and emphasizes privacy segmentation and revocable connectors.

Where to be cautious:

- “Not used for model training”: This is a corporate assurance often cited in product announcements. While the promise is meaningful, enforcement depends on technical, contractual, and governance controls. Independent verification requires auditing or contractual evidence — something Microsoft has not made independently verifiable in public materials.

- MAI‑DxO performance claims: Microsoft has showcased research about MAI‑DxO, including high accuracy figures on curated case vignettes and NEJM-style cases. These lab or research‑setting results do not directly translate to real-world clinical performance. Microsoft has said it will require clinical evaluation and clear labeling before releasing such capabilities; that conservative phrasing is appropriate and necessary.

- Provider & device counts: HealthEx and other intermediaries have publicized large connection networks (e.g., HealthEx’s link to 50,000+ provider organizations); those numbers are credible but reflect network reach, not necessarily the quality, recency, or completeness of records available for every patient.

Strengths: where Copilot Health could genuinely help patients and clinicians

- Fixing fragmentation: Copilot Health directly addresses a fundamental patient pain point: medical data is fragmented across hospitals, labs, and devices. Aggregation could reduce confusion and empower patients to prepare focused, evidence-based questions before visits.

- Plain-language translation of clinical data: Many patients struggle to interpret lab ranges, medication instructions, and clinic notes. An assistant that summarizes results, highlights trends, and provides context could improve health literacy and shared decision-making.

- Longitudinal trend detection: Wearable telemetry is noisy but valuable for pattern recognition. Copilot Health’s promise to visualize trends over time (e.g., sleep quality, resting heart rate, activity patterns) can surface signals that episodic clinic visits miss.

- Patient convenience and triage: For non-urgent questions or pre-visit preparation, a well-calibrated Copilot Health can save time and make interactions with clinicians more productive.

- Integrated care navigation: Provider search features that consider specialty, language, location, and insurance may help patients find appropriate clinicians faster, an often-overlooked but practical benefit.

- Governance-forward product framing: Microsoft’s public commitments (privacy segmentation, revocable connectors, labeling of clinical-grade features) reflect an awareness of the regulatory and ethical stakes and can help build user trust if executed transparently.
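To make the point about noisy wearable telemetry concrete, here is a minimal Python sketch of one common smoothing approach: a trailing rolling median, with a check for a sustained shift away from an early baseline. It is an illustration under stated assumptions, not a description of how Copilot Health analyzes trends. The function names, the 7-day window, the 5-unit threshold, and the 3-day persistence rule are all hypothetical choices.

```python
from statistics import median


def rolling_median(values: list[float], window: int) -> list[float]:
    """Median of each trailing window; shorter windows are used at the start."""
    out = []
    for i in range(len(values)):
        start = max(0, i - window + 1)
        out.append(median(values[start:i + 1]))
    return out


def sustained_rise(values: list[float], window: int = 7,
                   threshold: float = 5.0, min_days: int = 3) -> list[int]:
    """Indices where the smoothed series has stayed at least `threshold`
    above the first full-window baseline for `min_days` consecutive readings."""
    smoothed = rolling_median(values, window)
    if len(smoothed) < window:
        return []
    baseline = smoothed[window - 1]
    hits, run = [], 0
    for i in range(window, len(smoothed)):
        run = run + 1 if smoothed[i] - baseline >= threshold else 0
        if run >= min_days:
            hits.append(i)
    return hits


# Illustrative daily resting heart rate readings (made-up numbers).
one_off_spike = [60.0] * 7 + [80.0] + [60.0] * 9
lasting_shift = [60.0] * 7 + [68.0] * 10

print(sustained_rise(one_off_spike))
print(sustained_rise(lasting_shift))
```

The median is used instead of the mean so that a single outlier reading does not move the smoothed series. The persistence rule trades sensitivity for fewer false alarms, a trade-off any real product would need to validate clinically.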

Risks and limitations: what should make users, clinicians, and IT leaders uneasy

- Accuracy and clinical reliability: Large language models and medical reasoning systems can hallucinate or overconfidently assert incorrect conclusions. Even well‑sourced summaries can misinterpret context (e.g., lab results ordered as part of population screening vs. diagnostic testing).

- Scope creep from interpretation to action: The line between “explaining” and “advising” is thin. If Copilot Health begins producing explicit care recommendations, liability questions become acute — who is responsible if advice is followed and harm occurs?

- Privacy and secondary use: Microsoft’s “not used for training” promise is important but must be supported by clear contractual, technical, and audit evidence. Data sovereignty, retention policies, and third‑party access controls will all matter.

- Re-identification and deanonymization risks: Aggregated health data is uniquely identifying. Even de‑identified datasets can be re-identified when cross-referenced with other sources. Any research uses must be governed carefully.

- Bias and health inequity: Training data, clinical content sources, and device datasets may underrepresent historically underserved populations. Wearables themselves often have lower accuracy across skin tones and body types, which can propagate bias into downstream insights.

- Integration quality and clinical completeness: Provider counts are not the same as complete, normalized medical histories. Missing notes, delayed lab feeds, or ambiguous provider matching could lead Copilot Health to synthesize incomplete pictures.

- Regulatory and legal uncertainty: U.S. regulators (including the FDA, FTC, and HHS OCR) and state data protection laws may scrutinize such products. International rollouts face even steeper legal, privacy, and interoperability hurdles.

- Clinician workflow impact: For clinicians, increased patient access to AI-generated interpretations could yield better prepared patients — or a new volume of low-value queries, misinterpretations, and misdirected anxieties that clinicians must manage.

Competitive landscape and strategic implications

Microsoft’s Copilot Health sits at the intersection of several market moves:

- Anthropic & HealthEx integration: HealthEx’s announced partnership with Anthropic to connect records from 50,000+ provider organizations to Claude shows the same market dynamic: record aggregation is quickly becoming a commodity layer provisioned by middleware vendors, and major AI vendors are building front-end experiences on top of the same plumbing.

- OpenAI and Amazon moves: OpenAI’s ChatGPT Health and Amazon’s One Medical expansions (and Amazon’s own health‑facing initiatives) make this a broad, multi‑actor contest to become the default interface for consumer health queries.

- Clinician tools (Dragon Copilot): Microsoft’s dual approach — clinician-facing workflow tools derived from Nuance and DAX assets, and consumer Copilot Health — creates synergy but also complexity when reconciling clinician and patient data flows and responsibilities.

- Platform advantage vs trust friction: Microsoft can deploy Copilot Health across devices and cloud customers, leveraging enterprise relationships with healthcare systems. But trust is the gatekeeper: willingness to hand sensitive records to a tech giant will vary by demographic, clinician recommendation, and perceived benefit.

Practical guidance: what users, clinicians, and IT leaders should do now

- For individuals considering Copilot Health:

  - Be cautious with initial access. Prefer to link only the records and devices you’re comfortable sharing; use revocable connectors actively.

  - Use Copilot Health for preparation, not diagnosis. Treat results as interpretive assistance to take to your clinician, not a replacement for medical advice.

  - Review privacy settings and retention policies. Understand what Microsoft says about data use, retention, and whether your data can be exported or deleted.

- For clinicians and health systems:

  - Set expectations with patients. Clarify what Copilot Health can and cannot do. If patients bring AI-generated summaries, treat them as patient-supplied context requiring clinical validation.

  - Monitor for workflow impact. Anticipate potential increases in pre-visit messaging and plan triage processes.

  - Validate interoperability and mapping. Ensure EHR mappings and data normalization from middleware vendors are accurate before relying on integrated summaries.

- For IT leaders and privacy officers:

  - Demand contractual clarity. If your organization’s data could connect to such consumer tools, audit vendor contracts, data flows, and consent mechanisms.

  - Prepare incident response plans. Health data is high-risk; be ready for breach scenarios, data subject requests, and regulatory inquiries.

  - Coordinate with clinical governance. Any deployment that touches patient data should be reviewed by clinicians, compliance, and legal teams.

Regulatory and ethical guardrails that should be required

- Independent audits of privacy claims: Internal assurances that data won’t be used for model training should be verifiable through independent technical audits and public attestations.

- Clear labeling and user education: Any answer that could be construed as clinical advice should be clearly labeled, include provenance, and direct users to consult clinicians for care decisions.

- Clinical evaluation and post-market surveillance: Diagnostic or triage features must undergo clinical trials or evaluations and maintain post-market monitoring for safety signals and disparities.

- Data minimization and granular consent: Users should be able to select which parts of their record are shared, and consent flows must be explicit, recoverable, and auditable.

- Access and equity protections: Address device biases and ensure that features do not widen disparities in care; publicly report performance across demographic subgroups.

- Liability clarity: Policymakers and vendors must clarify who bears responsibility when AI-generated health guidance is followed with adverse outcomes.
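The granular-consent guardrail above asks for sharing that is selectable per data category, revocable, and auditable. One way to picture those three properties together is an append-only consent ledger, sketched below in Python. This is a hypothetical data structure for illustration only: `ConsentLedger`, its methods, and the connector and category names are assumptions, not anything Microsoft or its partners have described.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ConsentLedger:
    """Append-only log of grant/revoke events; current state is derived from it."""
    events: list[tuple[datetime, str, str, str]] = field(default_factory=list)

    def _record(self, action: str, connector: str, category: str) -> None:
        # Events are never edited or deleted, so the log doubles as an audit trail.
        self.events.append((datetime.now(timezone.utc), action, connector, category))

    def grant(self, connector: str, category: str) -> None:
        self._record("grant", connector, category)

    def revoke(self, connector: str, category: str) -> None:
        self._record("revoke", connector, category)

    def allowed(self, connector: str, category: str) -> bool:
        """The most recent event for this pair decides; the default is deny."""
        for _, action, conn, cat in reversed(self.events):
            if conn == connector and cat == category:
                return action == "grant"
        return False


# Hypothetical connector and category names.
ledger = ConsentLedger()
ledger.grant("wearable", "sleep")
ledger.grant("records", "lab_results")
ledger.revoke("wearable", "sleep")

print(ledger.allowed("wearable", "sleep"))
print(ledger.allowed("records", "lab_results"))
print(ledger.allowed("records", "medications"))
```

Deriving the current permission from the event history, instead of storing a mutable flag, keeps every grant and revocation available for later review. Default-deny means a category the user never addressed is never shared.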

The path forward: cautious optimism, not hype

Copilot Health is not a trivial product update: it represents a bold reimagining of how consumer AI and personal health data might combine to help people manage their health. If Microsoft executes on privacy guarantees, invests in rigorous clinical validation, and collaborates transparently with regulators and clinicians, Copilot Health could become a useful preparatory and explanatory layer that strengthens the patient-clinician partnership.

But the stakes are high. The ecosystem of middleware vendors, device makers, AI providers, and health systems means that technical complexity and responsibility are fragmented. Promises that data will not be used for model training, or that automated outputs will remain purely informational, are necessary but not sufficient. Independent oversight, continuous clinical evaluation, and conservative product framing will be essential in the months ahead.

Conclusion

Microsoft’s Copilot Health preview, launched March 12, 2026, is an ambitious step toward making fragmented health data usable for everyday people. It combines large-scale connectivity claims, wearable ingestion, lab interpretation, and contextualized explanations inside a privacy-segmented Copilot experience. The potential benefits (improved patient understanding, better visit preparation, and usable longitudinal insights) are real. So are the risks: accuracy failures, privacy exposure, bias amplification, and legal ambiguity.

The responsible path forward is clear: move slowly and transparently, validate clinically, welcome independent audits, and ensure that consumers and clinicians understand the tool’s limits. If Microsoft and the wider industry follow that playbook, Copilot Health could become a powerful, trustworthy companion in personal healthcare. If not, it risks magnifying the harms of misinformation and data misuse at a scale few other product launches can match.

Source: TestingCatalog ICYMI: Microsoft begins phased US rollout of Copilot Health