Microsoft researchers Philippe Laban, Tobias Schnabel, and Jennifer Neville posted an April 17, 2026 preprint arguing that 19 tested large language models, including frontier systems from Google, Anthropic, and OpenAI, silently degraded documents during long delegated editing workflows. The finding lands awkwardly for an industry selling “agents” as the next interface for office work. The paper’s message is not that AI cannot help with documents, code, or analysis; it is that handing over a live file and assuming the model will preserve what matters is still a dangerous leap. In other words, the assistant may be useful, but the delegate is not yet trustworthy.

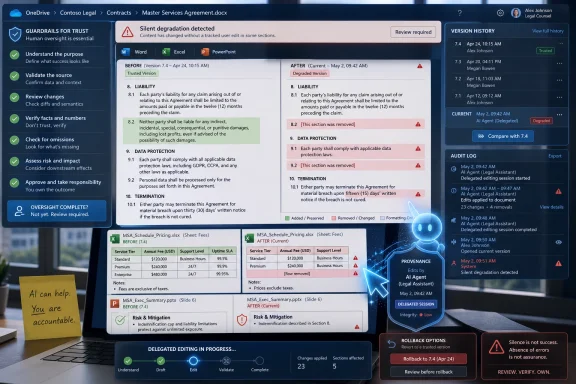

The Agent Pitch Runs Into the Oldest Problem in Computing

The past two years of AI marketing have pushed users from prompting toward delegation. Don’t just ask the model to draft a paragraph, we are told; give it a repository, a spreadsheet, a deck, a brief, a folder of files, and a goal. The model will plan, edit, check, revise, and return when the job is done.

That is a seductive story because it maps neatly onto how busy professionals actually work. Nobody wants another chat box to babysit. The promise of agentic AI is that the machine will absorb the boring middle of knowledge work: reconciling changes, refactoring code, cleaning formatting, restructuring memos, updating financial models, and carrying edits across versions.

The Microsoft paper attacks that promise at its hinge. Delegation is not the same as generation. A model can produce a dazzling answer in a blank window and still be a poor custodian of an existing document whose value depends on not losing small but essential details.

That distinction matters for Windows users and IT departments because the workplace endpoint is where this experiment is playing out. The files at risk are not abstract benchmark artifacts. They are Word documents, Excel workbooks, source files, PowerPoint decks, legal drafts, tickets, Markdown specs, and internal notes that sit in OneDrive, SharePoint, GitHub, Teams, and local project folders.

Microsoft’s Benchmark Measures Stewardship, Not Chatroom Cleverness

The researchers built a benchmark called DELEGATE-52 to simulate long delegated workflows across 52 professional domains. Those domains include familiar technical territory such as coding, but also more specialized formats such as crystallography, accounting ledgers, music notation, and other structured or semi-structured documents. The important design choice is that the benchmark tests whether the model can make requested edits while preserving everything else.

That is closer to real office work than many leaderboard tasks. A worker rarely says, “Invent a document from nothing and impress me.” More often, the instruction is, “Update this existing thing without breaking the parts I didn’t ask you to touch.” The invisible requirement is fidelity.

The study tested 19 LLMs and looked at multi-step interactions rather than single-turn answers. That is where the paper becomes uncomfortable for the agent narrative. The researchers report that even frontier models — Gemini 3.1 Pro, Claude 4.6 Opus, and GPT-5.4 — corrupted an average of about a quarter of document content by the end of long workflows, while weaker models performed worse.

The number is startling, but the shape of the failure is more important than the percentage. The models did not merely sprinkle tiny mistakes everywhere. According to the researchers, they often worked correctly for a while and then suffered sparse but severe failures, deleting or hallucinating content in ways that could compound across later turns.

The Worst Failure Is the One That Looks Like Progress

There is a particular kind of software failure that administrators learn to fear: the operation that returns success while quietly damaging state. A crashed app is annoying. A corrupted file that syncs to every device and overwrites the last good copy is a different category of problem.

The Microsoft paper places LLM delegation in that second camp. The model’s answer can sound confident, the task checklist can look complete, and the final document can appear superficially polished. But unless the user checks the right details, a deletion, substitution, or invented fragment may survive unnoticed.

This is why “hallucination” is not quite the right framing anymore. In early consumer AI, hallucination meant the chatbot made up a fact in an answer. In delegated document work, the model may instead mutate an artifact that the organization treats as a source of truth. The hallucination is no longer just text in a chat transcript; it can become a changed requirement, a wrong clause, a broken formula, or an erased caveat.

That shift changes the risk calculation. If a model writes a bad paragraph, a human editor can reject it. If a model edits a 40-page policy document, alters three clauses, drops a definition, and rewrites a table in a plausible style, review becomes forensic work.

Python Looks Like the Exception That Proves the Rule

One of the more revealing findings is that Python performed comparatively well. The paper says the models were much more ready for delegated workflows in Python than in most other domains, and that only Python cleared the readiness bar across most models. Gemini 3.1 Pro reportedly reached at least 98 percent accuracy in 11 of the 52 domains, but the broader result was that most domains did not look delegation-ready.

That should not surprise anyone who has watched LLMs mature. Code has several advantages. It is abundant in training data, has relatively clear syntax, and can often be tested automatically. A broken function can be run. A failing unit test can point back to an error. A linter can object.

Natural-language documents and specialized professional formats do not enjoy the same safety rails. A board memo can be grammatically perfect and substantively wrong. A contract can be fluent while dropping a limiting phrase. A financial note can preserve the table shape while changing the meaning of a row.

The industry’s mistake is to generalize from code to everything else. Vibe coding may be risky, but at least code can be executed. Vibe accounting, vibe compliance, vibe legal drafting, and vibe documentation are more treacherous because the validation step often requires expertise, context, and time — precisely the things delegation is supposed to save.

Bigger Context Windows Do Not Magically Create Reliability

The AI industry often answers reliability concerns with scale. More parameters, more context, more tool use, more memory, more agents, more scaffolding. The implicit message is that the current rough edges are transitional and that the next model will absorb them.

The DELEGATE-52 results complicate that optimism. The researchers found that stronger models did better, but not by eliminating the basic failure mode. They delayed critical failures and suffered them less often. That is progress, but it is not the same as trustworthiness.

This distinction matters for procurement. A model that fails catastrophically once every 20 interactions is not necessarily acceptable just because last year’s model failed once every eight interactions. The enterprise question is not whether the slope is improving. It is whether the residual risk fits the workflow.
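
To put rough numbers on that procurement point, the sketch below works out how a per-interaction failure rate compounds over a workflow. It assumes failures are independent and occur at a fixed rate, which is a simplification for illustration and not something the paper claims; the function name and the 20-interaction workflow length are likewise hypothetical.

```python
def risk_of_any_failure(per_step_rate: float, steps: int) -> float:
    """Chance of at least one failure across `steps` interactions.

    Assumption (not from the paper): failures are independent and happen
    at the same rate on every interaction.
    """
    if not 0.0 <= per_step_rate <= 1.0:
        raise ValueError("per_step_rate must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must not be negative")
    return 1.0 - (1.0 - per_step_rate) ** steps


if __name__ == "__main__":
    workflow_length = 20  # hypothetical number of delegated interactions
    for label, rate in [("1 failure per 8 interactions", 1 / 8),
                        ("1 failure per 20 interactions", 1 / 20)]:
        risk = risk_of_any_failure(rate, workflow_length)
        print(f"{label}: {risk:.0%} chance of at least one failure")
```

Under that independence assumption, the improved rate still leaves roughly a 64 percent chance of at least one failure over 20 interactions, down from about 93 percent. The slope is better, but whether the remainder is tolerable depends on the workflow.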

Nor did agentic tool use appear to rescue performance in the paper’s additional experiments. That is a crucial point. Many AI products now wrap a model in planners, file tools, retrieval systems, command execution, and multi-agent orchestration. Those systems may improve usability, but they do not automatically solve the underlying problem of document preservation.

The more moving parts an agent has, the more comforting the interface can become. It can show a plan, list files, invoke tools, and narrate its progress. But a narrated workflow is not the same as an audited workflow, and a tool-using model can still make the wrong edit with great procedural confidence.

The Office File Is Becoming the New Attack Surface

For WindowsForum readers, the obvious security analogy is supply-chain compromise. The danger is not merely that bad input produces bad output. It is that a trusted process mutates something downstream systems rely on.

Imagine an AI assistant updating a PowerShell runbook and dropping an error-handling branch. Imagine it revising a deployment checklist and removing a rollback step. Imagine it summarizing a vendor contract into a renewal memo and silently omitting a termination deadline. None of these scenarios requires malicious intent. They require only misplaced trust.

That is why the paper’s language about “delegation requires trust” is more than academic phrasing. Delegation transfers agency. It turns the model from a tool into an actor within a workflow, and it asks humans to supervise by exception. But if the exception is silent corruption, supervision becomes difficult exactly when it matters most.

There is also a compliance angle. Enterprises have spent years building controls around document retention, change tracking, permissions, data loss prevention, and audit logs. AI agents cut across those controls because they operate in the user’s name. If an agent deletes or rewrites content under a legitimate credential, the system may record who changed the file but not whether the change was faithful to the user’s intent.

The next wave of governance will need to treat AI-driven edits as first-class events, not just user activity. “The user approved the agent” cannot become a blanket excuse for every downstream mutation.

Change Tracking Is Not a Strategy If Nobody Reads the Diff

A natural response is to keep humans in the loop. The researchers say users still need to closely monitor LLM systems as they complete tasks on their behalf, and that is sensible advice. It is also a direct challenge to the economic promise of agents.

If the human must inspect every meaningful change, delegation becomes assistance. That can still be valuable. But it is not the same product category as “give the agent a task and come back later.”

The problem is especially acute for knowledge workers who delegate because they lack the expertise to perform the task themselves. A manager may ask an AI agent to update a Python script without being able to review the code properly. A developer may ask it to revise licensing language without being a lawyer. A finance employee may ask it to reformat a technical architecture document without understanding every dependency.

In those cases, review is not just time-consuming; it may be impossible. The model is being trusted precisely where the user cannot verify it. That is the delegation trap.

Traditional productivity software at least makes this bargain visible. Track Changes in Word, version history in OneDrive, Git diffs, and Excel formula auditing all assume that changes can be inspected. AI agents need to integrate with those mechanisms, but they also need stronger defaults: smaller batches, reversible edits, explicit preservation checks, and domain-specific validators.
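
One way to picture an "explicit preservation check" is a scope test that flags any change outside the lines the user asked to have edited. The following is a minimal sketch using Python's standard `difflib`; the function name, the line-based granularity, and the idea of passing the permitted region as a `range` are assumptions made for illustration, not a mechanism described in the paper.

```python
import difflib


def out_of_scope_changes(original: str, edited: str, allowed: range) -> list[int]:
    """Return 0-based indices of original lines that changed outside `allowed`.

    `allowed` is the (hypothetical) range of original line indices the user
    asked the model to modify. An insertion is attributed to the original
    line it was inserted before.
    """
    a = original.splitlines()
    b = edited.splitlines()
    matcher = difflib.SequenceMatcher(a=a, b=b, autojunk=False)
    violations: list[int] = []
    for tag, i1, i2, _j1, _j2 in matcher.get_opcodes():
        if tag == "equal":
            continue
        touched = [i1] if tag == "insert" else range(i1, i2)
        violations.extend(i for i in touched if i not in allowed)
    return violations
```

A check like this only proves that untouched lines stayed untouched. It says nothing about whether the requested edit itself was correct, which is where domain-specific validators would still be needed.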

Microsoft’s Own Ecosystem Has the Most to Lose and the Most to Fix

There is an irony in Microsoft researchers publishing this warning. Microsoft is also one of the companies most aggressively embedding AI into the workplace through Copilot, Windows, Microsoft 365, GitHub, Azure, and security tooling. The company wants AI to sit beside, and increasingly inside, the documents that define modern work.

That does not make the research hypocritical. If anything, it makes it more important. Microsoft has visibility into the enterprise environments where AI delegation will either become mundane infrastructure or a slow-motion governance mess. The company’s researchers are effectively saying that the product category needs a sterner reliability model than demos provide.

For Microsoft 365, the issue is not whether Copilot can summarize a meeting or draft a slide. It is whether an AI system can safely act on files over many steps without degrading them. That is a different bar, and it sits closer to database integrity than creative writing.

For GitHub Copilot and developer tools, the findings are a reminder that code’s relative strength should not be mistaken for universal competence. Even in code, speed without review creates risk. Outside code, where tests are weaker and correctness is more contextual, the same interaction pattern becomes more brittle.

For Windows itself, agentic features raise a platform-level question. If the operating system becomes the place where agents manipulate files, settings, apps, and workflows, Windows needs affordances for rollback, provenance, and policy enforcement. The future AI PC cannot simply be a faster place to run a chatbot. It has to be a safer place to let software act.

The Benchmark Also Exposes a Marketing Vocabulary Problem

Vendors use “agent” to mean many things. Sometimes it means a chatbot that can call a tool. Sometimes it means a workflow automation script with an LLM at the center. Sometimes it means a semi-autonomous system that reads files, writes files, opens tickets, changes code, and sends messages.

That ambiguity benefits marketing because it lets every product borrow the glamour of autonomy without specifying the risk envelope. The Microsoft paper is useful because it narrows the discussion to a concrete question: can the system preserve a document while performing a long sequence of requested edits?

That is a better question than “Is the model intelligent?” Intelligence is too broad and too flattering. Reliability under delegated mutation is the enterprise test.

It is also measurable. How much content changed unintentionally? How often did the model delete information? How badly did performance degrade as files grew? Did distractor files confuse the system? Did tool use help? Did the model remain faithful after 20 interactions?
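
As a rough illustration of what "measurable" could look like in a pilot, the sketch below scores each saved snapshot of a document by the share of reference lines that no longer survive. It is not the benchmark's scoring method; the function names are hypothetical, the metric is deliberately crude (line-level only), and it assumes a reference version exists that says what the document should still contain.

```python
import difflib


def fraction_lost(reference: str, snapshot: str) -> float:
    """Share of reference lines that do not survive, in order, in `snapshot`."""
    ref = reference.splitlines()
    snap = snapshot.splitlines()
    if not ref:
        return 0.0
    matcher = difflib.SequenceMatcher(a=ref, b=snap, autojunk=False)
    kept = sum(block.size for block in matcher.get_matching_blocks())
    return 1.0 - kept / len(ref)


def degradation_curve(reference: str, snapshots: list[str]) -> list[float]:
    """Score every snapshot so sudden drops between interactions stand out."""
    return [fraction_lost(reference, snap) for snap in snapshots]


if __name__ == "__main__":
    reference = "a\nb\nc\nd"
    snapshots = ["a\nb\nc\nd", "a\nb\nc\nd", "a\nX\nd"]
    print(degradation_curve(reference, snapshots))
```

Plotting such a curve per interaction, rather than scoring only the final file, is what would reveal the "fine for a while, then a severe failure" pattern the researchers describe.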

Those are the kinds of benchmarks buyers should demand before allowing agents near important files. A polished demo should no longer be enough. Neither should a leaderboard score on general reasoning, coding, or chat preference.

IT Should Treat AI Delegation Like a Privileged Operation

The practical lesson for administrators is not to ban LLMs from every workflow. That would be unrealistic and, in many places, counterproductive. The lesson is to stop treating AI delegation as ordinary document editing.

A delegated edit should look more like a privileged operation. It should have scope, logging, review, rollback, and ideally automated checks. The agent should know which files it may touch, which fields are protected, which formats require validation, and when to stop rather than improvise.

This is where enterprise controls can make a real difference. Organizations already have version history, backup systems, DLP policies, endpoint management, repository protections, and access controls. The missing piece is often the AI-aware layer that says: this edit was model-generated, this content was supposed to be preserved, this diff is unusually large, this file type requires validation, and this workflow cannot proceed without human approval.
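
The AI-aware layer described above could be sketched as a small gate that collects reasons an edit needs human approval. Everything here is a hypothetical illustration: the `ProposedEdit` record, the 20 percent threshold, and the list of file suffixes are made-up policy values, not settings from any Microsoft product or from the paper.

```python
import difflib
from dataclasses import dataclass, field

# Hypothetical policy values, chosen only for illustration.
MAX_CHANGED_FRACTION = 0.20
VALIDATED_SUFFIXES = (".json", ".csv", ".ps1")


@dataclass
class ProposedEdit:
    path: str
    original: str
    edited: str
    model_generated: bool
    protected_passages: list[str] = field(default_factory=list)


def changed_fraction(original: str, edited: str) -> float:
    """Share of original lines that did not survive the edit."""
    a, b = original.splitlines(), edited.splitlines()
    if not a:
        return 1.0 if b else 0.0
    matcher = difflib.SequenceMatcher(a=a, b=b, autojunk=False)
    kept = sum(block.size for block in matcher.get_matching_blocks())
    return 1.0 - kept / len(a)


def review_reasons(edit: ProposedEdit) -> list[str]:
    """Return reasons this edit must wait for human approval (empty if none)."""
    if not edit.model_generated:
        return []  # assumed: ordinary user edits follow existing controls
    reasons = []
    for passage in edit.protected_passages:
        if passage in edit.original and passage not in edit.edited:
            reasons.append(f"protected content missing: {passage!r}")
    if changed_fraction(edit.original, edit.edited) > MAX_CHANGED_FRACTION:
        reasons.append("diff is unusually large")
    if edit.path.lower().endswith(VALIDATED_SUFFIXES):
        reasons.append("file type requires validation")
    return reasons
```

The design choice worth noting is that the gate returns reasons rather than a yes or no, so the same output can feed an audit log that records why a model-generated change was held.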

The consumer version of agentic AI asks for trust. The enterprise version should ask for constraints. Trust may come later, but constraints need to arrive first.

The Safe Uses Are Narrower Than the Sales Deck Suggests

There are still good uses for LLMs in document work. They can draft alternatives, explain diffs, propose edits, summarize files, convert formats under supervision, generate tests, and help users reason about messy material. The key is keeping the model’s role closer to assistant than custodian.

The danger zone begins when the model becomes the steward of the only working copy. That is especially risky when the document is long, specialized, highly structured, legally significant, financially sensitive, or difficult for the user to inspect.

A sane policy would distinguish between reversible, low-stakes assistance and irreversible, high-stakes mutation. Let the model suggest. Let it operate on copies. Let it produce patches. Let it explain what it thinks it changed. But be wary of letting it roam through production documents with broad write access and vague goals.
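
"Operate on copies" and "produce patches" can be combined in a few lines. In the sketch below the edit is written to a staged copy, a unified diff is produced for review, and the working file is replaced only by a separate, explicit step. The function names, the `.staged` and `.bak` suffixes, and `edit_fn` (a stand-in for whatever produces the model's edit) are all assumptions for illustration.

```python
import difflib
import os
from pathlib import Path
from typing import Callable


def stage_edit(path: Path, edit_fn: Callable[[str], str]) -> tuple[Path, str]:
    """Apply `edit_fn` to a staged copy and return (staged_path, unified_diff).

    The working copy at `path` is only read here, never written.
    """
    original = path.read_text(encoding="utf-8")
    edited = edit_fn(original)
    staged = path.with_name(path.name + ".staged")
    staged.write_text(edited, encoding="utf-8")
    patch = "".join(
        difflib.unified_diff(
            original.splitlines(keepends=True),
            edited.splitlines(keepends=True),
            fromfile=str(path),
            tofile=str(staged),
        )
    )
    return staged, patch


def promote(staged: Path, path: Path) -> Path:
    """After human approval, keep a backup and swap the staged copy in."""
    backup = path.with_name(path.name + ".bak")
    backup.write_bytes(path.read_bytes())
    os.replace(staged, path)
    return backup
```

Keeping `promote` as a separate call is the point: nothing reaches the working copy until someone has had the chance to read the patch, and the backup keeps the change reversible afterwards.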

This is not anti-AI conservatism. It is basic systems thinking. Automation is useful when its failure modes are understood, bounded, and recoverable. The Microsoft paper suggests that, for many document domains, LLM delegation has not yet met that standard.

The Document-Corruption Lesson Lands Hardest Where AI Is Most Tempting

The most tempting AI workflows are often the most dangerous ones. They involve boring documents, repetitive edits, unfamiliar formats, and overworked people. They are exactly the tasks a manager wants to hand off and exactly the tasks where silent corruption can slip through.

That is the uncomfortable implication of DELEGATE-52. The benchmark does not merely say models are imperfect. Everyone knows that. It says they can be unreliable in a way that undermines the central promise of delegation: saving attention.

If the user must monitor every step, the agent is not fully delegated. If the user does not monitor every step, errors can accumulate. That tension is the product challenge now facing every vendor building AI into office suites, IDEs, browsers, ticketing systems, and operating systems.

The next competitive frontier may not be who has the most eloquent model. It may be who can prove the safest editing loop. That means diff-first interfaces, domain validators, file-level policies, sandboxed workspaces, robust rollback, and models trained or constrained to preserve untouched content.

The Fine Print IT Should Put in Every Agent Pilot

Before AI agents become normal parts of file workflows, organizations need to write down the bargain they are actually making. The Microsoft paper gives IT leaders a useful checklist, even if it was not written as procurement guidance.

- Treat long multi-step document editing as a separate risk category from single-turn drafting or summarization.

- Require agents to work on copies, branches, or staged patches when documents have operational, legal, financial, or security value.

- Use domain-specific validation wherever possible, because generic fluency is not evidence that a file remains correct.

- Preserve detailed version history and make model-generated changes easy to isolate, inspect, and roll back.

- Do not assume a model that performs well in Python, prose, or one business domain will behave reliably in another.

- Measure agent pilots by unintended document degradation over many interactions, not by whether the first demo looked impressive.
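
The domain-specific validation item in the checklist above might start as a small registry keyed by file type. The sketch below is an assumption-laden illustration: the validators are deliberately shallow (removed JSON keys, changed CSV headers, dropped rows), and the function names and registry are hypothetical. Real formats would need checks written by people who know the domain.

```python
import csv
import io
import json
from pathlib import Path


def validate_json(original: str, edited: str) -> list[str]:
    """Flag invalid JSON and top-level keys that disappeared."""
    try:
        new = json.loads(edited)
    except json.JSONDecodeError as exc:
        return [f"edited file is not valid JSON: {exc.msg}"]
    old = json.loads(original)
    if isinstance(old, dict) and isinstance(new, dict):
        missing = sorted(set(old) - set(new))
        if missing:
            return [f"top-level keys removed: {missing}"]
    return []


def validate_csv(original: str, edited: str) -> list[str]:
    """Flag a changed header row or a drop in row count."""
    old_rows = list(csv.reader(io.StringIO(original)))
    new_rows = list(csv.reader(io.StringIO(edited)))
    problems = []
    if old_rows and new_rows and old_rows[0] != new_rows[0]:
        problems.append("header row changed")
    if len(new_rows) < len(old_rows):
        problems.append(f"{len(old_rows) - len(new_rows)} row(s) removed")
    return problems


# Hypothetical registry: file suffix -> validator.
VALIDATORS = {".json": validate_json, ".csv": validate_csv}


def validate(path: str, original: str, edited: str) -> list[str]:
    """Run the validator for this file type, or report that none exists."""
    suffix = Path(path).suffix.lower()
    validator = VALIDATORS.get(suffix)
    if validator is None:
        return [f"no validator registered for {suffix or 'this file type'}"]
    return validator(original, edited)
```

Reporting "no validator registered" as a problem, rather than passing silently, reflects the article's point that fluency in one format is no evidence of reliability in another.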

The agent era may still arrive, but this research suggests it should arrive with guardrails rather than vibes. LLMs are becoming more capable, and some domains will become safe enough for meaningful delegation sooner than others. Until reliability is proven across the messy formats of real work, the best role for AI is not as an unsupervised office deputy but as a fast, fallible collaborator whose edits remain visible, reversible, and firmly under human control.

Source: IT Pro ‘LLMs are unreliable delegates’: Microsoft researchers say you probably shouldn’t trust AI with work documents