Becoming Frontier is no longer just Microsoft’s favorite strategic phrase; it is now a working operating model for IT, governance, and employee enablement. In this guide, Microsoft Digital lays out how the company is deploying AI agents across a global enterprise while trying to preserve security, compliance, usability, and measurable business value. The message is clear: the real race is not simply to adopt AI, but to redesign work around human-led, agent-operated systems. (microsoft.com)

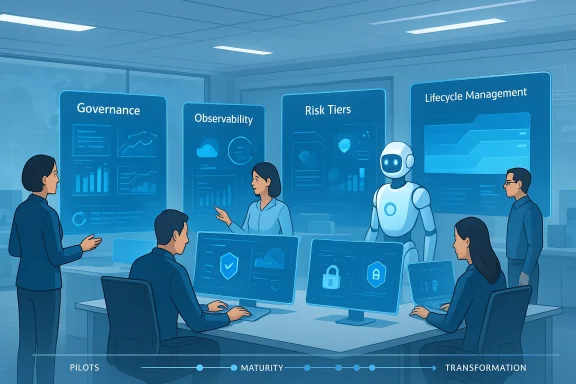

Microsoft’s latest framing builds on an idea the company has been pushing throughout 2025 and into 2026: organizations move from personal AI assistance, to human-agent collaboration, and eventually to a Frontier Firm model where agents do more of the operational labor. Microsoft also emphasizes that this shift is not mainly a technology problem; it is an organizational design problem that requires governance, adoption, support, and telemetry to work together. (microsoft.com)

Overview

The most important thing about this guide is that it is not presented as theory. Microsoft Digital describes itself as Customer Zero, meaning it is using its own internal deployments to test the patterns it wants customers to follow. That makes the article less of a marketing slogan and more of a field manual for enterprise AI transformation, especially for IT teams trying to scale beyond pilots. (microsoft.com)

The backdrop matters. Microsoft has spent the past several years proving out Microsoft 365 Copilot, building internal AI Centers of Excellence, refining data governance, and pushing Responsible AI practices into the enterprise stack. The agent story is the next layer on top of that foundation, not a replacement for it. In Microsoft’s view, the AI-ready data estate, the governance model, and the change-management muscle are all prerequisites for a workable agent strategy. (microsoft.com)

There is also a strong “IT as business strategist” theme running through the guide. Microsoft argues that IT should not merely approve tools or manage service desks; it should shape business outcomes by identifying workflows that agents can improve, then designing the data, access, and controls that make those agents safe to deploy. That is a meaningful shift in posture, and it reflects the company’s broader Frontier Firm narrative. (microsoft.com)

What makes the guide especially notable is how operational it is. Microsoft breaks the journey into governance, implementation, adoption, support, and impact measurement, then adds learning checklists, scenario examples, and maturity stages. That structure signals a key reality: the company believes successful agent deployment will be determined less by model quality alone than by whether enterprises can industrialize everything around the model. (microsoft.com)

Why Microsoft Is Pushing the Frontier Firm Model

Microsoft’s Frontier Firm concept rests on a simple but disruptive premise: agents will become the operational engine of business, while humans focus on judgment, strategy, relationships, and oversight. In the company’s own three-phase framing, workers first use AI assistants, then work alongside agents, and finally lead teams of digital workers that carry out core labor with relative autonomy. That is a radical shift in how enterprise software is understood. (microsoft.com)

The strategic logic behind the shift

The logic is not hard to see. Enterprises have already absorbed the first wave of generative AI through copilots, chat experiences, and embedded assistance. The next source of value comes from agents that can take actions, manage workflows, and coordinate across systems instead of merely responding to prompts. Microsoft’s guide treats that as the point where AI stops being a productivity add-on and starts becoming part of the operating model. (microsoft.com)

Microsoft is also trying to move the conversation away from narrow automation. The company’s language about human-led systems is important because it frames agents as labor multipliers rather than replacements for leadership. That makes the model easier to sell internally and externally, especially in regulated industries or employee-heavy functions where outright automation would trigger resistance. (microsoft.com)

At the same time, the Frontier Firm narrative has a competitive edge. Microsoft is effectively telling customers that the organizations that move fastest on agents will pull away in cost efficiency, service quality, and innovation. The company has backed that claim elsewhere with references to Frontier Firms using AI across more business functions and reporting stronger outcomes than slower adopters. (blogs.microsoft.com)

What changed from Copilot to agents

Copilot was a useful on-ramp because it normalized AI as a personal productivity layer. Agents raise the stakes because they can operate with more autonomy, access more data, and in some cases perform actions across enterprise systems. That means the organization must think about permissions, lifecycle, sharing, and risk in ways that are more complex than a standard assistant deployment. (microsoft.com)

Microsoft’s own internal language reflects this shift. The guide repeatedly distinguishes between personal assistants, simple low-risk declarative agents, and advanced enterprise agents built by professional developers. That distinction is crucial because the enterprise cannot govern all agents the same way without either over-controlling innovation or under-protecting sensitive workflows. (microsoft.com)

Governance as the Foundation

If there is a single throughline in the guide, it is that governance is not a brake on innovation; it is what makes innovation scalable. Microsoft’s governance approach starts with data hygiene, labeling, and permissions, because without that groundwork agents can easily surface content they should not expose. In other words, good governance begins long before an agent is published. (microsoft.com)

The matrixed governance model

Microsoft describes a matrixed approach that varies governance by risk, complexity, tool, and scope. Low-risk personal agents may need minimal oversight, while enterprise agents built with pro-code tools and custom connectors require security, privacy, accessibility, and responsible AI reviews. That is a practical way to avoid one-size-fits-all control structures that would either overwhelm IT or leave gaps. (microsoft.com)

The company’s example scenarios are especially revealing. A read-only knowledge agent created in Microsoft 365 Copilot, grounded only in approved content and accessible sites, may be self-service and low risk. A pro-code agent that can transform, write, or move data across enterprise systems demands a much deeper review process. The policy follows the capability, not the label. (microsoft.com)

This is where Microsoft’s internal experience with Microsoft 365 Copilot appears to have helped. The company says it already had a strong foundation for labeling, permissions, and data hygiene, which made it easier to extend those controls to agents. That suggests a broader lesson for customers: if your data estate is messy, your agent strategy will be fragile no matter how good the models are. (microsoft.com)
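The idea that policy follows capability can be made concrete with a small sketch. The tier names, the `AgentProfile` fields, the middle "elevated" tier, and the thresholds below are all illustrative assumptions, not Microsoft's actual policy; only the list of reviews for the highest tier is taken from the guide's description of pro-code enterprise agents.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    LOW = "low"
    ELEVATED = "elevated"   # assumed middle tier, not named in the guide
    HIGH = "high"


@dataclass(frozen=True)
class AgentProfile:
    """Hypothetical description of an agent submitted for publishing."""
    name: str
    pro_code: bool            # built by professional developers with code
    custom_connectors: bool   # reaches systems through custom connectors
    writes_data: bool         # can transform, write, or move data
    shared: bool              # scope extends beyond the person who built it


# Illustrative mapping from tier to reviews. The HIGH list mirrors the
# reviews the guide names for pro-code enterprise agents; the ELEVATED
# list is an assumption made for this sketch.
REVIEWS = {
    Tier.LOW: [],
    Tier.ELEVATED: ["security", "privacy"],
    Tier.HIGH: ["security", "privacy", "accessibility", "responsible_ai"],
}


def risk_tier(agent: AgentProfile) -> Tier:
    """Classify by what the agent can do, not by what it is called."""
    if agent.writes_data or (agent.pro_code and agent.custom_connectors):
        return Tier.HIGH
    if agent.pro_code or agent.custom_connectors or agent.shared:
        return Tier.ELEVATED
    return Tier.LOW


def required_reviews(agent: AgentProfile) -> list[str]:
    """Return a copy of the review list for the agent's tier."""
    return list(REVIEWS[risk_tier(agent)])


# Two hypothetical agents modeled on the guide's example scenarios.
knowledge_agent = AgentProfile("hr-faq", False, False, False, False)
data_mover = AgentProfile("ledger-sync", True, True, True, True)

print(risk_tier(knowledge_agent).value, required_reviews(knowledge_agent))
print(risk_tier(data_mover).value, required_reviews(data_mover))
```

The read-only knowledge agent falls through to the self-service tier with no reviews, while the data-moving pro-code agent picks up all four. A real matrix would likely weigh more dimensions, such as data sensitivity and audience size.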

Why governance must be risk-based

A rigid app-development approval model does not map cleanly to agentic tools. Agents can be built faster, proliferate more widely, and vary dramatically in autonomy and exposure. Microsoft’s response is to apply risk-based review logic, not blanket gates, so low-risk experimentation stays possible while high-impact use cases receive the scrutiny they deserve. (microsoft.com)

The company also leans heavily on lifecycle management. Agent ownership, periodic attestation, and removal when an employee leaves are all presented as key controls for preventing sprawl and orphaned tools. That sounds basic, but in large enterprises basic controls often become the difference between a manageable portfolio and a shadow AI ecosystem. (microsoft.com)

- Data hygiene is the first control surface.

- Permissions should match what users already can access.

- Risk tiering should determine review intensity.

- Lifecycle management must be user-based, not just system-based.

- Attestation helps eliminate stale or abandoned agents.

- Observability should be built in, not bolted on later.
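The lifecycle controls described above (ownership, periodic attestation, removal when the owner leaves) amount to a periodic sweep over an agent registry. The following is a minimal sketch of such a sweep. The record fields, the 180-day attestation interval, and the action names are hypothetical and are not drawn from the guide or from any Microsoft API.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Assumed attestation cadence; the guide says "periodic" without a number.
ATTESTATION_INTERVAL = timedelta(days=180)


@dataclass(frozen=True)
class AgentRecord:
    """Hypothetical registry entry for a published agent."""
    agent_id: str
    owner: str
    last_attested: date


def lifecycle_actions(
    agents: list[AgentRecord],
    active_employees: set[str],
    today: date,
) -> dict[str, str]:
    """Map agent IDs to the lifecycle action they need, if any."""
    actions: dict[str, str] = {}
    for agent in agents:
        if agent.owner not in active_employees:
            # Owner has left: the agent is orphaned and should be removed.
            actions[agent.agent_id] = "remove"
        elif today - agent.last_attested > ATTESTATION_INTERVAL:
            # Owner is still here but has not confirmed the agent recently.
            actions[agent.agent_id] = "request_attestation"
    return actions


registry = [
    AgentRecord("a1", "alice", date(2025, 11, 1)),
    AgentRecord("a2", "bob", date(2025, 3, 1)),
    AgentRecord("a3", "carol", date(2025, 12, 1)),
]
result = lifecycle_actions(registry, {"alice", "bob"}, today=date(2026, 1, 15))
print(result)
```

Here the agent owned by a departed employee is flagged for removal and the one with a stale attestation is sent back to its owner, while the recently attested agent needs nothing. Keying the sweep on the owner rather than on the hosting system is what makes lifecycle management user-based.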

Implementation and Maturity

Microsoft’s five-stage AI maturity model is one of the most useful parts of the guide because it translates a fuzzy aspiration into a sequenced program. The stages move from awareness and foundation, to pilot projects, to operationalization, to enterprise-wide adoption, and finally to transformation with agentic AI. That progression is sensible because organizations rarely jump straight from experimentation to autonomous workflows without building the institutional habits needed to support them. (microsoft.com)

From pilots to operating model

The company’s pilot stage emphasizes selective experimentation, business-value screening, and responsible AI review. That aligns with a familiar enterprise pattern: teams generate more ideas than they can scale, so the organization must learn how to pick the highest-value scenarios and stop weaker ones early. Microsoft’s guidance is explicit that the goal is not “more AI,” but better AI in the right places. (microsoft.com)

By the time organizations reach operationalization, governance becomes more formal. Microsoft points to steering teams, data councils, and the Office of Responsible AI as mechanisms for keeping the AI portfolio aligned with policy and business value. In effect, the company is saying that scaling agents requires a management layer as sophisticated as the technology itself. (microsoft.com)

The final stage is where the tone becomes more transformational. Microsoft describes embedding AI into the culture, using continuous improvement funnels, and expanding human-AI collaboration so routine work can be offloaded to agents. That is where the Frontier Firm label starts to feel less like branding and more like a design thesis for the enterprise. (microsoft.com)

Why maturity matters more than novelty

Many organizations are tempted to ask, “Which agent should we build first?” Microsoft’s answer is more disciplined: first ask which business objective you are trying to move, then decide what level of maturity is required. That prevents the common trap of building flashy demonstrations that fail to alter core workflows. (microsoft.com)

The maturity model also helps separate consumer enthusiasm from enterprise reality. A consumer can try an agent in minutes. An enterprise must think about compliance, support, ownership, measurement, and long-term maintenance. Microsoft’s framework acknowledges that asymmetry instead of pretending it can be wished away. (microsoft.com)

- Establish the business outcome first.

- Pilot only the most promising use cases.

- Formalize governance before scale.

- Build adoption and training into rollout.

- Track impact continuously, not intermittently.

Adoption and the Human Factor

Microsoft is unusually candid that technical readiness is not enough. The company says an AI-first mindset has to be cultivated through storytelling, peer examples, and structured learning if employees are going to move from passive users to active builders. That point matters because agent adoption is as much about psychology and habit as it is about tooling. (microsoft.com)

Building confidence before scaling ambition

One of the strongest ideas in the guide is that employees need to understand when to reuse an agent, when to create one, and where to go for help. That sounds simple, but it addresses a persistent enterprise problem: if people cannot tell whether an existing tool already solves their problem, they will either duplicate effort or never adopt the right solution. (microsoft.com)

Microsoft’s AI Agent Launchpad is a good example of how to operationalize adoption. The modular learning path moves employees from AI mindset to basic agent understanding, to discovery, to no-code building, and finally to Copilot Studio. The design reflects a practical insight: different workers need different depths of enablement, and not everyone should start at the same place. (microsoft.com)

Peer influence is another major lever. Microsoft’s Copilot builder champs model and its Viva Engage community reflect the company’s belief that adoption spreads faster when employees see colleagues like themselves succeeding with the tools. That is often more persuasive than executive messaging alone because it turns AI from an abstract initiative into a visible work habit. (microsoft.com)

A culture change, not a training event

The guide repeatedly stresses that agent adoption is a reset, not a continuation of the Copilot journey. That is insightful because some organizations assume once employees are comfortable with one AI tool, they will naturally transfer that comfort to autonomous agents. In reality, agent creation introduces new choices, new responsibilities, and new anxieties. (microsoft.com)

Microsoft’s advice is to tailor adoption by cohort, geography, and business function. Legal, sales, HR, engineering, and regional teams do not have the same needs, and trying to force a single campaign across all of them will dilute the message. Better adoption comes from precision, not volume. (microsoft.com)

- Storytelling creates understanding faster than policy documents.

- Peer champions make adoption feel local and credible.

- Modular learning reduces overwhelm.

- Cohort targeting prevents generic, ineffective campaigns.

- Success sharing normalizes experimentation.

- Leadership sponsorship makes AI feel strategic, not optional.

Support and Operational Readiness

Support for agents is where the practical challenges really begin. A static help desk model assumes stable tools and repeatable incidents, but agents evolve quickly and can differ greatly by use case, permissions, and builder sophistication. Microsoft’s answer is to combine embedded guardrails, IT oversight, and user education so support is distributed rather than centralized in a bottleneck. (microsoft.com)

Self-governing by design

The company’s ideal state is that agent-building and publishing tools will eventually include guardrails by default, reducing the need for intervention. That is a strong design principle because it pushes responsibility into the platform rather than relying entirely on people to remember every rule. In large enterprises, good defaults often outperform perfect policies. (microsoft.com)

Still, Microsoft is realistic that this is not the end state yet. For now, Microsoft Digital performs reviews, provides guidance, and backstops the system where the platform cannot yet self-govern. That hybrid model is likely what most large organizations will need for the foreseeable future. (microsoft.com)

AI is also beginning to support the support function itself. Microsoft cites internal agents used for compliance assistance, risk profile evaluation, and checks against responsible AI, security, privacy, and access standards. That is important because it shows the company moving beyond AI for end users and into AI for IT operations. (microsoft.com)

Avoiding the support trap

The big risk here is recreating old service-desk patterns in a new environment. If every new agent requires manual human review, the enterprise will slow itself down and turn support into a gate rather than an enabler. Microsoft seems determined to avoid that by automating routine checks and reserving human judgment for edge cases. (microsoft.com)

That has implications for staffing and operating models. Support teams will need to shift from “answer every question” to “design the conditions in which most questions never arise.” It is a subtle but significant change, and it mirrors the broader theme of the Frontier Firm: humans should be focused on exceptions, not repetitive administration. (microsoft.com)

- Embedded guidance reduces preventable issues.

- IT oversight handles riskier scenarios.

- AI-assisted support should take on routine checks.

- Human support should focus on edge cases.

- Pain points are a signal for where to build the next agent.

- Self-service resources reduce dependency on help desks.

Measuring Impact

Measurement is where many AI programs falter, and Microsoft is right to treat it as its own workstream. The guide makes a strong distinction between agent volume, agent usage, and agent value. That distinction is essential because a large number of created agents can still produce little or no business effect, while a small number of highly used agents may deliver outsized returns. (microsoft.com)

What actually counts as value

Microsoft says measurement has to vary by agent type, persona, data access, and whether a tool is created for discovery or direct use. That is the correct framework because the value of a personal productivity agent is different from the value of a line-of-business workflow agent or an enterprise control-plane agent. The wrong metric can make the right project look weak. (microsoft.com)

The company also emphasizes cascading business goals into KPIs. That is a familiar management principle, but it becomes more important with agents because the effect chain can be indirect. An agent may reduce steps, improve quality, accelerate decision-making, or free up capacity rather than producing a single obvious output. (microsoft.com)

Microsoft’s use of telemetry tools like Viva Insights, Microsoft 365 admin center, Power BI, and internal trackers shows how messy this work can be in practice. The introduction of Agent 365 is important because it promises a more unified registry, observability, and interoperability layer. That suggests Microsoft sees measurement not as a dashboard problem but as a control-plane problem. (microsoft.com)

Why observability is now strategic

Observability in an agent ecosystem is about more than counting events. It is about knowing what exists, how it behaves, who owns it, what it touches, and whether it contributes to the organization’s goals. Without that, an enterprise cannot confidently scale its agent portfolio or decide which agents should be promoted, constrained, or retired. (blogs.microsoft.com)

Microsoft’s broader frontier messaging reinforces this point. The company now talks about intelligence layers, trusted semantic foundations, and unified views of AI artifacts. That makes observability sound less like a monitoring add-on and more like the nervous system of the Frontier Firm. (blogs.microsoft.com)

- Volume is not the same as value.

- Usage is useful, but incomplete.

- Persona-based metrics matter.

- Workflow automation is a stronger signal than raw creation counts.

- Telemetry should be part of the design from day one.

- ROI needs to be tied to business outcomes, not vanity metrics.
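The distinction between volume, usage, and value is easy to blur in a single dashboard number, so it helps to see the three computed side by side. The sketch below uses made-up figures and a hypothetical value proxy (hours saved per week); the guide does not prescribe these fields or this metric, and a real program would pull them from telemetry rather than hard-coding them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentStats:
    """Hypothetical weekly telemetry for one agent."""
    name: str
    weekly_active_users: int
    hours_saved_per_week: float   # assumed proxy for business value


def portfolio_summary(stats: list[AgentStats]) -> dict[str, float]:
    """Report volume, usage, and value as three separate numbers."""
    volume = len(stats)                                         # agents created
    in_use = sum(1 for s in stats if s.weekly_active_users > 0)  # agents used
    value = sum(s.hours_saved_per_week for s in stats)           # value proxy
    return {"volume": volume, "in_use": in_use, "hours_saved": value}


# Illustrative portfolio: many agents exist, few are used, one carries the value.
portfolio = [
    AgentStats("expense-helper", 400, 120.0),
    AgentStats("meeting-notes-v1", 0, 0.0),
    AgentStats("meeting-notes-v2", 0, 0.0),
    AgentStats("policy-lookup", 3, 0.5),
]
summary = portfolio_summary(portfolio)
print(summary)

# The single agent that contributes the most value.
top = max(portfolio, key=lambda s: s.hours_saved_per_week)
print(top.name)
```

In this invented portfolio, four agents exist, two are in use, and nearly all of the value comes from one of them, which is the pattern the guide warns that raw creation counts will hide.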

Enterprise vs. Consumer Impact

One of the most useful subtexts in the guide is the contrast between consumer-style AI adoption and enterprise-grade AI deployment. Consumers can experiment freely with limited consequences. Enterprises cannot, because they must manage confidential data, regulatory obligations, shared ownership, and lifecycle risk at scale. (microsoft.com)

Different worlds, different rules

That difference explains why Microsoft’s guide leans so heavily on policies, catalogs, approval pathways, and role-based oversight. A consumer might care whether an AI tool is convenient. An enterprise must also care whether it is durable, auditable, and safe enough to place inside a business process. The stakes are simply not the same. (microsoft.com)

It also explains why Microsoft keeps returning to the idea of the data estate. Consumer AI can tolerate imperfections in grounding and permissions because users can choose to ignore outputs that look off. Enterprise AI cannot rely on that safety valve, because the wrong answer might trigger compliance exposure, customer harm, or operational loss. (microsoft.com)

That is why Microsoft treats governable access, not just model capability, as the core enterprise challenge. The best agent in the world is still a liability if it can see too much, do too much, or linger after its owner has left. (microsoft.com)

The broader market signal

For rivals and customers alike, the message is clear: the next competitive edge will not come from merely shipping more AI features. It will come from packaging those features into a governable, supportable, measurable system that enterprises can actually trust. Microsoft is betting that its combination of Copilot, Power Platform, Teams, Azure OpenAI, and Agent 365 will make that easier for customers to adopt at scale. (microsoft.com)

That also puts pressure on competing platforms to match Microsoft’s full-stack story. Standalone agent tools may be impressive, but large enterprises increasingly want integration with identity, data governance, collaboration, analytics, and security. Microsoft is positioning itself as the vendor that can connect those layers into one operating model. (blogs.microsoft.com)

Strengths and Opportunities

Microsoft’s guide is strongest when it treats agent adoption as an enterprise discipline rather than a product launch. The framework is broad enough to cover governance, implementation, support, and measurement, yet concrete enough to be useful to IT leaders trying to move beyond pilots. That combination gives the article real value as a blueprint, not just a vision statement.

- Clear maturity model that helps organizations sequence adoption.

- Risk-based governance instead of one-size-fits-all approvals.

- Strong emphasis on data hygiene as a prerequisite for agent success.

- Practical adoption programs like Launchpad and builder champs.

- Support automation that reduces dependency on human bottlenecks.

- Telemetry-first thinking that makes value tracking more credible.

- Enterprise-wide framing that aligns AI work with business outcomes.

Risks and Concerns

The guide is ambitious, but it also exposes how hard Frontier Firm transformation will be in practice. Many organizations will struggle to sustain the governance discipline Microsoft describes, especially if their data estate is fragmented, their ownership models are unclear, or their AI teams are already overloaded. There is also a real risk that enthusiasm for agents could outpace operational readiness.

- Agent sprawl could overwhelm governance if discovery and reuse are weak.

- Over-reliance on human review could slow adoption and recreate old bottlenecks.

- Weak data hygiene would undermine grounding and increase exposure risk.

- Measurement confusion could produce activity metrics instead of business metrics.

- Change fatigue may emerge if employees are asked to adopt too many new workflows at once.

- Support complexity could rise faster than self-service tooling matures.

- Shadow AI may proliferate if approved pathways are too slow or difficult to use.

Looking Ahead

Microsoft’s own trajectory suggests the Frontier Firm model is moving from internal doctrine to platform strategy. Agent 365, stronger observability, and the company’s growing emphasis on intelligence layers all point toward a future where agent governance becomes a first-class enterprise control surface. That is likely to shape how Microsoft packages AI for customers in the next wave of product and service releases. (blogs.microsoft.com)

What matters most now is execution. Enterprises do not need more abstract language about the future of work; they need a repeatable way to decide which agents to build, how to govern them, how to support them, and how to prove that they matter. Microsoft’s guide is persuasive because it attempts to answer all four questions at once. (microsoft.com)

- Build an agent portfolio with explicit ownership.

- Tie every deployment to a measurable business outcome.

- Make governance and observability part of the architecture.

- Invest in workforce enablement before scale.

- Use agent data to refine both policy and product design.

Source: Microsoft Becoming a Frontier Firm: A guide for deploying AI agents based on our experience at Microsoft - Inside Track Blog