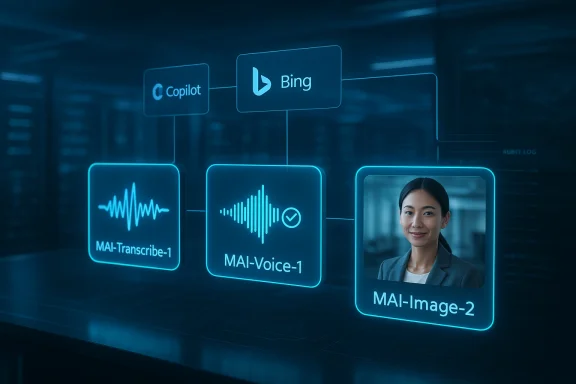

Microsoft is making a sharper bid for control of its AI future, and the implications go well beyond a single model launch. With MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2, the company is not just adding capabilities to Foundry and Copilot; it is signaling that it wants to own more of the stack that defines modern AI experiences. That matters because Microsoft has long been viewed as an enterprise gateway to other people’s models, but this release suggests a more ambitious identity: a platform owner with its own model family, its own economics, and its own product agenda. The move also raises a bigger question for customers and competitors alike: when Microsoft can deliver in-house models that are fast, efficient, and good enough to stand beside the best in the market, how dependent does it really need to be on OpenAI? Microsoft’s own Foundry blog says the new models are part of “another step towards” a more complete AI platform, and that framing is hard to ignore.

The next few months should reveal whether Microsoft is willing to loosen the experience enough to make these models broadly useful, or whether it will keep them tightly constrained in the name of safety and control. That balance will shape adoption more than any benchmark table will.

Source: Cloud Wars Microsoft Doubles Down on In-House AI With MAI Voice, Transcription, and Image Models

Background

Microsoft’s AI story has evolved in stages, and each stage has reduced the company’s reliance on outside partners. At first, the company’s strongest generative experiences were largely powered by OpenAI models inside products like Copilot and Bing. That arrangement delivered speed and market relevance, but it also meant that Microsoft’s consumer and enterprise experience depended heavily on another company’s release cadence, safety choices, and product roadmap. The release of MAI-Image-1 and earlier MAI-branded models marked the first clear sign that Microsoft wanted a bigger role in model development itself, not just distribution.

That shift is now visible in the way Microsoft is talking about Foundry. The April 2, 2026 announcement describes the new models as a public preview and positions them as foundational building blocks for a more complete AI and app-agent factory. Microsoft is emphasizing practical utility, not just benchmark prestige. In speech, that means transcription and voice generation tuned for enterprise efficiency; in imagery, it means a model designed with designers and photographers, but optimized for workflow usefulness rather than novelty alone.

The timing also matters. Microsoft has been investing in its own AI infrastructure, including dedicated silicon and inference economics, which makes internal models more attractive than ever. If the company can lower cost per task while improving latency and output quality, it gains a much stronger position inside consumer and enterprise products alike. That is why these model launches feel strategic rather than cosmetic: they are not isolated demos, but pieces of a broader platform ownership strategy.

There is also a competitive backdrop that makes this move especially significant. In speech, the field is crowded with systems from Google, OpenAI, and specialized vendors. In image generation, Microsoft is now competing in a space where users care about realism, text rendering, prompt adherence, and editing flexibility. The company does not need to win every benchmark, but it does need to prove that its in-house stack is strong enough to anchor products people already use every day. That is a very different ambition from simply hosting best-in-class third-party models.

Why this announcement landed now

Microsoft has been steadily preparing customers for a more integrated AI platform. Foundry has become the company’s main venue for model access, deployment, and experimentation, while Copilot and Bing remain the consumer-facing distribution engines. By launching MAI models into both surfaces, Microsoft is building a feedback loop: model capability feeds product adoption, and product adoption feeds model relevance. That is a classic platform play, and it is also a subtle declaration that Microsoft wants to be judged as a model developer, not merely a model broker.

- Model ownership gives Microsoft more pricing and packaging flexibility.

- Foundry distribution makes the models useful to developers, not just consumers.

- Copilot integration turns model quality into day-to-day user experience.

- Bing and PowerPoint rollout extend the reach into familiar Microsoft surfaces.

- Enterprise governance becomes easier when the stack is more internally controlled.

MAI-Transcribe-1: Speech Recognition as a Cost-and-Speed Play

The most immediately enterprise-relevant launch is MAI-Transcribe-1, Microsoft’s speech-to-text model for the 25 most-used languages. Microsoft says the model is 2.5 times faster than its Azure Fast offering for batch transcription, while also delivering lower word error rates than several prominent systems, including GPT-Transcribe, Scribe v2, Gemini 3.1 Flash, and Whisper-large-v3. On paper, that combination of speed and quality is exactly what large-scale transcription customers want: enough accuracy to trust, enough throughput to scale, and enough cost efficiency to deploy broadly.

That performance profile matters because transcription is no longer a niche feature. It underpins meeting notes, accessibility tools, content indexing, call-center analysis, video workflows, and increasingly, voice agents. Microsoft’s positioning makes clear that MAI-Transcribe-1 is not intended as a toy for demos; it is meant to be a production model for high-volume workloads. The move from “good transcription” to “economical transcription at scale” is the real differentiator here, especially for enterprises that process large audio volumes every day.

The enterprise economics are the real story

A transcription model that is faster and cheaper can become a hidden operational advantage. If a company transcribes customer calls, video content, training material, or multilingual meetings at high volume, small improvements in throughput and word error rates compound quickly. Microsoft’s own documentation emphasizes the model’s high accuracy and high efficiency, and the Foundry blog stresses competitive accuracy at roughly half the GPU cost of leading alternatives. That combination suggests Microsoft is thinking beyond model bragging rights and into usage economics.

For enterprise buyers, this is the kind of feature that quietly matters in procurement conversations. A model that reduces GPU cost while preserving enough accuracy can unlock new workloads that were previously too expensive to automate. It also gives Microsoft a chance to pitch an integrated platform rather than a patchwork of point tools.

- Lower cost per transcription reduces operating expense.

- Faster batch throughput supports high-volume workflows.

- Better word accuracy lowers downstream editing time.

- Multilingual coverage helps global organizations standardize processes.

- Azure integration simplifies deployment and governance.
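
To make the economics concrete, here is a rough back-of-the-envelope sketch in Python. It uses only the two figures quoted above (2.5 times faster batch transcription than Azure Fast, and roughly half the GPU cost of leading alternatives). Note that those two claims are measured against different baselines, so the sketch keeps them as separate calculations. The function names and the sample workload numbers are hypothetical and are not taken from any Microsoft documentation.

```python
# Illustrative arithmetic only. The two ratios come from the claims quoted
# in the article; every function name and sample input here is hypothetical.

SPEEDUP_VS_AZURE_FAST = 2.5  # claimed batch speedup relative to Azure Fast
GPU_COST_RATIO = 0.5         # "roughly half the GPU cost" of leading alternatives


def projected_batch_hours(baseline_hours: float) -> float:
    """Hours a batch would take if the claimed 2.5x speedup held exactly."""
    if baseline_hours < 0:
        raise ValueError("baseline_hours must be non-negative")
    return baseline_hours / SPEEDUP_VS_AZURE_FAST


def projected_gpu_cost(baseline_cost: float) -> float:
    """GPU spend if the claimed cost ratio held exactly (same currency as input)."""
    if baseline_cost < 0:
        raise ValueError("baseline_cost must be non-negative")
    return baseline_cost * GPU_COST_RATIO


if __name__ == "__main__":
    # Hypothetical workload: a nightly batch that takes 10 hours today
    # and a monthly GPU bill of 1,000 (any currency).
    print(f"Batch time: 10.0 h -> {projected_batch_hours(10.0):.1f} h")
    print(f"GPU spend: 1000.00 -> {projected_gpu_cost(1000.0):.2f}")
```

Real savings would depend on audio mix, language, and pricing terms, none of which the announcement specifies, so treat the output as an upper-level estimate rather than a forecast.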

Where MAI-Transcribe-1 fits in practice

Microsoft says the model is intended for use cases like video captioning, meeting transcription, accessibility tooling, call analysis, and voice-agent enablement. Those are not abstract examples; they are the kinds of workloads that often determine whether AI is treated as a strategic capability or a pilot project. If transcription is fast, accurate, and affordable enough, it becomes something companies embed everywhere rather than ration carefully.

The model also fits a broader trend in enterprise AI: customers increasingly want models that are good enough, predictable, and easy to operationalize. That is especially true in regulated sectors, where compliance and retention rules matter as much as raw model intelligence. Microsoft’s Foundry-centric distribution suggests it understands that reality.

MAI-Voice-1: Natural Speech, Faster Generation, and Custom Voices

MAI-Voice-1 is Microsoft’s strongest push yet into in-house speech generation. It is now being rolled out to developers through Foundry and the MAI Playground, and Microsoft says users can create a custom voice from a short audio snippet. The company also highlights a dramatic efficiency claim: the model can generate roughly a minute of audio per second, with pricing starting at $22 per 1 million characters.

That pricing and performance structure makes the model interesting in two separate ways. First, it lowers the barrier to production use in conversational AI, long-form narration, and accessibility tools. Second, it strengthens Microsoft’s position in a category where latency and naturalness are both important and difficult to optimize at once. A voice model that is expressive but clunky is not useful; a model that is fast but flat is equally limited. Microsoft’s message is that MAI-Voice-1 aims to solve both problems at once.
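
The quoted pricing and speed figures translate into simple arithmetic, sketched below in Python. The rate ($22 per 1 million characters, described as a starting price) and the "roughly a minute of audio per second" figure are from the announcement as reported here. The function names and the sample script length are hypothetical, and actual billing may differ by tier or region.

```python
# Illustrative arithmetic only. The price and speed constants are the figures
# quoted in the article; function names and sample inputs are hypothetical.

PRICE_PER_MILLION_CHARS_USD = 22.0    # starting price quoted in the article
AUDIO_SECONDS_PER_WALL_SECOND = 60.0  # "roughly a minute of audio per second"


def estimate_tts_cost_usd(num_chars: int) -> float:
    """Estimated cost in USD to synthesize num_chars characters of text."""
    if num_chars < 0:
        raise ValueError("num_chars must be non-negative")
    return num_chars / 1_000_000 * PRICE_PER_MILLION_CHARS_USD


def estimate_generation_seconds(audio_seconds: float) -> float:
    """Approximate wall-clock seconds to generate audio_seconds of speech."""
    if audio_seconds < 0:
        raise ValueError("audio_seconds must be non-negative")
    return audio_seconds / AUDIO_SECONDS_PER_WALL_SECOND


if __name__ == "__main__":
    chars = 500_000  # hypothetical long-form narration script
    print(f"{chars:,} characters: about ${estimate_tts_cost_usd(chars):.2f}")
    print(f"1 hour of audio: about {estimate_generation_seconds(3600):.0f} s to generate")
```

At these figures, a half-million-character script would cost about $11 at the starting rate, and an hour of finished audio would take on the order of a minute to generate, which is why the claim matters for interactive and long-form use alike.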

Why voice generation is suddenly strategic

Voice has become a core interface for AI because it feels faster and more natural than typing in many scenarios. That is especially true for copilots, assistants, avatar systems, customer-service bots, and content narration. Microsoft’s own language around the model reflects that shift: it is not just about synthetic speech, but about human-centered communication that fits real workflows.

The company’s Foundry documentation also frames MAI-Voice-1 as a neural text-to-speech model with expressive, natural output and consistent persona quality. That matters because a voice model is only as good as its perceived stability over time. If a brand or product wants to sound coherent, it needs more than a convincing one-off demo; it needs a repeatable vocal identity.

- Expressive speech improves listener engagement.

- Fast generation supports interactive experiences.

- Custom voices create branding opportunities.

- Long-form narration opens publishing and media workflows.

- Accessible audio can help users who rely on spoken content.

Custom voices, governance, and trust

The ability to create a custom voice from a short audio sample is one of the most commercially important parts of the launch, but it is also one of the most sensitive. Voice cloning can be enormously useful for brands, educators, accessibility advocates, and app builders. It can also be abused for impersonation and fraud. Microsoft appears aware of that tension; its Foundry blog says custom voice creation requires an approval process aligned with responsible AI policies, which is exactly the sort of friction enterprises usually want when speech identity is involved.

That approval layer will matter. In consumer settings, people often value convenience above nuance. In enterprise settings, however, trust is the product. A company may accept slightly more friction if it means better control, clearer audit trails, and lower legal risk. Microsoft is betting that responsible restrictions will make MAI-Voice-1 easier, not harder, to adopt.

The product opportunity inside Microsoft’s ecosystem

The best-case scenario for Microsoft is that MAI-Voice-1 becomes an embedded layer across Copilot, narration tools, training applications, accessibility features, and enterprise agents. In that world, the model is not competing only on “voice quality,” but on how well it fits into the Microsoft stack. That is a much stronger position, because distribution often beats novelty.

The company has already built the channels. Foundry gives developers access. Azure Speech provides enterprise plumbing. Copilot gives consumer and productivity exposure. If the model proves stable, Microsoft can place it in front of millions of users without forcing them to learn a new tool.

MAI-Image-2: Microsoft’s Bid for Practical Image Generation

MAI-Image-2 may be the most visible of the three models, but its strategic role is also the most nuanced. Microsoft says it was developed in collaboration with creatives in photography and design, and it emphasizes improved clarity, accurate skin tone replication, and more natural lighting. The model is available through Foundry and Copilot, and Microsoft says it is rolling out to Bing and PowerPoint as well. That combination tells you a lot: this is not just a creative sandbox, but a model intended to live inside everyday productivity surfaces.

The image-generation market has moved far beyond the question of whether a model can make a picture. Users now care about whether it can render usable text, maintain visual consistency, follow prompts precisely, and produce assets that are trustworthy enough to publish. Microsoft appears to be optimizing MAI-Image-2 for that second-order problem: not artistic surprise, but practical utility.

What Microsoft seems to be optimizing for

If MAI-Image-2 is tuned for photorealism and campaign-ready visuals, then Microsoft is making a very deliberate choice. It is not trying to out-Midjourney Midjourney, nor does it need to win every stylistic contest against OpenAI or Google. Instead, it seems to be chasing a model that can live inside PowerPoint, Bing, Copilot, and internal enterprise workflows without feeling second-rate. That is a useful lane because it aligns with Microsoft’s strengths in distribution and workflow integration.

The reported enterprise praise from WPP, one of the first companies scaling the model, reinforces this framing. Creative leaders do not praise a model like this because it is flashy; they praise it because it is usable. That distinction matters a great deal.

- Photorealism improves professional credibility.

- Accurate skin tones matter in marketing and portrait work.

- Natural lighting reduces the synthetic look common in AI images.

- Text rendering supports slides, posters, and layouts.

- Workflow fit makes the model more than a standalone generator.

Copilot, Bing, and PowerPoint are the real distribution engines

Microsoft’s biggest advantage here is not the model itself, but where the model lands. If MAI-Image-2 becomes the default backend in Copilot or Bing Image Creator, then Microsoft can normalize its visual style across products used by consumers, students, marketers, and enterprises. If it lands in PowerPoint, the model becomes relevant to one of Microsoft’s most important productivity workflows. That is much more powerful than a standalone image app.

In practice, that means Microsoft can make image generation feel mundane in the best possible way: something users do during real work, not just for experimentation. The more that happens, the more Microsoft controls the creative layer of its own ecosystem.

Competitive implications

This is where the launch starts to look like a market statement. Microsoft is now competing with OpenAI, Google, Adobe, and Midjourney not just as a partner or host, but as a model owner. That does not mean the company must beat all of them in every benchmark. But it does mean it can no longer hide behind integration as its only AI story. The company has to prove that its own models are good enough to be defaults.

That matters because defaults shape user behavior. If the output is good enough and the workflow is smooth enough, most users will not care what model family is underneath. They will just use the tool already available inside their Microsoft environment.

Microsoft Foundry as the Control Plane

One of the most important details in this launch is not the models themselves, but the venue. Microsoft Foundry is where the company is concentrating its developer-facing AI strategy, and the new MAI models are designed to reinforce that position. Microsoft’s Foundry blog describes the announcement as another step toward the most complete AI and app-agent factory, which is a strong signal that the company wants a unified place for model access, experimentation, deployment, and governance.

That matters because platform power often depends on where developers start. If the first place they try a Microsoft model is Foundry, then Microsoft gets more than usage; it gets mindshare, telemetry, and a better chance to shape how applications are built. In AI, the control plane is as important as the model plane.

Foundry is about more than demos

A lot of AI launches are still optimized for splashy demos. Microsoft’s approach here is more industrial. By putting MAI-Transcribe-1 and MAI-Voice-1 into Foundry and Azure Speech, the company is making it easier to move from trial to deployment. That’s an important distinction because enterprise AI only matters when it can survive procurement, compliance review, and scaling pressure.

The official Microsoft Learn documentation for MAI-Transcribe-1 and MAI-Voice-1 also reinforces the operational angle. These are not research curiosities; they are supported services with implementation pathways, sample workflows, and production considerations. That gives them staying power.

- Foundry centralizes developer access.

- Azure Speech anchors enterprise deployment.

- MAI Playground lowers the friction for experimentation.

- Governance controls help satisfy compliance teams.

- Model choice becomes part of Microsoft’s ecosystem strategy.

Why control matters in enterprise AI

Companies buying AI increasingly want predictability, and Microsoft understands that better than many rivals. Enterprises want to know where the model runs, how data is handled, what controls are available, and how easy it will be to audit the output later. A more vertically integrated Microsoft can provide those answers without relying as heavily on a partner’s roadmap.

That does not mean Microsoft is abandoning external models. It means the company is building optionality. In a market where model quality, cost, and safety can change quickly, optionality is power.

A mixed-model future looks likely

The strategic takeaway is not that Microsoft will stop using OpenAI. It is that Microsoft no longer wants to depend on OpenAI for everything that matters. A mixed-model strategy is increasingly normal in AI, and Microsoft seems intent on being one of the companies best positioned to execute it. That gives the company leverage on pricing, product design, and release timing.

It also gives customers more ways to buy in. Some will want Microsoft-native models for governance reasons. Others will want the best available model for a specific task. Foundry makes it easier to present both paths without forcing customers to leave the Microsoft ecosystem.

Consumer Impact: Familiar Surfaces, Lower Friction

For consumers, the most important part of this rollout is that it lands in places they already use. Copilot, Bing, and PowerPoint are familiar surfaces, and familiarity lowers the barrier to adoption. A user who can quickly generate a transcript, a voice clip, or an image without switching to a separate tool is far more likely to experiment, and experiments often become habits.

That is especially true for visual generation. If MAI-Image-2 is embedded into Bing or Copilot, the user experience becomes less about “going to an AI model” and more about getting something done. That may sound subtle, but it is how mainstream adoption usually happens.

Everyday use cases are the real consumer prize

Most consumers do not need cinematic perfection or studio-grade voice synthesis. They want useful, fast outputs for ordinary tasks. Microsoft’s models appear tuned for exactly that kind of demand, whether it is generating a birthday graphic, captioning a video, drafting a voice interaction, or turning a meeting into usable notes.

That makes the models feel less like novelty features and more like everyday utilities.

- Birthday cards and personal visuals.

- Social posts and quick promotional graphics.

- School projects and classroom materials.

- Meeting notes and transcript cleanup.

- Voice-based assistants for hands-free interaction.

But consumers are also less forgiving

The flip side is that consumer users notice friction fast. If an image model feels too constrained, if a voice feature sounds too generic, or if transcription misses obvious words, users will move on. Consumers do not evaluate a product in strategic terms; they just decide whether it feels generous, useful, and fun.

That means Microsoft’s rollout choices matter just as much as model quality. Tight limits can protect the platform, but they can also reduce excitement. The company has to walk a careful line between responsible control and visible usefulness.

Enterprise Impact: Governance, Cost, and Predictability

Enterprise customers will read this launch very differently from consumers. They are less interested in whether the models are exciting and more interested in whether the models are governable, affordable, and practical. Microsoft’s advantage is that it has spent decades selling into companies that care about compliance, administration, and procurement, and that experience gives it a head start in AI deployment.

The company’s in-house models strengthen that advantage. When Microsoft controls more of the stack, it can better manage policy, output quality, and integration with the tools enterprises already use. That makes the AI story easier to explain to IT and security teams.

Why enterprises may prefer Microsoft’s tighter posture

A model with approval steps, usage controls, and predictable behavior can be more attractive than a more permissive model that is harder to govern. This is especially true for voice cloning and image generation, where misuse risks are obvious. Microsoft’s approval process for custom voice creation suggests it knows the stakes and is willing to slow things down when necessary.

For many businesses, that will be a feature rather than a bug. They want innovation, but they also want a paper trail.

- Auditability supports governance reviews.

- Predictable pricing helps with budgeting.

- Workflow integration reduces adoption friction.

- Data control reassures compliance teams.

- Approval processes reduce misuse risk.

Enterprise uses are where the ROI appears

The enterprise opportunity is strongest where the models save time, reduce editing, or remove manual steps. Transcription can accelerate meetings and compliance records. Voice can power training and support. Image generation can speed up internal communications, mockups, and presentation work. These are not flashy use cases, but they are the ones that justify recurring spend.

That is why Microsoft’s integrated approach is so powerful. It can sell AI as a feature of systems enterprises already run, not as a separate experiment they must learn to manage.

Competitive Positioning: Microsoft, OpenAI, Google, Adobe, and Midjourney

Microsoft’s model launch lands in a crowded and increasingly mature market. OpenAI remains a major force in frontier model quality and consumer attention. Google is pushing its own multimodal stack across search and productivity. Adobe owns a critical slice of professional creative workflows. Midjourney still carries premium aesthetic cachet for many users. Microsoft’s answer is not to outdo all of them on every front; it is to own the workflows where models become useful in daily work.

That is an intelligent position, because workflow relevance is often more valuable than benchmark supremacy. A model that is slightly less glamorous but deeply embedded can still win.

Microsoft’s differentiator is distribution plus utility

The company has an enormous advantage in reach. If it can make MAI models the default inside Copilot, Bing, PowerPoint, and Azure Speech, it can normalize use faster than competitors that rely on standalone apps or niche creator communities. That advantage becomes even bigger if the models are good enough to eliminate most reasons for users to leave the Microsoft ecosystem.

Microsoft is not trying to be the coolest AI company in the room. It is trying to become the most unavoidable one.

- OpenAI still sets part of the frontier.

- Google competes on breadth and integration.

- Adobe owns professional creative workflows.

- Midjourney remains strong on aesthetic distinctiveness.

- Microsoft is betting on ubiquity, utility, and enterprise trust.

The OpenAI relationship becomes more complex

This launch also changes the politics of Microsoft’s partnership with OpenAI. The more Microsoft can supply from its own model stack, the more leverage it has over pricing, timing, and product design. That does not end the relationship, but it does make it more balanced. In strategic terms, that is probably exactly what Microsoft wants.

For customers, a more independent Microsoft may mean better product continuity and more specialized experiences. For Microsoft, it means the company can adapt faster if the market shifts.

Strengths and Opportunities

Microsoft has several clear strengths in this launch, and they line up unusually well with the direction of the AI market. The company is combining model ownership, distribution, enterprise trust, and workflow integration in a way that few rivals can match. If it executes well, these MAI models could quietly become defaults across huge parts of its ecosystem.

- In-house control reduces dependency on external model roadmaps.

- Foundry availability gives developers a direct path to adoption.

- Copilot integration creates a massive consumer and enterprise funnel.

- Azure Speech support makes deployment easier for existing customers.

- Cost efficiency can unlock larger-scale transcription workloads.

- Voice cloning controls help balance utility with responsible AI.

- PowerPoint rollout gives image generation immediate productivity value.

- Bing distribution makes the image model easy to try.

- Multilingual transcription broadens global applicability.

- Consistent persona quality makes voice workflows more usable.

Risks and Concerns

The biggest risk is that Microsoft over-controls the experience and makes the models feel narrower than they need to be. A technically strong AI model can still disappoint if the product layers around it are too restrictive, too cautious, or too slow to evolve. That is especially dangerous in consumer AI, where enthusiasm is fragile and users move quickly when a tool feels stingy.

- Over-filtering could frustrate legitimate creative use.

- Tight limits may reduce consumer excitement.

- Voice cloning carries clear impersonation and fraud risk.

- Image generation can trigger copyright and provenance concerns.

- Enterprise buyers will still demand proof of reliability at scale.

- Competitive pressure from Google and OpenAI will remain intense.

- Product fragmentation could arise if rollout policies differ too much across surfaces.

Looking Ahead

The most important question is not whether Microsoft has launched three good models. It clearly has. The real question is whether the company can translate these models into durable product habits inside Copilot, Bing, PowerPoint, and Foundry. If it can, this moment may be remembered less as a model announcement and more as the point where Microsoft became a true multi-model operator with a meaningful in-house AI identity.

The next few months should reveal whether Microsoft is willing to loosen the experience enough to make these models broadly useful, or whether it will keep them tightly constrained in the name of safety and control. That balance will shape adoption more than any benchmark table will.

- Rollout depth across Copilot, Bing, and PowerPoint.

- Developer uptake through Foundry and Azure Speech.

- Enterprise response to governance and pricing.

- Consumer reaction to limits, quality, and ease of use.

- Competitive responses from OpenAI, Google, Adobe, and Midjourney.

Source: Cloud Wars Microsoft Doubles Down on In-House AI With MAI Voice, Transcription, and Image Models