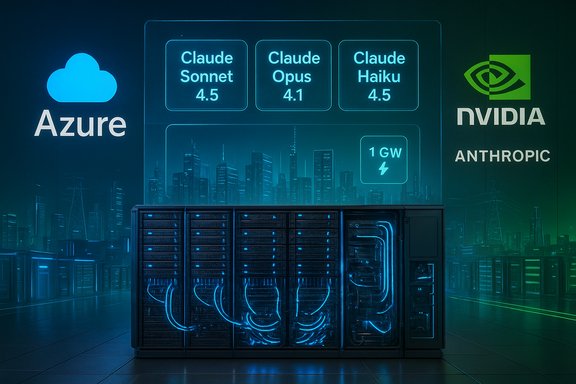

Microsoft, NVIDIA and Anthropic announced a sweeping three‑way strategic partnership that binds Anthropic’s Claude models to Microsoft Azure at massive scale, secures deep co‑engineering between Anthropic and NVIDIA on next‑generation hardware, and includes headline investment and compute commitments that together could reshape enterprise AI procurement and infrastructure planning.

Background

Anthropic, the San Francisco AI lab behind the Claude family of large language models, has moved from rapid startup growth to the center of a compute‑and‑capital arms race. Microsoft is positioning Azure as a multi‑model enterprise platform that can host and surface multiple frontier models inside its Copilot and Foundry toolsets. NVIDIA has evolved from GPU vendor to systems partner, building rack‑scale architectures (Grace Blackwell, Vera Rubin) that target the large memory and high bandwidth needs of today’s largest models. Together, the three companies are turning model development into an industrial‑scale collaboration that spans finance, data‑center engineering and product distribution.

Microsoft framed the move as a way to broaden enterprise model choice inside Azure and its Copilot family, while Anthropic gains committed, predictable capacity and deep hardware‑level optimization for Claude. NVIDIA gains guaranteed demand for its latest systems and a formal co‑design relationship intended to extract performance and efficiency gains for both sides. These shifts are visible in the headline numbers the companies announced and in the product integration commitments that accompanied them.

Deal specifics: what was announced

Headline commitments (verified)

- Anthropic committed to purchase roughly $30 billion of Microsoft Azure compute capacity over multiple years.

- Anthropic also signaled the option to contract additional dedicated capacity up to one gigawatt of NVIDIA‑powered compute (an electrical‑capacity ceiling, not a literal GPU count).

- NVIDIA committed to invest up to $10 billion in Anthropic and to establish a deep technology partnership to co‑design hardware and software.

- Microsoft committed to invest up to $5 billion in Anthropic and to expand Claude availability across Azure AI Foundry and Microsoft’s Copilot family (including GitHub Copilot and Copilot Studio).
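Because the one‑gigawatt ceiling in the list above is a power figure rather than a chip count, it helps to convert it into a rough accelerator estimate. The sketch below is back‑of‑envelope arithmetic under stated assumptions that do not come from the announcement: roughly 120 kW and 72 GPUs per rack (approximating an NVIDIA GB200 NVL72‑class rack) and a hypothetical power usage effectiveness (PUE) for facility overhead.

```python
"""Rough conversion of a power ceiling into an accelerator count.

All default figures are illustrative assumptions, not numbers from the
announcement: ~120 kW and 72 GPUs per rack approximate an NVIDIA
GB200 NVL72-class rack, and the PUE values are hypothetical.
"""
import math


def estimate_accelerators(
    power_budget_w: float,
    pue: float = 1.2,                 # hypothetical: total facility power / IT power
    rack_power_w: float = 120_000.0,  # assumption: ~120 kW per rack
    gpus_per_rack: int = 72,          # assumption: NVL72-style rack
) -> tuple[int, int]:
    """Return (racks, accelerators) that fit under power_budget_w.

    If the budget already refers to IT load, pass pue=1.0.
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    it_power_w = power_budget_w / pue              # power left for IT equipment
    racks = math.floor(it_power_w / rack_power_w)  # whole racks only
    return racks, racks * gpus_per_rack


if __name__ == "__main__":
    one_gw = 1e9
    for pue in (1.0, 1.2, 1.4):
        racks, gpus = estimate_accelerators(one_gw, pue=pue)
        print(f"PUE {pue}: ~{racks:,} racks, ~{gpus:,} accelerators")
```

Under these assumptions, 1 GW lands in the hundreds of thousands of accelerators, and changing rack power or PUE moves the answer substantially, which is one reason the companies quote power rather than chip counts.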

Product integrations and model availability

Microsoft said Anthropic’s frontier Claude models, cited in partner materials as Claude Sonnet 4.5, Claude Opus 4.1 and Claude Haiku 4.5, will be available to Azure AI Foundry customers and integrated across Microsoft’s Copilot offerings. According to the companies, that makes Claude the only frontier model family available on all three major cloud platforms (AWS, Google Cloud and now Azure). Some coverage reported slightly different version numbers, so the companies’ own product pages should be treated as definitive for exact model names and versions.

Technical implications: one gigawatt, Grace Blackwell and Vera Rubin

What “one gigawatt” really means

The oft‑cited one‑gigawatt figure is an electrical capacity metric, not a GPU count. Delivering a sustained 1 GW IT load implies multiple AI‑dense data halls, high‑capacity substations, advanced liquid cooling and network fabrics, and multi‑year utility commitments. Depending on per‑accelerator power draw and facility overhead, a 1 GW ceiling maps to hundreds of thousands of the latest accelerators, and to a capital outlay measured in the tens of billions of dollars once facility buildout, networking and power are included. That is why the announcement pairs an electrical ceiling with multi‑year Azure purchase commitments: the capacity is a planning and procurement footprint rather than an overnight deployment.

Target hardware: Grace Blackwell and Vera Rubin

The companies named NVIDIA’s Grace Blackwell family and the forthcoming Vera Rubin systems as the initial hardware platforms Anthropic will target. These platforms emphasize large memory pools, high interconnect bandwidth and rack‑scale topologies suited to very large model training and long‑context inference. Optimizing Claude for these architectures can yield measurable gains in throughput, latency and energy per token, but such gains typically require months of joint profiling, kernel and operator work, alignment on quantization strategy, and improvements to runtime compilation. That co‑engineering is the explicit objective of the Anthropic–NVIDIA tie.

Co‑design: why it matters

Model‑to‑silicon co‑design can unlock significant efficiency improvements by matching model topologies and numerical formats to hardware primitives (tensor cores, high‑bandwidth memory, NVLink/NVSwitch fabrics). For Anthropic, the payoff is lower inference cost, faster training iterations, and the ability to operate denser, lower‑latency deployments for enterprise applications. For NVIDIA, the payoff is a reference customer that helps validate system designs and shape future architectures around real, large‑scale workloads. That reciprocity is central to the pact, and it turns the vendor‑customer relationship into a joint engineering program.

Strategic analysis: what each party gains

Microsoft — diversify and fortify Azure’s AI proposition

- Model diversity: Adding Claude to Azure’s model catalog reduces single‑vendor concentration risk and gives enterprises the choice to route workloads to the model best suited for the task.

- Enterprise integration: Surfacing Claude across Microsoft 365 Copilot, GitHub Copilot and Copilot Studio makes model choice an operational setting inside organizations already standardized on Microsoft tooling.

- Revenue and capacity justification: The $30 billion Azure purchase commitment provides a predictable demand signal that justifies continued investment in purpose‑built AI infrastructure.

NVIDIA — system validation and market capture

- Guaranteed demand: Anthropic’s compute ceiling and its Azure placements are expected to translate into orders for Grace Blackwell and Vera Rubin systems, validating NVIDIA’s rack‑scale roadmap.

- Co‑design leverage: Working with a frontier model developer allows NVIDIA to tune upcoming architectures for workloads that will define the next performance bar, reinforcing its market leadership.
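One concrete lever in this kind of co‑design is numerical format: storing weights in lower‑precision formats that newer tensor cores accelerate (Blackwell adds FP4 support) shrinks the memory a model occupies, which in turn affects how many accelerators a single model replica must span. The sketch below is illustrative arithmetic only; the one‑trillion‑parameter model is hypothetical, since Anthropic does not publish Claude’s size, and only the bytes‑per‑parameter values are standard.

```python
"""Weight-memory footprint of a model under different numerical formats.

The parameter count used below is hypothetical; only the
bytes-per-parameter values are standard.
"""

BYTES_PER_PARAM = {
    "FP16/BF16": 2.0,
    "FP8": 1.0,
    "FP4": 0.5,
}


def weight_memory_gb(num_params: float, fmt: str) -> float:
    """Memory needed to hold the weights alone, in gigabytes (1e9 bytes).

    Ignores KV cache, activations and the per-block scaling factors that
    low-precision formats typically add.
    """
    return num_params * BYTES_PER_PARAM[fmt] / 1e9


if __name__ == "__main__":
    hypothetical_params = 1e12  # hypothetical 1-trillion-parameter model
    for fmt in BYTES_PER_PARAM:
        print(f"{fmt:>9}: {weight_memory_gb(hypothetical_params, fmt):,.0f} GB")
```

Moving this hypothetical model from 16‑bit to 4‑bit weights shrinks the weight footprint from about 2,000 GB to about 500 GB, although real low‑precision schemes add some overhead for scaling factors and can trade off accuracy.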

Anthropic — scale, predictability and distribution

- Scale economics: A long‑term Azure commitment stabilizes Anthropic’s unit economics for large‑scale inference and provides defined capacity windows for deployment.

- Distribution: Being formally available in Azure (in addition to AWS and Google Cloud) expands enterprise reach and simplifies procurement for customers standardized on Microsoft toolchains.

- Engineering partnership: Access to co‑design with NVIDIA reduces the technical risk of scaling very large models while improving throughput and TCO.
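The link between co‑design and unit economics is simple arithmetic: a watt is one joule per second, so energy per token equals power draw divided by token throughput. At fixed power, a throughput gain lowers energy (and electricity cost) per token in inverse proportion. The sketch below uses hypothetical rack power, throughput and electricity price, and counts electricity only, not hardware amortization or other TCO components.

```python
"""Energy and electricity cost per token from power draw and throughput.

All inputs are hypothetical placeholders, not measured Claude or NVIDIA
figures. Only electricity is counted, not hardware or facility costs.
"""

JOULES_PER_KWH = 3.6e6  # 1 kWh = 3,600,000 J


def joules_per_token(power_w: float, tokens_per_s: float) -> float:
    """A watt is one joule per second, so J/token = W / (tokens/s)."""
    if tokens_per_s <= 0:
        raise ValueError("throughput must be positive")
    return power_w / tokens_per_s


def energy_cost_per_million_tokens(power_w: float, tokens_per_s: float,
                                   usd_per_kwh: float) -> float:
    """Electricity cost in USD to generate one million tokens."""
    kwh_per_token = joules_per_token(power_w, tokens_per_s) / JOULES_PER_KWH
    return kwh_per_token * 1e6 * usd_per_kwh


if __name__ == "__main__":
    rack_power_w = 120_000.0  # hypothetical rack draw
    price = 0.08              # hypothetical electricity price, USD/kWh
    for label, tps in (("baseline", 100_000.0), ("30% more throughput", 130_000.0)):
        print(f"{label}: {joules_per_token(rack_power_w, tps):.3f} J/token, "
              f"${energy_cost_per_million_tokens(rack_power_w, tps, price):.4f} per 1M tokens")
```

With these placeholder numbers, a 30% throughput gain cuts energy per token by about 23% (1/1.3 ≈ 0.77), which is how co‑design improvements show up in inference unit economics.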

Strengths of the agreement

- Scale and predictability: The $30B Azure commitment anchors Anthropic’s capacity planning and gives Azure multi‑year visibility into enterprise demand.

- Deep technical alignment: Direct co‑engineering between Anthropic and NVIDIA promises measurable efficiency gains and better TCO for massive model deployments.

- Enterprise friendliness: Integrating Claude into Azure AI Foundry and Copilot surfaces reduces friction for businesses to adopt different frontier models in a governed, enterprise environment.

- Industry diversification: The pact reduces over‑reliance on any single model‑cloud pairing and creates a more multi‑sourced model ecosystem inside enterprise stacks.

Risks, unknowns and red flags

Circularity and concentration risk

The deal exemplifies a pattern in which hyperscalers and chipmakers become investors in, suppliers to, and sometimes customers of model developers, a circular arrangement that tightly binds capital, compute and product roadmaps. That circularity can accelerate innovation, but it also concentrates power and risk in a small number of interdependent players. Regulators and customers should watch for anti‑competitive effects and for preferential treatment among the partners that could distort competition.

Execution risk: time, power and permitting

Building and operating a 1 GW AI footprint is a multi‑year engineering and permitting project. Utility agreements, substations, liquid‑cooling installations and phased hardware deliveries are complex and regionally dependent. Because the one‑gigawatt figure is a planning ceiling rather than an immediate capability, enterprises and investors should treat it as an operational horizon, not a near‑term guarantee.

Financial and valuation uncertainty

Reported figures for Anthropic’s valuation and revenue projections vary widely, and the $30B compute commitment may be structured as reserved consumption, credits or staged purchases conditional on milestones. That variance makes any arithmetic linking investment size to ownership stakes or future revenue imprecise. Treat valuation quotes and revenue run‑rate claims with caution until formal filings or audited disclosures are available.

Vendor lock‑in and portability tradeoffs

Deep co‑optimization for NVIDIA systems improves performance on those platforms but raises the cost of porting models to other accelerators. Model bindings to specific vendor libraries or numerical formats can create long‑term switching costs, so enterprises that require cross‑accelerator portability should ask for clarity on performance and migration pathways.

Energy and environmental concerns

Gigawatt‑scale AI campuses consume substantial power. The growth in dedicated AI capacity intensifies scrutiny of energy sourcing, carbon footprint and local grid impacts. Enterprises and regions hosting such facilities will need transparent energy procurement plans and investments in efficiency and renewable sourcing.

Enterprise impact: what this means for IT leaders

- Broader model choice inside Azure: Teams will be able to select Claude variants for tasks where Claude’s safety profile, context length, or behavior suits the workload, while still using Azure governance, identity and compliance tools.

- Simplified procurement for Microsoft customers: Organizations already invested in Microsoft toolchains will find it easier to trial and adopt Claude models without adding a new cloud vendor for front‑line inference.

- New negotiation points in cloud contracts: Long‑term committed spend (e.g., reserved capacity, dedicated racks) and exit clauses will become salient — enterprises should negotiate clear SLAs, geo‑residency guarantees and capacity ramp terms.

- Governance complexity in multi‑model deployments: Routing, telemetry, provenance and liability will become central questions as organizations mix models by capability, cost and risk profile. IT and legal teams must update model governance policies accordingly.
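The routing, telemetry and provenance questions above can be sketched as a small policy function that picks a model per task and data‑sensitivity level, then emits an audit record for every decision. Everything here is hypothetical: the deployment names, task labels and sensitivity levels are placeholders, and the code does not use any Azure AI Foundry or Copilot API.

```python
"""Minimal multi-model routing policy with a provenance record.

Deployment names, task labels and sensitivity levels are hypothetical
placeholders; this does not call any Azure AI Foundry or Copilot API.
"""
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Route:
    model: str           # hypothetical deployment name
    max_data_class: int  # most sensitive data level allowed (0 = public)


# Hypothetical policy: task type -> preferred route.
POLICY = {
    "code_review": Route(model="claude-deployment-a", max_data_class=2),
    "summarize": Route(model="model-deployment-b", max_data_class=1),
}
# Hypothetical fallback approved for more sensitive data.
FALLBACK = Route(model="internal-approved-default", max_data_class=3)


def route(task: str, data_class: int) -> Route:
    """Return the preferred route if it may see this data, else the fallback."""
    preferred: Optional[Route] = POLICY.get(task)
    for candidate in (preferred, FALLBACK):
        if candidate is not None and data_class <= candidate.max_data_class:
            return candidate
    raise PermissionError(f"no approved model for data class {data_class}")


def provenance_record(task: str, data_class: int, chosen: Route) -> str:
    """Serialize which model served which request, for audit and telemetry."""
    return json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "task": task,
        "data_class": data_class,
        "route": asdict(chosen),
    })


if __name__ == "__main__":
    chosen = route("summarize", data_class=2)  # too sensitive for route B
    print(chosen.model)  # internal-approved-default
    print(provenance_record("summarize", 2, chosen))
```

Keeping the policy as data and logging every decision means legal and IT teams can review which model handled which class of request without reading application code.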

What to watch next — practical milestones and signals

- Contract structures and timelines: Watch for the fine print on the $30B Azure commitment — duration, tranche structure, and exit provisions will determine commercial risk.

- Capacity rollouts: Regional and facility announcements tied to specific Grace Blackwell or Vera Rubin deployments will indicate how fast the partnership moves from commitment to live capacity.

- Model performance and portability benchmarks: Independent benchmarks and enterprise pilot reports showing Claude performance on NVIDIA‑tuned racks will validate co‑design claims.

- Regulatory reaction: Antitrust or national security reviews could emerge if the pact is seen to materially reduce competition among cloud, silicon and model vendors.

- Energy and sustainability plans: Public commitments on renewable energy sourcing and PUE (power usage effectiveness) for new data halls will be an important operational indicator.
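PUE, listed above as an indicator to watch, is the ratio of total facility energy to the energy delivered to IT equipment, so a PUE of 1.2 means 20% overhead for cooling, power conversion and other facility loads. The sketch below turns an IT load and PUE into annual energy; the 1 GW load, full utilization and PUE of 1.2 are hypothetical inputs, not disclosed figures for any Anthropic, Microsoft or NVIDIA site.

```python
"""Annual energy for a facility at a given IT load and PUE.

PUE = total facility energy / IT equipment energy. Load figures are
hypothetical; real sites rarely run at constant full load.
"""

HOURS_PER_YEAR = 8760  # non-leap year


def annual_energy_twh(it_load_w: float, pue: float,
                      utilization: float = 1.0) -> tuple[float, float]:
    """Return (IT energy, total facility energy) per year, in TWh."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    it_twh = it_load_w * utilization * HOURS_PER_YEAR / 1e12  # Wh -> TWh
    return it_twh, it_twh * pue


if __name__ == "__main__":
    it, total = annual_energy_twh(1e9, pue=1.2)
    print(f"IT: {it:.2f} TWh/yr, facility: {total:.2f} TWh/yr, "
          f"overhead: {total - it:.2f} TWh/yr")
```

At full utilization, a 1 GW IT load consumes 8.76 TWh a year before overhead; a PUE of 1.2 adds roughly another 1.75 TWh for cooling and power distribution.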

How enterprises should prepare — a checklist

- Review existing cloud contracts to understand exit terms, reserved capacity commitments, and how vendor investments could affect pricing or preferential capacity.

- Update model governance: add routing rules, provenance tracking, and risk mitigation for multi‑model, multi‑cloud deployments.

- Benchmark models against internal KPIs (latency, cost per token, hallucination rates) before replacing or adding models in production.

- Ask vendors for portability guarantees or mitigations if you rely on cross‑accelerator or multi‑cloud redundancy.

- Factor energy and sustainability into capacity planning: require transparency on PUE, renewable sourcing and demand‑response arrangements.
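The benchmarking item in this checklist can be turned into a small, repeatable summary over pilot logs: median and tail latency, total and per‑million‑token cost, and the share of responses a reviewer flagged as suspected hallucinations. This is a minimal sketch under stated assumptions: the log record shape, the review flag and the prices are hypothetical, and it uses only Python’s standard library.

```python
"""Summarize pilot-call logs into latency percentiles, cost and a review rate.

The log schema and the per-million-token prices are hypothetical; plug in
your own telemetry and contracted rates.
"""
import statistics
from dataclasses import dataclass


@dataclass
class Call:
    latency_s: float
    input_tokens: int
    output_tokens: int
    flagged: bool = False  # hypothetical: reviewer marked a suspected hallucination


def summarize(calls: list[Call], usd_per_m_in: float,
              usd_per_m_out: float) -> dict[str, float]:
    if len(calls) < 2:
        raise ValueError("need at least two calls for percentiles")
    latencies = [c.latency_s for c in calls]
    # n=100 yields the 1st..99th percentile cut points; "inclusive" keeps
    # them within the observed range.
    pct = statistics.quantiles(latencies, n=100, method="inclusive")
    tokens_in = sum(c.input_tokens for c in calls)
    tokens_out = sum(c.output_tokens for c in calls)
    total_tokens = tokens_in + tokens_out
    cost = tokens_in / 1e6 * usd_per_m_in + tokens_out / 1e6 * usd_per_m_out
    return {
        "p50_latency_s": pct[49],
        "p95_latency_s": pct[94],
        "total_cost_usd": cost,
        "usd_per_m_tokens": cost / total_tokens * 1e6 if total_tokens else 0.0,
        "flagged_rate": sum(c.flagged for c in calls) / len(calls),
    }


if __name__ == "__main__":
    pilot = [Call(lat, 1000, 500, flagged=(lat == 5.0))
             for lat in (1.0, 2.0, 3.0, 4.0, 5.0)]
    # Hypothetical prices: $3 per 1M input tokens, $15 per 1M output tokens.
    print(summarize(pilot, usd_per_m_in=3.0, usd_per_m_out=15.0))
```

Running the same summary for each candidate model on identical prompts gives a like‑for‑like comparison against internal KPIs before any production switch.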

Conclusion — measured optimism with cautious oversight

The Microsoft–NVIDIA–Anthropic pact is a landmark industrialization move for frontier AI. It pairs massive commercial commitments with technical co‑design promises that could materially lower model TCO and broaden enterprise access to cutting‑edge models inside familiar Microsoft tooling. At the same time, the arrangement crystallizes the circular funding and supply patterns that have become characteristic of the AI era, concentrating influence in a handful of large players and raising execution, regulatory and environmental questions that warrant scrutiny.

For enterprises, the immediate opportunities are real: more model choice, easier procurement inside Azure, and potentially better performance on optimized hardware. The prudent path forward is to treat the headline numbers as statements of strategic intent rather than guarantees, demand contract clarity, strengthen governance for multi‑model operations, and insist on transparency about timelines, portability and sustainability as the partners execute on their commitments.

Source: it-online.co.za Microsoft, Nvidia, Anthropic in strategic partnership - IT-Online