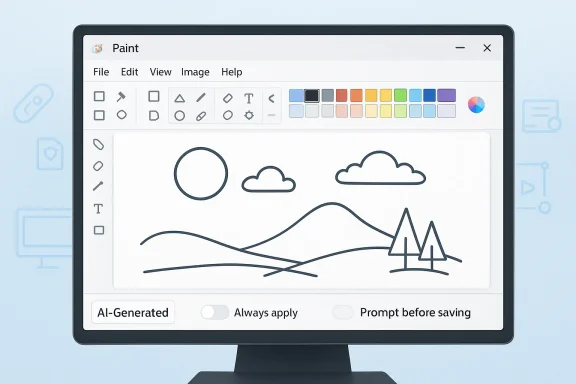

Microsoft Paint is taking another small but telling step into the AI era, and this time the change is less about generating images than about labeling them. According to the latest Insider chatter around Paint version 11.2601.421.0, Microsoft is adding an option to watermark AI-generated images, with the setting turned off by default and choices that let users always apply the mark or be prompted each time before saving. That may sound like a modest quality-of-life tweak, but in the broader context of generative AI, it is a clear signal that Microsoft is increasingly worried about provenance, trust, and the internet’s growing mountain of unlabeled synthetic media.

Overview

The Paint update lands at a moment when the conversation around generative AI has shifted from novelty to consequence. In 2023 and 2024, the industry’s focus was largely on making image tools easier, faster, and more impressive. By 2026, the hard questions have become unavoidable: who made this image, was it edited, and should anyone trust it? Microsoft’s own support material for Paint already says images created with Image Creator or other AI features in Paint include a C2PA manifest to help users identify them as AI-generated.

That matters because the new watermark option is not emerging in a vacuum. Microsoft has been steadily expanding AI features across Paint, Photos, and Microsoft 365, while also layering provenance and disclosure mechanisms on top. Microsoft Support says AI-generated content in Microsoft 365 can be watermarked, and that the watermark may appear as an icon or even text such as “AI-Generated.” It also says metadata is added even when the watermark is not enabled.

This is the real story behind the headline: Microsoft is trying to make AI output more legible without making the tool feel punitive. Paint is still the same approachable, low-friction app millions of users know, but it is now being asked to solve a much messier modern problem. The shift is subtle, yet it reflects a broader industry move toward content provenance and away from the old assumption that every image can be treated as equally trustworthy just because it can be saved as a PNG.

The tension is obvious. Microsoft wants AI features to remain easy enough for casual users, but it also has an incentive to show regulators, enterprise buyers, and the public that it is not blind to abuse. A watermark toggle, especially one that is optional and off by default, is a compromise between creative freedom and accountability. It is also a quiet acknowledgement that “AI slop” is not just an internet meme anymore; it is a product-design problem.

Background

Microsoft has been building AI into Paint gradually rather than all at once. In late 2024, the company began rolling out new AI experiences in Paint and Notepad to Windows Insiders, and Microsoft later expanded support for Image Creator and generative features in the app. By the time Microsoft’s support documentation was updated, Paint was no longer a nostalgic bitmap editor with a few gimmicks attached; it had become a small but visible entry point into Microsoft’s broader Copilot ecosystem.

The provenance angle is equally important. Microsoft says Paint-generated images contain a C2PA manifest, which is intended to help identify AI-generated content through standardized content credentials. C2PA is not a watermark in the visual sense; it is a technical disclosure layer embedded in the file metadata. That means Microsoft has already been signaling that transparency should be part of the default AI experience, even if the implementation is not obvious to ordinary users.

The move also fits into a much larger Microsoft pattern. Photos gained Designer integration, and Microsoft has repeatedly pushed AI editing and generation into consumer-facing Windows apps. In each case, the company has paired capability with guardrails: content filtering, abuse prevention, and warnings about deceptive use. The message has been consistent, if not always loud: Microsoft wants AI features that feel mainstream, but it does not want to be seen as careless about misuse.

What is changing now is the level of urgency. As AI-generated visuals flood feeds, marketplaces, documents, and even scam campaigns, provenance alone is often not enough. Metadata can be stripped, and invisible credentials do not always survive redistribution. That is why visible watermarks are becoming more attractive: they are harder to ignore, easier to understand, and more useful when an image is viewed out of context.

Why Paint matters more than it seems

Paint is not Adobe Photoshop, and that is precisely why it matters. Features added to Paint are exposed to a mass audience that includes students, hobbyists, small businesses, and casual users who may never read a product policy. When Microsoft adds a transparency option here, it is effectively testing a disclosure norm at consumer scale.

That makes the app a useful bellwether. If a watermark setting can be introduced in Paint without turning users away, Microsoft can use the same trust logic elsewhere. If it backfires, the company learns something important about how much friction ordinary users will tolerate in exchange for better disclosure.

What the new watermark option actually changes

The most important thing about the reported Paint feature is not that it exists, but that it is optional. Microsoft is apparently not forcing a watermark onto every AI-created image by default. Instead, users can choose whether they want it always applied or whether they want to be asked each time before saving. That is a familiar Microsoft pattern: provide control, but make the ethically safer path visible.

That design choice reveals a lot. It suggests Microsoft knows mandatory labeling could annoy casual creators who use AI for memes, mockups, or quick concept art. At the same time, the company likely understands that a purely invisible credential is not enough in an environment where content gets screenshotted, reposted, and remixed constantly. Optional watermarking is therefore a nudge, not a mandate.
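The reported three-way choice (off by default, always apply, or ask before saving) can be sketched as a small decision function. This is only an illustration of the described behavior, not Paint's actual implementation, which is not public: the names `WatermarkSetting`, `should_watermark`, and `ask_user` are hypothetical.

```python
from enum import Enum
from typing import Callable, Optional


class WatermarkSetting(Enum):
    """Hypothetical model of the three reported options."""
    OFF = "off"        # reported default: never add a visible mark
    ALWAYS = "always"  # always mark AI-generated images on save
    ASK = "ask"        # prompt the user each time before saving


def should_watermark(
    setting: WatermarkSetting,
    is_ai_generated: bool,
    ask_user: Optional[Callable[[], bool]] = None,
) -> bool:
    """Decide whether a visible watermark is applied at save time."""
    if not is_ai_generated:
        # Assumption: the option only concerns AI-generated images.
        return False
    if setting is WatermarkSetting.ALWAYS:
        return True
    if setting is WatermarkSetting.ASK:
        if ask_user is None:
            raise ValueError("ASK requires a callback that prompts the user")
        return bool(ask_user())
    return False  # OFF


# Example: with the default setting, nothing is marked.
print(should_watermark(WatermarkSetting.OFF, is_ai_generated=True))
```

Modeling the prompt as a callback keeps the decision separate from the user interface, which is one plausible way to make each of the three paths easy to test.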

The difference between watermarks and content credentials

A watermark is designed for people. It is visible, immediate, and legible even when the file has been stripped of metadata. A C2PA manifest is designed for systems and platforms. It can preserve provenance information in a standardized way, but it does not help much when the image is pasted into a document or captured on a phone screen.

Microsoft’s approach effectively layers both. The file can carry credentials, while the user can choose to display a watermark. That is a smart product choice because it addresses both technical authenticity and human perception.
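A toy example can make the difference concrete. The sketch below builds a tiny grayscale PNG whose bright corner stands in for a visible mark and whose `tEXt` chunk stands in for embedded metadata, then drops every ancillary chunk the way a re-encoding tool might. The text chunk is only a placeholder: a real C2PA manifest is a signed structure and is not modeled here, and all function names are hypothetical.

```python
import struct
import zlib

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def make_chunk(chunk_type: bytes, data: bytes) -> bytes:
    """Serialize one PNG chunk: length, type, data, CRC over type + data."""
    crc = zlib.crc32(chunk_type + data) & 0xFFFFFFFF
    return struct.pack(">I", len(data)) + chunk_type + data + struct.pack(">I", crc)


def build_png(rows, label):
    """Build an 8-bit grayscale PNG carrying a tEXt chunk with a label."""
    width = len(rows[0])
    ihdr = struct.pack(">IIBBBBB", width, len(rows), 8, 0, 0, 0, 0)
    raw = b"".join(b"\x00" + row for row in rows)  # filter type 0 per scanline
    text = b"Comment\x00" + label.encode("latin-1")
    return PNG_SIGNATURE + b"".join([
        make_chunk(b"IHDR", ihdr),
        make_chunk(b"tEXt", text),  # placeholder for embedded metadata
        make_chunk(b"IDAT", zlib.compress(raw)),
        make_chunk(b"IEND", b""),
    ])


def iter_chunks(png):
    """Yield (type, data) for each chunk after the signature."""
    pos = len(PNG_SIGNATURE)
    while pos < len(png):
        (length,) = struct.unpack(">I", png[pos:pos + 4])
        yield png[pos + 4:pos + 8], png[pos + 8:pos + 8 + length]
        pos += 12 + length  # 4 length + 4 type + data + 4 CRC


def strip_ancillary(png):
    """Keep only critical chunks, whose type starts with an uppercase letter."""
    kept = [make_chunk(t, d) for t, d in iter_chunks(png) if t[:1].isupper()]
    return PNG_SIGNATURE + b"".join(kept)


def pixel_rows(png):
    """Decode pixel rows, assuming filter type 0 as written by build_png."""
    chunks = list(iter_chunks(png))
    width = struct.unpack(">I", dict(chunks)[b"IHDR"][:4])[0]
    raw = zlib.decompress(b"".join(d for t, d in chunks if t == b"IDAT"))
    stride = width + 1
    return [raw[i + 1:i + stride] for i in range(0, len(raw), stride)]


# A 4x4 image whose bright bottom-right corner plays the visible mark.
rows = [bytes(4), bytes(4), bytes([0, 0, 255, 255]), bytes([0, 0, 255, 255])]
original = build_png(rows, "AI-generated (toy label)")
stripped = strip_ancillary(original)

print([t.decode() for t, _ in iter_chunks(original)])
print([t.decode() for t, _ in iter_chunks(stripped)])
print(pixel_rows(stripped) == rows)
```

After stripping, the text chunk is gone but the pixels, including the bright corner, are unchanged. The reverse also holds: cropping away the corner would remove the visible mark while leaving any metadata in place, which is one reason layering the two is attractive.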

Why off by default is not a trivial detail

Turning the option off by default is a small UI decision with large implications. It preserves the easy, frictionless feel of Paint for people who do not want to think about provenance every time they generate an image. It also avoids creating the impression that Microsoft is branding its own AI features as inherently suspicious.

But off by default also means adoption will depend on user intent. In practice, that may limit the impact of the feature unless Microsoft later decides to encourage or require disclosure in more contexts.

The anti-“AI slop” argument

The phrase “AI slop” has become a shorthand for low-effort, mass-produced synthetic content that clutters the web and cheapens visual communication. Whether one likes the term or not, the phenomenon is real. Marketplaces are full of generic AI artwork, social feeds are crowded with dubious images, and users increasingly have to ask whether a picture was created by a person, a prompt, or a bad actor.

Microsoft’s Paint watermark feature reads like a response to that environment. By giving users a direct way to label AI output, the company is acknowledging that trust is now a core feature of content creation, not just a policy footnote. That is significant because it moves the discussion from “Can the tool generate this?” to “Should the output be clearly disclosed?”

A visible mark can also serve a practical social function. It reduces ambiguity in casual sharing, and it gives recipients a simple clue that they are looking at synthetic or altered imagery. In a world where misinformation spreads faster than context, even a basic indicator can matter.

The limits of a watermark

That said, watermarks are not a cure-all. A determined user can crop them out, blur them, or re-export the file through another app. If the underlying goal is fraud or deception, a watermark will not stop bad actors from trying to evade it.

The more realistic benefit is cultural rather than absolute. Watermarks can normalize disclosure, make honest users easier to identify, and raise the cost of casual deception. That may sound modest, but modest is often how platform norms start.

Microsoft’s broader provenance strategy

If you step back, the Paint update looks less like a one-off feature and more like part of a broader Microsoft provenance strategy. Support documentation for Paint says AI-generated images include C2PA manifests, while Microsoft 365 can add watermarks to AI-generated images, video, and audio. Microsoft also says some metadata is added even when the visible watermark is not enabled.

This layered model is sensible because it acknowledges different audiences. Consumers need visible cues. Enterprises need auditability. Platforms need machine-readable provenance. Microsoft is effectively trying to serve all three without overcomplicating the product surface.

Why C2PA keeps showing up

C2PA is becoming increasingly important because it offers a shared technical language for provenance across vendors. Microsoft’s repeated references to it indicate that the company wants its AI features to sit inside an emerging ecosystem rather than inventing a proprietary labeling scheme.

That matters for interoperability. If more apps, cameras, editors, and publishing platforms support the same standard, provenance becomes easier to preserve across the content lifecycle.

Enterprise versus consumer priorities

For enterprise users, provenance is about compliance, brand risk, and internal accountability. A marketing team does not just need pretty images; it needs to know where those images came from and whether they can be used safely. A watermark or credential can help with that, though it will not replace policy review.

For consumers, the stakes are looser but more immediate. They want convenience and creative freedom, yet they also need some signal that an image is synthetic if they are sharing it publicly. Microsoft appears to be trying to keep both audiences inside the same product lane.

Why this matters for Windows and the AI race

Paint has always been symbolic. It is one of those Windows apps that almost everyone recognizes, even if few people use it every day. When Microsoft adds an AI transparency feature to Paint, it sends a message that responsible AI is not confined to enterprise dashboards or research demos; it is baked into the most ordinary layers of Windows.

That has competitive implications. Google, Adobe, Canva, and other creative platforms are all under pressure to address synthetic content labeling in ways that are understandable to users. Microsoft does not need Paint to become a dominant creation suite; it just needs it to become a credible example of how everyday software can handle AI responsibly.

The update also reinforces Windows as an AI-native operating system rather than a passive container for apps. Paint, Photos, Notepad, and Copilot are all being pulled into the same ecosystem story. That is good for Microsoft’s platform positioning, especially if regulators keep asking whether consumer AI tools should disclose more clearly what they produce.

The subtle brand benefit

There is also a reputational upside. Microsoft can point to features like content credentials and watermarking as evidence that it is not simply shipping generative AI and hoping for the best. In the current climate, that matters.

When users worry about deepfakes, scams, and synthetic clutter, a visible disclosure feature becomes part safety measure, part PR statement, and part product differentiator.

What users will likely notice first

Most people will not think about C2PA manifests, metadata integrity, or provenance standards the first time they use the feature. They will notice something much simpler: whether their image gets marked, whether the mark is ugly, and whether it feels intrusive. That means the success of the feature will depend heavily on how the watermark looks and where it appears.

If the mark is too obvious, casual users may reject it. If it is too subtle, it may fail at the very purpose it is supposed to serve. Microsoft will have to walk a narrow line between legibility and usability.

Common user reactions to expect

A feature like this typically produces a few predictable reactions:

- Some users will welcome it as a trust signal.

- Others will disable it immediately to keep images clean.

- Power users may treat it as a workflow preference.

- Casual users may not even notice it until they save their first AI image.

- Enterprise admins may see it as a welcome policy tool.

- Creators concerned about aesthetics may dislike visible branding.

- Skeptics will argue that bad actors will just remove it anyway.

Competitive pressure and industry trends

Microsoft is not alone in this direction. The broader tech industry has been inching toward content disclosure, provenance tracking, and visible labeling for years, though with varying seriousness. What makes Microsoft notable is the scale of its distribution. When a company with Windows, Office, and Copilot starts making provenance accessible in mainstream tools, the market tends to pay attention.

That also raises the pressure on competitors. If Microsoft can make transparency feel native rather than bureaucratic, other vendors may have to follow. If not, the feature may become one more example of good intentions that failed to land with consumers.

The market’s real challenge

The real challenge is that synthetic content is now cheap, fast, and everywhere. The industry can no longer rely on users being careful or skeptical by default. Tools have to assume that images will travel across platforms, lose context, and be judged in seconds.

That is why visible disclosure features matter. They do not solve the problem, but they help create a norm where AI output is expected to identify itself.

Strengths and Opportunities

Microsoft’s move has several strengths, and they are bigger than the feature’s size suggests. The update is modest in UI terms, but it aligns Paint with a much broader trust-and-provenance agenda, which is exactly where the AI market is heading.

- It makes AI disclosure visible instead of hidden in metadata.

- It supports user choice rather than imposing a one-size-fits-all policy.

- It fits Microsoft’s existing C2PA and content-credentials strategy.

- It improves trust for enterprise workflows where provenance matters.

- It gives casual users a simple way to signal honesty.

- It strengthens Microsoft’s responsible AI branding.

- It can scale across other Windows and Microsoft 365 experiences.

Risks and Concerns

The feature is promising, but it is not free of trade-offs. Optional disclosure can be useful, yet it also creates inconsistency: some images will be labeled, others will not, and users may not understand why. That inconsistency can undermine the very transparency the feature is trying to improve.

- Off-by-default settings may reduce real-world adoption.

- Watermarks can be cropped out or removed by bad actors.

- Users may confuse a watermark with a guarantee of authenticity.

- Too much visual branding can make the tool feel less creative.

- Metadata-based provenance may not survive sharing across apps.

- Optional labeling may produce uneven norms across communities.

- The feature may become symbolic if Microsoft does not expand it further.

Looking Ahead

If Microsoft keeps pushing in this direction, Paint could become a template for how mainstream apps handle AI content responsibly. The most important test is whether the company can make disclosure feel natural rather than burdensome. If it can, this feature may look small today but significant in hindsight.

The next phase will likely depend on three things: whether the watermark reaches broader rings beyond Insiders, whether Microsoft makes the setting easier to discover, and whether the company extends similar labeling logic to other consumer tools. If that happens, the April 2026 Paint update may be remembered as one of those quiet moments when Windows started treating AI provenance as a first-class product feature.

- Watch for a wider rollout beyond Windows Insider builds.

- Watch for consistency with Microsoft 365 watermark behavior.

- Watch whether the feature becomes linked to broader policy controls.

- Watch whether Microsoft exposes more granular admin management.

- Watch whether other Windows apps adopt the same disclosure model.

Source: thewincentral.com Microsoft Paint Update Adds Watermark Option for AI Images - WinCentral