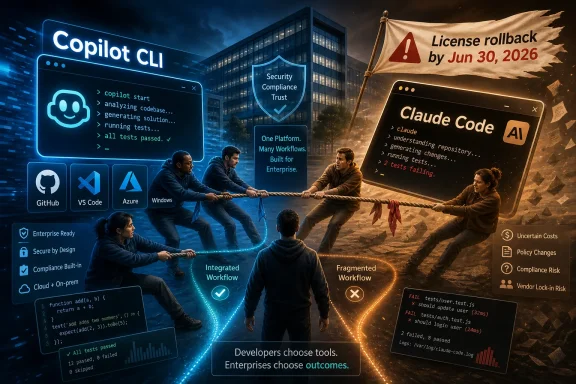

Microsoft is reportedly canceling most internal Claude Code licenses in its Experiences and Devices organization by June 30, 2026, after giving thousands of employees access to Anthropic’s coding assistant in late 2025 and early 2026. The official story, if Microsoft gives one, will probably sound like tool consolidation. The more interesting story is that a rival developer interface became useful enough inside Microsoft to become politically inconvenient. In the AI coding wars, success is not just measured by adoption; it is measured by who owns the workflow when adoption happens.

Microsoft’s Claude Experiment Became a Copilot Problem

The striking part of the reported rollback is not that Microsoft wants its employees to use Microsoft tools. That is the default gravity of every large platform company. The striking part is that Claude Code appears to have been invited in, tested at scale, and then shown the door once it proved it could win real developer affection inside the same company that sells GitHub Copilot.

That makes this more than a procurement adjustment. Claude Code was not merely a SaaS subscription sitting unnoticed in a corner of the balance sheet. According to the reporting now circulating, Microsoft opened access to thousands of internal users, including people outside traditional software engineering roles, as part of a broader push to make AI-assisted coding available across the company.

That context matters because it changes the interpretation of the cancellation. If a tool fails, killing it is housekeeping. If a tool succeeds, killing it is strategy.

Microsoft has spent the past several years turning Copilot from a GitHub feature into a company-wide organizing principle. Copilot is in Windows, Microsoft 365, Azure, security products, developer tools, and enterprise workflow software. It is no longer just a brand; it is the name Microsoft gives to the AI layer it wants customers, developers, and employees to touch first.

Claude Code, by contrast, belongs to Anthropic. Even when used inside Microsoft, even when governed by Microsoft procurement and security rules, it represents a competing center of gravity. If internal teams learn to solve problems through Claude’s command-line agent rather than Microsoft’s Copilot tooling, Microsoft has a strategic leak in the one place it most wants coherence: its own engineering culture.

The Command Line Is the New Platform Fight

For years, the obvious battlefield for coding assistants was the editor. GitHub Copilot’s original genius was not merely model quality; it was placement. It arrived where developers were already working, inside the IDE, turning autocomplete into a new kind of programming interface.

The newer fight is different. Tools like Claude Code and Copilot CLI move the contest into the terminal, where software is built, tested, deployed, debugged, and stitched together. The command line is less glamorous than a polished enterprise dashboard, but it is often closer to the real work.

That is why Microsoft’s reported pivot to Copilot CLI is so revealing. A CLI agent does not merely suggest the next line of code. It can inspect a repository, run commands, edit files, summarize failures, and keep context across a task. Once developers start trusting such a tool, it becomes a companion in the workflow rather than an accessory to it.

In that world, the vendor that owns the coding assistant may eventually own the developer’s operational memory. The agent knows which commands failed, which files mattered, which tests were flaky, which dependencies were fragile, and which architectural compromises were made under deadline pressure. That is not just usage telemetry. It is organizational knowledge.

Microsoft understands that better than almost anyone. GitHub gives it a privileged position in the software supply chain, Visual Studio Code gives it reach on developer desktops, Azure gives it the cloud backend, and Copilot gives it a way to mediate all of those surfaces through AI. Letting Claude Code become the preferred internal path through that stack was always going to create tension.

The Partnership Era Was Always Going to Collide With Product Ambition

Microsoft’s AI posture has often looked expansive from the outside. The company is deeply tied to OpenAI, has made Anthropic models available in some Microsoft contexts, and has repeatedly pitched Azure as a place where customers can choose among multiple frontier models. That multi-model message is useful, especially for enterprise buyers who do not want to be locked into a single lab’s roadmap.

But there is a difference between offering model choice as cloud infrastructure and letting a rival application own the user experience. Microsoft can happily sell access to many models through Azure or package them into Copilot-branded features. The threat arrives when the rival product becomes the thing employees actually use.

Claude Code is not just a model endpoint. It is a product with a workflow, a personality, a set of defaults, and a habit-forming interface. Developers who like it do not say, “I prefer this model routing architecture.” They say, “This tool helps me ship.” That is a much more dangerous sentence for a platform company.

The same dynamic explains why Microsoft can both partner with Anthropic and compete with Anthropic. Enterprise AI is full of these uneasy arrangements. Cloud providers need model companies. Model companies need distribution. Software incumbents want the best models, but they do not want the model vendor to become the main interface through which customers experience software.

The reported Claude Code rollback is a case study in that boundary. Anthropic may be welcome as an ingredient. It is less welcome as the chef.

Cost Cutting Is Plausible, but It Is Not the Whole Story

There is an obvious financial explanation here: thousands of enterprise AI coding licenses are expensive, and Microsoft’s fiscal year ends on June 30. If a large internal division can cut a third-party subscription and move employees to a first-party tool, finance teams will rarely object.

That explanation is probably true as far as it goes. It is also incomplete. Microsoft is not a small company trying to shave costs from a developer tools budget because it lacks options. This is a company investing heavily in AI infrastructure, paying for enormous compute capacity, and pushing Copilot into nearly every commercial product line it operates.

The real issue is not whether Microsoft can afford Claude Code. The issue is whether Microsoft can afford to normalize it internally while asking customers to standardize on Copilot externally.

Internal tool choices send signals. When Microsoft engineers use a third-party AI coding agent because they find it better, customers notice. Rivals notice. Employees notice. A company can explain that away as experimentation for a while, but not indefinitely.

This is where the story becomes awkward for Microsoft. If Copilot CLI is already competitive, the migration should be relatively painless. If Claude Code was meaningfully better for many teams, the migration will feel like a product mandate overriding developer preference. Either way, the decision turns an internal tool comparison into a public referendum on Microsoft’s own AI coding stack.

Developers Will Judge the Migration by Friction, Not Strategy

For the engineers and adjacent workers affected by the reported change, the strategic logic will matter less than the Monday morning workflow. People who spent months building habits around Claude Code will care about whether Copilot CLI preserves their speed, context handling, repository understanding, and reliability.

That is the practical risk Microsoft now owns. AI coding tools are unusually personal because they sit inside the messy loop of thought, experiment, failure, and repair. A developer can switch project management systems and complain for a week. Switching an agent that has become part of how they reason through code can feel more invasive.

The disruption may be worse for the nontraditional users Microsoft reportedly encouraged to try AI-assisted development. Designers, product managers, and operations staff do not necessarily have the same fallback habits as professional developers. If Claude Code helped them automate a workflow or build small internal tools, migration is not just a matter of changing commands. It may mean relearning how to ask, verify, and recover when the agent gets stuck.

That is a subtle but important point for WindowsForum readers who administer software environments. Tool consolidation looks clean from a license dashboard. It is messier at the level of actual adoption, where shadow workflows, local scripts, prompt files, repository conventions, and team-specific practices accumulate quickly.

The same pattern will play out in ordinary enterprises. Many organizations are now discovering that AI coding assistants are not interchangeable subscriptions. They become part of the development process. Removing one means unwinding habits as well as contracts.

Copilot Has to Win on Merit Inside the House

The uncomfortable lesson for Microsoft is that internal mandates can force usage, but they cannot manufacture developer enthusiasm. If Copilot CLI is better, or even close enough while offering tighter enterprise controls, the rollback will fade into the normal churn of internal tooling. If it is worse in obvious ways, the decision will linger as one more example of platform strategy overruling craft.

This is not a trivial challenge. Claude’s reputation among many developers has been built around codebase reasoning, long-context work, and agentic task completion. Copilot has distribution, GitHub integration, brand recognition, and Microsoft’s enterprise muscle. The competition is not one-dimensional.

Microsoft’s advantage is that it can integrate Copilot into places Anthropic cannot easily reach on its own. Copilot can sit across GitHub, Azure DevOps, Visual Studio Code, Microsoft 365, Entra, Defender, and Windows management workflows. In theory, that makes Copilot a better enterprise assistant because it can understand more of the operational environment.

But integration is not the same as excellence. Developers tend to forgive ugly tools that are powerful and resent polished tools that slow them down. The terminal, especially, is a low-patience environment. If Copilot CLI adds friction, the brand will not save it.

That is why the reported cancellation is a gamble. Microsoft is not simply pulling a vendor license. It is betting that its own agentic coding experience can absorb users who have already tasted a strong alternative.

Enterprise AI Choice Is Narrowing Behind the Curtain

For customers, the broader implication is that the era of open-ended AI experimentation may be shorter than it looked. In 2023 and 2024, many organizations encouraged teams to try multiple assistants, compare outputs, and find whatever improved productivity. By 2026, the bill is arriving in the form of governance, procurement, data controls, and platform consolidation.

Microsoft is hardly alone here. Every major enterprise vendor wants to be the AI control plane for its customers. Salesforce wants agentic work to happen through Salesforce. Google wants Gemini embedded across Workspace and Cloud. Amazon wants Bedrock and Q to mediate enterprise AI consumption. Microsoft wants Copilot to be the default interface for work.

That does not mean customers will lose all model choice. In many cases, they will still be able to route tasks to different models behind the scenes. But the visible product layer is likely to consolidate around the platforms that already own identity, data, productivity software, and developer infrastructure.

This is where Microsoft’s internal move rhymes with its external pitch. Copilot is the unifying surface. The underlying model may vary, but the user should experience the assistant as Microsoft’s. That is strategically tidy, and it is also a classic platform maneuver.

For IT leaders, the lesson is to distinguish between model availability and product independence. A vendor may support multiple models while still insisting that all meaningful interaction happens through its own assistant. That can improve governance, but it can also reduce leverage.

Anthropic Loses More Than Seats

If the reporting is accurate, Anthropic is losing a high-profile internal deployment at one of the most important software companies in the world. The revenue from those licenses matters, but the feedback loop may matter more.

Having thousands of Microsoft employees use Claude Code across real repositories, real build systems, and real enterprise constraints would be a valuable proving ground. It would expose weaknesses, inspire features, and create internal champions at a company whose developer tooling choices influence the wider market. Losing that channel is not catastrophic, but it is meaningful.

The bigger risk for Anthropic is that this becomes a pattern. If large platform companies decide Claude is best consumed as a model inside their own branded assistants, Anthropic may find itself powerful at the intelligence layer but constrained at the product layer. That is a familiar trap in technology markets: the best component does not always become the dominant interface.

Anthropic has tried to avoid being merely a backend supplier. Claude Code is part of that effort, as are its broader agent and enterprise products. The company wants developers and knowledge workers to experience Claude directly, not only as an invisible option selected by another vendor’s router.

Microsoft’s reported action is therefore a reminder of the distribution problem facing every AI lab. Model quality wins attention. Workflow ownership wins markets.

Windows and Microsoft 365 Customers Should Watch the Pattern

This story may look like inside baseball for Microsoft employees, but it should matter to Windows administrators and Microsoft 365 tenants. Microsoft’s internal defaults often foreshadow external product pressure. When the company standardizes internally, it tends to refine the playbook it will later sell to customers.

Expect Microsoft to keep emphasizing Copilot as the manageable, auditable, enterprise-ready path for AI-assisted work. That pitch will resonate with many IT departments. A single assistant platform tied to Microsoft identity, compliance, logging, billing, and admin controls is easier to defend than a patchwork of third-party tools.

But the best governance story does not automatically produce the best user experience. The tension between central control and practitioner preference will define the next phase of enterprise AI adoption. Developers will want the tool that solves the problem. Security teams will want the tool they can monitor. Finance teams will want the tool already covered by a broader agreement.

Microsoft is trying to make those three answers the same answer: Copilot. The reported Claude Code cancellation shows how aggressively it may pursue that alignment, even inside its own walls.

That should prompt customers to ask harder questions before standardizing. Does the chosen AI coding assistant work well across the languages, repositories, and build systems the organization actually uses? Can teams export workflows if the tool changes? Are usage limits, model routing, and data retention policies transparent? Is the assistant being selected because it is best for the work, or because it is easiest for the vendor relationship?

Those questions will become more important as AI assistants move from novelty to dependency. A bad chatbot is annoying. A bad coding agent embedded into release engineering can become operational drag.

The Real Lock-In Is Habit

Traditional software lock-in was often contractual or technical. Data lived in one database. Files used one format. Licenses renewed on a three-year enterprise agreement. AI assistants introduce a softer kind of lock-in: habit.

A team that builds prompt conventions, repository instruction files, automation scripts, review workflows, and debugging rituals around one assistant is not free in any practical sense. It may be able to cancel the subscription, but it cannot instantly recover the accumulated working style. This is why the Microsoft-Claude story feels bigger than an internal license cleanup.

The same will be true for companies standardizing on Copilot, Claude Code, Gemini Code Assist, Amazon Q, Cursor, or any other agentic coding environment. The tool becomes part of how the organization thinks. It shapes what work feels easy, what work gets delegated, and which employees can safely operate outside their old skill boundaries.

That is especially relevant to Microsoft’s original reported experiment with broader employee access. The promise of AI-assisted development is not only faster programmers. It is that people who are not full-time programmers can build, modify, and automate more of their own work. That promise is powerful, but it makes tool continuity more important, not less.

When a professional developer loses a favorite assistant, they can often fall back on years of experience. When a product manager or designer loses the agent that made coding approachable, the loss may be more fundamental. The democratization story depends on stable trust.

Microsoft’s Message Is Clearer Than Its Optics

From Microsoft’s perspective, the logic is defensible. It owns GitHub. It sells Copilot. It needs to improve Copilot CLI. It wants to reduce redundant spending. It has security and compliance obligations around internal source code. It would be strange if the company did not try to consolidate developer AI activity around its own platform.

The optics are still awkward. A company that tells customers AI should be about openness, choice, and the right model for the job is reportedly removing a popular rival tool from many of its own developers. That does not make Microsoft hypocritical in a simple sense; enterprises routinely separate backend choice from frontend standardization. But it does expose the limit of the choice narrative.

The lesson is not that Microsoft cannot work with Anthropic. It can, and likely will continue to where the partnership serves Microsoft’s product strategy. The lesson is that Microsoft will prefer Anthropic inside Copilot to Anthropic instead of Copilot.

That distinction will shape the next year of enterprise AI. The winners may not be the labs with the best benchmark scores. They may be the companies that can turn intelligence into a governed, sticky, everyday interface. Microsoft has spent decades winning exactly that kind of fight.

The June 30 Cutoff Turns a Tool Preference Into a Platform Signal

The reported date matters because it gives the story a hard edge. June 30 is not just a convenient deadline; it is the end of Microsoft’s fiscal year. That makes the rollback legible as both a budget move and a strategic reset before the next planning cycle.

Here is what WindowsForum readers should carry forward as the dust settles:

- Microsoft is reportedly canceling most Claude Code licenses in at least part of its internal organization and steering affected users toward Copilot CLI by June 30, 2026.

- Claude Code’s reported popularity inside Microsoft makes the decision look less like a failed trial and more like a defensive platform move.

- Copilot CLI now faces a credibility test among users who have already experienced a competing agentic coding workflow.

- Anthropic loses not only license revenue but also a valuable enterprise feedback loop inside one of the world’s most consequential software companies.

- Enterprise customers should treat AI coding assistants as workflow infrastructure, not disposable productivity apps.

- The biggest risk for IT departments is assuming that model choice and product choice are the same thing.

Source: The Tech Buzz https://www.techbuzz.ai/articles/microsoft-starts-canceling-claude-code-licenses/