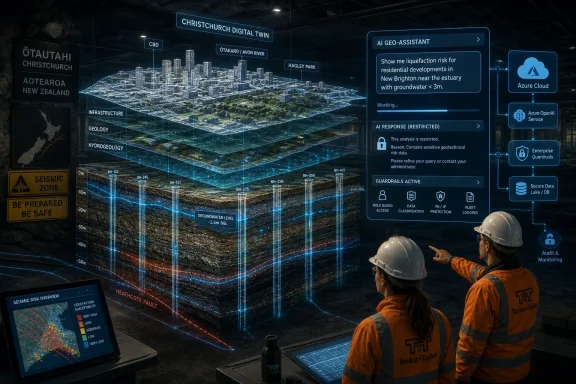

Microsoft Chairman and CEO Satya Nadella visited New Zealand on April 21, 2026, where Microsoft highlighted Beca’s Azure-powered BEYON digital twin platform and AI assistant for querying the New Zealand Geotechnical Database in natural language. The story is not simply that another professional dataset has acquired a chatbot. It is that Microsoft is trying to prove AI’s value in places where the cost of bad information is measured in concrete, capital, insurance, and public safety. For New Zealand, the pitch is sharper still: if the country must keep rebuilding around seismic risk, then the data beneath its cities needs to become easier to use without becoming easier to misuse.

Microsoft Finds a Better AI Demo Underground

The modern AI demo has become painfully familiar: a blank text box, a polished prompt, a tidy answer, and a claim that work has been transformed. Beca’s upgraded use of the New Zealand Geotechnical Database is a more interesting case because it begins somewhere messier. It starts with boreholes, cone penetration tests, hand augers, groundwater measurements, rock layers, legacy uploads, and the stubborn reality that the ground under a building site does not care how fluent a model sounds.

That makes this a stronger Microsoft story than another office-productivity vignette. The company is using Nadella’s New Zealand visit to show Azure OpenAI and Microsoft Foundry operating inside a technical workflow where search, filtering, access control, and guardrails matter as much as generation. In this version of the AI narrative, the assistant is not being asked to invent strategy slides. It is being asked to help engineers find the right evidence faster.

The distinction matters. Infrastructure professionals already live with software, maps, databases, GIS tools, and specialist modeling packages. Their problem is rarely the total absence of data. It is that useful data is scattered, unevenly structured, hard to compare, locked in old systems, or expensive to rediscover.

Microsoft’s bet is that generative AI becomes most credible when it is attached to a specific operational bottleneck. The assistant in BEYON is not supposed to replace geotechnical judgment. It is supposed to shorten the path between a question and the relevant logs, tests, and map layers that inform that judgment.

Christchurch Turned Soil Data Into National Infrastructure

The New Zealand Geotechnical Database was born from catastrophe, not convenience. After the February 2011 Christchurch earthquake killed 185 people and damaged much of the city center’s infrastructure, engineers needed rapid access to information about what lay below damaged buildings, homes, roads, and redevelopment sites. Subsurface conditions were no longer a background technical concern. They were central to decisions about repair, demolition, rebuilding, and risk.

That origin gives the database a weight that most enterprise data platforms do not have. This was not a speculative digital transformation project or a vendor-led modernization exercise looking for a business case. It was a response to a national emergency in which fragmented ground data slowed decisions at precisely the moment when speed and confidence were essential.

The problem Beca describes is familiar to anyone who has worked around civil engineering, utilities, or public infrastructure. One project drills and tests a site. Another project nearby later pays to rediscover similar information because the earlier data is inaccessible, inconsistently formatted, or practically invisible. The result is waste, delay, and sometimes a thinner evidentiary base for decisions that should be made with the fullest possible picture.

The NZGD tried to turn that pattern around by treating geotechnical investigations as a shared national asset. By the time the Ministry of Business, Innovation and Employment sought a new host and upgrade path, Microsoft says the database had amassed thousands of users and roughly 168,000 uploaded geotechnical tests. That is not “big data” in the consumer-platform sense. It is more valuable than that: curated professional data tied to place, engineering practice, and public consequence.

Beca’s Digital Twin Is the Real Product Story

The tempting headline is “AI helps engineers query ground data,” but the more important architectural move happened before the assistant arrived. Beca won the role of custodian for the next version of NZGD by hosting it on BEYON, its digital twin platform. The updated NZGD launched in November 2024, running on a SQL database in Microsoft Azure and accessed through BEYON.

That matters because digital twins are often oversold as glossy 3D replicas of real-world assets. In serious infrastructure work, the useful part is less cinematic. A digital twin becomes valuable when it connects the representation of the real world to live or trusted data, permissions, history, spatial context, and analytical workflows.

BEYON gives NZGD a more modern container for that work. Instead of being merely a repository, the platform can act as an interface for exploring relationships among locations, tests, ground conditions, and project needs. The AI assistant then sits on top of a governed data environment rather than floating above a document swamp.

This is the pattern enterprise IT should pay attention to. The assistant is the visible feature, but the prerequisite is boring platform work: cloud hosting, identity, permissions, standards compliance, spatial analytics integration, database modernization, and data-quality processes. Microsoft would prefer customers to see the magic of Azure OpenAI in Microsoft Foundry. Administrators will see the stack underneath it, and they should.

Natural Language Is a Door, Not an Engineer

The BEYON assistant lets NZGD users ask for data in ordinary language. In Microsoft’s example, an engineering geologist can ask to see investigations in Hobsonville, then narrow results by investigation type, filtering out data that is irrelevant to the job at hand. That sounds simple, but simplicity is the point. A natural-language layer can reduce the distance between an engineer’s intent and the platform’s query mechanics.

The danger, of course, is that natural language feels authoritative even when it is only a convenience layer. In geotechnical engineering, a confident answer can be worse than no answer if it blurs the boundary between retrieval and analysis. Soil behavior, liquefaction risk, slope stability, groundwater effects, and foundation suitability require professional interpretation and calculation. Those are not chores to hand off casually to a language model.

Beca appears to understand that line. Microsoft’s account says the AI assistant was designed with strict rules and is not allowed to perform geotechnical analysis. That is exactly the right place to draw the boundary. The system can help users find, filter, and extract relevant information; the engineer remains responsible for evaluating what the information means.
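To make the "find and filter" half of that boundary concrete, here is a minimal Python sketch of the Hobsonville example: a locality lookup narrowed by investigation type. Everything in it is an assumption for illustration, including the `Investigation` record, the sample rows, and the `filter_investigations` helper; it does not reflect the actual NZGD schema or how BEYON translates a natural-language request into a query.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Investigation:
    """Hypothetical record shape; not the real NZGD schema."""
    test_id: str
    locality: str
    kind: str  # e.g. "borehole", "cpt", "hand_auger"


# Made-up sample rows for illustration only; not real NZGD data.
RECORDS = [
    Investigation("T-001", "Hobsonville", "borehole"),
    Investigation("T-002", "Hobsonville", "cpt"),
    Investigation("T-003", "Hobsonville", "hand_auger"),
    Investigation("T-004", "Christchurch", "cpt"),
]


def filter_investigations(records, locality, kinds=None):
    """Return records in a locality, optionally narrowed to given kinds.

    This only selects existing records. It performs no interpretation
    of what the ground conditions mean.
    """
    wanted = {k.lower() for k in kinds} if kinds else None
    return [
        r for r in records
        if r.locality.lower() == locality.lower()
        and (wanted is None or r.kind in wanted)
    ]


if __name__ == "__main__":
    # "Show investigations in Hobsonville, boreholes and CPTs only."
    for r in filter_investigations(RECORDS, "Hobsonville", ["borehole", "cpt"]):
        print(r.test_id, r.kind)
```

The function returns evidence and nothing else, which is the point: whatever the language model does upstream, the output handed to the engineer is a list of records to evaluate, not a conclusion.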

For IT pros, this is the more mature version of AI assistance. The value is not that the model becomes a domain expert. The value is that it becomes a controlled interface to domain evidence, constrained by platform rules, identity controls, and application-level design. In regulated or safety-adjacent domains, that is the difference between a useful tool and a liability generator.

Microsoft Foundry’s Guardrails Move From Slideware to Worksite

Microsoft Foundry and Azure OpenAI are central to the story because they provide the model access, development environment, and safety tooling behind Beca’s assistant. Microsoft’s own documentation emphasizes layered mitigations: content filters, guardrails, grounding, operational monitoring, and responsible AI practices across development and deployment. In a consumer chatbot, those controls are often discussed abstractly. In a geotechnical data system, they become operational requirements.

A guardrail that prevents the assistant from doing geotechnical analysis is not a cosmetic safety feature. It is a product-design decision about professional accountability. The system should not calculate slope stability because that kind of analysis depends on assumptions, methods, context, standards, and sign-off. It can retrieve the logs and help narrow the data. It should not impersonate the engineer who must own the conclusion.
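Microsoft’s account does not say how Beca enforces that rule, so the following is only one plausible shape for it: an application-level allowlist that refuses anything that is not a retrieval action, regardless of what the model proposes. The action names and the `dispatch` function are hypothetical, and this Python sketch is not a description of BEYON or of any Microsoft Foundry API.

```python
from typing import Any

# Assumption: the application only ever executes these retrieval-style actions.
ALLOWED_ACTIONS = frozenset({"search", "filter", "export"})


def dispatch(action: str, params: dict[str, Any]) -> dict[str, Any]:
    """Run an action only if it is on the allowlist; refuse everything else.

    Default-deny: an unknown or analysis-style action (for example a
    hypothetical "slope_stability") never reaches the data layer.
    """
    if action not in ALLOWED_ACTIONS:
        return {
            "status": "refused",
            "reason": f"'{action}' is outside the assistant's retrieval-only scope",
        }
    # A real application would call its query layer here.
    # This sketch simply echoes the accepted request.
    return {"status": "ok", "action": action, "params": params}


if __name__ == "__main__":
    print(dispatch("filter", {"kind": "cpt"}))
    print(dispatch("slope_stability", {"site": "example"}))
```

The design choice worth noting is that the refusal lives in the application rather than in the prompt. A prompt instruction can be talked around; a code path that does not exist cannot be invoked.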

This is where Microsoft’s enterprise AI strategy has an advantage over the open web chatbot model. Corporate and public-sector buyers do not merely need smart models. They need access controls, auditability, administrative configuration, data boundaries, deployment choices, and vendor accountability. Azure’s appeal is that AI can be embedded in those existing governance habits.

But this also raises the bar. Once Microsoft and its partners sell AI as part of infrastructure workflows, they inherit expectations from infrastructure culture. A bad answer in a creative writing tool is annoying. A bad answer in an engineering information system can contribute to cost overruns, wrong assumptions, or misplaced confidence. The system’s limits must be as visible as its capabilities.

The 40 Percent Claim Is Useful, but Not the Whole Case

Beca estimates that the AI assistant helps engineers retrieve needed data in about 40 percent less time on average. That is the sort of number vendors love because it converts an abstract workflow improvement into a boardroom-friendly efficiency claim. It is also plausible. Anyone who has searched through technical records, spatial datasets, or inconsistent project archives knows how much time disappears into finding rather than thinking.

Still, the efficiency number should not be mistaken for the full value proposition. In engineering work, retrieval time is only one part of the equation. The larger benefit may be that engineers can consider more context before deciding where to investigate next, what historical data to trust, and which ground conditions deserve closer attention.

The Hobsonville example is telling. If existing data shows how local geology changes across a surrounding area, an engineer can target new investigations more intelligently for a housing development. That does not eliminate fieldwork. It makes fieldwork less blind.

That is a better AI argument than “do the same work with fewer people.” The more compelling claim is “make scarce expert time less wasteful.” New Zealand, like many countries, faces infrastructure pressure, housing demand, climate adaptation needs, and seismic risk. If AI-assisted retrieval helps engineers spend more time interpreting and less time rummaging, that is a real productivity gain.
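As a rough illustration of what the 40 percent estimate would mean in practice, the arithmetic can be sketched in a few lines of Python. Only the 40 percent figure comes from Beca's estimate; the 30-minute baseline and the 10 lookups per week are invented inputs, not reported numbers.

```python
def time_after_reduction(baseline_minutes: float, reduction_percent: int = 40) -> float:
    """Return retrieval time after applying a percentage reduction."""
    if not 0 <= reduction_percent <= 100:
        raise ValueError("reduction_percent must be between 0 and 100")
    return baseline_minutes * (100 - reduction_percent) / 100


def weekly_minutes_saved(baseline_minutes: float, lookups_per_week: int,
                         reduction_percent: int = 40) -> float:
    """Return total minutes saved per week across all lookups."""
    per_lookup = baseline_minutes - time_after_reduction(baseline_minutes, reduction_percent)
    return per_lookup * lookups_per_week


if __name__ == "__main__":
    # Hypothetical inputs: 30 minutes per lookup, 10 lookups per week.
    print(time_after_reduction(30))
    print(weekly_minutes_saved(30, 10))
```

Under those assumed inputs the saving is a couple of hours a week per engineer, which is useful but modest. That is consistent with the argument above: the bigger payoff is what engineers do with the evidence they can now reach, not the minutes alone.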

The Cloud Question Does Not Disappear Because the Use Case Is Good

For WindowsForum readers, the Azure angle deserves a hard look. Microsoft says NZGD 2.0 runs on Azure, uses a SQL database, and benefits from controls such as Microsoft Entra ID. That is a coherent modern enterprise architecture. It is also another example of critical professional workflows moving into hyperscale cloud ecosystems.

There are strong reasons to do that. Cloud platforms can make national-scale services easier to secure, update, scale, and integrate than legacy bespoke systems. Identity-based access control is more manageable than a patchwork of local accounts. Managed databases, monitoring, and platform security can reduce operational fragility.

But the strategic dependency is real. When a national professional dataset sits on a cloud platform and its next major usability layer is built with a cloud AI service, the vendor relationship becomes part of the infrastructure. That does not make the choice wrong. It does mean public agencies and custodians need a clear view of portability, data ownership, resilience, disaster recovery, procurement leverage, and long-term cost.

This is the part of the story Microsoft understandably plays down. Azure is not just a neutral pipe in this arrangement. It is the operating environment, the identity layer, the AI platform, and part of the governance story. For a country trying to turn geotechnical information into a national asset, those choices should be scrutinized with the same seriousness as the engineering workflow itself.

Nadella’s New Zealand Pitch Is Bigger Than One Database

Nadella’s April 2026 visit was not only about geotechnical engineering. Microsoft also used the trip to promote broader AI adoption, digital skilling, customer stories, and the company’s claimed economic contribution in New Zealand. The BEYON and NZGD story sits inside that wider campaign: AI as national capability, Azure as enabling infrastructure, Microsoft as long-term partner.

That framing is not accidental. Around the world, Microsoft is positioning itself not merely as a software vendor but as the default platform company for AI-era public and private modernization. In that narrative, each local case study becomes evidence that AI is already delivering practical returns in healthcare, transport, banking, agriculture, engineering, and government.

The geotechnical example is especially useful because it is hard to dismiss as frivolous. It is not a chatbot writing marketing copy or summarizing meeting transcripts. It is a domain-specific assistant attached to a post-disaster database with clear public value. If Microsoft wants skeptical governments to believe AI is more than hype, this is the kind of story it needs.

Yet the same strength makes the story politically and technically sensitive. When AI moves into domains tied to public safety and national resilience, the public deserves more than vendor optimism. It deserves evidence about accuracy, failure modes, user training, audit logs, review processes, and how professionals are instructed to treat AI output.

Good AI Infrastructure Starts With Human Restraint

The most encouraging detail in Microsoft’s account is not GPT-5.1, Azure, or the digital twin label. It is the stated refusal to let the assistant perform geotechnical analysis. That restraint is the difference between using AI to improve a workflow and using AI to blur responsibility.

A retrieval assistant that narrows results, filters investigation types, and reduces information overload is a sensible tool. A system that starts implying what can safely be built where would be a very different product. One supports professional judgment; the other risks laundering model output as expertise.

This line will become increasingly important across enterprise software. The next wave of AI features will not be limited to chat panes. They will be embedded in case-management systems, design platforms, ticketing tools, security consoles, medical workflows, legal databases, and financial applications. The hard question will not be whether the model can generate an answer. It will be whether the application should allow that answer to exist in the first place.

Beca’s NZGD assistant points to a healthier model: constrain the AI to tasks where speed and usability improve without displacing accountable expertise. That may sound less revolutionary than the industry’s usual rhetoric. It is also more likely to survive contact with professional reality.

The Ground-Level Lesson From Nadella’s Auckland Showcase

Microsoft’s New Zealand story is worth watching because it demonstrates both the promise and the governance burden of applied AI. The concrete lessons are less glamorous than the keynote framing, but they are more useful for anyone building or buying AI systems.

- The New Zealand Geotechnical Database became a national asset after the Christchurch earthquake because fragmented subsurface data had direct consequences for rebuilding decisions.

- Beca’s move to host NZGD on BEYON and Azure matters because the AI assistant depends on modernized data, identity, access control, and spatial context.

- The assistant’s strongest use case is retrieval and filtering, not engineering analysis, and that boundary is essential to keeping professional accountability intact.

- The reported 40 percent reduction in data-retrieval time is meaningful, but the larger value is giving engineers faster access to a broader evidence base.

- Azure’s role brings security and scalability advantages, while also creating long-term questions about dependency, resilience, cost, and public stewardship.

- The most credible enterprise AI deployments will be the ones that make expert work easier without pretending expertise has been automated.

Source: Microsoft Source Microsoft Chairman and CEO - Satya Nadella New Zealand Visit 2026