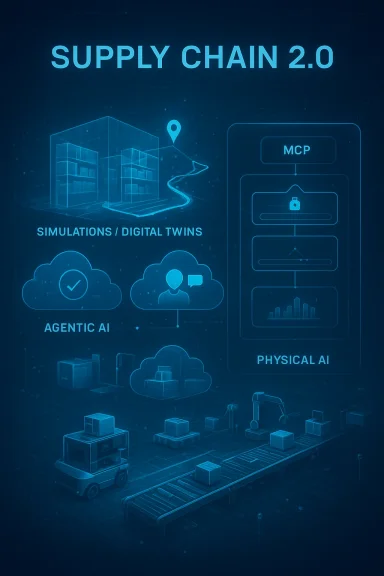

Microsoft is no longer talking about supply chain AI as a narrow set of forecasting and chatbot experiments. In its latest “Supply Chain 2.0” vision, the company is framing logistics as a three-layer transformation: simulations, agentic AI, and physical AI. That shift matters because it moves the conversation from productivity gains inside planning teams to a broader operating model in which software agents, digital twins, and robotics can influence decisions across warehouses, transportation, and manufacturing.

The timing is important. Microsoft has been rapidly expanding its enterprise AI stack in 2026, including Microsoft Foundry, Agent 365, and the broader Frontier positioning for agents in work. Its supply chain message now ties those platform moves to real-world operations, partner ecosystems, and physical automation, signaling that the company wants to own not just the cloud layer, but the decision layer and the execution layer too.

Background

For Microsoft, the supply chain story starts long before the current agent hype cycle. The company has spent years consolidating fragmented logistics, planning, and operations systems into a more unified data foundation, and it has repeatedly used its own operations as a proving ground for enterprise software strategy. In other words, Microsoft is positioning itself as both vendor and customer zero, using its own environment to validate the value of its platform investments.

That framing is not new, but the scope has expanded dramatically. Microsoft says its cloud supply chain spans more than 70 Azure regions, more than 400 datacenters, and over 600,000 kilometers of fiber, while its broader business also includes Windows, Devices, Xbox, and the hardware ecosystem around them. The operational complexity of that footprint helps explain why the company is pushing hard on automation, observability, and predictive planning.

The new article builds on Microsoft’s earlier supply chain AI messaging from a year ago, when the company emphasized generative AI for forecasting, service, and planning workflows. What has changed since then is the industry-wide move from copilots that assist humans to agents that can coordinate actions across systems. Microsoft is clearly trying to show that it has gone beyond demos and into a platform architecture capable of supporting production deployment.

A second major change is the rise of open protocols and interoperability standards. Microsoft is now openly referencing MCP and A2A as mechanisms for agent connectivity, which reflects the market’s growing demand for agents that can work across vendors, data fabrics, and enterprise applications. The company’s recent Foundry and Copilot Studio updates also show a steady push toward multi-agent orchestration and agent publishing across Microsoft’s ecosystem.

The third shift is physical. Microsoft is no longer treating robotics and digital twins as separate from AI strategy; instead, it is casting them as the natural next step after simulations and autonomous reasoning. That is a strong signal to industrial customers, because it suggests Microsoft wants to be relevant from planning all the way to execution, including the edge, the warehouse floor, and the robot itself.

Why Microsoft Is Reframing Supply Chain AI

Microsoft’s core argument is that traditional supply chain software was built for visibility and reporting, while the next generation must be built for decision-making and action. That is a meaningful distinction. A dashboard can tell you that inventory is off; an agentic system can reason about why it is off, simulate alternatives, and trigger the right workflow.

The company is also implicitly acknowledging that supply chains have become too volatile for static planning cycles. Demand shocks, geopolitical disruptions, labor constraints, and transportation variability all make it harder to rely on monthly or weekly planning cadences. Microsoft’s answer is to combine fresh data, context-rich models, and orchestration layers that can respond faster than traditional human-only planning teams.

From reporting to reasoning

The biggest strategic shift is from descriptive analytics to contextual reasoning. Microsoft’s language around Work IQ, Fabric IQ, and Foundry IQ shows that it wants enterprise agents to understand not just the data, but the business meaning behind the data. That matters in supply chain because inventory, route choice, and production planning are rarely isolated decisions; they are tradeoffs between service level, cost, carbon, and risk.

The company’s approach also reflects a practical reality: supply chain AI fails when it is disconnected from systems of record. If an agent cannot trust the data model, cannot understand the process flow, or cannot safely act inside business constraints, it becomes a novelty instead of a productivity engine.

- Visibility alone is no longer enough

- Reasoning must be tied to actual process rules

- Actions must be auditable and governed

- Data freshness determines agent usefulness

- Human oversight still matters for exceptions
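The points above can be made concrete with a small policy gate that sits between an agent's proposal and the system of record. This is only a sketch under stated assumptions: the `ProposedAction` and `PolicyGate` names, the quantity threshold, and the data-freshness limit are all hypothetical and do not come from Microsoft's article.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class ProposedAction:
    """An action an agent wants to take (hypothetical shape)."""
    kind: str                 # e.g. "reorder"
    sku: str
    quantity: int
    data_age_minutes: float   # staleness of the data the agent reasoned over


@dataclass
class PolicyGate:
    """Applies business constraints and records every decision for audit."""
    max_auto_quantity: int = 500          # assumed limit for unattended actions
    max_data_age_minutes: float = 60.0    # assumed freshness requirement
    audit_log: list = field(default_factory=list)

    def review(self, action: ProposedAction) -> str:
        if action.data_age_minutes > self.max_data_age_minutes:
            decision = "rejected_stale_data"      # freshness gates usefulness
        elif action.quantity > self.max_auto_quantity:
            decision = "needs_human_approval"     # exceptions go to a person
        else:
            decision = "auto_approved"
        # Every decision is logged, including rejections.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "decision": decision,
        })
        return decision


gate = PolicyGate()
print(gate.review(ProposedAction("reorder", "SKU-1", 100, 5.0)))
print(gate.review(ProposedAction("reorder", "SKU-2", 1000, 5.0)))
print(gate.review(ProposedAction("reorder", "SKU-3", 100, 120.0)))
```

The design choice worth noting is that the gate, not the agent, owns the thresholds, so the rules can be changed and audited without touching the agent's reasoning.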

The Customer Zero Playbook

Microsoft’s own operations are central to its credibility story. The company says it consolidated more than 30 systems into a supply chain data lake on Azure in 2018, began experimenting with generative AI in 2022, and has since deployed more than 25 AI agents and applications across its supply chain environment. That timeline shows an evolution from integration to analytics to automation.

This matters because enterprise customers are often wary of AI claims that sound detached from operational reality. By describing measurable internal adoption, Microsoft is trying to demonstrate that its platform stack works under the pressure of real-world logistics complexity. It is a familiar tactic, but in this case it is also a useful one.

Three internal examples

Microsoft highlights three representative agents: a Demand Planning Agent that runs AI-based demand simulations for non-IT rack components, a Multi-Agent DC Spare-Part Space Solver that uses computer vision and multi-agent reasoning, and a CargoPilot Agent that evaluates transport mode, cost, carbon, and cycle time. These are not generic chatbots; they are operational tools with narrow but valuable mandates.

The design pattern is important. Each agent is anchored to a business problem, a measurable output, and a human workflow that already exists. That is the difference between a pilot that impresses and an agent system that scales.

- Demand planning reduces manual reconciliation

- Spare-part analysis reduces stockout and space risks

- Cargo optimization balances speed and sustainability

- Workflow automation saves time for planners

- Computer vision expands operational awareness
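To illustrate the kind of tradeoff a cargo agent has to weigh across cost, carbon, and cycle time, here is a minimal weighted-scoring sketch. It is not how CargoPilot works internally (the article does not say); the `ModeOption` type, the sample figures, and the weights are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModeOption:
    mode: str
    cost_usd: float
    carbon_kg: float
    cycle_days: float


def _normalize(values):
    """Min-max scale to [0, 1]; a constant column maps to all zeros."""
    lo, hi = min(values), max(values)
    span = hi - lo
    return [0.0 if span == 0 else (v - lo) / span for v in values]


def rank_modes(options, weights):
    """Return (score, mode) pairs sorted best-first; lower score is better."""
    cost = _normalize([o.cost_usd for o in options])
    carbon = _normalize([o.carbon_kg for o in options])
    cycle = _normalize([o.cycle_days for o in options])
    scored = [
        (weights["cost"] * c + weights["carbon"] * k + weights["cycle"] * t, o.mode)
        for o, c, k, t in zip(options, cost, carbon, cycle)
    ]
    return sorted(scored)


# Illustrative numbers only, not real freight data.
options = [
    ModeOption("air", cost_usd=9000, carbon_kg=5000, cycle_days=3),
    ModeOption("ocean", cost_usd=2000, carbon_kg=400, cycle_days=30),
    ModeOption("rail", cost_usd=3500, carbon_kg=900, cycle_days=18),
]
balanced = {"cost": 0.4, "carbon": 0.3, "cycle": 0.3}
urgent = {"cost": 0.1, "carbon": 0.1, "cycle": 0.8}

print(rank_modes(options, balanced)[0][1])  # best mode under balanced weights
print(rank_modes(options, urgent)[0][1])    # best mode when speed dominates
```

Changing the weights changes the winner, which is the point: the business decides the priorities, and the agent applies them consistently across shipments.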

Simulations as Digital Twins

Microsoft is making a strong case that simulation is the bridge between AI insight and operational confidence. In supply chain, a simulation is more than a planning model; it is a safe environment where teams can test what happens if demand spikes, a supplier fails, or a warehouse layout changes. That makes simulation especially attractive in an era where businesses want faster decisions but cannot afford expensive mistakes.

The company is leaning heavily on discrete event simulation, digital twins, and 3D modeling to support this vision. That approach aligns with what many industrial firms have wanted for years: a way to model complex systems without disrupting the real one.

Why simulation matters now

Simulation has always existed in supply chain, but the economic case is stronger today because supply chains are more interconnected and more exposed to volatility. Microsoft’s argument is that AI can make simulations more useful by adding speed, scale, and scenario generation. Instead of manually testing a few what-if cases, companies can explore a much broader decision space.

This is where Microsoft’s broader modeling stack comes in. The article points to Azure Machine Learning and Fabric-based model support as tools for simulating demand patterns, shortages, and disruptions. That positions Microsoft not as a single simulation vendor, but as an orchestration layer across forecasting, analytics, and digital twin workflows.

Warehouse digital twins in practice

Microsoft’s warehouse examples are especially interesting because they map clearly to real operational pain points. Greenfield and brownfield planning, real-time monitoring, collision detection, trailer dwell time, and maintenance all benefit from a virtual model that can be updated continuously. In practice, this can reduce capex risk, shorten commissioning, and improve ramp-up performance.

The collaboration with NVIDIA is also strategically important. By referencing Omniverse, Isaac Sim, and kit-based app streaming, Microsoft is signaling that it wants the Azure cloud to be the operational backbone for industrial simulation workloads. That should resonate with customers who need both simulation fidelity and cloud scalability.

- Greenfield planning helps design new facilities before construction

- Brownfield planning improves existing sites without major disruption

- Real-time monitoring enables operational visibility

- Safety analytics can identify collision and movement risks

- Maintenance modeling can reduce rework and downtime
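Trailer dwell time is a good candidate for a what-if experiment. The sketch below is a deliberately small event-driven queue model in the spirit of discrete event simulation, not a digital twin and not anything taken from the Microsoft or NVIDIA tooling. The arrival times, the fixed unload duration, and the `simulate_dock` function are assumptions made for illustration.

```python
import heapq


def simulate_dock(arrivals, unload_minutes, doors):
    """Return dwell time (waiting plus unloading) per trailer, first come first served.

    arrivals: trailer arrival times in minutes
    unload_minutes: fixed unload duration per trailer (a simplification)
    doors: number of dock doors working in parallel
    """
    free_at = [0.0] * doors          # time at which each door next becomes free
    heapq.heapify(free_at)
    dwell = []
    for arrival in sorted(arrivals):
        door_free = heapq.heappop(free_at)   # earliest available door
        start = max(arrival, door_free)      # wait if no door is free yet
        finish = start + unload_minutes
        heapq.heappush(free_at, finish)
        dwell.append(finish - arrival)
    return dwell


arrivals = [0, 10, 20, 30]
for doors in (1, 2, 4):
    dwell = simulate_dock(arrivals, unload_minutes=45, doors=doors)
    print(doors, "doors -> average dwell", sum(dwell) / len(dwell), "min")
```

Even a toy model like this shows why simulation appeals to planners: the effect of adding a dock door can be estimated before any capital is spent. A production model would add stochastic arrivals, variable unload times, and yard constraints.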

The Ecosystem Around Simulation

A major theme in the article is that Microsoft is not trying to build every component itself. Instead, it is cultivating a partner ecosystem that can deliver faster proofs of value across industries. That approach is smart because simulation projects are often domain-specific, hardware-specific, and expensive to customize from scratch.

Microsoft cites partners such as paiqo, Cosmo Tech, InstaDeep, and SoftServe, each of which contributes a different piece of the simulation and optimization stack. This is classic platform strategy: make Azure the place where the ecosystem assembles, then make the integration easier than building locally.

Partner specialization is the real differentiator

The partner layer matters because supply chain simulation is not one product category. Forecasting platforms, risk models, reinforcement learning systems, warehouse digital twins, and robot testing environments all solve different problems. Microsoft’s platform strength comes from the fact that it can support all of them without forcing a one-size-fits-all model.

SoftServe’s work with Krones and Toyota Material Handling Europe is a good example of how the stack is being used in production-style environments. Those use cases suggest that digital twin value is increasingly measured in time saved, cycle-time reduction, and faster training rather than in abstract innovation language.

- paiqo brings forecasting specialization

- Cosmo Tech targets risk and disruption modeling

- InstaDeep focuses on reinforcement learning and optimization

- SoftServe helps translate simulation into deployment

- TeamViewer extends the value to frontline workers

Agentic Supply Chains and the New Control Layer

The most consequential part of Microsoft’s argument may be its belief that supply chains are entering an agentic web. In this model, agents do not just answer questions; they perform specialized tasks, coordinate with each other, and execute workflows under policy constraints. That is a big jump from earlier chatbot-era automation.

Microsoft splits these agents into two broad categories: troubleshooters that diagnose and propose fixes, and orchestrators that coordinate multistep work. This is an important distinction because supply chain operations need both. Troubleshooters can handle local exceptions, while orchestrators can manage cross-functional dependencies.

What multi-agent systems actually do

The practical promise of agentic systems is not autonomy for its own sake. It is the ability to reduce friction across planning, procurement, transportation, and service operations. If agents can validate eligibility, route requests, find discrepancies, or generate quotes, then humans can focus on exceptions, negotiation, and strategic judgment.

Microsoft’s examples from CSX Transportation, Dow, C.H. Robinson, Blue Yonder, Resilinc, o9, GEP, and Kinaxis illustrate how widely this pattern can spread. Some agents are narrowly transactional, while others sit closer to core planning platforms. That breadth suggests the market is moving toward embedded AI capabilities rather than standalone assistant products.

- Customer eligibility checks can be automated

- Freight invoice analysis can detect discrepancies

- Fast quoting can speed customer response

- Inventory mismatch detection can prevent shortages

- Supplier-risk monitoring can accelerate mitigation
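The troubleshooter and orchestrator split can be sketched with the freight invoice case from the list above. This is a plain-Python illustration of the pattern, with no agent framework involved. The contract rate table, the 2% tolerance, the lane codes, and the queue names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FreightInvoice:
    invoice_id: str
    lane: str
    weight_kg: float
    billed_usd: float


# Hypothetical contracted rates per lane, in USD per kg.
CONTRACT_RATES_USD_PER_KG = {"CHI-DAL": 0.50, "SEA-DEN": 0.75}


def invoice_troubleshooter(invoice, tolerance=0.02):
    """Diagnose one invoice: compare the billed amount with the contracted amount."""
    rate = CONTRACT_RATES_USD_PER_KG.get(invoice.lane)
    if rate is None:
        return {"invoice_id": invoice.invoice_id, "status": "unknown_lane", "delta_usd": None}
    expected = rate * invoice.weight_kg
    delta = invoice.billed_usd - expected
    status = "ok" if abs(delta) <= tolerance * expected else "discrepancy"
    return {"invoice_id": invoice.invoice_id, "status": status, "delta_usd": round(delta, 2)}


def orchestrator(invoices):
    """Coordinate the multistep flow: diagnose each invoice, then route it."""
    routes = {
        "ok": "auto_pay",
        "discrepancy": "dispute_with_carrier",
        "unknown_lane": "human_review",   # exceptions stay with people
    }
    queues = {name: [] for name in routes.values()}
    for invoice in invoices:
        finding = invoice_troubleshooter(invoice)
        queues[routes[finding["status"]]].append(finding["invoice_id"])
    return queues


batch = [
    FreightInvoice("INV-1", "CHI-DAL", 1000, 500.0),
    FreightInvoice("INV-2", "SEA-DEN", 1000, 900.0),
    FreightInvoice("INV-3", "NYC-MIA", 1000, 700.0),
]
print(orchestrator(batch))
```

The troubleshooter knows one narrow problem well; the orchestrator knows nothing about freight rates and only decides where each finding goes next.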

Fabric IQ, Work IQ, and Process Intelligence

Microsoft’s most interesting architectural claim is that Work IQ, Foundry IQ, and Fabric IQ together form an intelligence layer that gives agents the context they need to act. That framing suggests Microsoft wants to unify human work patterns, business data, and organizational knowledge into a single decision fabric.

This is strategically clever because agents are only as strong as the context they can access. If the system knows how a business actually works, not just what the database contains, then it can make better recommendations and initiate more reliable actions. In supply chain, where process variation is common, that matters a great deal.

Why context is the missing layer

Microsoft’s partnership narrative with Celonis is especially notable. Celonis’ Process Intelligence Graph adds process-mining context on top of unified data, which helps explain how work actually flows across the organization. That makes the architecture more realistic than a pure data-lake story, because it captures process truth rather than only data availability.

The architecture described in the article is also clearly designed for interoperability. Data is unified in Fabric and OneLake, process intelligence provides meaning, and then agents can build, orchestrate, and oversee workflows through Foundry, Copilot Studio, Power Automate, and Agent 365. That is a full stack story, not an isolated feature story.

How the stack is supposed to work

Microsoft’s layered model is worth unpacking because it reveals the company’s enterprise strategy. On the bottom, system-of-record data is unified with mirroring, streaming, and multi-cloud shortcuts. In the middle, Fabric IQ and Celonis translate that data into operational context. On top, agents take action through Microsoft and partner tools that already live in employee workflows.

The result is a closed-loop system in which the agent observes, reasons, acts, and then gets measured against business KPIs. That is the kind of architecture enterprise buyers often want but struggle to assemble on their own.

- OneLake acts as the governed data foundation

- Fabric IQ provides the reasoning layer

- Celonis PI Graph explains process behavior

- Foundry and Copilot Studio build the agents

- Agent 365 oversees governance and control
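The observe, reason, act, and measure loop can be shown with a toy replenishment agent. This is a conceptual sketch only. It does not call Fabric, Foundry, or any Microsoft API, and the `run_closed_loop` function, the reorder rule, and the instant-delivery simplification are all assumptions made for illustration.

```python
def run_closed_loop(demand_by_day, start_stock, reorder_point, order_qty):
    """Run a daily observe/reason/act cycle and report fill rate as the KPI.

    Simplification: a reorder arrives instantly on the day it is placed.
    """
    stock = start_stock
    served = 0
    requested = 0
    actions = []
    for day, demand in enumerate(demand_by_day):
        observed_stock = stock                          # observe
        should_reorder = observed_stock < reorder_point  # reason
        if should_reorder:                              # act
            stock += order_qty
            actions.append((day, "reorder", order_qty))
        shipped = min(stock, demand)                    # the world responds
        stock -= shipped
        served += shipped
        requested += demand
    fill_rate = served / requested if requested else 1.0  # measure
    return fill_rate, actions


demand = [30, 30, 30, 30]
print(run_closed_loop(demand, start_stock=50, reorder_point=40, order_qty=60))
print(run_closed_loop(demand, start_stock=50, reorder_point=0, order_qty=60))
```

Comparing the two runs shows what the measurement step is for: the same agent with a poor policy produces a visibly worse KPI, which gives the business a reason to adjust the rule rather than trust the automation blindly.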

Physical AI and Robotics at the Edge

The article’s third pillar, physical AI, is perhaps the most ambitious. Here Microsoft is arguing that simulations and agents will eventually be embodied in robots and autonomous systems that act in the physical world. That puts the company in direct conversation with industrial automation, warehouse robotics, and humanoid systems.

This is an important strategic expansion because it suggests Microsoft wants to be relevant after the software decision is made, not just before it. In practical terms, that means edge compute, sensor integration, robot training, and orchestration all become part of the Microsoft story.

From screens to machines

Microsoft describes a future in which robots handle trailer unloading, sorting, pallet handling, replenishment, packing, labeling, and last-mile delivery. That is a broad vision, but it fits the current direction of industrial AI: more autonomy, more perception, and more adaptation in environments that were once too variable for full automation.

The reference to Rho-alpha, Microsoft’s robotics model, is especially notable because it combines language, visual perception, and tactile feedback. That combination is exactly what makes robots more practical in messy real-world environments, where a rigid motion plan is often not enough.

The robotics toolchain

Microsoft also presents a robotics architecture built around Azure Machine Learning, AKS, Fabric, Azure Arc, and NVIDIA’s robotics stack, including Isaac Sim, Isaac Lab, and OSMO. The key idea is that simulation, training, and deployment should form a continuous loop rather than separate projects. That is essential for robotics, where model quality and environment fidelity directly affect operational safety.

This is where the company’s cloud-and-edge strategy becomes visible. Azure provides the compute and orchestration backbone, while edge systems capture live signals and enforce policies closer to the physical operation. That hybrid model is likely to be the norm for industrial AI, not the exception.

- Simulation reduces risk before deployment

- Training improves robot behavior

- Edge orchestration supports real-time action

- Cloud analytics provide fleet-level insight

- Governance keeps automation controlled

Competitive Implications for the Market

Microsoft’s move is not happening in isolation. It is part of a larger race among cloud, software, AI, and industrial technology vendors to control the enterprise decision stack. The company is effectively saying that the future of supply chain belongs to platforms that can combine data, reasoning, simulation, security, and execution.

That has obvious implications for competitors. Enterprise software vendors that stop at planning or workflow orchestration may find themselves squeezed if Microsoft can connect the full chain from model to action. Likewise, pure-play robotics or digital twin vendors may need stronger platform partnerships to remain relevant at scale.

What this means for rivals

The competitive pressure is especially intense because Microsoft is approaching the problem from multiple directions at once. It has Azure for infrastructure, Fabric for analytics, Foundry for agents, Dynamics 365 for business workflows, and security/governance products for control. That breadth makes it difficult for rivals to match the stack without partnerships.

At the same time, Microsoft still depends on ecosystem credibility. Many of the most compelling examples in the article are partner-led, and customers will judge the platform based on integration quality, cost, and ease of deployment. The platform may be broad, but the winning implementation still has to be specific.

Enterprise versus consumer impact

For enterprises, the biggest value is likely to come from process automation, reduced planning friction, faster response to disruptions, and lower operational waste. For consumers, the impact is indirect but real: better product availability, fewer shipping delays, and eventually more responsive fulfillment networks. The consumer sees the effect, but the enterprise captures the economics.

This distinction matters because Microsoft’s supply chain pitch is fundamentally an efficiency and resilience story, not a consumer AI story. The winners are the firms that can absorb the new operating model quickly and govern it well.

- Cloud vendors are now competing on decision infrastructure

- ERP and planning vendors must add stronger agent layers

- Robotics vendors need broader enterprise integration

- Digital twin firms need real-time, governed data foundations

- Systems integrators become more important, not less

Strengths and Opportunities

Microsoft’s supply chain strategy has a number of real strengths. It is not just broad; it is structurally coherent, because the company is aligning infrastructure, AI, data, governance, and partner ecosystems around a single operating vision. That coherence creates opportunities for enterprise buyers who are tired of stitching together disconnected tools.

- End-to-end stack coverage from cloud to edge to robotics

- Strong internal validation through Microsoft’s own supply chain

- Clear partner ecosystem that can accelerate deployment

- Open protocol support through MCP and A2A

- Deep data foundation via Fabric, OneLake, and process intelligence

- Governance and security emphasis through Agent 365 and Azure controls

- Practical use cases with measurable operational outcomes

Risks and Concerns

The vision is ambitious, but it also carries significant risk. The more Microsoft broadens the stack, the more it must prove that each layer works well in production, integrates cleanly, and remains manageable for customers with limited internal AI maturity. A beautiful architecture diagram is not the same as a dependable operational system.

- Integration complexity could slow real-world adoption

- Vendor lock-in concerns may rise as the stack expands

- Data quality issues can undermine agent reliability

- Governance gaps could create security and compliance exposure

- Robotics safety remains a serious operational concern

- ROI proof will vary widely by industry and maturity

- Change management may be harder than the technology itself

Looking Ahead

The next phase of this story will be about execution, not vision. Microsoft has now laid out the architecture for simulations, agents, and physical AI; what matters next is whether customers can deploy these capabilities without excessive complexity or customization. The enterprise market will reward platforms that make advanced automation feel operationally safe, not just technically impressive.

The other major question is standardization. If MCP, A2A, and related interoperability patterns continue to gain traction, Microsoft could benefit by becoming the default enterprise runtime for multi-agent supply chain systems. But if customers decide that the market is fragmenting too quickly, they may delay deeper adoption until a few dominant patterns emerge.

What to watch next:

- More real customer deployments with quantified outcomes

- Broader Agent 365 adoption as the governance layer matures

- Deeper Fabric and Celonis integrations for process intelligence

- Expanded robotics pilots tied to warehouse and fulfillment operations

- Evidence of interoperability across Microsoft and third-party agent ecosystems

Source: Microsoft Supply Chain 2.0: How Microsoft is powering simulations, AI agents, and physical AI - Microsoft Industry Blogs