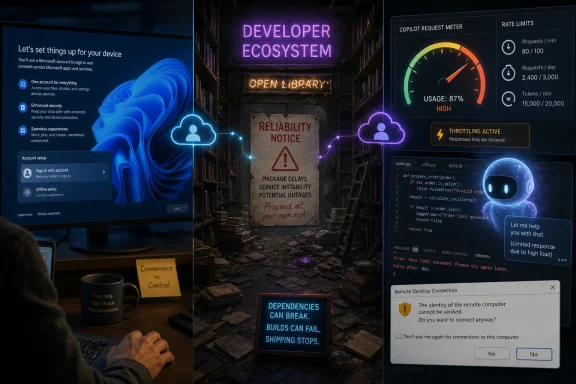

Microsoft’s latest rough week spans Windows 11 account nudges, April 2026 Remote Desktop security prompts, GitHub Copilot usage limits, and mounting complaints that GitHub’s reliability has slipped at the exact moment Redmond is selling AI as the future of software. The common thread is not that every change is equally serious. It is that Microsoft keeps asking users to trust more of their work to Microsoft-controlled services while making the daily experience feel more coercive, more brittle, and less accountable.

That is a bad trade. Windows users can tolerate an irritating prompt if the operating system is otherwise boringly dependable. Developers can forgive the odd GitHub wobble if the platform still feels like neutral infrastructure. But when the pitch becomes “let our cloud, our account system, our agents, and our AI coding assistants sit between you and your work,” reliability stops being a feature and becomes the whole product.

Source: theregister.com Microsoft's code shack shaken as Redmond chases AI ghosts

Redmond’s AI Story Is Colliding With Its Trust Story

Microsoft has spent the last several years trying to make AI feel inevitable. Copilot has moved from a brand to a strategy, from a button to a business model, and from a developer tool to a kind of ambient Microsoft weather system. Windows is being framed less as a desktop operating system than as a host for agents, identity, subscriptions, cloud storage, and AI-mediated workflows.

That is a defensible bet in the abstract. If AI assistants really do become the primary interface for routine computing, Microsoft is right to want Windows, GitHub, Azure, and Microsoft 365 tied together tightly enough that competitors cannot easily peel users away. The problem is that tight integration feels very different depending on whether the user asked for it.

On Windows 11, the complaint is familiar: Microsoft’s desire to route users through Microsoft accounts and paid services keeps showing up in the setup flow and everyday UI. The company can argue, reasonably, that accounts enable sync, security recovery, device management, app purchases, OneDrive backup, and cross-device continuity. But the lived experience for many users is that the PC they bought keeps behaving like a kiosk for someone else’s business model.

That irritation matters because it creates the emotional backdrop for everything else. When users already feel squeezed toward the cloud, each reliability problem looks less like a mistake and more like proof of a bargain gone sour. Microsoft says the cloud makes the PC smarter. Users notice that it also makes the PC more dependent.

GitHub Was Supposed to Be the Exception

GitHub has always been Microsoft’s most delicate acquisition because it was never just another product. It is source control, yes, but it is also an archive, a professional network, a publishing system, a CI/CD trigger point, a security surface, a hiring signal, and the place where millions of programmers learn by reading other people’s work. GitHub is not merely where code lives. It is where much of modern software culture remembers itself.

That is why Microsoft received more credit than many expected after buying GitHub in 2018. The old joke was that Redmond would “embrace, extend, extinguish” it. Instead, GitHub remained recognizably GitHub, grew into a broader developer platform, and became the launchpad for Copilot, arguably Microsoft’s most culturally successful AI product.

For years, that looked like a case study in how a giant could buy a beloved developer institution without suffocating it. The platform became more commercial, certainly, but not in the way Skype became a cautionary tale or Windows Live became a landfill of abandoned brands. GitHub still felt like infrastructure first and Microsoft strategy second.

That perception is now under strain. Complaints about GitHub reliability have become harder to dismiss as ordinary grumbling because they are coming from the sort of users who understand exactly how hard reliable infrastructure is — and who have historically been inclined to give GitHub the benefit of the doubt. When a platform’s most committed power users start building outage diaries, the issue has moved beyond social media noise.

The Library Cannot Keep Flickering

Mitchell Hashimoto’s criticism landed because it expressed what many developers fear but hesitate to say: GitHub has become so central that even small reliability failures have outsized consequences. Hashimoto is not a casual user wandering into a status-page incident. As a HashiCorp co-founder and creator of projects such as Ghostty, he represents the exact constituency GitHub cannot afford to alienate: serious builders who both depend on the platform and contribute to its prestige.

The production argument is especially damaging. A developer can host code anywhere. A project can mirror repositories, move issues, switch CI providers, and publish releases through other channels. But the more an ecosystem standardizes around GitHub, the more every migration becomes a political, operational, and educational tax.
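Of those escape routes, repository mirroring is the easiest to illustrate. The sketch below assumes only a standard `git` command-line client; the helper names (`mirror_commands`, `refresh_commands`, `run`), the `mirror.git` directory, and the example URLs are hypothetical placeholders, not a GitHub feature or an official tool.

```python
"""Hypothetical helper: keep a mirror of a Git repository on a second host."""
import subprocess
from pathlib import Path


def mirror_commands(source_url: str, backup_url: str, workdir: str) -> list[list[str]]:
    """Build the git invocations for an initial mirror (URLs are placeholders)."""
    repo_dir = str(Path(workdir) / "mirror.git")
    return [
        # Bare clone that copies every ref (branches, tags, notes) from the source.
        ["git", "clone", "--mirror", source_url, repo_dir],
        # Push every ref to the backup remote; refs deleted locally are deleted there too.
        ["git", "-C", repo_dir, "push", "--mirror", backup_url],
    ]


def refresh_commands(backup_url: str, workdir: str) -> list[list[str]]:
    """Build the invocations for a later sync of an existing mirror."""
    repo_dir = str(Path(workdir) / "mirror.git")
    return [
        # Fetch new refs from the original source and prune ones it removed.
        ["git", "-C", repo_dir, "remote", "update", "--prune"],
        ["git", "-C", repo_dir, "push", "--mirror", backup_url],
    ]


def run(commands: list[list[str]], dry_run: bool = True) -> None:
    """Print each command; execute it only when dry_run is False."""
    for cmd in commands:
        print(" ".join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # Dry run only: prints the commands without touching any remote.
    run(mirror_commands("https://example.com/src/project.git",
                        "https://backup.example.org/project.git",
                        "/tmp/mirrors"))
```

Note that this copies Git refs only. Issues, pull request discussions, and releases live outside the repository, which is why the paragraph above treats them as separate migration costs.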

GitHub’s value is not only in private repository uptime or pull request buttons. It is in the expectation that a link to code will work, that an issue thread will remain available, that a dependency’s history can be inspected, that a security advisory can be found, and that newcomers can search a living archive of solved problems. A flaky GitHub is not just annoying. It degrades the collective memory of software.

That is where Microsoft’s AI strategy becomes awkward. GitHub’s public code is one of the great reservoirs from which both humans and machines learn. Microsoft has been eager to monetize AI trained around developer workflows, but that heightens rather than reduces its custodial obligation. If GitHub is the library, Copilot is not the librarian; it is a commercial service built on the library’s centrality.

Copilot’s Rationing Exposes the Economics Behind the Magic

The Copilot usage-limit controversy is more than a billing-policy footnote. It is a glimpse into the uncomfortable economics of AI products that were initially marketed with the smoothness of software-as-a-service but are now behaving more like metered utilities. Developers were sold an assistant. Increasingly, they are discovering a tariff sheet.

GitHub has moved Copilot deeper into premium requests, model tiers, concurrency controls, and usage-based accounting. Some of that is unavoidable. Large AI models are expensive to run, and agentic workflows can burn through compute in ways a flat subscription price cannot absorb forever. If a single coding session can involve multiple model calls, tool invocations, context expansion, retries, and long-running tasks, the old idea of “unlimited” help was always going to meet the spreadsheet.

But the user experience has been clumsy. Developers do not think in premium request units while trying to debug a failing build. They do not want to discover that a model is unavailable, that a session is rate-limited, or that a nominal allowance is constrained by another hidden ceiling. A coding assistant that becomes unpredictable under pressure is not an assistant; it is another dependency to manage.
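The "hidden ceiling" complaint is easier to see in a toy model. The sketch below is hypothetical and is not GitHub's billing logic. It borrows only the vocabulary above: a monthly allowance in premium-request units, a per-model cost multiplier, and a separate short-window rate limit. The class name, parameters, and numbers are all assumptions for illustration.

```python
"""Hypothetical model of a metered AI-assistant quota (not GitHub's actual rules)."""
from collections import deque
from dataclasses import dataclass, field


@dataclass
class MeteredAllowance:
    monthly_allowance: float       # nominal allowance, in premium-request units
    max_calls_per_window: int      # separate short-term rate ceiling
    window_seconds: float
    used: float = 0.0
    _recent: deque = field(default_factory=deque, repr=False)  # timestamps of accepted calls

    def request(self, now: float, multiplier: float = 1.0) -> str:
        """Try one model call at time `now`; return 'ok' or the refusal reason."""
        # Forget calls that have aged out of the rate-limit window.
        while self._recent and now - self._recent[0] >= self.window_seconds:
            self._recent.popleft()
        if len(self._recent) >= self.max_calls_per_window:
            return "rate_limited"  # refused even though allowance may remain
        if self.used + multiplier > self.monthly_allowance:
            return "allowance_exhausted"
        self.used += multiplier
        self._recent.append(now)
        return "ok"


if __name__ == "__main__":
    # An agentic burst of 12 calls, one per second, against a 10-calls-per-minute ceiling.
    quota = MeteredAllowance(monthly_allowance=300, max_calls_per_window=10, window_seconds=60)
    results = [quota.request(now=float(t)) for t in range(12)]
    print(results.count("ok"), results[-1], quota.monthly_allowance - quota.used)
    # prints: 10 rate_limited 290.0
```

In this toy run the user still has 290 of 300 units left and is refused anyway, which is exactly the kind of second limit that feels hidden when it is not surfaced alongside the headline allowance.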

This is the fundamental challenge for AI coding tools. The more ambitious they become, the less they resemble autocomplete and the more they resemble cloud compute wearing a friendly face. That means customers will judge them by the standards of cloud compute: transparent pricing, predictable capacity, clear quotas, strong SLAs, and no surprises when work is on the line.
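Cloud SLAs are usually quoted as an uptime percentage, which is easier to judge once it is turned into a downtime budget: allowed downtime = (1 - uptime) × period. The sketch below assumes a 30-day month; real SLAs define their own measurement windows, exclusions, and credits.

```python
"""Convert an uptime percentage into an allowed-downtime budget (30-day month assumed)."""
from decimal import Decimal

MINUTES_PER_30_DAY_MONTH = Decimal(30 * 24 * 60)  # 43,200 minutes; assumed window


def downtime_budget_minutes(uptime_percent: str) -> Decimal:
    """Minutes of downtime permitted per 30-day month at the given uptime.

    The percentage is passed as a string so Decimal keeps it exact
    ("99.9" rather than a binary floating-point approximation).
    """
    unavailable_fraction = (Decimal(100) - Decimal(uptime_percent)) / Decimal(100)
    return unavailable_fraction * MINUTES_PER_30_DAY_MONTH


if __name__ == "__main__":
    for sla in ("99.5", "99.9", "99.95", "99.99"):
        print(f"{sla}% uptime -> {downtime_budget_minutes(sla):.2f} minutes/month")
```

Each additional "nine" cuts the budget by a factor of ten: 99.9% allows about 43 minutes a month, while 99.99% allows under five.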

Microsoft Keeps Mistaking Control for Confidence

The same pattern shows up on Windows. Microsoft’s account push is rational from the company’s perspective, but it often lands as distrust from the user’s perspective. If local accounts are made harder to find, if setup flows steer users toward cloud backup and subscriptions, and if the operating system keeps reintroducing Microsoft services after users have declined them, the message is clear: the machine may be yours, but the preferred route through it is Microsoft’s.

That tension is not new. Windows has been a commercial platform for decades, and Microsoft has always used it to promote adjacent services. The difference now is that AI and cloud identity make the boundaries feel more consequential. A browser prompt is one thing. An operating system that wants to mediate your files, credentials, applications, search, code, and agent permissions is another.

The company’s defenders will argue that most users benefit from defaults. They are right, up to a point. Secure sign-in, cloud recovery, passwordless authentication, device encryption, backup, and app reputation systems can protect people from real harm. The trouble begins when Microsoft uses security language, convenience language, and monetization incentives in the same breath until users can no longer tell which motive is driving which prompt.

That ambiguity corrodes trust. A user who believes a setting exists for safety will accept friction. A user who believes the same setting exists to improve subscription conversion will look for bypasses. Microsoft cannot secure Windows by making its own motives suspect.

Remote Desktop Shows the Cost of Security Without Grace

The April 2026 Remote Desktop changes are a useful example because the underlying goal is sound. Malicious RDP files can be used to trick users into connecting to hostile systems or exposing local resources. More explicit warnings and safer defaults make sense, particularly in an era when remote access remains a favorite path for attackers.

Yet enterprise IT does not live in a PowerPoint diagram. Remote Desktop is embedded in old workflows, RemoteApp deployments, admin habits, certificate chains, gateways, help desk scripts, and muscle memory. A security warning that appears in the wrong place, fails to respect known publishers, or trains users to click through yet another dialog can become a productivity incident and a security anti-pattern at the same time.
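The "exposing local resources" risk is visible in the .rdp file itself, a plain-text list of `name:type:value` properties. Below is a minimal audit sketch. It assumes the commonly documented redirection property names listed in the code, which should be checked against Microsoft's list of supported RDP properties for your client. The `audit_rdp` helper and the choice of what to flag are hypothetical, and properties absent from the file fall back to client defaults, which this sketch does not model.

```python
"""Hypothetical audit of a .rdp file for local-resource redirection."""
from pathlib import Path

# String properties: a non-empty value (e.g. "*") redirects something local.
STRING_REDIRECTS = {
    "drivestoredirect": "local drives",
    "devicestoredirect": "plug and play devices",
    "camerastoredirect": "cameras",
    "usbdevicestoredirect": "USB devices",
}
# Integer properties: a value of 1 enables the redirection.
INT_REDIRECTS = {
    "redirectclipboard": "clipboard",
    "redirectprinters": "printers",
    "redirectcomports": "serial/COM ports",
    "redirectsmartcards": "smart cards",
}


def parse_rdp(text: str) -> dict[str, tuple[str, str]]:
    """Parse 'name:type:value' lines into {name: (type, value)}."""
    props = {}
    for line in text.splitlines():
        parts = line.strip().split(":", 2)  # the value may itself contain ':'
        if len(parts) == 3:
            name, kind, value = parts
            props[name.lower()] = (kind, value)
    return props


def audit_rdp(text: str) -> list[str]:
    """Return findings worth a second look before connecting.

    Only explicitly set properties are checked; client defaults are not modelled.
    """
    props = parse_rdp(text)
    findings = [f"connects to: {props.get('full address', ('s', '<missing>'))[1]}"]
    for name, label in STRING_REDIRECTS.items():
        if props.get(name, ("s", ""))[1].strip():
            findings.append(f"redirects {label}")
    for name, label in INT_REDIRECTS.items():
        if props.get(name, ("i", "0"))[1].strip() == "1":
            findings.append(f"redirects {label}")
    if "signature" not in props:
        findings.append("file is unsigned")
    return findings


def read_rdp(path: Path) -> str:
    """Files saved by the Windows client are often UTF-16 with a BOM."""
    raw = path.read_bytes()
    if raw[:2] in (b"\xff\xfe", b"\xfe\xff"):
        return raw.decode("utf-16")
    return raw.decode("utf-8-sig")


if __name__ == "__main__":
    sample = "full address:s:rdp.example.com\nredirectclipboard:i:1\ndrivestoredirect:s:*\n"
    for finding in audit_rdp(sample):
        print(finding)
```

A check like this is the administrative side of the same trade-off: it surfaces what a file will expose without adding yet another dialog for users to click through.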

This is Microsoft’s recurring Windows problem in miniature. The company has to harden a vast legacy platform without breaking the organizations that rely on it. That requires not only better security engineering but better rollout empathy: clearer documentation before enforcement, better controls for administrators, fewer surprise regressions, and testing that reflects messy real-world estates rather than idealized lab setups.

The maddening part is that Microsoft knows this. Its Secure Future Initiative and Windows resiliency messaging acknowledge that the old balance between openness, compatibility, and safety has become untenable. But acknowledging the problem does not absolve the company when the fixes arrive as another round of prompts, breakage, and administrator spelunking.

Voluntary Exits Are Not a Reliability Strategy

The Register’s sharpest point is not that Microsoft likes AI too much. It is that Microsoft may be allowing the AI obsession to distort resource allocation and management judgment. When a company is simultaneously pushing AI everywhere, tightening AI usage limits, suffering reliability complaints on a foundational developer platform, and paying some employees to leave, outsiders are entitled to ask whether the institution is optimizing for the right things.

Voluntary redundancy programs are especially dangerous in engineering organizations. The people most able to leave are often the people with the most portable skills, the strongest networks, and the deepest confidence that they can find good work elsewhere. The people who remain may be talented and committed, but the organization risks losing the undocumented knowledge that keeps complex systems upright.

That knowledge is not glamorous. It is the engineer who knows why a certain queue behaves badly during regional failover. It is the team lead who remembers the migration that almost broke billing. It is the operations veteran who can tell the difference between a harmless spike and the first hint of a cascading outage. AI can summarize logs, suggest patches, and draft incident updates, but it cannot magically recreate institutional memory after management has made it optional.

This matters because reliability is cumulative. It is built by people who have lived through failures, retained the lessons, and earned the authority to say no to risky shortcuts. If those people are distracted, reassigned, burned out, or paid to walk away, the status page eventually tells the story.

The Developer Cloud Is No Longer a One-Horse Town

Microsoft also faces a competitive problem that did not exist in the same way a decade ago. GitHub remains dominant, but alternatives are credible. GitLab, self-hosted Forgejo and Gitea deployments, cloud-native CI systems, artifact registries, and sovereign development platforms all offer escape routes for organizations that decide GitHub has become too risky or too strategically entangled.

The same is true across the broader stack. Microsoft 365 has challengers. Azure has challengers. Copilot has challengers. Windows itself remains enormously sticky, but more workloads are browser-based, cloud-hosted, containerized, or developed on machines where the local OS matters less than the toolchain.

Digital sovereignty concerns add another accelerant. Governments and regulated industries are increasingly uncomfortable with strategic dependence on a handful of US cloud giants. That does not mean they will all abandon Microsoft tomorrow. It does mean that every outage, every licensing surprise, every AI land grab, and every account coercion becomes evidence in procurement arguments that Microsoft would rather not have.

GitHub is particularly exposed because it occupies a cultural role as well as a commercial one. If developers begin to see migration away from GitHub not as eccentric purism but as prudent resilience, Microsoft loses more than repository hosting revenue. It loses default status in the minds of the people who choose tomorrow’s platforms.

The Copilot Brand Is Carrying Too Much Weight

Part of Microsoft’s problem is semantic inflation. “Copilot” now means too many things: a coding assistant, a Windows sidebar, a Microsoft 365 helper, an Azure feature, a security product, a branding wrapper, and increasingly an agentic promise that software will do more on the user’s behalf. The word has become a strategic solvent, dissolving product boundaries in Microsoft’s favor.

That may be useful for marketing, but it raises expectations the underlying systems cannot always meet. If Copilot is everywhere, then Copilot’s failures also feel everywhere. A rate limit in GitHub, a hallucinated enterprise answer, a Windows prompt nobody asked for, or an agent permission scare all become part of the same public narrative: Microsoft is pushing AI faster than it is earning trust.

The company’s strongest AI products succeed when they are specific. Copilot helping with a code completion is easy to understand. Copilot summarizing a Teams meeting has an obvious use. Copilot explaining a PowerShell script can be genuinely helpful. The trouble begins when Microsoft treats AI as the answer to product strategy itself.

Users do not want a theological commitment to agents. They want the task to work. If AI helps, fine. If it adds cost, ambiguity, latency, or another failure mode, the magic evaporates quickly.

The Real SLA Is Emotional

Service-level agreements measure uptime, but platforms also have an emotional SLA. Users ask, implicitly: can I trust this thing not to waste my time? Can I predict its behavior? Can I explain it to my boss, my team, or my family? Can I opt out without being punished? Can I keep working when the cloud hiccups?

Microsoft’s recent week fails that test in several small but connected ways. Windows 11’s service nudges tell users that Redmond’s priorities may override their preferences. Remote Desktop’s security changes remind admins that even justified hardening can arrive with operational splinters. Copilot’s limits reveal that AI abundance has a meter attached. GitHub’s reliability complaints threaten the one Microsoft asset that developers most need to feel boring.

The company can recover, because it has recovered from worse. Microsoft’s enterprise strength has always been a peculiar mix of technical depth, partner gravity, and sheer institutional persistence. It can fix incidents, tune limits, improve documentation, and fund reliability work if it chooses to.

But the choice has to be visible. Trust is not restored by another keynote about agents. It is restored when users see Microsoft making the less flashy decision: slowing a rollout, preserving an escape hatch, staffing reliability, publishing clearer limits, or admitting that not every surface needs an AI upsell.

The Week’s Menu Leaves a Bitter Aftertaste

The lesson from this particular Microsoft wobble is not that AI is doomed, GitHub is collapsing, or Windows is suddenly unusable. It is that Microsoft is bundling too many trust-sensitive changes into a period when users are already primed to suspect the company’s motives.

- Microsoft’s Windows 11 account and service nudges are becoming a symbol of coercion even when individual features have defensible security or convenience arguments.

- GitHub’s reliability complaints are unusually serious because the platform functions as shared developer infrastructure, not merely as another Microsoft cloud service.

- Copilot’s usage limits show that AI coding tools are moving from flat-rate magic toward metered compute, and developers will demand cloud-grade transparency as a result.

- Remote Desktop’s April 2026 security prompts illustrate how even sensible hardening can damage trust if it lands without enough administrative polish.

- Microsoft’s AI push will be judged less by demos than by whether the company protects the boring reliability of the systems AI now depends on.
