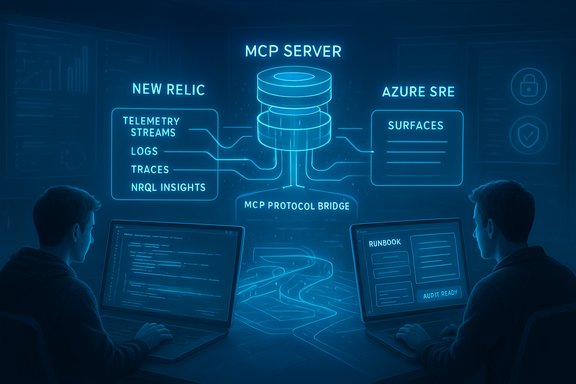

New Relic’s latest push tightens the link between application telemetry and the new generation of agentic AI by shipping an AI Model Context Protocol (MCP) Server in public preview and embedding observability data directly into Microsoft Azure operational surfaces — a move intended to cut context‑switching for engineers, accelerate incident response, and make AI-driven remediation safer and more auditable. The company says the MCP Server and related Agentic AI Monitoring features let AI agents, GitHub Copilot, Copilot Studio, and Microsoft Foundry access live New Relic telemetry so diagnostics, probable‑cause signals, and runbook steps appear inside the developer and SRE workflows they already use.


Background / Overview


The announcement sits at the intersection of three trends reshaping cloud operations: cloud providers adding “agentic” assistants inside their control planes, the rise of standards like the Model Context Protocol (MCP) to let agents call tools deterministically, and observability platforms evolving to provide model‑aware telemetry. New Relic’s MCP Server aims to be a protocol bridge that exposes New Relic’s telemetry, traces, logs, and NRQL‑driven insights to MCP‑compatible agents, eliminating the need for custom instrumentation each time a new agent or model is introduced. The company made the MCP Server available in public preview on November 4–5, 2025 and documents the onboarding steps, supported tools, and preview constraints in its product pages.

Why this matters now: enterprises are rapidly embedding models and multi‑agent workflows into production, and those agentic systems must access high‑fidelity telemetry to act correctly. Without tight observability integration, agents operate blind to production context, increasing the chance of bad fixes, cost surprises, or safety incidents. New Relic’s pitch is that feeding actionable observability into agent workflows reduces mean time to resolution (MTTR), lowers toil, and makes agentic automation auditable and traceable.

What New Relic announced: features and intent

New Relic’s November release bundles two closely related capabilities: Agentic AI Monitoring and the New Relic AI Model Context Protocol (MCP) Server in public preview. Together they aim to let agents query, interpret, and act on monitoring data as part of a structured, auditable workflow.

Key capabilities highlighted by New Relic:

- Instant MCP‑level tracing and visualization for MCP requests, including tool invocation chains and waterfall diagrams.

- Direct embedding of telemetry and performance metrics inside IDEs and platforms that host agents (GitHub Copilot, Copilot Studio, Microsoft Foundry), so developers and SREs see context without platform hopping.

- NRQL assistance that converts plain‑language queries into observability queries, enabling non‑expert users and agents to fetch relevant telemetry.

- Packaging of remediation hints and runbook steps so agents can present or trigger approved actions with traceable approvals and audit logs.

- Language agent support that arrives first in Python Agent 10.13.0, with more language agents promised.

Important vendor framing: New Relic frames this as a productivity and safety play — letting agents operate with richer situational awareness while preserving approval gates and audit trails so teams can scale agentic automation without abdicating control.

How the integration actually works — technical view

At a technical level the flow is straightforward but requires careful plumbing to preserve trust and scale:

- Telemetry ingestion: New Relic continues to collect metrics, traces, and logs from apps and infrastructure (APM, infrastructure agents, OpenTelemetry pipelines). This data is retained in the New Relic telemetry platform and correlated with metadata.

- MCP Server exposes a standardized tool manifest and structured endpoints that MCP‑capable agents can call to request context, run structured queries, or invoke preapproved runbook templates. The server converts natural‑language or structured requests into NRQL and returns time‑bound diagnostic payloads.

- Embedding in developer and portal surfaces — when an agent or developer requests context (for example, during an incident), the MCP Server returns causal chains, implicated resources, and suggested runbook steps. These payloads can be surfaced inside Microsoft Foundry, GitHub or the Azure portal so engineers don’t have to move between consoles.

- Gate, approve, act: automation is staged as recommend, then gate/approve, then act. Integration with identity and RBAC systems is expected (Microsoft Entra ID or equivalent) so agent actions are attributable and auditable. New Relic’s docs emphasize preserving human controls and audit trails during preview.
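To make the request side of this flow concrete, here is a minimal sketch of what an agent might send to an MCP server. MCP messages are JSON-RPC 2.0, and tools are invoked with the `tools/call` method. The tool name `run_nrql_query` and its `query` argument are assumptions for illustration only; the real tool names and argument schemas come from the server's own `tools/list` response, not from this article.

```python
import json
from itertools import count

# JSON-RPC request ids should be unique within a session.
_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool name and argument key, used only for illustration.
request = build_tool_call(
    "run_nrql_query",
    {
        "query": (
            "SELECT count(*) FROM TransactionError "
            "FACET appName SINCE 30 minutes ago"
        )
    },
)

print(json.dumps(request, indent=2))
```

The sketch only builds the payload. Transport, authentication, and the response format depend on the server and client in use and are left out here.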

Developer and SRE experience — what changes day‑to‑day

For engineers, the promise is tangible: fewer console hops, more conversational troubleshooting, and fast, context‑rich answers inside tools they use every day. In practical terms this can mean:

- When an alert fires, the Azure SRE Agent (or a Foundry‑hosted agent) can fetch the full traced request, the implicated services, and a ranked list of probable causes within seconds.

- Remediation suggestions (e.g., scale a deployment, restart a failing pod, flush a cache) can be proposed in the portal alongside the diagnostic evidence and the runbook template to execute the action.

- Developers authoring inside Visual Studio or Copilot Studio can query performance for recent deployments with natural language and receive NRQL‑backed answers without leaving the IDE.

Cost signals and commercial model — what to budget

Two components drive cost for agentic observability in Azure with New Relic in the loop:

- Azure agentic execution billing: Azure bills Azure Agent Units (AAUs) for the baseline agent presence and for active agent tasks. Microsoft’s published pricing model for the Azure SRE Agent lists a baseline of 4 AAUs per hour per agent and 0.25 AAUs per second for each active task executed by an agent; teams must convert AAUs into currency regionally and factor them into operational budgets. This is material for continuous monitoring or frequent automated tasks.

- Observability ingestion and retention — richer telemetry (traces at high cardinality, long retention windows, and additional MCP payloads) increases New Relic ingestion and storage costs under its usage‑based pricing model. Plan for increased telemetry volumes when enabling model‑level tracing and MCP servers.
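A rough worked example helps size the AAU side of the budget. The sketch below uses the two rates quoted above (4 AAUs per agent per hour and 0.25 AAUs per active task second). The pilot shape (two agents, 200 tasks averaging 90 seconds) and the price per AAU are placeholder assumptions; substitute the regional price and your own task volumes.

```python
# Rates as quoted in the article for the Azure SRE Agent pricing model.
BASELINE_AAU_PER_AGENT_HOUR = 4.0
ACTIVE_AAU_PER_TASK_SECOND = 0.25


def monthly_aau(agents: int, hours: float, active_task_seconds: float) -> float:
    """Return total AAUs: always-on baseline plus active task time."""
    baseline = agents * hours * BASELINE_AAU_PER_AGENT_HOUR
    active = active_task_seconds * ACTIVE_AAU_PER_TASK_SECOND
    return baseline + active


# Hypothetical pilot: 2 agents running for a 30-day month (720 hours),
# with 200 automated tasks that average 90 seconds each.
aau = monthly_aau(agents=2, hours=720, active_task_seconds=200 * 90)
# Baseline: 2 * 720 * 4 = 5760 AAUs. Active: 18000 * 0.25 = 4500 AAUs.

# Placeholder price, NOT a quoted rate; look up the regional AAU price.
PRICE_PER_AAU_USD = 0.10
cost = aau * PRICE_PER_AAU_USD

print(f"{aau:.0f} AAUs, roughly ${cost:,.2f} at the assumed price")
```

Note that in this hypothetical the always-on baseline is larger than the active task cost, which is why agent count matters as much as task frequency. New Relic ingestion and retention charges are separate and are not modeled here.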

Strengths: where New Relic’s approach is strong

- Single‑protocol bridge: MCP Server reduces custom integration work by presenting a single, standard tool surface for agents to call, making it easier to onboard new agents and models.

- Developer ergonomics: Embedding observability into the dev workflow (Copilot, VS, Foundry) addresses a real pain point: context‑switching. When telemetry follows the engineer into the IDE, diagnosing and patching incidents becomes faster.

- Auditability and staged automation: New Relic and Microsoft’s design both emphasize gate‑first automation — recommended fixes followed by approval gates and identity‑backed execution. That model helps tame many governance concerns enterprises have about autonomous agents.

- Market timing: with MCP gaining vendor adoption and platforms like Microsoft Foundry and Azure SRE Agent maturing, New Relic’s MCP Server arrives when standards and cloud surfaces are available to consume it.

Risks, unknowns and critical caveats

No product exists in a vacuum; the new capabilities bring operational and security tradeoffs that organizations must manage.

- Model hallucination and incorrect remediation: agents can present plausible‑sounding but incorrect root‑cause analyses or remediation steps. If an agent triggers an automated action without robust validation, the result can be downtime or data loss. Mitigation: keep human‑in‑the‑loop for higher‑risk actions, simulate playbooks, and require confidence scores and evidence before any automated change.

- Agent compromise and expanded attack surface: exposing telemetry and action primitives to agents increases exposure points. An attacker who compromises an agent’s identity or an MCP endpoint could attempt destructive actions. Harden MCP servers, use Entra‑backed short‑lived credentials, and monitor agent behavior for anomalies.

- Cost surprise from AAUs and telemetry: the AAU billing model plus higher telemetry ingestion can produce unexpectedly large bills if automation is allowed to run unchecked. Model costs during a pilot and set budgets/alerts for AAU consumption.

- Integration scope and vendor “first” claims: marketing‑forward claims like “first observability platform to integrate” should be validated technically during procurement. Confirm whether integrations are API‑level exports, deep bi‑directional workflows, or shallow telemetry pulls — the operational value varies by integration depth.

How this compares across the ecosystem

New Relic is not the only vendor moving telemetry toward agentic surfaces. Microsoft and other observability vendors are building similar integration patterns: feeding telemetry and diagnostics into Azure SRE Agent or Foundry so agent suggestions are grounded in production evidence. Public previews and product demonstrations from other vendors illustrate the same architectural pattern: telemetry ingestion, causal analysis, and packaging of remediation for provider‑native agents. These parallel efforts confirm a market shift toward embedded, agent‑aware observability.

Practical implication: organizations should evaluate vendor integrations not only on feature lists but on integration depth, governance primitives (RBAC, audit logs), and evidence that causal analysis delivers higher‑confidence remediations rather than noise.

Practical checklist — how to pilot New Relic MCP Server and Azure agentic workflows

- Start with a narrow, high‑ROI use case — e.g., an idempotent remediation like scale up/down or cache purge.

- Model AAU consumption and New Relic ingestion costs for the pilot scope and set hard spending limits in Azure and New Relic before enabling active automation.

- Configure identity and RBAC: provision Entra Agent IDs (or equivalent), apply least privilege, and require approval gates for any write actions.

- Instrument thorough tracing and enable MCP‑aware spans for agent interactions; validate trace continuity end‑to‑end.

- Run chaos and compromise simulations to validate rollback procedures if an agent misbehaves; ensure immutable audit logs are preserved.

- Measure outcomes: MTTR, frequency of false‑positive remediation attempts, operational hours reclaimed, and cost delta. Use these metrics to decide whether to scale.
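The outcome metrics in the last checklist item are simple to compute once incidents are recorded consistently. The following is a minimal sketch that compares MTTR before and during a pilot and derives a false‑positive remediation rate. The `Incident` record shape and the sample numbers are illustrative assumptions, not data from New Relic or Microsoft.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Incident:
    opened: datetime
    resolved: datetime
    remediation_attempts: int = 0         # actions an agent proposed or ran
    remediation_false_positives: int = 0  # attempts that missed the real cause


def mttr(incidents: list[Incident]) -> timedelta:
    """Mean time to resolution across a non-empty list of incidents."""
    total = sum((i.resolved - i.opened for i in incidents), timedelta())
    return total / len(incidents)


def false_positive_rate(incidents: list[Incident]) -> float:
    """Share of remediation attempts that did not address the cause."""
    attempts = sum(i.remediation_attempts for i in incidents)
    misses = sum(i.remediation_false_positives for i in incidents)
    return misses / attempts if attempts else 0.0


t0 = datetime(2025, 11, 10, 9, 0)

# Made-up sample data: two incidents before the pilot, two during it.
baseline = [
    Incident(t0, t0 + timedelta(minutes=60)),
    Incident(t0, t0 + timedelta(minutes=40)),
]
pilot = [
    Incident(t0, t0 + timedelta(minutes=30), 3, 1),
    Incident(t0, t0 + timedelta(minutes=20), 1, 0),
]

print("Baseline MTTR:", mttr(baseline))
print("Pilot MTTR:", mttr(pilot))
print("False-positive remediation rate:", false_positive_rate(pilot))
```

With only a handful of incidents the comparison is noisy, so it is worth agreeing on a minimum sample size before using these numbers to justify a wider rollout.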

What New Relic’s announcement does — and does not — prove yet

Verified claims:

- New Relic published an MCP Server public preview and documentation describing setup, capabilities, and language timing.

- New Relic’s press materials describe Agentic AI Monitoring and position the MCP Server as a protocol bridge that connects New Relic telemetry with popular agent surfaces (GitHub Copilot, Microsoft Foundry).

- Microsoft’s Azure SRE Agent pricing and AAU model are publicly documented and should be considered when budgeting agentic operations.

Claims that still need validation:

- Specific MTTR reductions attributable to the integration will vary widely by workload and by how well runbooks and guardrails are designed; vendors often cite optimistic pilot results, so insist on vendor‑led proof‑of‑value trials.

- The resilience of the end‑to‑end pipeline under high telemetry cardinality (large AKS clusters, heavy LLM logging) should be validated; theoretical scaling is one thing, field performance is another.

Governance and security hardening — recommended guardrails

- Enforce least‑privilege for agent identities; rotate short‑lived credentials and log every agent invocation.

- Maintain immutable audit trails for every automated action and link them to telemetry that justified the action.

- Version control runbooks and test them in CI/CD pipelines with simulation and rollback validation.

- Use anomaly detection around MCP server inputs to catch abnormal agent behavior (rate spikes, unusual tool invocation patterns).

- Treat agentic tooling as production‑grade code: review, security‑scan, and include it in SRE on‑call playbooks.
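Two of these guardrails, approval gates and tamper‑evident audit trails linked to evidence, can be sketched in a few lines. The example below is a generic illustration, not New Relic’s or Microsoft’s implementation: each log entry commits to the hash of the previous entry, so editing an earlier record breaks verification. The function and field names (`execute_if_approved`, `evidence_id`, and so on) are hypothetical.

```python
import hashlib
import json
from typing import Optional

GENESIS = "0" * 64  # placeholder hash for the first entry's predecessor


class AuditLog:
    """Append-only log where each entry commits to the previous hash."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(prev: str, record: dict) -> str:
        body = json.dumps(record, sort_keys=True)
        return hashlib.sha256((prev + body).encode("utf-8")).hexdigest()

    def append(self, record: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        digest = self._digest(prev, record)
        self.entries.append({"prev": prev, "record": record, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute the chain; any edited record or link fails the check."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev:
                return False
            if self._digest(prev, entry["record"]) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


def execute_if_approved(
    action: str, evidence_id: str, approver: Optional[str], log: AuditLog
) -> bool:
    """Log every request; allow the action only with a named approver."""
    status = "approved" if approver else "blocked"
    log.append(
        {
            "action": action,
            "evidence": evidence_id,  # link to the telemetry that justified it
            "approver": approver,
            "status": status,
        }
    )
    return approver is not None


log = AuditLog()
print(execute_if_approved("restart pod checkout-7f", "trace-123", None, log))
print(execute_if_approved("restart pod checkout-7f", "trace-123", "alice", log))
print(log.verify())
```

A hash chain only makes tampering detectable. Real immutability still needs the log to be stored somewhere the agent identity cannot rewrite, such as write‑once storage or a separate logging account.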

Final assessment — why this matters to WindowsForum readers

New Relic’s MCP Server public preview and Agentic AI Monitoring are practical moves to embed observability directly into the workflows where developers and SREs already operate. For Azure‑centric organizations, the combination of New Relic’s telemetry and Microsoft’s agentic control plane (Azure SRE Agent and Foundry) reduces operational friction and makes it easier to close the loop from detection to remediation. That potential will be realized only if teams model costs (AAUs and telemetry), harden identity and approvals, and run rigorous pilots that measure MTTR and automation safety.

This announcement is part of a broader industry shift: telemetry is no longer only diagnostic data for humans; it is material agents will rely on to reason and act. Observability vendors and cloud providers are racing to make that exchange efficient, safe, and auditable. New Relic’s approach, a standardized MCP Server plus in‑context diagnostics inside the developer and portal surfaces, maps directly to that trend and gives teams new ways to move from triage to resolution more quickly. Enterprises should proceed, but with the discipline that large‑scale automation demands: conservative pilots, transparent cost modeling, strict governance, and robust tests for model reliability before broad rollout.

Conclusion

New Relic’s new MCP Server and Agentic AI Monitoring formalize an emergent operational pattern: feed high‑confidence observability into agentic surfaces so agents and humans can collaborate inside unified workflows. For Azure customers, the integration simplifies context retrieval and offers a path to faster incident response, but it also elevates the need for vigilance around cost, security, and model correctness. The technology is promising and timely, yet its promise depends on disciplined adoption — precise pilots, strong identity and approval controls, and careful validation of both technical scale and operational outcomes. Organizations that treat this as a program (not a product) — and that pair experimentation with governance — will capture the productivity benefits while keeping the new risks in check.

Source: DevOps.com, “New Relic Enhances Azure Integration with AI-Powered Observability Tools”