Nottinghamshire County Council is moving from cautious experimentation to structured scale-up in its use of artificial intelligence, proposing a formal three‑tier governance model to oversee growing deployments — from AI‑assisted transcription in adult social care to wider trials of Microsoft Copilot across directorates — while warning that legal, ethical and cyber risks must be actively managed as part of every business case.

Source: West Bridgford Wire New governance model proposed as Nottinghamshire council expands AI use | West Bridgford Wire

Background

AI is not a single silver‑bullet technology but a toolbox: machine learning for pattern detection and prediction, and generative AI for drafting, summarising and transforming information. The council’s recent overview paper to committee frames the current activity in exactly these terms: pilots that automate administrative tasks, experiments that summarise meeting content, and horizon‑scanning for predictive uses in demand management and planning.

Public bodies in the UK are adopting AI at pace, but the legal and regulatory environment has also changed. The Procurement Act 2023 and the subsequent Procurement Regulations 2024 set new duties for contracting authorities under the reformed regime, and public organisations must observe them when buying AI‑based services. The Information Commissioner’s Office (ICO) has published specific guidance on AI and data protection, stressing lawful basis, data minimisation, transparency and the need for impact assessments where AI touches personal data. These three threads (procurement, data protection and the operational reality of probabilistic AI outputs) form the governance bedrock the council’s report addresses.

What Nottinghamshire is proposing

The three‑tier governance model

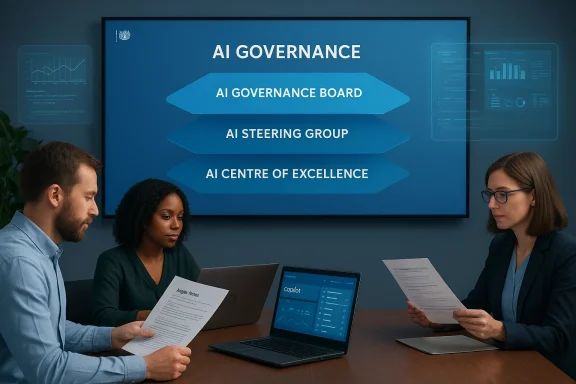

The council’s paper sets out a three‑tier structure to move from programme boards to a sustained governance fabric:

- AI Governance Board: strategic oversight, ethics review and risk appetite decisions.

- AI Steering Group: translating strategy into business priorities and approving projects against benefits cases.

- AI Centre of Excellence (CoE): technical assurance, standards, procurement support, and staff training.

Where AI is already being used

Nottinghamshire’s most advanced live use case is the Magic Notes transcription and summarisation tool in Adult Social Care and Health. After an early 2025 pilot, the council approved a wider rollout in September 2025. Magic Notes captures recorded assessment conversations, produces verbatim transcripts and generates structured summaries that practitioners review before uploading records to the Mosaic case management system. The council reports measurable time and quality improvements from initial surveys taken before and after rollout.

In parallel, the council ran a Microsoft Copilot pilot involving approximately 300 users. Internal analysis logged a total estimated time saving of 378.3 hours across 182 recorded usage entries, with a median saving of 1.33 hours per entry and an average productivity gain of 72 per cent for the recorded activities. The council is using these figures to build business cases for formal adoption where appropriate. Meetings and automated note‑taking represented the largest chunk of those time savings.
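As a quick sanity check on the Copilot figures above, the short Python sketch below derives the mean saving per recorded entry from the reported total and compares it with the reported median. The variable names are illustrative, and the interpretation is an assumption rather than anything the council's paper states: a mean noticeably above the median is consistent with a handful of large entries pulling the total up, so the headline total may overstate what a typical entry saved.

```python
# Figures as reported from the council's internal analysis of the Copilot pilot.
total_hours_saved = 378.3
recorded_entries = 182
reported_median_hours = 1.33

# Mean saving per recorded usage entry (not per user: roughly 300 users
# took part, but only 182 entries were logged).
mean_hours_per_entry = total_hours_saved / recorded_entries

# A ratio above 1 means the mean sits above the median.
mean_to_median_ratio = mean_hours_per_entry / reported_median_hours

print(f"Mean saving per entry: {mean_hours_per_entry:.2f} hours")
print(f"Mean / median ratio: {mean_to_median_ratio:.2f}")
```

On these numbers the mean is a little over two hours per entry, roughly one and a half times the median, which is one reason a business case might quote the median alongside the total.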

What’s on the exploration list

The report marks several areas of investigation rather than committed projects:

- Multi‑agency data analysis for earlier identification of families needing support.

- AI‑assisted scheduling and draft care plan generation.

- Digital assistants for SEND (Special Educational Needs and Disabilities) processes and Education, Health and Care Plan (EHCP) management.

- Community insight dashboards and predictive planning/infrastructure forecasting.

The evidence — what the pilots actually delivered

Quantified outcomes matter for councils under financial pressure. Nottinghamshire’s internal metrics for Magic Notes and Copilot are presented as early, pilot‑level evidence but show notable operational improvements:

- Staff spending more than half their time on administration fell from 56% to 23%.

- Staff taking more than an hour to write notes for a single visit fell from 59% to 28%.

- Notes submitted within one day rose from 34% to 73%.

- The proportion of staff rating the quality of their conversations at eight out of ten or higher rose from 35% to 72%.
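The before-and-after survey figures in the list above can be read either as percentage-point changes or as relative changes, and the two are easy to confuse. The sketch below is only an illustration using the four reported pairs; the labels and the `change` helper are hypothetical names, not part of the council's analysis.

```python
# (before %, after %) pairs as reported in the survey results above.
survey_results = {
    "more than half of time on administration": (56, 23),
    "more than an hour to write notes for one visit": (59, 28),
    "notes submitted within one day": (34, 73),
    "conversation quality rated 8/10 or higher": (35, 72),
}


def change(before, after):
    """Return (percentage-point change, relative change in per cent)."""
    point_change = after - before
    relative_change = (after - before) / before * 100
    return point_change, relative_change


for label, (before, after) in survey_results.items():
    points, relative = change(before, after)
    print(f"{label}: {points:+d} points ({relative:+.0f}% relative)")
```

Stating which of the two measures is being quoted keeps later business cases comparable with these pilot results.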

Why the council’s governance choices matter

Legal and procurement constraints

Procurement is a material operational control here. The Procurement Act 2023 introduced a new procurement framework, effective from 24 February 2025, and the Procurement Regulations 2024 replaced earlier statutory rules. Public bodies must factor these changes into supplier selection, contract terms and KPI obligations. Any AI purchases will therefore need documented procurement routes, explicit contract clauses on data use, exportability, IP and incident response, and published performance metrics where required by the new regime.

Data protection and AI‑specific obligations

AI projects that ingest or generate personal data demand close attention under the UK GDPR. The ICO’s guidance emphasises lawful basis, particularly for public bodies that commonly rely on Article 6(1)(e) (public task), and it stresses that automated decision‑making features or inferences about individuals may trigger additional safeguards. The council notes this and requires DPIAs, human review of outputs and configuration controls for tenant data, an approach consistent with ICO expectations.

Operational security and supply‑chain risk

Generative agents and productivity copilots need access to organisational content to be useful, but that access is also the core governance challenge. Councils must apply role‑based permissions, conditional access policies and tenant‑level data loss prevention (DLP) so that copilots and transcription services only access appropriate material. The technical literature on Copilot governance stresses these operational controls, including thorough logging of prompts and outputs so that decisions can be audited, and warns against assuming hyperscaler defaults provide sufficient containment without testing. Nottinghamshire’s CoE remit to create standards and run technical assurance is therefore practical and necessary.

Strengths of the council’s approach

- Practical, benefits‑led pilots: The council has focused pilots on high‑value, low‑risk tasks such as note capture and meeting summarisation, where human verification is straightforward and measurable time savings are plausible. This avoids the classic “big‑bang” risk of trialling AI on mission‑critical statutory decisions.

- Clear governance signal: Proposing an AI Governance Board, Steering Group and CoE shows the authority understands AI is an organisational change problem as much as a technical one. Centralised policy combined with local accountability is a common pattern that reduces inconsistent ad‑hoc adoption.

- Legal and procurement awareness: The report explicitly maps future procurements to the Procurement Act 2023 and Procurement Regulations 2024, and commits to UK GDPR compliance — a necessary stance that improves defensibility for decisions affecting sensitive datasets.

- External engagement: Participation in national sector groups — for example the Local Government Association AI group — increases access to peer learning, standard templates and shared red‑teaming resources, reducing reinvention and strengthening cross‑authority comparability.

Risks and gaps the council must close

No pilot is risk‑free. The report flags several hazards that deserve sharper operational commitments.

1) Algorithmic bias and unfairness

AI models trained or tuned on historical case data can replicate structural bias, potentially skewing prioritisation of support or predictive flags that inform interventions. The council must mandate independent fairness testing, dataset provenance, and formal validation cycles as part of each rollout. These are not optional for systems affecting children’s services, adult social care, or SEND processes.

2) Hallucinations and factual errors

Generative systems create plausible but false content. Councils must treat outputs as drafts requiring human verification, and ensure audit trails can link every auto‑generated sentence back to source documents or the audio segment that informed it. The council’s current practice of requiring practitioners to review Magic Notes summaries before uploading them to Mosaic is sound; however, the authority should codify acceptance checks and sampling‑based QA to detect degradation over time.

3) Over‑reliance and deskilling

Time savings can mask long‑term risks: as staff rely more on automated drafts, critical skills like clinical judgement, record‑writing nuance and escalation instincts can atrophy. Training and role redesign must accompany automation to maintain professional capacity and to ensure that responsibility remains with named practitioners rather than an “AI” artifact.

4) Supply chain and vendor lock‑in

Deeply embedding a single cloud vendor or third‑party transcription provider increases negotiation dependency and migration cost. Procurement terms must include exportable audit logs, defined data residency, contractual indemnities for data breaches, and clear exit plans for moving data and models. The council’s alignment to Procurement Act requirements is a good start; the next step is robust commercial drafting and technical validation of vendor claims.

5) Cybersecurity and prompt‑injection threats

Agents and copilots introduce new attack surfaces: compromised connectors, stolen tokens and prompt‑injection attacks can exfiltrate sensitive records. Technical assurance by the CoE must include adversarial testing, endpoint detection tuned for agent behaviour and rapid revocation processes for misbehaving agents.

Practical checklist for moving from pilot to safe production

The council’s paper is aspirational about the future. To operationalise that ambition, the following sequence of steps is essential:

- Complete Data Protection Impact Assessments (DPIAs) for every service that uses personal or special category data and publish summary mitigations.

- Require vendor evidence: SOC2/ISO artifacts, sample audit exports, provenance logs and defined incident playbooks as pre‑conditions to contract award.

- Define outcome KPIs and an exit plan for every proof of value (6–12 week proofs with measurable success criteria).

- Mandate “human in the loop” sign‑off points for any output that affects statutory decisions, care plans, or legal records.

- Build continuous monitoring for model drift, latency anomalies and accuracy regressions; instrument endpoints with immutable logs.

- Run red‑team tests for prompt injection, data exfiltration scenarios and adversarial inputs before wider rollouts.

- Publish a clear staff policy and training programme, emphasising when to use AI, verification duties and how to escalate disagreements or errors.
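One way to approach the checklist item on immutable logs is a hash-chained audit trail, in which each entry stores the hash of the previous one so that later edits become detectable. The Python sketch below is a minimal illustration under stated assumptions: the field names, the `append_entry` and `verify_chain` helpers and the sample payloads are all hypothetical, and it does not describe how the council or any vendor implements logging. A hash chain makes a log tamper-evident rather than truly immutable; production systems would typically add write-once storage or external anchoring.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(payload: dict, prev_hash: str) -> str:
    """Hash the previous entry's hash together with this entry's payload."""
    body = json.dumps(payload, sort_keys=True, ensure_ascii=False)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(log: list, payload: dict) -> dict:
    """Append a payload to the log, chaining it to the previous entry."""
    prev_hash = log[-1]["entry_hash"] if log else GENESIS_HASH
    entry = {
        "payload": payload,
        "prev_hash": prev_hash,
        "entry_hash": _digest(payload, prev_hash),
    }
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; return False if any entry was altered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["entry_hash"] != _digest(entry["payload"], prev_hash):
            return False
        prev_hash = entry["entry_hash"]
    return True


# Hypothetical entries: an AI draft followed by a human sign-off event.
audit_log = []
append_entry(audit_log, {"tool": "summariser", "prompt": "Summarise visit notes",
                         "output": "Draft summary"})
append_entry(audit_log, {"tool": "summariser", "event": "human_sign_off",
                         "reviewer": "practitioner-001"})
print(verify_chain(audit_log))  # True: the log is untouched

# Editing an earlier entry after the fact breaks the chain.
audit_log[0]["payload"]["output"] = "Edited after the fact"
print(verify_chain(audit_log))  # False: tampering is detected
```

Recording the human sign-off as its own chained entry, as in the second record, also gives auditors a verifiable link between an AI draft and the named practitioner who approved it.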

Cross‑authority perspective: how other councils are navigating the same terrain

Nottinghamshire’s experience is consistent with other UK local authorities that have gone public about similar tools. Buckinghamshire Council, for example, has reported Copilot‑assisted minute‑taking and Magic Notes‑style pilots that project large per‑practitioner weekly time savings, evidence that ambient transcription and meeting summarisation are high‑value, low‑risk early use cases when governance is in place. These comparable local outcomes strengthen Nottinghamshire’s choice of first use cases but underline the need for shared sector standards on metrics and auditability.

Critical appraisal: where the council gets it right, and where it must sharpen

Nottinghamshire’s report strikes a sensible balance: it avoids hype, prioritises business cases and insists on compliance with the new procurement and data protection rules. Proposing an AI CoE alongside strategic oversight structures is a practical, serviceable model that aligns policy with delivery.

That said, some aspects demand more specificity:

- The paper would benefit from a published model inventory and risk classification register — documents regulators increasingly expect as part of audit trails. Without them, board‑level oversight risks being a high‑level checkbox rather than an operational control.

- Performance baselines for model accuracy, measured error rates for Magic Notes summaries, and a statistical sampling plan for QA should be publicly summarised to support transparency and scrutiny from councillors and the public. The current survey results are promising but require ongoing monitoring and publication cadence to sustain trust.

- Procurement commitments must be operationalised into contract templates that secure exportability of logs, data residency guarantees, and clear indemnity clauses — all features highlighted by procurement guidance under the Procurement Act. The council signals intent but must follow through in practice.
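The statistical sampling plan for QA mentioned in the list above could start from a standard sample-size calculation for estimating a proportion, followed by a reproducible random draw of records for human review. The sketch below is an assumption-laden illustration, not the council's method: the 5% margin, the 95% confidence level (z = 1.96), the conservative 50% expected error rate, the monthly volume of 1,000 summaries and the function names are all hypothetical choices.

```python
import math
import random


def sample_size(population: int, margin: float = 0.05, z: float = 1.96,
                expected_rate: float = 0.5) -> int:
    """Sample size for estimating a proportion, with finite population correction."""
    # Standard formula for an effectively infinite population.
    n0 = (z ** 2) * expected_rate * (1 - expected_rate) / margin ** 2
    # Adjust downwards when the population of summaries is small.
    n = n0 / (1 + (n0 - 1) / population)
    return math.ceil(n)


def draw_sample(record_ids, size: int, seed: int) -> list:
    """Draw a reproducible simple random sample of record IDs for review."""
    rng = random.Random(seed)  # fixed seed so auditors can re-create the draw
    return sorted(rng.sample(list(record_ids), size))


monthly_summaries = 1000  # hypothetical monthly volume of AI-generated summaries
n = sample_size(monthly_summaries)
review_ids = draw_sample(range(monthly_summaries), n, seed=2025)
print(f"Review {n} of {monthly_summaries} summaries; first IDs: {review_ids[:5]}")
```

Publishing the chosen margin, confidence level and seed alongside the measured error rate would let councillors and auditors reproduce the sample and compare results from one period to the next.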

What to watch next

- Committee scrutiny on 12 March will be the near‑term test of political and ethical buy‑in; councillors should press for published KPIs, DPIA summaries and formal red‑team results.

- Procurement milestones: any supplier decisions for wider AI deployments will be tested against the Procurement Act 2023 requirements and the Procurement Regulations 2024 — the council should expect queries on KPI publication, supplier due diligence and social value commitments.

- Sector alignment: if the Local Government Association and national forums produce standard templates for audits, provenance capture and fairness testing, Nottinghamshire should adopt these to reduce bespoke work and increase inter‑authority comparability.

Conclusion

Nottinghamshire County Council’s shift from pilot experiments to a formally structured governance model is an appropriately measured response to the twin realities of opportunity and risk inherent in public‑sector AI. The authority’s focus on productivity gains, demonstrated by the Magic Notes and Copilot pilot metrics, is pragmatic and mirrors sector patterns where transcription and meeting summarisation yield tangible time savings. Yet the paper’s success depends on disciplined follow‑through: codified DPIAs, rigorous procurement terms under the new Procurement Act regime, technical red‑teaming and continuous monitoring to manage bias, hallucination and cyber threats.

If implemented with the technical diligence the report promises (a staffed Centre of Excellence, enforceable procurement clauses, independent validation, and mandatory human sign‑off for statutory outputs), Nottinghamshire can realise meaningful efficiency gains without eroding professional accountability or exposing residents’ data. The next months will show whether the council converts the pilot figures into durable, governed practice, and whether local government more widely can turn the potential of AI into safe, demonstrable public value.
