Nvidia and Microsoft are converging on the same strategic prize from opposite directions: the agentic AI coordination layer that sits between raw model inference and enterprise workflow execution. Nvidia’s bet is that the winners of the next AI era will own the underlying compute stack, including the CPUs that increasingly manage orchestration, state, memory, and tool routing for agents. Microsoft’s bet is that value will accrue to an open, interoperable platform that abstracts infrastructure and lets customers mix models, connectors, and governance policies without being locked into a single hardware path. The clash matters because it is not just about chips or cloud services; it is about who controls the system of record for autonomous AI work.

The move from chat-style language models to autonomous agents is changing the shape of AI infrastructure in a way that is easy to underestimate. In the first phase of generative AI, the GPU was the obvious hero because model inference dominated the workload. In the next phase, the agent must reason across multiple steps, maintain state, call tools, fetch context, and enforce policy, which shifts more of the workload toward the CPU coordination layer and the orchestration software that wraps it.

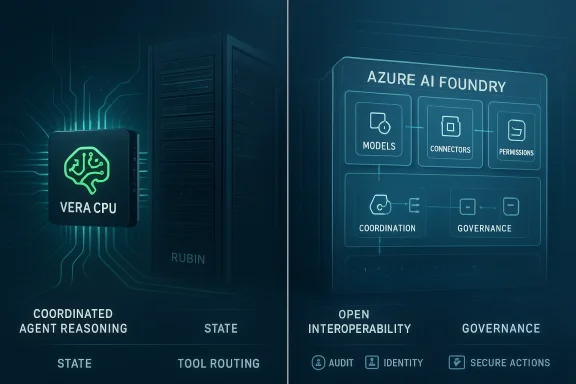

That shift explains why infrastructure vendors are now talking about agentic AI as a full-stack problem rather than a model problem. Microsoft has repeatedly described the emerging app stack as one that needs memory, entitlements, action spaces, observability, and runtimes for orchestrating multiple agents, not just a better model endpoint. Nvidia, meanwhile, is pushing an integrated platform story around its new Vera CPU and Rubin architecture, which it says are designed to accelerate agentic reasoning and large-scale inference.

This is also a market structure story. Enterprises do not merely want faster model output; they want dependable, governed systems that can operate across business processes. That is why platforms such as Azure AI Foundry emphasize model choice, secure grounding, and governance, while Nvidia is trying to make the hardware substrate itself the default place where agentic workloads are optimized. Those are very different routes to the same enterprise budget.

The Bitget framing is directionally useful, but it should be read as a strategic interpretation rather than a settled industry fact. The strongest evidence available today shows that both companies are already building around the agentic stack from their own natural strengths. Nvidia is extending its chip and system dominance into orchestration-adjacent infrastructure, and Microsoft is expanding its cloud, platform, and governance layers so customers can build agents across heterogeneous models and systems.

This is why orchestration is emerging as the new complexity layer. Developers now need mechanisms for task decomposition, durable state, tool invocation, identity, and observability. Microsoft’s recent work on Foundry, Agent 365, and the Microsoft Agent Framework reflects exactly that shift, and so does Nvidia’s emphasis on AI factories, in-network compute, and system-level optimization.

That does not mean the GPU becomes less important. It means the AI workload becomes more heterogeneous, with the GPU still doing heavy inference while the CPU handles the system logic around it. In practical terms, the enterprises building agentic systems will care less about a single benchmark and more about end-to-end throughput, latency under tool use, and reliability under multi-step workflows.

This is also where reliability becomes a buying criterion. A demo agent is easy to build, but a production agent that can survive permissions changes, stale context, and process exceptions is much harder. The more the market learns this lesson, the more value migrates from model novelty to infrastructure trust.

This kind of vertical integration is attractive because it promises performance, simplicity, and tighter optimization. If a customer buys into Nvidia’s stack, the company can align CPU, GPU, networking, memory, and software assumptions in a way that competing vendors often cannot match. That is especially valuable when agentic systems become more complex and when small inefficiencies at the orchestration layer translate into real cost at scale.

It also raises the lock-in question. If the orchestration path, the networking path, and the hardware path are all optimized for Nvidia, customers may find themselves with excellent performance but reduced portability. That trade-off is not automatically bad, but it does mean the buyer is renting a very opinionated architecture.

At the same time, Nvidia’s success creates a strategic tension. The more it expands vertically, the more it competes with the very clouds and platform players that also depend on its chips. That tension is manageable when demand is exploding, but it could become more visible if enterprise procurement becomes more selective or if alternative CPU and AI infrastructure vendors gain enough momentum.

This approach is attractive to enterprises because it reduces dependency risk. If one model underperforms, or if a new open model becomes better suited to a workflow, developers can change course without rebuilding the whole stack. Microsoft has also emphasized open protocols and agent interoperability, which reinforces its claim that the future of agents should be composable rather than closed.

This matters because enterprises do not buy AI in a vacuum. They buy it inside policy environments, audit regimes, and compliance obligations. Microsoft is betting that a platform that makes those constraints easier to manage will win even if a more vertically integrated stack delivers better raw performance.

The competitive advantage here is flexibility at scale. If Microsoft can make its open model catalog, orchestration runtime, and governance tooling feel integrated enough, it may capture the enterprise layer above the infrastructure war. In that scenario, the company would not need to out-chip Nvidia; it would only need to become the default operating environment for agents.

That means the market is still in a proof phase. Buyers are asking not only whether an agent can complete a task, but whether it can do so consistently, safely, and under governance. The platform that wins will likely be the one that makes those requirements feel routine rather than exceptional.

The answer is not simply more model capability. It is redesigning operations so that agents have clear boundaries, durable state, and traceable actions. In that sense, the enterprise adoption challenge is as much organizational as it is technical.

Enterprises also buy for continuity. They want confidence that a platform will survive regulatory scrutiny, security review, and integration churn. So while consumer excitement drives headlines, enterprise adoption will decide where the durable revenue lands.

This has implications for rivals like AMD, custom silicon developers, and cloud-native infrastructure providers. A world with more CPU-centric agent coordination could open room for alternative processors and architectures, especially where buyers want more openness or price leverage. But if Nvidia and Microsoft continue to bundle performance with ecosystem maturity, smaller rivals may struggle to convert technical parity into platform momentum.

For cloud providers, the risk is that they become infrastructure resellers while Nvidia and Microsoft define the real control points. For chip vendors, the risk is that the market rewards specialized integration over general-purpose flexibility. The winner is not necessarily the company with the fastest part; it is the company with the most complete operating story.

A deeper point is that partnerships are also validation. If major customers deploy these systems in meaningful production environments, the market will treat that as proof that agentic AI has crossed from experimental novelty into durable infrastructure. Without that proof, the rhetoric around vertical integration and open platforms remains just that—rhetoric.

It also correctly frames Nvidia and Microsoft as representing two very different philosophies. Nvidia wants to make the stack more integrated and more optimizable, while Microsoft wants the stack to be more open and more portable. That tension is real, and it will shape enterprise buying decisions throughout the next product cycle.

Likewise, the claim that the market is already converging on a 60-70% CPU and 30-40% GPU utilization model is better understood as an illustrative argument than as a universal rule. Real workloads will vary significantly by agent design, tool density, and inference intensity. The useful insight is the direction of travel, not a single percentage split.

That layered view also explains why both companies can win simultaneously in different segments. The enterprise AI market is large enough for a hardware leader, a cloud platform leader, and many application-layer vendors to coexist. The real question is which layer captures the most durable margins as agents move from demos to production.

The broader market should expect three things to matter most: how well agents perform under real business constraints, how easily they can be governed, and how portable they remain across infrastructure choices. Those are the variables that determine whether agentic AI becomes an expensive experiment or a durable computing platform. That distinction will define the next year of enterprise AI spending.

Source: Bitget Nvidia Believes Controlling the Agentic AI CPU Coordination Layer Through Vertical Integration, While Microsoft Advocates for an Open Platform Approach | Bitget News

Overview

The move from chat-style language models to autonomous agents is changing the shape of AI infrastructure in a way that is easy to underestimate. In the first phase of generative AI, the GPU was the obvious hero because model inference dominated the workload. In the next phase, the agent must reason across multiple steps, maintain state, call tools, fetch context, and enforce policy, which shifts more of the workload toward the CPU coordination layer and the orchestration software that wraps it.

That shift explains why infrastructure vendors are now talking about agentic AI as a full-stack problem rather than a model problem. Microsoft has repeatedly described the emerging app stack as one that needs memory, entitlements, action spaces, observability, and runtimes for orchestrating multiple agents, not just a better model endpoint. Nvidia, meanwhile, is pushing an integrated platform story around its new Vera CPU and Rubin architecture, which it says are designed to accelerate agentic reasoning and large-scale inference.

This is also a market structure story. Enterprises do not merely want faster model output; they want dependable, governed systems that can operate across business processes. That is why platforms such as Azure AI Foundry emphasize model choice, secure grounding, and governance, while Nvidia is trying to make the hardware substrate itself the default place where agentic workloads are optimized. Those are very different routes to the same enterprise budget.

The Bitget framing is directionally useful, but it should be read as a strategic interpretation rather than a settled industry fact. The strongest evidence available today shows that both companies are already building around the agentic stack from their own natural strengths. Nvidia is extending its chip and system dominance into orchestration-adjacent infrastructure, and Microsoft is expanding its cloud, platform, and governance layers so customers can build agents across heterogeneous models and systems.

Why Agentic AI Changes the Stack

Agentic systems are different from classic prompts-and-completions workflows because they execute sequences, not single responses. They need to decide what to do next, remember prior steps, access tools, and often wait on external systems before continuing. That makes the infrastructure problem closer to distributed systems engineering than to simple model hosting.

This is why orchestration is emerging as the new complexity layer. Developers now need mechanisms for task decomposition, durable state, tool invocation, identity, and observability. Microsoft’s recent work on Foundry, Agent 365, and the Microsoft Agent Framework reflects exactly that shift, and so does Nvidia’s emphasis on AI factories, in-network compute, and system-level optimization.
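The loop below is a minimal Python sketch of that sequencing. It assumes a rule-based `decide_next` function in place of the model’s reasoning step and three hypothetical tools (`lookup_order`, `check_inventory`, `reserve_stock`) in place of calls to external systems. It is not modeled on any vendor’s framework; it only shows why an agent needs state that persists across steps, a way to dispatch tools, and a trace of what it did.

```python
from typing import Any, Callable, Dict, List, Optional, Tuple

State = Dict[str, Any]

# Hypothetical tools standing in for external systems (order DB, inventory API).
def lookup_order(state: State) -> Dict[str, Any]:
    return {"order_id": state["order_id"], "sku": "SKU-42", "qty": 3}

def check_inventory(state: State) -> Dict[str, Any]:
    # Reads the result of an earlier step: the agent "remembers" prior work.
    return {"in_stock": state["lookup_order"]["qty"] <= 5}

def reserve_stock(state: State) -> Dict[str, Any]:
    return {"reserved": state["lookup_order"]["qty"]}

TOOLS: Dict[str, Callable[[State], Dict[str, Any]]] = {
    "lookup_order": lookup_order,
    "check_inventory": check_inventory,
    "reserve_stock": reserve_stock,
}

def decide_next(state: State) -> Optional[str]:
    """Rule-based stand-in for the model choosing the next action."""
    if "lookup_order" not in state:
        return "lookup_order"
    if "check_inventory" not in state:
        return "check_inventory"
    if state["check_inventory"]["in_stock"] and "reserve_stock" not in state:
        return "reserve_stock"
    return None  # nothing left to do

def run_agent(task: State, max_steps: int = 10) -> Tuple[State, List[str]]:
    state: State = dict(task)  # working memory carried across steps
    trace: List[str] = []      # record of actions for observability
    for _ in range(max_steps):
        step = decide_next(state)
        if step is None:
            break
        state[step] = TOOLS[step](state)  # tool call; its result becomes state
        trace.append(step)
    return state, trace
```

Calling `run_agent({"order_id": "A-1"})` walks through all three steps, and each later step reads results written by an earlier one. The `max_steps` cap is a simple guard against an agent that never decides it is finished.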

The CPU Reclaims Importance

The Bitget article’s claim that agentic AI pushes more workload toward the CPU is plausible, and the industry evidence supports the broader direction. Microsoft’s own stack talks about agent runtimes, management layers, and governance planes, all of which are coordination-heavy rather than pure matrix-math workloads. Nvidia’s Rubin announcement explicitly positions the Vera CPU as a processor designed for agentic reasoning, underscoring that CPU design is now part of the AI conversation again.

That does not mean the GPU becomes less important. It means the AI workload becomes more heterogeneous, with the GPU still doing heavy inference while the CPU handles the system logic around it. In practical terms, the enterprises building agentic systems will care less about a single benchmark and more about end-to-end throughput, latency under tool use, and reliability under multi-step workflows.

Why Orchestration Becomes the Product

Once an AI system can act, not just answer, orchestration becomes the product surface customers actually evaluate. A strong orchestration layer decides whether an agent can touch production systems, how it is audited, how it recovers from failure, and which human approvals are needed along the way. Microsoft’s focus on governance, permissions, and secure connectors is a direct response to that enterprise reality.

This is also where reliability becomes a buying criterion. A demo agent is easy to build, but a production agent that can survive permissions changes, stale context, and process exceptions is much harder. The more the market learns this lesson, the more value migrates from model novelty to infrastructure trust.
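A minimal Python sketch of those decisions might wrap every tool call in a gate that checks permissions, asks for human approval on sensitive actions, retries transient failures, and writes an audit line either way. The `Orchestrator` class, the `approve` hook, and the action names are hypothetical; real platforms implement these controls through their own identity, policy, and logging services.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Set

class TransientError(Exception):
    """Raised by a tool when a downstream system is temporarily unavailable."""

@dataclass
class Orchestrator:
    granted: Set[str]               # actions this agent identity may perform
    needs_approval: Set[str]        # actions that require human sign-off
    approve: Callable[[str], bool]  # hypothetical human-approval hook
    max_retries: int = 2
    audit: List[str] = field(default_factory=list)

    def run(self, action: str, fn: Callable[[], str]) -> str:
        if action not in self.granted:
            self.audit.append(f"DENY {action}: not permitted")
            return "denied"
        if action in self.needs_approval and not self.approve(action):
            self.audit.append(f"HOLD {action}: approval refused")
            return "held"
        # One initial attempt plus up to max_retries retries.
        for attempt in range(1, self.max_retries + 2):
            try:
                result = fn()
            except TransientError:
                self.audit.append(f"RETRY {action}: attempt {attempt} failed")
                continue
            self.audit.append(f"OK {action}: attempt {attempt}")
            return result
        self.audit.append(f"FAIL {action}: retries exhausted")
        return "failed"
```

One design choice worth noting is that denials and holds are logged, not just successes; an auditor usually needs to see what the agent was prevented from doing as well as what it did.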

- Agentic AI increases the importance of state management.

- Tool use makes latency and fault tolerance much more visible.

- Enterprises need governance, not just intelligence.

- Orchestration vendors can capture value even without owning the model.

- CPU-heavy coordination can become a new optimization target.

Nvidia’s Full-Stack Bet

Nvidia’s strategy is consistent with how it has won every prior platform wave: own the enabling infrastructure, then expand upward. With Rubin, the company is explicitly tying the Vera CPU to agentic reasoning and positioning the broader platform as the foundation for future AI factories. That is not just a chip launch; it is a claim that the AI runtime should be optimized end-to-end under Nvidia’s umbrella.

This kind of vertical integration is attractive because it promises performance, simplicity, and tighter optimization. If a customer buys into Nvidia’s stack, the company can align CPU, GPU, networking, memory, and software assumptions in a way that competing vendors often cannot match. That is especially valuable when agentic systems become more complex and when small inefficiencies at the orchestration layer translate into real cost at scale.

The Vera Message

The most important element of Nvidia’s message is that the company is redefining what counts as AI infrastructure. The Vera CPU is framed as the right processor for agentic reasoning, not merely a general-purpose server chip. In strategic terms, that lets Nvidia enter a market that is larger than accelerators alone and also makes the company harder to displace from enterprise AI budgets.

It also raises the lock-in question. If the orchestration path, the networking path, and the hardware path are all optimized for Nvidia, customers may find themselves with excellent performance but reduced portability. That trade-off is not automatically bad, but it does mean the buyer is renting a very opinionated architecture.

Ecosystem Expansion

Nvidia’s advantage is that its ecosystem is already broad. The Rubin announcement names a long list of cloud providers, OEMs, and AI companies expected to adopt the platform, including Microsoft itself. That breadth matters because platform transitions are rarely won by specs alone; they are won when the ecosystem makes adoption feel inevitable.

At the same time, Nvidia’s success creates a strategic tension. The more it expands vertically, the more it competes with the very clouds and platform players that also depend on its chips. That tension is manageable when demand is exploding, but it could become more visible if enterprise procurement becomes more selective or if alternative CPU and AI infrastructure vendors gain enough momentum.

- Nvidia is turning agentic AI into a systems architecture story.

- Vera is meant to be more than a server CPU; it is a coordination CPU.

- Deep integration can improve performance and simplify deployment.

- The downside is potential ecosystem lock-in.

- Broad partner adoption is a major part of the strategy.

Microsoft’s Open Platform Approach

Microsoft is making almost the opposite argument: agentic AI should be a platform capability, not a single-vendor stack. Azure AI Foundry presents itself as a unified, interoperable AI platform with access to more than 11,000 models and a governance layer that spans security, permissions, and observability. In practical terms, Microsoft wants to be the place where organizations build and control agents regardless of which model family or infrastructure layer they choose.

This approach is attractive to enterprises because it reduces dependency risk. If one model underperforms, or if a new open model becomes better suited to a workflow, developers can change course without rebuilding the whole stack. Microsoft has also emphasized open protocols and agent interoperability, which reinforces its claim that the future of agents should be composable rather than closed.
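The idea that developers can change models "without rebuilding the whole stack" usually rests on a thin abstraction between workflows and model providers. The Python sketch below assumes a hypothetical `ChatModel` protocol, a stub `StubModel` adapter, and a `ModelRouter` that maps workflows to models; it is not the Azure AI Foundry SDK, and a real adapter would call a hosted endpoint instead of echoing the prompt.

```python
from typing import Dict, Protocol

class ChatModel(Protocol):
    """Minimal interface every model adapter must satisfy (hypothetical)."""
    name: str

    def complete(self, prompt: str) -> str: ...

class StubModel:
    """Stub adapter; a real one would call a hosted model endpoint."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"

class ModelRouter:
    """Maps each workflow to a model so it can be swapped by configuration."""

    def __init__(self) -> None:
        self._by_workflow: Dict[str, ChatModel] = {}

    def assign(self, workflow: str, model: ChatModel) -> None:
        self._by_workflow[workflow] = model

    def run(self, workflow: str, prompt: str) -> str:
        return self._by_workflow[workflow].complete(prompt)
```

Replacing `StubModel("model-a")` with `StubModel("open-model-b")` for a workflow changes one `assign` call while the calling code stays the same, which is the portability argument in miniature. The trade-off is that a lowest-common-denominator interface can hide model-specific features.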

Governance as the Differentiator

Microsoft’s strongest argument is not simply that it supports many models. It is that it can combine model diversity with enterprise governance, identity, and security in a way most pure-play AI vendors cannot. Foundry Agent Service, Agent 365, and the broader Azure stack all push in the same direction: agents should be observable, governable, and aligned with corporate controls from the start.

This matters because enterprises do not buy AI in a vacuum. They buy it inside policy environments, audit regimes, and compliance obligations. Microsoft is betting that a platform that makes those constraints easier to manage will win even if a more vertically integrated stack delivers better raw performance.

Open Does Not Mean Unstructured

An open platform is not the same thing as an ungoverned platform. Microsoft’s recent materials repeatedly stress secure connections to data, access control, and end-to-end agent management. That is important because “open” can sound vague to procurement teams unless it is paired with reliable control planes and visible boundaries.

The competitive advantage here is flexibility at scale. If Microsoft can make its open model catalog, orchestration runtime, and governance tooling feel integrated enough, it may capture the enterprise layer above the infrastructure war. In that scenario, the company would not need to out-chip Nvidia; it would only need to become the default operating environment for agents.

- Microsoft’s pitch is choice plus control.

- Open interoperability lowers switching costs.

- Governance is the key enterprise differentiator.

- The company is trying to own the agent operating layer.

- Flexibility may beat pure performance in many deployments.

The Enterprise Reality Check

The adoption gap is the part of the story that matters most. Even if organizations are using AI broadly, scaling agentic systems remains much harder than many vendors imply. The reason is simple: most enterprises are built around workflows, permissions, and exceptions that were not designed for autonomous action.

That means the market is still in a proof phase. Buyers are asking not only whether an agent can complete a task, but whether it can do so consistently, safely, and under governance. The platform that wins will likely be the one that makes those requirements feel routine rather than exceptional.

Why Scaling Is Harder Than Pilots

Many pilot projects succeed because they are narrow and supervised. Scaling fails because the real environment includes stale data, nonstandard approvals, fragmented systems, and human edge cases that the pilot never encountered. That is why layered-on agent deployments often disappoint: they inherit the messiness of old processes instead of rethinking them.

The answer is not simply more model capability. It is redesigning operations so that agents have clear boundaries, durable state, and traceable actions. In that sense, the enterprise adoption challenge is as much organizational as it is technical.
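One concrete reading of "durable state" and "traceable actions" is a run that checkpoints each completed step, so a failure midway resumes where it stopped instead of repeating earlier side effects. The Python sketch below assumes a local JSON file as the checkpoint store and a hypothetical `run_with_checkpoint` helper; production systems would more likely use a database or a durable workflow engine.

```python
import json
from pathlib import Path
from typing import Callable, Dict, List

def run_with_checkpoint(
    steps: Dict[str, Callable[[], str]], order: List[str], path: Path
) -> Dict[str, str]:
    """Run named steps in order, persisting each result so a failed run can resume."""
    # Load results from any earlier, interrupted run.
    done: Dict[str, str] = json.loads(path.read_text()) if path.exists() else {}
    for name in order:
        if name in done:
            continue  # completed in an earlier run: do not repeat it
        done[name] = steps[name]()  # may raise; earlier results are already saved
        path.write_text(json.dumps(done))  # checkpoint after every step
    return done
```

The checkpoint file doubles as a record of which actions completed. One caveat: a crash between a step finishing and the checkpoint write would re-run that step on resume, so steps with external side effects should be idempotent.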

Consumer vs. Enterprise

Consumer markets may embrace agentic features faster because the downside risk is lower. A consumer can tolerate a wrong suggestion; an enterprise cannot tolerate an agent that triggers the wrong workflow or exposes sensitive data. That asymmetry is why governance-heavy platforms are likely to outpace consumer-style demos in the near term.

Enterprises also buy for continuity. They want confidence that a platform will survive regulatory scrutiny, security review, and integration churn. So while consumer excitement drives headlines, enterprise adoption will decide where the durable revenue lands.

- Pilots are easier than production rollouts.

- Legacy workflows slow down agent adoption.

- Governance and auditability are decisive.

- Consumer use cases face less operational risk.

- Enterprise buyers prioritize continuity and compliance.

Competitive Implications for the AI Supply Chain

The Nvidia-versus-Microsoft split is really a debate about where margin should concentrate in the AI supply chain. If the hardware stack wins, Nvidia captures more value through integrated systems. If the platform stack wins, Microsoft captures value through orchestration, identity, governance, and developer tooling. Either way, the traditional boundary between infrastructure and application is getting thinner.

This has implications for rivals like AMD, custom silicon developers, and cloud-native infrastructure providers. A world with more CPU-centric agent coordination could open room for alternative processors and architectures, especially where buyers want more openness or price leverage. But if Nvidia and Microsoft continue to bundle performance with ecosystem maturity, smaller rivals may struggle to convert technical parity into platform momentum.

What Rivals Need to Prove

Rivals cannot win by simply saying they are cheaper. They need to show that they can support the same kind of agentic reliability, observability, and integration depth that enterprises will eventually demand. That is a systems problem, not just a chip problem.

For cloud providers, the risk is that they become infrastructure resellers while Nvidia and Microsoft define the real control points. For chip vendors, the risk is that the market rewards specialized integration over general-purpose flexibility. The winner is not necessarily the company with the fastest part; it is the company with the most complete operating story.

The Role of Partnerships

Partner ecosystems will matter enormously because no single company can own every layer of the agentic stack. Nvidia already leans heavily on OEMs and cloud providers, while Microsoft relies on model partners, tool integrations, and enterprise software relationships. The strategic question is whether those partnerships expand choice or simply mask deeper control.

A deeper point is that partnerships are also validation. If major customers deploy these systems in meaningful production environments, the market will treat that as proof that agentic AI has crossed from experimental novelty into durable infrastructure. Without that proof, the rhetoric around vertical integration and open platforms remains just that: rhetoric.

- The supply chain is shifting from models to operating layers.

- AMD and others can benefit if buyers want diversification.

- Partnerships function as both distribution and validation.

- Ecosystem depth may matter more than raw benchmark wins.

- The real control point may be orchestration, not inference.

What the Bitget Thesis Gets Right

The Bitget piece correctly identifies that agentic AI changes the economics of compute. It also gets right that the battle is not simply GPU versus CPU, but rather who controls the coordination layer that decides how compute is used. That is the layer where enterprise trust, workflow design, and monetization intersect.

It also correctly frames Nvidia and Microsoft as representing two very different philosophies. Nvidia wants to make the stack more integrated and more optimizable, while Microsoft wants the stack to be more open and more portable. That tension is real, and it will shape enterprise buying decisions throughout the next product cycle.

Where the Thesis Needs Caution

Some of the more specific performance and adoption claims in the Bitget framing should be treated cautiously. Vendor claims about speed and efficiency gains are inherently promotional unless and until third-party benchmarks and production case studies confirm them. The broader trend is believable; the exact numbers are another matter.

Likewise, the claim that the market is already converging on a 60-70% CPU and 30-40% GPU utilization model is better understood as an illustrative argument than as a universal rule. Real workloads will vary significantly by agent design, tool density, and inference intensity. The useful insight is the direction of travel, not a single percentage split.
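A toy calculation shows why a single CPU/GPU percentage split is fragile. The Python sketch below assumes invented per-step timings (`gpu_ms` for model inference, `cpu_ms` for coordination work such as parsing, policy checks, retrieval, and tool-call handling) and computes the CPU share of busy time. It illustrates the arithmetic only; it is not a measurement of hardware utilization on any real system.

```python
from typing import List, Tuple

# Each step is (gpu_ms, cpu_ms); all numbers below are invented for illustration.
Step = Tuple[float, float]

def cpu_share(steps: List[Step]) -> float:
    """Fraction of total busy time spent on CPU-side coordination."""
    gpu = sum(g for g, _ in steps)
    cpu = sum(c for _, c in steps)
    total = gpu + cpu
    if total == 0:
        raise ValueError("profile has no recorded work")
    return cpu / total

# Chat-style request: one long generation, little surrounding logic.
chat_like: List[Step] = [(900.0, 100.0)]

# Tool-dense agent: six short reasoning calls, each wrapped in
# parsing, policy checks, retrieval, and tool-call handling.
tool_heavy: List[Step] = [(150.0, 350.0)] * 6

for label, profile in [("chat_like", chat_like), ("tool_heavy", tool_heavy)]:
    print(f"{label}: {cpu_share(profile):.0%} CPU")
```

With these made-up inputs, the chat-style profile comes out at 10% CPU and the tool-dense profile at 70%: the same formula gives very different answers depending on how the agent is built, which is why the direction of travel is more useful than any one split.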

A More Balanced Reading

A more balanced interpretation is that the market is fragmenting into layers. The lowest layer rewards infrastructure scale, the middle layer rewards orchestration and governance, and the top layer rewards workflow-specific applications. Nvidia is trying to dominate the bottom two layers through integration, while Microsoft is trying to dominate the middle and top layers through platform reach.

That layered view also explains why both companies can win simultaneously in different segments. The enterprise AI market is large enough for a hardware leader, a cloud platform leader, and many application-layer vendors to coexist. The real question is which layer captures the most durable margins as agents move from demos to production.

- The thesis is strongest on architectural change.

- It is weaker when it turns vendor claims into hard truths.

- The real battle is layered, not binary.

- Performance will matter, but trust will matter just as much.

- Multiple winners can exist across the stack.

Strengths and Opportunities

The current moment favors vendors that can combine technical depth with enterprise confidence. Nvidia has the advantage of hardware leadership and a growing systems narrative, while Microsoft has the advantage of distribution, governance, and platform familiarity. Both are positioned to benefit as companies move from experimentation to production-grade agent deployment.

- Nvidia can monetize not only GPUs but also the CPU and system layers of agentic AI.

- Microsoft can become the default enterprise control plane for agents.

- Open interoperability lowers switching friction for customers.

- Full-stack optimization can reduce latency and operating cost.

- Governance tooling is a major enterprise differentiator.

- Partner ecosystems accelerate validation and adoption.

- Agentic AI creates a new pool of budget outside classic model hosting.

Risks and Concerns

The biggest risk is that the market could overestimate how quickly enterprises will redesign around autonomous agents. Adoption may stay confined to narrow use cases longer than vendors hope, which would slow infrastructure spending and compress the upside for both hardware and platform players. There is also a real risk that too much integration leads to customer lock-in and strategic discomfort.

- Slow enterprise redesign could delay revenue realization.

- Vendor lock-in may trigger customer caution.

- Security and compliance failures would damage trust quickly.

- Benchmark claims may not translate into production value.

- Competitive responses from AMD and cloud rivals could pressure margins.

- Fragmentation in protocols and models could complicate adoption.

- Overhyped expectations could create a backlash if agents underdeliver.

Looking Ahead

The next phase of competition will likely be decided by production proof, not marketing language. If Nvidia’s Vera and Rubin stack deliver real gains in agentic workloads, the company will strengthen its claim that AI infrastructure should be vertically integrated around optimized silicon. If Microsoft continues to expand Foundry, Agent 365, and its open orchestration story, it can reinforce the idea that the winning platform is the one that makes agents manageable at enterprise scale.

The broader market should expect three things to matter most: how well agents perform under real business constraints, how easily they can be governed, and how portable they remain across infrastructure choices. Those are the variables that determine whether agentic AI becomes an expensive experiment or a durable computing platform. That distinction will define the next year of enterprise AI spending.

- Watch for third-party benchmarks of agentic workloads.

- Track enterprise deployments that move beyond pilot status.

- Monitor whether open protocols reduce switching costs.

- Follow changes in CPU demand across AI infrastructure.

- Assess whether governance features become procurement gatekeepers.
