When two headline-grabbing cloud failures struck in rapid succession this October, the outages did more than break apps and frustrate users — they reopened an urgent national conversation about how much of the country’s digital life depends on a handful of hyperscale providers, and whether regulators should treat those providers like critical infrastructure. The first major incident, a cascading DNS/control-plane failure tied to Amazon DynamoDB in AWS’s US‑EAST‑1 (Northern Virginia) region, produced widespread errors and multi‑hour downtimes for hundreds of services; days later, an inadvertent tenant configuration change inside Microsoft’s Azure Front Door control plane caused global latency, timeouts and access failures for Microsoft services and many customer applications. Both vendors have published preliminary incident statements and started mitigation work, but the technical symptoms — DNS resolution failures, control‑plane validation gaps and throttled recovery paths — expose the brittle seams of an internet that increasingly runs through a few private control planes.

Source: FedScoop Calls for government action grow louder amid recent cloud outages

Background

The events in question unfolded in late October. The first public signals of Amazon’s incident came on Oct. 19–20, when DynamoDB API endpoints in US‑EAST‑1 began returning elevated API error rates and DNS resolution anomalies that cascaded into broader service disruptions across compute, storage and managed services. The disruption window has been variously described as stretching many hours; for some downstream workloads, recovery took much longer because of backlogs and throttling. Financial-press and technical coverage characterized the outage as a major incident with global ripple effects.

Less than two weeks later, on Oct. 29, Microsoft confirmed that an inadvertent tenant configuration change in Azure Front Door (AFD), its global content-delivery and application‑edge fabric, introduced an invalid configuration state that prevented a large number of edge nodes from loading properly and created cascading latency and failures for services that depend on that fabric. Microsoft’s containment actions included blocking further AFD changes, rolling back to a known-good configuration and recovering healthy nodes. Those measures restored many services within hours but left open questions about how a faulty deployment bypassed validation protections.

Both incidents reignited debate about systemic concentration in public cloud markets, the engineering constraints that produce correlated failures, and whether federal regulators or procurement rules should step in to reduce national exposure to similar future shocks. Advocacy groups and watchdog coalitions have publicly urged stronger oversight and mandatory transparency for incidents that impact critical public services.

Anatomy of the failures: what actually went wrong

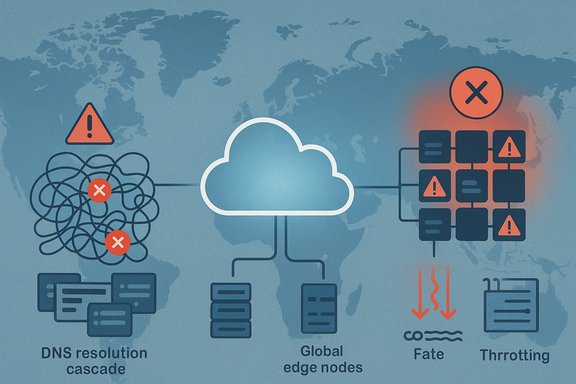

AWS — a DNS/control‑plane cascade centered on DynamoDB

Public reporting and AWS status messages point to a failure mode that begins with DNS endpoint management for DynamoDB in US‑EAST‑1 and expands from there. According to AWS’s own interim disclosures and corroborating reporting, a latent defect in the automated DNS management layer (the system that ensures endpoints resolve correctly across availability zones and load balancers) introduced incorrect or empty DNS records for DynamoDB endpoints. As those records failed to resolve, customer clients and internal AWS control flows saw elevated API error rates; retries, backlogs and control‑plane dependencies then amplified the incident into a broad availability event for dependent services. Recovery required manual intervention to repair records and careful throttling while queues and background processing backlogs cleared.

Why DNS? DNS is the unglamorous but critical glue that maps human-readable service endpoints to network addresses. When a widely used managed service such as DynamoDB becomes unreachable at the resolution layer, the impact radiates rapidly because tens of thousands of SDK clients, internal health checks and auxiliary control‑plane calls depend on timely name resolution. A corrupted or absent DNS entry is a failure that looks, to callers, like the service itself being down. When control‑plane operations (instance launches, credential refreshing, queue replay) also use the same primitives, recovery actions become entangled with the failure state.

Microsoft Azure: a control‑plane deployment that bypassed validation

Microsoft’s outage differs in mechanism but converges on the same theme: a control‑plane operation introduced an invalid configuration into Azure Front Door. AFD is a global, Microsoft‑managed edge fabric that both routes customer traffic and fronts Microsoft’s own services; as such, a faulty deployment in AFD has far‑reaching effects. Microsoft’s initial post‑incident description points to a software defect in validation and protection mechanisms that allowed the erroneous configuration to propagate. The company’s immediate mitigations (freezing configuration changes and rolling back) were textbook control‑plane containment, but they also underline how brittle global edge fabrics can be when a single control change touches many nodes.

Common failure themes

- Control‑plane coupling: modern cloud platforms expose global management surfaces (control planes) that are logically and operationally centralized. When those planes fail, customer fallback paths can be constrained because remediation typically requires access to the very control surfaces that are impaired.

- Automation and race conditions: automation is essential at hyperscale, but automated updates and cleanup processes can interact in unexpected ways (race conditions, concurrent plan cleanup) and produce invalid global state that human operators then struggle to repair quickly.

- Retry storms and throttling: client SDKs and infrastructure bake in retry logic. During partial failures, badly tuned retry behavior can generate a flood of traffic and amplify the failure; providers often apply throttles to limit damage, but throttles can also slow recovery actions.

- Operational backlogs: fixes themselves are rarely instantaneous — queued messages, backlog drains and replay operations can extend recovery long after the primary fix is applied.
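To make the retry-storm theme concrete, here is a minimal sketch of capped exponential backoff with "full jitter", one common way to stop many clients from retrying in lockstep. The function names, default values and the choice of `ConnectionError` as the retryable error are illustrative assumptions, not any vendor SDK's actual behavior.

```python
import random
import time
from typing import Callable, List, Optional, TypeVar

T = TypeVar("T")


def backoff_delays(base: float, cap: float, attempts: int,
                   rng: Optional[random.Random] = None) -> List[float]:
    """Return one delay per retry, each uniform in [0, min(cap, base * 2**n)]."""
    rng = rng or random.Random()
    return [rng.uniform(0.0, min(cap, base * (2 ** n))) for n in range(attempts)]


def call_with_retries(operation: Callable[[], T], *, max_retries: int = 4,
                      base: float = 0.2, cap: float = 10.0,
                      sleep: Callable[[float], None] = time.sleep) -> T:
    """Call `operation`, retrying on ConnectionError with jittered backoff.

    Hypothetical helper: the retry budget (`max_retries`) bounds how much
    extra load a single caller can add while a dependency is degraded.
    """
    delays = backoff_delays(base, cap, max_retries)
    for attempt in range(max_retries + 1):
        try:
            return operation()
        except ConnectionError:
            if attempt == max_retries:
                raise  # budget exhausted: surface the failure to the caller
            sleep(delays[attempt])
    raise AssertionError("unreachable")
```

The randomization spreads retries over time, and the hard cap on attempts keeps a partial failure from turning every client into a source of amplified traffic.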

Who was affected — the real-world impact

The outages hit a wide range of consumer and enterprise services. Reports and vendor statements indicate outages or degraded function for gaming services, fintech apps, consumer chat and collaboration platforms, some retail point-of-sale or ordering systems, and even portions of the hyperscalers’ own consumer-facing services. High‑profile names surfaced in coverage (Snapchat, Signal, various fintech and crypto exchanges, restaurant ordering systems, and some government portals), illustrating how heterogeneous the dependency web is. The exact set of affected tenants varies by reporter and timeline because the incidents produced staggered, cascading failures and partial recoveries for different workloads.

Quantifying economic loss is fraught: public outage trackers record millions of problem reports across affected services during peak windows, but raw “reported incidents” are not the same as unique customer outages or precise revenue loss. Estimates in early reporting are directional rather than contractual; real financial exposure will be tabulated in later forensic analyses and vendor SLAs.

A practical takeaway for business leaders is simple: if your business relies on single‑region deployments or a single managed control‑plane primitive for mission‑critical flows, those architectural choices can translate into immediate revenue and reputation risk.

Why the outages matter to governments and regulators

The incidents have sharpened policy debates on a few fronts:

- Systemic concentration risk: AWS, Microsoft, and Google Cloud collectively supply the lion’s share of public cloud infrastructure spend. That market concentration means failures at any one of the big providers can create correlated impacts across sectors, from finance to health to public services.

- Critical third‑party designation: advocates are pushing for designating core cloud services that support essential public functions as “critical third parties,” which would bring mandatory incident reporting, independent audits and potentially higher resilience requirements.

- Procurement and sovereignty: governments are reassessing procurement language and exploring incentives for regional/sov‑cloud alternatives for regulated workloads.

- Transparency and incident reporting: stakeholders want structured, machine‑readable post‑incident disclosures within set timelines so buyers, auditors and regulators can measure exposure and remediation progress.
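As an illustration of what a "structured, machine‑readable" disclosure could look like, the sketch below models a post‑incident report as a small data structure serialized to JSON. The field names and the example values (including the provider name `ExampleCloud`) are hypothetical; no regulator or vendor schema is implied.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class TimelineEntry:
    timestamp_utc: str  # ISO 8601 string, e.g. "2000-01-01T00:00:00Z"
    event: str


@dataclass
class IncidentReport:
    """Hypothetical minimal schema for a post-incident disclosure."""
    provider: str
    incident_id: str
    affected_services: List[str]
    affected_regions: List[str]
    root_cause_summary: str
    timeline: List[TimelineEntry] = field(default_factory=list)
    remediation_commitments: List[str] = field(default_factory=list)

    def to_json(self) -> str:
        # asdict() recurses into nested dataclasses such as TimelineEntry.
        return json.dumps(asdict(self), indent=2, sort_keys=True)


# Placeholder values only; they do not describe any real incident.
example_report = IncidentReport(
    provider="ExampleCloud",
    incident_id="INC-0001",
    affected_services=["managed-database"],
    affected_regions=["region-a"],
    root_cause_summary="Invalid DNS records published by automation.",
    timeline=[TimelineEntry("2000-01-01T00:00:00Z", "Elevated API errors detected")],
    remediation_commitments=["Add validation before DNS record publication"],
)
```

A fixed schema of this kind would let buyers and auditors compare scope, timelines and remediation commitments across incidents without parsing free‑form blog posts.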

The political side: AI job-reporting bill and the wider regulatory climate

Separately, lawmakers introduced the AI‑Related Job Impacts Clarity Act, a bipartisan proposal from Sens. Mark Warner and Josh Hawley that would require federal agencies and major employers to report quarterly on AI‑related layoffs, retrainings and hirings to the Department of Labor. The bill’s sponsors argue better data is needed to make sound policy choices as automation and AI reshape labor markets; critics note that projections cited in bill materials (such as claims that AI could drive unemployment by 10–20% within five years) are speculative and should be treated as such. The AI bill illustrates a broader appetite in Congress for more granular operational transparency from large tech firms, whether the issue is workforce impacts or cloud resilience.

Important caveat: projections about future unemployment tied to AI are contested. They often derive from macroeconomic modelling with broad assumptions about productivity, adoption rate and policy responses; therefore, while the bill’s reporting requirement is concrete, the numerical forecasts cited in political messaging should be presented as projections, not established facts.

Tradeoffs and realistic policy levers

Calls to “regulate the cloud like telecoms” are understandable in the abstract but require nuance in practice.

- Heavy structural remedies (forced divestitures or utility‑style rate regulation) would be politically and technically fraught and could slow the pace of innovation that hyperscalers enable for AI, edge computing and global delivery.

- Targeted behavioral and procurement measures are more pragmatic: mandated incident transparency, minimum resilience requirements for public‑interest workloads (e.g., multi‑region minimums or audited failover tests), portability and egress pricing transparency, and contractual rights for independent post‑incident audits can shift incentives without destroying economies of scale.

Engineering and procurement advice for IT leaders (practical, tactical)

For Windows administrators, SREs, and enterprise architects, the outages underscore actionable items that materially reduce exposure.

- Map dependencies now.
  - Inventory every service path that touches vendor-managed control planes (identity providers, CDNs, managed databases, DNS).

- Prioritize the smallest set of choke points.
  - For the most critical business functions, invest in true multi‑region or vendor‑diversified architectures where the cost of downtime exceeds the migration expense.

- Harden DNS and identity fallbacks.
  - Use multiple authoritative DNS providers, shorten TTLs for critical records, and implement smart failover health checks.

- Maintain out‑of‑band admin paths.
  - Ensure CLI, PowerShell and emergency admin accounts are tested and can operate when web consoles are impaired.

- Test recovery paths regularly.
  - Run tabletop exercises simulating control‑plane loss, SSO failures and DNS anomalies; validate human runbooks under stress.

- Tune SDK retries and add circuit breakers.
  - Prevent retry storms that amplify failure domains and design for graceful degradation in client UX.

- Contract for transparency.
  - Negotiate SLAs that include prompt, detailed post‑incident reports and equivalent tenant‑level telemetry for forensic needs.
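The circuit-breaker advice in the list above can be sketched as follows. This is a simplified, single-threaded illustration; the class name, thresholds and the injectable `clock` parameter are assumptions made for the example, not part of any particular SDK.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised when the breaker rejects a call without contacting the dependency."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None  # None means the circuit is closed

    def call(self, operation: Callable[[], T]) -> T:
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after:
                # Open: fail fast so the caller can degrade gracefully.
                raise CircuitOpenError("dependency marked unhealthy")
            # Cooldown elapsed: fall through and allow one trial call.
        try:
            result = operation()
        except Exception:
            self._failures += 1
            if self._opened_at is not None or self._failures >= self.failure_threshold:
                self._opened_at = self._clock()  # open, or re-open after a failed trial
            raise
        # Success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

A caller would typically catch `CircuitOpenError` and serve a cached or reduced response, which is one way to get the graceful degradation the list item describes.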

Where vendors can and should improve

Hyperscalers themselves have clear technical workstreams to pursue:

- Strengthen validation and canarying in control‑plane deployments, with robust software‑in‑the‑loop tests that catch invalid global configurations before they propagate.

- Implement isolation between identity/token issuance and global routing fabrics to reduce cascading failures when one plane experiences trouble.

- Publish structured, machine‑readable post‑incident reports with exact timelines, scope, mitigation steps and measurable remediation commitments.

- Improve customer tooling that makes multi‑region failover and portability simpler and less costly for common workloads.
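The validation-and-canarying item above can be illustrated with a small staged-rollout sketch: validate the configuration first, apply it to a canary stage, check health, and roll everything back if any stage turns unhealthy. All function and parameter names are hypothetical, and real control planes are far more involved than this.

```python
from typing import Callable, Dict, List, Sequence

Config = Dict[str, object]


def staged_rollout(config: Config,
                   stages: Sequence[Sequence[str]],
                   validate: Callable[[Config], List[str]],
                   apply: Callable[[str, Config], None],
                   healthy: Callable[[str], bool],
                   rollback: Callable[[str], None]) -> List[str]:
    """Apply `config` one stage at a time and return the nodes updated.

    Raises ValueError if validation fails before any node is touched, and
    RuntimeError (after rolling back every updated node) if a stage is unhealthy.
    """
    errors = validate(config)
    if errors:
        raise ValueError(f"config rejected before rollout: {errors}")
    updated: List[str] = []
    for stage in stages:
        for node in stage:
            apply(node, config)
            updated.append(node)
        unhealthy = [node for node in stage if not healthy(node)]
        if unhealthy:
            # Undo in reverse order so the most recent changes revert first.
            for node in reversed(updated):
                rollback(node)
            raise RuntimeError(f"rollout halted; unhealthy nodes: {unhealthy}")
    return updated
```

Putting a single canary node in the first stage limits the blast radius: an invalid configuration that slips past validation is caught after touching one node rather than the whole fleet.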

Risks of over‑reaction and unintended consequences

While calls for regulation will grow louder, policymakers must be careful not to lock in harmful outcomes:

- Overly prescriptive technical mandates can ossify interfaces and slow innovation.

- Protectionist "sovereign cloud" procurement without interoperability incentives can fragment markets and raise costs for cross‑border services.

- Blanket multi‑cloud requirements for all workloads are expensive, operationally complex and can increase risk if not implemented by skilled teams.

Final assessment — a practical way forward

The October outages are a consequential wake‑up call: they do not mean the cloud model is inherently broken, but they do mean that resilience must be a deliberate design choice, not a byproduct of convenience. Hyperscalers deliver economies of scale, global reach and the infrastructure that powers modern AI and SaaS businesses, and for most enterprises cloud remains the only economically viable path to those capabilities. But the convenience of scale amplifies systemic fragility when control planes are centralized and default deployment practices favor single regions.

The balanced path forward combines:

- Short‑term technical action by enterprises (dependency mapping, failover rehearsals, out‑of‑band admin capabilities).

- Medium‑term operational fixes by providers (better validation, isolation of critical control primitives, clearer post‑incident reporting).

- Targeted policy steps to protect the public interest (structured incident disclosure, resilience expectations for public services and procurement incentives for portability).

The outages were a vivid reminder that engineering and policy are intertwined: the choices companies and governments make now about disclosure, procurement and architectural defaults will determine whether the next major outage is an isolated lesson or a repeated national vulnerability. The next phase should be less about headlines and more about measurable, enforceable steps that reduce the blast radius when inevitable errors occur — and that ensure critical services remain available when people depend on them.
