OpenAI’s engineers have quietly built an internal code-hosting platform and — according to multiple reports — the company is now weighing whether to productize that tool as a commercial alternative to the Microsoft‑owned GitHub, a move that would set up one of the most surprising competitive cross-currents in the cloud‑AI era and raise new questions about reliability, strategy, and trust in the developer ecosystem.

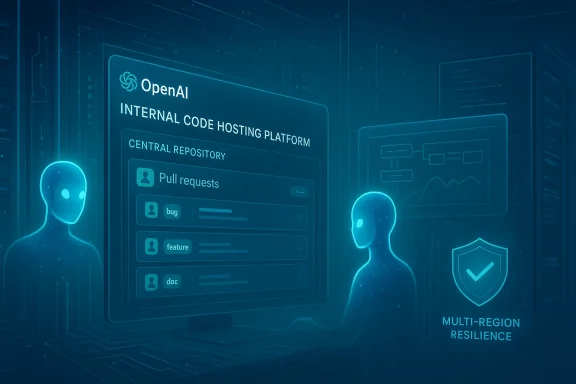

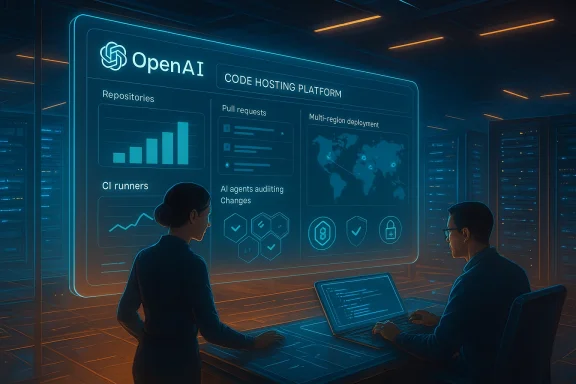

Background

OpenAI and Microsoft have forged a deep, multifaceted partnership over the past several years: Microsoft has been a principal investor, the single largest cloud partner for OpenAI, and the primary commercial distributor for many OpenAI services. At the same time, Microsoft owns GitHub, the dominant code‑hosting service developers use for source control, pull requests, CI/CD, package registries, and collaborative workflows.

In late February and early March 2026, multiple outlets reported that OpenAI staff built an internal code repository after repeated GitHub outages impeded day‑to‑day engineering work. The internal tool reportedly solved specific reliability and agent‑integration needs for OpenAI’s own teams. Now, still described as nascent and possibly months away from any external availability, the project is being discussed internally as a potential commercial product OpenAI could sell to developer and enterprise customers.

That combination — a nascent product built to address reliability, plus OpenAI’s enormous developer footprint and deep AI tooling — is why the rumor matters. If real and commercialized, an OpenAI code host could touch virtually every step of modern software production: source control, AI‑assisted commits, automated review agents, CI/CD automation, artifact hosting, and security scanning.

Why engineers build internal tooling — and why that matters now

The practical trigger: repeated GitHub disruptions

Large engineering organizations often build internal systems when public tools don’t meet availability, scale, or latency requirements. Google’s internal monorepo system, Piper, and Meta’s Sapling are canonical examples: both were built to manage extreme scale and specialized developer workflows, and neither was originally intended as a commercial product.

What changed this time is that the broader developer community experienced an unusually dense string of service degradations and outages on GitHub in early 2026. Public status pages and independent incident trackers documented multiple partial and intermittent outages that affected web access, API requests, Git operations, Actions runs, and AI features (including Copilot) across February. For teams that rely on continuous integration, fast merge cycles, and AI‑assisted coding agents, repeated multi‑hour degradations are not a minor inconvenience; they are a productivity and reliability risk.

OpenAI’s engineering teams reportedly responded by creating an internal code host optimized for availability and tighter integration with its own AI agents. Whether that internal tool becomes a public product is the key strategic question.
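Teams that want their own record of incidents like these, rather than relying on anecdote, can poll status pages programmatically. GitHub's status page appears to be hosted on Atlassian Statuspage, whose public v2 API publishes a `summary.json` document listing each component and its current state; treat the exact endpoint (assumed here to be `https://www.githubstatus.com/api/v2/summary.json`) and the component names as assumptions to verify. The minimal sketch below only parses that JSON shape from a string, using hypothetical sample data; fetching, scheduling, and alerting are left out.

```python
"""Sketch: list non-operational components from a Statuspage-style summary."""
import json

# Component states in Atlassian Statuspage's public v2 API include "operational",
# "degraded_performance", "partial_outage", "major_outage", and "under_maintenance".
HEALTHY_STATES = {"operational"}


def degraded_components(summary_json: str) -> list[tuple[str, str]]:
    """Return (name, status) for every component that is not fully operational."""
    summary = json.loads(summary_json)
    results = []
    for component in summary.get("components", []):
        # Treat a missing status as "unknown" rather than silently healthy.
        status = component.get("status", "unknown")
        if status not in HEALTHY_STATES:
            results.append((component.get("name", "?"), status))
    return results


if __name__ == "__main__":
    # Hypothetical sample shaped like a summary.json response; not real incident data.
    sample = """
    {"status": {"indicator": "minor", "description": "Partial System Outage"},
     "components": [
       {"name": "Git Operations", "status": "operational"},
       {"name": "Actions", "status": "partial_outage"},
       {"name": "Copilot", "status": "degraded_performance"}
     ]}
    """
    for name, status in degraded_components(sample):
        print(f"{name}: {status}")
```

Logging this output on a schedule gives a team its own outage history to set against a vendor's published uptime claims.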

From internal tool to commercial product: the logic

There are three main commercial incentives for turning an internal developer tool into a product:

- Reliability as a differentiator: a code host that demonstrably outperforms incumbents on uptime and latency, especially for large monorepos and agent workflows, can attract enterprise teams.

- Bundling with AI services: OpenAI can combine a code repository with AI coding agents, automated code generation, and code understanding features (e.g., agentic Codex-style assistants), creating a vertically integrated developer platform.

- Revenue diversification: OpenAI is expanding commercially beyond API metered usage and ChatGPT subscriptions; developer tooling is a logical adjacent revenue stream that fits with enterprise adoption.

The Microsoft paradox: partner, investor, and competitor

The relationship between OpenAI and Microsoft has always been multi‑layered: investment, cloud partnership (historically Azure), product integration, and co‑development. That mix has delivered mutual commercial advantages, but it also creates strategic tension when either party moves into the other’s core product space.

A commercial GitHub competitor built by OpenAI would be a clear conflict with Microsoft’s product portfolio. The strategic implications are significant:

- Microsoft stands to lose not only a key customer but potentially a major distribution channel for developer services tied to the OpenAI model stack.

- GitHub has deep network effects: hundreds of millions of repositories, a user base GitHub has put at more than 100 million developers, built‑in features like Actions, Packages, and Codespaces, and an entrenched enterprise footprint. Unseating that requires more than reliability; it requires trust, integration, and migration ease.

- Microsoft’s own investments in AI for developers — Copilot, Spark, and deeper IDE integrations — create a product overlap that raises questions about dual loyalties, data routing, and model access.

What the rumored product could look like

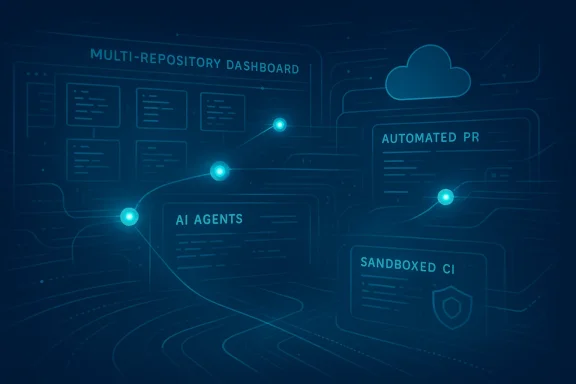

No definitive product blueprint has been released, but informed speculation and analogous offerings suggest some likely priorities if OpenAI decides to commercialize the internal repository:

- Core code hosting features: Git semantics, pull requests, branch protections, issue tracking, and code search designed for scale.

- Deep AI integration: native agent workflows that can write, review, refactor, and generate tests; AI‑driven code search and semantic analysis; automatic pull request drafting.

- Reliability and multi‑region replication: stronger SLAs, partition‑tolerant architectures, and multi‑cloud or hybrid on‑prem options aimed at enterprise customers.

- Developer productivity additions: integrated CI/CD pipelines, AI‑accelerated code review, and a marketplace for AI‑based developer tools.

- Security and compliance: built‑in SCA (software composition analysis), secret scanning, dependency alerts, and SOC/ISO certifications for regulated customers.

Technical and operational challenges

Building a commercial code‑hosting platform at GitHub scale is monstrously hard. The challenges are both technical and socio‑operational:

- Scale and durability: repository storage, Git object storage, large file handling, and high‑throughput clone/push operations require global, highly optimized storage systems and careful egress and cost controls.

- CI/CD scale: hosted runners and ephemeral build environments are resource‑intensive. Managing multi‑tenant build capacity without ballooning costs is a nontrivial systems engineering problem.

- Latency and availability: to win on reliability OpenAI would need multi‑region, read‑replicated architectures and defensive measures for cascading failures in third‑party dependencies.

- Migration friction: convincing large organizations to migrate hundreds of repositories, pipelines, and secrets is a slow, risk‑averse process.

- Security and trust: enterprises require audit logs, data residency options, vulnerability scanning, and formal certifications. Perception matters: some open‑source communities may be wary of entrusting code to a centralized AI company.

- Economic model: hosting, storage, compute for AI agent operations, and support all cost money. Pricing must balance competitiveness with the heavy infrastructure costs of inference and storage.
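Why reliability is both the opportunity and the hard part becomes clearer with standard back-of-envelope availability arithmetic. The sketch below is illustrative, not a model of any real service: it assumes independent failures (real outages are often correlated through shared dependencies, which makes redundancy look better on paper than in practice), uses a 30-day month, and the availability figures in the example are hypothetical. Components a workflow needs in series multiply their availabilities, while independent redundant copies are down only when all of them fail at once.

```python
"""Back-of-envelope availability arithmetic for a developer toolchain (illustrative)."""
from math import prod

MINUTES_PER_30_DAY_MONTH = 30 * 24 * 60  # 43,200 minutes


def serial_availability(availabilities: list[float]) -> float:
    """Every component is required (e.g. Git hosting AND CI AND a package registry).

    Assuming independent failures, the chain is up only when every link is up,
    so the individual availabilities multiply.
    """
    return prod(availabilities)


def redundant_availability(availability: float, replicas: int) -> float:
    """Any one of `replicas` independent copies is enough (e.g. a mirrored host).

    The system is down only when all replicas are down at the same time.
    """
    return 1 - (1 - availability) ** replicas


def downtime_minutes_per_month(availability: float) -> float:
    """Convert an availability fraction into expected downtime per 30-day month."""
    return (1 - availability) * MINUTES_PER_30_DAY_MONTH


if __name__ == "__main__":
    # Hypothetical figures, not measurements of any real service.
    git_host, ci, registry = 0.999, 0.995, 0.999
    chain = serial_availability([git_host, ci, registry])
    print(f"serial chain: {chain:.4%}, {downtime_minutes_per_month(chain):.0f} min/month")

    single = downtime_minutes_per_month(git_host)
    mirrored = redundant_availability(git_host, replicas=2)
    print(f"Git host alone: {single:.1f} min/month; "
          f"mirrored: {downtime_minutes_per_month(mirrored):.2f} min/month")
```

With those hypothetical numbers, three services at 99.9%, 99.5%, and 99.9% chained together come to about 99.30% availability, roughly 302 minutes (about five hours) of downtime in a 30-day month, while a single 99.9% host alone accounts for 43.2 minutes. Under the independence assumption, one mirror shrinks that host's share to well under a minute, which is why multi-region replication and defenses against cascading failures sit at the center of the reliability challenge.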

Strategic levers and market dynamics

1) Developer mindshare and network effects

GitHub’s lead is not just product features; it’s the network. Any newcomer must offer a clear migration path and a set of features that are either strictly better or uniquely integrated with developer‑facing AI services.

2) AI agents as a lock‑in vector

If OpenAI bundles agentic workflows that demonstrably reduce engineering time (for example, by automating end‑to‑end story implementation or shortening bug‑fix cycles), that capability becomes a sticky differentiator. Enterprises may tolerate a new hosting vendor if the productivity gains are real and measurable.

3) Multi‑cloud and hybrid strategies

To avoid direct Azure lock‑in and to reassure customers worried about single‑vendor risk, OpenAI would likely offer multi‑cloud or on‑prem solutions, or partner with other cloud providers for distribution. The recent large strategic investments OpenAI has announced (and the reported new funding partnerships) increase the plausibility of multi‑cloud options.

4) Regulatory and antitrust scrutiny

A move into core developer infrastructure by a company that also supplies widely used AI models invites fresh regulatory scrutiny, particularly around competition policy, interoperability, and potential anti‑competitive bundling.

The broader context: funding, burn, and public trust

Two broader forces shape how this rumor should be read.

First, OpenAI has aggressively pursued infrastructure and product expansion. In late February 2026, reports indicated a massive funding and partnership round, led by large tech companies, that substantially boosted the company’s capacity and balance sheet. That capital could underwrite ambitious new products and the large upfront costs of a code‑hosting service.

Second, the company has faced public backlash over sensitive contracts and governance decisions. A recent defense contract with the U.S. Department of Defense triggered public protests and a wave of criticism, prompting OpenAI’s CEO to publicly acknowledge mistakes in timing and communication and to amend parts of the agreement. That controversy sparked a short‑term consumer response — app uninstall spikes, social media campaigns, and a momentary lift for alternative AI apps — and it highlights how reputation and trust can rapidly affect adoption.

Financial narratives are mixed. Some analysts and outlets have highlighted sizeable projected cash burn scenarios for advanced AI developers; others point to growing revenue from subscriptions and enterprise services. Projections about losses or cash exhaustion are inherently speculative and depend heavily on pricing, enterprise contracts, capital commitments, and the future cost curve of GPUs and other AI chips. Any claim about impending bankruptcy or precise multi‑billion shortfalls should be treated as a forecast, subject to revision, and contingent on future strategic choices.

Risks and downside scenarios

For OpenAI

- Strategic fragmentation: competing with Microsoft in core product areas risks unraveling the cooperative strands of the partnership that facilitate compute, distribution, and enterprise sales.

- Execution risk: failing to deliver on reliability, security, or migration tooling could leave OpenAI with an expensive engineering effort and little adoption.

- Community backlash: the open‑source community could resist moving to a proprietary host, especially if code indexing or model training rights are unclear.

- Regulatory heat: antitrust and procurement regulators could probe any move that tightens OpenAI’s control over both models and developer platforms.

For Microsoft

- Customer churn: repeated outages and perceived neglect could nudge some enterprise customers to evaluate alternatives.

- Competitive exposure: Microsoft’s investments in AI and cloud make GitHub itself a continuing center of innovation; a well‑executed rival would force Microsoft to accelerate GitHub innovation, reliability investments, and tighter Copilot and Azure integration.

- Relationship strain: a public commercial conflict could complicate Azure compute agreements, model licensing, and joint go‑to‑market arrangements.

For enterprises and developers

- Migration costs: moving thousands of repositories plus CI/CD pipelines and policies is time‑consuming and risky.

- Vendor lock‑in: platforms that tightly bind AI agents to a code host risk creating new lock‑in dynamics.

- Data privacy and IP: corporate legal teams will require explicit guarantees about code reuse, model training, and IP boundary protections.

What success looks like — and what failure looks like

Success for a new OpenAI code host would look like:

- Reliable global SLAs that consistently meet or beat incumbent enterprise expectations.

- Enterprise adoption at meaningful scale, especially from teams that value AI‑driven workflows.

- Clear, auditable controls for data residency, IP protection, and compliance certifications.

- Developer tooling that integrates smoothly into IDEs and pipelines without breaking existing workflows.

Failure would look like:

- A slow, costly engineering slog that never reaches parity with mature Git hosting features.

- Limited adoption because enterprises refuse to migrate or distrust the vendor.

- Public disputes with Microsoft that result in contract or cloud‑compute complications.

- Regulatory action or developer boycott over model training or data‑use practices.

Practical implications for developers and IT leaders

If you manage developer platforms or evaluate code‑hosting services, now is the time to:

- Audit dependencies: map which critical workflows depend on GitHub services (Actions, Codespaces, Copilot) and estimate the business impact of multi‑hour outages.

- Test migration paths: evaluate backup and mirror strategies, such as replicating repositories to secondary hosts or self‑hosting critical components.

- Re‑examine SLAs: insist on clearer uptime and incident notification terms from current vendors and include availability clauses in procurement.

- Consider agent risk: if you adopt AI‑driven coding agents, assess where those agents execute and how their compute and security posture align with compliance needs.

- Watch vendor contracts: be wary of any terms that would allow a provider to reuse your code for model training without clear, auditable permission and compensation clauses.
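One way to act on the mirror strategy in the list above is a scheduled job that copies every ref of a critical repository to a secondary host. The sketch below is an assumption-laden starting point rather than a hardened tool: it shells out to the standard `git clone --mirror`, `git remote update --prune`, and `git push --mirror` commands, the URLs in the example are hypothetical placeholders, and authentication and scheduling are left out. A Git mirror also does not carry issues, pull request discussions, Actions secrets, or other platform metadata.

```python
"""Sketch: keep a mirror of a critical repository on a secondary Git host."""
import subprocess
from pathlib import Path


def mirror_commands(source_url: str, backup_url: str, workdir: Path) -> list[list[str]]:
    """Build the git invocations that create or refresh a mirror, then push it.

    - `git clone --mirror` copies every ref (branches, tags, notes) into a bare repo.
    - `git remote update --prune` refreshes an existing mirror and drops deleted refs.
    - `git push --mirror` makes the backup's refs match the local mirror exactly.
    """
    if workdir.exists():
        fetch = ["git", "-C", str(workdir), "remote", "update", "--prune"]
    else:
        fetch = ["git", "clone", "--mirror", source_url, str(workdir)]
    push = ["git", "-C", str(workdir), "push", "--mirror", backup_url]
    return [fetch, push]


def run_mirror(source_url: str, backup_url: str, workdir: Path) -> None:
    """Run the commands in order; check=True raises CalledProcessError on failure."""
    for cmd in mirror_commands(source_url, backup_url, workdir):
        subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # Hypothetical URLs: print the plan rather than executing it.
    plan = mirror_commands(
        "https://github.com/example-org/service.git",
        "https://git.example.com/backup/service.git",
        Path("service-mirror.git"),
    )
    for cmd in plan:
        print(" ".join(cmd))
```

Separating command construction from execution makes the plan easy to review or dry-run. Mirror clones taken from GitHub also fetch its read-only `refs/pull/*` refs; some destination hosts reject those on `push --mirror`, in which case pushing a narrower refspec (for example, branches and tags only) is the usual workaround.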

What to watch next

- Official announcements: OpenAI and Microsoft statements will be determinative. Expect cautious corporate language but watch for commitments on compute, IP, and partnership terms.

- Product signals: a public beta, waitlist, or developer preview would indicate commercialization intent; absence of these could mean the project stays internal.

- Enterprise deals: early enterprise customers or pilot contracts would be a strong positive signal that the product can meet compliance and migration demands.

- GitHub product response: look for accelerated reliability work, feature parity pushes, and possibly price or SLA changes to retain customers.

- Regulatory attention: antitrust or procurement agencies may watch moves that alter competitive dynamics between major cloud and AI vendors.

Final analysis

The rumor that OpenAI is building a GitHub rival is significant because it reframes the relationship between AI model providers and developer infrastructure. At stake is much more than where code lives; it’s who controls the workflow where human engineers and AI agents collaborate to produce software.

There are persuasive reasons for OpenAI to explore this path: internal reliability needs, the commercial logic of bundling AI agents with hosting, and the opportunity to monetize a platform that touches software delivery’s most vital seams. There are equally persuasive reasons for caution: technical scale challenges, migration friction, and the broader strategic awkwardness of competing with a major investor and cloud partner.

The immediate facts are straightforward: engineers at OpenAI built an internal repository to reduce operational fragility; GitHub experienced a spate of incidents that exposed real developer pain; and OpenAI is reportedly discussing commercialization. Beyond those facts lies a messy strategic calculus. Success would require uniting world‑class systems engineering, airtight enterprise security, and a migration story convincing enough to overcome the greatest single obstacle in developer tools: inertia.

For IT leaders, the practical takeaway is the same whether or not OpenAI ships a product: the reliability of core developer services matters. Treat Git hosting and CI/CD as strategic dependencies, prepare migration and redundancy plans, and scrutinize how tightly any AI agent integration binds your organization to a single provider.

In short, the rumor is both a symptom and a signal. It’s a symptom of rising expectations for reliability in a world where AI increasingly automates development tasks. And it’s a signal that the boundaries between platform, tool, and model are blurring — which will force enterprises, developers, and regulators to rethink how software is built, hosted, and governed in the age of AI.

Source: Windows Central OpenAI challenges Microsoft amid rumors of a GitHub competitor