The February joint statement from OpenAI and Microsoft is as much a legal and commercial reset as it is a public relations exercise: both companies told the market the strategic lines drawn in their October 2025 agreement still stand, even as OpenAI announced a sweeping new partnership and investment from Amazon and other backers the same day. The message is straightforward — collaborate widely, but honor the contractually negotiated anchors — yet the consequences for cloud architecture, enterprise procurement, competition policy, and developer strategy are far from trivial.

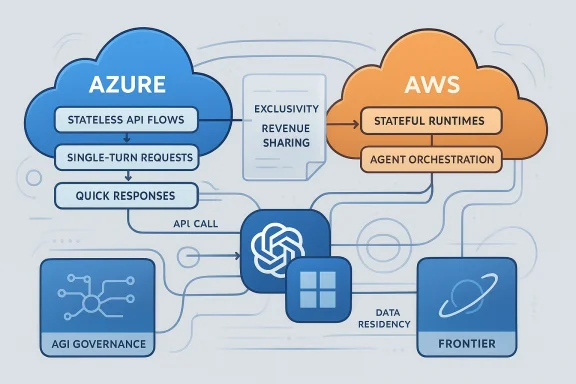

Background

Since 2019 Microsoft and OpenAI have been tightly entwined: large-scale Azure commitments, preferred commercial arrangements, and IP access that shaped how the modern AI cloud market formed. That relationship was significantly updated in October 2025 to reflect OpenAI’s new capitalization and long-term commercial terms. The February 27, 2026 joint statement reiterates that the October 2025 terms remain in force even as OpenAI pursues additional investments and infrastructure partners.

At the same time, OpenAI and Amazon published simultaneous announcements describing a multi-billion-dollar strategic partnership that includes joint engineering work (a “Stateful Runtime Environment” for agents on Amazon Bedrock), a multi-gigawatt Trainium compute commitment to AWS, and a reported $50 billion investment pledge from Amazon. OpenAI also continues to build its own large-scale infrastructure program, Stargate, and maintains existing relationships with other infrastructure partners such as Oracle and SoftBank. These parallel moves, Microsoft’s reaffirmation and OpenAI’s multi-cloud expansion, define the current moment.

What the joint statement actually says

The companies’ public joint text makes several discrete, contract-sensitive assertions:

- IP and licensing: Microsoft retains its exclusive license and access to OpenAI model IP and related products, a continuity claim that preserves Microsoft’s strategic position developed over years.

- Revenue sharing: The revenue‑share arrangement negotiated previously remains unchanged and explicitly covers partnerships between OpenAI and other cloud providers.

- Azure exclusivity for stateless APIs: Microsoft asserts Azure remains the exclusive cloud provider for stateless OpenAI APIs — the classic request‑response model in which a single query returns an immediate answer.

- Hosting of first‑party products: OpenAI’s first‑party offerings — including its enterprise platform Frontier — are affirmed as continuing to be hosted on Azure.

- AGI definition/process: The contractual definition and determination process for artificial general intelligence (AGI) are unchanged, preserving previously negotiated triggers and governance mechanics.

- Flexibility for compute: OpenAI is explicitly allowed to commit compute capacity elsewhere (e.g., the Stargate program) to meet scale and performance needs.

What OpenAI + Amazon announced — and where the messages overlap

OpenAI’s and Amazon’s announcement the same day described new, complementary technical initiatives:

- A co‑created Stateful Runtime Environment available on Amazon Bedrock, designed to run AI agents that retain context over time (memory, identity, tool access).

- AWS as the exclusive third‑party cloud distribution provider for OpenAI Frontier, according to OpenAI and AWS materials.

- A 2‑gigawatt Trainium compute commitment from OpenAI to AWS (Trainium is Amazon’s custom silicon family for training/inference).

- A reported $50 billion investment by Amazon in OpenAI (staged: $15B initial + up to $35B conditional).

Why “stateless” vs “stateful” matters — a technical primer

The joint statement’s repeated use of the term stateless is not marketing fluff; it’s a technical, contractual boundary.

- Stateless APIs: Single-turn requests that do not persist conversational or task context across calls. Examples: text completion queries, single‑shot summarization. These are typically simple to route, cache, and meter.

- Stateful runtimes: Long‑running environments where agents keep memory, multi‑step workflows, user identity, or persistent session data. These support agent orchestration, multi-turn action sequences, and integrations with customer systems.

Splitting stateless calls and stateful runtimes across two clouds has practical consequences:

- Latency and user experience: Cross‑cloud hops (user → AWS runtime → Azure stateless model → back to AWS) add round‑trip time, which affects interactive agents and real‑time applications.

- Egress and networking costs: Frequent movement of payloads across cloud boundaries multiplies egress charges and raises recurring operating costs.

- Data gravity and locality: Enterprise data that must remain in a particular region or cloud for compliance or performance will need explicit architectural planning.

- Operational complexity: Debugging, tracing, and governance across two providers require mature observability and cross‑cloud SRE practices.
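The distinction can be made concrete with a minimal Python sketch. Everything here is hypothetical and for illustration only: `stateless_complete` and `StatefulSession` are invented names, not OpenAI, Azure, or Bedrock APIs, and the "model" is a stand-in that echoes its input.

```python
from dataclasses import dataclass, field


def stateless_complete(prompt: str) -> str:
    """Stateless call: the reply depends only on this one request."""
    # Hypothetical stand-in for a model call; nothing is stored between calls.
    return f"echo: {prompt}"


@dataclass
class StatefulSession:
    """Stateful runtime: keeps identity and memory across turns."""
    user_id: str
    memory: list[str] = field(default_factory=list)

    def send(self, message: str) -> str:
        # Each turn is appended to memory, so later turns can see earlier ones.
        self.memory.append(message)
        return f"turn {len(self.memory)} for {self.user_id}: {message}"


# Two identical stateless calls give identical answers; no context carries over.
print(stateless_complete("summarize this"))
print(stateless_complete("summarize this"))

# A session accumulates context, which is what makes it harder to route and meter.
session = StatefulSession(user_id="alice")
print(session.send("book a flight"))
print(session.send("make it refundable"))
```

The stateless function can be cached, load-balanced, or moved between hosts freely, while the session object must live somewhere specific, which is why the hosting location of each matters contractually.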

Commercial and legal implications

The reaffirmation of Microsoft’s IP and revenue arrangements is strategically significant.

- Microsoft’s exclusive license to certain OpenAI IP gives it a durable commercial moat. In practice, it means Microsoft has privileged access to model capabilities and derivative rights that competitors do not.

- The revenue‑share language — explicitly covering revenue from third‑party partnerships — ensures Microsoft will receive a cut from OpenAI’s expansion with other cloud providers. That preserves Microsoft’s economic exposure to OpenAI’s growth even as the latter diversifies compute and distribution.

- Regulators will look closely. Exclusive IP access and routing guarantees that channel stateless API traffic exclusively through a single cloud provider are the exact kinds of contractual terms that invite antitrust scrutiny if they substantially foreclose competition or limit customer choice.

Enterprise impact: what CIOs and procurement teams should watch

For organizations evaluating vendor lock‑in, compliance, and performance, the current landscape presents choices rather than a single path.

Key practical considerations:

- Product SLAs and hosting guarantees: Confirm whether a given OpenAI product is the first‑party Azure‑hosted instance or a third‑party distributed version on AWS. The operational model affects data residency, auditability, and incident response.

- Data residency and compliance: If stateless API calls must flow through Azure, understand where logs and telemetry are stored and whether data movement violates regional regulations or customer policies.

- Cost modeling: Account for potential cross‑cloud egress fees, additional NAT/peering, and interconnect costs when designing stateful agents that mix Azure and AWS components.

- Procurement carve‑outs: Negotiate contract language that clarifies which parties host what parts of the stack, where intellectual property is exercised, and who is responsible for compliance auditing and incident remediation.

- Vendor consolidation vs best‑of‑breed: Determine whether the business prefers a single‑cloud integrated vendor (Azure or AWS) or a hybrid approach that optimizes for latency, cost, or capability.
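For the cost-modeling item above, a rough back-of-the-envelope estimate can be sketched as follows. The function name, the workload figures, and especially the per-gigabyte rate are illustrative assumptions, not quoted prices from any provider; substitute figures from your own contracts.

```python
def monthly_egress_cost(calls_per_day: int, payload_kb: float,
                        hops_per_call: int, usd_per_gb: float,
                        days: int = 30) -> float:
    """Estimate monthly cross-cloud egress spend in USD."""
    # Convert the per-call payload (KB per hop, times billable hops) to GB.
    gb_per_call = payload_kb * hops_per_call / (1024 * 1024)
    return calls_per_day * days * gb_per_call * usd_per_gb


# Hypothetical workload: 2M calls/day, 64 KB per hop, 2 billable hops per call,
# and a placeholder rate of $0.09 per GB (an assumption, not a published price).
cost = monthly_egress_cost(2_000_000, 64, 2, 0.09)
print(f"${cost:,.2f} per month")
```

Even a crude model like this makes the sensitivity visible: doubling payload size or adding one more cross-cloud hop scales the bill linearly, which is useful leverage in procurement discussions.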

Architecture guidance for developers and SRE teams

Designing resilient, performant agent systems under the new contractual landscape requires concrete patterns. Below are recommended steps and mitigations.

- Map data flows explicitly.
  - Identify which calls are stateless vs stateful and track where each path originates and terminates.

- Minimize cross‑cloud hops for latency‑sensitive tasks.
  - Collocate caches or proxy layers near the stateless API gateway to reduce round trips.

- Use asynchronous patterns when synchronous performance isn't required.
  - Queue or stream heavy inference workloads to decouple runtime from immediate user interactions.

- Implement smart caching and memoization.
  - Cache repeated prompts/responses at the edge; use content-addressable keys to avoid unnecessary model calls.

- Plan for egress and networking costs up front.
  - Design for batching, result compression, and size‑aware payloads; model cost into TCO.

- Harden security and observability across clouds.
  - Unified tracing, SAML/OAuth federation, and end‑to‑end encryption and logging retention policies are essential.

- Negotiate commercial protections.
  - If you rely on a cross‑cloud model, secure contractual indemnities, performance guarantees, and clarity on who hosts production data.
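The caching recommendation above can be sketched in a few lines of Python. This is a minimal in-memory illustration under stated assumptions: `cache_key`, `PromptCache`, and `fake_model` are hypothetical names, the model call is a local stand-in, and caching like this only makes sense when responses are deterministic for a given input (for example, temperature 0).

```python
import hashlib
import json
from typing import Callable


def cache_key(model: str, prompt: str, params: dict) -> str:
    """Derive a content-addressable key from everything that affects the output."""
    # Canonical JSON (sorted keys, fixed separators) so equal inputs hash equally.
    canonical = json.dumps(
        {"model": model, "prompt": prompt, "params": params},
        sort_keys=True, separators=(",", ":"),
    )
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class PromptCache:
    """In-memory memoization layer placed in front of a model call."""

    def __init__(self, call_model: Callable[[str, str, dict], str]):
        self._call_model = call_model
        self._store: dict[str, str] = {}
        self.misses = 0

    def complete(self, model: str, prompt: str, params: dict) -> str:
        key = cache_key(model, prompt, params)
        if key not in self._store:
            # Only a cache miss pays for the (possibly cross-cloud) model call.
            self.misses += 1
            self._store[key] = self._call_model(model, prompt, params)
        return self._store[key]


def fake_model(model: str, prompt: str, params: dict) -> str:
    # Hypothetical stand-in for a remote model call.
    return prompt.upper()


cache = PromptCache(fake_model)
cache.complete("m1", "hello", {"temperature": 0})
cache.complete("m1", "hello", {"temperature": 0})  # served from the cache
print(cache.misses)
```

Hashing the model name and parameters along with the prompt keeps the key tied to the content of the request rather than to a session, so the same cache can sit at the edge regardless of which cloud serves the miss. A production version would also need eviction and a retention policy consistent with the data-handling rules discussed earlier.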

Competitive and regulatory risks

There are immediate strategic risks associated with the arrangement:

- Platform concentration: Channeling stateless API access through one provider concentrates control of model access points. That raises legitimate regulatory questions about whether enterprises can still access models competitively or are forced into vendor‑specific economics.

- Opaque governance: The maintenance of prior AGI‑trigger language and exclusive IP rights makes the governance of future, more capable models a political and legal flashpoint. Independent verification and transparency around the AGI trigger remain sensitive topics.

- Ecosystem fragmentation: If the market bifurcates into Azure‑centric stateless flows and AWS‑centric stateful runtimes, developer ecosystems may fragment, increasing the learning curve and integration cost for multi‑cloud deployments.

- Economic concentration risk: Revenue shares, exclusive licensing, and multi‑cloud service commitments create overlapping economic dependencies that could complicate future contract renegotiations or M&A outcomes.

Strengths and strategic reasoning behind the setup

Despite the complexity and friction, there are defensible business reasons for both parties’ positions.

- OpenAI needs enormous, globally distributed compute capacity at short notice. Stargate and third‑party cloud relationships accelerate that scale beyond any single provider.

- Microsoft benefits from preserving core economic and IP positions while enabling OpenAI to scale — a compromise that yields both stability and flexibility.

- Amazon gains enterprise traction and a differentiated product story (stateful environments on Bedrock) that addresses the natural next step in agent-based applications.

- Enterprises potentially gain choice at the architectural level: they can opt for Azure‑hosted first‑party products or AWS‑distributed, Bedrock-native stateful runtimes, depending on priorities.

Where ambiguity remains — and what to watch next

Several aspects deserve scrutiny and clarification in the weeks and months ahead:

- The exact operational interface between Azure‑hosted stateless APIs and AWS‑hosted stateful runtimes: how are tokens, rate limits, and provenance handled?

- The financial structure of the reported investments: what portion is cash vs. committed compute, and what are the timing and conditional triggers for the staged amounts?

- How AGI‑trigger governance will be operationalized, audited, and potentially adjudicated if contested.

- How enterprise contracts will address compliance, especially when data traverses cloud boundaries.

Practical takeaways for WindowsForum readers

- For developers: design for graceful degradation — build agents that can operate with partial state or local fallback to avoid cross‑cloud round‑trips when latency matters.

- For IT architects: insist on explicit SLAs and data‑handling clauses that define where data is stored, who can access logs, and how incidents are managed across cloud boundaries.

- For procurement and legal teams: map revenue‑share, IP, and distribution claims to concrete contract language; don’t rely on press releases for enforceable commitments.

- For CIOs: treat this as a transitional market state. Expect further tie‑ups and competitive responses from other cloud vendors; plan multi‑year roadmaps that tolerate churn in vendor feature sets.
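The graceful-degradation advice for developers can be illustrated with a small Python sketch using `asyncio.wait_for` to enforce a latency budget. The function names, the simulated delay, and the 50 ms budget are all assumptions made for this example; a real agent would call an actual remote endpoint and choose a budget from measured latencies.

```python
import asyncio


async def remote_agent_step(prompt: str, delay_s: float) -> str:
    # Hypothetical stand-in for a cross-cloud call; delay_s simulates network latency.
    await asyncio.sleep(delay_s)
    return f"remote: {prompt}"


def local_fallback(prompt: str) -> str:
    # Degraded answer built from local state only, with no cross-cloud round trip.
    return f"local: {prompt}"


async def answer(prompt: str, delay_s: float, budget_s: float = 0.05) -> str:
    """Try the remote path within a latency budget, else fall back locally."""
    try:
        return await asyncio.wait_for(
            remote_agent_step(prompt, delay_s), timeout=budget_s
        )
    except asyncio.TimeoutError:
        return local_fallback(prompt)


print(asyncio.run(answer("status", delay_s=0.0)))  # remote path finishes in budget
print(asyncio.run(answer("status", delay_s=0.5)))  # budget exceeded, local fallback
```

The design choice is to treat the cross-cloud call as optional within a deadline rather than as a hard dependency, so an interactive agent stays responsive when the remote path is slow.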

Final assessment — pragmatic flexibility with structural friction

The joint statement is a carefully worded attempt to preserve the gains of a strategic relationship that dates to 2019 while allowing OpenAI the operational room to scale rapidly. From a corporate strategy perspective, it’s an elegant compromise: keep Microsoft’s economic and IP anchors, let OpenAI pursue multi‑cloud compute and distribution, and publicly state continuity to stabilize investor and partner expectations.

From a technical and enterprise perspective, however, the arrangement introduces real operational trade‑offs. Routing stateless traffic through a single cloud while distributing stateful runtimes across others creates latency, cost, and compliance complexity that organizations must manage. The market will respond with tooling, contractual innovations, and architectural patterns, but that transition will be nontrivial.

Finally, regulatory attention is likely to intensify. Exclusive IP access, revenue sharing that spans third‑party partnerships, and the routing of APIs through a single provider are exactly the kinds of structural arrangements that raise competition policy questions. Enterprises should proceed with both optimism and appropriate caution: these developments expand options and capabilities, but they also demand new disciplines in architecture, procurement, and governance.

The next months will tell whether market gravity — driven by performance, price, and developer experience — pulls the ecosystem toward multi‑cloud interoperability, or whether commercial incentives and contractual anchors sustain a more polarized provider landscape. In either case, engineering and legal teams will be the ones building the bridge.

Source: OpenAI Joint Statement from OpenAI and Microsoft