OpenAI’s latest consumer push — a lower‑cost ChatGPT Go plan and a public plan to test ads inside the free and Go tiers — marks a turning point in how generative AI moves from enthusiasm to a mainstream, revenue‑driven product. The shift is both pragmatic and risky: companies are finally asking users to pay for sustained model capacity, while also preparing to monetize the massive free audience through advertising and commerce integrations. In short, the consumer AI era has entered its monetization moment, and that changes everything from who gets the best models to how trustworthy our daily AI assistants will feel.

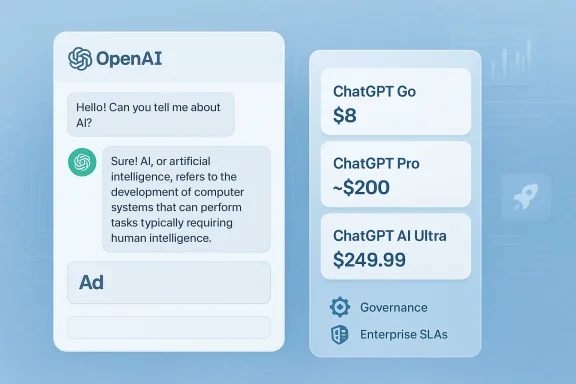

Background / Overview

The machine behind this moment is familiar: millions of daily users, increasingly capable multimodal models, and infrastructure costs that dwarf historic cloud workloads. OpenAI’s January 16, 2026 announcement made two things official at once: a global rollout of a lower‑price consumer tier, ChatGPT Go ($8/month in the U.S.), and plans to begin testing ads in ChatGPT’s free and Go tiers in the U.S. as a way to subsidize wider access. These steps are explicitly framed as pragmatic: expand reach, keep premium tiers ad‑free, and diversify revenue beyond subscriptions and API fees.

Those product moves reflect broader market dynamics: consumer AI adoption has surged (industry research and central bank surveys show majority usage), but paying users remain a small minority. Venture and industry reports estimate a multi‑billion dollar consumer AI market today, with forecasts that scale into the hundreds of billions across the decade if conversion rates rise. That gap between usage and monetization is the business problem vendors are trying to solve.

At the same time, new premium tiers aimed at heavy users, including Perplexity’s Max ($200/month), OpenAI Pro ($200/month), and Google’s high‑end AI Ultra ($249.99/month) with very large storage allotments, show vendors are carving the market into distinct segments: casual users, budget‑conscious paying users, and prosumers who will pay for high throughput, advanced models, and integrated agentic features.

Why this matters: the monetization calculus

There are three economic realities shaping vendor behavior.

- Running top‑tier, multimodal LLMs at scale is extremely expensive. Training, inference, and the safety/monitoring layers carry recurring costs that scale with every additional user and feature.

- User behavior has shifted from curiosity to habitual use: large surveys show more than half of adults have used generative AI in the prior year, and many people now use these assistants regularly.

- Converting free users to paying subscribers is hard; a small but crucial percentage of users generate the bulk of revenue today.

Independent reporting and investor analyses reinforce the urgency. Public filings and trade‑press reporting suggest OpenAI’s user base has reached the high hundreds of millions of weekly active users, and company projections reportedly shared with investors include aggressive paid‑user targets for 2030. Converting even a few percentage points of that reach into subscriptions or ad revenue is thus a core financial priority.
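To make the conversion arithmetic concrete, here is a minimal sketch. The 800 million user figure is an illustrative stand‑in for "high hundreds of millions", the conversion rates are hypothetical, and the only number taken from the announcement is the $8/month U.S. price for ChatGPT Go. It ignores regional pricing, churn, and ad revenue.

```python
def monthly_subscription_revenue(weekly_active_users: int,
                                 conversion_rate: float,
                                 price_per_month: float) -> float:
    """Revenue if a fraction of active users pays a flat monthly price."""
    if not 0.0 <= conversion_rate <= 1.0:
        raise ValueError("conversion_rate must be between 0 and 1")
    return weekly_active_users * conversion_rate * price_per_month


# Assumption: illustrative user count, not a reported figure.
ASSUMED_WEEKLY_USERS = 800_000_000
# U.S. ChatGPT Go price quoted in this article.
GO_PRICE_USD = 8.00

# Hypothetical conversion rates of 1%, 3%, and 5%.
for rate in (0.01, 0.03, 0.05):
    revenue = monthly_subscription_revenue(ASSUMED_WEEKLY_USERS, rate, GO_PRICE_USD)
    print(f"{rate:.0%} conversion -> ${revenue / 1e6:,.0f}M per month")
```

Under these assumptions, each additional percentage point of conversion is worth roughly $64 million a month, which is why small shifts in the paid share matter so much.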

Consumer adoption: numbers, nuance, and what they hide

The raw adoption story is straightforward and well documented: consumer interaction with AI assistants is now mainstream. Multiple independent datasets corroborate this:

- Menlo Ventures’ 2025 consumer report extrapolates that more than 60% of Americans used AI in the preceding six months and estimates a global base of roughly 1.7–1.8 billion users, while placing present consumer spending near $12 billion. That study highlights the vast gap between usage and payments.

- The St. Louis Fed’s policy research found that over half of U.S. adults had used generative AI in 2025 and quantified early productivity impacts for workers who use these tools. The Fed’s analysis is notable because it situates adoption inside labor markets and time‑savings metrics rather than purely consumer hobbyism.

This gap between broad usage and limited willingness to pay explains why vendors are splitting their product strategies: funnel mass consumer usage via free apps and cheaper plans, and monetize intense usage with pricier pro/prosumer tiers or metered billing.

The “Great Tier‑ification”: product, price and positioning

Vendors are deliberately segmenting offerings to extract revenue at every price point.

- Entry‑level nudges: Companies have introduced inexpensive plans designed as low‑friction upgrades for free users. ChatGPT Go at $8/month and Google AI Plus at $7.99/month are examples of this tactic: modest price reductions, more messages, longer memory, and limited access to higher‑fidelity models and features.

- Pro and prosumer lanes: For creators, researchers, and teams, vendors now offer expensive tiers — typically in the $200–$250/month range — that promise large context windows, agentic features, and vastly higher quotas. Google’s AI Ultra ($249.99/month) bundles experimental tools and massive storage; Perplexity’s Max ($200/month) gives early access to features such as the Comet browser and deep agentic integrations. These tiers are being marketed as workhorses for people who rely on AI output day in and day out.

- Quotas, credits, and metering: A hallmark of the new wave is stricter usage caps, credit systems, and metered APIs. This helps vendors govern free usage and directs heavy compute consumption into priced lanes.
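As a rough illustration of how a credit system steers heavy usage into paid lanes, the sketch below meters requests against a per‑period allowance. The class name, request types, credit costs, and allowance are all hypothetical; no vendor's actual metering scheme is implied.

```python
from dataclasses import dataclass


@dataclass
class CreditMeter:
    """Tracks a per-period credit allowance for one user."""
    allowance: int  # credits granted per billing period
    used: int = 0   # credits consumed so far in this period

    def remaining(self) -> int:
        return self.allowance - self.used

    def try_consume(self, cost: int) -> bool:
        """Deduct `cost` credits if available; return False when capped."""
        if cost < 0:
            raise ValueError("cost must be non-negative")
        if cost > self.remaining():
            return False
        self.used += cost
        return True

    def reset(self) -> None:
        """Start a new billing period."""
        self.used = 0


# Hypothetical credit costs: heavier features burn the allowance faster.
REQUEST_COST = {"basic_chat": 1, "long_context": 5, "agent_run": 20}

free_user = CreditMeter(allowance=30)
print(free_user.try_consume(REQUEST_COST["agent_run"]))  # True: 20 of 30 used
print(free_user.try_consume(REQUEST_COST["agent_run"]))  # False: would exceed the cap
print(free_user.remaining())                             # 10
```

Pricing compute‑heavy features at more credits per request is one way a vendor could let casual use continue on a free tier while nudging agentic workloads toward a paid plan.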

Ads in chat: mechanics, promise, and peril

OpenAI’s ad plan is engineered to minimize user friction: ads will be clearly labeled, placed under organic answers, and excluded from sensitive topics and accounts identified as minors. The stated principles emphasize answer independence and conversation privacy: OpenAI says it will not sell conversation data to advertisers and will allow users to control personalization.

From a commercial standpoint, the logic is compelling: conversation surfaces offer hyper‑intent signals that can make ads more effective than classic search placements. A user asking “best blender under $150” reveals purchase intent and contextual constraints that are ideal for targeted commerce ads. For advertisers, in‑chat placements reduce friction between intent and conversion.

But the model presents novel risks:

- Perception of bias: Even if ads are labeled and separated, placing sponsored content within the same conversational UI undermines the perceived neutrality of responses. Users may begin to wonder whether suggestions are influenced by revenue rather than utility.

- Privacy and personalization: The claim that conversation content won’t be sold to advertisers is meaningful, but the ad system will likely use some derived signals to target placements. The engineering and policy challenge is proving that those signals are used in privacy‑preserving ways that meet regulatory expectations.

- UX degradation and ad fatigue: Early experiments, including sponsored follow‑ups at other AI companies, indicate that intrusive ad formats risk turning users off and that novelty ad flows can backfire. Vendors will need to balance revenue targets with long‑term trust.

Competition and the changing product map

The race to monetize is not only about price points; it is about ecosystem integration and trust.

- OpenAI: Widely adopted and extensible via plugins and custom GPTs, OpenAI is leaning on scale plus new lower‑cost tiers and an ad experiment to broaden reach. The company’s announcements and product changes are consistent with a move from research to productization.

- Google (Gemini): Positions Gemini as a multimodal, Workspace‑integrated assistant and has expanded consumer tiers with AI Plus ($7.99/month) and AI Ultra ($249.99/month) for prosumers. Google’s advantage is deep integration across Gmail, Drive, Search and Android.

- Microsoft (Copilot) and others: Microsoft continues to position Copilot inside Microsoft 365, focusing on tenant‑level governance and enterprise SLAs. Anthropic, Perplexity and others target niches — safety, long‑form context, or citation‑first research — and some are building prosumer tools that directly compete for creators and knowledge workers.

Risks and regulatory implications

The shift to paid tiers and ad monetization raises several regulatory and systemic risk questions:

- Consumer protection and disclosure: Ads in conversational UIs require clear labeling and robust controls to avoid deceptive placements.

- Data use and training: Even vendors that promise not to sell conversation data may use derived signals for ad targeting or model improvement. Enterprises and privacy regulators will demand transparency and contractual guarantees, particularly for sensitive industries.

- Competition and market concentration: Firms with both the largest user bases and the broadest ad ecosystems could entrench advantage, making it harder for niche competitors to monetize without access to comparable reach.

- Misinformation and reliability: When advanced features are behind paywalls, reliable, auditable reasoning may become a premium service — potentially widening the gap between those who can afford well‑tested outputs and those who cannot.

What this means for consumers and Windows users

For everyday users on Windows devices, the landscape breaks down into practical choices:

- If you use AI for casual drafting, brainstorming, or entertainment, the free tier or a low‑cost plan like ChatGPT Go or Google AI Plus will likely suffice.

- If you depend on AI to do work — long documents, code review, agentic workflows, or high‑volume content creation — expect to pay for the reliability, extended context, and throughput that premium plans provide.

- Be mindful of privacy trade‑offs. If you want an ad‑free experience and stronger data guarantees, budget for a paid tier or the enterprise alternative that offers non‑training promises and contractual data residency.

- For Windows administrators and IT teams, it’s time to model AI licensing costs and update acceptable‑use and data handling policies. Agentic features that can act on corporate data require explicit procurement decisions, non‑training clauses where needed, and logging/telemetry integration with existing SIEM tools.
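For teams starting to model licensing costs, a simple seat‑based estimate is one place to begin. The sketch below uses the monthly consumer list prices quoted in this article as placeholders; the seat counts are hypothetical, and enterprise contracts are typically priced differently.

```python
# Monthly list prices quoted in this article (USD). Treat them as placeholders:
# regional pricing and enterprise agreements will differ.
PRICE_PER_SEAT = {
    "ChatGPT Go": 8.00,
    "Google AI Plus": 7.99,
    "OpenAI Pro": 200.00,
    "Google AI Ultra": 249.99,
}


def annual_cost(seats_by_plan: dict[str, int]) -> float:
    """Return the yearly cost for a mix of plans, given seats per plan."""
    total = 0.0
    for plan, seats in seats_by_plan.items():
        if plan not in PRICE_PER_SEAT:
            raise KeyError(f"no price on file for plan: {plan}")
        if seats < 0:
            raise ValueError("seat counts must be non-negative")
        total += PRICE_PER_SEAT[plan] * seats * 12
    return round(total, 2)


# Hypothetical team: 40 casual users on a low-cost plan, 5 heavy users on a pro plan.
team = {"ChatGPT Go": 40, "OpenAI Pro": 5}
print(annual_cost(team))  # 15840.0
```

In this example the five pro seats account for about three quarters of the annual bill, which is the kind of imbalance a usage audit should justify before renewal.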

Practical advice for power users and IT pros

- Audit current usage. Identify who in your team uses which assistant and for what tasks. This will reveal where paid tiers yield clear ROI.

- Pilot premium tiers for high‑value workflows. Use contract periods to measure time savings, error rates, and incident frequency.

- Insist on contractual non‑training and data residency clauses when necessary. For regulated industries, these clauses are not negotiable.

- Prepare governance: integrate logs into SIEM, require human‑in‑the‑loop for transactional agent actions, set retention policies, and train staff on adversarial prompt injection.

- Evaluate vendor roadmaps and support SLAs before wide deployments. Model routing, agent orchestration, and uptime guarantees differ materially across vendors.
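The human‑in‑the‑loop and logging advice above can be sketched as a small approval gate. Everything here is an assumption made for illustration: the action names, the `approver` callback, and the in‑memory list standing in for a SIEM forwarder. It is not any vendor's agent API.

```python
import json
from datetime import datetime, timezone
from typing import Callable

# Hypothetical set of agent actions treated as transactional.
TRANSACTIONAL_ACTIONS = {"send_payment", "delete_records", "send_external_email"}


def run_agent_action(action: str,
                     params: dict,
                     approver: Callable[[str, dict], bool],
                     audit_log: list[str]) -> bool:
    """Gate transactional actions behind a human decision and log every attempt.

    Returns True when the action may proceed, False when it was denied.
    """
    needs_approval = action in TRANSACTIONAL_ACTIONS
    approved = approver(action, params) if needs_approval else True
    # One JSON line per attempt; a real deployment would forward this to a SIEM.
    audit_log.append(json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "action": action,
        "needs_approval": needs_approval,
        "approved": approved,
    }))
    return approved


def deny_all(action: str, params: dict) -> bool:
    """Stand-in approver that rejects everything; replace with a real review step."""
    return False


log: list[str] = []
print(run_agent_action("summarize_document", {"doc_id": "demo"}, deny_all, log))  # True
print(run_agent_action("send_payment", {"amount": 100}, deny_all, log))           # False
print(len(log))                                                                   # 2
```

Logging denied attempts as well as approved ones keeps the audit trail useful for investigating prompt‑injection incidents, not just for confirming normal operation.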

Strengths of the new approach — why vendors are right to act now

- Accessibility for more users: Lower‑cost tiers broaden adoption in price‑sensitive markets and reduce barriers for everyday tasks.

- Sustainable funding: Ads plus tiered subscriptions diversify revenue, enabling continued investment in models, safety, and developer ecosystems.

- Product differentiation: Tiers allow vendors to offer truly distinct experiences — basic drafting vs. agentic automation — instead of a single “one‑size‑fits‑all” product.

- Clear ROI for professionals: Pro and prosumer tiers—when properly engineered—deliver measurable productivity gains for creators, researchers, and enterprise teams.

Where the plan falls short — unresolved risks and weak points

- Trust vs. monetization: Ads in chat risk eroding trust unless engineering and policy safeguards are bulletproof and independently auditable.

- Global complexity: Regional pricing, availability, and legal regimes complicate a single product narrative; what’s marketed as “global” may still have access and regulatory disparities.

- Monetization dependence: Heavy reliance on ads may lift short‑term revenue at the cost of long‑term UX degradation; conversely, subscriptions alone may underserve casual users.

- Open questions about data use: Promises not to sell raw conversation data are meaningful, but derived signals and personalization will still be used unless rigidly constrained. Independent audits and clear, enforceable contracts remain essential.

How to read the market from here

Expect to see three parallel trends accelerate:

- Continued tier proliferation: More intermediate price points, family sharing plans, and regionally localized pricing.

- Ads and commerce experiments: Pilots that move beyond static cards into conversational commerce; success will depend on sustained user acceptance and demonstrable privacy protections.

- Edge of the enterprise: More enterprise‑grade controls, non‑training guarantees, and customizable model routing as businesses insist on predictable, auditable behavior.

Final assessment and verdict

The industry’s pivot to pricing tiers and ad experiments is hardly surprising; it is the logical next stage after mass adoption. OpenAI’s global rollout of ChatGPT Go and promise to test ads is a credible, defensible attempt to subsidize free access while accelerating paid conversion. The approach is commercially necessary and plausible as a product strategy, but it is not without cost.

The upside: more people can access capable AI tools, heavy users can buy the assurances and performance they need, and vendors finally have pathways to monetize the enormous consumption of compute and engineering effort behind modern LLMs. The downside: ad‑supported chat threatens trust, feature stratification may create affordability gaps for heavy learners and small businesses, and regulatory scrutiny around data use and ad transparency is likely to intensify.

For Windows users and IT professionals, the practical takeaway is to prepare: model subscription costs, pilot premium plans where they add concrete value, and insist on contractual safeguards that protect data and preserve auditability. In an era where AI assistants can compose, act, and learn, the interface between human work and machine action is more consequential — and more monetized — than it was a year ago.

OpenAI’s announcements and the broader industry moves documented this month are not theoretical press releases; they are strategic inflection points. How vendors implement ad systems, price tiers, and enterprise guarantees will determine whether consumer AI becomes a democratising utility or a stratified marketplace where the best models are reserved for those who can pay. The next twelve months will tell which path the industry chooses — and whether user trust can be economically sustained while companies pursue the revenues necessary to run the most powerful models.

Source: PYMNTS.com ChatGPT Leads Consumer AI Use as OpenAI Pushes New Paid Tiers | PYMNTS.com